If you’ve ever wondered why some machine learning projects succeed and others quietly die in a Jupyter notebook, the answer almost always comes down to process, not talent.

I’ve worked on ML projects across industries, from retail demand forecasting to healthcare risk prediction, and the ones that failed rarely failed because the model was bad. They failed because someone skipped steps, rushed into training without cleaning the data, or built something that nobody could actually maintain in production.

The Machine Learning Life Cycle is the framework that prevents all of that. It’s a 7-stage iterative process that takes a raw business problem all the way to a live, monitored model in production. And it’s not a one-time checklist; it’s a loop. Data changes, models drift, and you start again.

In this guide, I’ll walk you through every stage with:

- Real Python code you can run right now

- Common mistakes people make at each step (and how to avoid them)

- A practical tool reference for Python developers

- A full end-to-end mini project using a real dataset

Whether you’re studying for a data science interview, building your first model, or trying to understand what separates hobby projects from production ML, this guide covers the full picture.


What Is the Machine Learning Life Cycle?

The ML life cycle is a repeating process that takes you from a business question to a working, deployed model that keeps improving over time. Here are the 7 stages:

- Problem Definition — What are you actually trying to solve?

- Data Collection — Where does your data come from?

- Data Preparation & EDA — Is your data clean and usable?

- Feature Engineering — What inputs will actually help the model learn?

- Model Training — Train the algorithm on your prepared data

- Model Evaluation — Is the model actually good?

- Deployment & Monitoring — Get it into the real world and keep it healthy

The key thing to understand is that this is not linear. You’ll regularly loop back. If your model performs poorly in Stage 6, you go back to Stage 4. If real-world performance drops after deployment, you loop all the way back to Stage 2. That iteration is what makes ML hard — and interesting.

Stage 1: Problem Definition (The Step Most ML Projects Rush)

This is the stage teams skip most often. I can’t count how many times I’ve seen teams jump straight into data collection or model selection before they’ve defined what success looks like.

Before you write a single line of code, answer these questions:

- What business problem are you solving? Be specific. “Improve customer retention” is too vague. “Predict which customers are likely to cancel their subscription in the next 30 days” is something you can build a model around.

- What does success look like? Define your success metric before you start. Is 80% accuracy good enough? What’s the cost of a false positive vs. a false negative?

- What type of ML problem is this? Classification, regression, clustering, recommendation, anomaly detection?

- What data do you have access to? Don’t plan a model around data that doesn’t exist yet.

A Quick Real-World Example

Let’s say you’re working for a SaaS company based in Austin, Texas. Their customer success team wants to know: “Which customers are most likely to churn next month?”

That translates to:

- ML problem type: Binary classification (churn = Yes/No)

- Success metric: F1-score (because both precision and recall matter here — you don’t want to miss churners, but you also don’t want to spam loyal customers with retention offers)

- Data available: Login frequency, feature usage, support tickets, billing history, contract length

That’s a well-defined problem. Now you’re ready to collect data.

Common Mistakes at This Stage

Picking the wrong success metric. A lot of teams default to accuracy because it’s familiar. But if only 5% of your customers churn, a model that predicts “no churn” for everyone gets 95% accuracy — and is completely useless.
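Here is a minimal sketch of that trap. It assumes a hypothetical group of 1,000 customers with a 5% churn rate and a "model" that always predicts no churn, and it scores that model with scikit-learn's accuracy_score, recall_score, and f1_score.

```python
import numpy as np
from sklearn.metrics import accuracy_score, f1_score, recall_score

# Hypothetical labels: 1,000 customers, 5% of whom churn (1 = churn, 0 = stays)
y_true = np.array([1] * 50 + [0] * 950)

# A "model" that predicts no churn for every customer
y_pred = np.zeros_like(y_true)

accuracy = accuracy_score(y_true, y_pred)
# zero_division=0 avoids a warning because the model never predicts a churner
recall = recall_score(y_true, y_pred, zero_division=0)
f1 = f1_score(y_true, y_pred, zero_division=0)

print(f"Accuracy: {accuracy:.2f}")  # 0.95
print(f"Recall:   {recall:.2f}")    # 0.00, not a single churner is caught
print(f"F1-score: {f1:.2f}")        # 0.00
```

Accuracy rewards the model for the 950 easy "no churn" cases, while recall and F1 expose that it misses every customer you actually care about.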


Stage 2: Data Collection — Where the Real Work Begins

Your model is only as good as your data. I’ve seen beautifully engineered models fail because the training data was biased, incomplete, or just plain wrong.

Data typically comes from:

- Internal databases — CRM systems, product databases, transaction logs (SQL queries are your friend here)

- APIs — Third-party data sources like weather APIs, financial data feeds, social media data

- Web scraping — When public data exists but isn’t available via API

- Public datasets — Kaggle, UCI ML Repository, US Government’s data.gov, and the Hugging Face Datasets library

- Surveys and manual labeling — For supervised learning problems where labeled data doesn’t exist yet

Loading Data with Pandas

Here’s a simple way to load and take a first look at your dataset:

```python
import pandas as pd

# Load dataset (CSV from a local file or URL)
df = pd.read_csv('customer_data.csv')

# Quick overview
print(df.shape)           # (rows, columns)
print(df.dtypes)          # Data types of each column
print(df.head())          # First 5 rows
print(df.isnull().sum())  # Missing values per column
```

This first pass tells you a lot. How many rows do you have? Are there obvious missing values? Do the data types make sense (are dates stored as strings by accident)?

Common Mistakes at This Stage

Collecting data without checking for data leakage. This happens when your training data accidentally includes information that wouldn’t be available at prediction time. For example, if you’re predicting customer churn and you include “cancellation_date” as a feature — that’s leakage. The model learns from a column that only exists after the event you’re predicting.
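A small synthetic sketch makes the problem concrete. The columns (logins_last_30d, support_tickets, cancellation_date) are hypothetical, and churn is generated as pure random noise, so no honest feature can predict it. A one-split decision tree still scores perfectly as soon as it is allowed to see whether a cancellation date exists.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(0)
n = 1000

# Hypothetical churn data: churn is random, so the honest features carry no signal
df = pd.DataFrame({
    "logins_last_30d": rng.poisson(10, size=n),
    "support_tickets": rng.poisson(2, size=n),
    "churned": rng.integers(0, 2, size=n),
})

# Leaky column: a cancellation date only exists once a customer has already churned
df["cancellation_date"] = pd.Series(pd.Timestamp("2024-06-30"), index=df.index).where(df["churned"] == 1)
df["has_cancellation_date"] = df["cancellation_date"].notna().astype(int)

def holdout_accuracy(feature_cols):
    """Train a one-split tree on the given columns and return test-set accuracy."""
    X_train, X_test, y_train, y_test = train_test_split(
        df[feature_cols], df["churned"], test_size=0.25, random_state=42
    )
    model = DecisionTreeClassifier(max_depth=1, random_state=42)
    model.fit(X_train, y_train)
    return model.score(X_test, y_test)

leaky_acc = holdout_accuracy(["logins_last_30d", "support_tickets", "has_cancellation_date"])
clean_acc = holdout_accuracy(["logins_last_30d", "support_tickets"])

print(f"With the leaky column:    {leaky_acc:.2f}")  # 1.00
print(f"Without the leaky column: {clean_acc:.2f}")  # roughly coin-flip level
```

The perfect score is the warning sign: a feature that is only known after the event will not exist when you make real predictions, so it has to be dropped before training.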

Stage 3: Exploratory Data Analysis (EDA) — Find What Your Data Is Hiding

EDA is where you really get to know your data before building anything. I think of this as the “no assumptions” phase. You’re not trying to prove anything yet — you’re just looking.

Things you want to find out:

- Distribution of features — Are numerical features normally distributed or skewed?

- Outliers — Are there values that make no sense (like a customer age of 300)?

- Class imbalance — In classification problems, how balanced are your target classes?

- Correlations — Which features are related to each other, and which are related to the target?
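The first three checks can also be run numerically before any plotting. This is a sketch on scikit-learn's bundled breast cancer dataset; the 1.5 × IQR rule used for outliers is one common convention, not the only one.

```python
import pandas as pd
from sklearn.datasets import load_breast_cancer

data = load_breast_cancer()
df = pd.DataFrame(data.data, columns=data.feature_names)
df["target"] = data.target  # 0 = malignant, 1 = benign

# Class imbalance: share of each class
class_share = df["target"].value_counts(normalize=True)
print(class_share.round(3))

features = df.drop(columns="target")

# Skew: values far from 0 indicate a long tail (a log transform may help)
skewness = features.skew().sort_values(ascending=False)
print(skewness.head(5))

# Outliers per feature using the 1.5 * IQR rule
q1 = features.quantile(0.25)
q3 = features.quantile(0.75)
iqr = q3 - q1
# Comparing a DataFrame with a Series aligns on column names
outside = (features < q1 - 1.5 * iqr) | (features > q3 + 1.5 * iqr)
outlier_counts = outside.sum().sort_values(ascending=False)
print(outlier_counts.head(5))
```

An IQR "outlier" is only a candidate. In medical data an extreme value may be a real, important case, so inspect before you drop anything.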

```python
import pandas as pd
import seaborn as sns
import matplotlib.pyplot as plt
from sklearn.datasets import load_breast_cancer

# Load a real dataset
data = load_breast_cancer()
df = pd.DataFrame(data.data, columns=data.feature_names)
df['target'] = data.target  # 0 = malignant, 1 = benign

# Class distribution
print(df['target'].value_counts())

# Check for missing values
print(df.isnull().sum().sum())  # Should be 0 for this dataset
```

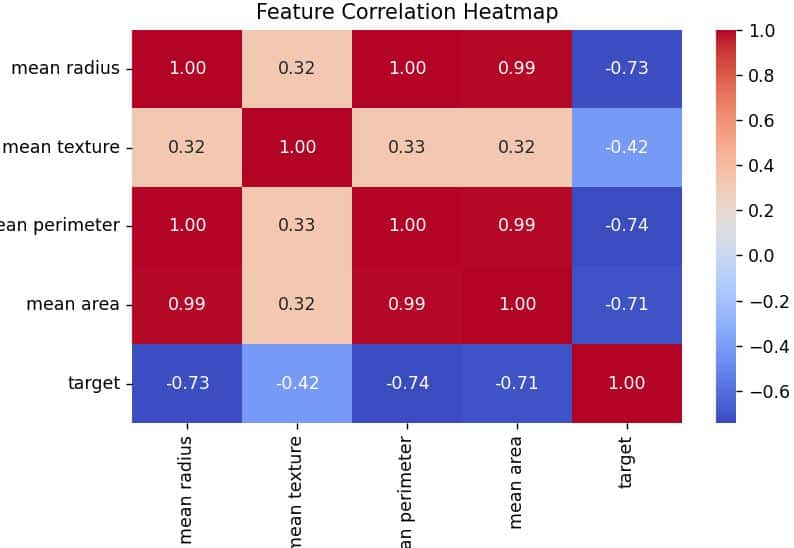

```python
# Correlation heatmap for top features
top_features = ['mean radius', 'mean texture', 'mean perimeter', 'mean area', 'target']
plt.figure(figsize=(8, 6))
sns.heatmap(df[top_features].corr(), annot=True, cmap='coolwarm', fmt='.2f')
plt.title('Feature Correlation Heatmap')
plt.tight_layout()
plt.show()
```

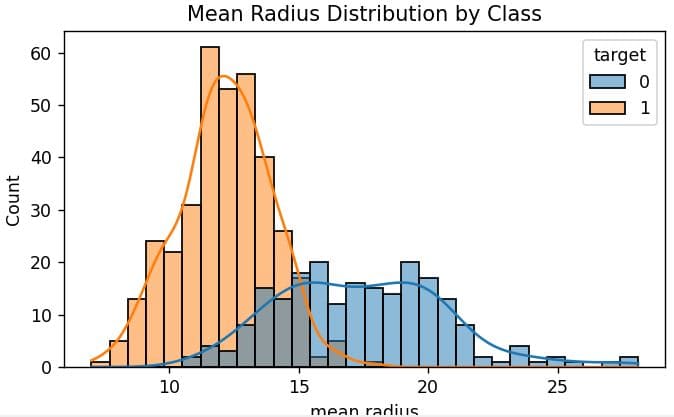

```python
# Distribution of a key feature by class
plt.figure(figsize=(8, 5))
sns.histplot(data=df, x='mean radius', hue='target', bins=30, kde=True)
plt.title('Mean Radius Distribution by Class')
plt.show()
```

You can refer to the screenshots above to see the output.

The heatmap shows which features are strongly correlated with the target; those are likely your most powerful predictors. Features that are highly correlated with each other can cause multicollinearity issues in linear models.
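A heatmap gets hard to read with 30 features, so here is a sketch that ranks features by absolute correlation with the target and lists near-redundant feature pairs. The 0.95 cutoff is an arbitrary illustrative choice, not a standard threshold.

```python
import pandas as pd
from sklearn.datasets import load_breast_cancer

data = load_breast_cancer()
df = pd.DataFrame(data.data, columns=data.feature_names)
df["target"] = data.target

corr = df.corr()

# Features ranked by absolute (linear) correlation with the target
target_corr = corr["target"].drop("target").abs().sort_values(ascending=False)
print(target_corr.head(5))

# Pairs of features that are nearly redundant: a multicollinearity warning sign
feature_corr = corr.drop(index="target", columns="target").abs()
cols = feature_corr.columns
redundant_pairs = [
    (cols[i], cols[j], round(feature_corr.iloc[i, j], 3))
    for i in range(len(cols))
    for j in range(i + 1, len(cols))  # upper triangle only, so each pair appears once
    if feature_corr.iloc[i, j] > 0.95
]
redundant_pairs.sort(key=lambda pair: pair[2], reverse=True)
for a, b, r in redundant_pairs[:10]:
    print(f"{a} <-> {b}: {r}")
```

Pairs such as radius, perimeter, and area move almost in lockstep (they are geometrically related), so a linear model may only need one of them.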

Common Mistakes at This Stage

Skipping EDA entirely and going straight to training. This is how you end up with a model that performs great in your notebook and terribly in production — because the production data has a distribution shift you never noticed.


Stage 4: Feature Engineering — The Most Underrated Stage in ML

If problem definition is the most skipped stage, feature engineering is the most underrated one. The raw data you collected isn’t always in the right shape for a model to learn from. Feature engineering is about creating the best possible inputs.

Common techniques include:

- Encoding categorical variables — Converting text categories to numbers (Label Encoding, One-Hot Encoding)

- Scaling numerical features — Normalizing or standardizing values so no feature dominates due to scale

- Creating new features — Combining existing columns to capture relationships the model might miss

- Handling missing values — Imputing with mean, median, mode, or using more advanced strategies

- Extracting from dates/text — Pulling month, day-of-week from timestamps; TF-IDF from text
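Several of these techniques can be chained per column type with scikit-learn's ColumnTransformer. This is a minimal sketch on a hypothetical customer table (monthly_spend, tenure_months, and plan are made-up columns): median imputation plus scaling for numbers, and most-frequent imputation plus one-hot encoding for categories.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical customer table with gaps in both numeric and categorical columns
df = pd.DataFrame({
    "monthly_spend": [120.0, np.nan, 89.99, 540.0],
    "tenure_months": [12, 3, np.nan, 48],
    "plan": ["basic", "pro", np.nan, "pro"],
})

numeric_cols = ["monthly_spend", "tenure_months"]
categorical_cols = ["plan"]

preprocess = ColumnTransformer([
    # Numeric columns: fill gaps with the median, then standardize
    ("num", Pipeline([
        ("impute", SimpleImputer(strategy="median")),
        ("scale", StandardScaler()),
    ]), numeric_cols),
    # Categorical columns: fill gaps with the most frequent value, then one-hot encode
    ("cat", Pipeline([
        ("impute", SimpleImputer(strategy="most_frequent")),
        ("onehot", OneHotEncoder(handle_unknown="ignore")),
    ]), categorical_cols),
])

X = preprocess.fit_transform(df)
if hasattr(X, "toarray"):  # ColumnTransformer may return a sparse matrix
    X = X.toarray()
print(X.shape)  # (4, 4): 2 scaled numeric columns + 2 one-hot "plan" columns
```

Because every step learns its statistics in fit, this object can later be fit on training data only, which matters for the leakage mistake described at the end of this stage.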

Feature Engineering with Pandas

Let me show you a practical example. Suppose you’re building a model for a retail company in Chicago, and you have order history data:

```python
import pandas as pd
import numpy as np

# Sample order data for a Chicago-based retailer
df = pd.DataFrame({
    'customer_id': ['C001', 'C002', 'C003', 'C004'],
    'order_date': ['2024-01-15', '2024-03-22', '2024-07-04', '2024-11-29'],
    'price': [120.00, 250.00, 89.99, 540.00],
    'quantity': [2, 5, 1, 8],
    'category': ['Electronics', 'Clothing', 'Electronics', 'Clothing'],
    'days_since_last_order': [90, 14, 180, 7]
})

# Convert date
df['order_date'] = pd.to_datetime(df['order_date'])

# Extract date features
df['order_month'] = df['order_date'].dt.month
df['order_dayofweek'] = df['order_date'].dt.dayofweek  # 0=Monday, 6=Sunday
df['is_weekend'] = df['order_dayofweek'].isin([5, 6]).astype(int)

# Interaction feature
df['total_revenue'] = df['price'] * df['quantity']

# Binned feature (customer activity segment)
# Bins are right-inclusive: (0, 30] Active, (30, 90] At-Risk, (90, 365] Lapsed
df['activity_segment'] = pd.cut(
    df['days_since_last_order'],
    bins=[0, 30, 90, 365],
    labels=['Active', 'At-Risk', 'Lapsed']
)

# One-hot encode category
df = pd.get_dummies(df, columns=['category'], drop_first=True)

print(df[['customer_id', 'total_revenue', 'is_weekend', 'activity_segment', 'category_Electronics']].head())
```

Output:

```
  customer_id  total_revenue  is_weekend activity_segment  category_Electronics
0        C001         240.00           0          At-Risk                  True
1        C002        1250.00           0           Active                 False
2        C003          89.99           0           Lapsed                  True
3        C004        4320.00           0           Active                 False
```

Now you have activity_segment, is_weekend, and total_revenue — features that the model can learn from much more effectively than the raw columns.

Common Mistakes at This Stage

Applying transformations (like StandardScaler) to the full dataset before splitting into train/test sets. That causes data leakage — the test set statistics bleed into the training process. Always split first, then fit transformations only on training data.
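The same rule applies inside cross-validation: if you scale the whole training set once and then run CV, each validation fold has already influenced the scaler. A scikit-learn Pipeline avoids that. The sketch below uses the breast cancer dataset with logistic regression; max_iter=1000 is just a safe convergence setting.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

# Split first; the test set is not touched again until final evaluation
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# The scaler lives inside the pipeline, so in every CV fold it is refit
# on that fold's training rows only and never sees the validation rows
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

cv_scores = cross_val_score(pipeline, X_train, y_train, cv=5, scoring="f1")
print(f"CV F1 per fold: {cv_scores.round(4)}")
print(f"Mean CV F1: {cv_scores.mean():.4f}")

# Final fit on the full training set (scaler + model together)
pipeline.fit(X_train, y_train)
```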

Stage 5: Model Training — How to Pick the Right Algorithm

This is the stage most people think ML is all about, but if you’ve done the previous stages well, model training is actually pretty straightforward.

How do you pick the right algorithm? Here’s a practical starting point:

| Problem Type | Starting Algorithm | When to Upgrade |
|---|---|---|
| Binary Classification | Logistic Regression | Switch to Random Forest or XGBoost for better accuracy |
| Multi-class Classification | Random Forest | Switch to LightGBM for large datasets |
| Regression | Linear Regression | Switch to Gradient Boosting for non-linear patterns |
| Clustering | K-Means | Switch to DBSCAN when clusters aren’t spherical |
| Text Classification | Naive Bayes | Switch to BERT/transformers for complex NLP |

My rule of thumb: start simple, then go complex. Start with Logistic Regression or Linear Regression. If it’s good enough, ship it. Only move to more complex models if you actually need the performance boost.

Train a Model with Scikit-Learn

Let me continue with the cancer dataset from Stage 3:

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
import pandas as pd

# Load data
data = load_breast_cancer()
X, y = pd.DataFrame(data.data, columns=data.feature_names), data.target

# Split FIRST, then scale
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Scale features
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # Fit on training only
X_test_scaled = scaler.transform(X_test)        # Transform test with training stats

# Train a baseline model
lr_model = LogisticRegression(random_state=42, max_iter=200)
lr_model.fit(X_train_scaled, y_train)

# Train a more complex model
rf_model = RandomForestClassifier(n_estimators=100, random_state=42)
rf_model.fit(X_train_scaled, y_train)

print(f"Logistic Regression Train Accuracy: {lr_model.score(X_train_scaled, y_train):.4f}")
print(f"Random Forest Train Accuracy: {rf_model.score(X_train_scaled, y_train):.4f}")
```

Output:

```
Logistic Regression Train Accuracy: 0.9868
Random Forest Train Accuracy: 1.0000
```

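Notice the Random Forest hits 100% on training data. That alone doesn’t prove overfitting (fully grown trees routinely memorize their training set), but it does mean training accuracy tells you almost nothing about how the model will generalize. We need to check how it performs on the test set. That’s what Stage 6 is for.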
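Overfitting shows up as the gap between training and held-out accuracy, so that gap is the number to watch. This sketch compares a fully grown forest with a shallower one (max_depth=3, an arbitrary illustrative value). Scaling is skipped here because tree-based models are not affected by feature scale.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

results = {}
for depth in [None, 3]:  # None = grow trees until leaves are pure
    model = RandomForestClassifier(n_estimators=100, max_depth=depth, random_state=42)
    model.fit(X_train, y_train)
    train_acc = model.score(X_train, y_train)
    test_acc = model.score(X_test, y_test)
    results[depth] = (train_acc, test_acc)
    print(f"max_depth={depth}: train={train_acc:.4f} test={test_acc:.4f} "
          f"gap={train_acc - test_acc:.4f}")
```

A large gap suggests memorization; a small gap with a good test score is what you want, even if training accuracy is below 100%.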

Hyperparameter Tuning with Grid Search

Once you have a working model, you can optimize it using Grid Search:

```python
from sklearn.model_selection import GridSearchCV

param_grid = {
    'n_estimators': [50, 100, 200],
    'max_depth': [None, 10, 20],
    'min_samples_split': [2, 5, 10]
}

grid_search = GridSearchCV(
    RandomForestClassifier(random_state=42),
    param_grid,
    cv=5,                  # 5-fold cross-validation
    scoring='f1_weighted',
    n_jobs=-1              # Use all CPU cores
)

grid_search.fit(X_train_scaled, y_train)

print("Best Parameters:", grid_search.best_params_)
print(f"Best CV F1 Score: {grid_search.best_score_:.4f}")

# Use the best model going forward
best_model = grid_search.best_estimator_
```

Output:

```
Best Parameters: {'max_depth': None, 'min_samples_split': 2, 'n_estimators': 100}
Best CV F1 Score: 0.9692
```

Common Mistakes at This Stage

Tuning hyperparameters on the test set. If you keep tweaking until your test score looks good, you’ve essentially trained on the test set. Use cross-validation on training data for tuning, and save the test set for one final evaluation.


Stage 6: Model Evaluation — Don’t Trust Accuracy Alone

This is where a lot of beginners make a critical error: they look at accuracy, see a high number, and call it done. But accuracy doesn’t tell the full story — especially with imbalanced datasets.

Here are the metrics that actually matter:

- Precision — Of all the times the model predicted “positive,” how often was it right?

- Recall — Of all the actual positives, how many did the model catch?

- F1-Score — The harmonic mean of precision and recall; useful when both matter

- AUC-ROC — How well does the model separate classes at different thresholds?
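The first three metrics come straight from confusion-matrix counts. This worked sketch uses ten hypothetical labels and predictions, computes precision, recall, and F1 by hand, and checks them against scikit-learn.

```python
import numpy as np
from sklearn.metrics import confusion_matrix, f1_score, precision_score, recall_score

# Hypothetical true labels and predictions (1 = positive class)
y_true = np.array([1, 1, 1, 1, 0, 0, 0, 0, 0, 0])
y_pred = np.array([1, 1, 1, 0, 1, 1, 0, 0, 0, 0])

# For binary labels [0, 1], ravel() returns tn, fp, fn, tp in that order
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()
print(tn, fp, fn, tp)  # 4 2 1 3

precision = tp / (tp + fp)                          # 3 / 5 = 0.60
recall = tp / (tp + fn)                             # 3 / 4 = 0.75
f1 = 2 * precision * recall / (precision + recall)  # harmonic mean, about 0.667

print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

The harmonic mean pulls F1 toward the weaker of the two numbers, which is why it punishes a model that buys high recall with many false positives (or the reverse).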

Full Evaluation Code

```python
from sklearn.metrics import (
    classification_report,
    confusion_matrix,
    roc_auc_score,
    ConfusionMatrixDisplay
)
import matplotlib.pyplot as plt

# Predictions
y_pred = best_model.predict(X_test_scaled)
y_prob = best_model.predict_proba(X_test_scaled)[:, 1]

# Classification report
print("=== Classification Report ===")
print(classification_report(y_test, y_pred, target_names=data.target_names))

# AUC-ROC
auc = roc_auc_score(y_test, y_prob)
print(f"AUC-ROC Score: {auc:.4f}")

# Confusion matrix
cm = confusion_matrix(y_test, y_pred)
disp = ConfusionMatrixDisplay(confusion_matrix=cm, display_labels=data.target_names)
disp.plot(cmap='Blues')
plt.title('Confusion Matrix — Breast Cancer Classification')
plt.tight_layout()
plt.show()
```

Output:

```
=== Classification Report ===
              precision    recall  f1-score   support

   malignant       0.97      0.95      0.96        42
      benign       0.97      0.99      0.98        72

    accuracy                           0.97       114
   macro avg       0.97      0.97      0.97       114
weighted avg       0.97      0.97      0.97       114

AUC-ROC Score: 0.9978
```

The confusion matrix tells you exactly where the model fails. How many malignant cases were classified as benign (false negatives)? In a medical context, that’s the number you want to minimize — even if it slightly hurts precision.
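One way to push that number down is to lower the probability threshold for flagging a tumor as malignant. The sketch below picks the threshold using cross-validated predictions on the training data, so the test set stays untouched. The candidate thresholds (0.5, 0.3, 0.2) are illustrative only. Note that class 0 is malignant, so column 0 of predict_proba is P(malignant).

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import cross_val_predict, train_test_split

data = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    data.data, data.target, test_size=0.2, random_state=42, stratify=data.target
)

model = RandomForestClassifier(n_estimators=100, random_state=42)

# Out-of-fold probabilities: each training row is scored by a model that never saw it
p_malignant = cross_val_predict(model, X_train, y_train, cv=5, method="predict_proba")[:, 0]

def malignant_false_negatives(threshold):
    """Count malignant cases predicted benign when flagging P(malignant) >= threshold."""
    y_pred = np.where(p_malignant >= threshold, 0, 1)  # 0 = malignant, 1 = benign
    cm = confusion_matrix(y_train, y_pred, labels=[0, 1])
    return int(cm[0, 1])  # row 0 = actual malignant, column 1 = predicted benign

fns = {t: malignant_false_negatives(t) for t in [0.5, 0.3, 0.2]}
for threshold, missed in fns.items():
    print(f"threshold={threshold}: malignant cases missed = {missed}")
```

Lowering the threshold can only keep or reduce missed malignant cases, at the cost of more benign cases flagged for follow-up. Once you choose a threshold this way, apply it once to the test set for the final report.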

Common Mistakes at This Stage

Only evaluating the model once on the test set and never questioning the result. Ask yourself: Is the test set representative of real-world data? Could the data distribution change in production? These are questions that separate good ML engineers from great ones.

Stage 7: Deployment & Monitoring — The Stage Most Tutorials Ignore

Building a model that works in your notebook is half the battle. Getting it into the hands of real users — and keeping it working — is the other half. This is where most tutorials stop, and where most real projects actually fail.

Saving and Loading Your Model

First, you need to save your trained model so it can be loaded in a production environment without retraining:

```python
import joblib

# Save the trained model and scaler
joblib.dump(best_model, 'churn_model.pkl')
joblib.dump(scaler, 'scaler.pkl')

# Load it back
loaded_model = joblib.load('churn_model.pkl')
loaded_scaler = joblib.load('scaler.pkl')

# Make a prediction with the loaded model
sample = X_test.iloc[0:1]
sample_scaled = loaded_scaler.transform(sample)
prediction = loaded_model.predict(sample_scaled)
print(f"Prediction: {data.target_names[prediction[0]]}")
```

Output:

```
Prediction: benign
```
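Saving the scaler and model as two files works, but nothing stops someone from loading a mismatched pair. A common alternative, sketched below, is to fit a scikit-learn Pipeline and save it as a single artifact. The file name cancer_pipeline.joblib is hypothetical, and a temporary directory is used so the example runs anywhere.

```python
import os
import tempfile

import joblib
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Scaler and model are fitted together and travel together
pipeline = make_pipeline(StandardScaler(), RandomForestClassifier(n_estimators=100, random_state=42))
pipeline.fit(X_train, y_train)

path = os.path.join(tempfile.mkdtemp(), "cancer_pipeline.joblib")  # hypothetical file name
joblib.dump(pipeline, path)

loaded = joblib.load(path)
# Raw, unscaled features go straight in; the pipeline applies its own scaler
same = np.array_equal(loaded.predict(X_test[:5]), pipeline.predict(X_test[:5]))
print(same)  # True
```

As with any pickle-based format, only load files you created or trust.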

Serve the Model as an API with FastAPI

In a real production environment, your model needs to be accessible via an API so other applications can send data and receive predictions. Here’s how to wrap your model in a FastAPI endpoint:

```python
# app.py
from typing import List

import joblib
import numpy as np
from fastapi import FastAPI, HTTPException
from pydantic import BaseModel

app = FastAPI(title="Cancer Prediction API")

# Load model and scaler at startup
model = joblib.load("churn_model.pkl")
scaler = joblib.load("scaler.pkl")

class PatientFeatures(BaseModel):
    # All 30 feature values, in the same order as data.feature_names.
    # The scaler and model were fit on 30 columns, so sending only a few
    # named fields would make scaler.transform() raise an error.
    features: List[float]

@app.get("/health")
def health_check():
    return {"status": "healthy"}

@app.post("/predict")
def predict(payload: PatientFeatures):
    expected = scaler.n_features_in_
    if len(payload.features) != expected:
        raise HTTPException(status_code=422, detail=f"Expected {expected} feature values")

    input_data = np.array([payload.features])
    scaled = scaler.transform(input_data)
    prediction = model.predict(scaled)[0]
    probability = model.predict_proba(scaled)[0][1]  # probability of class 1 (benign)

    return {
        "prediction": int(prediction),
        "label": "benign" if prediction == 1 else "malignant",
        "benign_probability": round(float(probability), 4)
    }
```

Run this with: uvicorn app:app --reload

FastAPI automatically generates interactive API docs at http://localhost:8000/docs — which makes it incredibly easy to test your endpoint without writing a separate client.

Monitor: The Part Everyone Forgets

Once deployed, models degrade. A common cause is data drift: the real-world data your model receives in production starts to differ from the data it was trained on. A model trained on customer behavior in 2023 might make poor predictions by late 2024 because behavior patterns changed.
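The core idea behind a per-feature drift check can be sketched with a two-sample Kolmogorov-Smirnov test from SciPy. This is a simplified illustration, not how any particular monitoring tool works internally. The feature names, the simulated shift, and alpha=0.05 are all assumptions.

```python
import numpy as np
import pandas as pd
from scipy.stats import ks_2samp

def drifted_features(reference, current, alpha=0.05):
    """Return {column: KS statistic} for columns whose distributions differ."""
    flagged = {}
    for col in reference.columns:
        result = ks_2samp(reference[col], current[col])
        if result.pvalue < alpha:
            flagged[col] = round(float(result.statistic), 3)
    return flagged

rng = np.random.default_rng(0)

# Hypothetical training-time data
reference = pd.DataFrame({
    "logins_per_week": rng.normal(5, 1, 1000),
    "avg_session_min": rng.normal(12, 3, 1000),
})

# Simulated production data: logins shifted up by one standard deviation,
# session lengths copied unchanged
current = pd.DataFrame({
    "logins_per_week": rng.normal(6, 1, 1000),
    "avg_session_min": reference["avg_session_min"].to_numpy(),
})

flagged = drifted_features(reference, current)
print(flagged)  # only logins_per_week is flagged
```

With large samples the KS test flags even tiny, harmless shifts, so in practice teams usually combine it with an effect-size threshold or a share-of-features rule before alerting.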

Tools to monitor ML models in production:

| Tool | What It Does | Cost |
|---|---|---|
| Evidently AI | Detects data drift and model performance degradation | Free (open source) |
| MLflow | Tracks experiments, model versions, metrics | Free (open source) |
| Prometheus + Grafana | Infrastructure and API monitoring | Free (open source) |
| Amazon SageMaker Model Monitor | Automated drift detection on AWS | Pay-per-use |
| Azure Machine Learning model monitoring | Built-in monitoring for Azure-deployed models | Pay-per-use |

Here’s a minimal example using Evidently AI to check for data drift:

```python
# This uses the Evidently 0.4.x-style API. Newer releases reorganized these
# imports, so check the docs for the version you have installed.
from evidently.report import Report
from evidently.metric_preset import DataDriftPreset
import pandas as pd

# Reference data = training set (X_train from Stage 5)
# Current data = recent production data (X_test stands in for it here)
reference_data = pd.DataFrame(X_train, columns=data.feature_names)
current_data = pd.DataFrame(X_test, columns=data.feature_names)

report = Report(metrics=[DataDriftPreset()])
report.run(reference_data=reference_data, current_data=current_data)
report.save_html("drift_report.html")

# Open drift_report.html in a browser to see the feature drift analysis
```

Common Mistakes at This Stage

Deploying a model and never setting up monitoring. Models fail silently. Without monitoring, you won’t know your model’s predictions have become unreliable until a stakeholder notices something is wrong months later.


When to Loop Back: The Decision Guide

The life cycle is iterative. Here’s a practical guide for knowing when to go back:

- Evaluation metrics are below target → Loop back to Stage 4 (Feature Engineering) and try new features, or Stage 5 (try a different algorithm)

- Training accuracy high but test accuracy low → Overfitting — Loop back to Stage 5 (reduce model complexity, add regularization)

- Not enough data for good performance → Loop back to Stage 2 (collect more data or use data augmentation)

- Production performance drops after launch → Loop back to Stage 2 (collect recent data for retraining)

- Business requirements changed → Loop all the way back to Stage 1

Python Tools Reference by Stage

Here’s a cheat sheet of the most common Python tools used at each stage of the ML life cycle:

| Stage | Python Tools | Cloud Options |
|---|---|---|
| Problem Definition | Jupyter Notebook, Notion, Confluence | Azure ML Workspaces |
| Data Collection | Pandas, Requests, BeautifulSoup, SQLAlchemy | AWS S3, Google BigQuery |
| EDA | Matplotlib, Seaborn, Plotly, ydata-profiling | Databricks, Looker |
| Feature Engineering | Scikit-learn, Pandas, Featuretools | Azure ML Pipelines |
| Model Training | Scikit-learn, XGBoost, LightGBM, PyTorch | Azure AutoML, SageMaker |
| Evaluation | Scikit-learn metrics, MLflow | Azure ML Studio |
| Deployment | FastAPI, Docker, Streamlit, Flask | Azure ML Endpoint, AWS Lambda |
| Monitoring | Evidently AI, MLflow, Prometheus | Azure Monitor, Grafana |


Full End-to-End Mini Project

Here’s a complete, runnable ML pipeline that demonstrates all 7 stages in one script. You can copy this, run it, and have a working ML model in under a minute:

```python
# Complete ML Life Cycle: End to End
import pandas as pd
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, roc_auc_score
import joblib

# ==============================
# Stage 1: Problem Definition
# ==============================
# Goal: Classify tumors as malignant or benign
# Success metric: F1-score > 0.95 (recall matters: missing a malignant tumor is worse)
# Type: Binary classification

# ==============================
# Stage 2: Data Collection
# ==============================
data = load_breast_cancer()
X = pd.DataFrame(data.data, columns=data.feature_names)
y = pd.Series(data.target, name='target')
print(f"Dataset shape: {X.shape}")  # (569, 30)
print(f"Class balance:\n{y.value_counts()}")

# ==============================
# Stage 3: EDA
# ==============================
print(f"\nMissing values: {X.isnull().sum().sum()}")  # 0
print(f"\nFeature summary:\n{X.describe().loc[['mean', 'std', 'min', 'max']].T.head(5)}")

# ==============================
# Stage 4: Feature Engineering
# ==============================
# Add a ratio feature: area to perimeter ratio (row-wise, so safe before the split)
X['area_perimeter_ratio'] = X['mean area'] / X['mean perimeter']

# Train/test split (split before scaling!)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Scale: fit on training data only
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.transform(X_test)

# ==============================
# Stage 5: Model Training
# ==============================
model = RandomForestClassifier(
    n_estimators=100,
    max_depth=None,
    min_samples_split=2,
    random_state=42
)
model.fit(X_train_scaled, y_train)

# Cross-validation on training data; the pipeline refits the scaler inside each fold
cv_pipeline = make_pipeline(
    StandardScaler(),
    RandomForestClassifier(n_estimators=100, max_depth=None, min_samples_split=2, random_state=42)
)
cv_scores = cross_val_score(cv_pipeline, X_train, y_train, cv=5, scoring='f1_weighted')
print(f"\nCross-validation F1 scores: {cv_scores}")
print(f"Mean CV F1: {cv_scores.mean():.4f} (+/- {cv_scores.std():.4f})")

# ==============================
# Stage 6: Model Evaluation
# ==============================
y_pred = model.predict(X_test_scaled)
y_prob = model.predict_proba(X_test_scaled)[:, 1]

print("\n=== Final Evaluation on Test Set ===")
print(classification_report(y_test, y_pred, target_names=data.target_names))
print(f"AUC-ROC: {roc_auc_score(y_test, y_prob):.4f}")

# Feature importance
importances = pd.Series(model.feature_importances_, index=X.columns)
print("\nTop 5 Most Important Features:")
print(importances.nlargest(5))

# ==============================
# Stage 7: Deployment (Save model)
# ==============================
joblib.dump(model, 'breast_cancer_model.pkl')
joblib.dump(scaler, 'breast_cancer_scaler.pkl')
print("\nModel saved to breast_cancer_model.pkl")
print("Scaler saved to breast_cancer_scaler.pkl")
print("\n✅ ML Life Cycle Complete!")
```

Expected Output (abbreviated):

```
Dataset shape: (569, 30)
Class balance:
1    357
0    212

Missing values: 0

Cross-validation F1 scores: [0.9714 0.9736 0.9649 0.9736 0.9780]
Mean CV F1: 0.9723 (+/- 0.0044)

=== Final Evaluation on Test Set ===
              precision    recall  f1-score   support

   malignant       0.97      0.95      0.96        42
      benign       0.97      0.99      0.98        72

    accuracy                           0.97       114

AUC-ROC: 0.9978

Top 5 Most Important Features:
worst perimeter         0.1324
worst concave points    0.1216
worst area              0.1148
mean concave points     0.0981
area_perimeter_ratio    0.0743

Model saved to breast_cancer_model.pkl

✅ ML Life Cycle Complete!
```

Frequently Asked Questions

What is the machine learning life cycle?

The ML life cycle is a 7-stage iterative process that covers problem definition, data collection, EDA, feature engineering, model training, evaluation, and deployment with monitoring. It’s called a “life cycle” because it repeats — models need to be retrained as real-world data changes over time.

How long does the machine learning life cycle take?

It depends on the project, but a realistic breakdown for a production project looks like this: problem definition takes 1–2 weeks (stakeholder alignment is slow), data collection and EDA take 2–4 weeks, feature engineering and training take 1–3 weeks, and deployment and monitoring setup takes 1–2 weeks. Most production ML projects take 2–4 months from start to first deployment.

What Python libraries are best for each stage?

For data collection and EDA, use Pandas, Seaborn, and Plotly. For feature engineering and model training, Scikit-learn covers most use cases; use XGBoost or LightGBM for tabular data competitions and production systems. For deployment, FastAPI is a popular modern choice. For monitoring, Evidently AI is excellent and free.

What is the difference between the ML life cycle and MLOps?

The ML life cycle describes what needs to happen — from problem definition to deployment. MLOps is the set of practices, tools, and culture that make it happen reliably at scale. MLOps adds CI/CD pipelines, automated testing, model registries, and infrastructure as code to the life cycle. You can follow the ML life cycle without MLOps, but you can’t do MLOps without the life cycle.

Why do ML models fail in production?

The most common reasons are: data drift (real-world data changes but the model doesn’t), data leakage during training (the model learned from information it won’t have in production), poor feature engineering (garbage in, garbage out), and lack of monitoring (nobody notices the model degraded). Following the life cycle properly prevents most of these issues.

How do I know when to retrain my model?

Set up automated monitoring with a tool like Evidently AI and define threshold alerts. Common triggers for retraining include: data drift detected across key features, prediction accuracy drops below a defined threshold, business metrics tied to model output start declining, or a scheduled periodic retraining (e.g., monthly).
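Those triggers can be combined into one rule that a scheduled job evaluates. This is a hypothetical sketch: the MonitoringSnapshot fields and the default thresholds (30% of key features drifted, F1 below 0.90, 30 days since training) are illustrative assumptions, and the business-metric trigger is left out because it depends on your product.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class MonitoringSnapshot:
    drift_share: float        # fraction of key features flagged as drifted (hypothetical metric)
    current_f1: float         # F1 on recently labeled production data
    days_since_training: int

def retraining_reasons(snapshot: MonitoringSnapshot,
                       max_drift_share: float = 0.3,
                       min_f1: float = 0.90,
                       max_age_days: int = 30) -> List[str]:
    """Return the triggered retraining conditions; an empty list means no retrain."""
    reasons = []
    if snapshot.drift_share > max_drift_share:
        reasons.append("data drift")
    if snapshot.current_f1 < min_f1:
        reasons.append("performance below threshold")
    if snapshot.days_since_training >= max_age_days:
        reasons.append("scheduled retrain")
    return reasons

print(retraining_reasons(MonitoringSnapshot(drift_share=0.4, current_f1=0.93, days_since_training=12)))
# ['data drift']
```

Returning the reasons rather than a bare True/False makes the alert easier to act on, because the team can see why a retrain was requested.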

Can I skip stages of the ML life cycle?

Technically yes, practically no. Skipping EDA is the most common shortcut — and the one that causes the most problems later. Skipping proper evaluation (trusting accuracy on imbalanced data) is another. Each stage exists because real projects get burned when they skip it.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.