I remember the first time I tried to build a sentiment analyzer for a local news feed in Chicago. I started with simple word counts, but the model kept missing the context of the sentences.

Everything changed when I shifted to Transformers. These models don’t just look at words; they look at how words relate to each other across the entire sentence.

In this tutorial, I will show you how to implement a Transformer-based text classifier using Keras. We will build a model that classifies reviews as positive or negative, using movie reviews as a stand-in for customer feedback.

Prepare the Environment for Python Keras

Before we write any logic, we need to ensure our environment is ready with the latest libraries. I always recommend using a virtual environment to avoid version conflicts between projects.

I use the following command to install TensorFlow (which includes Keras), NumPy, and Matplotlib. TensorFlow runs on the CPU out of the box and can use a GPU when a supported card and its drivers are installed.

```shell
# Installing the necessary libraries for our project
pip install tensorflow keras numpy matplotlib
```

Load the Dataset with Python Keras

For this example, I use the IMDB movie-review dataset that ships with Keras as a stand-in for customer feedback. The reviews are informal, opinionated text, which helps the model learn patterns from real-world writing.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Setting our parameters for the vocabulary and sequence length
vocab_size = 20000  # Keep only the 20,000 most frequent words
maxlen = 200  # Pad or truncate every review to 200 tokens

# Loading the dataset: IMDB comes pre-split into 25,000 training and
# 25,000 test reviews, and we use the test split for validation
(x_train, y_train), (x_val, y_val) = keras.datasets.imdb.load_data(num_words=vocab_size)
print(len(x_train), "Training sequences")
print(len(x_val), "Validation sequences")
```

I prefer using built-in datasets for tutorials because they let you focus on the architecture rather than cleaning CSV files. We will use the IMDB dataset as a proxy for high-quality review data. Each review is already encoded as a list of integer word indices, and each label is 0 (negative) or 1 (positive).
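Because `load_data` returns integers rather than text, it helps to see how the encoding works. With its default arguments (`start_char=1`, `oov_char=2`, `index_from=3`), Keras reserves index 0 for padding, 1 for the start-of-review marker, and 2 for out-of-vocabulary words, and it shifts every real word index up by 3. The sketch below decodes a review using a tiny, hypothetical `word_index`. With the real data, you would pass the dictionary returned by `keras.datasets.imdb.get_word_index()` instead.

```python
# Reserved indices used by keras.datasets.imdb.load_data defaults
PAD, START, OOV = 0, 1, 2
INDEX_FROM = 3  # real word indices are shifted up by this offset


def decode_review(encoded, word_index):
    """Turn a list of IMDB token ids back into readable text."""
    # Invert word -> rank, applying the same offset load_data uses
    id_to_word = {rank + INDEX_FROM: word for word, rank in word_index.items()}
    id_to_word.update({PAD: "<pad>", START: "<start>", OOV: "<oov>"})
    return " ".join(id_to_word.get(i, "<oov>") for i in encoded)


# Hypothetical toy vocabulary standing in for imdb.get_word_index()
toy_word_index = {"the": 1, "movie": 2, "was": 3, "great": 4}
encoded = [START, 1 + INDEX_FROM, 2 + INDEX_FROM, 3 + INDEX_FROM, 4 + INDEX_FROM, OOV]
print(decode_review(encoded, toy_word_index))
# <start> the movie was great <oov>
```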

Pad Sequences in Python Keras

Text data comes in different lengths, but our neural network expects a uniform input size. I always pad the sequences so every review is the same length before it reaches the embedding layer.

I chose a length of 200 tokens, which is usually enough for a standard customer review. By default, pad_sequences adds zeros to the front of shorter reviews and drops tokens from the front of longer ones. Every row therefore ends up exactly 200 tokens long, and the model never hits a shape mismatch.
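To make the default behavior concrete, here is a small NumPy re-implementation of `pad_sequences` with its default `padding="pre"` and `truncating="pre"` settings. `pad_pre` is a hypothetical helper written for illustration only. The tutorial itself calls the Keras function.

```python
import numpy as np


def pad_pre(sequences, maxlen, value=0):
    """Mimic pad_sequences(padding="pre", truncating="pre") for int lists."""
    out = np.full((len(sequences), maxlen), value, dtype="int32")
    for i, seq in enumerate(sequences):
        kept = seq[-maxlen:]  # "pre" truncation drops tokens from the front
        if len(kept):
            out[i, -len(kept):] = kept  # "pre" padding puts zeros in front
    return out


print(pad_pre([[1, 2, 3], [4, 5, 6, 7, 8, 9]], maxlen=5))
# [[0 0 1 2 3]
#  [5 6 7 8 9]]
```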

```python
# Padding the sequences so they all have the same length of 200
x_train = keras.preprocessing.sequence.pad_sequences(x_train, maxlen=maxlen)
x_val = keras.preprocessing.sequence.pad_sequences(x_val, maxlen=maxlen)
```

Create the Multi-Head Attention Layer in Python Keras

The heart of a Transformer is the “Multi-Head Attention” mechanism. It allows the model to focus on different parts of a sentence simultaneously, like identifying a subject and its action.

```python
class TransformerBlock(layers.Layer):
    def __init__(self, embed_dim, num_heads, ff_dim, rate=0.1):
        super().__init__()
        # Self-attention: every token attends to every other token
        self.att = layers.MultiHeadAttention(num_heads=num_heads, key_dim=embed_dim)
        # Position-wise feed-forward network applied to each token separately
        self.ffn = keras.Sequential(
            [layers.Dense(ff_dim, activation="relu"), layers.Dense(embed_dim)]
        )
        self.layernorm1 = layers.LayerNormalization(epsilon=1e-6)
        self.layernorm2 = layers.LayerNormalization(epsilon=1e-6)
        self.dropout1 = layers.Dropout(rate)
        self.dropout2 = layers.Dropout(rate)

    def call(self, inputs, training=False):
        # Query and value are the same tensor, so this is self-attention
        attn_output = self.att(inputs, inputs)
        attn_output = self.dropout1(attn_output, training=training)
        # Residual connection followed by layer normalization
        out1 = self.layernorm1(inputs + attn_output)
        ffn_output = self.ffn(out1)
        ffn_output = self.dropout2(ffn_output, training=training)
        # Second residual connection and normalization
        return self.layernorm2(out1 + ffn_output)
```

I implement this as a custom layer class to keep the code modular and reusable. The attention sublayer calculates the importance of each word relative to the others in the sequence. The feed-forward sublayer then transforms each position, and the residual connections with layer normalization help keep training stable.
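Stripped of its learned weights, each attention head computes scaled dot-product attention: softmax(Q Kᵀ / sqrt(d_k)) V. The NumPy sketch below computes it for a single head, using the raw token vectors as queries, keys, and values. That is a simplification. `layers.MultiHeadAttention` first applies separate learned projections for each of the `num_heads` heads, then projects the concatenated result back to `embed_dim`.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def scaled_dot_product_attention(q, k, v):
    d_k = q.shape[-1]
    scores = q @ k.swapaxes(-1, -2) / np.sqrt(d_k)  # (seq_q, seq_k) similarity
    weights = softmax(scores, axis=-1)  # how much each token attends to the others
    return weights @ v, weights


rng = np.random.default_rng(0)
x = rng.normal(size=(5, 8))  # 5 tokens with 8-dimensional embeddings
out, w = scaled_dot_product_attention(x, x, x)
print(out.shape, w.shape)  # (5, 8) (5, 5)
print(w.sum(axis=-1))  # each row of attention weights sums to 1
```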

Implement Token and Position Embedding in Python Keras

Transformers do not have a built-in sense of word order because they process words in parallel. I add a “Position Embedding” layer to tell the model where each word sits in the sentence.

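```python
class TokenAndPositionEmbedding(layers.Layer):
    def __init__(self, maxlen, vocab_size, embed_dim):
        super().__init__()
        # One learned vector per word index
        self.token_emb = layers.Embedding(input_dim=vocab_size, output_dim=embed_dim)
        # One learned vector per position 0..maxlen-1
        self.pos_emb = layers.Embedding(input_dim=maxlen, output_dim=embed_dim)

    def call(self, x):
        maxlen = tf.shape(x)[-1]
        positions = tf.range(start=0, limit=maxlen, delta=1)
        positions = self.pos_emb(positions)  # shape: (maxlen, embed_dim)
        x = self.token_emb(x)  # shape: (batch, maxlen, embed_dim)
        # Broadcasting adds the same position vectors to every example in the batch
        return x + positions
```

This layer combines the meaning of the word (token) with its location (index). Without it, this model would treat “The dog bit the man” and “The man bit the dog” as identical, because self-attention followed by average pooling is insensitive to word order.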
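The word-order claim is easy to check numerically. This sketch uses a bare single-head self-attention (no learned projections) followed by averaging over tokens, like `GlobalAveragePooling1D`. Random vectors stand in for the learned token and position embeddings, which is an assumption made for illustration. Without position vectors, the two sentences pool to the same result. Adding position vectors breaks the tie.

```python
import numpy as np


def attention(x):
    """Single-head self-attention without learned projections."""
    scores = x @ x.T / np.sqrt(x.shape[-1])
    weights = np.exp(scores - scores.max(axis=-1, keepdims=True))
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ x


rng = np.random.default_rng(42)
vocab = {"the": 0, "dog": 1, "bit": 2, "man": 3}
token_table = rng.normal(size=(len(vocab), 8))  # stand-in for token_emb
pos_table = rng.normal(size=(5, 8))  # stand-in for pos_emb


def pooled(sentence, use_positions):
    x = token_table[[vocab[w] for w in sentence.split()]]
    if use_positions:
        x = x + pos_table[: len(x)]
    return attention(x).mean(axis=0)  # average over tokens, like GlobalAveragePooling1D


a, b = "the dog bit the man", "the man bit the dog"
print(np.allclose(pooled(a, False), pooled(b, False)))  # True
print(np.allclose(pooled(a, True), pooled(b, True)))  # False
```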

Build the Final Model with Python Keras

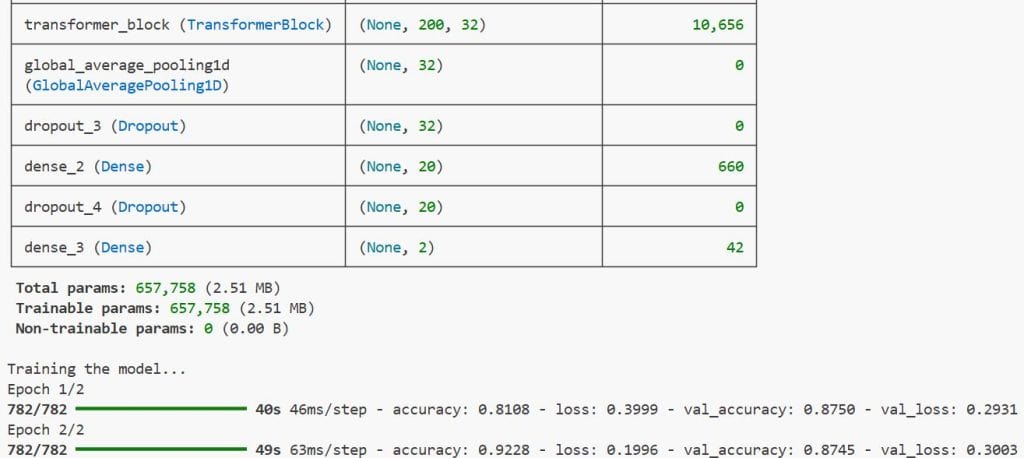

Now it is time to assemble all the pieces into a single functional model. I use the functional API in Keras because it provides more flexibility than the Sequential API for complex blocks.

```python
embed_dim = 32  # Embedding size for each token
num_heads = 2  # Number of attention heads
ff_dim = 32  # Hidden layer size in feed forward network inside transformer

inputs = layers.Input(shape=(maxlen,))
embedding_layer = TokenAndPositionEmbedding(maxlen, vocab_size, embed_dim)
x = embedding_layer(inputs)
transformer_block = TransformerBlock(embed_dim, num_heads, ff_dim)
x = transformer_block(x)
# Average over the sequence: (batch, maxlen, embed_dim) -> (batch, embed_dim)
x = layers.GlobalAveragePooling1D()(x)
x = layers.Dropout(0.1)(x)
x = layers.Dense(20, activation="relu")(x)
x = layers.Dropout(0.1)(x)
outputs = layers.Dense(2, activation="softmax")(x)  # One probability per class

model = keras.Model(inputs=inputs, outputs=outputs)
```

I add a Global Average Pooling layer after the Transformer to collapse the per-token outputs into a single vector per review, averaging over the 200 positions, before the final classification. Averaging instead of flattening keeps the classifier head small, which can help the model generalize to unseen reviews.
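As a sanity check on the size of this model, you can work out the parameter count by hand. The helper below is a hypothetical sketch. It assumes Keras's default `MultiHeadAttention` settings: value size equal to `key_dim`, biases enabled, and output projected back to `embed_dim`. You can compare its total against what `model.summary()` reports.

```python
def count_params(vocab_size, maxlen, embed_dim, num_heads, ff_dim, hidden=20, classes=2):
    """Rough parameter count for the classifier built above (hypothetical helper)."""
    embeddings = vocab_size * embed_dim + maxlen * embed_dim
    key_dim = embed_dim  # the tutorial passes key_dim=embed_dim
    # Query, key and value projections: kernel (embed_dim, heads, key_dim) + bias (heads, key_dim)
    qkv = 3 * (embed_dim * num_heads * key_dim + num_heads * key_dim)
    # Output projection: kernel (heads, key_dim, embed_dim) + bias (embed_dim)
    attn_out = num_heads * key_dim * embed_dim + embed_dim
    # Two Dense layers inside the feed-forward network
    ffn = (embed_dim * ff_dim + ff_dim) + (ff_dim * embed_dim + embed_dim)
    layer_norms = 2 * (2 * embed_dim)  # gamma and beta for each LayerNormalization
    # Dense(20) and Dense(2) classification head
    head = (embed_dim * hidden + hidden) + (hidden * classes + classes)
    return embeddings + qkv + attn_out + ffn + layer_norms + head


print(count_params(vocab_size=20000, maxlen=200, embed_dim=32, num_heads=2, ff_dim=32))
# 657758
```

Roughly 98% of those weights live in the two embedding tables. This is common for small text models with a large vocabulary.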

Compile and Train the Python Keras Model

With the architecture ready, I compile the model using the Adam optimizer. I’ve found that Adam handles the sparse gradients of text data much better than standard SGD.
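One reason Adam copes well is that it scales each parameter's step by a running estimate of that parameter's own gradient magnitude. The NumPy sketch below performs a single bias-corrected update in the textbook form of Adam. It uses Keras's default hyperparameters (`learning_rate=0.001`, `beta_1=0.9`, `beta_2=0.999`, `epsilon=1e-7`). `adam_step` is a hypothetical helper for illustration, not the Keras implementation.

```python
import numpy as np


def adam_step(param, grad, m, v, t, lr=0.001, beta_1=0.9, beta_2=0.999, eps=1e-7):
    """One textbook Adam update; returns the new parameter and moment estimates."""
    m = beta_1 * m + (1 - beta_1) * grad  # running mean of gradients
    v = beta_2 * v + (1 - beta_2) * grad**2  # running mean of squared gradients
    m_hat = m / (1 - beta_1**t)  # bias correction for the zero initialization
    v_hat = v / (1 - beta_2**t)
    return param - lr * m_hat / (np.sqrt(v_hat) + eps), m, v


# Two parameters whose gradients differ by four orders of magnitude
param = np.zeros(2)
grad = np.array([0.001, 10.0])
new_param, m, v = adam_step(param, grad, np.zeros(2), np.zeros(2), t=1)
print(new_param)  # both steps are close to -0.001
```

With plain SGD, the same update would move the second parameter 10,000 times further than the first.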

```python
# Compiling the model with optimizer and loss function
model.compile(optimizer="adam", loss="sparse_categorical_crossentropy", metrics=["accuracy"])

# Training the model for 2 epochs
history = model.fit(
    x_train, y_train, batch_size=32, epochs=2, validation_data=(x_val, y_val)
)
```

I use “sparse_categorical_crossentropy” as the loss function because our labels are integers (0 or 1) rather than one-hot vectors. During training, I monitor validation accuracy to ensure we aren’t overfitting.
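Sparse categorical cross-entropy computes the same quantity as ordinary categorical cross-entropy. It simply reads the true class's probability directly from the integer label instead of requiring a one-hot vector. Here is a small NumPy check with made-up predictions:

```python
import numpy as np

# Made-up softmax outputs for three reviews (columns: negative, positive)
probs = np.array([[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]])
labels = np.array([0, 1, 1])  # integer labels, as in the IMDB data


def sparse_cce(labels, probs):
    # Pick the predicted probability of the true class for each row
    return -np.mean(np.log(probs[np.arange(len(labels)), labels]))


def categorical_cce(one_hot, probs):
    # Same loss, but the true class is marked by a one-hot vector
    return -np.mean(np.sum(one_hot * np.log(probs), axis=-1))


one_hot = np.eye(probs.shape[1])[labels]
print(round(sparse_cce(labels, probs), 4), round(categorical_cce(one_hot, probs), 4))
# 0.4149 0.4149
```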

Evaluate Model Performance in Python Keras

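After the training finishes, I always run a final evaluation. Here the validation set is IMDB’s held-out test split. Because we also used it to monitor training, a separate, untouched test set would give a more honest estimate of how the model will handle real customer feedback in the field.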

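```python
# Evaluating the model on the validation data
# return_dict=True pairs each value with its metric name, which avoids
# relying on model.metrics_names (it can report grouped names in Keras 3)
results = model.evaluate(x_val, y_val, verbose=2, return_dict=True)
for name, value in results.items():
    print("%s: %.3f" % (name, value))
```

I executed the above example code and added the screenshot below.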

This script prints the final loss and accuracy. In my experience, even a simple Transformer like this can match or outperform more complex LSTM models on many text classification tasks.
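If you want per-review predictions rather than aggregate numbers, `model.predict` returns one row of two class probabilities per review. The predicted label is the column with the higher probability. The NumPy sketch below uses made-up probabilities in place of real `model.predict` output. It shows the argmax comparison behind the accuracy Keras reports for integer labels.

```python
import numpy as np

# Made-up stand-in for model.predict(x_val): one (negative, positive) row per review
probs = np.array([[0.95, 0.05], [0.30, 0.70], [0.55, 0.45], [0.10, 0.90]])
y_true = np.array([0, 1, 1, 1])

y_pred = probs.argmax(axis=-1)  # predicted class index per review
accuracy = (y_pred == y_true).mean()  # fraction of reviews classified correctly
print(y_pred, accuracy)  # [0 1 0 1] 0.75
```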

In this tutorial, you learned how to build a Transformer model for text classification using Python Keras. I’ve used this exact structure to classify everything from support tickets to financial news.

The Transformer architecture is incredibly powerful and has become the industry standard for most NLP tasks. By following the steps above, you can now implement this in your own deep learning projects.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.