I’ve been writing Python code for over a decade now. In my early years, I used to load entire datasets into memory without a second thought.

Then I tried to process a 4GB log file from a California data center on my local machine.

My laptop froze instantly. Since then, I’ve learned that reading files line by line isn’t just a “good practice”; it’s a survival skill for developers.

In this tutorial, I’ll show you the most efficient ways to read files line by line using real-world examples, like processing US census data or sales logs.

Method 1: Use the For Loop (The Pythonic Way)

This is my go-to method for 99% of my projects. It is memory-efficient because Python doesn’t load the whole file at once. Instead, the file object reads data through an internal buffer and hands you one line at a time.

Suppose you have a file named us_cities.txt that looks like this:

```text
New York, NY
Los Angeles, CA
Chicago, IL
Houston, TX
Phoenix, AZ
```

Here is how I would read it:

```python
# Reading US city data line by line
file_path = 'us_cities.txt'

try:
    with open(file_path, 'r') as file:
        for line in file:
            # .strip() removes surrounding whitespace, including the trailing newline (\n)
            print(line.strip())
except FileNotFoundError:
    print("The city data file was not found.")
```

You can refer to the screenshot below to see the output.

It’s clean, readable, and handles the “closing” of the file automatically thanks to the with statement.
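The same loop extends naturally to real parsing work. Below is a minimal sketch, assuming each line holds one "City, ST" pair as in us_cities.txt; the `parse_cities` helper is hypothetical, and the sample file is created on the fly with `tempfile` so the example runs on its own. Because it is a generator, only one line is held in memory at a time, and `enumerate` supplies line numbers for error messages.

```python
import os
import tempfile


def parse_cities(path):
    """Lazily yield (city, state) tuples from a 'City, ST' file.

    Hypothetical helper: assumes one 'City, ST' pair per line.
    """
    with open(path, 'r') as file:
        # enumerate(..., start=1) gives human-friendly line numbers
        for line_number, line in enumerate(file, start=1):
            line = line.strip()
            if not line:
                continue  # skip blank lines
            city, sep, state = line.rpartition(',')
            if not sep:
                raise ValueError(f"Line {line_number}: expected 'City, ST', got {line!r}")
            yield city.strip(), state.strip()


# Usage: write a small sample file, then stream it back
with tempfile.NamedTemporaryFile('w', suffix='.txt', delete=False) as tmp:
    tmp.write("New York, NY\n\nLos Angeles, CA\nChicago, IL\n")
    sample_path = tmp.name

cities = list(parse_cities(sample_path))
os.remove(sample_path)
print(cities)  # [('New York', 'NY'), ('Los Angeles', 'CA'), ('Chicago', 'IL')]
```

Note that the file stays open until the generator is exhausted, so consume it fully (as `list()` does here) or let it go out of scope.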

Method 2: Use the readline() Method

I use readline() when I need more control over exactly when a line is read.

For instance, I might be parsing a US financial report where the first line is a header and the rest are transactions.

```python
# Reading a financial report with a header
with open('wall_street_logs.txt', 'r') as file:
    # Read just the header first
    header = file.readline().strip()
    print(f"Processing Category: {header}")

    # Process the remaining lines
    line = file.readline()
    while line:
        print(f"Transaction: {line.strip()}")
        line = file.readline()
```

You can refer to the screenshot below to see the output.

Remember that readline() returns an empty string when it reaches the end of the file.
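That empty string is the only falsy value readline() returns: a blank line in the middle of the file comes back as '\n', which is truthy, so the loop does not stop early. The sketch below shows this with made-up sample data, using `io.StringIO` as a stand-in for a real file and the walrus operator (Python 3.8+) to read and test in one step.

```python
import io

# io.StringIO stands in for a real file; the trades are made-up sample data
report = io.StringIO("Q3 Trades\nBUY 100 AAPL\n\nSELL 50 MSFT\n")

header = report.readline().strip()
transactions = []

# A blank line returns '\n' (truthy), so the loop keeps going;
# only the end of the file returns '' (falsy), which ends the loop.
while (line := report.readline()):
    if line.strip():  # skip blank lines without stopping
        transactions.append(line.strip())

print(header)        # Q3 Trades
print(transactions)  # ['BUY 100 AAPL', 'SELL 50 MSFT']
```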

Method 3: Use readlines() (For Small Files)

Sometimes I know for a fact the file is small, like a list of the 50 US States.

In these cases, readlines() is handy because it puts every line into a Python list immediately.

```python
# Loading a list of US States into memory
with open('us_states.txt', 'r') as file:
    states = file.readlines()

# Now I can easily sort them or pick one by index
states.sort()
print(f"Total states loaded: {len(states)}")
print(f"First alphabetically: {states[0].strip()}")
```

You can refer to the screenshot below to see the output.

Don’t use this for large log files! It will eat up your RAM because it stores everything in a list at once.

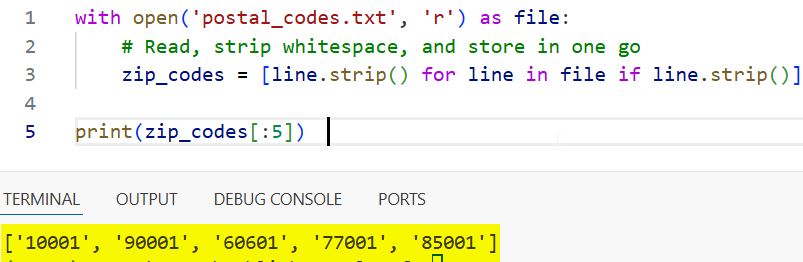
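If all you need from a large file is the first few lines, one alternative to readlines() is `itertools.islice`, which pulls a fixed number of lines from the file iterator and leaves the rest unread. In this sketch `io.StringIO` stands in for a big log file; with a real file you would pass the object returned by open() instead.

```python
import io
from itertools import islice

# io.StringIO stands in for a large log file (sample content is generated)
big_log = io.StringIO("".join(f"entry {i}\n" for i in range(1000)))

# islice takes only the first 3 lines from the iterator;
# the remaining lines are never collected into a list.
first_three = [line.rstrip('\n') for line in islice(big_log, 3)]
print(first_three)  # ['entry 0', 'entry 1', 'entry 2']
```

The file position simply advances past those three lines, so a later loop over the same file object would continue from the fourth line.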

Method 4: Use List Comprehension

If I want to read all lines and clean them up in a single line of code, I use a list comprehension.

This is great for creating a clean list of US ZIP codes from a raw text file.

```python
# Creating a clean list of zip codes
with open('postal_codes.txt', 'r') as file:
    # Read, strip whitespace, and skip blank lines in one go
    zip_codes = [line.strip() for line in file if line.strip()]

print(zip_codes[:5])  # Show the first 5 zip codes
```

You can refer to the screenshot below to see the output.
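A list comprehension still builds the whole list in memory. When the file is large and you only need an aggregate, swapping the square brackets for parentheses gives a generator expression that produces one cleaned value at a time. This is a sketch with made-up ZIP codes, using `io.StringIO` in place of postal_codes.txt.

```python
import io

# io.StringIO stands in for postal_codes.txt (sample data is made up)
raw = io.StringIO("10001\n\n90210\n60601\n  \n10001\n")

# Parentheses instead of square brackets create generator expressions:
# values flow through one at a time and are never stored as a list.
cleaned = (line.strip() for line in raw)
non_blank = (z for z in cleaned if z)

# sum() consumes the pipeline lazily, so memory use stays flat
total = sum(1 for _ in non_blank)
print(total)  # 4
```

Keep in mind that a generator can be consumed only once; iterating `non_blank` again would yield nothing.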

Method 5: Handle Large Files with a Buffer Size

When I’m dealing with massive datasets (like a national shipping log), I sometimes read fixed-size “chunks” instead of lines.

This is a bit more advanced, but it caps how much data sits in memory at once, no matter how long an individual line is.
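The catch is that fixed-size reads ignore line boundaries, so a chunk can end halfway through a line. One common way to handle this is to carry the unfinished tail of each chunk into the next read. The `lines_from_chunks` helper below is a hypothetical sketch of that idea, shown with a deliberately tiny chunk size and made-up shipping records.

```python
import io


def lines_from_chunks(file_object, chunk_size=1024):
    """Yield complete lines (without the newline) from fixed-size reads.

    Hypothetical helper: any partial line at the end of a chunk is kept
    in `leftover` and joined with the start of the next chunk.
    """
    leftover = ''
    while True:
        data = file_object.read(chunk_size)
        if not data:  # read() returns '' at end of file
            break
        data = leftover + data
        pieces = data.split('\n')
        leftover = pieces.pop()  # last piece may be an unfinished line
        yield from pieces
    if leftover:
        yield leftover  # final line that had no trailing newline


# Usage: a tiny chunk size forces lines to be split across reads
log = io.StringIO("PKG-001,Dallas\nPKG-002,Denver\nPKG-003,Boston")
result = list(lines_from_chunks(log, chunk_size=5))
print(result)  # ['PKG-001,Dallas', 'PKG-002,Denver', 'PKG-003,Boston']
```

One trade-off: if a single line is enormous, `leftover` grows until its newline arrives, so this only bounds memory for files with reasonably sized lines.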

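```python
# Reading a massive shipping log in chunks
def read_in_chunks(file_object, chunk_size=1024):
    """Yield successive chunks of up to chunk_size characters."""
    while True:
        data = file_object.read(chunk_size)
        if not data:  # read() returns '' at end of file
            break
        yield data


def process_data(piece):
    # Hypothetical placeholder; swap in your own processing logic
    print(len(piece))


with open('national_shipping_data.txt', 'r') as f:
    for piece in read_in_chunks(f):
        # Process the chunk (this requires careful logic for line breaks)
        process_data(piece)
```

Best Practices I’ve Learned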

After a decade of coding, here are the three rules I live by:

- Always use the with statement. It ensures your file closes even if your code crashes.

- Strip your lines. Always use .strip() or .rstrip('\n') to avoid stray newlines and weird spacing issues in your data.

- Check for existence. I always use a try-except block to catch FileNotFoundError so the program doesn’t just die.
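The first two rules are easy to check for yourself. This sketch writes a throwaway file with `tempfile`, raises a simulated error inside the with block, and then confirms the file was closed anyway. It also shows why the choice between .strip() and .rstrip('\n') matters: .strip() removes leading spaces too, which can destroy meaningful indentation.

```python
import os
import tempfile

# Create a throwaway file containing one indented line
with tempfile.NamedTemporaryFile('w', suffix='.txt', delete=False) as tmp:
    tmp.write("    indented value  \n")
    path = tmp.name

# Rule 1: the with statement closes the file even when an error is raised
try:
    with open(path, 'r') as file:
        raw_line = file.readline()
        raise RuntimeError("simulated crash mid-read")
except RuntimeError:
    pass

print(file.closed)  # True

# Rule 2: .strip() removes ALL surrounding whitespace...
print(repr(raw_line.strip()))       # 'indented value'
# ...while .rstrip('\n') removes only the trailing newline
print(repr(raw_line.rstrip('\n')))  # '    indented value  '

os.remove(path)
```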

Reading files line by line is a fundamental task in Python.

Whether you are processing a small list of US states or a massive log of transactions from a New York server, using the right method will keep your code fast and your memory usage low.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.