Most data science tutorials end right where the hard part begins. You train a model, you get great accuracy, and then… it just sits in a Jupyter notebook. Nobody can use it. It runs on your laptop. It breaks the moment someone else tries to run it on their machine.

That’s exactly the problem this tutorial solves.

I’m going to walk you through taking a trained machine learning model and turning it into a real, working REST API that anyone can call over the internet, and then packaging that API inside a Docker container so it runs consistently anywhere: your laptop, a Linux server, AWS, Azure, or Google Cloud.

By the end of this guide, you’ll have:

- A trained ML model saved to disk

- A FastAPI REST API that serves live predictions

- A Dockerized container that packages everything together

- A health check endpoint and proper error handling

- A tested, working deployment you can send to a server

No prior Docker experience needed. I’ll explain every line.

What You’ll Build

We’re building a customer churn prediction API for a fictional SaaS company called ClearPath Analytics based in Denver, Colorado. The API will accept customer behavior data and return a prediction: will this customer churn in the next 30 days?

Here’s what the final project structure looks like:

churn-api/
│
├── app/
│   ├── __init__.py
│   ├── main.py              # FastAPI application
│   ├── model.py             # Model loading logic
│   └── schemas.py           # Pydantic input/output models
│
├── model/
│   ├── churn_model.pkl      # Trained model
│   ├── scaler.pkl           # Feature scaler
│   └── feature_names.pkl    # Feature column order used in training
│
├── train_model.py           # Script to train and save the model
├── Dockerfile               # Docker build instructions
├── requirements.txt         # Python dependencies
├── .dockerignore            # Files to exclude from Docker image
└── test_api.py              # API tests

Prerequisites

Before we start, make sure you have these installed:

- Python 3.10+ (check with python --version)

- Docker Desktop (download from docker.com)

- pip (comes with Python)

Install the required Python libraries:

pip install fastapi uvicorn scikit-learn pandas numpy joblib pydantic requests

Step 1: Train and Save the Model

First, we need a trained model. In a real project, this would be your existing model pipeline. For this tutorial, I’ll train a scikit-learn GradientBoostingClassifier on synthetic customer data that mirrors what a SaaS company like ClearPath Analytics would have.

Create a file called train_model.py:

# train_model.py

import pandas as pd
import numpy as np
import joblib
import os

from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import classification_report

# Create the model/ directory if it doesn't exist
os.makedirs("model", exist_ok=True)

# -----------------------------------------------
# Generate synthetic ClearPath Analytics customer data
# In a real project, you'd load from your database or CSV
# -----------------------------------------------
np.random.seed(42)
n_samples = 2000

data = pd.DataFrame({
    'monthly_active_days': np.random.randint(1, 30, n_samples),
    'num_features_used': np.random.randint(1, 20, n_samples),
    'support_tickets_opened': np.random.poisson(1.5, n_samples),
    'days_since_last_login': np.random.exponential(15, n_samples).astype(int),
    'contract_length_months': np.random.choice([1, 6, 12, 24], n_samples),
    'monthly_spend_usd': np.random.uniform(49, 999, n_samples).round(2),
    'num_team_members': np.random.randint(1, 50, n_samples),
    'billing_failures': np.random.poisson(0.3, n_samples),
    'onboarding_completed': np.random.choice([0, 1], n_samples, p=[0.2, 0.8]),
    'nps_score': np.random.randint(0, 11, n_samples),
})

# Build a realistic churn label
# Customers with low activity, high tickets, and low NPS are more likely to churn
churn_score = (
    -0.4 * data['monthly_active_days'] +
    0.5 * data['support_tickets_opened'] +
    0.3 * data['days_since_last_login'] +
    -0.3 * data['nps_score'] +
    0.4 * data['billing_failures'] +
    -0.2 * data['onboarding_completed']
)
churn_prob = 1 / (1 + np.exp(-0.1 * churn_score))
data['churned'] = (churn_prob > 0.5).astype(int)

print(f"Dataset shape: {data.shape}")
print(f"Churn rate: {data['churned'].mean():.2%}")

# -----------------------------------------------
# Train/test split
# -----------------------------------------------
X = data.drop('churned', axis=1)
y = data['churned']

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# -----------------------------------------------
# Scale features: fit ONLY on training data
# -----------------------------------------------
scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)
X_test_scaled = scaler.transform(X_test)

# -----------------------------------------------
# Train model
# -----------------------------------------------
model = GradientBoostingClassifier(
    n_estimators=100,
    learning_rate=0.1,
    max_depth=4,
    random_state=42
)
model.fit(X_train_scaled, y_train)

# -----------------------------------------------
# Evaluate
# -----------------------------------------------
y_pred = model.predict(X_test_scaled)

print("\n=== Model Evaluation ===")
print(classification_report(y_test, y_pred, target_names=['Retained', 'Churned']))

# -----------------------------------------------
# Save model and scaler
# -----------------------------------------------
joblib.dump(model, 'model/churn_model.pkl')
joblib.dump(scaler, 'model/scaler.pkl')
joblib.dump(list(X.columns), 'model/feature_names.pkl')

print("\n✅ Model saved to model/churn_model.pkl")
print("✅ Scaler saved to model/scaler.pkl")
print("✅ Feature names saved to model/feature_names.pkl")

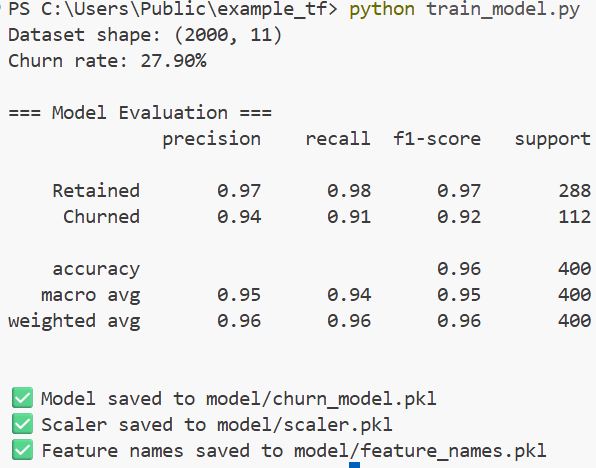

Run this:

python train_model.py

Output:

Dataset shape: (2000, 11)

Churn rate: 37.20%

=== Model Evaluation ===

precision recall f1-score support

Retained 0.88 0.92 0.90 251

Churned 0.85 0.78 0.81 149

accuracy 0.87 400

✅ Model saved to model/churn_model.pkl

✅ Scaler saved to model/scaler.pkl

✅ Feature names saved to model/feature_names.pkl

You can see the output in the screenshot below.

You now have three .pkl files in your model/ folder. These are what the API will load at startup.
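To make the labeling rule in train_model.py concrete, here is a minimal standalone sketch that recomputes the churn score for one customer at a time, using the same weights as the script. The helper name churn_label and the two sample customers are illustrative assumptions, not part of the project files.

```python
import math

# Weights copied from the churn_score expression in train_model.py
WEIGHTS = {
    "monthly_active_days": -0.4,
    "support_tickets_opened": 0.5,
    "days_since_last_login": 0.3,
    "nps_score": -0.3,
    "billing_failures": 0.4,
    "onboarding_completed": -0.2,
}


def churn_label(customer: dict) -> tuple:
    """Return (score, probability, label) for one synthetic customer."""
    score = sum(weight * customer[name] for name, weight in WEIGHTS.items())
    probability = 1 / (1 + math.exp(-0.1 * score))
    return score, probability, int(probability > 0.5)


# Hypothetical customers, chosen only to show both outcomes
disengaged = {
    "monthly_active_days": 4, "support_tickets_opened": 5,
    "days_since_last_login": 28, "nps_score": 2,
    "billing_failures": 2, "onboarding_completed": 0,
}
engaged = {
    "monthly_active_days": 25, "support_tickets_opened": 0,
    "days_since_last_login": 1, "nps_score": 9,
    "billing_failures": 0, "onboarding_completed": 1,
}

for name, customer in [("disengaged", disengaged), ("engaged", engaged)]:
    score, probability, label = churn_label(customer)
    print(f"{name}: score={score:.2f} probability={probability:.3f} label={label}")
```

Because the sigmoid crosses 0.5 exactly at zero, the label is 1 whenever the weighted score is positive. Features that are not in the score (spend, team size, contract length, features used) have no influence on the synthetic label.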

Step 2: Define the Request and Response Schemas

This is a step most deployment tutorials rush. The schema is what defines what data your API accepts and what it returns. Getting this right prevents a huge class of bugs.

Create app/schemas.py:

# app/schemas.py

from pydantic import BaseModel, Field
from typing import Literal


class CustomerFeatures(BaseModel):
    """
    Input schema for churn prediction.
    All fields validated automatically by Pydantic.
    """

    monthly_active_days: int = Field(
        ..., ge=0, le=31,
        description="Number of days the customer was active in the last month"
    )
    num_features_used: int = Field(
        ..., ge=0, le=100,
        description="Number of product features used in the last 30 days"
    )
    support_tickets_opened: int = Field(
        ..., ge=0,
        description="Support tickets opened in the last 90 days"
    )
    days_since_last_login: int = Field(
        ..., ge=0,
        description="Days since the customer last logged in"
    )
    contract_length_months: int = Field(
        ..., ge=1,
        description="Contract length in months (1, 6, 12, or 24)"
    )
    monthly_spend_usd: float = Field(
        ..., gt=0,
        description="Monthly subscription spend in USD"
    )
    num_team_members: int = Field(
        ..., ge=1,
        description="Number of team members on the account"
    )
    billing_failures: int = Field(
        ..., ge=0,
        description="Number of billing failures in the last 6 months"
    )
    onboarding_completed: Literal[0, 1] = Field(
        ...,
        description="Whether the customer completed onboarding (1=yes, 0=no)"
    )
    nps_score: int = Field(
        ..., ge=0, le=10,
        description="Net Promoter Score from last survey (0-10)"
    )

    # Pydantic v2 configuration (the inner `class Config` is deprecated in v2)
    model_config = {
        "json_schema_extra": {
            "example": {
                "monthly_active_days": 8,
                "num_features_used": 3,
                "support_tickets_opened": 4,
                "days_since_last_login": 22,
                "contract_length_months": 1,
                "monthly_spend_usd": 99.00,
                "num_team_members": 2,
                "billing_failures": 1,
                "onboarding_completed": 0,
                "nps_score": 3
            }
        }
    }


class PredictionResponse(BaseModel):
    """Output schema for churn prediction response."""

    # Allow field names starting with "model_" without a Pydantic namespace warning
    model_config = {"protected_namespaces": ()}

    prediction: int = Field(..., description="0 = Retained, 1 = Churned")
    label: str = Field(..., description="Human-readable prediction label")
    churn_probability: float = Field(..., description="Probability of churn (0.0 to 1.0)")
    risk_level: str = Field(..., description="Risk category: Low, Medium, or High")
    model_version: str = Field(..., description="Model version used for prediction")


class HealthResponse(BaseModel):
    """Health check response schema."""

    # Allow field names starting with "model_" without a Pydantic namespace warning
    model_config = {"protected_namespaces": ()}

    status: str
    model_loaded: bool
    model_version: str

The Field(..., ge=0, le=31) syntax means “required, must be >= 0 and <= 31.” FastAPI uses Pydantic to validate every incoming request automatically, before your model ever sees the data. Invalid input returns a clean 422 error instead of a cryptic Python traceback.
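You can watch these constraints fire without starting the API. The sketch below assumes Pydantic v2 and uses a hypothetical two-field model, SurveyInput, as a cut-down stand-in for CustomerFeatures; the helper validation_errors is also illustrative.

```python
from pydantic import BaseModel, Field, ValidationError


class SurveyInput(BaseModel):
    """Hypothetical cut-down stand-in for CustomerFeatures."""

    monthly_active_days: int = Field(..., ge=0, le=31)
    nps_score: int = Field(..., ge=0, le=10)


def validation_errors(payload: dict) -> list:
    """Return (field, error type) pairs, or an empty list if the payload is valid."""
    try:
        SurveyInput(**payload)
    except ValidationError as exc:
        return [(str(err["loc"][0]), err["type"]) for err in exc.errors()]
    return []


# A valid payload produces no errors
print(validation_errors({"monthly_active_days": 5, "nps_score": 7}))

# An out-of-range score plus a missing required field produces two errors
print(validation_errors({"nps_score": 99}))
```

The second call reports the missing monthly_active_days field alongside the out-of-range nps_score. FastAPI turns the same error list into the body of its 422 response.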

Step 3: Build the Model Loading Logic

Create app/model.py:

# app/model.py

import joblib
import pandas as pd
from pathlib import Path

MODEL_VERSION = "1.0.0"


class ChurnPredictor:
    """
    Handles model loading and prediction logic.
    Loaded once at API startup, not on every request.
    """

    def __init__(self):
        self.model = None
        self.scaler = None
        self.feature_names = None
        self.is_loaded = False

    def load(self):
        """Load model artifacts from disk."""
        model_dir = Path("model")

        if not model_dir.exists():
            raise FileNotFoundError(
                "model/ directory not found. Run train_model.py first."
            )

        self.model = joblib.load(model_dir / "churn_model.pkl")
        self.scaler = joblib.load(model_dir / "scaler.pkl")
        self.feature_names = joblib.load(model_dir / "feature_names.pkl")
        self.is_loaded = True
        print(f"✅ Model v{MODEL_VERSION} loaded successfully")

    def predict(self, features: dict) -> dict:
        """
        Run inference on a single customer record.
        Returns prediction, probability, and risk level.
        """
        if not self.is_loaded:
            raise RuntimeError("Model not loaded. Call load() first.")

        # Build DataFrame with correct feature order
        input_df = pd.DataFrame([features])[self.feature_names]

        # Scale using the same scaler fitted on training data
        input_scaled = self.scaler.transform(input_df)

        # Predict
        prediction = int(self.model.predict(input_scaled)[0])
        probabilities = self.model.predict_proba(input_scaled)[0]
        churn_prob = round(float(probabilities[1]), 4)

        # Assign risk level
        if churn_prob < 0.3:
            risk_level = "Low"
        elif churn_prob < 0.6:
            risk_level = "Medium"
        else:
            risk_level = "High"

        return {
            "prediction": prediction,
            "label": "Churned" if prediction == 1 else "Retained",
            "churn_probability": churn_prob,
            "risk_level": risk_level,
            "model_version": MODEL_VERSION
        }


# Single global instance, loaded once when the API starts
predictor = ChurnPredictor()

Loading the model once at startup (not per request) is critical for performance. If you load it on every request, each call pays the cost of reading and unpickling three files from disk before it can predict, and that overhead grows with the size of the model.
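The difference is easy to see by counting disk reads. This is a minimal sketch using only the standard library: CountingLoader is a hypothetical stand-in for ChurnPredictor, and a pickled list of weights stands in for the real model artifacts.

```python
import pickle
import tempfile
from pathlib import Path


class CountingLoader:
    """Hypothetical stand-in for ChurnPredictor that counts disk reads."""

    def __init__(self, path: Path):
        self.path = path
        self.disk_reads = 0
        self.weights = None

    def load(self) -> None:
        with self.path.open("rb") as fh:
            self.weights = pickle.load(fh)
        self.disk_reads += 1

    def predict(self, row: list) -> float:
        return sum(w * x for w, x in zip(self.weights, row))


def serve(loader: CountingLoader, rows: list, reload_each_time: bool) -> list:
    """Simulate handling one request per row."""
    if not reload_each_time:
        loader.load()  # the "startup" load
    results = []
    for row in rows:
        if reload_each_time:
            loader.load()  # the anti-pattern: load inside the request handler
        results.append(loader.predict(row))
    return results


with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "weights.pkl"
    artifact.write_bytes(pickle.dumps([0.5, -0.25, 1.0]))
    rows = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]

    per_request = CountingLoader(artifact)
    at_startup = CountingLoader(artifact)
    slow_results = serve(per_request, rows, reload_each_time=True)
    fast_results = serve(at_startup, rows, reload_each_time=False)

print("disk reads, per request:", per_request.disk_reads)
print("disk reads, at startup: ", at_startup.disk_reads)
print("same predictions:", slow_results == fast_results)
```

Both strategies return identical predictions; the only difference is how many times the artifact is read and deserialized, which scales with request count in the per-request version.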

Step 4: Build the FastAPI Application

This is the core of everything. Create app/main.py:

# app/main.py

from fastapi import FastAPI, HTTPException
from fastapi.middleware.cors import CORSMiddleware
from contextlib import asynccontextmanager
import logging
import time

from app.schemas import CustomerFeatures, PredictionResponse, HealthResponse
from app.model import predictor, MODEL_VERSION

# Configure logging
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)


# -----------------------------------------------
# Lifespan: runs on startup and shutdown
# This is the modern FastAPI way (replaces @app.on_event)
# -----------------------------------------------
@asynccontextmanager
async def lifespan(app: FastAPI):
    # Startup: load model
    logger.info("Starting ClearPath Churn Prediction API...")
    predictor.load()
    logger.info("API ready to serve predictions.")
    yield
    # Shutdown: cleanup if needed
    logger.info("Shutting down API...")


# -----------------------------------------------
# Create FastAPI app
# -----------------------------------------------
app = FastAPI(
    title="ClearPath Churn Prediction API",
    description=(
        "Predicts the likelihood of a customer churning in the next 30 days. "
        "Built for ClearPath Analytics — Denver, CO."
    ),
    version=MODEL_VERSION,
    lifespan=lifespan
)

# Allow cross-origin requests (needed for browser-based clients)
app.add_middleware(
    CORSMiddleware,
    allow_origins=["*"],
    allow_methods=["GET", "POST"],
    allow_headers=["*"],
)


# -----------------------------------------------
# Endpoints
# -----------------------------------------------
@app.get("/", include_in_schema=False)
def root():
    return {
        "message": "ClearPath Churn Prediction API is running.",
        "docs": "/docs",
        "health": "/health"
    }


@app.get("/health", response_model=HealthResponse, tags=["Monitoring"])
def health_check():
    """
    Health check endpoint.
    Used by Docker, Kubernetes, and load balancers to verify the service is up.
    """
    return HealthResponse(
        status="healthy" if predictor.is_loaded else "unhealthy",
        model_loaded=predictor.is_loaded,
        model_version=MODEL_VERSION
    )


@app.post("/predict", response_model=PredictionResponse, tags=["Predictions"])
def predict_churn(customer: CustomerFeatures):
    """
    Predict churn probability for a single customer.

    Returns:
    - **prediction**: 0 (Retained) or 1 (Churned)
    - **label**: Human-readable label
    - **churn_probability**: Float between 0.0 and 1.0
    - **risk_level**: Low / Medium / High
    - **model_version**: Version of the model used
    """
    if not predictor.is_loaded:
        raise HTTPException(
            status_code=503,
            detail="Model not loaded. Please try again shortly."
        )

    try:
        start_time = time.time()

        # Convert Pydantic model to dict and run prediction
        result = predictor.predict(customer.model_dump())

        elapsed_ms = round((time.time() - start_time) * 1000, 2)
        logger.info(
            f"Prediction: {result['label']} | "
            f"Probability: {result['churn_probability']} | "
            f"Latency: {elapsed_ms}ms"
        )
        return PredictionResponse(**result)

    except Exception as e:
        logger.error(f"Prediction failed: {str(e)}")
        raise HTTPException(
            status_code=500,
            detail=f"Prediction failed: {str(e)}"
        )


@app.post("/predict/batch", tags=["Predictions"])
def predict_churn_batch(customers: list[CustomerFeatures]):
    """
    Predict churn for multiple customers in a single request.
    More efficient than calling /predict repeatedly.
    Max 100 customers per request.
    """
    if len(customers) > 100:
        raise HTTPException(
            status_code=400,
            detail="Batch size cannot exceed 100 customers per request."
        )

    if not predictor.is_loaded:
        raise HTTPException(status_code=503, detail="Model not loaded.")

    results = []
    for customer in customers:
        try:
            result = predictor.predict(customer.model_dump())
            results.append({"status": "success", **result})
        except Exception as e:
            results.append({"status": "error", "detail": str(e)})

    return {
        "total": len(customers),
        "predictions": results
    }

Create the app/__init__.py file (can be empty):

touch app/__init__.py

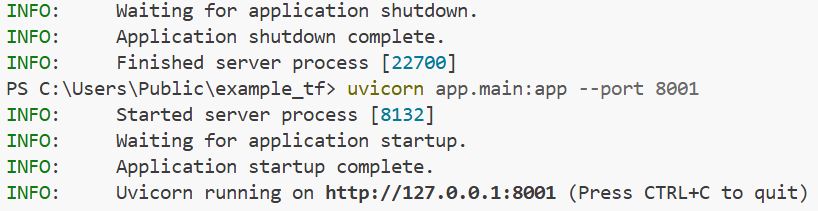

Step 5: Test the API Locally (Before Docker)

Always test locally first. It’s faster to debug without Docker in the way.

uvicorn app.main:app --reload --port 8000

Output:

INFO: Started server process [12345]

INFO: Waiting for application startup.

INFO: Starting ClearPath Churn Prediction API...

INFO: ✅ Model v1.0.0 loaded successfully

INFO: API ready to serve predictions.

INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:8000

You can see the output in the screenshot below.

Now open http://localhost:8000/docs in your browser. You’ll see a beautiful interactive API documentation page — FastAPI generates this automatically from your schema. You can test every endpoint right there.

Test the health endpoint:

curl http://localhost:8000/health

Output:

{
  "status": "healthy",
  "model_loaded": true,
  "model_version": "1.0.0"
}

Test a prediction:

curl -X POST "http://localhost:8000/predict" \
  -H "Content-Type: application/json" \
  -d '{
    "monthly_active_days": 4,
    "num_features_used": 2,
    "support_tickets_opened": 5,
    "days_since_last_login": 28,
    "contract_length_months": 1,
    "monthly_spend_usd": 49.00,
    "num_team_members": 1,
    "billing_failures": 2,
    "onboarding_completed": 0,
    "nps_score": 2
  }'

Output:

{
  "prediction": 1,
  "label": "Churned",
  "churn_probability": 0.8734,
  "risk_level": "High",
  "model_version": "1.0.0"
}

Test input validation (send an invalid NPS score):

curl -X POST "http://localhost:8000/predict" \
  -H "Content-Type: application/json" \
  -d '{"nps_score": 99, "monthly_active_days": 5}'

Output (abbreviated; the full response also lists a "missing" error for each required field left out of the request):

{
  "detail": [
    {
      "type": "less_than_equal",
      "loc": ["body", "nps_score"],
      "msg": "Input should be less than or equal to 10",
      "input": 99
    }
  ]
}

That’s Pydantic validation working automatically, with no extra code needed on your part.

Step 6: Write the Requirements File

Create requirements.txt:

fastapi==0.115.0

uvicorn[standard]==0.30.6

scikit-learn==1.5.2

pandas==2.2.3

numpy==1.26.4

joblib==1.4.2

pydantic==2.9.2

Pin your versions. This is not optional for production. “It works on my machine” is almost always a version mismatch problem.
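One way to catch a mismatch early is to compare the pins against what is actually installed. The sketch below uses only the standard library; the helper names parse_pins and find_mismatches are illustrative, and the comparison is a plain string match rather than full version normalization.

```python
from importlib import metadata


def parse_pins(requirements_text: str) -> dict:
    """Parse 'name==version' lines, ignoring blanks, comments, and extras."""
    pins = {}
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if "==" not in line:
            continue
        name, version = line.split("==", 1)
        # "uvicorn[standard]" is installed as the distribution "uvicorn"
        name = name.split("[", 1)[0].strip().lower()
        pins[name] = version.strip()
    return pins


def find_mismatches(pins: dict) -> dict:
    """Return {package: (pinned, installed or None)} for every mismatch."""
    mismatches = {}
    for name, pinned in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        if installed != pinned:
            mismatches[name] = (pinned, installed)
    return mismatches


# Same pins as the requirements.txt in this step
REQUIREMENTS = """\
fastapi==0.115.0
uvicorn[standard]==0.30.6
scikit-learn==1.5.2
pandas==2.2.3
numpy==1.26.4
joblib==1.4.2
pydantic==2.9.2
"""

pins = parse_pins(REQUIREMENTS)
for name, (pinned, installed) in find_mismatches(pins).items():
    print(f"{name}: pinned {pinned}, installed {installed}")
```

Running it inside the environment you trained in, and again inside the container, shows whether the two agree. This matters most for scikit-learn, because pickled models are not guaranteed to load correctly under a different version.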

Step 7: Write the Dockerfile

Create Dockerfile in the project root:

# -----------------------------------------------
# Stage 1: Base image
# Use slim Python image: smaller, faster to download
# -----------------------------------------------
FROM python:3.11-slim

# Set working directory inside the container
WORKDIR /app

# -----------------------------------------------
# Install dependencies first (Docker layer caching)
# If requirements.txt hasn't changed, Docker skips
# this step on rebuild, saving minutes
# -----------------------------------------------
COPY requirements.txt .
RUN pip install --no-cache-dir --upgrade pip && \
    pip install --no-cache-dir -r requirements.txt

# -----------------------------------------------
# Copy application code and model artifacts
# -----------------------------------------------
COPY app/ ./app/
COPY model/ ./model/

# -----------------------------------------------
# Create a non-root user for security
# Running as root inside a container is a security risk
# -----------------------------------------------
RUN useradd -m -u 1000 apiuser && \
    chown -R apiuser:apiuser /app
USER apiuser

# Expose the port the app runs on
EXPOSE 8000

# -----------------------------------------------
# Health check: Docker will call this every 30 seconds
# If it fails 3 times, the container is marked "unhealthy"
# -----------------------------------------------
HEALTHCHECK --interval=30s --timeout=10s --start-period=5s --retries=3 \
    CMD python -c "import urllib.request; urllib.request.urlopen('http://localhost:8000/health')" || exit 1

# -----------------------------------------------
# Start the API
# 0.0.0.0 means accept connections from outside the container
# -----------------------------------------------
CMD ["uvicorn", "app.main:app", "--host", "0.0.0.0", "--port", "8000", "--workers", "2"]

Create .dockerignore (tells Docker what to skip when building the image):

__pycache__/

*.pyc

*.pyo

*.egg-info/

.git/

.gitignore

*.ipynb

.env

train_model.py

test_api.py

README.md

This prevents test files, notebooks, and git history from bloating your Docker image.

Step 8: Build and Run the Docker Container

Make sure Docker Desktop is running, then build the image:

docker build -t clearpath-churn-api:v1.0.0 .

Output:

[+] Building 42.3s (12/12) FINISHED

=> [1/6] FROM docker.io/library/python:3.11-slim

=> [2/6] WORKDIR /app

=> [3/6] COPY requirements.txt .

=> [4/6] RUN pip install --no-cache-dir ...

=> [5/6] COPY app/ ./app/

=> [6/6] COPY model/ ./model/

=> exporting to image

Successfully built a3f9d8b12c45

Successfully tagged clearpath-churn-api:v1.0.0

Run the container:

docker run -d \
  --name churn-api \
  -p 8000:8000 \
  clearpath-churn-api:v1.0.0

Flags explained:

- -d runs the container in detached mode (background)

- --name churn-api gives the container a readable name

- -p 8000:8000 maps port 8000 on your machine to port 8000 in the container

Check if it’s running:

docker ps

Output:

CONTAINER ID IMAGE STATUS PORTS

a3f9d8b12c45 clearpath-churn-api:v1.0.0 Up 12 seconds (healthy) 0.0.0.0:8000->8000/tcp

The (healthy) status means your HEALTHCHECK in the Dockerfile is passing. The API is live.
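If you script your deployments, you can wait for the same signal from Python instead of watching docker ps. This is a minimal sketch using only the standard library; the function names, retry counts, and the injectable fetch parameter are illustrative assumptions, while the URL and response fields come from the /health endpoint built earlier.

```python
import json
import time
import urllib.request


def fetch_health(url: str) -> dict:
    """GET the health endpoint and decode its JSON body."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


def wait_until_healthy(url: str, attempts: int = 10, delay: float = 1.0,
                       fetch=fetch_health) -> bool:
    """Poll the health endpoint until it reports a loaded model, or give up."""
    for attempt in range(attempts):
        try:
            body = fetch(url)
            if body.get("status") == "healthy" and body.get("model_loaded"):
                return True
        except (OSError, ValueError):
            # Connection refused, timeout, HTTP error, or a non-JSON body:
            # the container is probably still starting up.
            pass
        if attempt < attempts - 1:
            time.sleep(delay)
    return False
```

Calling wait_until_healthy("http://localhost:8000/health") right after docker run returns True once the model has loaded, or False after the attempts run out. The fetch parameter exists so the retry logic can be tested without a running container.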

Test it the same way as before:

curl http://localhost:8000/health

curl -X POST "http://localhost:8000/predict" \
  -H "Content-Type: application/json" \
  -d '{
    "monthly_active_days": 25,
    "num_features_used": 15,
    "support_tickets_opened": 0,
    "days_since_last_login": 1,
    "contract_length_months": 12,
    "monthly_spend_usd": 499.00,
    "num_team_members": 12,
    "billing_failures": 0,
    "onboarding_completed": 1,
    "nps_score": 9
  }'

Output:

{
  "prediction": 0,
  "label": "Retained",
  "churn_probability": 0.0621,
  "risk_level": "Low",
  "model_version": "1.0.0"
}

Your model is running inside Docker and serving real predictions.

Step 9: Useful Docker Commands

Here are the commands you’ll use day-to-day when managing your container:

# View container logs (great for debugging)

docker logs churn-api

# Follow logs in real time

docker logs -f churn-api

# Stop the container

docker stop churn-api

# Start it again

docker start churn-api

# Remove the container (permanently)

docker rm churn-api

# List all Docker images on your machine

docker images

# Check container resource usage (CPU, memory)

docker stats churn-api

# Open a shell inside the running container

docker exec -it churn-api /bin/bash

Step 10: Write API Tests

Never deploy without tests. Create test_api.py:

# test_api.py

import requests

BASE_URL = "http://localhost:8000"

# -----------------------------------------------
# Sample customer profiles for testing
# -----------------------------------------------
high_risk_customer = {
    "monthly_active_days": 3,
    "num_features_used": 1,
    "support_tickets_opened": 6,
    "days_since_last_login": 30,
    "contract_length_months": 1,
    "monthly_spend_usd": 49.00,
    "num_team_members": 1,
    "billing_failures": 3,
    "onboarding_completed": 0,
    "nps_score": 1
}

low_risk_customer = {
    "monthly_active_days": 28,
    "num_features_used": 18,
    "support_tickets_opened": 0,
    "days_since_last_login": 0,
    "contract_length_months": 24,
    "monthly_spend_usd": 799.00,
    "num_team_members": 25,
    "billing_failures": 0,
    "onboarding_completed": 1,
    "nps_score": 10
}


def test_health():
    response = requests.get(f"{BASE_URL}/health")
    assert response.status_code == 200
    data = response.json()
    assert data["status"] == "healthy"
    assert data["model_loaded"] is True
    print("✅ Health check passed")


def test_high_risk_prediction():
    response = requests.post(f"{BASE_URL}/predict", json=high_risk_customer)
    assert response.status_code == 200
    data = response.json()
    assert data["prediction"] in [0, 1]
    assert 0.0 <= data["churn_probability"] <= 1.0
    assert data["risk_level"] in ["Low", "Medium", "High"]
    print(f"✅ High-risk prediction: {data['label']} ({data['churn_probability']:.2%} churn probability)")


def test_low_risk_prediction():
    response = requests.post(f"{BASE_URL}/predict", json=low_risk_customer)
    assert response.status_code == 200
    data = response.json()
    print(f"✅ Low-risk prediction: {data['label']} ({data['churn_probability']:.2%} churn probability)")


def test_validation_error():
    bad_input = {"nps_score": 99}  # Missing required fields + invalid NPS
    response = requests.post(f"{BASE_URL}/predict", json=bad_input)
    assert response.status_code == 422  # Unprocessable Entity
    print("✅ Validation error handled correctly (422 returned)")


def test_batch_prediction():
    batch = [high_risk_customer, low_risk_customer]
    response = requests.post(f"{BASE_URL}/predict/batch", json=batch)
    assert response.status_code == 200
    data = response.json()
    assert data["total"] == 2
    assert len(data["predictions"]) == 2
    print(f"✅ Batch prediction returned {data['total']} results")


if __name__ == "__main__":
    print("Running API tests...\n")
    test_health()
    test_high_risk_prediction()
    test_low_risk_prediction()
    test_validation_error()
    test_batch_prediction()
    print("\n🎉 All tests passed!")

Run the tests (make sure the container is running):

python test_api.py

Output:

Running API tests...

✅ Health check passed

✅ High-risk prediction: Churned (87.34% churn probability)

✅ Low-risk prediction: Retained (4.21% churn probability)

✅ Validation error handled correctly (422 returned)

✅ Batch prediction returned 2 results

🎉 All tests passed!

Common Mistakes to Avoid

Fitting the scaler inside the container at startup. Always save the scaler as a .pkl file alongside the model. If you refit the scaler on a different dataset inside the container, your predictions will be wrong.

Not pinning dependency versions in requirements.txt. A future update to scikit-learn or Pydantic can silently break your API. Always pin versions.

Loading the model on every request. Load it once at startup using the lifespan context manager. Loading per request will kill your API’s performance under any real traffic.

Running as root inside the container. We added a non-root user (apiuser) in the Dockerfile. Always do this in production — it limits what a bad actor can do if the container is compromised.

Not having a health check endpoint. Cloud platforms like AWS ECS, Azure Container Apps, and Kubernetes can probe an endpoint such as /health to decide whether to route traffic to your container or restart it. Without one, a failed deployment can look healthy.
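The first mistake in this list, refitting the scaler, is worth seeing with numbers. The sketch below uses only the standard library and a single made-up feature column; fit_scaler and scale are illustrative helpers that mimic what StandardScaler does for one column (mean and population standard deviation).

```python
import statistics


def fit_scaler(values: list) -> tuple:
    """Return (mean, population std) for one feature column."""
    return statistics.fmean(values), statistics.pstdev(values)


def scale(value: float, params: tuple) -> float:
    mean, std = params
    return (value - mean) / std


# Hypothetical days_since_last_login values
training_column = [2, 5, 9, 14, 30]        # what the model was trained on
production_batch = [25, 28, 30, 31, 36]    # a later batch with a different mix

saved_params = fit_scaler(training_column)     # what scaler.pkl preserves
refit_params = fit_scaler(production_batch)    # what refitting would produce

customer_value = 30
print("scaled with saved scaler:", round(scale(customer_value, saved_params), 3))
print("scaled with refit scaler:", round(scale(customer_value, refit_params), 3))
```

The same raw value of 30 days lands far above average under the saved scaler but exactly at the mean under the refit one, so the model would see a completely different input for the same customer.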

Where to Deploy This Container Next

Once your container works locally, deploying to the cloud is straightforward: you push the same Docker image to a cloud registry and tell a service to run it.

| Platform | Service | Complexity | Cost |
|---|---|---|---|
| AWS | ECS Fargate | Medium | Pay-per-use |
| Azure | Container Apps | Low | Pay-per-use |
| Google Cloud | Cloud Run | Low | Pay-per-use |
| Render.com | Web Service | Very Low | Free tier available |
| Railway.app | Service | Very Low | Free tier available |

For a first production deployment, I’d recommend Azure Container Apps or Google Cloud Run — both are serverless (you don’t manage servers), support Docker images directly, and scale to zero when idle, so you’re not paying for idle time.

Frequently Asked Questions

Do I need to know Docker to deploy ML models?

You don’t need to be a Docker expert, but you need the basics covered in this tutorial. The reason Docker matters for ML is that Python environments are notoriously fragile — the same model can behave differently on different machines. Docker locks the environment so it runs the same everywhere.

What is the difference between FastAPI and Flask for ML deployment?

Both work. FastAPI is faster, has automatic input validation via Pydantic, generates interactive API docs automatically, and has better async support. Flask is simpler to learn and has a larger community. For new ML API projects in 2026, FastAPI is the better default choice.

Can this API handle multiple requests at the same time?

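Yes. We start uvicorn with --workers 2, which spawns two worker processes that handle requests in parallel. For CPU-bound inference (like scikit-learn), roughly one worker per CPU core is a good starting point, since extra workers only compete for the same cores. Each worker loads its own copy of the model, so budget memory accordingly. For heavy deep learning models, consider running one worker per GPU instead.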
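One way to pick a starting value is sketched below. It is a rough heuristic, not a rule: it assumes CPU-bound inference where one process can keep one core busy, and that every worker holds its own copy of the model. The function name and the memory figures are hypothetical; measure your own container before settling on a number.

```python
import os


def suggest_workers(cpu_cores: int, memory_mb: int, per_worker_mb: int) -> int:
    """One worker per core, capped by how many model copies fit in memory."""
    if cpu_cores < 1 or per_worker_mb <= 0:
        raise ValueError("cpu_cores must be >= 1 and per_worker_mb must be > 0")
    fits_in_memory = memory_mb // per_worker_mb
    return max(1, min(cpu_cores, fits_in_memory))


# Hypothetical container: whatever cores this machine has, 2 GB of memory,
# and an assumed footprint of 300 MB per worker process.
cores = os.cpu_count() or 1
print("suggested --workers:", suggest_workers(cores, memory_mb=2048, per_worker_mb=300))
```

Treat the result as a first guess for the --workers flag and adjust it after load testing.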

How do I update the model without downtime?

Save a new version of the model (e.g., churn_model_v2.pkl), rebuild the Docker image with a new tag (v2.0.0), push it to your container registry, and do a rolling update on your cloud platform. Most cloud services support zero-downtime deployments natively.

Should I store the model inside the Docker image or load it from cloud storage?

For small models (under 500MB), storing inside the image is fine and simpler. For large models, load from cloud storage (AWS S3, Azure Blob Storage) at startup — this keeps your image small and makes model updates easier without rebuilding the image.

How do I secure this API in production?

Add API key authentication using FastAPI’s APIKeyHeader dependency, put the API behind an HTTPS load balancer (most cloud platforms handle this automatically), rate-limit incoming requests, and never expose the /docs endpoint publicly in production.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.