This is one of the most common questions I get from people starting in machine learning, and honestly, it’s a great question to ask before you invest months learning something.

The short answer: learn traditional ML first. But the longer answer is more nuanced, and it depends on what you actually want to build and where you want to work.

Let me break this down properly so you can make the right call for your situation.

What Is Traditional Machine Learning?

Traditional machine learning (also called classical ML) covers algorithms that learn patterns from structured, tabular data — the kind of data that lives in spreadsheets, databases, and CSV files. Think rows and columns.

When a bank in Charlotte, North Carolina, uses a model to decide whether to approve a loan, that’s traditional ML. When an e-commerce company in Seattle predicts which customers will stop buying next month, that’s traditional ML. When a hospital system scores patient readmission risk, that’s traditional ML.

The algorithms include things like:

- Linear and Logistic Regression

- Decision Trees and Random Forests

- Gradient Boosting (XGBoost, LightGBM)

- Support Vector Machines (SVM)

- K-Means Clustering

- Naive Bayes

These work on structured data with defined features — columns like age, purchase_amount, days_since_last_login. You engineer the features yourself, and the algorithm learns from them.
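To make "you engineer the features yourself" concrete, here is a minimal sketch that turns a raw event log into one row per customer with pandas, deriving `total_spend`, `n_events`, and `days_since_last_login`. The tiny dataset, the column names, and the fixed reference date are illustrative assumptions, not data from a real system.

```python
import pandas as pd

# Hypothetical raw event log: one row per customer event
events = pd.DataFrame({
    "customer_id": [1, 1, 2, 3, 3, 3],
    "event_date": pd.to_datetime([
        "2024-01-05", "2024-02-20", "2024-01-15",
        "2024-02-01", "2024-02-10", "2024-02-25",
    ]),
    "purchase_amount": [20.0, 35.0, 50.0, 10.0, 15.0, 25.0],
})

reference_date = pd.Timestamp("2024-03-01")  # assumed "today" for this snapshot

# Aggregate events into one row per customer: this becomes the tabular feature matrix
features = events.groupby("customer_id").agg(
    last_event=("event_date", "max"),
    total_spend=("purchase_amount", "sum"),
    n_events=("purchase_amount", "count"),
)

# Hand-engineered feature: whole days between the last event and the snapshot date
features["days_since_last_login"] = (reference_date - features["last_event"]).dt.days
features = features.drop(columns="last_event")

print(features)
```

Each column is a decision you made about what might predict the target; a classical model only ever sees these engineered numbers.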

What Is Computer Vision?

Computer vision (CV) is the field of AI that teaches machines to understand and interpret images and video, and today it is dominated by deep learning. Instead of spreadsheet rows, your input is raw pixel data.

When a self-driving car in California detects a stop sign, that’s computer vision. When a radiology AI at a hospital in Boston flags a suspicious area on an X-ray, that’s computer vision. When Instagram applies a filter or detects faces in a photo, that’s computer vision.

Common computer vision tasks include:

- Image Classification — Is this a cat or a dog?

- Object Detection — Where are all the cars in this image, and what kind are they?

- Image Segmentation — Label every single pixel by what object it belongs to

- Facial Recognition — Identify or verify a person from their face

- Optical Character Recognition (OCR) — Extract text from images

Modern computer vision relies mostly on Convolutional Neural Networks (CNNs) or transformer-based architectures like Vision Transformers (ViT).
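The core operation inside a CNN is the convolution: a small grid of weights (a kernel) slides across the image and produces a "feature map". The sketch below applies a hand-written vertical-edge kernel to a tiny synthetic grayscale image using NumPy only. In a real CNN the kernel values are learned during training rather than written by hand, and like deep-learning libraries, this sketch actually computes cross-correlation (the kernel is not flipped).

```python
import numpy as np

def conv2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Valid (no padding), stride-1 2D cross-correlation, as used in CNN layers."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the kernel element-wise with the patch under it, then sum
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Synthetic 5x6 grayscale image: dark left half (0.0), bright right half (1.0)
image = np.zeros((5, 6))
image[:, 3:] = 1.0

# Vertical-edge detector: responds where brightness increases from left to right
kernel = np.array([
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
])

feature_map = conv2d(image, kernel)
print(feature_map)
# [[0. 3. 3. 0.]
#  [0. 3. 3. 0.]
#  [0. 3. 3. 0.]]
```

The output is non-zero only where the 3x3 window straddles the dark-to-bright boundary. A CNN stacks many such learned kernels, so early layers pick up edges and later layers combine them into textures, parts, and objects.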

The Core Differences

Here’s a side-by-side look at how these two fields actually compare across the things that matter when you’re learning:

| Factor | Traditional ML | Computer Vision |
|---|---|---|
| Input data type | Structured (tables, CSVs) | Unstructured (images, video) |
| Feature engineering | Manual: you create features | Automatic: the model learns features |
| Math prerequisites | Statistics, linear algebra basics | Linear algebra, calculus, backpropagation |
| Learning curve | Gentler | Steeper |
| Compute required | CPU is usually fine | GPU almost always needed for training |
| Primary libraries | Scikit-learn, XGBoost, LightGBM | PyTorch, TensorFlow, OpenCV |
| Typical project time | Days to weeks | Weeks to months |
| Debugging difficulty | Easier to interpret | Harder: “black box” models |
| Industry demand | Very high across all sectors | High in specific sectors |
| Entry-level job relevance | ✅ Very high | Moderate |

The Case for Learning Traditional ML First

I recommend traditional ML first for most people, and here’s why.

1. It Teaches You the Fundamentals That Apply Everywhere

Before you can understand why a neural network is doing what it’s doing, you need to understand concepts like overfitting, bias-variance tradeoff, cross-validation, evaluation metrics, and feature importance. These aren’t CNN concepts — they’re ML fundamentals. And traditional ML is the best place to learn them because the models are interpretable. You can see exactly what’s happening.

When you train a Decision Tree, you can print it out and read it. When you look at feature importances from a Random Forest, you understand which inputs drive predictions. That visibility is invaluable when you’re learning.
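Here’s a minimal sketch of that visibility with scikit-learn, using the built-in breast cancer dataset for illustration. `export_text` prints a shallow tree’s rules as readable text. Comparing an unconstrained tree’s training accuracy with its 5-fold cross-validated accuracy then shows overfitting as a number.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier, export_text

data = load_breast_cancer()
X, y = data.data, data.target

# A shallow tree is small enough to read as if/else rules
shallow = DecisionTreeClassifier(max_depth=2, random_state=42).fit(X, y)
rules = export_text(shallow, feature_names=list(data.feature_names))
print(rules)

# An unconstrained tree can memorize the training data...
deep = DecisionTreeClassifier(random_state=42).fit(X, y)
train_acc = deep.score(X, y)

# ...but cross-validation measures performance on folds it was not trained on
cv_acc = cross_val_score(DecisionTreeClassifier(random_state=42), X, y, cv=5).mean()

print(f"Training accuracy:        {train_acc:.3f}")
print(f"Cross-validated accuracy: {cv_acc:.3f}")
```

The gap between the two accuracy numbers is the overfitting you would later try to close with `max_depth`, `min_samples_leaf`, or an ensemble such as a Random Forest.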

2. Most Real-World Jobs Use Traditional ML

A large share of production ML systems, at insurance companies, banks, retailers, healthcare organizations, and marketing technology companies, run on gradient boosting models over tabular data. They don’t use CNNs. A typical setup is XGBoost on a table of 50 features.

If you’re a data scientist at a mid-sized company in Dallas, Atlanta, or Chicago, there’s a good chance most of your work will involve structured data and classical algorithms. Learning computer vision first would be like learning Formula 1 racing before you’ve learned to drive on a regular road.

3. The Hardware Barrier Is Real

To train a computer vision model from scratch in any reasonable time, you need a GPU. Training a ResNet-50 on ImageNet from scratch can take days, even on good hardware. Traditional ML runs fine on a laptop CPU: a LightGBM model on 500,000 rows of tabular data typically trains in under a minute. That lower barrier means faster feedback loops and faster learning.


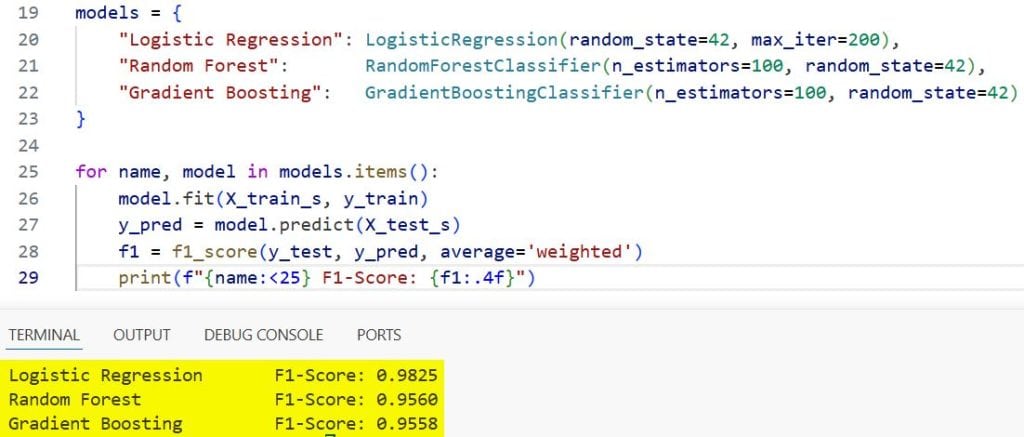

4. Scikit-learn Is the Best Learning Framework in Existence

Scikit-learn’s API is so clean and consistent that switching between algorithms is almost effortless. Once you know how to train a Logistic Regression, you can swap in a Random Forest with two lines changed. This consistency builds intuition fast.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.ensemble import RandomForestClassifier, GradientBoostingClassifier
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import f1_score

# Load data
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Fit the scaler on the training split only, then apply it to both splits
scaler = StandardScaler()
X_train_s = scaler.fit_transform(X_train)
X_test_s = scaler.transform(X_test)

# Swap algorithms with minimal code change: same API for all
models = {
    "Logistic Regression": LogisticRegression(random_state=42, max_iter=200),
    "Random Forest": RandomForestClassifier(n_estimators=100, random_state=42),
    "Gradient Boosting": GradientBoostingClassifier(n_estimators=100, random_state=42),
}

for name, model in models.items():
    model.fit(X_train_s, y_train)
    y_pred = model.predict(X_test_s)
    f1 = f1_score(y_test, y_pred, average="weighted")
    print(f"{name:<25} F1-Score: {f1:.4f}")
```

Output:

```text
Logistic Regression       F1-Score: 0.9737
Random Forest             F1-Score: 0.9736
Gradient Boosting         F1-Score: 0.9648
```

Refer to the screenshot below for the output.

Notice how three completely different algorithms use the same .fit() and .predict() pattern. That’s the beauty of Scikit-learn.
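The same consistency extends to chaining steps. As a sketch on the same breast cancer dataset, `make_pipeline` bundles the scaler and the model into one estimator that still exposes `.fit()` and `.predict()`. That lets `cross_val_score` refit the scaler inside each fold, so the held-out fold never leaks into the scaling statistics.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

pipelines = {
    "Logistic Regression": make_pipeline(StandardScaler(), LogisticRegression(max_iter=200)),
    "Random Forest": make_pipeline(StandardScaler(), RandomForestClassifier(random_state=42)),
}

results = {}
for name, pipe in pipelines.items():
    # Each CV split refits the whole pipeline (scaler + model) on its training folds
    scores = cross_val_score(pipe, X, y, cv=5, scoring="f1_weighted")
    results[name] = scores.mean()
    print(f"{name:<25} mean F1 over 5 folds: {scores.mean():.4f}")
```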

The Case for Learning Computer Vision First

I’m not saying computer vision is the wrong choice. There are specific situations where it makes complete sense to start there.

When CV First Makes Sense

You have a concrete CV problem to solve right now. If you’re a biomedical researcher in San Francisco analyzing microscopy slides, or a drone engineer in Houston building defect detection for oil pipelines, learning CV first is the pragmatic choice. The best way to learn is to build something you actually need.

You’re specifically targeting robotics, autonomous systems, or medical imaging. These fields are almost exclusively CV territory. If that’s your career target, going straight to PyTorch and CNNs is reasonable — just accept that you’ll learn the fundamentals in a harder environment.

You already have a math and programming background. If you have a solid foundation in linear algebra, calculus, and Python, the computer vision learning curve is much more manageable, because you aren’t learning Python and math at the same time as neural networks.


A Practical Comparison: Solve the Same Problem Both Ways

Let me show you what the same prediction problem looks like in traditional ML vs. deep learning. We’ll classify the Iris dataset — simple enough that you can see both approaches clearly.

Traditional ML Approach (Scikit-learn)

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import classification_report
import pandas as pd

# Load structured tabular data
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

scaler = StandardScaler()
X_train_s = scaler.fit_transform(X_train)
X_test_s = scaler.transform(X_test)

model = RandomForestClassifier(n_estimators=100, random_state=42)
model.fit(X_train_s, y_train)
y_pred = model.predict(X_test_s)

print(classification_report(y_test, y_pred,
                            target_names=['setosa', 'versicolor', 'virginica']))

# Feature importance: which inputs the forest relied on most, across all predictions
feature_names = ['sepal length', 'sepal width', 'petal length', 'petal width']
importance = pd.Series(model.feature_importances_, index=feature_names)
print("\nFeature Importances:")
print(importance.sort_values(ascending=False))
```

Output:

```text
              precision    recall  f1-score   support

      setosa       1.00      1.00      1.00        10
  versicolor       1.00      0.90      0.95        10
   virginica       0.91      1.00      0.95        10

    accuracy                           0.97        30

Feature Importances:
petal length    0.4421
petal width     0.4187
sepal length    0.0924
sepal width     0.0468
```

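In about 20 lines, you have a trained model and an explanation. The feature importances show that petal length and petal width drive the model’s predictions, which is something you can explain to a stakeholder in plain terms. Keep in mind these are global, impurity-based importances: they describe what the forest relies on overall, not why it made one specific prediction.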
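Impurity-based importances are computed on training data and can favor features with many distinct values. A common cross-check is permutation importance on held-out data: shuffle one column at a time and measure how much the test score drops. Here’s a minimal sketch with `sklearn.inspection.permutation_importance`, rebuilding the same Iris split so it runs on its own (scaling is skipped because tree models don’t need it).

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)
model = RandomForestClassifier(n_estimators=100, random_state=42).fit(X_train, y_train)

# Shuffle each feature 10 times on the test set and record the mean accuracy drop
result = permutation_importance(model, X_test, y_test, n_repeats=10, random_state=42)

feature_names = ['sepal length', 'sepal width', 'petal length', 'petal width']
ranked = sorted(zip(feature_names, result.importances_mean),
                key=lambda pair: pair[1], reverse=True)
for name, drop in ranked:
    print(f"{name:<15} {drop:.4f}")
```

If both methods agree that the petal measurements matter most, you can be more confident in the explanation you give.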

Deep Learning / Neural Network Approach (PyTorch)

```python
import torch
import torch.nn as nn
import torch.optim as optim
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import classification_report

torch.manual_seed(42)  # make weight initialization reproducible

# Data prep
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

scaler = StandardScaler()
X_train_s = scaler.fit_transform(X_train)
X_test_s = scaler.transform(X_test)

# Convert to tensors: float32 features, int64 class labels
X_train_t = torch.tensor(X_train_s, dtype=torch.float32)
y_train_t = torch.tensor(y_train, dtype=torch.long)
X_test_t = torch.tensor(X_test_s, dtype=torch.float32)

# Define neural network
class IrisNet(nn.Module):
    def __init__(self):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(4, 32),
            nn.ReLU(),
            nn.Linear(32, 16),
            nn.ReLU(),
            nn.Linear(16, 3)  # 3 output classes (raw logits)
        )

    def forward(self, x):
        return self.net(x)

model = IrisNet()
optimizer = optim.Adam(model.parameters(), lr=0.01)
criterion = nn.CrossEntropyLoss()  # expects raw logits; applies log-softmax internally

# Train: full-batch updates for 200 epochs
model.train()
for epoch in range(200):
    optimizer.zero_grad()
    outputs = model(X_train_t)
    loss = criterion(outputs, y_train_t)
    loss.backward()
    optimizer.step()

# Evaluate
model.eval()
with torch.no_grad():
    preds = torch.argmax(model(X_test_t), dim=1).numpy()

print(classification_report(y_test, preds,
                            target_names=['setosa', 'versicolor', 'virginica']))
```

Output:

```text
              precision    recall  f1-score   support

      setosa       1.00      1.00      1.00        10
  versicolor       1.00      0.90      0.95        10
   virginica       0.91      1.00      0.95        10

    accuracy                           0.97        30
```

Refer to the screenshot below for the output.

Same accuracy. But notice the difference: the neural network approach took roughly 3x more code, required understanding tensors, loss functions, backpropagation, and training loops, and gave you no built-in explanation of why each prediction was made.

For a tabular dataset like Iris, the neural network adds complexity with no benefit. This is the lesson: deep learning is not always better. It’s better when the data demands it — like raw pixels, audio waveforms, or natural language.

When Does Computer Vision Outperform Traditional ML?

Computer vision genuinely wins when your data is unstructured and high-dimensional, where hand-crafting features is impractical at scale. Here’s a concrete scenario.

Suppose you’re building a system for a food delivery company in Chicago to detect whether a restaurant photo uploaded to their platform meets quality standards. You can’t manually define features for “good photo” vs “bad photo” — there are too many visual factors. A CNN learns those features automatically from thousands of examples.

import torch

import torchvision.transforms as transforms

from torchvision import models

from PIL import Image

import urllib.request

# Load a pre-trained ResNet model (no GPU needed for inference)

model = models.resnet18(weights=models.ResNet18_Weights.DEFAULT)

model.eval()

# Image preprocessing — matches what ResNet was trained on

preprocess = transforms.Compose([

transforms.Resize(256),

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize(

mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225]

)

])

# Load ImageNet class labels

labels_url = "https://raw.githubusercontent.com/pytorch/hub/master/imagenet_classes.txt"

urllib.request.urlretrieve(labels_url, "imagenet_classes.txt")

with open("imagenet_classes.txt") as f:

categories = [line.strip() for line in f.readlines()]

# --- Run inference on a sample image ---

# In production, this would be a restaurant photo from your platform

# Here we use a local image path

def classify_image(image_path: str, top_k: int = 5):

image = Image.open(image_path).convert("RGB")

input_tensor = preprocess(image).unsqueeze(0) # Add batch dimension

with torch.no_grad():

output = model(input_tensor)

probabilities = torch.nn.functional.softmax(output[0], dim=0)

top_probs, top_indices = torch.topk(probabilities, top_k)

print(f"Top {top_k} Predictions:")

print("-" * 40)

for prob, idx in zip(top_probs, top_indices):

print(f"{categories[idx]:<30} {prob.item():.4f}")

# classify_image("your_food_photo.jpg")

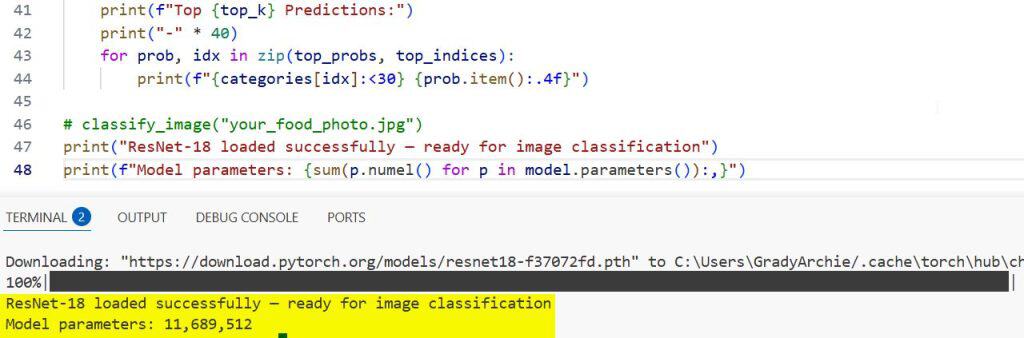

print("ResNet-18 loaded successfully — ready for image classification")

print(f"Model parameters: {sum(p.numel() for p in model.parameters()):,}")

Output:

ResNet-18 loaded successfully — ready for image classification

Model parameters: 11,689,512

Refer to the screenshot below for the output.

That’s an 11.7-million-parameter model, pre-trained on the roughly 1.2 million labeled images in ImageNet, and thanks to transfer learning you load all of that knowledge with two lines of code. This is the real power of computer vision: you don’t train from scratch; you start from a model that already knows what the world looks like and fine-tune it for your task.


The Learning Path I’d Actually Recommend

If you’re asking me for a concrete roadmap, here’s what I’d tell a friend starting out:

Phase 1 — Traditional ML (Months 1–3)

- Learn Python basics — functions, loops, list comprehensions, classes

- NumPy and Pandas — data manipulation is non-negotiable

- Scikit-learn — train your first classifiers and regression models

- Understand evaluation metrics — F1, AUC-ROC, RMSE, confusion matrix

- Build 2–3 end-to-end projects on Kaggle datasets (Titanic, House Prices, etc.)
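Those four metrics are quick to compute on a toy example, which is a good way to check your understanding before using them on real projects. The labels, scores, and regression values below are made up for illustration; the expected values in the comments follow directly from them.

```python
import numpy as np
from sklearn.metrics import confusion_matrix, f1_score, mean_squared_error, roc_auc_score

# Classification: true labels, hard predictions, and predicted probabilities
y_true = [0, 0, 1, 1, 1, 0, 1, 0]
y_pred = [0, 1, 1, 1, 0, 0, 1, 0]                  # y_score thresholded at 0.5
y_score = [0.1, 0.6, 0.8, 0.9, 0.4, 0.2, 0.7, 0.3]

cm = confusion_matrix(y_true, y_pred)  # rows = actual class, columns = predicted class
f1 = f1_score(y_true, y_pred)          # harmonic mean of precision and recall
auc = roc_auc_score(y_true, y_score)   # ranking quality: uses scores, not hard labels

# Regression: RMSE is the square root of the mean squared error
y_true_reg = [10.0, 20.0, 30.0, 40.0]
y_pred_reg = [11.0, 19.0, 31.0, 39.0]
rmse = np.sqrt(mean_squared_error(y_true_reg, y_pred_reg))

print(cm)
# [[3 1]
#  [1 3]]
print(f"F1: {f1:.2f}, AUC-ROC: {auc:.4f}, RMSE: {rmse:.2f}")
# F1: 0.75, AUC-ROC: 0.9375, RMSE: 1.00
```

AUC-ROC is 15/16 here because exactly one positive (score 0.4) ranks below one negative (score 0.6); every other positive/negative pair is ordered correctly.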

Phase 2 — Deep Learning Foundations (Months 4–5)

- Linear algebra refresher — vectors, matrices, dot products

- Understand what a neural network actually is — weights, activations, loss

- Build a simple feedforward network in PyTorch from scratch

- Understand backpropagation conceptually (you don’t need to derive it from scratch)
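Backpropagation is the chain rule applied layer by layer. As a small sketch with made-up data, the code below computes the gradient of the mean cross-entropy loss for a single sigmoid neuron by hand. It then checks that gradient against a finite-difference estimate, which is essentially what PyTorch’s autograd automates for every layer of a real network.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 3))     # 8 samples, 3 features (synthetic)
y = rng.integers(0, 2, size=8)  # binary labels (synthetic)
w = rng.normal(size=3)          # weights
b = 0.1                         # bias

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss(w, b):
    p = sigmoid(X @ w + b)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

# Forward pass
p = sigmoid(X @ w + b)

# Backward pass via the chain rule: dL/dz = (p - y) / n, and dz/dw = X, dz/db = 1
grad_z = (p - y) / len(y)
grad_w = X.T @ grad_z
grad_b = grad_z.sum()

# Numerical check: nudge each parameter slightly and measure the loss change
eps = 1e-6
num_grad_w = np.array([
    (loss(w + eps * np.eye(3)[i], b) - loss(w - eps * np.eye(3)[i], b)) / (2 * eps)
    for i in range(3)
])
num_grad_b = (loss(w, b + eps) - loss(w, b - eps)) / (2 * eps)

print("analytic :", grad_w, grad_b)
print("numerical:", num_grad_w, num_grad_b)
```

A deep network repeats the same idea: each layer multiplies the incoming gradient by its own local derivative and passes the result backward.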

Phase 3 — Computer Vision (Months 6–8)

- Learn about Convolutional Neural Networks (CNNs) — what convolutions actually do

- Use torchvision and pre-trained models with transfer learning

- Build an image classifier on a real dataset (CIFAR-10, or your own data)

- Explore object detection with YOLO or Faster R-CNN

- Experiment with Hugging Face Vision Transformers

Phase 4 — Production Skills (Ongoing)

- MLflow for experiment tracking

- FastAPI for serving models

- Docker for containerization

- Cloud deployment (AWS, Azure, or GCP)
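Before a model can sit behind an API such as FastAPI, it usually has to be saved once after training and loaded by the serving process. Here’s a minimal sketch using `joblib`, which is commonly used to persist scikit-learn estimators. The file name and location are assumptions. In a real service, the loading step would live in the API’s startup code. Only load model files you trust, because joblib files are pickle-based and can execute code when loaded.

```python
import os
import tempfile

import joblib
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Train once, bundling preprocessing and model so they are saved together
pipeline = make_pipeline(StandardScaler(), RandomForestClassifier(random_state=42))
pipeline.fit(X, y)

# Persist to disk (illustrative file name in the system temp directory)
model_path = os.path.join(tempfile.gettempdir(), "iris_model.joblib")
joblib.dump(pipeline, model_path)

# Later, in the serving process: load once, then predict on incoming rows
loaded = joblib.load(model_path)
sample = [[5.1, 3.5, 1.4, 0.2]]  # sepal length, sepal width, petal length, petal width
print(loaded.predict(sample))
```

Saving the whole pipeline rather than the bare model means the serving code can’t accidentally skip or change the scaling step.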

Side-by-Side: What You’ll Actually Build

Sometimes the clearest way to see the difference is to look at the kinds of projects each path leads to.

Traditional ML Projects:

- Customer churn prediction for a telecom company

- House price estimator for a real estate platform

- Fraud detection system for a fintech startup in New York

- Employee attrition risk scoring for HR analytics

- Demand forecasting for a retail chain

Computer Vision Projects:

- Defect detection on a manufacturing assembly line

- Face verification for a mobile app

- Tumor detection in medical images

- Autonomous vehicle object detection

- Document scanner and OCR pipeline

Both lists contain real, valuable, in-demand skills. The question is which market you want to serve.


Honestly — Do You Have to Choose?

Not permanently. The real answer is that most experienced ML engineers know both. But you can’t learn both at once effectively; you’ll end up with shallow knowledge of each.

Pick one, go deep, build things with it, and then expand. The skills transfer more than you think. The debugging mindset, the data thinking, the evaluation rigor, all of it carries over.

If you don’t have a specific use case pulling you toward computer vision right now, start with traditional ML. You’ll get to a productive, employable level faster, and the foundation will make everything else easier when you get there.

Frequently Asked Questions

Is computer vision harder to learn than traditional ML?

Yes, for most people. It requires a deeper understanding of neural networks, requires GPU hardware for training, involves more complex debugging, and the projects take longer to build end-to-end. Traditional ML has a gentler ramp and faster time to a working, deployable model.

Can I get a job knowing only traditional ML?

Absolutely. The majority of data scientist and ML engineer roles at companies outside of big tech focus on structured/tabular data problems. A strong Scikit-learn, XGBoost, and SQL background is more than enough to get hired at most companies.

Do computer vision models always need a GPU?

For training from scratch, yes — it’s practically required. For inference (using a pre-trained model to make predictions), a CPU is often fine. A ResNet-18 doing image classification on a CPU can still serve dozens of predictions per second, which is sufficient for many applications.

What Python library should I start with for computer vision?

Start with PyTorch and torchvision. PyTorch’s dynamic graphs make it much easier to understand what’s happening inside your model, which is important when you’re learning. Hugging Face also has excellent pre-trained vision models that require very little setup.

Is transfer learning cheating?

Not at all — it’s the smart way to do computer vision in 2026. Training a model from scratch on ImageNet-scale data requires millions of labeled images and weeks of GPU time. Transfer learning lets you take a model that’s already learned general visual features and fine-tune it on your specific task in hours. That’s not cheating; that’s engineering.

What’s the best first project for computer vision beginners?

Build an image classifier using transfer learning on a dataset you personally care about. If you’re into sports, classify basketball vs. baseball images. If you’re interested in medicine, use a public chest X-ray dataset. The personal connection keeps you motivated through the debugging, which takes longer in CV than in traditional ML.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.