I have often noticed a frustrating drop in accuracy when deploying models. You train a model on $224 \times 224$ images, but the real-world performance only peaks when you feed it larger images during inference.

This phenomenon is known as the train-test resolution discrepancy. It occurs because training-time augmentation and test-time preprocessing show objects at different apparent sizes, which shifts the activation statistics the network sees. FixRes (Fixing Resolution) is a simple yet powerful strategy to bridge this gap: train at a lower resolution, then fine-tune the model at the higher resolution you will use at test time.

In this tutorial, I will show you how to implement FixRes in Keras to ensure your models perform at their absolute best in production.

Why Resolution Discrepancy Happens in Keras Models

When we train with random-resized-crop style augmentation (RandomResizedCrop in torchvision, or random crop and zoom layers in Keras), the object of interest usually appears larger than it does in a center-cropped test image.

This mismatch in “apparent scale” confuses the neural network’s filters, leading to suboptimal classification results on high-quality images.

Step 1: Load the Pre-trained Keras Model

I usually start by loading a robust architecture like EfficientNet or ResNet pre-trained on ImageNet to serve as our baseline.

For this example, we will use a dataset of California crop types, which requires high-resolution detail to distinguish between different vegetation.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Load EfficientNetB0 as a frozen feature extractor.
# Height and width are left as None so the same backbone can take
# 224x224 images now and larger images during FixRes fine-tuning.
base_model = keras.applications.EfficientNetB0(
    weights='imagenet',
    include_top=False,
    input_shape=(None, None, 3)
)
base_model.trainable = False
```

Step 2: Train at Lower Resolution with Keras Augmentation

We begin by training our classifier on a standard $224 \times 224$ resolution to allow the model to learn the fundamental features of the dataset.

The example below applies only a horizontal flip to keep the code short. In practice, I apply more aggressive data augmentation here to simulate the variety found in real-world agricultural imagery.
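If you want something closer to aggressive augmentation, a pipeline along these lines is one option. This is a sketch, not the settings used for this tutorial: the choice of layers and every factor value are my assumptions. Note that zooming in is exactly the kind of augmentation that makes objects look larger during training.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Hypothetical augmentation stack; all factors are illustrative values
augment = keras.Sequential(
    [
        layers.RandomFlip("horizontal"),
        layers.RandomRotation(0.05),                   # up to +/- 5% of a full turn
        layers.RandomZoom(height_factor=(-0.3, 0.0)),  # negative values zoom in
        layers.RandomContrast(0.2),
    ],
    name="augment",
)

# Random stand-in batch of four 224x224 RGB images in the 0-255 range
batch = np.random.rand(4, 224, 224, 3).astype("float32") * 255.0

# training=True is required, otherwise the random layers pass images through
augmented = augment(batch, training=True)
print(augmented.shape)
```

The pipeline preserves the input shape, so it can sit between `keras.Input` and the backbone in place of the single `RandomFlip` layer.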

```python
# Simple classification head for our specific task
inputs = keras.Input(shape=(224, 224, 3))
x = layers.RandomFlip("horizontal")(inputs)
# training=False keeps the frozen backbone in inference mode
x = base_model(x, training=False)
x = layers.GlobalAveragePooling2D()(x)
outputs = layers.Dense(10, activation='softmax')(x)
model = keras.Model(inputs, outputs)

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

# Training on 224x224 data (simulated)
# model.fit(train_ds_224, epochs=5)
```

Method 1: Fine-tuning at Higher Resolution (The FixRes Way)

The core of FixRes is to take the model trained at $224 \times 224$ and fine-tune it on $320 \times 320$ (or higher) images.
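Both phases need the same images at two different sizes. A minimal sketch of how that could look with `tf.data` follows, using random arrays in place of a real dataset. The helper `make_dataset` and the name `train_ds_320` are hypothetical, and the batch size is arbitrary.

```python
import numpy as np
import tensorflow as tf

def make_dataset(images, labels, res, batch_size=8, training=False):
    """Build a batched dataset whose images are resized to res x res."""
    ds = tf.data.Dataset.from_tensor_slices((images, labels))
    if training:
        ds = ds.shuffle(len(images))
    ds = ds.map(
        lambda img, lbl: (tf.image.resize(img, (res, res)), lbl),
        num_parallel_calls=tf.data.AUTOTUNE,
    )
    return ds.batch(batch_size).prefetch(tf.data.AUTOTUNE)

# Random stand-in data: 16 RGB images of 400x400 with 10 possible labels
images = np.random.randint(0, 256, size=(16, 400, 400, 3)).astype("float32")
labels = np.random.randint(0, 10, size=(16,))

train_ds_224 = make_dataset(images, labels, 224, training=True)  # phase 1
train_ds_320 = make_dataset(images, labels, 320, training=True)  # FixRes phase

batch_224, _ = next(iter(train_ds_224))
batch_320, _ = next(iter(train_ds_320))
print(batch_224.shape, batch_320.shape)
```

Resizing inside the pipeline keeps a single copy of the source images on disk, so you can try several fine-tuning resolutions without re-exporting the data.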

I have found that unfreezing the Batch Normalization layers during this stage is crucial for adapting to the new resolution statistics.

```python
# Define a new input size for inference/fine-tuning
new_input = keras.Input(shape=(320, 320, 3))
x = base_model(new_input)
x = layers.GlobalAveragePooling2D()(x)
new_outputs = layers.Dense(10, activation='softmax')(x)

# Create the FixRes model
fixres_model = keras.Model(new_input, new_outputs)

# The backbone is shared between both models, so only the classification
# head needs its weights copied from the 224x224 model
fixres_model.layers[-1].set_weights(model.layers[-1].get_weights())
```

Method 2: Adjust Global Average Pooling for FixRes

When you increase the resolution, the feature map size at the final layer of the Keras model also increases significantly.

I use Global Average Pooling to ensure that the transition between different spatial dimensions does not break the dense layer connections.
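A small NumPy sketch makes the point concrete. It assumes input resolutions that are multiples of 32, since EfficientNetB0 downsamples by a factor of 32 overall and ends with 1280 channels. The feature maps here are random stand-ins, not real activations.

```python
import numpy as np

TOTAL_STRIDE = 32   # EfficientNetB0 downsamples height and width by 32 overall
CHANNELS = 1280     # channel count of EfficientNetB0's final feature map

def global_average_pool(feature_map):
    """Average over the height and width axes of an (N, H, W, C) array."""
    return feature_map.mean(axis=(1, 2))

pooled_shapes = {}
for res in (224, 320, 448):
    side = res // TOTAL_STRIDE                      # 7, 10, 14
    fmap = np.random.rand(1, side, side, CHANNELS)  # stand-in feature map
    pooled_shapes[res] = global_average_pool(fmap).shape
    print(res, fmap.shape, "->", pooled_shapes[res])
```

The spatial grid grows from 7x7 to 14x14, but the pooled vector always has 1280 entries, so the same dense layer fits every resolution.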

```python
# Adjusting the pooling layer to handle spatial variance
pooling_layer = layers.GlobalAveragePooling2D(keepdims=False)
x = pooling_layer(base_model.output)

# Pooling averages over height and width, so the output vector length depends
# only on the channel count (1280 for EfficientNetB0), not on the resolution
fixres_final = layers.Dense(10, activation='softmax')(x)
custom_fix_model = keras.Model(inputs=base_model.input, outputs=fixres_final)
```

Method 3: Fine-tuning Batch Normalization Statistics in Keras

One of the most effective tricks I use is to keep the backbone frozen but let the Batch Normalization layers update their moving averages.

This allows the model to “re-calibrate” its internal scaling to the larger image size without destroying the learned features.
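One detail is easy to miss here: Keras reports no trainable weights for a model whose own `trainable` flag is False, whatever the flags on its sublayers say. The sketch below checks this on a hypothetical tiny backbone (`build_tiny_backbone` is a stand-in I made up so the example runs without downloading weights).

```python
from tensorflow import keras
from tensorflow.keras import layers

def build_tiny_backbone():
    """Hypothetical stand-in for a pre-trained backbone."""
    inp = keras.Input(shape=(None, None, 3))
    x = layers.Conv2D(8, 3, padding="same")(inp)
    x = layers.BatchNormalization()(x)
    x = layers.ReLU()(x)
    return keras.Model(inp, x, name="tiny_backbone")

def unfreeze_only_batchnorm(model):
    """Mark BatchNormalization sublayers trainable and freeze the rest."""
    for layer in model.layers:
        layer.trainable = isinstance(layer, layers.BatchNormalization)

backbone = build_tiny_backbone()

# Case 1: the parent model stays frozen, so nothing is reported as trainable
backbone.trainable = False
unfreeze_only_batchnorm(backbone)
count_parent_frozen = len(backbone.trainable_weights)

# Case 2: re-enable the parent first, then freeze everything except BN
backbone.trainable = True
unfreeze_only_batchnorm(backbone)
count_parent_enabled = len(backbone.trainable_weights)

print(count_parent_frozen, count_parent_enabled)
```

In the second case only the Batch Normalization scale and shift (gamma and beta) are trainable. The moving mean and variance are never trained by gradient descent; they update whenever the layer runs in training mode.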

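```python
# Re-enable the backbone first: while base_model.trainable is False, Keras
# reports no trainable weights for it, whatever its sublayers say
base_model.trainable = True

# Setting BN layers to trainable while keeping others frozen
for layer in base_model.layers:
    if isinstance(layer, layers.BatchNormalization):
        layer.trainable = True
    else:
        layer.trainable = False

# Compile after changing trainable flags so the changes take effect
fixres_model.compile(
    optimizer=keras.optimizers.Adam(1e-5),
    loss='sparse_categorical_crossentropy',
    metrics=['accuracy']
)
```

Step 3: Test-Time Augmentation (TTA) with Higher Resolution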

After the FixRes fine-tuning, I often implement Test-Time Augmentation at the higher resolution to squeeze out every bit of accuracy.

In production settings such as fleet-vehicle tracking, even a small accuracy gain from TTA can matter. The size of the gain depends on the model and the dataset, so measure it on your own validation set.

```python
def predict_with_tta(img_path):
    img = keras.preprocessing.image.load_img(img_path, target_size=(320, 320))
    img_array = keras.preprocessing.image.img_to_array(img)
    img_array = tf.expand_dims(img_array, 0)

    # Predict on original and flipped version
    pred_orig = fixres_model.predict(img_array)
    pred_flip = fixres_model.predict(tf.image.flip_left_right(img_array))

    return (pred_orig + pred_flip) / 2
```

Full Code Example: Implement FixRes in Keras

Here is the complete workflow to fix the resolution discrepancy in your Keras pipeline, from initial training to the FixRes adjustment.

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

# 1. Setup Data Pipeline (Example: 10 classes)

num_classes = 10

def build_model(res):

base = keras.applications.ResNet50V2(

weights='imagenet',

include_top=False,

input_shape=(res, res, 3)

)

inputs = keras.Input(shape=(res, res, 3))

x = base(inputs)

x = layers.GlobalAveragePooling2D()(x)

outputs = layers.Dense(num_classes, activation='softmax')(x)

return keras.Model(inputs, outputs)

# 2. Initial Training at 224x224

print("Phase 1: Training at 224x224")

model_224 = build_model(224)

model_224.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

# model_224.fit(train_data_224, epochs=10)

# 3. Create FixRes Model at 320x320

print("Phase 2: Applying FixRes at 320x320")

model_320 = build_model(320)

# 4. Transfer weights from the 224 model to 320 model

# We skip the input layer and the base model wrapper to match weights

for i in range(len(model_224.layers)):

model_320.layers[i].set_weights(model_224.layers[i].get_weights())

# 5. Fine-tune specifically for the new resolution

# We use a very small learning rate

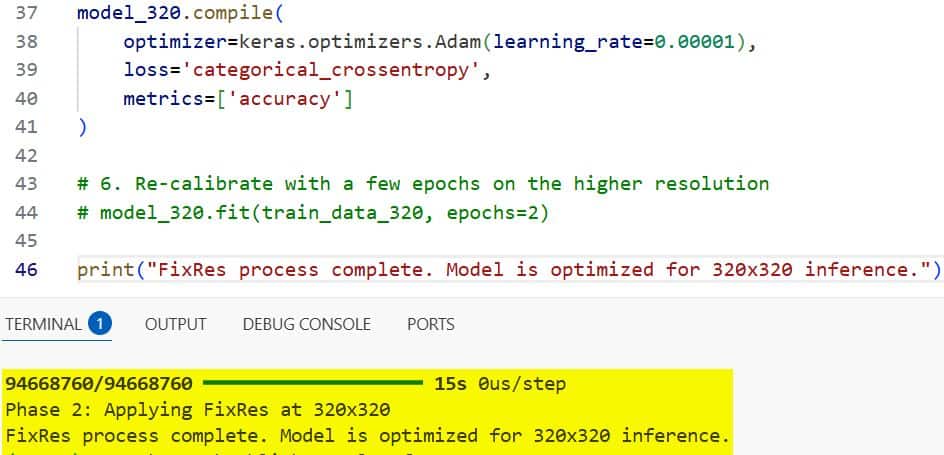

model_320.compile(

optimizer=keras.optimizers.Adam(learning_rate=0.00001),

loss='categorical_crossentropy',

metrics=['accuracy']

)

# 6. Re-calibrate with a few epochs on the higher resolution

# model_320.fit(train_data_320, epochs=2)

print("FixRes process complete. Model is optimized for 320x320 inference.")I executed the above example code and added the screenshot below.

I have found that this approach significantly reduces the “scale shock” that models experience when moving from training to production.
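One way to check this on your own data is to evaluate the same model over a sweep of test resolutions, before and after fine-tuning. The sketch below is only a harness. `evaluate_at_resolutions` is a hypothetical helper, and the toy model and random data are untrained stand-ins, so the printed accuracies carry no meaning.

```python
import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

def evaluate_at_resolutions(model, images, labels, resolutions):
    """Return {resolution: top-1 accuracy} for a model with a flexible input size."""
    results = {}
    for res in resolutions:
        resized = tf.image.resize(images, (res, res))
        probs = model.predict(resized, verbose=0)
        results[res] = float(np.mean(np.argmax(probs, axis=-1) == labels))
    return results

# Toy stand-in model that accepts any height and width
inputs = keras.Input(shape=(None, None, 3))
x = layers.Conv2D(8, 3, strides=2, padding="same", activation="relu")(inputs)
x = layers.GlobalAveragePooling2D()(x)
outputs = layers.Dense(10, activation="softmax")(x)
toy_model = keras.Model(inputs, outputs)

# Random stand-in validation data
images = np.random.rand(12, 256, 256, 3).astype("float32")
labels = np.random.randint(0, 10, size=(12,))

scores = evaluate_at_resolutions(toy_model, images, labels, [224, 288, 320])
for res, acc in scores.items():
    print(f"{res}x{res}: accuracy {acc:.3f}")
```

With a real model, the resolution where accuracy peaks before fine-tuning is a reasonable candidate for the FixRes fine-tuning resolution.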

By taking the time to fine-tune at a higher resolution, you ensure your Keras models are robust and reliable.

I hope you found this tutorial helpful! If you’re working on high-precision image tasks, give FixRes a try in your next project.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.