During my early days of building deep learning models, I often struggled with overfitting when my datasets were small or noisy.

I found that traditional data augmentation helped, but MixUp augmentation was the real game-changer for my Keras projects.

In this tutorial, I will show you how to implement MixUp augmentation for image classification in Keras using practical, real-world techniques.

Use MixUp Augmentation in Keras

MixUp is a technique where we take two random training images and blend them, along with their labels, using a mixing weight drawn from a Beta distribution, to create a new training sample.

In my experience, this encourages the Keras model to learn smoother decision boundaries, which often improves accuracy on unseen data.
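To make the blend concrete, here is a minimal NumPy sketch of the arithmetic for a single pair of samples. The tiny 2x2 "images", the labels, and the fixed weight `lam = 0.7` are illustrative values I picked; in real MixUp the weight is sampled rather than fixed.

```python
import numpy as np

# Two tiny 2x2 grayscale "images" and their one-hot labels (illustrative values).
image_a = np.array([[0.0, 0.0], [1.0, 1.0]])
image_b = np.array([[1.0, 1.0], [1.0, 1.0]])
label_a = np.array([1.0, 0.0])  # class 0
label_b = np.array([0.0, 1.0])  # class 1

# Fixed mixing weight for clarity; MixUp normally samples it from Beta(alpha, alpha).
lam = 0.7

# The same weight blends both the pixels and the labels.
mixed_image = lam * image_a + (1 - lam) * image_b
mixed_label = lam * label_a + (1 - lam) * label_b

print(mixed_image)
print(mixed_label)
```

The mixed label is a soft label: the model is asked to predict 70% of one class and 30% of the other, matching the proportions of the blended pixels.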

Method 1: Implement MixUp Augmentation via Keras Preprocessing Layers

I like to write the blend with plain TensorFlow ops so that the same function can be called on batches anywhere tensors flow through my Keras code.

Because the blend is only a handful of tensor operations, it can run on the GPU during training.

import tensorflow as tf

import numpy as np

def keras_mixup_layer(image_batch, label_batch, alpha=0.2):

# Generate the mixing factor from a Beta distribution

batch_size = tf.shape(image_batch)[0]

l = tf.cast(tf.compat.v1.distributions.Beta(alpha, alpha).sample(batch_size), tf.float32)

# Reshape l for broadcasting with images

x_l = tf.reshape(l, (batch_size, 1, 1, 1))

y_l = tf.reshape(l, (batch_size, 1))

# Perform the mixup on images and labels

images_mixed = image_batch * x_l + image_batch[::-1] * (1 - x_l)

labels_mixed = label_batch * y_l + label_batch[::-1] * (1 - y_l)

return images_mixed, labels_mixed

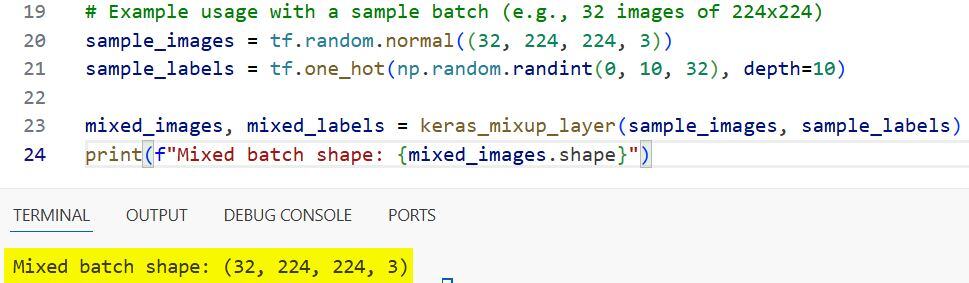

# Example usage with a sample batch (e.g., 32 images of 224x224)

sample_images = tf.random.normal((32, 224, 224, 3))

sample_labels = tf.one_hot(np.random.randint(0, 10, 32), depth=10)

mixed_images, mixed_labels = keras_mixup_layer(sample_images, sample_labels)

print(f"Mixed batch shape: {mixed_images.shape}")I executed the above example code and added the screenshot below.
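To get a feel for how `alpha` shapes the blend, the following sketch samples mixing factors with NumPy for a few `alpha` values. The helper name `extreme_fraction` and the 0.1/0.9 thresholds are my own illustrative choices, not part of Keras.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

def extreme_fraction(alpha, n_samples=10_000):
    """Fraction of sampled mixing factors that leave one image dominant."""
    lam = rng.beta(alpha, alpha, size=n_samples)
    return float(np.mean((lam < 0.1) | (lam > 0.9)))

# Compare a small, a neutral, and a large alpha.
for alpha in (0.2, 1.0, 5.0):
    print(f"alpha={alpha}: {extreme_fraction(alpha):.2f} of samples are near 0 or 1")
```

With a small `alpha` such as 0.2, most mixing factors sit close to 0 or 1, so each mixed image stays close to one of its two originals. Larger values push the factor toward 0.5 and produce heavier blends.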

Method 2: Custom MixUp Augmentation using Keras Data Generator

When I work with massive datasets stored in directories, I usually wrap my MixUp logic inside a custom generator.

This ensures that I don’t run out of memory by processing only small batches of blended images at a time.

import tensorflow as tf

import numpy as np

class MixupDataGenerator(tf.keras.utils.Sequence):

def __init__(self, x_set, y_set, batch_size, alpha=0.2):

self.x, self.y = x_set, y_set

self.batch_size = batch_size

self.alpha = alpha

def __len__(self):

return int(np.ceil(len(self.x) / float(self.batch_size)))

def __getitem__(self, idx):

# Create a batch of original data

idx_batch = range(idx * self.batch_size, min((idx + 1) * self.batch_size, len(self.x)))

batch_x = self.x[idx_batch]

batch_y = self.y[idx_batch]

# Mix with a shuffled version of the same batch

l = np.random.beta(self.alpha, self.alpha, batch_size=len(idx_batch))

x_l = l.reshape(-1, 1, 1, 1)

y_l = l.reshape(-1, 1)

shuffled_idx = np.random.permutation(len(idx_batch))

mixed_x = batch_x * x_l + batch_x[shuffled_idx] * (1 - x_l)

mixed_y = batch_y * y_l + batch_y[shuffled_idx] * (1 - y_l)

return mixed_x, mixed_y

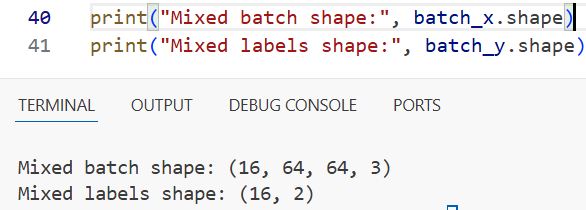

# Dummy dataset for illustration (e.g., California housing satellite images)

x_train = np.random.rand(100, 64, 64, 3)

y_train = tf.keras.utils.to_categorical(np.random.randint(0, 2, 100))

train_gen = MixupDataGenerator(x_train, y_train, batch_size=16)

print("Mixed batch shape:", batch_x.shape)

print("Mixed labels shape:", batch_y.shape) I executed the above example code and added the screenshot below.
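The two reshapes in the generator are what let a single mixing factor per sample broadcast over pixels and classes. Here is a small NumPy-only sketch of that step, using a made-up mini-batch of 4 images and 3 classes, which also checks that the mixed labels remain valid soft labels.

```python
import numpy as np

rng = np.random.default_rng(seed=1)

# Hypothetical mini-batch: 4 RGB images of 8x8 pixels and 3 classes.
batch_x = rng.random((4, 8, 8, 3))
batch_y = np.eye(3)[rng.integers(0, 3, size=4)]

# One mixing factor per sample, reshaped so it broadcasts over pixels and classes.
lam = rng.beta(0.2, 0.2, size=4)
x_l = lam.reshape(-1, 1, 1, 1)
y_l = lam.reshape(-1, 1)

# Pair every sample with a randomly chosen partner from the same batch.
perm = rng.permutation(4)
mixed_x = batch_x * x_l + batch_x[perm] * (1 - x_l)
mixed_y = batch_y * y_l + batch_y[perm] * (1 - y_l)

print(mixed_x.shape, mixed_y.shape)
print(mixed_y.sum(axis=1))  # each row still sums to 1
```

Because each mixed label is a weighted average of two one-hot vectors with weights that add up to 1, it can be fed directly to a categorical cross-entropy loss.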

Method 3: Integrate MixUp into the Keras fit() Training Loop

I often find it cleaner to apply MixUp inside train_step by subclassing tf.keras.Model.

This approach keeps the augmentation logic encapsulated within the model itself, so the input pipeline stays unchanged.

import tensorflow as tf

class MixupModel(tf.keras.Model):

def __init__(self, model, alpha=0.2):

super(MixupModel, self).__init__()

self.model = model

self.alpha = alpha

def train_step(self, data):

x, y = data

# Apply MixUp transformation

batch_size = tf.shape(x)[0]

l = tf.compat.v1.distributions.Beta(self.alpha, self.alpha).sample(batch_size)

x_l = tf.reshape(l, [-1, 1, 1, 1])

y_l = tf.reshape(l, [-1, 1])

x_mixed = x * x_l + x[::-1] * (1 - x_l)

y_mixed = y * y_l + y[::-1] * (1 - y_l)

with tf.GradientTape() as tape:

y_pred = self.model(x_mixed, training=True)

loss = self.compiled_loss(y_mixed, y_pred)

trainable_vars = self.trainable_variables

gradients = tape.gradient(loss, trainable_vars)

self.optimizer.apply_gradients(zip(gradients, trainable_vars))

self.compiled_metrics.update_state(y_mixed, y_pred)

return {m.name: m.result() for m in self.metrics}

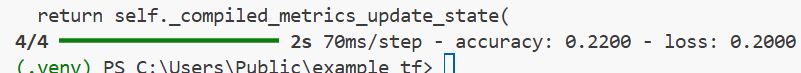

# Building a simple Keras model for American Road Sign Classification

base_model = tf.keras.Sequential([

tf.keras.layers.Conv2D(32, 3, activation='relu', input_shape=(64, 64, 3)),

tf.keras.layers.Flatten(),

tf.keras.layers.Dense(5, activation='softmax')

])

mixup_model = MixupModel(base_model)

mixup_model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])I executed the above example code and added the screenshot below.
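One detail of the `x[::-1]` trick used in train_step is worth seeing explicitly: reversing the batch pairs each sample with its mirror position. The short NumPy sketch below uses a hypothetical batch of 5 samples, represented only by their indices, to show which samples get blended together.

```python
import numpy as np

batch_size = 5
indices = np.arange(batch_size)

# x[::-1] pairs sample i with sample batch_size - 1 - i.
partners = indices[::-1]
pairs = list(zip(indices.tolist(), partners.tolist()))
print(pairs)  # [(0, 4), (1, 3), (2, 2), (3, 1), (4, 0)]

# Samples that are paired with themselves are left unchanged by the blend.
self_paired = [i for i, j in pairs if i == j]
print(self_paired)  # [2]
```

With an odd batch size, the middle sample is mixed with itself and passes through unaltered. If that matters for your data, a random permutation of the batch, as in Method 2, is one alternative.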

Method 4: MixUp Augmentation for Keras Using tf.data Pipelines

Using tf.data is an efficient way to feed data into a Keras model, and I use it for high-performance production pipelines.

This method allows you to map the MixUp function over your dataset, enabling parallel processing and prefetching for maximum speed.

import tensorflow as tf
import numpy as np

def apply_mixup(ds_one, ds_two, alpha=0.2):
    # Zip two datasets together to get pairs of images
    dataset = tf.data.Dataset.zip((ds_one, ds_two))

    def mixup_fn(data_1, data_2):
        img1, lbl1 = data_1
        img2, lbl2 = data_2

        # Draw the mixing weight
        l = tf.cast(tf.compat.v1.distributions.Beta(alpha, alpha).sample(), tf.float32)
        mixed_img = l * img1 + (1 - l) * img2
        mixed_lbl = l * lbl1 + (1 - l) * lbl2
        return mixed_img, mixed_lbl

    return dataset.map(mixup_fn, num_parallel_calls=tf.data.AUTOTUNE)

# Creating mock datasets (representing medical imaging scans)
# Images and labels are stored as float32 so they match the dtype of the mixing weight
ds1 = tf.data.Dataset.from_tensor_slices((np.random.rand(100, 128, 128, 3).astype("float32"), np.eye(2, dtype="float32")[np.random.randint(0, 2, 100)]))
ds2 = tf.data.Dataset.from_tensor_slices((np.random.rand(100, 128, 128, 3).astype("float32"), np.eye(2, dtype="float32")[np.random.randint(0, 2, 100)]))

# Mix individual samples first, then batch the result
mixed_dataset = apply_mixup(ds1, ds2).batch(16).prefetch(tf.data.AUTOTUNE)

In this tutorial, I have covered how to use MixUp augmentation for image classification in Keras using several different workflows.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.