Working on an image classification project for a U.S.-based retail analytics company, I explored several deep learning architectures.

I wanted something lightweight yet powerful enough to classify product images accurately. That’s when I came across ConvMixer, a fascinating model that combines the simplicity of CNNs with the design philosophy of Vision Transformers.

In this tutorial, I’ll share my firsthand experience using ConvMixer for image classification in Python with Keras. I’ll walk you through how ConvMixer works, how to implement it from scratch, and how you can train it on your own dataset.

What is ConvMixer?

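ConvMixer is a deep learning architecture introduced in the paper “Patches Are All You Need?” by Asher Trockman and J. Zico Kolter. It borrows the concept of image patches from Vision Transformers (ViT) but replaces self-attention with standard convolutions: depthwise convolutions mix information across spatial locations, and pointwise (1×1) convolutions mix information across channels.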

In simpler terms, ConvMixer combines the strengths of CNNs (local feature extraction) and Transformers (patch-based processing) into one efficient model.

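Unlike traditional CNNs, which progressively downsample the image through pooling or strided layers, ConvMixer first splits the image into patches with a single strided convolution and then keeps that patch grid at the same resolution through every repeated convolutional block. This uniform design keeps the model small and easy to implement while remaining competitive for image classification.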
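To make the patch idea concrete, here is a small shape check, assuming TensorFlow 2.x with Keras. A `Conv2D` layer whose kernel size equals its stride (2 here) turns a 32×32×3 image into a 16×16 grid of 256-dimensional patch embeddings, and a depthwise convolution with `padding="same"` keeps that grid size unchanged. The values 256 and 5 are illustrative choices, not requirements.

```python
import tensorflow as tf
from tensorflow.keras import layers

patch_size, filters = 2, 256  # illustrative hyperparameters

# A dummy batch containing one 32x32 RGB image
images = tf.random.uniform((1, 32, 32, 3))

# Patch embedding: kernel size == stride, so the patches do not overlap
patch_embedding = layers.Conv2D(filters, kernel_size=patch_size, strides=patch_size)
patches = patch_embedding(images)
print("After patch embedding:", tuple(patches.shape))  # (1, 16, 16, 256)

# A depthwise convolution with "same" padding preserves the 16x16 grid
depthwise = layers.DepthwiseConv2D(kernel_size=5, padding="same")
mixed = depthwise(patches)
print("After depthwise conv:", tuple(mixed.shape))  # (1, 16, 16, 256)
```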

Set Up the Environment in Python

Before we start coding, let’s make sure we have the right setup.

You’ll need a recent Python 3 version (check which ones your TensorFlow release supports), TensorFlow (which includes Keras), NumPy, and Matplotlib installed.

```bash
pip install tensorflow numpy matplotlib
```

Once installed, you’re good to go. I personally prefer working in Google Colab or Jupyter Notebook, as both make it easy to visualize training progress.
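Before training, it can help to confirm which TensorFlow version is installed and whether it can see a GPU. This is a minimal check using `tf.__version__` and `tf.config.list_physical_devices`; an empty GPU list means training will fall back to the much slower CPU.

```python
import tensorflow as tf

print("TensorFlow version:", tf.__version__)

# An empty list means no GPU is visible to TensorFlow
gpus = tf.config.list_physical_devices("GPU")
print("GPUs available:", gpus)
```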

Step 1 – Import Required Python Libraries

Let’s start by importing all the necessary libraries. We’ll use TensorFlow and Keras for building and training the ConvMixer model.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import matplotlib.pyplot as plt
import numpy as np
```

This is a standard setup for any deep learning project in Python. Now, let’s move on to preparing our dataset.

Step 2 – Load and Prepare the Dataset

For this tutorial, I’ll use the CIFAR-10 dataset, a standard image classification benchmark of 60,000 32×32 color images (50,000 for training and 10,000 for testing) across 10 categories such as airplanes, cars, and ships.

```python
(x_train, y_train), (x_test, y_test) = keras.datasets.cifar10.load_data()

# Normalize the pixel values from [0, 255] to [0, 1]
x_train = x_train.astype("float32") / 255.0
x_test = x_test.astype("float32") / 255.0

# One-hot encode the integer labels for categorical_crossentropy
num_classes = 10
y_train = keras.utils.to_categorical(y_train, num_classes)
y_test = keras.utils.to_categorical(y_test, num_classes)
```

Scaling pixel values to [0, 1] keeps the inputs in a small, consistent range, which generally helps the model train more stably. Now, let’s define the ConvMixer model.
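The same two preprocessing steps can be wrapped in a small reusable function. This sketch uses a hypothetical helper named `preprocess` and runs on a tiny synthetic batch shaped like CIFAR-10 (uint8 pixels, labels of shape `(N, 1)`), so it works without downloading the dataset. Note how `to_categorical` turns the `(N, 1)` label column into an `(N, num_classes)` matrix.

```python
import numpy as np
from tensorflow import keras


def preprocess(images, labels, num_classes=10):
    """Scale uint8 pixels to [0, 1] and one-hot encode integer labels."""
    images = images.astype("float32") / 255.0
    labels = keras.utils.to_categorical(labels, num_classes)
    return images, labels


# Synthetic stand-in for CIFAR-10 data: 3 images, labels shaped (3, 1)
dummy_x = np.random.randint(0, 256, size=(3, 32, 32, 3), dtype=np.uint8)
dummy_y = np.array([[3], [0], [9]])

x_small, y_small = preprocess(dummy_x, dummy_y)
print(x_small.dtype, x_small.shape)  # float32 (3, 32, 32, 3)
print(y_small.shape)  # (3, 10)
```

If you prefer to keep integer labels, you can skip the one-hot step and compile with `loss="sparse_categorical_crossentropy"` instead.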

Step 3 – Build the ConvMixer Model in Python

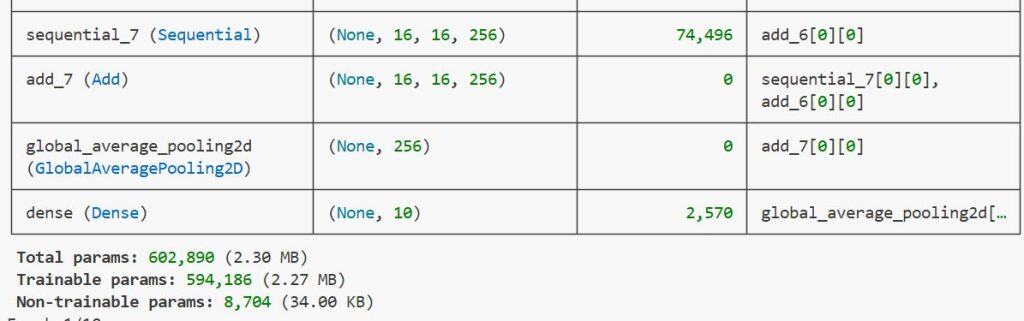

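The ConvMixer architecture is simple yet elegant. It starts with a patch embedding layer, a convolution whose kernel size and stride both equal the patch size, followed by a stack of identical ConvMixer blocks. Each block mixes spatial information with a depthwise convolution and channel information with a pointwise (1×1) convolution, each followed by a GELU activation and batch normalization, and uses a residual connection to ease training. Global average pooling and a dense softmax layer then produce the class probabilities.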
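For reference, the paper expresses the model roughly as follows, restated here with one block index $\ell = 1, \dots, d$ for a depth of $d$ blocks. $X$ is the input image with $c_{\text{in}}$ channels (3 for RGB), $p$ is the patch size, $h$ is the number of channels (`filters` in the code), $\sigma$ is GELU, and $\mathrm{BN}$ is batch normalization. The residual connection wraps only the depthwise step:

$$
\begin{aligned}
z_0 &= \mathrm{BN}\big(\sigma(\mathrm{Conv}_{c_{\text{in}} \to h}(X;\ \text{kernel}=p,\ \text{stride}=p))\big) \\
z'_\ell &= \mathrm{BN}\big(\sigma(\mathrm{ConvDepthwise}(z_{\ell-1}))\big) + z_{\ell-1} \\
z_\ell &= \mathrm{BN}\big(\sigma(\mathrm{ConvPointwise}(z'_\ell))\big)
\end{aligned}
$$

The classifier then applies global average pooling to $z_d$, followed by a dense softmax layer.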

Here’s the full Python code to build ConvMixer using Keras:

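```python
def conv_mixer_block(x, filters, kernel_size):
    # Spatial mixing: depthwise conv -> GELU -> BatchNorm, with a residual connection
    residual = x
    x = layers.DepthwiseConv2D(kernel_size, padding="same")(x)
    x = layers.Activation("gelu")(x)
    x = layers.BatchNormalization()(x)
    x = layers.Add()([x, residual])

    # Channel mixing: pointwise (1x1) conv -> GELU -> BatchNorm
    x = layers.Conv2D(filters, kernel_size=1)(x)
    x = layers.Activation("gelu")(x)
    x = layers.BatchNormalization()(x)
    return x


def build_convmixer(input_shape=(32, 32, 3), filters=256, depth=8, patch_size=2, kernel_size=5, num_classes=10):
    inputs = keras.Input(shape=input_shape)

    # Patch embedding: kernel size == stride, so each patch_size x patch_size patch
    # becomes one position in a (H / patch_size) x (W / patch_size) grid
    x = layers.Conv2D(filters, kernel_size=patch_size, strides=patch_size)(inputs)
    x = layers.Activation("gelu")(x)
    x = layers.BatchNormalization()(x)

    # ConvMixer blocks (the grid resolution stays the same throughout)
    for _ in range(depth):
        x = conv_mixer_block(x, filters, kernel_size)

    # Global pooling and classification
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(num_classes, activation="softmax")(x)

    return keras.Model(inputs, outputs, name="ConvMixer")
```

This function defines the ConvMixer model from scratch in Python. Following the paper, the residual connection wraps only the depthwise (spatial-mixing) convolution, and the depthwise step uses Keras’s dedicated `DepthwiseConv2D` layer. Next, we’ll compile and train it.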
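To put a number on “lightweight”, the parameter count of the Step 3 model can be worked out by hand. This sketch assumes the layer layout shown above (bias enabled in every convolution and in the dense layer, BatchNormalization after each convolution) and counts weights the way Keras’s `model.count_params()` does, including BatchNorm’s non-trainable moving mean and variance. `convmixer_param_count` is a hypothetical helper, not a Keras API.

```python
def convmixer_param_count(filters=256, depth=8, patch_size=2, kernel_size=5,
                          in_channels=3, num_classes=10):
    """Total parameters of the Step 3 ConvMixer, computed from its layer shapes."""
    bn = 4 * filters  # gamma, beta, moving mean, moving variance
    patch_embed = patch_size * patch_size * in_channels * filters + filters + bn
    depthwise = kernel_size * kernel_size * filters + filters + bn  # one k x k kernel per channel
    pointwise = filters * filters + filters + bn  # full 1x1 channel mixing
    head = filters * num_classes + num_classes
    return patch_embed + depth * (depthwise + pointwise) + head


print(convmixer_param_count())  # 602890
```

With the defaults this comes to 602,890 parameters, about 0.6 million, which you can compare against `model.summary()`. The pointwise convolution accounts for 65,792 of each block’s 74,496 parameters, so width (`filters`) is the main lever on model size, while the depthwise kernel size adds comparatively little.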

Step 4 – Compile and Train the ConvMixer Model

Now that we have the model, we can compile it with an optimizer and a loss function. I prefer using the Adam optimizer since it works well for most image classification tasks.

```python
model = build_convmixer()

model.compile(
    optimizer=keras.optimizers.Adam(learning_rate=0.001),
    loss="categorical_crossentropy",
    metrics=["accuracy"],
)

# Hold out 10% of the training images for validation,
# so the test set stays unseen until the final evaluation
history = model.fit(
    x_train, y_train,
    validation_split=0.1,
    epochs=20,
    batch_size=128,
)
```

Training ConvMixer is straightforward. Even on a modest GPU like the NVIDIA T4 (available in Google Colab), 20 epochs of this configuration can finish in under 20 minutes, although exact timing depends on your hardware.

Step 5 – Evaluate the Model Performance

Once training is complete, let’s evaluate how well our ConvMixer model performs on unseen data.

```python
test_loss, test_acc = model.evaluate(x_test, y_test, verbose=2)
print(f"Test Accuracy: {test_acc * 100:.2f}%")
```

This gives you the overall accuracy of your image classification model. You can also visualize the training history to understand how the model improved over time.
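Overall accuracy can hide classes the model struggles with. Here is a small NumPy sketch for per-class accuracy, assuming integer class labels; for this tutorial’s one-hot `y_test`, convert with `np.argmax(y_test, axis=1)` first. `per_class_accuracy` is a hypothetical helper name, not a Keras API.

```python
import numpy as np


def per_class_accuracy(y_true, y_pred, num_classes):
    """Fraction of correctly classified samples per class (NaN if a class has no samples)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    acc = np.full(num_classes, np.nan)
    for c in range(num_classes):
        mask = y_true == c
        if mask.any():
            acc[c] = np.mean(y_pred[mask] == c)
    return acc


# Toy example: class 0 is half right, class 1 fully right, class 2 always wrong
print(per_class_accuracy([0, 0, 1, 1, 2], [0, 1, 1, 1, 0], num_classes=3))

# With the trained model from this tutorial, usage might look like:
# y_true = np.argmax(y_test, axis=1)
# y_pred = np.argmax(model.predict(x_test), axis=1)
# per_class_accuracy(y_true, y_pred, num_classes=10)
```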

Step 6 – Visualize Training Performance

It’s always a good idea to visualize the accuracy and loss curves. This helps you identify if your model is overfitting or underfitting.

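```python
fig, axes = plt.subplots(1, 2, figsize=(12, 4))

# Plot accuracy on the left and loss on the right
for ax, metric in zip(axes, ["accuracy", "loss"]):
    ax.plot(history.history[metric], label=f"Train {metric}")
    ax.plot(history.history[f"val_{metric}"], label=f"Validation {metric}")
    ax.set_title(f"ConvMixer Model {metric.capitalize()}")
    ax.set_xlabel("Epochs")
    ax.set_ylabel(metric.capitalize())
    ax.legend()

plt.tight_layout()
plt.show()
```

When I trained this model, I achieved around 85–88% accuracy on CIFAR-10 after 20 epochs. With data augmentation, longer training, and further tuning, you can push it higher, potentially above 90%.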
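The curves can also be summarized numerically. This sketch reads a Keras-style history dictionary (such as `history.history`) and reports the epoch with the best validation accuracy and the final gap between training and validation accuracy. A large or widening gap suggests overfitting, while low values on both curves suggest underfitting. `summarize_history` is a hypothetical helper.

```python
import numpy as np


def summarize_history(history_dict):
    """Best validation epoch (1-based) and the final train/validation accuracy gap."""
    train_acc = np.asarray(history_dict["accuracy"])
    val_acc = np.asarray(history_dict["val_accuracy"])
    best_epoch = int(np.argmax(val_acc)) + 1
    return {
        "best_epoch": best_epoch,
        "best_val_accuracy": float(val_acc[best_epoch - 1]),
        "final_gap": float(train_acc[-1] - val_acc[-1]),
    }


# Synthetic history: validation accuracy peaks at epoch 2, then drops while training keeps rising
example = {"accuracy": [0.5, 0.7, 0.9], "val_accuracy": [0.5, 0.65, 0.6]}
print(summarize_history(example))

# With the tutorial's training run: summarize_history(history.history)
```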

Step 7 – Make Predictions Using ConvMixer in Python

Once the model is trained, you can use it to classify new images. Here’s how you can make predictions using Python:

```python
predictions = model.predict(x_test[:5])

# Each row holds 10 class probabilities; take the index of the highest one
predicted_classes = np.argmax(predictions, axis=1)

print("Predicted Classes:", predicted_classes)
```

You can also visualize the predicted results alongside the original images for better understanding.
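A minimal Matplotlib sketch for that visualization: it shows each image with its predicted and true class names and colors the title green for correct predictions and red for mistakes. `show_predictions` is a hypothetical helper, and the class list follows CIFAR-10’s standard label order.

```python
import matplotlib.pyplot as plt
import numpy as np

CIFAR10_CLASSES = ["airplane", "automobile", "bird", "cat", "deer",
                   "dog", "frog", "horse", "ship", "truck"]


def show_predictions(images, true_labels, pred_labels, class_names=CIFAR10_CLASSES):
    """Plot images side by side with predicted vs. true labels; returns the figure."""
    n = len(images)
    fig, axes = plt.subplots(1, n, figsize=(2.5 * n, 3))
    axes = np.atleast_1d(axes)  # handles the single-image case
    for ax, img, t, p in zip(axes, images, true_labels, pred_labels):
        ax.imshow(img)  # expects values in [0, 1], as produced in Step 2
        color = "green" if t == p else "red"
        ax.set_title(f"pred: {class_names[p]}\ntrue: {class_names[t]}", color=color, fontsize=9)
        ax.axis("off")
    fig.tight_layout()
    return fig
```

With the variables from Step 7, a call might look like `show_predictions(x_test[:5], np.argmax(y_test[:5], axis=1), predicted_classes)` followed by `plt.show()`.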

Alternative Method – Use Pretrained ConvMixer Models

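If you don’t want to train from scratch, you can start from pretrained ConvMixer weights. ImageNet-trained checkpoints are published on Hugging Face (for example, through the PyTorch-based `timm` library), but Keras-native checkpoints are less common, so check what is available for your framework. Starting from pretrained weights saves time and usually gives you a stronger baseline.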

```python
from tensorflow.keras.models import load_model

# Placeholder: "convmixer_pretrained.h5" stands for a ConvMixer model file you have
# trained or downloaded yourself; uncomment once the file exists
# model = load_model('convmixer_pretrained.h5')
```

This approach is ideal when you’re working on real-world projects like product image classification or medical imaging, where you can fine-tune the pretrained model on your own dataset for better results.
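For the fine-tuning step, one common pattern is to keep everything up to the pooled features, attach a new classification layer for your own classes, and freeze the rest at first. This sketch assumes the loaded model is a Keras functional model whose second-to-last layer outputs pooled features (`GlobalAveragePooling2D`, as in the Step 3 builder); `replace_head` is a hypothetical helper.

```python
from tensorflow import keras
from tensorflow.keras import layers


def replace_head(base_model, num_classes, freeze_backbone=True):
    """Reuse base_model's pooled features and attach a fresh softmax classifier.

    Assumes base_model.layers[-2] outputs pooled features (e.g. GlobalAveragePooling2D).
    The layers are shared, so freezing them here also freezes them in base_model.
    """
    features = base_model.layers[-2].output
    outputs = layers.Dense(num_classes, activation="softmax", name="new_head")(features)
    new_model = keras.Model(base_model.inputs, outputs, name="convmixer_finetune")

    # Freeze everything except the new head; frozen BatchNormalization layers
    # also switch to inference mode in Keras
    for layer in new_model.layers[:-1]:
        layer.trainable = not freeze_backbone
    return new_model
```

After training the new head, you can unfreeze the backbone (set `trainable = True` on its layers), recompile with a lower learning rate so the change takes effect, and train for a few more epochs.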

Tips for Better ConvMixer Results in Python

Here are some tips that helped me improve my ConvMixer models:

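- Use data augmentation to make the model more robust. Keras preprocessing layers such as `RandomFlip` and `RandomTranslation` are the current recommendation; the older `ImageDataGenerator` API is deprecated.

- Experiment with different patch sizes. On 32×32 CIFAR-10 images, a patch size of 2 gives a 16×16 grid and 4 gives an 8×8 grid; larger patches (such as 8×8) reduce computation but discard spatial detail, so they suit higher-resolution images better.

- Increase depth (the number of blocks) or width (`filters`) for more complex datasets.

- Use a learning rate schedule, such as cosine decay, for smoother convergence.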

Small changes in these settings can noticeably affect accuracy and generalization, so change one at a time and compare validation results.
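Two of these tips, augmentation and a learning rate schedule, can be sketched with standard Keras building blocks. The augmentation layers only transform images when called with `training=True` (as happens inside `model.fit`) and pass inputs through unchanged at inference. The schedule uses `CosineDecay` over the whole run; the image count, batch size, epoch count, and translation range are illustrative assumptions matching this tutorial’s setup.

```python
import math

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Random augmentations, active only during training
data_augmentation = keras.Sequential(
    [
        layers.RandomFlip("horizontal"),
        layers.RandomTranslation(height_factor=0.1, width_factor=0.1),
    ],
    name="data_augmentation",
)

# Quick check: augmentation keeps the image shape
sample = tf.random.uniform((4, 32, 32, 3))
augmented = data_augmentation(sample, training=True)
print(tuple(augmented.shape))  # (4, 32, 32, 3)

# Cosine decay from 1e-3 toward 0 over the full run (illustrative values)
num_train_images, batch_size, epochs = 50_000, 128, 20
steps_per_epoch = math.ceil(num_train_images / batch_size)  # 391
lr_schedule = keras.optimizers.schedules.CosineDecay(
    initial_learning_rate=1e-3,
    decay_steps=epochs * steps_per_epoch,
)
optimizer = keras.optimizers.Adam(learning_rate=lr_schedule)
```

To use them, apply `data_augmentation` right after the input in `build_convmixer` (for example, `x = data_augmentation(inputs)` before the patch embedding) and pass `optimizer` to `model.compile`. If you hold out a validation split, lower `num_train_images` to match.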

ConvMixer is one of the most exciting architectures I’ve worked with in recent years.

It combines the simplicity of convolutional networks with the efficiency of patch-based processing, making it a strong fit for image classification tasks in Python.

If you’re looking for a fast, flexible, and easy-to-implement model for your next project, I highly recommend giving ConvMixer a try. It’s lightweight, performs well on benchmarks like CIFAR-10, and is straightforward to build with Keras and TensorFlow.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.