I’ve often found myself needing to process data row by row. While Pandas is designed for vectorization, there are times when custom logic requires a manual loop.

In this tutorial, I will show you exactly how to iterate through rows in a Pandas DataFrame. I’ll share the methods I use daily and point out which ones are the fastest for your data projects.

Why You Might Need to Iterate Through Rows

Most of the time, I try to avoid loops in Python because they can be slow. However, if you are calling an external API for each row or performing complex conditional logic, a loop is often necessary.

The first example below uses a small dataset of real estate listings in US cities such as New York, Los Angeles, and Houston. The later examples use similarly small US-themed datasets.

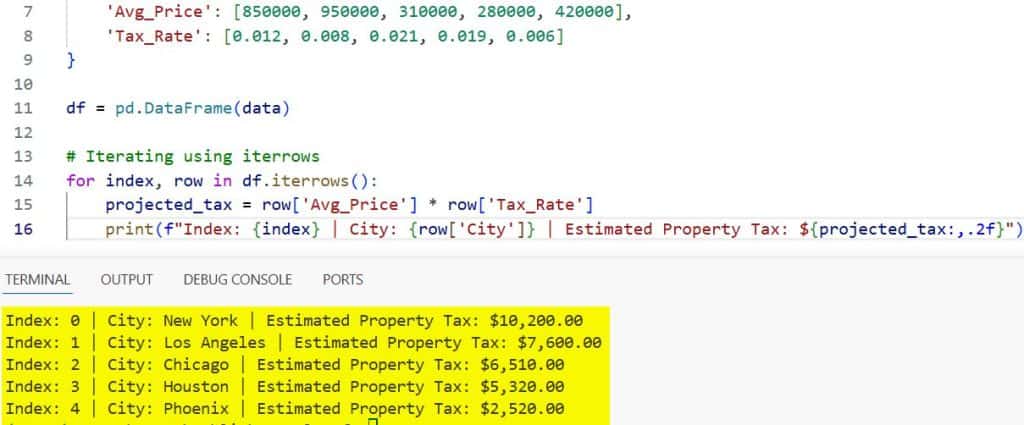

Method 1: Use the iterrows() Function

The iterrows() method is perhaps the most common way to loop. It returns an iterator yielding the index and the row data as a Series.

In my experience, this is the most readable method for beginners, although it isn’t the fastest for massive datasets.

import pandas as pd

# Creating a dataset of US Real Estate listings
data = {
    'City': ['New York', 'Los Angeles', 'Chicago', 'Houston', 'Phoenix'],
    'State': ['NY', 'CA', 'IL', 'TX', 'AZ'],
    'Avg_Price': [850000, 950000, 310000, 280000, 420000],
    'Tax_Rate': [0.012, 0.008, 0.021, 0.019, 0.006]
}

df = pd.DataFrame(data)

# Iterating using iterrows
for index, row in df.iterrows():
    projected_tax = row['Avg_Price'] * row['Tax_Rate']
    print(f"Index: {index} | City: {row['City']} | Estimated Property Tax: ${projected_tax:,.2f}")

I executed the above example code and added the screenshot below.

When I use this, I always remember that iterrows() does not preserve dtypes across the rows. Because it packs each row into a single Series with one dtype, integers are upcast to floats when the row’s columns are all numeric but mix integers and floats.

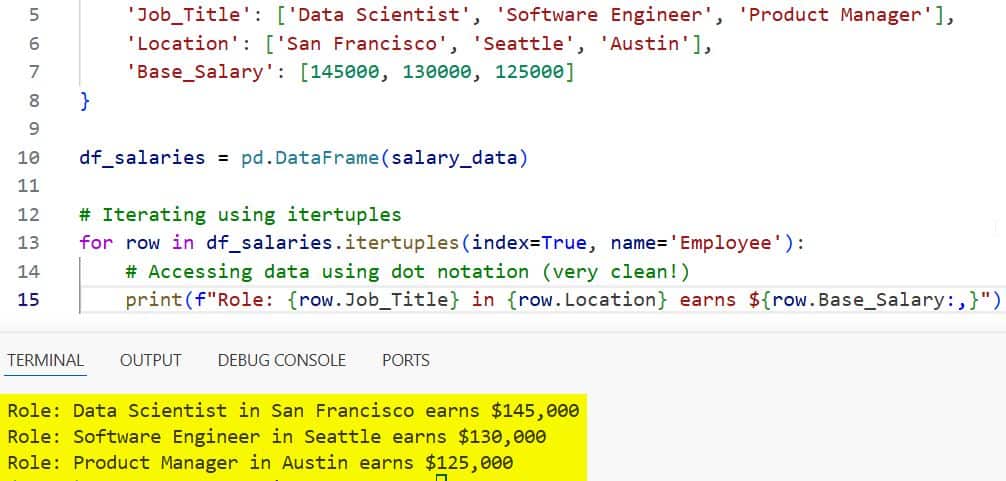
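To make the dtype caveat concrete, here is a minimal sketch. The df_num frame and its column names are made up for illustration and are not part of the real estate dataset above.

```python
import pandas as pd

# Hypothetical all-numeric frame: one integer column, one float column
df_num = pd.DataFrame({"Units": [3, 5], "Price": [9.99, 4.50]})

# In the DataFrame, Units is stored with an integer dtype
print(df_num.dtypes)

# iterrows() packs each row into one Series, so both values share a dtype
_, first_row = next(df_num.iterrows())
print(first_row.dtype)           # float64
print(type(first_row["Units"]))  # a float type, no longer an integer

# itertuples() keeps each column's own type
first_tuple = next(df_num.itertuples(index=False))
print(type(first_tuple.Units))
```

If the integer type matters for your logic (for example, when the value is used as a count or an ID), itertuples() is usually the safer choice.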

Method 2: Use the itertuples() Function

If you have a larger DataFrame, I highly recommend using itertuples(). It is significantly faster than iterrows().

This method returns a named tuple for each row. I prefer this because accessing attributes is faster than looking up values in a Series.

import pandas as pd

# Data representing US Tech Salaries
salary_data = {
    'Job_Title': ['Data Scientist', 'Software Engineer', 'Product Manager'],
    'Location': ['San Francisco', 'Seattle', 'Austin'],
    'Base_Salary': [145000, 130000, 125000]
}

df_salaries = pd.DataFrame(salary_data)

# Iterating using itertuples
for row in df_salaries.itertuples(index=True, name='Employee'):
    # Accessing data using dot notation (very clean!)
    print(f"Role: {row.Job_Title} in {row.Location} earns ${row.Base_Salary:,}")

I executed the above example code and added the screenshot below.

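itertuples() avoids building a Series for every row, which is where most of its speed advantage over iterrows() comes from, and it keeps each column’s own dtype. If you don’t need the index, you can set index=False to leave it out of each tuple.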

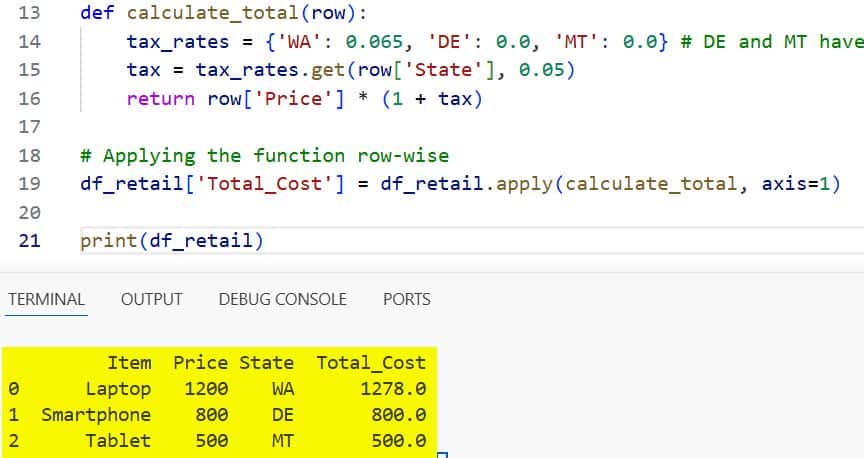
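The index and name parameters of itertuples() are worth a quick look. The sketch below uses a hypothetical df_jobs frame whose second column name contains a space, to show what happens when a column name is not a valid Python attribute name.

```python
import pandas as pd

df_jobs = pd.DataFrame({
    "Job_Title": ["Data Scientist", "Software Engineer"],
    "Base Salary": [145000, 130000],  # note the space in this column name
})

# index=False leaves the index out of each tuple
for row in df_jobs.itertuples(index=False):
    # "Base Salary" cannot be an attribute name, so pandas renames that field;
    # _fields shows the names actually available, and positions always work
    print(row._fields, row.Job_Title, row[1])

# name=None yields plain tuples, which unpack directly in the for statement
for job_title, base_salary in df_jobs.itertuples(index=False, name=None):
    print(f"{job_title}: ${base_salary:,}")
```

Keeping column names as valid identifiers (for example, Base_Salary) avoids the renaming entirely and keeps dot notation readable.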

Method 3: Use the apply() Function

Technically, apply() isn’t a “loop” in the traditional Python sense, but it allows you to apply a function along an axis. I use this when I want to create a new column based on existing row values.

In the US, sales tax varies by state. Let’s use apply to calculate the total cost of an item including state-specific tax.

import pandas as pd

# Retail data for US stores
retail_data = {
    'Item': ['Laptop', 'Smartphone', 'Tablet'],
    'Price': [1200, 800, 500],
    'State': ['WA', 'DE', 'MT']
}

df_retail = pd.DataFrame(retail_data)

# Function to calculate tax (simplified US rates)
def calculate_total(row):
    tax_rates = {'WA': 0.065, 'DE': 0.0, 'MT': 0.0}  # DE and MT have no sales tax
    tax = tax_rates.get(row['State'], 0.05)
    return row['Price'] * (1 + tax)

# Applying the function row-wise
df_retail['Total_Cost'] = df_retail.apply(calculate_total, axis=1)

print(df_retail)

I executed the above example code and added the screenshot below.

I find apply() to be the most “Pandas-native” way to handle row-level operations when vectorization isn’t easy.
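For comparison, this particular lookup happens to be simple enough to vectorize. The sketch below is one possible column-wise version built on Series.map, using the same simplified rates and the same 5% fallback as the example above. It is an alternative to consider, not a replacement for apply() when the logic really is row-specific.

```python
import pandas as pd

df_retail = pd.DataFrame({
    "Item": ["Laptop", "Smartphone", "Tablet"],
    "Price": [1200, 800, 500],
    "State": ["WA", "DE", "MT"],
})

tax_rates = {"WA": 0.065, "DE": 0.0, "MT": 0.0}  # simplified rates

# Row-wise version, equivalent to the apply() example
by_apply = df_retail.apply(
    lambda row: row["Price"] * (1 + tax_rates.get(row["State"], 0.05)), axis=1
)

# Column-wise version: look up every rate at once, then multiply
rates = df_retail["State"].map(tax_rates).fillna(0.05)  # 0.05 for unknown states
by_map = df_retail["Price"] * (1 + rates)

print(by_map.tolist())
```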

Method 4: Iterate Using the DataFrame Index

Sometimes, the simplest way is a standard range loop over the row positions, using .iloc to fetch each row. This is useful if you need to look at the “next” or “previous” row while iterating.

import pandas as pd

# Monthly US Fuel Prices (Example)
fuel_data = {
    'Month': ['Jan', 'Feb', 'Mar', 'Apr'],
    'Price_Per_Gallon': [3.15, 3.22, 3.45, 3.38]
}

df_fuel = pd.DataFrame(fuel_data)

# Using index to compare with the previous month
for i in range(len(df_fuel)):
    current_price = df_fuel.iloc[i]['Price_Per_Gallon']
    if i > 0:
        prev_price = df_fuel.iloc[i-1]['Price_Per_Gallon']
        diff = current_price - prev_price
        print(f"{df_fuel.iloc[i]['Month']}: Change from last month: ${diff:.2f}")
    else:
        print(f"{df_fuel.iloc[i]['Month']}: Starting Price ${current_price}")

While iloc access inside a loop is generally slow, it gives me the most control over the iteration logic.
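When the only reason for the positional loop is to compare each row with the previous one, Series.shift can often do the same job without a loop. The following is a minimal sketch on the same fuel data. The Prev_Price and Change column names are my own choice.

```python
import pandas as pd

df_fuel = pd.DataFrame({
    "Month": ["Jan", "Feb", "Mar", "Apr"],
    "Price_Per_Gallon": [3.15, 3.22, 3.45, 3.38],
})

# shift(1) moves every price down one row, so each row sees the previous month
df_fuel["Prev_Price"] = df_fuel["Price_Per_Gallon"].shift(1)
df_fuel["Change"] = df_fuel["Price_Per_Gallon"] - df_fuel["Prev_Price"]

# The first row has no previous month, so its Change is NaN
print(df_fuel)
```

Series.diff() gives the same Change column in one call. The explicit loop still makes sense when each step depends on a decision made in an earlier step.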

Method 5: Use loc for Conditional Updates

If your goal for iterating is to update specific rows based on a condition, you should skip the loop entirely and use .loc.

I see many developers write long loops to filter data. Instead, I use this vectorized approach, which is nearly instantaneous.

import pandas as pd

# US Employee Performance Data
emp_data = {
    'Name': ['Alice', 'Bob', 'Charlie', 'David'],
    'Rating': [4.5, 3.2, 4.8, 2.5],
    'Current_Salary': [90000, 80000, 110000, 70000]
}

df_emp = pd.DataFrame(emp_data)

# Instead of looping, use .loc to apply a 10% bonus for high performers
df_emp.loc[df_emp['Rating'] > 4.0, 'Bonus'] = df_emp['Current_Salary'] * 0.10
df_emp.loc[df_emp['Rating'] <= 4.0, 'Bonus'] = 0

print(df_emp)

Performance Comparison: Which one should you use?

I have tested these methods on DataFrames with over 100,000 rows. Here is my rule of thumb:

- Vectorization: Always the first choice. It is often orders of magnitude faster than any Python-level loop.

- itertuples(): Use this if you absolutely must loop. It is the fastest of the built-in row iterators.

- apply(): Best for complex logic that results in new columns.

- iterrows(): Only use for very small DataFrames where performance doesn’t matter.
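If you want to check this ranking on your own machine, a rough timing sketch like the one below is a reasonable starting point. The synthetic data, the row count, and the function names are all assumptions made for illustration. The absolute numbers will vary with your hardware and Pandas version, so treat the output as a relative comparison only.

```python
import timeit

import numpy as np
import pandas as pd

# Synthetic data; size and column names are arbitrary choices for this sketch
rng = np.random.default_rng(0)
n_rows = 2_000
df_bench = pd.DataFrame({
    "Avg_Price": rng.integers(100_000, 1_000_000, size=n_rows),
    "Tax_Rate": rng.uniform(0.005, 0.025, size=n_rows),
})

def with_iterrows():
    return [row["Avg_Price"] * row["Tax_Rate"] for _, row in df_bench.iterrows()]

def with_itertuples():
    return [row.Avg_Price * row.Tax_Rate for row in df_bench.itertuples(index=False)]

def with_apply():
    return df_bench.apply(lambda row: row["Avg_Price"] * row["Tax_Rate"], axis=1).tolist()

def with_vectorization():
    return (df_bench["Avg_Price"] * df_bench["Tax_Rate"]).tolist()

# Average a few runs of each approach and report milliseconds per run
for func in (with_iterrows, with_itertuples, with_apply, with_vectorization):
    seconds = timeit.timeit(func, number=3) / 3
    print(f"{func.__name__:<20} {seconds * 1000:8.2f} ms per run")
```

Increase n_rows to see how the gap between the approaches changes as the data grows.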

Common Pitfalls to Avoid

One mistake I often see is trying to modify a DataFrame while iterating through it with iterrows().

iterrows() generally hands you a copy of the row, not a view. This means if you change a value in the row variable, the change typically won’t show up in your original DataFrame.

Always use .at or .loc if you need to update values during an iteration.
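Here is a small sketch of the pitfall and the fix. The df_homes frame and the flat 10,000 adjustment are invented for this example.

```python
import pandas as pd

df_homes = pd.DataFrame({
    "City": ["Chicago", "Houston"],
    "Avg_Price": [310000, 280000],
})

for index, row in df_homes.iterrows():
    # Writing to the row only changes a temporary Series
    row["Avg_Price"] = 0

print(df_homes["Avg_Price"].tolist())  # the original prices are still there

for index, row in df_homes.iterrows():
    # Writing through .at targets the DataFrame itself
    df_homes.at[index, "Avg_Price"] = row["Avg_Price"] + 10000

print(df_homes["Avg_Price"].tolist())
```

.at is meant for single-cell access by label, which makes it a good fit inside a loop. For updating many rows at once, a .loc assignment with a boolean mask is usually the better tool.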

Iterate with Dictionary Conversion (The “Secret” Speed Trick)

When I’m dealing with extremely high-performance requirements, and vectorization is impossible, I convert the DataFrame to a list of dictionaries.

This bypasses the Pandas overhead entirely during the loop.

import pandas as pd

# US Census Population (Small subset)
pop_data = {
    'State': ['Florida', 'Georgia', 'North Carolina'],
    'Population': [21500000, 10700000, 10500000]
}

df_pop = pd.DataFrame(pop_data)

# Convert to list of dicts for raw Python speed
data_dict = df_pop.to_dict('records')

for record in data_dict:
    # This loop runs at native Python speed
    if record['Population'] > 20000000:
        print(f"{record['State']} is a high-population state.")

I’ve found this to be even faster than itertuples() in certain scenarios involving heavy string manipulation.

Handle Specific Data Types

In US-based datasets, we often deal with Currency (USD) and Dates (MM/DD/YYYY). When iterating, ensure your columns are cast correctly first.

If a column is an ‘object’ type instead of ‘datetime’, your iteration logic might fail or return unexpected results. I always run df.info() before starting any iteration process.
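As an illustration of what “unexpected results” can look like, the sketch below uses a hypothetical Listed_On column holding MM/DD/YYYY text. While the column is still text, comparisons are alphabetical rather than chronological.

```python
import pandas as pd

# Hypothetical listing dates that arrived as plain text
df_listings = pd.DataFrame({"Listed_On": ["12/01/2023", "01/15/2024"]})

# As text, "12/..." sorts after "01/...", so December 2023 looks like the latest date
latest_as_text = df_listings["Listed_On"].max()
print(latest_as_text)

# Parse the strings with an explicit MM/DD/YYYY format
df_listings["Listed_On"] = pd.to_datetime(df_listings["Listed_On"], format="%m/%d/%Y")

# Now the comparison is chronological
latest_as_date = df_listings["Listed_On"].max()
print(latest_as_date)

# Confirm the dtype before looping
df_listings.info()
```

Passing format explicitly avoids any ambiguity between month-first and day-first dates.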

Use Vectorized String Operations

If you are iterating through rows just to clean text, like removing the “$” sign from a price column, don’t use a loop.

Pandas provides the .str accessor. I use df['Price'].str.replace('$', '', regex=False), which processes all rows at once. Passing regex=False makes sure the “$” is treated as a literal character rather than a regular expression. It’s cleaner and much more professional.
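Put together, a cleaning step might look like the sketch below. The df_prices frame and the Price_USD column name are made up for illustration, and the raw strings assume a “$1,200 USD” style of formatting.

```python
import pandas as pd

# Hypothetical raw prices with a dollar sign, thousands separator, and "USD" suffix
df_prices = pd.DataFrame({"Price": ["$1,200 USD", "$800 USD", "$500 USD"]})

# Each .str call runs over the whole column; regex=False treats the text literally
cleaned = (
    df_prices["Price"]
    .str.replace("USD", "", regex=False)
    .str.replace("$", "", regex=False)
    .str.replace(",", "", regex=False)
    .str.strip()
)

# Convert the cleaned text to numbers so later arithmetic works
df_prices["Price_USD"] = pd.to_numeric(cleaned)

print(df_prices)
```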

Iterating through rows in a Pandas DataFrame is a fundamental skill, but it’s one you should use sparingly.

I’ve found that while iterrows() is easy to write, itertuples() is much faster, and apply() keeps row-wise logic compact when you need a new column. Always remember to check if a vectorized solution exists before reaching for a loop.


I am Bijay Kumar, a Microsoft MVP in SharePoint. Apart from SharePoint, I have been working on Python, machine learning, and artificial intelligence for the last 5 years. During this time I have gained expertise in various Python libraries, such as Tkinter, Pandas, NumPy, Turtle, Django, Matplotlib, TensorFlow, SciPy, and Scikit-Learn, for various clients in the United States, Canada, the United Kingdom, Australia, and New Zealand. Check out my profile.