I’ve spent years wrangling data in Python, and one of the most common things I do is check the size of my dataset.

Whether I am loading a CSV of US Census data or analyzing California housing prices, I always need to know how many records I am dealing with.

Knowing the number of rows helps me verify if my data filters worked or if a merge operation went as expected.

In this tutorial, I will show you the different ways to get the row count of a Pandas DataFrame.

Use the len() Function

The simplest way to get the number of rows is by using the built-in Python len() function.

I use this method most often because it is readable and very fast. It works exactly like finding the length of a list.

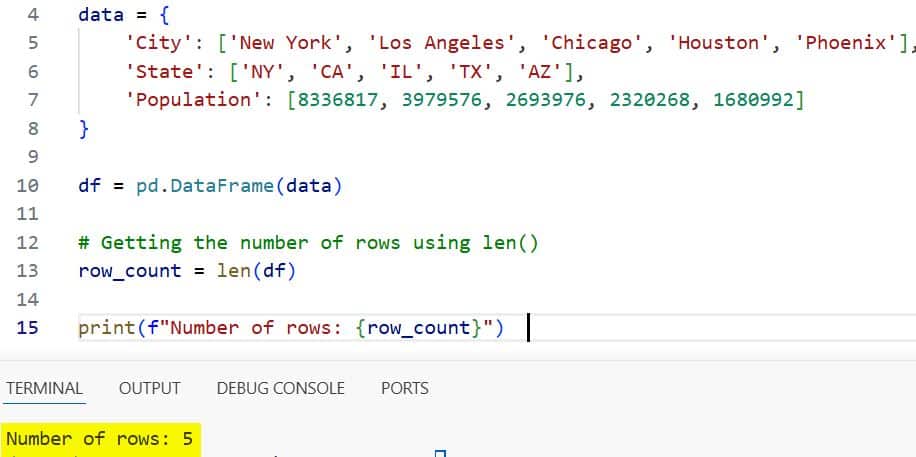

In the example below, I’ll create a DataFrame containing some basic information about major US cities.

```python
import pandas as pd

# Creating a dataset of US cities
data = {
    'City': ['New York', 'Los Angeles', 'Chicago', 'Houston', 'Phoenix'],
    'State': ['NY', 'CA', 'IL', 'TX', 'AZ'],
    'Population': [8336817, 3979576, 2693976, 2320268, 1680992]
}

df = pd.DataFrame(data)

# Getting the number of rows using len()
row_count = len(df)

print(f"Number of rows: {row_count}")
```

I executed the above example code and added the screenshot below.

When you pass the DataFrame to len(), it returns the length of the index, which is the number of rows. Every row is counted, including rows that contain missing values.
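To connect this to the merge check I mentioned at the start, here is a minimal sketch that compares len() before and after a left merge. The `cities` and `regions` DataFrames and their columns are invented for this illustration; `validate='many_to_one'` is an existing `DataFrame.merge` option that raises an error if the right-hand keys are not unique.

```python
import pandas as pd

# Hypothetical frames, invented for this illustration
cities = pd.DataFrame({
    'City': ['New York', 'Los Angeles', 'Chicago'],
    'State': ['NY', 'CA', 'IL'],
})
regions = pd.DataFrame({
    'State': ['NY', 'CA', 'IL'],
    'Region': ['Northeast', 'West', 'Midwest'],
})

rows_before = len(cities)

# A left merge on a unique key should leave the row count unchanged
merged = cities.merge(regions, on='State', how='left', validate='many_to_one')
rows_after = len(merged)

print(f"Rows before: {rows_before}, rows after: {rows_after}")
assert rows_after == rows_before, "Merge changed the number of rows"

# len() works the same way on a filtered DataFrame
west_only = merged[merged['Region'] == 'West']
print(f"Rows in the West region: {len(west_only)}")
```

If the row count grows after a left merge, the usual suspect is duplicate keys in the right-hand table.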

Use the shape Attribute

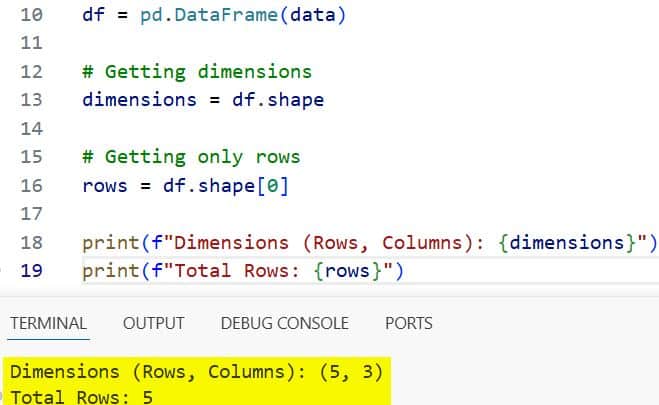

Another reliable method is using the .shape attribute. I find this particularly useful when I want to know both the number of rows and columns at the same time.

The shape attribute returns a tuple where the first element is the number of rows and the second is the number of columns.

```python
import pandas as pd

# Dataset of US tech companies
data = {
    'Company': ['Apple', 'Microsoft', 'Alphabet', 'Amazon', 'Meta'],
    'Headquarters': ['Cupertino', 'Redmond', 'Mountain View', 'Seattle', 'Menlo Park'],
    'Founded': [1976, 1975, 1998, 1994, 2004]
}

df = pd.DataFrame(data)

# Getting dimensions
dimensions = df.shape

# Getting only rows
rows = df.shape[0]

print(f"Dimensions (Rows, Columns): {dimensions}")
print(f"Total Rows: {rows}")
```

I executed the above example code and added the screenshot below.

Since .shape is an attribute and not a method, it doesn’t require parentheses. Its speed is comparable to len(): both read the length of the index instead of scanning the data, so neither gets slower as the DataFrame grows.
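Because .shape is a plain tuple, it can also be unpacked in a single step. The snippet below is a small sketch of that idea; the trimmed-down DataFrame and the variable names `n_rows`, `n_cols`, and `founded` are my own choices for illustration.

```python
import pandas as pd

df = pd.DataFrame({
    'Company': ['Apple', 'Microsoft', 'Alphabet'],
    'Founded': [1976, 1975, 1998],
})

# Unpack the (rows, columns) tuple in one step
n_rows, n_cols = df.shape
print(f"{n_rows} rows x {n_cols} columns")

# A Series has a one-element shape, so [0] still gives the row count
founded = df['Founded']
print(f"Series shape: {founded.shape}")

# Both approaches agree on the row count
assert df.shape[0] == len(df)
```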

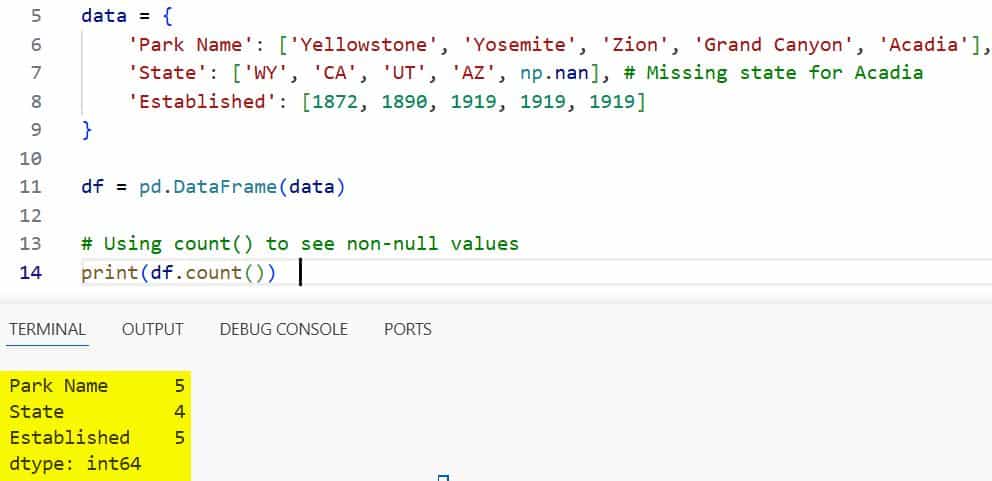

Use the count() Method

Sometimes, I don’t just want the total number of rows; I want to know how many rows actually contain data.

The .count() method is what I use when I suspect there are missing values (NaNs) in my dataset.

By default, .count() returns a Series with the number of non-null values for each column, not a single row count.

```python
import pandas as pd
import numpy as np

# US National Parks dataset with some missing data
data = {
    'Park Name': ['Yellowstone', 'Yosemite', 'Zion', 'Grand Canyon', 'Acadia'],
    'State': ['WY', 'CA', 'UT', 'AZ', np.nan],  # Missing state for Acadia
    'Established': [1872, 1890, 1919, 1919, 1919]
}

df = pd.DataFrame(data)

# Using count() to see non-null values
print(df.count())
```

I executed the above example code and added the screenshot below.

In this case, the ‘State’ column will show a count of 4, while the others show 5. This is a lifesaver when cleaning messy US government data files.
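If what you need is a single number rather than a per-column Series, a few related calls can help. The sketch below reuses the parks data and shows some options I would reach for; the variable names are my own, and which variant fits depends on what "contains data" means for your dataset.

```python
import pandas as pd
import numpy as np

df = pd.DataFrame({
    'Park Name': ['Yellowstone', 'Yosemite', 'Zion', 'Grand Canyon', 'Acadia'],
    'State': ['WY', 'CA', 'UT', 'AZ', np.nan],
    'Established': [1872, 1890, 1919, 1919, 1919],
})

# Non-null count for one column
state_count = df['State'].count()

# Missing values per column (the complement of count())
missing_per_column = df.isna().sum()

# Rows with no missing value in any column
complete_rows = len(df.dropna())

# Non-null values per row instead of per column
values_per_row = df.count(axis=1)

print(f"Non-null states: {state_count}")
print(f"Fully populated rows: {complete_rows}")
print(missing_per_column)
print(values_per_row)
```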

Use len(df.index)

If you take the row count very often, for example inside a loop that processes many DataFrames, the overhead of each call becomes a priority. In my experience, len(df.index) is the fastest way to get the row count.

len(df) calls len(df.index) internally, so going straight to the index skips one layer of method calls.

```python
import pandas as pd

# Large dataset simulation: US ZIP Codes
# For this example, we'll just use a small representative set
data = {'ZipCode': range(10000, 10010)}

df = pd.DataFrame(data)

# The high-performance way
row_count = len(df.index)

print(f"Fast row count: {row_count}")
```

The speed difference is typically well under a microsecond per call and does not grow with the number of rows, so it only adds up when the count is taken many times in a complex data pipeline.
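Rather than taking my word for it, you can measure the three approaches on your own machine. The following is a rough sketch using the standard-library `timeit` module; the one-million-row size and the number of calls are arbitrary choices, the lambda wrappers add a little overhead of their own, and the numbers will vary with your pandas version and hardware.

```python
import timeit
import pandas as pd

# One million rows; the size is arbitrary and chosen only for illustration
df = pd.DataFrame({'ZipCode': range(1_000_000)})

candidates = {
    'len(df)': lambda: len(df),
    'df.shape[0]': lambda: df.shape[0],
    'len(df.index)': lambda: len(df.index),
}

n_calls = 100_000
results = {}
for label, func in candidates.items():
    # Total seconds spent on n_calls invocations
    seconds = timeit.timeit(func, number=n_calls)
    results[label] = seconds
    # Report the average cost of a single call in nanoseconds
    print(f"{label:<14} {seconds / n_calls * 1e9:8.0f} ns per call")
```

Treat the output as a relative comparison only. If all three land within the same order of magnitude, readability is the better tiebreaker.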

Count Rows Based on a Condition

Often, I need to know how many rows meet a specific criterion.

For example, if I am looking at a list of US States, I might want to know how many have a population over 20 million.

I usually combine a boolean filter with len() or .shape[0] to get this number.

```python
import pandas as pd

# Sample US State Population data (in millions)
data = {
    'State': ['California', 'Texas', 'Florida', 'New York', 'Pennsylvania', 'Illinois'],
    'Population': [39.2, 29.1, 21.5, 20.2, 13.0, 12.8]
}

df = pd.DataFrame(data)

# Count states with population > 20 million
high_pop_count = len(df[df['Population'] > 20])

print(f"States with over 20M residents: {high_pop_count}")
```

This approach is flexible and allows you to count specific subsets of your data quickly.
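A variation on the same idea is to sum the boolean mask directly, which avoids building the filtered DataFrame first. This is a sketch of that alternative, not a replacement for the filter approach; the second threshold pair (12 and 21) is made up to demonstrate combining conditions.

```python
import pandas as pd

df = pd.DataFrame({
    'State': ['California', 'Texas', 'Florida', 'New York', 'Pennsylvania', 'Illinois'],
    'Population': [39.2, 29.1, 21.5, 20.2, 13.0, 12.8],
})

# True counts as 1 when summed, so the sum is the number of matching rows
mask = df['Population'] > 20
high_pop_count = int(mask.sum())

# Combine conditions with & (and) or | (or); each condition needs its own parentheses
mid_pop_mask = (df['Population'] > 12) & (df['Population'] < 21)
mid_pop_count = int(mid_pop_mask.sum())

print(f"States with over 20M residents: {high_pop_count}")
print(f"States between 12M and 21M residents: {mid_pop_count}")
```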

Comparison of Methods

| Method | What it Returns | Best For |
| --- | --- | --- |
| len(df) | Total number of rows | General use and readability |
| df.shape[0] | Total number of rows | When you need the column count too |
| df.count() | Non-null values per column | Identifying missing data |
| len(df.index) | Total number of rows | Lowest call overhead in tight loops |

Which Method Should You Use?

For most of my daily tasks, I stick with len(df). It is clean, Pythonic, and easy for others to read.

If I am building a production-level data application where the row count is taken in a hot loop and call overhead matters, I switch to len(df.index).

And of course, if I’m checking for data quality, df.count() is my go-to for spotting missing values.

I hope this tutorial helped you understand the different ways to find the number of rows in a Pandas DataFrame. Each method has its own place depending on whether you need speed, metadata, or a count of valid data points.

In this guide, I’ve shared the techniques I use every day as a developer. Whether you are dealing with a small list of US cities or a massive database of financial transactions, these tools will help you manage your data more effectively.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.