I have often found myself staring at a massive dataset and wondering if a specific variable was actually there.

When you are automating data pipelines for retail or financial reports, assuming a column exists is a quick way to crash your script.

Checking for a column’s presence is one of those small but vital steps that ensures your Python code remains robust and error-free.

In this tutorial, I will show you exactly how to check if a column exists in a Pandas DataFrame using several reliable methods.

The Sample Dataset: US Retail Sales

Before we dive into the methods, let’s create a sample DataFrame. We will use a dataset representing monthly sales for a US-based retail chain.

```python
import pandas as pd

# Creating a sample US Retail Sales DataFrame
data = {
    'Store_ID': [101, 102, 103, 104],
    'State': ['California', 'Texas', 'New York', 'Florida'],
    'Monthly_Revenue': [45000, 38000, 52000, 41000],
    'Employee_Count': [12, 10, 15, 11]
}

df = pd.DataFrame(data)
print(df)
```

Method 1: Use the ‘in’ Keyword (The Most Pythonic Way)

In my experience, the simplest way is usually the best. Using the in operator is the most readable and “Pythonic” approach.

When you write if 'column_name' in df:, Pandas checks the DataFrame's column labels (its column index), not the values stored in the rows.

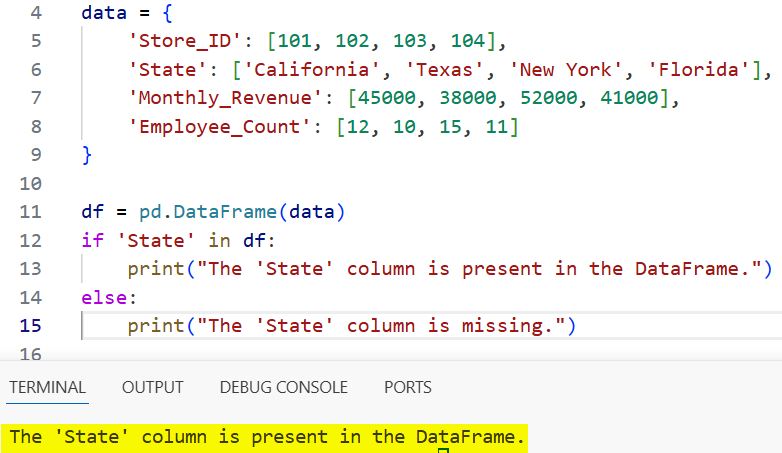

Example: Check for the ‘State’ Column

Suppose we want to check if our dataset includes the ‘State’ information before we perform any geographical analysis.

```python
# Check if 'State' column exists
if 'State' in df:
    print("The 'State' column is present in the DataFrame.")
else:
    print("The 'State' column is missing.")
```

I executed the above example code and added the screenshot below.

I prefer this method because it is incredibly fast and the syntax is very intuitive for anyone who has used Python lists or dictionaries.
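One detail is worth seeing in action: on a DataFrame, the in operator looks only at column labels, never at the cell values. The short sketch below rebuilds a trimmed copy of the sample data so it runs on its own; the variable names sales, label_found, and value_found are only for illustration.

```python
import pandas as pd

# A trimmed-down copy of the sample data so the snippet is self-contained
sales = pd.DataFrame({
    'Store_ID': [101, 102],
    'State': ['California', 'Texas'],
})

label_found = 'State' in sales        # 'State' is a column label
value_found = 'California' in sales   # 'California' is a cell value, not a label

print(label_found)   # True
print(value_found)   # False
```

If you need to test for a value inside a column, check the column itself (for example with .isin() on that Series) rather than the DataFrame.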

Method 2: Use the .columns Attribute

Sometimes I need to be more explicit. Every Pandas DataFrame has a .columns attribute that returns an Index object containing all the column labels.

You can check for a column name within this specific Index object.

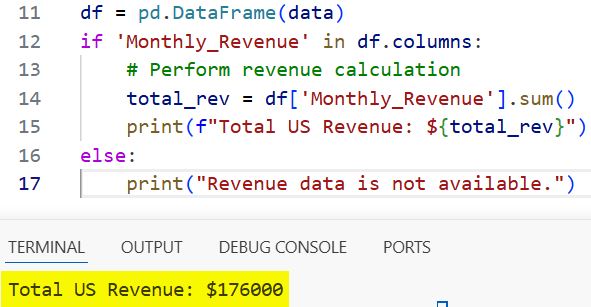

Example: Verify ‘Monthly_Revenue’

```python
# Using df.columns to check for revenue data
if 'Monthly_Revenue' in df.columns:
    # Perform revenue calculation
    total_rev = df['Monthly_Revenue'].sum()
    print(f"Total US Revenue: ${total_rev}")
else:
    print("Revenue data is not available.")
```

I executed the above example code and added the screenshot below.

While this does the same thing as Method 1, it makes it very clear to other developers that you are specifically looking at the column headers.
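To make the equivalence concrete, here is a small sketch that runs both spellings side by side on a present and an absent label. The two-column DataFrame sales and the label 'Tax_Rate' are assumptions made for this illustration.

```python
import pandas as pd

sales = pd.DataFrame({
    'Store_ID': [101, 102],
    'Monthly_Revenue': [45000, 38000],
})

# df.columns is a pandas Index, so membership tests work on it directly
print(type(sales.columns).__name__)  # Index

# Both spellings agree for present and absent labels alike
for label in ['Monthly_Revenue', 'Tax_Rate']:
    via_frame = label in sales
    via_columns = label in sales.columns
    print(label, via_frame, via_columns)
```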

Method 3: Use the .get() Method

The .get() method is a hidden gem that I often use when I want to avoid if-else blocks entirely.

This method works similarly to the .get() method in a Python dictionary. If the column exists, it returns the column; otherwise, it returns None (or a default value you specify).

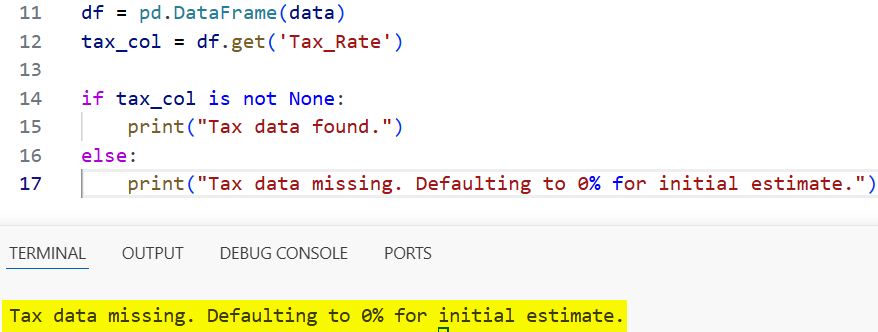

Example: Safely Retrieving ‘Tax_Rate’

In the US retail sector, tax rates vary by state. If the ‘Tax_Rate’ column isn’t in our file yet, we want our code to keep running without an error.

```python
# Try to get the 'Tax_Rate' column, return None if it doesn't exist
tax_col = df.get('Tax_Rate')

if tax_col is not None:
    print("Tax data found.")
else:
    print("Tax data missing. Defaulting to 0% for initial estimate.")
```

I executed the above example code and added the screenshot below.

This is extremely useful when you are dealing with optional columns in a data processing pipeline.
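The default value mentioned earlier lets you drop the if-else block completely. The sketch below assumes a 0.0 fallback rate is acceptable for a first estimate; the names sales, tax_rate, and estimated_tax are illustrative.

```python
import pandas as pd

sales = pd.DataFrame({
    'State': ['California', 'Texas'],
    'Monthly_Revenue': [45000, 38000],
})

# 'Tax_Rate' is absent, so .get() hands back the fallback (0.0) instead of raising
tax_rate = sales.get('Tax_Rate', 0.0)

# Multiplying a Series by the scalar fallback still gives one value per row
estimated_tax = sales['Monthly_Revenue'] * tax_rate
print(estimated_tax.tolist())  # [0.0, 0.0]
```

Keep in mind that the result is a Series when the column exists and a plain scalar when it does not, so this pattern fits best where both types behave the same way, as in the multiplication here.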

Method 4: Check for Multiple Columns at Once

In professional data science projects, you rarely check for just one column. Usually, you need a set of required features, like ‘Store_ID’ and ‘Employee_Count’.

I found that using sets is the most efficient way to handle multiple checks.

Example: Verify Required Reporting Fields

```python
required_columns = {'Store_ID', 'Employee_Count', 'Manager_Name'}
existing_columns = set(df.columns)

# Find which required columns are missing
missing_columns = required_columns - existing_columns

if not missing_columns:
    print("All required columns for the US report are present.")
else:
    print(f"Alert: The following columns are missing: {missing_columns}")
```

This method is a lifesaver when you are validating a CSV file uploaded by a third-party vendor.
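If you run this check in several places, it may be convenient to wrap the set difference in a small helper. The sketch below is one possible shape, not a fixed recipe: the function name missing_columns, the vendor_df frame, and the 'District' label are all made up for the example.

```python
import pandas as pd

def missing_columns(frame, required):
    """Return the required labels that are absent from frame, sorted for stable output."""
    return sorted(set(required) - set(frame.columns))

vendor_df = pd.DataFrame({'Store_ID': [101], 'Employee_Count': [12]})

print(missing_columns(vendor_df, ['Store_ID', 'Employee_Count']))
# []
print(missing_columns(vendor_df, ['Store_ID', 'Manager_Name', 'District']))
# ['District', 'Manager_Name']

# For a plain yes/no answer, set.issubset accepts the column Index directly
print({'Store_ID', 'Employee_Count'}.issubset(vendor_df.columns))  # True
```

Sorting the result keeps log messages stable, since Python sets do not guarantee any particular order.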

Method 5: Use the .isin() Method on Columns

If you want a more “Pandas-native” way to check for multiple columns, you can use the .isin() method on the Index object.

Example: Check a List of US Geographic Markers

```python
# List of columns we are looking for
cols_to_check = ['State', 'Zip_Code', 'City']

# Wrap the list in an Index so .isin() returns a boolean array,
# one entry per requested column
found_mask = pd.Index(cols_to_check).isin(df.columns)

print("Status of requested columns:")
for col, found in zip(cols_to_check, found_mask):
    print(f"{col}: {'Found' if found else 'Not Found'}")
```

Method 6: Use a try-except Block

I don’t use this as often for simple checks, but it is useful when you are performing an operation and want to handle the error immediately if it fails.

In Python, it is often “easier to ask for forgiveness than permission” (EAFP).

Example: Calculate Revenue per Employee

```python
try:
    # Attempting an operation that requires specific columns
    df['Rev_Per_Employee'] = df['Monthly_Revenue'] / df['Employee_Count']
    print("Calculation successful.")
except KeyError as e:
    print(f"Error: The column {e} is missing from the dataset.")
```

This approach is best when the absence of a column is an exceptional case that should stop the process.
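When the missing column really should stop the process, one option is to catch the KeyError and re-raise it with more context instead of printing. The sketch below shows that idea; the function name revenue_per_employee and the choice of ValueError are assumptions for illustration, not something Pandas prescribes.

```python
import pandas as pd

def revenue_per_employee(frame):
    """Return revenue per employee, or raise ValueError naming the missing column."""
    try:
        return frame['Monthly_Revenue'] / frame['Employee_Count']
    except KeyError as err:
        # Re-raise with context so the caller sees which input was incomplete
        raise ValueError(f"Input is missing required column {err}") from err

complete = pd.DataFrame({'Monthly_Revenue': [45000], 'Employee_Count': [12]})
print(revenue_per_employee(complete).tolist())  # [3750.0]

incomplete = pd.DataFrame({'Monthly_Revenue': [45000]})
try:
    revenue_per_employee(incomplete)
except ValueError as err:
    message = str(err)
    print(message)
```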

Why Checking for Columns is Important in Data Engineering

Working with data from different US states often means dealing with inconsistent schemas.

For instance, a dataset from a New York branch might include ‘Sales_Tax’, while a dataset from Oregon (which has no state sales tax) might omit it entirely.

If your script tries to access df['Sales_Tax'] on the Oregon data, it will raise a KeyError.

By implementing one of the checks above, you can:

- Prevent Crashes: Your automation scripts won’t stop midway.

- Data Validation: Ensure the input file meets the required format.

- Default Values: Assign zeros or “N/A” if a column is missing.
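The "default values" idea can be sketched with the New York and Oregon scenario described above. The store IDs and the 0.04 rate are made-up illustration values, and the frame names new_york, oregon, and combined are hypothetical.

```python
import pandas as pd

new_york = pd.DataFrame({'Store_ID': [201], 'Sales_Tax': [0.04]})
oregon = pd.DataFrame({'Store_ID': [301]})  # no 'Sales_Tax' column at all

# Add the column with a default only when it is absent
if 'Sales_Tax' not in oregon:
    oregon['Sales_Tax'] = 0.0

# Both frames now share the same schema and can be stacked safely
combined = pd.concat([new_york, oregon], ignore_index=True)
print(combined['Sales_Tax'].tolist())  # [0.04, 0.0]
```

Filling the default before combining keeps the column numeric throughout, rather than leaving NaN gaps to clean up afterwards.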

Case Study: Validate a US Payroll File

Let’s look at a full example where we combine these techniques to validate a payroll file before processing.

```python
import pandas as pd

# Incoming payroll data
payroll_data = {
    'Employee_Name': ['Alice Smith', 'Bob Jones'],
    'Base_Salary': [85000, 92000],
    'State_Work_Location': ['WA', 'TX']
}

df_payroll = pd.DataFrame(payroll_data)

def validate_payroll_schema(df):
    # We strictly need these for US compliance
    essential_fields = ['Employee_Name', 'Base_Salary', 'SSN_Last4']

    for field in essential_fields:
        if field not in df:
            print(f"CRITICAL ERROR: {field} is missing. Cannot process payroll.")
            return False
    return True

# Running the validation
if validate_payroll_schema(df_payroll):
    print("Proceeding to payment processing.")
else:
    print("Payroll processing aborted due to missing columns.")
```

Compare the Methods: Performance and Use Cases

| Method | Best For | Readability |
|---|---|---|
| in df | Quick, single column checks | Excellent |
| .columns | Explicit index checking | Good |
| .get() | Avoiding errors with optional columns | Good |
| set() | Checking multiple columns at once | Moderate |
| try-except | Handling missing columns during operations | Moderate |

In my daily workflow, I stick to the in df check for 90% of my tasks. It is clean and never fails to do the job.

Pro-Tip: Case Sensitivity and Extra Spaces

One thing that tripped me up early in my career was case sensitivity. In Pandas, ‘State’ and ‘state’ are different columns.

Additionally, sometimes CSV files have leading or trailing spaces in their headers (e.g., " State").

If you suspect your data is messy, I recommend cleaning the column names first:

```python
# Strip spaces and convert to lowercase for easier checking
df.columns = df.columns.str.strip().str.lower()

if 'state' in df:
    print("Found 'state' after cleaning column names.")
```

This small step has saved me hours of debugging time when dealing with manual entry data from various US regional offices.
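If you would rather not rename the columns, for example because downstream code expects the original headers, a tolerant lookup is another option. The helper below is a minimal sketch under that assumption; find_column and the messy frame are hypothetical names.

```python
import pandas as pd

def find_column(frame, name):
    """Return the actual label matching name, ignoring case and outer spaces, else None."""
    wanted = name.strip().lower()
    for label in frame.columns:
        if str(label).strip().lower() == wanted:
            return label
    return None

messy = pd.DataFrame({' State': ['Texas'], 'STORE_ID': [102]})

print(repr(find_column(messy, 'state')))     # ' State'
print(repr(find_column(messy, 'store_id')))  # 'STORE_ID'
print(find_column(messy, 'zip_code'))        # None
```

The helper returns the label exactly as it appears in the DataFrame, so you can pass the result straight to messy[label] without touching the headers.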

I hope you found this tutorial helpful!

Checking if a column exists is a fundamental skill that will make your Pandas code much more resilient. Whether you are building a financial dashboard or a machine learning model, always verify your inputs.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.