When I first started working with large datasets, I often felt overwhelmed by the sheer volume of repetitive information.

One of the first things I learned to do, and something I still do every single day, is to check for unique values.

It helps you understand the diversity of your data and spot potential data-entry errors before they become a problem.

In this tutorial, I will show you exactly how to count unique values in a Pandas column using the methods I’ve relied on throughout my career.

Create Our Sample US Tech Dataset

Before we dive into the methods, let’s set up a dataset we can work with.

I’ve put together a small DataFrame representing various tech companies, the US states where they are headquartered, and their primary sectors.

```python
import pandas as pd
import numpy as np

# Creating a dataset of US Tech Companies
data = {
    'Company': ['Apple', 'Google', 'Meta', 'Apple', 'Microsoft', 'Google', 'Amazon', 'Netflix', 'Tesla', 'SpaceX', 'Apple'],
    'State': ['California', 'California', 'California', 'California', 'Washington', 'California', 'Washington', 'California', 'Texas', 'Texas', 'California'],
    'Sector': ['Hardware', 'Search', 'Social Media', 'Software', 'Cloud', 'Ads', 'E-commerce', 'Streaming', 'Automotive', 'Aerospace', 'Hardware'],
    'Employee_Rating': [4.5, 4.2, 4.0, 4.5, 4.3, 4.2, 3.8, 4.6, 3.9, 4.7, np.nan]
}

df = pd.DataFrame(data)

print(df)
```

In this example, we have some repeats (like Apple and Google) and even a missing value (NaN) in the ratings. This is exactly what real-world data looks like.

1. Use the nunique() Method

The most direct way to get a count of unique values is the nunique() method. I find myself using this more than any other method because it is concise and purpose-built.

When you call nunique() on a specific column, it returns an integer representing how many distinct entries exist.

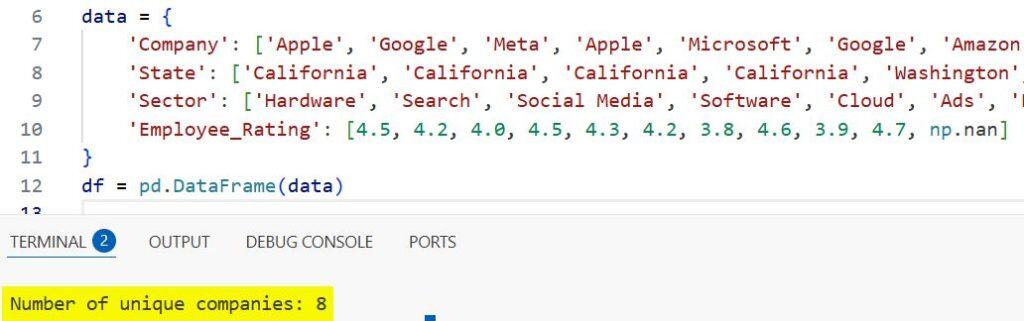

# Counting unique companies in our list

unique_companies = df['Company'].nunique()

print(f"Number of unique companies: {unique_companies}")I executed the above example code and added the screenshot below.

In the code above, even though “Apple” appears three times (and “Google” twice), nunique() counts each company only once, so it reports 8 unique companies.

From my experience, this is the cleanest way to get a high-level summary of your categorical data.
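Since nunique() only counts exact matches, it also helps catch the data-entry errors mentioned at the start: inconsistent spellings inflate the count. Here is a minimal sketch on a small hypothetical Series (not our dataset), assuming that letter case and surrounding whitespace are the only differences you want to ignore:

```python
import pandas as pd

# Hypothetical messy entries: the same company typed three different ways
companies = pd.Series(["Apple", "apple", "Apple ", "Google", "Google"])

# nunique() compares values exactly, so case and trailing spaces count as different
raw_count = companies.nunique()

# Normalizing the text first (strip whitespace, lowercase) merges the variants
clean_count = companies.str.strip().str.lower().nunique()

print(f"Raw unique count: {raw_count}")        # Raw unique count: 4
print(f"Cleaned unique count: {clean_count}")  # Cleaned unique count: 2
```

A gap between the raw and cleaned counts is a quick signal that a column needs standardizing before you analyze it.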

2. Handle Missing Values with nunique()

One thing that tripped me up early in my career was how Pandas handles missing values (NaNs).

By default, nunique() does not count NaNs as a unique value. However, sometimes you need to know if there is a “missing” category.

To include NaN in your count, set the dropna parameter to False.

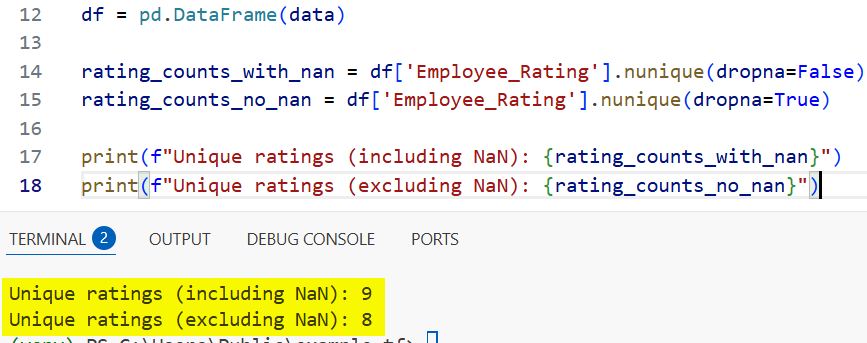

# Counting unique ratings, including the missing value

rating_counts_with_nan = df['Employee_Rating'].nunique(dropna=False)

rating_counts_no_nan = df['Employee_Rating'].nunique(dropna=True)

print(f"Unique ratings (including NaN): {rating_counts_with_nan}")

print(f"Unique ratings (excluding NaN): {rating_counts_no_nan}")I executed the above example code and added the screenshot below.

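With our sample data, the first count is 9 and the second is 8, because the single NaN in Employee_Rating counts as one extra distinct value. I usually keep dropna=True (the default) unless I am specifically looking for data quality gaps where “missing” is a significant data point.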
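One detail worth knowing: dropna=False adds at most one to the count, because every NaN is treated as the same “missing” value. If you want to know how many rows are missing rather than whether a missing category exists, isna().sum() is the better tool. A small hypothetical Series shows all three numbers side by side:

```python
import numpy as np
import pandas as pd

# Hypothetical ratings with two missing entries
ratings = pd.Series([4.5, 4.5, np.nan, 3.9, np.nan])

with_nan = ratings.nunique(dropna=False)  # 4.5, 3.9 and one NaN -> 3
without_nan = ratings.nunique()           # default dropna=True -> 2
missing = ratings.isna().sum()            # number of missing rows -> 2

print(with_nan, without_nan, missing)     # 3 2 2
```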

3. Count Unique Values Across the Entire DataFrame

Sometimes you don’t just want to look at one column. You might want a bird’s-eye view of every column in your DataFrame.

If you call nunique() on the entire DataFrame object, Pandas will return a Series whose index holds the column names and whose values are the counts of unique items in each column.

```python
# Getting unique counts for all columns at once
df_unique_summary = df.nunique()

print("Summary of unique values per column:")
print(df_unique_summary)
```

I frequently use this during the initial exploration phase of a project. It tells me instantly which columns are “IDs” (very high unique count) and which are “Categories” (low unique count).
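To make the ID-versus-category check a little more systematic, you can divide each column's unique count by the number of rows. The sketch below uses a hypothetical orders table, and the 0.5 cut-off for “categorical” is an arbitrary assumption you would tune for your own data:

```python
import pandas as pd

# Hypothetical order table: one ID-like column and two categorical columns
orders = pd.DataFrame({
    "order_id": [101, 102, 103, 104, 105, 106],
    "state": ["California", "Texas", "California", "Washington", "Texas", "California"],
    "status": ["shipped", "shipped", "pending", "shipped", "pending", "shipped"],
})

# Unique count per column divided by the number of rows
uniqueness = orders.nunique() / len(orders)

# A ratio of 1.0 means every row has a different value (ID-like column);
# the 0.5 cut-off for "categorical" is an arbitrary assumption for this sketch
id_like = uniqueness[uniqueness == 1.0].index.tolist()
categorical = uniqueness[uniqueness <= 0.5].index.tolist()

print(uniqueness.sort_values(ascending=False))
print("ID-like columns:", id_like)          # ['order_id']
print("Categorical columns:", categorical)  # ['state', 'status']
```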

4. Use value_counts() for Frequency Distribution

While nunique() gives you a single number, value_counts() gives you the full picture. It shows you every unique value and exactly how many times it appears.

I prefer this method when I need to see which unique values are the most dominant in my dataset.

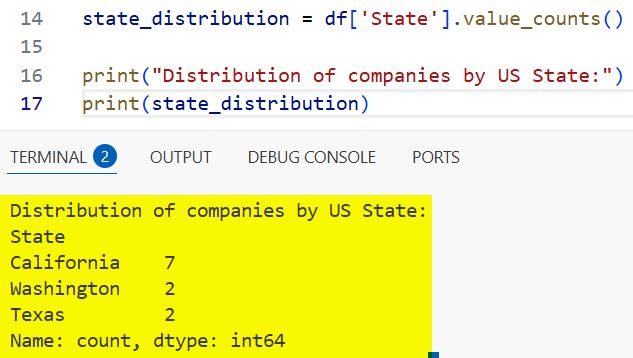

# Seeing how many times each state appears in our dataset

state_distribution = df['State'].value_counts()

print("Distribution of companies by US State:")

print(state_distribution)I executed the above example code and added the screenshot below.

This is incredibly useful for spotting data imbalances. For instance, if you see “California” 50 times and “Texas” only once, you know your analysis might be biased toward West Coast trends.
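If you care about proportions rather than raw counts, value_counts() accepts normalize=True. This sketch rebuilds just the State values from our sample dataset so it runs on its own:

```python
import pandas as pd

# Same State values as the sample dataset above
states = pd.Series(["California", "California", "California", "California", "Washington",
                    "California", "Washington", "California", "Texas", "Texas", "California"])

counts = states.value_counts()                # absolute counts per state
shares = states.value_counts(normalize=True)  # fractions of all rows, summing to 1.0

print(counts)
print(shares.round(3))
```

Here California accounts for 7 of the 11 rows (about 64%). value_counts() also takes dropna=False if you want missing values listed as their own row.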

5. The len() and unique() Combination

Before nunique() was the standard, many of us used a combination of the unique() method and the Python len() function.

The unique() method returns an array of the unique values themselves. Wrapping that in len() gives you the count.

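```python
# The 'Old School' way of counting unique values
count = len(df['Sector'].unique())

print(f"Number of unique sectors: {count}")
```

Is there a reason to use this over nunique()? Generally, no. Keep in mind, though, that unique() keeps NaN, so len(df['col'].unique()) matches nunique(dropna=False) rather than the default nunique().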

However, if you already need the list of unique values for a loop or a dropdown menu, it makes sense to just take the length of that list rather than calling a second function.
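A small hypothetical Series with one NaN shows how len(unique()) and nunique() can disagree:

```python
import numpy as np
import pandas as pd

ratings = pd.Series([4.5, 4.2, 4.5, np.nan])

uniques = ratings.unique()                 # array([4.5, 4.2, nan]); unique() keeps NaN
len_count = len(uniques)                   # 3
default_count = ratings.nunique()          # 2, NaN dropped by default
nan_count = ratings.nunique(dropna=False)  # 3, same as len(unique())

print(len_count, default_count, nan_count)  # 3 2 2 is NOT what you get: it prints 3 2 3
```

If you reuse the unique() array but want the nunique() behavior, drop missing values first, for example with ratings.dropna().unique().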

6. Count Unique Values per Group (groupby)

In many professional scenarios, you need to know the unique count within specific categories.

For example, you might want to know how many unique sectors exist per state. For this, we combine groupby() with nunique().

```python
# Counting unique sectors for each US State
grouped_uniques = df.groupby('State')['Sector'].nunique()

print("Unique sectors represented in each state:")
print(grouped_uniques)
```

This is a powerful technique for regional analysis. I often use this when comparing market diversity across different territories or time periods.
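When you need unique counts for more than one column per group, named aggregation in agg() produces them in a single call. The output column names unique_companies and unique_sectors are my own choice for this sketch, and the DataFrame repeats the relevant columns from our sample so the block runs on its own:

```python
import pandas as pd

# The Company, State and Sector columns from the sample dataset above
df = pd.DataFrame({
    "Company": ["Apple", "Google", "Meta", "Apple", "Microsoft", "Google",
                "Amazon", "Netflix", "Tesla", "SpaceX", "Apple"],
    "State": ["California", "California", "California", "California", "Washington",
              "California", "Washington", "California", "Texas", "Texas", "California"],
    "Sector": ["Hardware", "Search", "Social Media", "Software", "Cloud", "Ads",
               "E-commerce", "Streaming", "Automotive", "Aerospace", "Hardware"],
})

# Named aggregation: each keyword becomes an output column built from
# a (source column, aggregation function) pair
summary = df.groupby("State").agg(
    unique_companies=("Company", "nunique"),
    unique_sectors=("Sector", "nunique"),
)

print(summary)
```

For California this gives 4 unique companies and 6 unique sectors, while Texas and Washington each have 2 of both.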

Performance Tip for Large Datasets

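When you are dealing with millions of rows, common in US financial or census data, performance can become an issue.

For a one-off count, nunique() is already quite fast, because it finds the distinct values with a single hash-based pass over the column.

However, if you count unique values on the same string columns again and again and your script starts slowing down, consider converting those object columns to the category dtype.

A categorical column stores each distinct string only once and represents the rows as small integer codes, so unique counts can work on those codes instead of on raw strings. The conversion itself costs a pass over the data, so it pays off mainly when you reuse the converted column.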

```python
# Optimizing a column for faster unique counting
df['State'] = df['State'].astype('category')

print(f"Optimized unique count: {df['State'].nunique()}")
```

In my experience, this simple change can noticeably reduce memory usage and speed up your analysis, especially on string columns with only a handful of distinct values.
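If you want to check the memory side of this on your own machine, the sketch below compares memory_usage(deep=True) before and after the conversion on a hypothetical repetitive column, and confirms that the unique count does not change. I'm not quoting byte counts or timings because they depend on your pandas version and string dtype. It also shows one categorical quirk: nunique() counts the values that actually occur, not every category declared in the dtype.

```python
import pandas as pd

# Hypothetical large, repetitive column: three state names repeated 100,000 times
states = pd.Series(["California", "Texas", "Washington"] * 100_000)
states_cat = states.astype("category")

# deep=True measures the string contents, not just the references to them
mem_original = states.memory_usage(deep=True)
mem_category = states_cat.memory_usage(deep=True)

# The unique count is the same either way
assert states.nunique() == states_cat.nunique() == 3

print(f"Original: {mem_original:,} bytes")
print(f"Category: {mem_category:,} bytes")

# nunique() on a categorical counts values that actually occur,
# not every category declared in the dtype ("Oregon" is never used)
declared = pd.CategoricalDtype(["California", "Texas", "Washington", "Oregon"])
observed = pd.Series(["California", "Texas", "California"], dtype=declared)
print(observed.nunique(), len(observed.cat.categories))  # 2 4
```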

Summary of Key Methods

| Method | What it returns | Best used when… |
| --- | --- | --- |
| df['col'].nunique() | Integer | You need a quick count of distinct items. |
| df['col'].value_counts() | Series (Value: Count) | You need to see the frequency of each item. |
| df.nunique() | Series (Col: Count) | You want a summary of the whole DataFrame. |
| df.groupby('x')['y'].nunique() | Series | You need unique counts within categories. |

Understanding how to count unique values is a fundamental skill in data science.

I hope this guide helps you navigate your data more efficiently. Whether you are cleaning data or building complex models, these methods will serve as the backbone of your exploratory process.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.