I remember working on a data migration project for a retail chain based in Chicago. I had twelve different CSV files, each representing a month of sales data across various US states.

Combining these manually would have been a nightmare, and that is where the Pandas concat() function saved the day.

In my years of developing Python applications, I have found that merging datasets is one of the most frequent tasks you will face.

Whether you are combining regional reports or appending new user logs, you need a method that is both fast and reliable.

In this tutorial, I will show you exactly how to use the pd.concat() function to join DataFrames effectively.

The Basic Syntax of pd.concat()

Before we dive into the specific scenarios, let’s look at the basic structure of the function.

I usually think of concat() as a way to “stack” DataFrames, either on top of each other or side by side.

Here is the standard syntax I use:

pd.concat(objs, axis=0, join='outer', ignore_index=False, keys=None)

The objs parameter is simply a list or dictionary of the DataFrames you want to combine.

The axis parameter is the most important one to remember; 0 is for rows (vertical) and 1 is for columns (horizontal).
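
To make the effect of axis concrete, here is a minimal sketch using two tiny, made-up DataFrames (the names left and right are only for illustration). It compares the shape of the result for each axis value.

```python
import pandas as pd

# Two tiny, made-up frames used only to show how axis changes the result shape
left = pd.DataFrame({"A": [1, 2], "B": [3, 4]})
right = pd.DataFrame({"A": [5, 6], "B": [7, 8]})

stacked = pd.concat([left, right], axis=0)       # rows are appended
side_by_side = pd.concat([left, right], axis=1)  # columns are appended

print(stacked.shape)       # (4, 2)
print(side_by_side.shape)  # (2, 4)
```

With axis=0 the row count grows, while with axis=1 the column count grows and the column names A and B each appear twice.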

Set Up Our US Sales Data

To make this realistic, let’s create two DataFrames representing sales from different US regions.

I will use these examples throughout the tutorial so you can see the results clearly.

import pandas as pd

# Sales data for the East Coast (New York, Boston, Philadelphia)
east_coast_sales = pd.DataFrame({
    'City': ['New York', 'Boston', 'Philadelphia'],
    'Revenue': [450000, 275000, 190000],
    'Store_ID': [101, 102, 103]
})

# Sales data for the West Coast (Los Angeles, San Francisco, Seattle)
west_coast_sales = pd.DataFrame({
    'City': ['Los Angeles', 'San Francisco', 'Seattle'],
    'Revenue': [520000, 480000, 310000],
    'Store_ID': [201, 202, 203]
})

print("East Coast Data:")
print(east_coast_sales)
print("\nWest Coast Data:")
print(west_coast_sales)

Method 1: Concatenate DataFrames Vertically (Default)

This is the most common task I perform when I receive periodic data updates. If you have two DataFrames with the same column names, you can stack them vertically.

I simply pass the DataFrames as a list to the concat function.

# Combining the East and West coast sales
national_sales = pd.concat([east_coast_sales, west_coast_sales])
print(national_sales)

You can refer to the screenshot below to see the output.

When I run this, Pandas keeps the original indices from both DataFrames. I have found that this can sometimes cause issues if you try to select data by index later.
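
Here is a small sketch of the kind of issue I mean, using the same East and West Coast data (redefined so the snippet runs on its own). Because both source frames are labeled 0, 1, 2, a label-based lookup matches more than one row.

```python
import pandas as pd

east_coast_sales = pd.DataFrame({
    "City": ["New York", "Boston", "Philadelphia"],
    "Revenue": [450000, 275000, 190000],
    "Store_ID": [101, 102, 103],
})
west_coast_sales = pd.DataFrame({
    "City": ["Los Angeles", "San Francisco", "Seattle"],
    "Revenue": [520000, 480000, 310000],
    "Store_ID": [201, 202, 203],
})

national_sales = pd.concat([east_coast_sales, west_coast_sales])

# The original labels from both frames are kept as-is
print(national_sales.index.tolist())  # [0, 1, 2, 0, 1, 2]

# Label-based lookup now matches two rows, one from each source frame
rows_labeled_zero = national_sales.loc[0]
print(rows_labeled_zero["City"].tolist())  # ['New York', 'Los Angeles']

# Position-based lookup is unaffected by the repeated labels
print(national_sales.iloc[3]["City"])  # Los Angeles
```

Code that expects .loc[0] to return a single row will get a two-row DataFrame instead, which is easy to miss.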

To fix this, I usually use the ignore_index parameter.

Use ignore_index to Clean the Result

If you don’t care about the original row numbers, set ignore_index=True. This creates a fresh, continuous index from 0 to the end of the new DataFrame.

# Concatenating with a fresh index
clean_national_sales = pd.concat([east_coast_sales, west_coast_sales], ignore_index=True)
print(clean_national_sales)

I highly recommend doing this unless the original index carries a specific meaning, like a Date or a Social Security Number.

Method 2: Concatenate DataFrames Horizontally (By Columns)

Sometimes, I have the same entities but different sets of information about them.

For example, imagine you have a list of US Tech companies and their Stock Symbols in one table, and their Market Cap in another.

To join them side-by-side, you change the axis to 1.

# Company basic info
tech_firms = pd.DataFrame({
    'Company': ['Apple', 'Microsoft', 'NVIDIA'],
    'Ticker': ['AAPL', 'MSFT', 'NVDA']
}, index=[0, 1, 2])

# Company financial info
market_stats = pd.DataFrame({
    'Market_Cap_Trillions': [2.8, 3.1, 2.2],
    'HQ_State': ['California', 'Washington', 'California']
}, index=[0, 1, 2])

# Concatenating horizontally
full_tech_report = pd.concat([tech_firms, market_stats], axis=1)
print(full_tech_report)

I use this method frequently when I am building a feature set for a machine learning model. It is a very efficient way to attach new features to an existing dataset.
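
One caveat worth sketching: with axis=1, rows are paired by index label, not by position. The example below uses a hypothetical shifted_stats frame whose index is deliberately off by one to show what happens when the labels do not line up, and one possible way to pair rows by position instead.

```python
import pandas as pd

tech_firms = pd.DataFrame({
    "Company": ["Apple", "Microsoft", "NVIDIA"],
    "Ticker": ["AAPL", "MSFT", "NVDA"],
}, index=[0, 1, 2])

# Hypothetical frame whose index labels do not match tech_firms
shifted_stats = pd.DataFrame({
    "Market_Cap_Trillions": [2.8, 3.1, 2.2],
}, index=[1, 2, 3])

# Rows are aligned on labels, so the result covers the union of labels 0 to 3
misaligned = pd.concat([tech_firms, shifted_stats], axis=1)
print(misaligned.shape)  # (4, 3)

# Resetting both indices first pairs the rows by position instead
by_position = pd.concat(
    [tech_firms.reset_index(drop=True), shifted_stats.reset_index(drop=True)],
    axis=1,
)
print(by_position.shape)  # (3, 3)
```

Pairing by position is only safe when you are sure both frames list the same entities in the same order.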

Method 3: Handle DataFrames with Different Columns (Join Logic)

In the real world, your data sources are rarely perfect matches. I often encounter situations where one DataFrame has a column that the other is missing.

By default, pd.concat uses an “Outer” join, which keeps every column from both sets and fills the gaps with NaN (Not a Number).

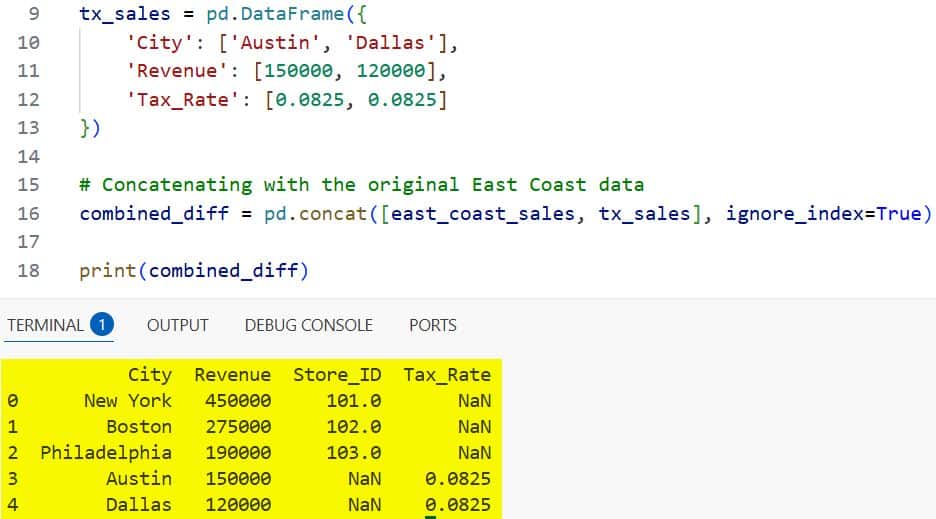

# DataFrame with an extra 'Tax_Rate' column
tx_sales = pd.DataFrame({
    'City': ['Austin', 'Dallas'],
    'Revenue': [150000, 120000],
    'Tax_Rate': [0.0825, 0.0825]
})

# Concatenating with the original East Coast data
combined_diff = pd.concat([east_coast_sales, tx_sales], ignore_index=True)
print(combined_diff)

You can refer to the screenshot below to see the output.

If you only want to keep the columns that appear in both DataFrames, you can use join='inner'.

I find this useful when I want a clean dataset without any missing values.

# Only keeping common columns
common_columns_only = pd.concat([east_coast_sales, tx_sales], join='inner', ignore_index=True)
print(common_columns_only)

In this case, the ‘Tax_Rate’ column is discarded because it wasn’t in the East Coast dataset, and the ‘Store_ID’ column is discarded because it wasn’t in the Texas dataset. Only ‘City’ and ‘Revenue’ remain.
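
To compare the two join modes side by side, here is a short sketch that rebuilds the same two frames and inspects the resulting columns and the number of gaps the outer join had to fill.

```python
import pandas as pd

east_coast_sales = pd.DataFrame({
    "City": ["New York", "Boston", "Philadelphia"],
    "Revenue": [450000, 275000, 190000],
    "Store_ID": [101, 102, 103],
})
tx_sales = pd.DataFrame({
    "City": ["Austin", "Dallas"],
    "Revenue": [150000, 120000],
    "Tax_Rate": [0.0825, 0.0825],
})

outer = pd.concat([east_coast_sales, tx_sales], ignore_index=True)
inner = pd.concat([east_coast_sales, tx_sales], join="inner", ignore_index=True)

print(outer.columns.tolist())  # ['City', 'Revenue', 'Store_ID', 'Tax_Rate']
print(inner.columns.tolist())  # ['City', 'Revenue']

# Count the gaps that the outer join filled with NaN in each mismatched column
print(int(outer["Store_ID"].isna().sum()))  # 2 (the Texas rows)
print(int(outer["Tax_Rate"].isna().sum()))  # 3 (the East Coast rows)
```

Note that filling Store_ID with NaN also turns that integer column into floats in the outer result, which is worth checking before you use it as an ID.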

Method 4: Add Keys to Identify Data Sources

When I combine data from different US states, I sometimes lose track of where a row came from.

To prevent this, I use the keys parameter to create a “MultiIndex.” This allows me to label each chunk of data while keeping it in a single DataFrame.

# Labeling the data by region
labeled_data = pd.concat([east_coast_sales, west_coast_sales], keys=['East', 'West'])
print(labeled_data)

I find this incredibly helpful for debugging or when I need to perform a groupby operation later. You can easily pull out all “East” records without having to filter the entire dataset manually.
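
Here is a sketch of how that selection and grouping might look. The level names Region and Row are my own choice for illustration, passed through the optional names parameter of pd.concat.

```python
import pandas as pd

east_coast_sales = pd.DataFrame({
    "City": ["New York", "Boston", "Philadelphia"],
    "Revenue": [450000, 275000, 190000],
    "Store_ID": [101, 102, 103],
})
west_coast_sales = pd.DataFrame({
    "City": ["Los Angeles", "San Francisco", "Seattle"],
    "Revenue": [520000, 480000, 310000],
    "Store_ID": [201, 202, 203],
})

labeled_data = pd.concat(
    [east_coast_sales, west_coast_sales],
    keys=["East", "West"],
    names=["Region", "Row"],  # illustrative names for the two index levels
)

# Select every row that came from the East frame via the outer index level
east_only = labeled_data.loc["East"]
print(east_only["City"].tolist())  # ['New York', 'Boston', 'Philadelphia']

# Aggregate per source by grouping on the named index level
revenue_by_region = labeled_data.groupby(level="Region")["Revenue"].sum()
print(int(revenue_by_region["East"]))  # 915000
print(int(revenue_by_region["West"]))  # 1310000

# Turn the region label into an ordinary column if that is easier to work with
flat = labeled_data.reset_index(level="Region")
print(flat.columns.tolist())  # ['Region', 'City', 'Revenue', 'Store_ID']
```

Moving the label into a regular column with reset_index is handy when the data is headed for a CSV export or a plotting library that does not expect a MultiIndex.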

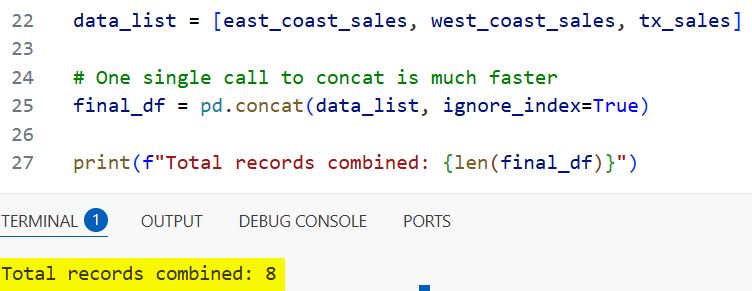

Method 5: Concatenate a List of Many DataFrames

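I have seen many developers use a for loop to append DataFrames one by one. Please, do not do this. It is extremely slow because every step copies all of the data accumulated so far into a brand-new DataFrame, so the total work grows much faster than the number of pieces. (The old DataFrame.append method, which encouraged this pattern, was removed in pandas 2.0.)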

Instead, I collect all my DataFrames into a list first, and then call concat just once.

# Imagine we have many CSV files for different US states
# I collect them into a list first
data_list = [east_coast_sales, west_coast_sales, tx_sales]

# One single call to concat is much faster
final_df = pd.concat(data_list, ignore_index=True)
print(f"Total records combined: {len(final_df)}")

You can refer to the screenshot below to see the output.

I once reduced the processing time of a script from ten minutes to thirty seconds just by making this change.
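
For the many-CSV-files case, the pattern might look like the sketch below. The file names (sales_01.csv and so on), the column names, and the temporary folder are all made up so the snippet can run end to end; in a real project you would point glob at your own directory.

```python
import glob
import os
import tempfile

import pandas as pd

# Write three small, made-up monthly files so the sketch is self-contained
tmp_dir = tempfile.mkdtemp()
for month, revenue in [("01", 100), ("02", 200), ("03", 300)]:
    pd.DataFrame({"Month": [month], "Revenue": [revenue]}).to_csv(
        os.path.join(tmp_dir, f"sales_{month}.csv"), index=False
    )

# Collect every matching file, read each one, then concatenate exactly once
csv_paths = sorted(glob.glob(os.path.join(tmp_dir, "sales_*.csv")))
frames = [pd.read_csv(path) for path in csv_paths]
all_sales = pd.concat(frames, ignore_index=True)

print(len(all_sales))              # 3
print(all_sales["Revenue"].sum())  # 600
```

Sorting the paths keeps the row order predictable, since glob does not guarantee any particular order.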

Troubleshoot Duplicate Indices

One thing I always check for after a concatenation is duplicate indices. If you don’t use ignore_index=True, you might end up with multiple rows labeled “0”.

I use the verify_integrity parameter if I want the code to raise a ValueError and alert me when there are duplicates.

try:
    # This will raise an error if duplicate indices exist
    pd.concat([east_coast_sales, west_coast_sales], verify_integrity=True)
except ValueError as e:
    print(f"Error caught: {e}")

In my experience, it is better to catch these errors early than to have weird bugs in your analysis later.
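
If you would rather inspect the duplicates than raise an error, one possible approach is to check the index after the fact. This is a sketch using the standard Index.is_unique and Index.duplicated helpers, with the same sales data redefined so it runs on its own.

```python
import pandas as pd

east_coast_sales = pd.DataFrame({
    "City": ["New York", "Boston", "Philadelphia"],
    "Revenue": [450000, 275000, 190000],
    "Store_ID": [101, 102, 103],
})
west_coast_sales = pd.DataFrame({
    "City": ["Los Angeles", "San Francisco", "Seattle"],
    "Revenue": [520000, 480000, 310000],
    "Store_ID": [201, 202, 203],
})

combined = pd.concat([east_coast_sales, west_coast_sales])

print(combined.index.is_unique)  # False

# keep=False marks every occurrence of a repeated label, not just the later ones
duplicate_rows = combined[combined.index.duplicated(keep=False)]
print(len(duplicate_rows))  # 6

# Rebuild a clean positional index once the duplicates are understood
combined = combined.reset_index(drop=True)
print(combined.index.is_unique)  # True
```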

Summary of Best Practices

Through years of trial and error, I have developed a few rules for using concat:

- When stacking rows, use ignore_index=True unless your index is a specific ID you need to keep.

- Use axis=1 when you want to add new information (columns) about the same rows.

- Use keys if you need to remember which file or source a row originated from.

- Avoid loops; collect your DataFrames in a list and concatenate them at the very end.

I hope this tutorial helped you understand how to concatenate DataFrames in Pandas.

It is a versatile tool that, once mastered, makes data cleaning significantly faster.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.