I’ve handled massive amounts of US retail and financial data. One thing I’ve learned is that raw data is almost always useless until you summarize it.

In Pandas, the groupby function combined with aggregation is the most powerful tool in your arsenal.

I remember the first time I tried to manually calculate averages for different regions in a spreadsheet. It was a nightmare.

Once I discovered how to use the aggregation function in Pandas, my workflow changed forever.

In this tutorial, I’ll show you exactly how to master the Pandas GroupBy aggregation function using real-world examples.

Create Our US Retail Dataset

Before we get into the methods, let’s create a dataset that reflects a real-world scenario.

I’ll use a dataset representing sales for a national retail chain with stores across different US regions.

import pandas as pd

import numpy as np

# Setting up a representative US Retail Dataset

data = {

'Region': ['Northeast', 'West', 'South', 'Midwest', 'West', 'Northeast', 'South', 'Midwest', 'West', 'South'],

'State': ['New York', 'California', 'Texas', 'Illinois', 'Washington', 'Massachusetts', 'Florida', 'Ohio', 'Oregon', 'Georgia'],

'Category': ['Electronics', 'Electronics', 'Furniture', 'Clothing', 'Electronics', 'Furniture', 'Clothing', 'Electronics', 'Furniture', 'Clothing'],

'Sales_USD': [12000, 15000, 8000, 5000, 11000, 7500, 6200, 9500, 8800, 5400],

'Quantity': [24, 30, 12, 45, 22, 10, 55, 18, 15, 48],

'Profit_Margin': [0.15, 0.20, 0.10, 0.08, 0.18, 0.12, 0.07, 0.14, 0.11, 0.09]

}

df = pd.DataFrame(data)

print("Original US Retail Sales Data:")

print(df)This dataset gives us plenty of variables to play with, from categorical regions to numerical sales figures.

Method 1: Use Basic Built-in Aggregation Functions

When I’m in a hurry and just need a quick sum or average, I use the built-in methods. Pandas allows you to call functions like sum(), mean(), or count() directly after a group by.

I often use this when I’m doing a quick sanity check on regional performance.

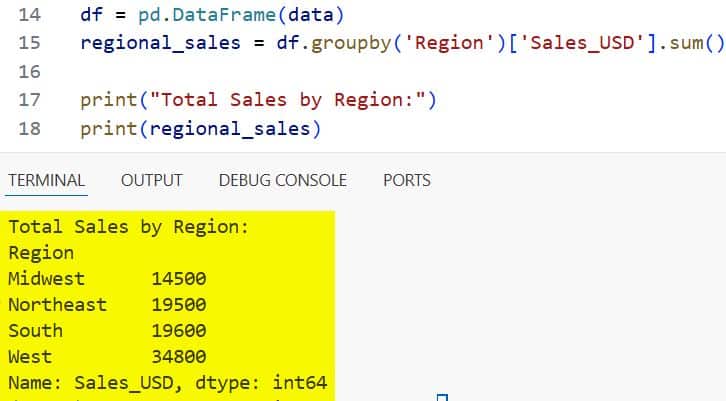

# Calculating total sales per Region

regional_sales = df.groupby('Region')['Sales_USD'].sum()

print("Total Sales by Region:")

print(regional_sales)You can see the output in the screenshot below.

In this example, I grouped the data by the ‘Region’ column and then applied the sum() function to the ‘Sales_USD’ column.

It is a very easy way to see which part of the country is bringing in the most revenue.

Method 2: The Versatile .agg() Method

While built-in functions are great, the .agg() (or .aggregate()) method is where the real magic happens. In my experience, this is the most flexible way to handle data summaries.

It allows you to pass strings of common functions or even actual NumPy functions.

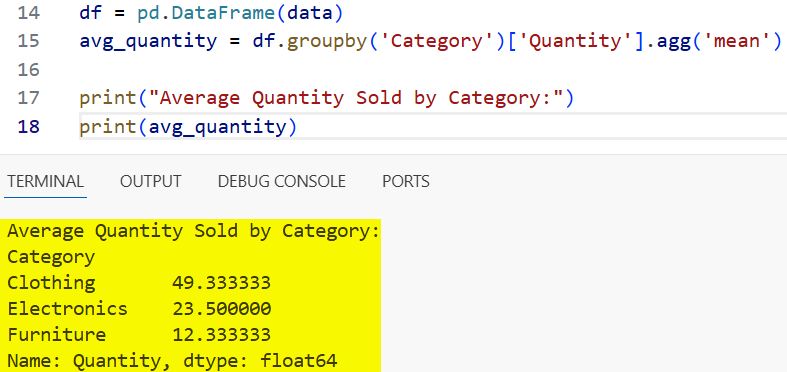

# Using the .agg() method for a single aggregation

avg_quantity = df.groupby('Category')['Quantity'].agg('mean')

print("Average Quantity Sold by Category:")

print(avg_quantity)You can see the output in the screenshot below.

I prefer using .agg() because it makes the code more readable when you decide to add more complexity later.

It clearly signals to anyone reading your code that an aggregation is taking place.

Method 3: Apply Multiple Aggregations at Once

In a professional setting, I rarely need just one metric. I usually want the “Big Three”: Sum, Mean, and Count.

The .agg() method allows you to pass a list of functions, which Pandas will apply to the grouped data.

I find this incredibly useful for creating high-level executive reports.

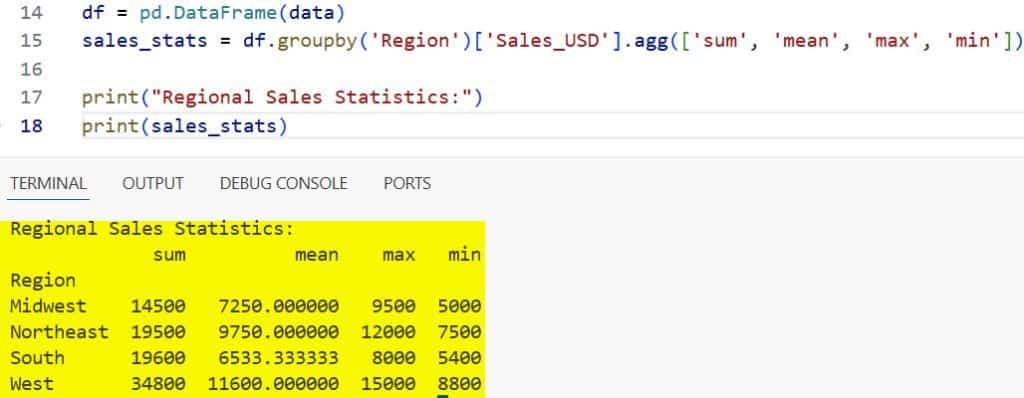

# Applying multiple functions to Sales

sales_stats = df.groupby('Region')['Sales_USD'].agg(['sum', 'mean', 'max', 'min'])

print("Regional Sales Statistics:")

print(sales_stats)You can see the output in the screenshot below.

When you pass a list like [‘sum’, ‘mean’, ‘max’], Pandas creates a MultiIndex for the columns.

This gives you a very clean, table-like structure that summarizes the entire distribution of your US sales data.

Method 4: Use a Dictionary for Different Aggregations

This is my “Pro-Tip” for anyone working with complex datasets. Often, you don’t want the same calculation for every column.

For example, I might want the total sum of Sales_USD but the average of the Profit_Margin.

Using a dictionary within the .agg() function allows you to map specific columns to specific functions.

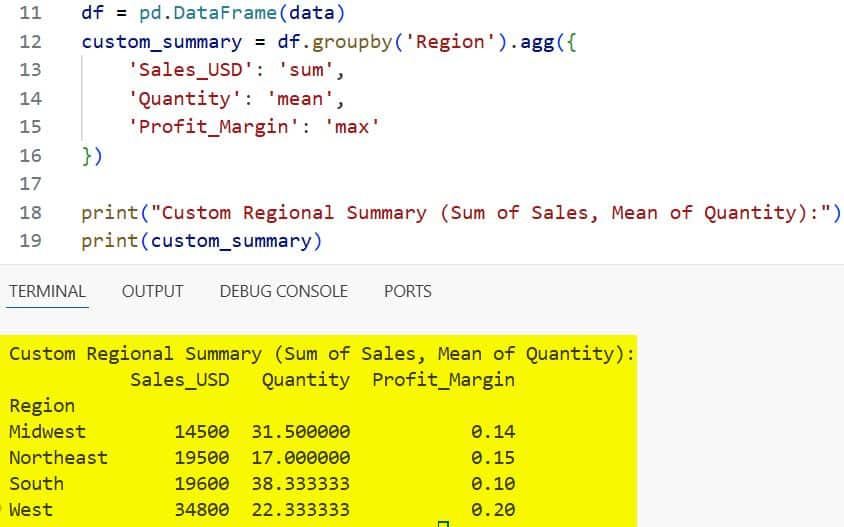

# Mapping different functions to different columns

custom_summary = df.groupby('Region').agg({

'Sales_USD': 'sum',

'Quantity': 'mean',

'Profit_Margin': 'max'

})

print("Custom Regional Summary (Sum of Sales, Mean of Quantity):")

print(custom_summary)You can see the output in the screenshot below.

I use this method daily. It prevents your output from being cluttered with unnecessary calculations.

It keeps your analysis focused on the metrics that actually matter for each specific data point.

Method 5: Named Aggregations for Cleaner Columns

One thing that used to annoy me about the standard .agg() method was the resulting column names. Usually, you end up with a MultiIndex or column names that aren’t very descriptive.

Pandas introduced “Named Aggregation” to solve this, allowing you to name the output column directly.

# Using Named Aggregation for cleaner output

clean_summary = df.groupby('Region').agg(

Total_Revenue=('Sales_USD', 'sum'),

Average_Order_Size=('Quantity', 'mean'),

Highest_Margin=('Profit_Margin', 'max')

)

print("Named Aggregation Summary:")

print(clean_summary)Notice how the output columns are now Total_Revenue and Average_Order_Size instead of just the original column names.

I highly recommend this for any data that is going to be exported to a CSV or used in a presentation.

Method 6: Aggregate with Custom Lambda Functions

Sometimes, the standard library of functions isn’t enough for specific US business logic.

I’ve had cases where I needed to calculate a specific tax-adjusted revenue or a custom weighted average.

This is where lambda functions come in handy.

# Using a lambda function to calculate range (Max - Min)

range_summary = df.groupby('Category')['Sales_USD'].agg(lambda x: x.max() - x.min())

print("Sales Range per Category:")

print(range_summary)Lambda functions allow you to perform virtually any calculation during the grouping process.

While they can be slightly slower on massive datasets, they offer unparalleled flexibility for niche calculations.

Method 7: Group by Multiple Columns

In many of my projects, grouping by a single attribute like ‘Region’ isn’t granular enough. I often need to see how specific categories are performing within those regions.

You can pass a list of columns to the groupby function to create a hierarchical summary.

# Grouping by both Region and Category

multi_group = df.groupby(['Region', 'Category']).agg({

'Sales_USD': ['sum', 'count']

})

print("Sales Summary by Region and Category:")

print(multi_group)This is perfect for spotting trends, like high electronics sales in the West vs. furniture sales in the Northeast.

It helps you drill down into the data to find the “why” behind the numbers.

Handle the Output: Reset the Index

After an aggregation, Pandas usually sets the grouped columns as the index. In my experience, this can make further data manipulation or plotting a bit difficult.

I almost always use .reset_index() to turn the grouped labels back into regular columns.

# Resetting index for a flat DataFrame

flat_report = df.groupby('Region')['Sales_USD'].sum().reset_index()

print("Flat DataFrame Report:")

print(flat_report)This makes the DataFrame look like a standard table again, which is much easier to work with in downstream tasks.

Conclusion

Mastering the GroupBy aggregation function in Pandas has been a cornerstone of my career as a developer.

It turns hundreds of lines of manual logic into a single, readable line of code.

Whether you are using simple built-in functions or complex named aggregations, the key is to choose the method that makes your data most readable.

I hope this guide helps you clean up your US sales data and find the insights you need.

Try running these examples with your own datasets and see how much time you save.

You may also like to read:

- Lambda Functions in Pandas DataFrames

- How to Read Excel Files in Pandas

- How to Compare Two Pandas DataFrames in Python

- How to Use Pandas Concat with Ignore Index

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.