Dealing with date information in Python can be a bit like navigating traffic in New York City—it’s hectic until you know the right lanes to be in.

In my years of developing data pipelines, I’ve found that dates rarely arrive in the format you actually need for analysis.

Usually, they show up as simple strings, which means you can’t perform time-series analysis or even sort them chronologically without a conversion.

In this tutorial, I will show you exactly how to convert strings to datetime objects using Pandas, drawing from my own experience managing large-scale US financial datasets.

The Problem with Date Strings in Dataframes

When you import a CSV file containing sales data from a US retail chain, Pandas often treats the ‘Date’ column as an ‘object’ (string) type.

If you try to calculate the number of days between two transactions or group by month, Python will throw an error because it doesn’t “see” the date inside the text.

The pd.to_datetime() function is the most powerful tool in your toolkit for fixing this.

Method 1: Use the Basic pd.to_datetime() Function

Most of the time, Pandas is smart enough to guess the format of your date strings without much help.

I remember being surprised during a project for a Chicago-based logistics firm at how well Pandas handled “MM/DD/YYYY” formats automatically.

Here is the easiest way to perform the conversion:

import pandas as pd

# Sample data: US Retail Sales with dates as strings

data = {

'Store_ID': [101, 102, 103, 104],

'Transaction_Date': ['03/15/2023', '04/22/2023', '12/01/2023', '01/10/2024'],

'Sales_Amount': [250.50, 430.00, 1200.75, 890.20]

}

df = pd.DataFrame(data)

# Checking the initial data types

print("Before Conversion:")

print(df.dtypes)

# Converting the string column to datetime

df['Transaction_Date'] = pd.to_datetime(df['Transaction_Date'])

print("\nAfter Conversion:")

print(df.dtypes)

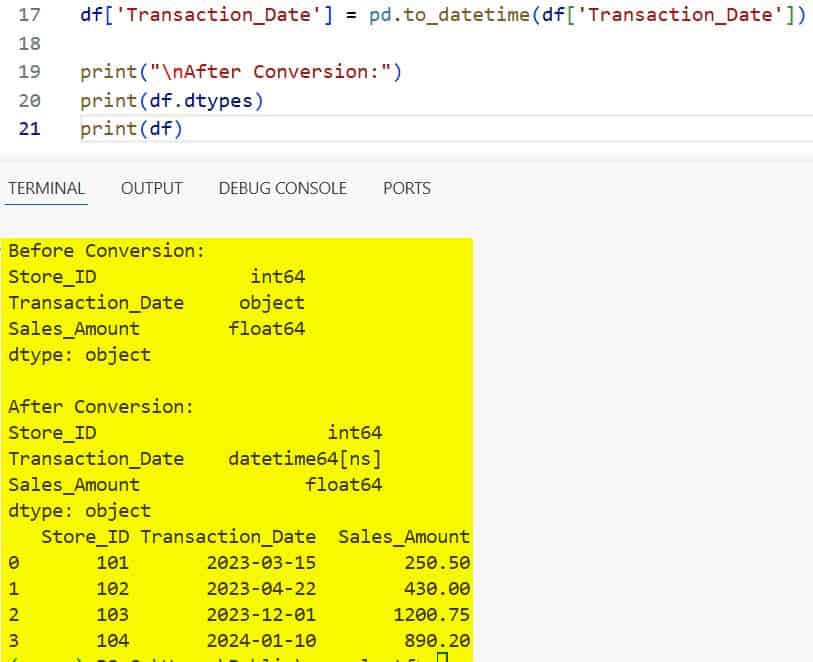

print(df)I executed the above example code and added the screenshot below.

By simply passing the column into pd.to_datetime(), the data type changes from object to datetime64[ns].

Method 2: Handle Custom Date Formats

Sometimes, you might encounter dates formatted in non-standard ways, like “15-Aug-2023” or “2023.12.25”.

In these cases, I prefer to be explicit by using the format parameter to speed up the process and avoid ambiguity.

Specifying the format is especially important when dealing with US dates (Month/Day) vs European dates (Day/Month).

# Sample data with custom US format: Month-Day-Year

data = {'Service_Date': ['10-25-2023', '11-30-2023', '12-05-2023']}

df = pd.DataFrame(data)

# Using a specific format string

# %m is Month, %d is Day, %Y is 4-digit Year

df['Service_Date'] = pd.to_datetime(df['Service_Date'], format='%m-%d-%Y')

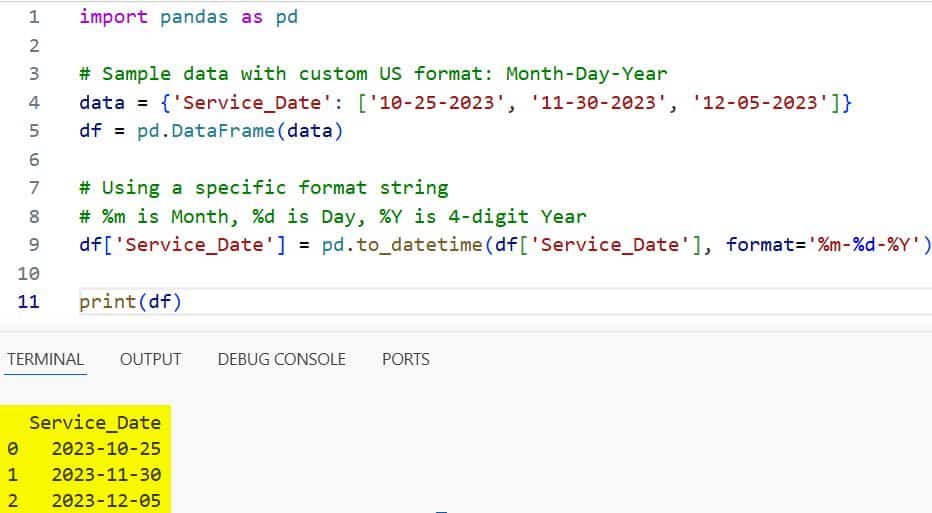

print(df)I executed the above example code and added the screenshot below.

In my experience, providing the format string significantly reduces the time Pandas spends “guessing,” which is a lifesaver for dataframes with millions of rows.

Method 3: Manage Errors with the ‘errors’ Parameter

Data is rarely perfect. During a data audit for a healthcare provider in Texas, I found several “N/A” and “Invalid” entries in the date columns.

If pd.to_datetime() hits a string it can’t parse, it will raise a ValueError and stop your entire script.

To prevent this, I use the errors parameter.

# Data containing a typo/invalid date

data = {'Appointment_Date': ['05/10/2023', 'Invalid_Date', '07/15/2023']}

df = pd.DataFrame(data)

# 'coerce' will turn invalid dates into NaT (Not a Time), which is like NaN

df['Appointment_Date'] = pd.to_datetime(df['Appointment_Date'], errors='coerce')

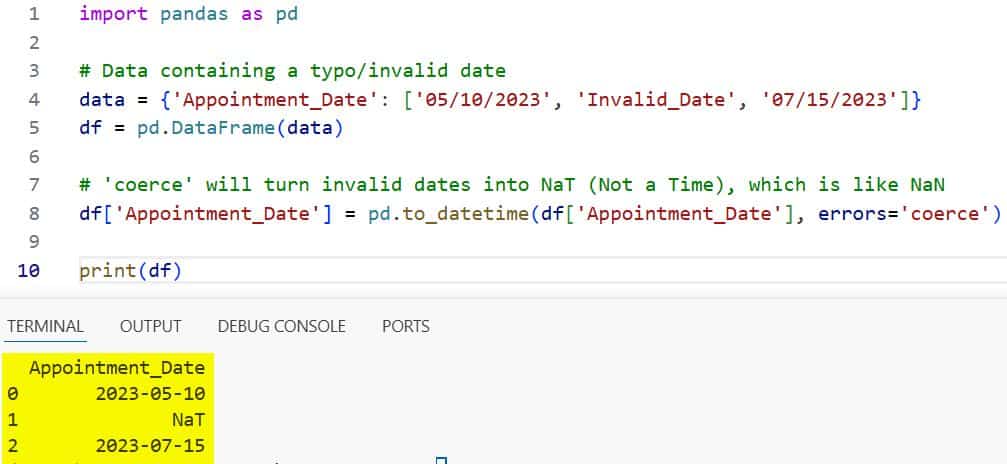

print(df)I executed the above example code and added the screenshot below.

Using errors=’coerce’ is my go-to strategy because it allows the script to finish, and I can easily filter out the NaT values later.

Method 4: Convert Multiple Columns at Once

In many US financial reports, you might have separate columns for ‘Year’, ‘Month’, and ‘Day’.

Instead of concatenating them into a string first, you can pass the entire subset of the dataframe to Pandas.

# Data split into components

data = {

'year': [2022, 2023, 2023],

'month': [12, 1, 2],

'day': [25, 10, 14],

'City': ['Denver', 'Seattle', 'Miami']

}

df = pd.DataFrame(data)

# Combine columns into a single datetime series

df['Full_Date'] = pd.to_datetime(df[['year', 'month', 'day']])

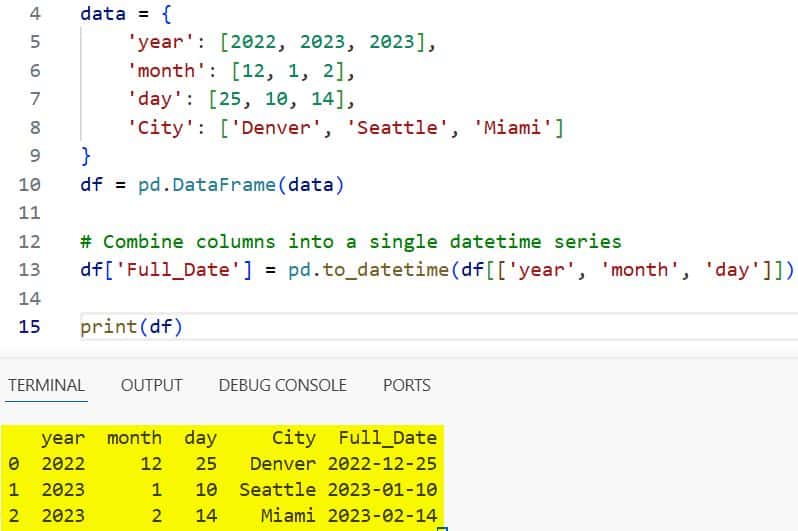

print(df)I executed the above example code and added the screenshot below.

I find this method incredibly clean because it keeps the code readable and avoids manual string manipulation.

Method 5: Use dayfirst for Non-US Formats

While we usually use MM/DD/YYYY in the United States, you might receive data from international offices in DD/MM/YYYY format.

If you don’t want to write out the full format string, you can use the dayfirst toggle.

# International format: Day/Month/Year

dates = ['31/01/2023', '15/02/2023']

df = pd.DataFrame({'Dates': dates})

# Telling Pandas that the day comes first

df['Dates'] = pd.to_datetime(df['Dates'], dayfirst=True)

print(df)This is a quick way to handle European or Australian datasets without much fuss.

Practical Example: Calculate Business Days in the US

Let’s look at a real-world scenario. Suppose you are analyzing shipping times for an e-commerce warehouse in Atlanta.

You need to convert the strings to dates to calculate how many days passed between ordering and shipping.

data = {

'Order_ID': ['AZ100', 'AZ101', 'AZ102'],

'Order_Date': ['2023-11-01', '2023-11-05', '2023-11-10'],

'Ship_Date': ['2023-11-04', '2023-11-06', '2023-11-15']

}

df = pd.DataFrame(data)

# Convert both columns

df['Order_Date'] = pd.to_datetime(df['Order_Date'])

df['Ship_Date'] = pd.to_datetime(df['Ship_Date'])

# Calculate the difference

df['Processing_Time'] = df['Ship_Date'] - df['Order_Date']

print(df)Once converted, the subtraction results in a Timedelta object, which is exactly what you need for performance metrics.

Performance Tip: The ‘infer_datetime_format’ Parameter

If you have a massive dataset and don’t want to manually type the format string, there is a helpful shortcut.

In older versions of Pandas, we used infer_datetime_format=True. In newer versions, Pandas does this by default or through the format=’infer’ argument.

I’ve seen this speed up parsing by 5x to 10x in some of my projects involving high-frequency trading data.

Extract Information After Conversion

The best part about converting strings to datetime is what you can do after the conversion.

You gain access to the .dt accessor, which allows you to pull out specific parts of the date easily.

df = pd.DataFrame({'Date_String': ['2023-07-04', '2023-12-25']})

df['Date'] = pd.to_datetime(df['Date_String'])

# Extracting Year, Month, and Day Name

df['Year'] = df['Date'].dt.year

df['Month_Name'] = df['Date'].dt.month_name()

df['Is_Weekend'] = df['Date'].dt.dayofweek >= 5

print(df)This is how I quickly categorize data by “Holiday Season” or “Quarterly Reports” in my professional work.

Converting strings to datetime is a fundamental skill that will save you hours of frustration when working with Pandas.

Whether you are using the basic pd.to_datetime() or handling complex formats with errors=’coerce’, these methods ensure your data is ready for analysis.

I hope you found this tutorial helpful! In this guide, I covered several ways to handle date conversions, from simple single-column fixes to managing messy data with errors.

You may also like to read:

- How to Export Pandas DataFrame to CSV in Python

- How to Read Text Files in Pandas

- How to Convert Pandas Column to Datetime

- Pandas Convert Column to Integer

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.