I’ve spent countless hours cleaning messy datasets. One of the most frequent hurdles I encounter is dealing with numeric data that Pandas mistakenly loads as strings or floats.

Whether I’m analyzing California real estate prices or New York Stock Exchange ticks, having my ID columns or zip codes in the wrong format breaks my analysis.

In this tutorial, I’ll show you exactly how to convert Pandas columns to integers using the same methods I use in my daily production code.

The Importance of Correct Data Types

When you import a CSV or an Excel file, Pandas does its best to guess the data types. Often, it gets it wrong.

A column containing “100” might be treated as a string, which means you can’t perform math on it. Or a “Year” column might show up as a float (2023.0), which looks unprofessional in reports.

Converting string columns to integers saves memory and ensures that operations like grouping or plotting work without throwing those annoying TypeErrors.
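Here is a minimal sketch of that guessing behavior, assuming a small made-up CSV held in memory. The column names and values are hypothetical and only there to trigger the two cases described above.

```python
import io
import pandas as pd

# Hypothetical CSV text: one Year cell is empty, one Count cell holds text
csv_text = "Year,Count\n2021,100\n2022,unknown\n,300\n"

df = pd.read_csv(io.StringIO(csv_text))

# Year becomes float64 because the empty cell is read as NaN.
# Count holds the text 'unknown', so the whole column is read as strings.
print(df.dtypes)
print(df)
```

Printing the dtypes right after loading is a quick way to spot columns that need converting before any analysis starts.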

Method 1: Use the astype() Function

The astype() method is my go-to “quick fix.” It is straightforward and works perfectly when you know your data is clean and has no missing values.

When I’m processing a list of employee IDs for a payroll system, this is the first tool I reach for.

import pandas as pd

# Creating a dataset of US Tech Company Employee counts

data = {

'Company': ['Apple', 'Microsoft', 'Amazon', 'Google', 'Meta'],

'Employee_Count_Str': ['164000', '221000', '1541000', '190000', '71000']

}

df = pd.DataFrame(data)

# Checking current data types

print("Before Conversion:")

print(df.dtypes)

# Converting the string column to integer

df['Employee_Count_Str'] = df['Employee_Count_Str'].astype(int)

print("\nAfter Conversion:")

print(df.dtypes)

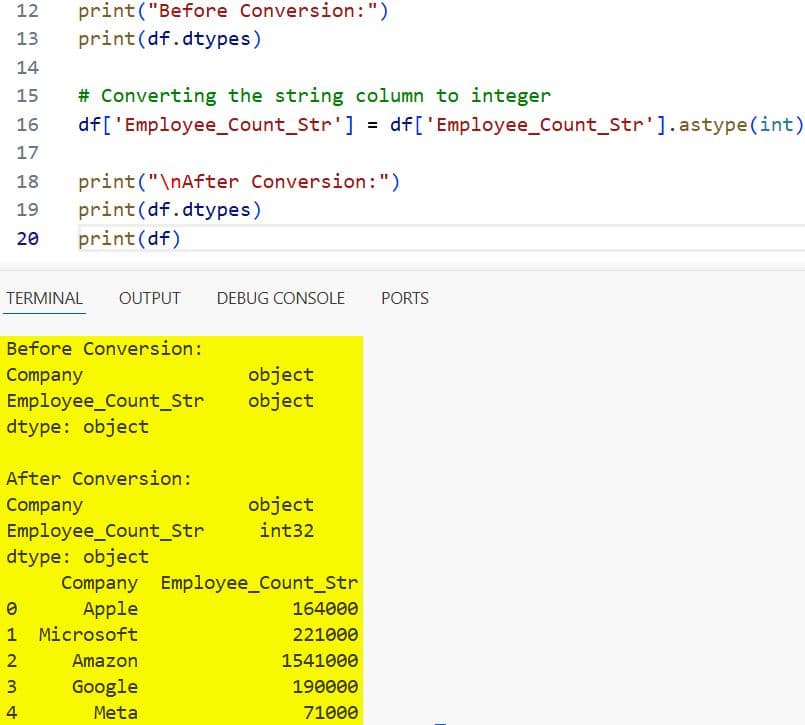

print(df)You can refer to the screenshot below to see the output.

In the code above, I took a column of strings and cast them directly to integers. It’s fast and efficient for most standard datasets.

Method 2: Use pd.to_numeric() for Messy Data

In the real world, data is rarely perfect. I often find “N/A” strings or random characters mixed into numeric columns.

If you use astype(int) on a column that contains a letter, Pandas raises a ValueError and the conversion stops. That’s why I prefer pd.to_numeric() when I’m dealing with unpredictable web-scraped data.

import pandas as pd

# Sample data representing US Retail Store Sales (with some errors)

data = {

'Store_ID': [101, 102, 103, 104],

'Quarterly_Revenue': ['550000', '620000', 'Missing', '480000']

}

df = pd.DataFrame(data)

# Using to_numeric with errors='coerce' to handle 'Missing'

# This turns non-numeric values into NaN (Not a Number)

df['Quarterly_Revenue'] = pd.to_numeric(df['Quarterly_Revenue'], errors='coerce')

# Note: Once it has NaNs, it becomes a float. We fill NaNs to convert to Int.

df['Quarterly_Revenue'] = df['Quarterly_Revenue'].fillna(0).astype(int)

print(df)

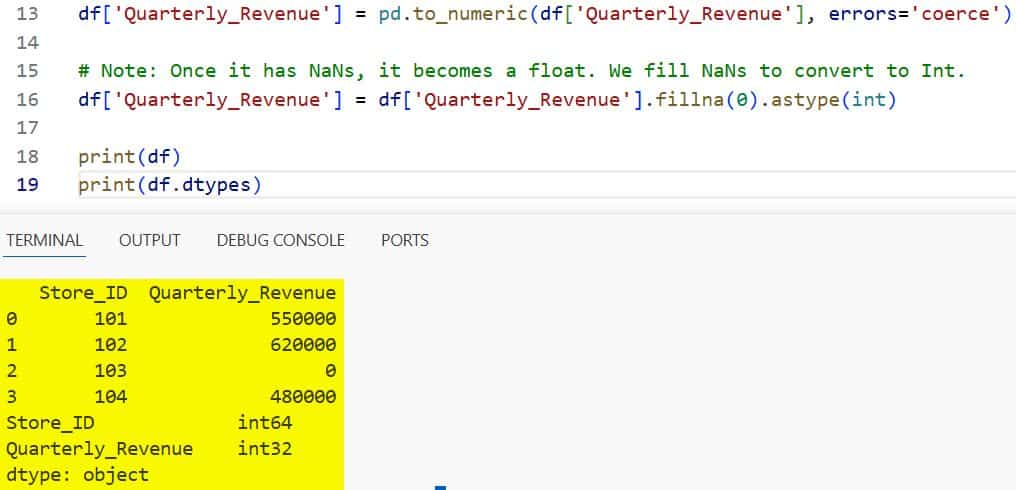

print(df.dtypes)You can refer to the screenshot below to see the output.

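By setting errors='coerce', I tell Pandas to turn anything it can’t parse into NaN. Then I fill those gaps with 0 before the final conversion. Only do this when 0 is a sensible stand-in for a missing value; otherwise a filled-in 0 is indistinguishable from a real zero.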
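Before filling anything, it can help to see which rows were actually coerced. The sketch below reuses the revenue values from the example above. The variable names are my own, and the last step uses the nullable Int64 type from Method 4 as one possible alternative to fillna(0), not as the only option.

```python
import pandas as pd

raw = pd.Series(['550000', '620000', 'Missing', '480000'], name='Quarterly_Revenue')
converted = pd.to_numeric(raw, errors='coerce')

# Rows that had a value in the input but could not be parsed as a number
failed = converted.isna() & raw.notna()
print(raw[failed])

# Alternative to fillna(0): keep the gap as <NA> in a nullable integer column
revenue_nullable = converted.astype('Int64')
print(revenue_nullable)
```

Looking at raw[failed] first tells you whether the bad values are placeholders like 'Missing' or real data in an unexpected format.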

Method 3: Convert Multiple Columns at Once

When I’m working with large datasets, like US Census Bureau stats, I usually have five or six columns that need fixing simultaneously.

Instead of writing five lines of code, I pass a dictionary to astype(). This keeps my notebooks clean and readable.

import pandas as pd

# Dataset of US City Statistics

data = {

'City': ['Austin', 'Seattle', 'Denver'],

'Population_2020': ['961855', '737015', '715522'],

'Median_Age': ['33.0', '35.0', '34.0'],

'Avg_Commute_Min': ['27', '30', '26']

}

df = pd.DataFrame(data)

# Converting multiple columns using a dictionary

df = df.astype({

'Population_2020': int,

'Median_Age': float, # First to float to handle decimals

'Avg_Commute_Min': int

})

# Final step: Convert Median_Age to int if decimals aren't needed

df['Median_Age'] = df['Median_Age'].astype(int)

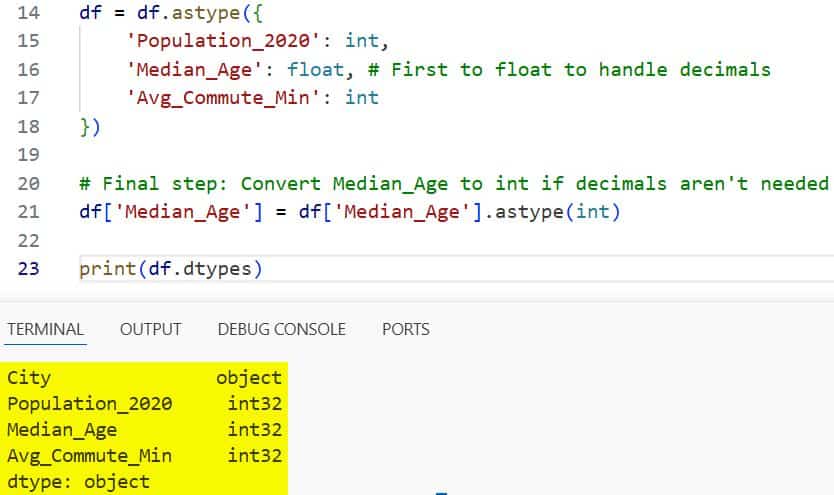

print(df.dtypes)You can refer to the screenshot below to see the output.

This “mapping” approach is my preferred way to handle bulk data cleaning in production scripts.
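The dictionary approach assumes every value is clean. When several columns might contain bad values, one option is to run pd.to_numeric over a list of columns instead. This is a sketch under that assumption; the 'n/a' entry is a hypothetical bad value I added to the city data for illustration.

```python
import pandas as pd

df = pd.DataFrame({
    'City': ['Austin', 'Seattle', 'Denver'],
    'Population_2020': ['961855', '737015', 'n/a'],  # hypothetical bad value
    'Avg_Commute_Min': ['27', '30', '26'],
})

numeric_cols = ['Population_2020', 'Avg_Commute_Min']

# Run pd.to_numeric on each listed column, then cast all of them
# to the nullable integer type so the coerced gap stays an integer column
df[numeric_cols] = (
    df[numeric_cols]
    .apply(pd.to_numeric, errors='coerce')
    .astype('Int64')
)

print(df)
print(df.dtypes)
```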

Method 4: Handle the “Int with NaNs” Problem (Int64)

One of the biggest frustrations I had for years was that standard NumPy integers (the default in Pandas) do not support NaN values.

If a column has a missing value, Pandas forces the whole column to float64, so IDs end up looking like 101.0.

Thankfully, Pandas introduced the nullable integer type: Int64 (note the capital ‘I’).

import pandas as pd

# US Federal Holidays Data (Some years might miss certain data)

data = {

'Holiday': ['Labor Day', 'Veterans Day', 'Thanksgiving'],

'Days_Off': ['1', '1', None]

}

df = pd.DataFrame(data)

# Using the nullable 'Int64' type

df['Days_Off'] = df['Days_Off'].astype('Int64')

print(df)

print(df.dtypes)

In the output, you’ll see the missing value displayed as <NA>, while the other numbers remain integers without the trailing .0. This is a lifesaver for data integrity.
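If the direct cast from strings to Int64 gives you trouble, a two-step route through pd.to_numeric() is a reasonable fallback. This is a sketch using the same Days_Off values; I am assuming you would rather not depend on how your Pandas version handles a string-to-Int64 cast.

```python
import pandas as pd

days_off = pd.Series(['1', '1', None], name='Days_Off')

# Step 1: parse the strings; None becomes NaN, so the result is float64
# Step 2: switch to the nullable integer type, where NaN is shown as <NA>
days_off_int = pd.to_numeric(days_off).astype('Int64')

print(days_off_int)
```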

Method 5: Use apply() with Custom Logic

Sometimes, the data format is truly bizarre. I once worked on a project involving US currency strings (e.g., “$1,200.00”).

Neither astype() nor to_numeric() can handle dollar signs and commas directly. In these cases, I use apply() with a lambda function.

import pandas as pd

# US Real Estate Listing Prices

data = {

'Property': ['Condo', 'Townhouse', 'Single Family'],

'Price': ['$450,000', '$625,000', '$1,100,000']

}

df = pd.DataFrame(data)

# Custom function to strip symbols and convert

df['Price_Int'] = df['Price'].apply(lambda x: x.replace('$', '').replace(',', '')).astype(int)

print(df)

print(df.dtypes)

Because apply() calls a Python function once per value, it is slower than vectorized string methods such as .str.replace(), but it provides the flexibility needed for complex string manipulation.
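For simple symbol stripping like this, the vectorized .str.replace() accessor can do the same job without a lambda. A minimal sketch on the same listing prices, where the regular expression is my own choice:

```python
import pandas as pd

prices = pd.Series(['$450,000', '$625,000', '$1,100,000'], name='Price')

# regex=True so the character class [$,] matches either a dollar sign or a comma
price_int = prices.str.replace(r'[$,]', '', regex=True).astype(int)

print(price_int.tolist())
```

A value with cents, such as $1,200.00, would still need astype(float) before any integer cast, because the decimal point is left in the string.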

Which Method Should You Use?

In my experience, the “best” method depends entirely on your data quality.

If your data is already clean and just stored as the wrong type, stick with Method 1 (astype). It is the fastest and most readable.

If you are dealing with external data from APIs or CSVs where “None” or “Unknown” might appear, Method 2 (to_numeric) is the safest bet.

For reports where you must preserve missing values but want an integer “look,” Method 4 (Int64) is the modern standard.

Common Errors and How to Avoid Them

The most frequent error I see is the ValueError: invalid literal for int() with base 10.

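This usually happens because the string contains something other than digits, such as a comma, a currency symbol, a space between digits, or a decimal point. Leading and trailing spaces are not the culprit, since int() ignores them. Remember: int cannot directly convert a string like “25.5”.

You must convert “25.5” to a float first, then to an int. If you try to skip that middle step, Python will complain. Keep in mind that the float-to-int step truncates, so 25.5 becomes 25.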
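The failure and the two-step fix look like this in practice. It is a small sketch with made-up values, and the variable names are only for illustration.

```python
import pandas as pd

s = pd.Series(['25.5', '30.0', '12'])

# The direct cast fails because '25.5' is not a valid integer literal
direct_cast_failed = False
try:
    s.astype(int)
except ValueError as exc:
    direct_cast_failed = True
    print(f"Direct cast failed: {exc}")

# Two-step conversion: string -> float -> int (the fractional part is dropped)
as_int = s.astype(float).astype(int)
print(as_int.tolist())
```

If you want rounding rather than truncation, call .round() on the float column before the final astype(int).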

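Another tip: check df.info(memory_usage='deep') after a conversion to verify that memory usage has actually decreased. Without memory_usage='deep', Pandas does not count the strings stored in object columns, so the “before” figure is underestimated.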
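To compare a single column before and after, memory_usage(deep=True) gives a per-column byte count. A minimal sketch reusing the employee counts from Method 1; the exact byte values depend on your platform and Pandas version, so none are shown here.

```python
import pandas as pd

df = pd.DataFrame({
    'Employee_Count': ['164000', '221000', '1541000', '190000', '71000']
})

# deep=True includes the memory held by the string values themselves
before = df.memory_usage(deep=True)['Employee_Count']

df['Employee_Count'] = df['Employee_Count'].astype(int)
after = df.memory_usage(deep=True)['Employee_Count']

print(f"before: {before} bytes, after: {after} bytes")
```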

I hope this tutorial helps you clean up your Pandas DataFrames more efficiently.

Dealing with data types is one of those foundational skills that makes the rest of your data science journey much smoother.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.