When I first started working with massive datasets from US retail chains, I often found myself needing to know exactly how much data I was handling.

Knowing the length of your DataFrame is the first step in data validation and cleaning.

In this tutorial, I will show you different ways to get the length of a pandas DataFrame, using practical examples.

Use the len() Function

The most common way I get the row count is by using Python’s built-in len() function. It is simple and very fast because it only looks at the length of the index.

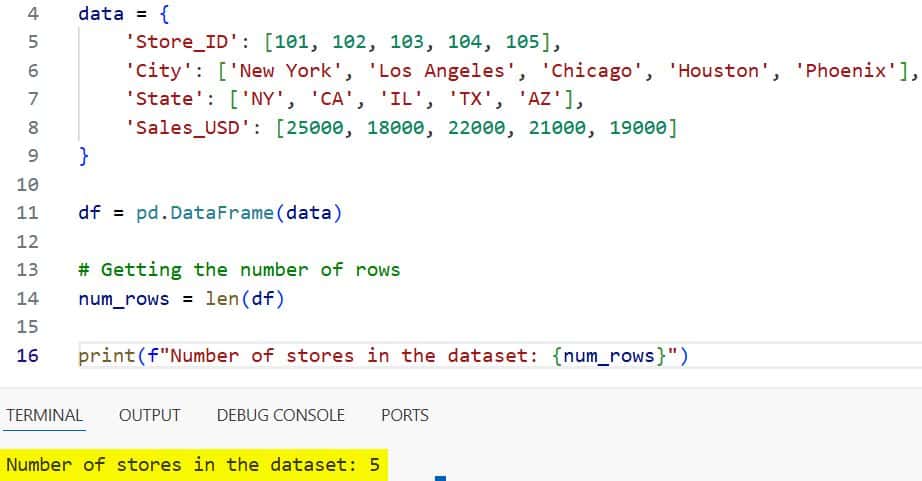

import pandas as pd

# Creating a dataset of retail stores across the USA

data = {

'Store_ID': [101, 102, 103, 104, 105],

'City': ['New York', 'Los Angeles', 'Chicago', 'Houston', 'Phoenix'],

'State': ['NY', 'CA', 'IL', 'TX', 'AZ'],

'Sales_USD': [25000, 18000, 22000, 21000, 19000]

}

df = pd.DataFrame(data)

# Getting the number of rows

num_rows = len(df)

print(f"Number of stores in the dataset: {num_rows}")You can see the output in the screenshot below.

In this case, len(df) returns 5, which corresponds to the five stores in our list.
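As a quick sanity check, here is a minimal sketch of how len() behaves in a few edge cases: it counts every row whether or not it contains missing values, it works on the column index too, and it returns 0 for an empty DataFrame. The small `df_demo` frame is made up for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical frame: one row is missing its sales figure
df_demo = pd.DataFrame({
    "Store_ID": [101, 102, 103],
    "Sales_USD": [25000, np.nan, 22000],
})

row_count = len(df_demo)             # counts all rows, NaN or not
column_count = len(df_demo.columns)  # len() on .columns gives the column count
empty_count = len(pd.DataFrame())    # an empty DataFrame has length 0

print(row_count, column_count, empty_count)
```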

Use the shape Attribute

Whenever I need to know both the number of rows and columns at once, I use the .shape attribute.

It returns a tuple where the first element is the number of rows and the second is the number of columns.

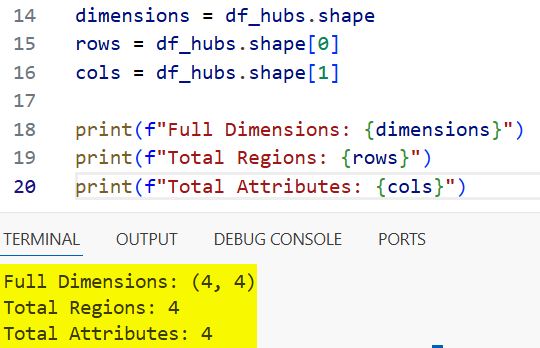

import pandas as pd

# Data representing employee counts in different US tech hubs

tech_hubs = {

'Hub': ['Silicon Valley', 'Austin', 'Seattle', 'Boston'],

'State': ['CA', 'TX', 'WA', 'MA'],

'Employees': [150000, 85000, 110000, 70000],

'Average_Salary': [145000, 115000, 130000, 120000]

}

df_hubs = pd.DataFrame(tech_hubs)

# Getting dimensions (rows, columns)

dimensions = df_hubs.shape

rows = df_hubs.shape[0]

cols = df_hubs.shape[1]

print(f"Full Dimensions: {dimensions}")

print(f"Total Regions: {rows}")

print(f"Total Attributes: {cols}")You can see the output in the screenshot below.

I find this extremely useful when I’m building automated scripts that need to verify the structure of an incoming CSV file.
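Here is a rough sketch of what such a structure check might look like. The `check_shape` helper, the `EXPECTED_COLUMNS` constant, and the inline CSV text are all hypothetical stand-ins; a real script would read from a file path and use whatever rules your data feed requires.

```python
import io
import pandas as pd

# Stand-in for an incoming file; a real script would pass a file path instead
csv_text = """Hub,State,Employees,Average_Salary
Silicon Valley,CA,150000,145000
Austin,TX,85000,115000
"""

EXPECTED_COLUMNS = 4  # hypothetical requirement for this feed


def check_shape(df, expected_cols):
    """Return a list of problems found; an empty list means the shape looks fine."""
    n_rows, n_cols = df.shape
    problems = []
    if n_rows == 0:
        problems.append("file has no data rows")
    if n_cols != expected_cols:
        problems.append(f"expected {expected_cols} columns, found {n_cols}")
    return problems


df_incoming = pd.read_csv(io.StringIO(csv_text))
problems = check_shape(df_incoming, EXPECTED_COLUMNS)
print(problems if problems else f"OK: shape is {df_incoming.shape}")
```

Returning a list of problems rather than raising on the first one lets the calling script decide whether to log, skip the file, or stop.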

Use the index Attribute

Another way I sometimes calculate the length is by accessing the .index attribute directly.

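This is what len(df) does under the hood: DataFrame.__len__ simply returns len(self.index). Calling len(df.index) directly skips that one extra method call, so it can be marginally faster in tight loops, although the difference is rarely noticeable in practice.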

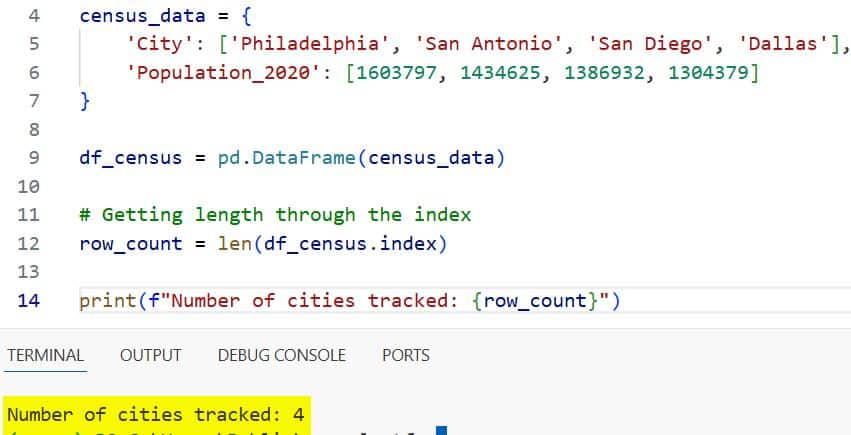

import pandas as pd

# US Census population estimates for select cities

census_data = {

'City': ['Philadelphia', 'San Antonio', 'San Diego', 'Dallas'],

'Population_2020': [1603797, 1434625, 1386932, 1304379]

}

df_census = pd.DataFrame(census_data)

# Getting length through the index

row_count = len(df_census.index)

print(f"Number of cities tracked: {row_count}")You can see the output in the screenshot below.

It’s a clean way to be explicit about exactly what part of the DataFrame you are measuring.
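If you want to compare the speed of the two calls on your own machine, a rough sketch with the standard-library timeit module might look like this. The `df_timing` frame and the repetition count are arbitrary choices, and the numbers will vary with your hardware and pandas version, so treat them as indicative only.

```python
import timeit
import pandas as pd

df_timing = pd.DataFrame({"value": range(1000)})

# Both calls return the same number
assert len(df_timing) == len(df_timing.index)

# Time many repetitions of each call; results vary by machine and pandas version
t_len_df = timeit.timeit(lambda: len(df_timing), number=100_000)
t_len_index = timeit.timeit(lambda: len(df_timing.index), number=100_000)

print(f"len(df):       {t_len_df:.4f} s")
print(f"len(df.index): {t_len_index:.4f} s")
```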

Use the count() Method

If I specifically need to know how many non-missing values are in my data, I use the .count() method.

Unlike the previous methods, this one ignores NaN or null values, which is vital for data quality checks.

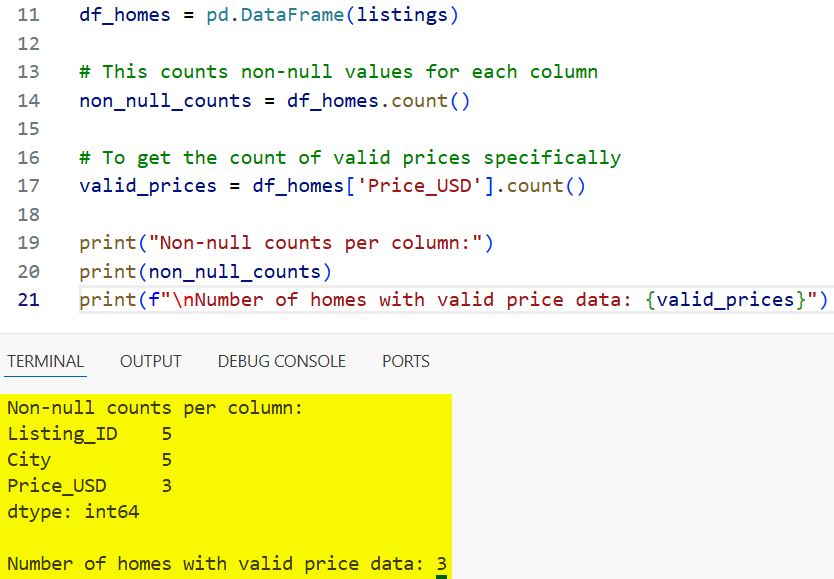

import pandas as pd

import numpy as np

# Real estate listing data in Florida with some missing prices

listings = {

'Listing_ID': [1, 2, 3, 4, 5],

'City': ['Miami', 'Orlando', 'Tampa', 'Jacksonville', 'Naples'],

'Price_USD': [450000, np.nan, 320000, 290000, np.nan]

}

df_homes = pd.DataFrame(listings)

# This counts non-null values for each column

non_null_counts = df_homes.count()

# To get the count of valid prices specifically

valid_prices = df_homes['Price_USD'].count()

print("Non-null counts per column:")

print(non_null_counts)

print(f"\nNumber of homes with valid price data: {valid_prices}")You can see the output in the screenshot below.

I often use this to decide if a column has enough data to be included in a final report or a machine learning model.
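One way to turn that decision into code is to divide the non-null counts by the total row count and keep only the columns above a cutoff. This is a minimal sketch; the `MIN_COVERAGE` threshold of 80% is a hypothetical value, not a recommendation.

```python
import numpy as np
import pandas as pd

df_listings = pd.DataFrame({
    "Listing_ID": [1, 2, 3, 4, 5],
    "City": ["Miami", "Orlando", "Tampa", "Jacksonville", "Naples"],
    "Price_USD": [450000, np.nan, 320000, 290000, np.nan],
})

MIN_COVERAGE = 0.8  # hypothetical cutoff: keep columns that are at least 80% filled

# Fraction of non-null values per column
coverage = df_listings.count() / len(df_listings)

# Names of the columns that meet the cutoff
usable_columns = coverage[coverage >= MIN_COVERAGE].index.tolist()

print(coverage)
print(f"Columns with enough data: {usable_columns}")
```

Here Price_USD has only three valid values out of five, so it falls below the cutoff and is left out.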

Find Length with Conditions

In my experience, you rarely want just the total length; more often, you want to know how many rows meet a certain condition.

To do this, I filter the DataFrame first and then apply len().

    import pandas as pd

    # Automobile production data from various US plants
    auto_data = {
        'Plant': ['Detroit', 'Fremont', 'Chattanooga', 'Georgetown', 'Spartanburg'],
        'State': ['MI', 'CA', 'TN', 'KY', 'SC'],
        'Daily_Output': [1200, 950, 400, 1100, 1500]
    }

    df_autos = pd.DataFrame(auto_data)

    # How many plants produce more than 1,000 vehicles daily?
    high_output_plants = df_autos[df_autos['Daily_Output'] > 1000]
    count_high_output = len(high_output_plants)

    print(f"Number of high-capacity plants: {count_high_output}")

This pattern is my “bread and butter” for generating quick summary statistics.
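If you only need the count and not the filtered rows themselves, an alternative is to sum the boolean mask directly, since each True counts as 1. The sketch below uses a made-up `df_plants` frame and shows that both approaches give the same number.

```python
import pandas as pd

df_plants = pd.DataFrame({
    "Plant": ["Detroit", "Fremont", "Chattanooga", "Georgetown", "Spartanburg"],
    "Daily_Output": [1200, 950, 400, 1100, 1500],
})

# Boolean Series: True where the condition holds
mask = df_plants["Daily_Output"] > 1000

count_via_sum = int(mask.sum())       # True is counted as 1, False as 0
count_via_len = len(df_plants[mask])  # filter first, then measure the result

print(count_via_sum, count_via_len)
```

Summing the mask avoids building an intermediate filtered DataFrame, which can matter when the frame is large.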

Get Length for Groups

When I work with multi-state data, I often need the count for each state. I use the .groupby() method followed by .size() to get a clean breakdown.

    import pandas as pd

    # Tech startup data across different US states
    startups = {
        'Company': ['TechA', 'TechB', 'TechC', 'TechD', 'TechE', 'TechF'],
        'State': ['CA', 'NY', 'CA', 'TX', 'NY', 'CA'],
        'Funding_Millions': [10, 5, 20, 15, 8, 12]
    }

    df_startups = pd.DataFrame(startups)

    # Count companies per state
    state_counts = df_startups.groupby('State').size()

    print("Number of startups per state:")
    print(state_counts)

The .size() method counts every row in each group, including rows that have null values in other columns, which makes it reliable for total counts. Note that rows whose grouping key is itself null are dropped by default; pass dropna=False to groupby() if you need to keep them.
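To make the difference between .size() and .count() concrete, here is a small sketch with a made-up `df_funding` frame in which one company has no funding figure recorded.

```python
import numpy as np
import pandas as pd

df_funding = pd.DataFrame({
    "Company": ["TechA", "TechB", "TechC", "TechD"],
    "State": ["CA", "NY", "CA", "NY"],
    "Funding_Millions": [10, np.nan, 20, 8],  # TechB has no funding figure
})

# size() counts every row in each group
rows_per_state = df_funding.groupby("State").size()

# count() on a column counts only its non-null values in each group
funded_per_state = df_funding.groupby("State")["Funding_Millions"].count()

print(rows_per_state)
print(funded_per_state)
```

For NY, .size() reports both companies while .count() reports only the one with a funding value, so pick the method that matches the question you are asking.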

I hope you found this tutorial useful!

In this article, I have explained several ways to get the length of a pandas DataFrame, from simple row counts to conditional filtering and grouping.

Whether you are performing initial data exploration or final validation, these methods will help you handle your data more effectively.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.