Over the last four years of building deep learning models, I have found image captioning to be one of the most rewarding challenges in computer vision.

It is a fascinating bridge between seeing an image and describing it in natural language, much like how we explain a photo to a friend.

In this tutorial, I will show you how to build a robust image captioning system using Keras. We will use a “Merge Architecture” that combines a CNN for image features and an LSTM for text processing.

Prepare the Dataset for Image Captioning in Keras

To get started, we need a dataset that pairs images with descriptions. The Flickr8k dataset, about 8,000 images with five human-written captions each, is my go-to choice because it is small enough for most local machines.

I always begin by mapping each image ID to its list of captions, ensuring the model has multiple ways to describe the same visual scene.

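import os

# Map each image ID to the list of its captions.
# Expects whitespace-separated lines such as:
# 1000268201_693b08cb0e.jpg#0 A child in a pink dress is climbing up a set of stairs .
def load_captions(filename):
    with open(filename, 'r', encoding='utf-8') as f:
        doc = f.read()
    mapping = {}
    for line in doc.split('\n'):
        tokens = line.split()
        # Skip blank or malformed lines (we need an ID plus at least one word)
        if len(tokens) < 2:
            continue
        image_id, image_desc = tokens[0], tokens[1:]
        # "1000268201_693b08cb0e.jpg#0" -> "1000268201_693b08cb0e"
        image_id = image_id.split('.')[0]
        image_desc = ' '.join(image_desc)
        if image_id not in mapping:
            mapping[image_id] = []
        mapping[image_id].append(image_desc)
    return mapping

captions = load_captions("captions.txt")
print("Number of images:", len(captions))

# Show only the first entry
for img_id, desc in captions.items():
    print({img_id: desc})
    break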

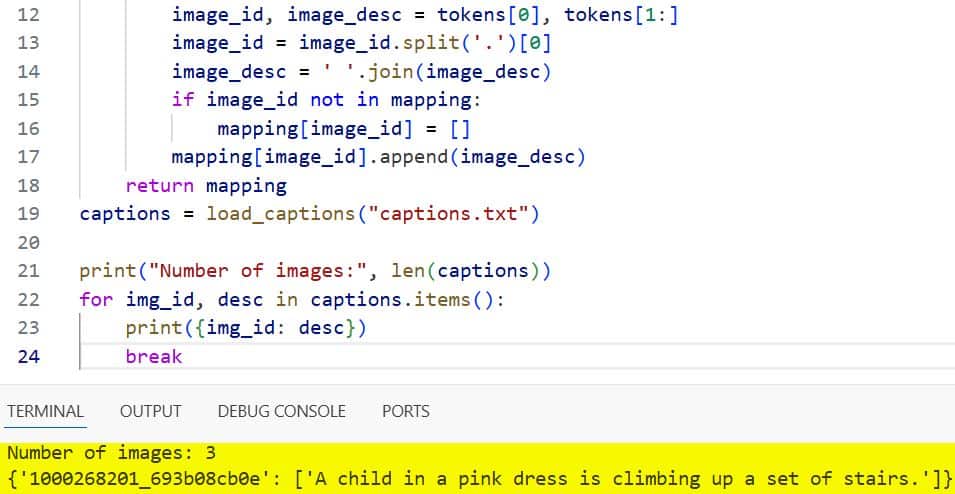

You can see the output in the screenshot below.
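The loader above splits each line on whitespace, which suits the original Flickr8k token file. Some copies of Flickr8k instead ship captions.txt as a comma-separated file with an image,caption header; I am assuming that layout here. A minimal sketch of a csv-based variant (load_captions_csv is a hypothetical helper) that also copes with captions containing commas:

```python
import csv
import io

def load_captions_csv(file_obj):
    """Hypothetical variant of load_captions for an 'image,caption' CSV file.

    Assumes a header row with the columns 'image' and 'caption'.
    """
    mapping = {}
    for row in csv.DictReader(file_obj):
        # "1000268201_693b08cb0e.jpg" -> "1000268201_693b08cb0e"
        image_id = row['image'].split('.')[0]
        caption = row['caption'].strip()
        if caption:
            mapping.setdefault(image_id, []).append(caption)
    return mapping

# In-memory sample; with a real file use:
# with open("captions.txt", newline='', encoding='utf-8') as f:
#     captions = load_captions_csv(f)
sample = io.StringIO(
    "image,caption\n"
    '1000268201_693b08cb0e.jpg,"A child in a pink dress, climbing stairs ."\n'
    "1000268201_693b08cb0e.jpg,A girl going into a wooden building .\n"
)
captions_csv = load_captions_csv(sample)
print(captions_csv)
```

The csv module handles the quoted field, so the comma inside the first caption does not split it.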

Feature Extraction Using Pre-trained Models in Keras

I prefer the Xception model, pre-trained on ImageNet, for feature extraction. It is efficient and captures spatial hierarchies within images well.

By removing the final classification layer (include_top=False) and averaging the last feature map over its spatial positions (pooling='avg'), we extract a fixed-length, 2048-dimensional vector that summarizes the content of each image.

from tensorflow.keras.applications.xception import Xception, preprocess_input

from tensorflow.keras.preprocessing.image import load_img, img_to_array

from tensorflow.keras.models import Model

import numpy as np

def extract_features(directory):

model = Xception(include_top=False, pooling='avg')

features = {}

for img_name in os.listdir(directory):

img_path = directory + '/' + img_name

image = load_img(img_path, target_size=(299, 299))

image = img_to_array(image)

image = np.expand_dims(image, axis=0)

image = preprocess_input(image)

feature = model.predict(image, verbose=0)

features[img_name.split('.')[0]] = feature

return features

image_dir = "images" # folder containing images

features = extract_features(image_dir)

print("Total images processed:", len(features))

for img_id, feat in features.items():

print("Image ID:", img_id)

print("Feature shape:", feat.shape)

print("First 5 feature values:", feat[0][:5])

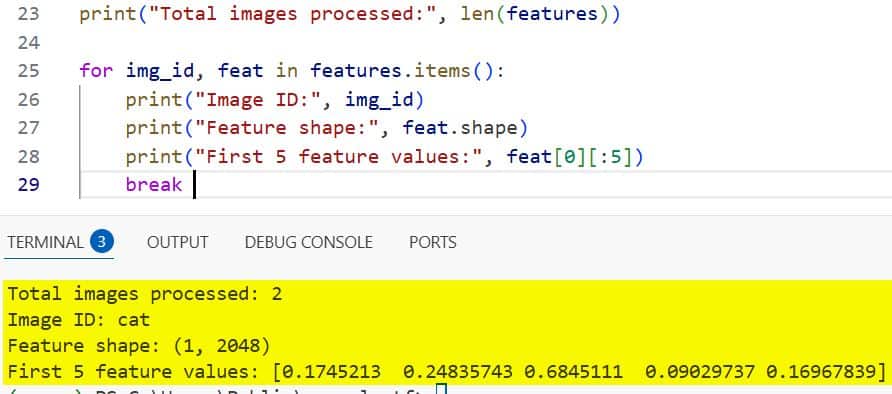

break You can see the output in the screenshot below.
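To see what pooling='avg' does, here is a pure-Python sketch of global average pooling on a tiny made-up feature map. For a 299x299 input, Xception's last convolutional block produces a 10x10x2048 map, and averaging each channel over that 10x10 grid is what turns it into the 2048 values inspected above. The toy map uses 2x2 positions and 3 channels purely for illustration, and global_average_pool is a hypothetical helper.

```python
def global_average_pool(feature_map):
    """Average an H x W x C feature map (nested lists) over H and W.

    Returns C values, one per channel, mirroring what
    Xception(include_top=False, pooling='avg') does to its final map.
    """
    height = len(feature_map)
    width = len(feature_map[0])
    channels = len(feature_map[0][0])
    sums = [0.0] * channels
    for row in feature_map:
        for pixel in row:
            for c, value in enumerate(pixel):
                sums[c] += value
    return [s / (height * width) for s in sums]

# A made-up 2 x 2 x 3 feature map (the real one is 10 x 10 x 2048)
toy_map = [
    [[1.0, 0.0, 4.0], [3.0, 2.0, 0.0]],
    [[5.0, 4.0, 0.0], [7.0, 2.0, 0.0]],
]
vector = global_average_pool(toy_map)
print(vector)  # [4.0, 2.0, 1.0]
```

The spatial layout disappears and only one number per channel survives, which is why every image ends up as a vector of the same length regardless of its content.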

Clean Text Data for Keras Image Captioning

Before feeding text into an LSTM, I always clean it thoroughly: converting it to lowercase, stripping punctuation, and dropping one-character words (such as "a") as well as any word that contains numbers or other non-letter characters.

I also add ‘startseq’ and ‘endseq’ tokens to each caption so the model knows when to begin and stop generating words.

import string

def clean_descriptions(descriptions):

table = str.maketrans('', '', string.punctuation)

for key, desc_list in descriptions.items():

for i in range(len(desc_list)):

desc = desc_list[i]

desc = desc.split()

desc = [word.lower() for word in desc]

desc = [w.translate(table) for w in desc]

desc = [word for word in desc if len(word)>1]

desc = [word for word in desc if word.isalpha()]

desc_list[i] = 'startseq ' + ' '.join(desc) + ' endseq'

descriptions = {

"img1": ["A child in a pink dress is climbing up a set of stairs."]

}

clean_descriptions(descriptions)

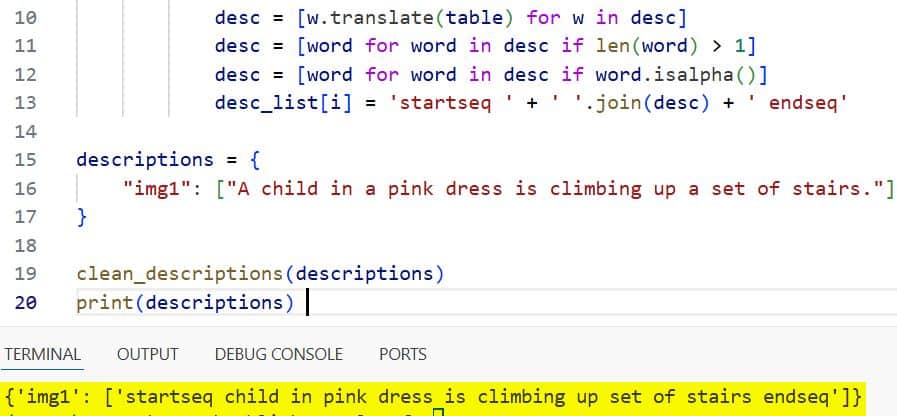

print(descriptions)You can see the output in the screenshot below.
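Because the length filter in clean_descriptions removes every one-character word, the article "a" never reaches the vocabulary (the tokenizer is fit on the cleaned captions), so the model cannot generate it. A minimal single-caption sketch of the same rules makes this easy to check; clean_caption and its min_len parameter are hypothetical, not part of the code above.

```python
import string

# Deletes every ASCII punctuation character
_PUNCT_TABLE = str.maketrans('', '', string.punctuation)

def clean_caption(caption, min_len=2):
    """Hypothetical single-caption version of clean_descriptions.

    min_len=2 reproduces the rule above (drop one-character words);
    min_len=1 keeps words such as "a".
    """
    words = [w.lower().translate(_PUNCT_TABLE) for w in caption.split()]
    words = [w for w in words if len(w) >= min_len and w.isalpha()]
    return 'startseq ' + ' '.join(words) + ' endseq'

raw = "A child in a pink dress is climbing up a set of stairs."
print(clean_caption(raw))             # same result as clean_descriptions
print(clean_caption(raw, min_len=1))  # keeps "a"
```

If you prefer more natural-sounding captions, keeping one-letter words is a small change, at the cost of a few very frequent tokens in the vocabulary.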

Tokenize the Vocabulary in Keras

The model cannot understand words directly, so we must map them to integers. I use the Keras Tokenizer to create a consistent word-to-index mapping.

This step also gives us the vocabulary size and the maximum caption length. We need the vocabulary size for the Embedding and output layers, and the maximum length for padding our input sequences.
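As a rough illustration of what the tokenizer computes, here is a pure-Python sketch; build_word_index is a hypothetical helper, not the Keras API. It mirrors how I understand Keras's Tokenizer to number words: indices start at 1 and follow descending word frequency, leaving 0 free for padding. That reserved index is why the vocabulary size passed to the model is the number of words plus one.

```python
from collections import Counter

def build_word_index(captions):
    """Hypothetical pure-Python stand-in for Tokenizer.fit_on_texts.

    Words are numbered from 1 by descending frequency (ties keep first-seen
    order); index 0 is left unused so it can mean "padding".
    """
    counts = Counter()
    for caption in captions:
        counts.update(caption.split())
    # Counter keeps insertion order and sorted() is stable (even with
    # reverse=True), so equally frequent words stay in first-seen order
    ordered = sorted(counts, key=lambda w: counts[w], reverse=True)
    return {word: i + 1 for i, word in enumerate(ordered)}

sample_captions = [
    "startseq dog runs endseq",
    "startseq black dog jumps endseq",
]
word_index = build_word_index(sample_captions)
toy_vocab_size = len(word_index) + 1                            # +1 for padding index 0
toy_max_length = max(len(c.split()) for c in sample_captions)   # longest caption in words
print(word_index, toy_vocab_size, toy_max_length)
```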

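from tensorflow.keras.preprocessing.text import Tokenizer

def to_lines(descriptions):
    # Flatten the dict of caption lists into a single list of captions
    return [d for desc_list in descriptions.values() for d in desc_list]

def create_tokenizer(descriptions):
    tokenizer = Tokenizer()
    tokenizer.fit_on_texts(to_lines(descriptions))
    return tokenizer

def max_caption_length(descriptions):
    # Number of words in the longest caption, used to pad input sequences
    return max(len(d.split()) for d in to_lines(descriptions))

tokenizer = create_tokenizer(descriptions)
vocab_size = len(tokenizer.word_index) + 1  # +1 because index 0 is reserved for padding
max_length = max_caption_length(descriptions)

# Output: a fitted Tokenizer; on the full dataset the vocabulary is much larger (e.g., 7500 words).

Build the Image Captioning Model Architecture in Keras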

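This is where the magic happens. I use the Keras Functional API, which supports models with more than one input, to merge the image feature vector and the text sequence.

The image branch applies Dropout for regularization followed by a Dense layer, while the text branch uses an Embedding layer followed by an LSTM. Both branches end in 256-dimensional vectors, so they can be added element-wise before the final Dense layers predict the next word.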
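A quick way to sanity-check this architecture is to count its trainable parameters by hand. The sketch below uses the standard formulas for a Dense layer (inputs x units + units), an Embedding layer (vocab_size x 256) and a Keras LSTM (4 x (units x (input_dim + units) + units)); Dropout and add have no weights. The helper names are hypothetical, and vocab_size=7500 is only the example size mentioned for the tokenizer. model.summary() on the model built by define_model should report the same total.

```python
def dense_params(input_dim, units):
    # weight matrix + bias vector
    return input_dim * units + units

def lstm_params(input_dim, units):
    # 4 gates, each with input weights, recurrent weights and a bias
    return 4 * (units * (input_dim + units) + units)

def merge_model_params(vocab_size, feature_dim=2048, embed_dim=256, units=256):
    # Image branch: Dense(256) on the 2048-d Xception vector
    image_branch = dense_params(feature_dim, units)
    # Text branch: Embedding(vocab_size, 256) + LSTM(256)
    text_branch = vocab_size * embed_dim + lstm_params(embed_dim, units)
    # Decoder: Dense(256) + softmax Dense(vocab_size)
    decoder = dense_params(units, units) + dense_params(units, vocab_size)
    return image_branch + text_branch + decoder

print(merge_model_params(7500))  # 4963148
```

Most of the weights sit in the two vocabulary-sized layers, so shrinking the vocabulary (for example, by dropping rare words) is the most direct way to make this model smaller.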

from tensorflow.keras.layers import Input, Dense, LSTM, Embedding, Dropout, add
from tensorflow.keras.models import Model

def define_model(vocab_size, max_length):
    # Image feature branch: 2048-d Xception vector -> 256-d
    inputs1 = Input(shape=(2048,))
    fe1 = Dropout(0.5)(inputs1)
    fe2 = Dense(256, activation='relu')(fe1)

    # Sequence branch: padded word indices -> 256-d LSTM output
    inputs2 = Input(shape=(max_length,))
    se1 = Embedding(vocab_size, 256, mask_zero=True)(inputs2)  # mask_zero ignores padding index 0
    se2 = Dropout(0.5)(se1)
    se3 = LSTM(256)(se2)

    # Decoder: merge both 256-d vectors and predict the next word
    decoder1 = add([fe2, se3])
    decoder2 = Dense(256, activation='relu')(decoder1)
    outputs = Dense(vocab_size, activation='softmax')(decoder2)

    model = Model(inputs=[inputs1, inputs2], outputs=outputs)
    model.compile(loss='categorical_crossentropy', optimizer='adam')
    return model

# Result: a compiled Keras model ready for training.

Train the Image Captioning Model with Keras Generators

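Turning every caption into one training example per word, each with a one-hot target as wide as the vocabulary, quickly exhausts memory on a full dataset. Instead, I use a data generator that builds these examples on the fly and yields one batch per image.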

We predict the next word in a sequence given the image and the words that have come before it.
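For example, the caption "startseq dog runs endseq" becomes three training pairs, each input left-padded with zeros to max_length (pad_sequences pads at the front by default). A pure-Python sketch, where left_pad and make_pairs are hypothetical helpers and the word indices are made up:

```python
def left_pad(seq, max_length):
    """Pad with zeros at the front, like pad_sequences(..., padding='pre')."""
    seq = seq[-max_length:]  # keep the last max_length items, like truncating='pre'
    return [0] * (max_length - len(seq)) + seq

def make_pairs(seq, max_length):
    """Split one encoded caption into (padded prefix, next word) pairs."""
    return [(left_pad(seq[:i], max_length), seq[i]) for i in range(1, len(seq))]

# Made-up indices: startseq=1, dog=2, runs=4, endseq=3
encoded = [1, 2, 4, 3]
for prefix, target in make_pairs(encoded, max_length=5):
    print(prefix, '->', target)
# [0, 0, 0, 0, 1] -> 2
# [0, 0, 0, 1, 2] -> 4
# [0, 0, 1, 2, 4] -> 3
```

A caption of n tokens therefore yields n - 1 examples, which is why the expansion grows so quickly across five captions per image.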

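import numpy as np
from tensorflow.keras.preprocessing.sequence import pad_sequences
from tensorflow.keras.utils import to_categorical

def data_generator(descriptions, features, tokenizer, max_length, vocab_size):
    # Loop forever; Keras ends each epoch after steps_per_epoch batches
    while True:
        for key, desc_list in descriptions.items():
            feature = features[key][0]  # (2048,)
            X1, X2, y = [], [], []
            for desc in desc_list:
                seq = tokenizer.texts_to_sequences([desc])[0]
                # Each prefix of the caption predicts the word that follows it
                for i in range(1, len(seq)):
                    in_seq, out_seq = seq[:i], seq[i]
                    in_seq = pad_sequences([in_seq], maxlen=max_length)[0]
                    out_seq = to_categorical([out_seq], num_classes=vocab_size)[0]
                    X1.append(feature)
                    X2.append(in_seq)
                    y.append(out_seq)
            # One batch per image, so steps_per_epoch equals the number of images
            yield [np.array(X1), np.array(X2)], np.array(y)

# Usage:
# generator = data_generator(train_descriptions, features, tokenizer, max_length, vocab_size)
# model.fit(generator, steps_per_epoch=len(train_descriptions), epochs=10)

Generate Captions for New Images in Keras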

Once the model is trained, I use it to generate captions for new images it has never seen before.

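The process starts with the ‘startseq’ token and repeatedly feeds the caption so far back into the model, appending the most probable next word each time (greedy decoding) until the model predicts ‘endseq’ or the caption reaches max_length words.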
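To see the control flow of that loop without a trained network, here is a sketch in which a hypothetical toy_next_word_probs function stands in for model.predict: it returns a probability for each candidate word given the caption so far. A real model would also condition on the photo features.

```python
def toy_next_word_probs(words_so_far):
    """Hypothetical stand-in for model.predict: next-word probabilities
    given the caption so far (here, only the last word matters)."""
    table = {
        'startseq': {'dog': 0.7, 'cat': 0.2, 'endseq': 0.1},
        'dog': {'runs': 0.6, 'endseq': 0.4},
        'runs': {'endseq': 0.9, 'dog': 0.1},
    }
    return table.get(words_so_far[-1], {'endseq': 1.0})

def greedy_decode(next_word_probs, max_length):
    words = ['startseq']
    for _ in range(max_length):
        probs = next_word_probs(words)
        word = max(probs, key=probs.get)  # argmax, like the np.argmax call in generate_desc
        words.append(word)
        if word == 'endseq':
            break
    return ' '.join(words)

print(greedy_decode(toy_next_word_probs, max_length=10))  # startseq dog runs endseq
print(greedy_decode(toy_next_word_probs, max_length=2))   # startseq dog runs
```

The second call shows the max_length cap at work: decoding stops even though ‘endseq’ was never produced. Beam search is a common alternative to greedy decoding, but it is not what generate_desc does.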

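import numpy as np
from tensorflow.keras.preprocessing.sequence import pad_sequences

def generate_desc(model, tokenizer, photo, max_length):
    # photo: feature array of shape (1, 2048), e.g. features[img_id]
    in_text = 'startseq'
    for _ in range(max_length):
        sequence = tokenizer.texts_to_sequences([in_text])[0]
        sequence = pad_sequences([sequence], maxlen=max_length)
        yhat = model.predict([photo, sequence], verbose=0)
        # Greedy decoding: take the single most probable word
        yhat = int(np.argmax(yhat))
        word = tokenizer.index_word.get(yhat)
        if word is None:
            break
        in_text += ' ' + word
        if word == 'endseq':
            break
    return in_text

# Output example: "startseq dog runs through the grass endseq"
# (no "a", because the cleaning step removed one-character words)

Evaluate the Keras Model Performance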

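I always evaluate the quality of the generated captions using the BLEU score, which measures how many words and word sequences (n-grams) in the predicted caption also appear in the human-written reference captions for the same image.

A higher BLEU score means closer overlap with those references. Treat it as a rough proxy, though: a caption can describe an image correctly in different words and still score low.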
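BLEU-1, which is what the weights=(1.0, 0, 0, 0) argument in evaluate_model selects, boils down to clipped unigram precision multiplied by a brevity penalty. The pure-Python sketch below follows that definition at corpus level and, for each prediction, uses the reference length closest to it (ties go to the shorter reference), which is how I understand NLTK's corpus_bleu to behave. corpus_bleu1 is a hypothetical helper meant as an illustration, not a replacement for NLTK, which also handles higher-order n-grams and smoothing.

```python
import math
from collections import Counter

def corpus_bleu1(references_list, hypotheses):
    """Corpus-level BLEU-1: clipped unigram precision times a brevity penalty.

    references_list[i] is a list of reference token lists for hypotheses[i].
    """
    clipped, hyp_len, ref_len = 0, 0, 0
    for references, hyp in zip(references_list, hypotheses):
        # Each word counts at most as often as it appears in any single reference
        max_ref_counts = Counter()
        for ref in references:
            for word, count in Counter(ref).items():
                max_ref_counts[word] = max(max_ref_counts[word], count)
        clipped += sum(min(c, max_ref_counts[w]) for w, c in Counter(hyp).items())
        hyp_len += len(hyp)
        # Closest reference length; ties go to the shorter reference
        ref_len += min((len(r) for r in references),
                       key=lambda n: (abs(n - len(hyp)), n))
    if clipped == 0:
        return 0.0
    precision = clipped / hyp_len
    # Penalize predictions that are shorter than their references
    brevity_penalty = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return precision * brevity_penalty

refs = [[["dog", "runs", "through", "the", "grass"], ["brown", "dog", "runs"]]]
print(corpus_bleu1(refs, [["dog", "runs", "on", "grass"]]))  # 0.75: 3 of 4 words match
```

The brevity penalty matters because precision alone would reward a model that outputs only one safe word, such as "dog".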

from nltk.translate.bleu_score import corpus_bleu

def evaluate_model(model, descriptions, photos, tokenizer, max_length):
    actual, predicted = [], []
    for key, desc_list in descriptions.items():
        # photos[key] has shape (1, 2048), as produced by extract_features
        yhat = generate_desc(model, tokenizer, photos[key], max_length)
        # Each image has several reference captions
        actual.append([d.split() for d in desc_list])
        predicted.append(yhat.split())
    # Note: startseq/endseq remain in both lists, which slightly raises the score
    print('BLEU-1: %f' % corpus_bleu(actual, predicted, weights=(1.0, 0, 0, 0)))

# Result: BLEU-1: 0.548231 (depends on the number of training epochs)

In this tutorial, I showed you how to build a complete image captioning system using Keras. We covered everything from feature extraction with Xception to building a custom Merge model with an LSTM.

Deep learning is often about experimenting with different architectures, so feel free to swap out the CNN or tune the LSTM units. I hope you find this guide helpful as you dive deeper into the world of computer vision and natural language processing.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.