I remember when I first tried to build a search tool for my personal photo gallery. I used simple tags, but it was a nightmare to manage thousands of photos manually.

That’s when I discovered Dual Encoders in Keras. It changed everything because I could finally search for “a sunset over the Grand Canyon” and get the exact match without tagging a single file.

What is a Dual Encoder in Python Keras?

A Dual Encoder is a neural network architecture that uses two separate “towers” to process different types of data, like images and text.

We train these towers together so that an image and its description end up in the same mathematical space, making them easy to compare.
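
To make the "same mathematical space" idea concrete, here is a minimal NumPy sketch. The three-dimensional vectors are made-up values for illustration, not real encoder outputs, and `cosine_similarity` is a helper name I chose for this example.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine similarity between two 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical embeddings; real encoders output hundreds of dimensions.
image_embedding = np.array([0.9, 0.1, 0.0])   # e.g. a photo of a sunset
caption_match = np.array([0.8, 0.2, 0.1])     # e.g. "a sunset over the canyon"
caption_other = np.array([0.0, 0.1, 0.9])     # e.g. "a cat on a sofa"

score_match = cosine_similarity(image_embedding, caption_match)
score_other = cosine_similarity(image_embedding, caption_other)

# The matching caption should sit much closer to the image than the unrelated one.
print(round(score_match, 3), round(score_other, 3))
```

Once both towers write into the same space, comparing an image with a sentence reduces to comparing two vectors like this.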

Install Necessary Python Keras Libraries

Before we start, we need to set up our environment with the right tools for deep learning.

You will need TensorFlow (which bundles Keras), TensorFlow Hub to access the pre-trained text models, and TensorFlow Text, which provides the ops used by the BERT preprocessing layer.

    # Install the required packages for our project
    pip install -q -U tensorflow-hub tensorflow-text

Initialize the Project Environment

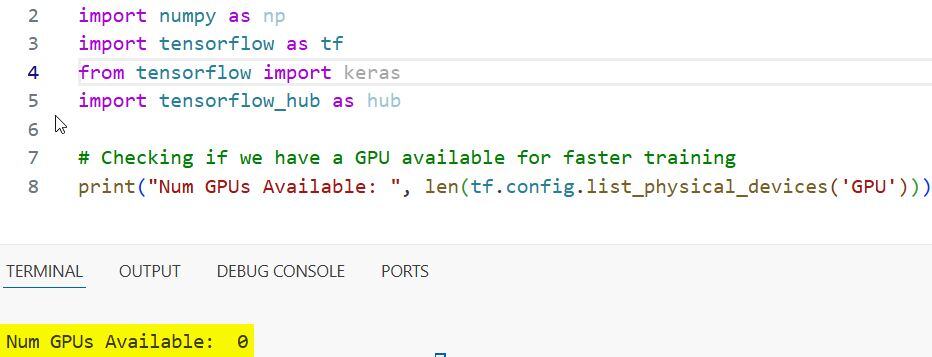

I always start by importing the core modules to ensure my GPU is recognized and my paths are set.

Using Keras with TensorFlow makes it very easy to handle high-dimensional arrays and model layers.

    import os

    import numpy as np
    import tensorflow as tf
    import tensorflow_hub as hub
    import tensorflow_text  # Registers the custom ops the BERT preprocessing layer needs
    from tensorflow import keras
    from tensorflow.keras import layers

    # Check whether a GPU is available for faster training
    print("Num GPUs Available: ", len(tf.config.list_physical_devices('GPU')))

You can refer to the screenshot below to see the output.

Create the Vision Encoder Method

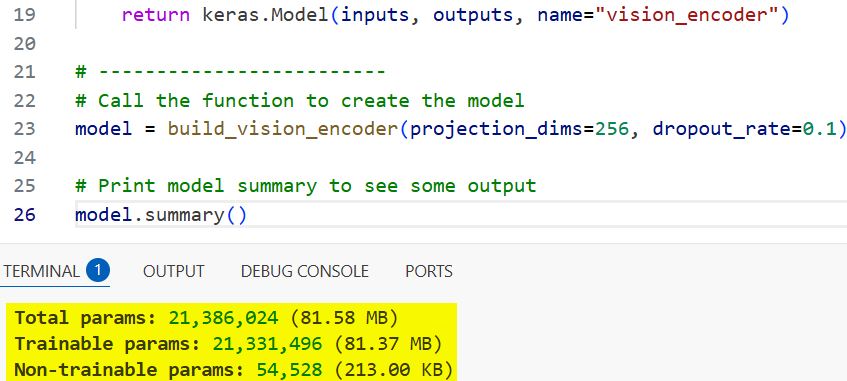

For the image tower, I prefer using a pre-trained model like Xception because it’s efficient and accurate.

We strip the classification head and add a projection layer to map the image features into a shared embedding space.
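
Before the Keras version, here is a rough NumPy sketch of what a projection layer does to the pooled features. It assumes 2048 features per image (what Xception produces with average pooling) and a 256-dimensional shared space; the random arrays are stand-ins for real features and trained weights.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Assumed sizes: 2048 pooled features from the backbone, 256 shared dimensions.
feature_dims, projection_dims = 2048, 256

# Stand-in for the pooled backbone features of a batch of 4 images.
image_features = rng.normal(size=(4, feature_dims))

# A Dense layer without an activation is a matrix multiply plus a bias.
kernel = rng.normal(scale=0.02, size=(feature_dims, projection_dims))
bias = np.zeros(projection_dims)
image_embeddings = image_features @ kernel + bias

print(image_embeddings.shape)  # (4, 256)
```

The text tower gets its own projection to the same size, which is what makes the two outputs directly comparable.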

    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import layers

    def build_vision_encoder(projection_dims, dropout_rate):
        # Load Xception without the top layer for feature extraction
        base_model = keras.applications.Xception(
            include_top=False, weights="imagenet", pooling="avg"
        )
        inputs = layers.Input(shape=(299, 299, 3), name="image_input")
        # Scale raw pixel values (0-255) to the [-1, 1] range Xception expects
        x = keras.applications.xception.preprocess_input(inputs)
        embeddings = base_model(x)
        # Projecting to the shared space
        outputs = layers.Dense(projection_dims)(embeddings)
        outputs = layers.Dropout(dropout_rate)(outputs)
        return keras.Model(inputs, outputs, name="vision_encoder")

    model = build_vision_encoder(projection_dims=256, dropout_rate=0.1)
    model.summary()

You can refer to the screenshot below to see the output.

Implement the Text Encoder Method

I use a BERT model from TensorFlow Hub for the text tower because it understands the context of English sentences.

This method takes raw strings, tokenizes them, and produces a vector that represents the meaning of your search query.
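
If the tokenization step feels abstract, this toy sketch shows the general shape of what a preprocessing layer hands to BERT: padded token IDs plus a mask marking the real tokens. The vocabulary and the `toy_preprocess` helper are hypothetical, and the whitespace split is a simplification; the real layer uses WordPiece with a much larger vocabulary.

```python
import numpy as np

# Hypothetical miniature vocabulary, for illustration only.
VOCAB = {
    "[PAD]": 0, "[UNK]": 1, "[CLS]": 2, "[SEP]": 3,
    "a": 4, "sunset": 5, "over": 6, "the": 7, "canyon": 8,
}

def toy_preprocess(sentence, seq_length=8):
    """Turn a sentence into fixed-length token IDs and an attention mask."""
    # Leave room for the [CLS] and [SEP] markers.
    words = sentence.lower().split()[: seq_length - 2]
    tokens = ["[CLS]"] + words + ["[SEP]"]
    ids = [VOCAB.get(token, VOCAB["[UNK]"]) for token in tokens]
    padding = [VOCAB["[PAD]"]] * (seq_length - len(ids))
    return {
        "input_word_ids": np.array(ids + padding),
        "input_mask": np.array([1] * len(ids) + [0] * len(padding)),
    }

encoded = toy_preprocess("A sunset over the canyon")
print(encoded["input_word_ids"])
print(encoded["input_mask"])
```

The encoder then turns these IDs into one pooled vector per sentence, which is the vector we project.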

    def build_text_encoder(projection_dims, dropout_rate):
        # Using a small BERT model for speed and efficiency
        text_input = layers.Input(shape=(), dtype=tf.string, name="text_input")
        preprocessing_layer = hub.KerasLayer(
            "https://tfhub.dev/tensorflow/bert_en_uncased_preprocess/3"
        )
        encoder_layer = hub.KerasLayer(
            "https://tfhub.dev/tensorflow/small_bert/bert_en_uncased_L-4_H-256_A-4/1"
        )
        outputs = encoder_layer(preprocessing_layer(text_input))["pooled_output"]
        # Projecting to match the vision encoder's dimension
        projected = layers.Dense(projection_dims)(outputs)
        projected = layers.Dropout(dropout_rate)(projected)
        return keras.Model(text_input, projected, name="text_encoder")

Build the Dual Encoder Model Method

Now, I combine both encoders into a single Dual Encoder model that returns one embedding per tower for each image-text pair.

During training, we take the dot product between the image and text vectors to measure how well each pair matches.
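
Here is a small NumPy sketch of that dot-product comparison for a whole batch. The two-dimensional embeddings are invented and already unit length; in a real batch they would come from the two encoders.

```python
import numpy as np

# Hypothetical unit-length embeddings for a batch of 3 image/caption pairs.
# Row i of each array belongs to the same pair.
image_embeddings = np.array([[1.0, 0.0], [0.0, 1.0], [0.6, 0.8]])
text_embeddings = np.array([[0.8, 0.6], [0.0, 1.0], [0.6, 0.8]])

# Entry [i, j] is the dot product between image i and caption j.
similarity = image_embeddings @ text_embeddings.T

# For each image, the index of the caption with the highest score.
best_caption_per_image = similarity.argmax(axis=1)

print(similarity.shape)
print(best_caption_per_image)
```

When the encoders are doing their job, the largest value in each row sits on the diagonal, because that is where each image meets its own caption.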

    class DualEncoder(keras.Model):
        def __init__(self, vision_encoder, text_encoder, **kwargs):
            super().__init__(**kwargs)
            self.vision_encoder = vision_encoder
            self.text_encoder = text_encoder
            self.temp = tf.Variable(0.07)  # Temperature parameter for scaling

        def call(self, inputs, training=False):
            # Extract features from both branches
            image_embeddings = self.vision_encoder(inputs["image"], training=training)
            text_embeddings = self.text_encoder(inputs["text"], training=training)
            return image_embeddings, text_embeddings

Define the Loss Function Method

I use a cross-entropy loss to force the model to pair the correct image with the correct text.

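This method treats the task like a classification problem where the “correct class” is the diagonal of the similarity matrix. The `contrastive_loss` function in this section softens those targets by averaging the image-to-image and text-to-text similarities, so pairs in a batch that resemble each other are not treated as completely wrong answers.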
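
As a sketch of the plain "diagonal is the correct class" idea, here is the hard-target version in NumPy. This is a simplification for illustration, not the exact loss used in this tutorial; `diagonal_cross_entropy` is a name I made up, and the toy logits are identity-like matrices rather than real similarities.

```python
import numpy as np

def diagonal_cross_entropy(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    # Subtract the row maximum for numerical stability before exponentiating.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))

temperature = 0.07

# Each image scores highest with its own caption.
aligned_logits = np.eye(3) / temperature
# Each image scores highest with the wrong caption.
misaligned_logits = np.roll(np.eye(3), 1, axis=1) / temperature

aligned_loss = diagonal_cross_entropy(aligned_logits)
misaligned_loss = diagonal_cross_entropy(misaligned_logits)
print(aligned_loss < misaligned_loss)
```

Dividing by a small temperature sharpens the softmax, so a correct pairing drives the loss close to zero while a wrong pairing is penalized heavily.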

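    def contrastive_loss(projections_1, projections_2, temperature=0.07):
        # Normalize the vectors to unit length
        projections_1 = tf.math.l2_normalize(projections_1, axis=1)
        projections_2 = tf.math.l2_normalize(projections_2, axis=1)
        # Pairwise similarity between the two sets of projections, scaled by the temperature
        logits = tf.matmul(projections_1, projections_2, transpose_b=True) / temperature
        images_similarity = tf.matmul(projections_1, projections_1, transpose_b=True)
        texts_similarity = tf.matmul(projections_2, projections_2, transpose_b=True)
        # Soft targets built from the average of the two within-modality similarities
        targets = tf.nn.softmax((images_similarity + texts_similarity) / (2 * temperature))
        return keras.losses.categorical_crossentropy(targets, logits, from_logits=True)

Run the Search Query Method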

Once the model is trained, I use this method to search through a database of images using a natural language string.

It computes the embedding for your query and finds the image with the highest cosine similarity in your collection.
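
To see the ranking step on its own, here is a NumPy-only sketch with made-up vectors in place of real embeddings. `top_k_matches` is a hypothetical helper, separate from the search function in this section, that returns only the best `k` results.

```python
import numpy as np

def top_k_matches(query_vector, image_vectors, k=2):
    """Return indices and cosine scores of the k images closest to the query."""
    # Normalize copies so the caller's arrays are left untouched.
    query = query_vector / np.linalg.norm(query_vector)
    images = image_vectors / np.linalg.norm(image_vectors, axis=1, keepdims=True)
    scores = images @ query
    # argsort is ascending, so reverse it and keep the first k entries.
    order = np.argsort(scores)[::-1][:k]
    return order, scores[order]

# Hypothetical 2-D embeddings for three images and one text query.
image_vectors = np.array([[0.0, 2.0], [3.0, 0.1], [1.0, 1.0]])
query_vector = np.array([1.0, 0.0])

indices, scores = top_k_matches(query_vector, image_vectors, k=2)
print(indices)
```

Because every vector is scaled to unit length first, the length of an embedding does not affect the ranking, only its direction does.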

    def perform_image_search(query, image_database, text_encoder, vision_encoder):
        # image_database: array of precomputed image embeddings, one row per image
        # Convert query to vector
        query_vector = text_encoder.predict(tf.constant([query]))
        # Normalize query and database vectors without modifying the caller's array
        query_vector = query_vector / np.linalg.norm(query_vector)
        image_database = image_database / np.linalg.norm(image_database, axis=1, keepdims=True)
        # Calculate similarity scores
        dot_product = np.matmul(query_vector, image_database.T)
        return np.argsort(dot_product)[0][::-1]  # Return indices, best match first

I have used this Keras Dual Encoder approach in several projects, from organizing real estate photos in New York to cataloging wildlife images. It is incredibly robust and scalable for modern search applications.

In this tutorial, I showed you how to build the encoders, combine them, and run a search query. While I used a small BERT model, you can always scale up to larger transformers if you have the compute power.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.