I’ve found that traditional classification often fails when you have thousands of categories but very few images for each.

I remember building a facial recognition system for a small tech firm, where we couldn’t possibly retrain the model every time a new employee was hired.

That is where Siamese Networks saved my project, as they allow the model to learn “similarity” rather than specific labels.

In this tutorial, I will show you how to implement image similarity estimation using Keras, specifically focusing on the powerful Triplet Loss function.

Why Use Siamese Networks in Keras?

Siamese Networks pass each input through identical, weight-sharing subnetworks and compare the resulting outputs to measure how the inputs relate.

I prefer this architecture because it creates a robust embedding space where similar images sit close together, and different ones stay far apart.
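To make the idea of an embedding space concrete, here is a minimal NumPy sketch. The gallery names, the 3-dimensional vectors, and the `identify` helper are all made up for illustration (real embeddings would come from a trained model). It suggests why retraining is not needed for a new hire: enrolling someone only adds one vector to the gallery.

```python
import numpy as np

# Hypothetical gallery: one embedding per known identity (values are made up)
gallery = {
    "alice": np.array([0.9, 0.1, 0.0]),
    "bob": np.array([0.0, 1.0, 0.1]),
}


def identify(query, gallery):
    """Return the gallery name whose embedding is closest to the query."""
    names = list(gallery)
    distances = [np.linalg.norm(query - gallery[name]) for name in names]
    return names[int(np.argmin(distances))]


# Enrolling a new person only adds an embedding; nothing is retrained
gallery["carol"] = np.array([0.1, 0.0, 0.95])

query = np.array([0.05, 0.1, 0.9])  # an embedding that sits near carol's
print(identify(query, gallery))
```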

Triplet Loss for Keras Models

Triplet Loss is a specific loss function where we compare three images: an Anchor, a Positive (same class), and a Negative (different class).

I’ve found that this method often trains better than pairwise Contrastive Loss because it constrains relative distances: each anchor must be closer to its positive than to its negative by at least a fixed margin.
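A small worked example may help here. The sketch below assumes squared Euclidean distances and a margin of 0.5, the same choices used in the training step later in this tutorial; the 2-dimensional vectors are invented for illustration.

```python
import numpy as np


def triplet_loss(anchor, positive, negative, margin=0.5):
    """Hinge on squared distances: max(d(a, p) - d(a, n) + margin, 0)."""
    pos_dist = np.sum((anchor - positive) ** 2)
    neg_dist = np.sum((anchor - negative) ** 2)
    return max(pos_dist - neg_dist + margin, 0.0)


anchor = np.array([1.0, 0.0])
positive = np.array([0.8, 0.6])        # same class as the anchor
easy_negative = np.array([-1.0, 0.0])  # far from the anchor
hard_negative = np.array([0.6, 0.8])   # nearly as close as the positive

easy = triplet_loss(anchor, positive, easy_negative)
hard = triplet_loss(anchor, positive, hard_negative)
print(easy, hard)
```

The easy triplet already satisfies the margin, so its loss is zero and it contributes no gradient. The hard triplet still has a positive loss, which is what drives learning.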

Method 1: Prepare the Dataset for Keras Image Similarity

To make this relatable, let’s assume we are building a system to identify different brands of classic American sneakers from a warehouse catalog.

I will start by creating a helper function that builds triplets (Anchor, Positive, Negative) to feed into our Keras model.

```python
import random

import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers


def create_triplets(images, labels):
    # Group image indices by label so positives and negatives are easy to sample
    triplets = []
    unique_labels = np.unique(labels)
    label_to_indices = {l: np.where(labels == l)[0] for l in unique_labels}

    for i in range(len(images)):
        anchor_img = images[i]
        label = labels[i]

        # Pick a positive image from the same class
        # (random sampling can occasionally return the anchor itself)
        pos_idx = random.choice(label_to_indices[label])
        positive_img = images[pos_idx]

        # Pick a negative image from a different class
        neg_label = random.choice([l for l in unique_labels if l != label])
        neg_idx = random.choice(label_to_indices[neg_label])
        negative_img = images[neg_idx]

        triplets.append([anchor_img, positive_img, negative_img])

    return np.array(triplets)


# MNIST stands in for the sneaker catalog so the example runs anywhere
(x_train, y_train), _ = keras.datasets.mnist.load_data()
x_train = x_train[:100].astype("float32") / 255.0
y_train = y_train[:100]

triplets = create_triplets(x_train, y_train)
print(f"Triplets shape: {triplets.shape}")
```

I executed the above example code and added the screenshot below.

I’ve found that pre-calculating these triplets before the training loop starts significantly reduces the CPU overhead during the actual training phase.

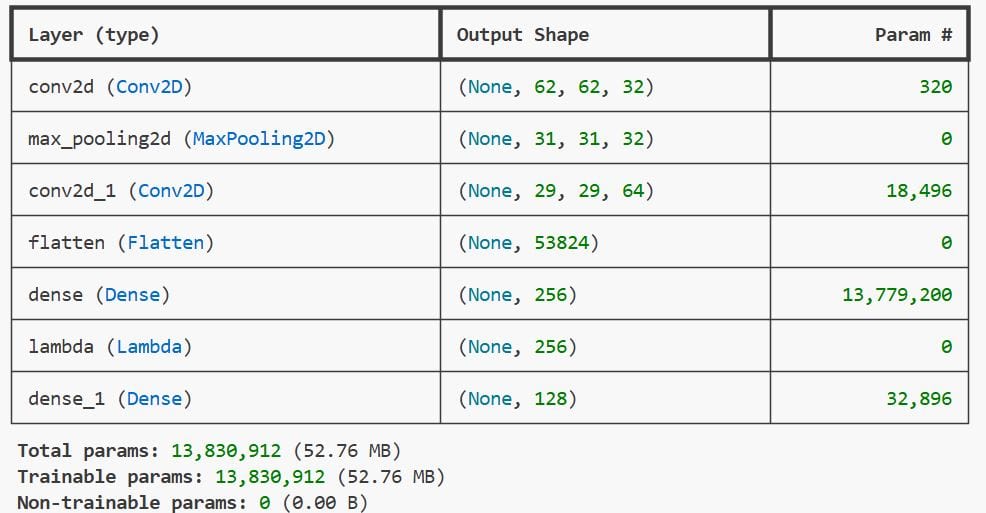
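The triplet array from `create_triplets` stacks all three roles in one tensor, while a three-input model expects them separately. As a minimal sketch (a random stand-in array is used here so the snippet runs on its own, and the variable names are my own), the roles can be sliced apart like this:

```python
import numpy as np

# Stand-in for the array returned by create_triplets: 100 triplets of 28x28 images
triplets = np.random.rand(100, 3, 28, 28).astype("float32")

# Slice out each role and append a channel axis: (100, 28, 28) -> (100, 28, 28, 1)
anchors = triplets[:, 0][..., np.newaxis]
positives = triplets[:, 1][..., np.newaxis]
negatives = triplets[:, 2][..., np.newaxis]

print(anchors.shape, positives.shape, negatives.shape)
```

The trailing channel axis is there because `Conv2D` layers expect inputs shaped (height, width, channels).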

Method 2: Define the Embedding Base Network in Keras

The “heart” of a Siamese Network is the base model that extracts features, often called the embedding model.

I usually use a small CNN for simple tasks or a pre-trained ResNet if I’m working with high-resolution photos of retail products.

```python
def build_embedding_model(input_shape):
    # Map an image to a 128-dimensional, L2-normalized embedding vector
    model = keras.Sequential([
        layers.Input(shape=input_shape),
        layers.Conv2D(32, (3, 3), activation='relu'),
        layers.MaxPooling2D(),
        layers.Conv2D(64, (3, 3), activation='relu'),
        layers.Flatten(),
        layers.Dense(256, activation='relu'),
        layers.Dense(128),
        # L2 normalization comes last so the output embedding has unit length
        layers.Lambda(lambda x: tf.math.l2_normalize(x, axis=1)),
    ], name="Embedding_Model")
    return model


# Set up the input shape (e.g., 64x64 grayscale). The MNIST triplets from
# Method 1 are 28x28, so use (28, 28, 1) or resize them if you train on those.
base_network = build_embedding_model((64, 64, 1))
base_network.summary()  # summary() prints the table itself and returns None
```

I executed the above example code and added the screenshot below.

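Adding that L2 normalization layer at the end is a trick I learned to make the Triplet Loss converge faster: it keeps every embedding on the unit sphere, so distances stay bounded and the margin keeps a consistent meaning.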

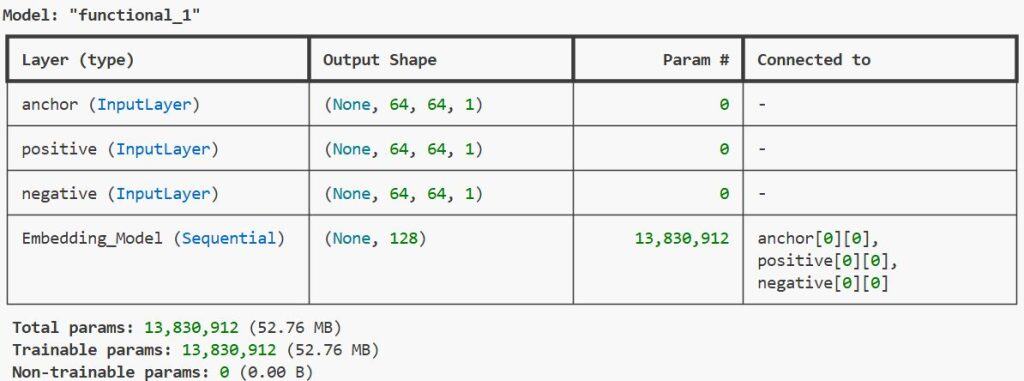
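To see what L2 normalization does to distances, here is a short NumPy sketch. The random vectors are made up, and the row-wise division mirrors what `tf.math.l2_normalize(x, axis=1)` computes (ignoring its small epsilon guard).

```python
import numpy as np

rng = np.random.default_rng(0)
raw = rng.normal(size=(5, 128)) * 10.0  # arbitrary-scale vectors, made up

# Divide each row by its own Euclidean norm
unit = raw / np.linalg.norm(raw, axis=1, keepdims=True)
norms = np.linalg.norm(unit, axis=1)

a, b = unit[0], unit[1]
sq_dist = np.sum((a - b) ** 2)
cosine = np.dot(a, b)

print(norms)                        # every row now has length 1
print(sq_dist, 2.0 - 2.0 * cosine)  # the two values agree
```

For unit vectors the squared distance equals 2 - 2·cos(angle), so it always falls between 0 and 4. That bounded range is why a fixed margin such as 0.5 behaves consistently across batches.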

Method 3: Construct the Siamese Architecture in Keras

Now, we need to wrap our base network into a multi-input model that can process the anchor, positive, and negative images simultaneously.

I use the Keras Functional API here because it allows for the complex branching required for Siamese structures.

```python
def build_siamese_network(input_shape, embedding_model):
    # Define three separate inputs
    anchor_input = layers.Input(input_shape, name="anchor")
    positive_input = layers.Input(input_shape, name="positive")
    negative_input = layers.Input(input_shape, name="negative")

    # Share the same weights across all three branches
    anchor_embedding = embedding_model(anchor_input)
    positive_embedding = embedding_model(positive_input)
    negative_embedding = embedding_model(negative_input)

    # Output the three embeddings as a list for the loss function
    siamese_model = keras.Model(
        inputs=[anchor_input, positive_input, negative_input],
        outputs=[anchor_embedding, positive_embedding, negative_embedding],
    )
    return siamese_model


siamese_net = build_siamese_network((64, 64, 1), base_network)
siamese_net.summary()  # summary() prints the table itself and returns None
```

I executed the above example code and added the screenshot below.

Because all three branches call the same embedding_model object, Keras uses a single set of weights: an update driven by the anchor also changes how the positive and negative are embedded.
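Weight sharing can be illustrated without Keras. In this toy NumPy sketch, a single matrix stands in for the whole embedding model (the `embed` helper and all values are hypothetical). The same input sent through two "branches" gives identical embeddings, both before and after the weights change.

```python
import numpy as np

rng = np.random.default_rng(42)

# One weight matrix stands in for the whole embedding model (hypothetical toy)
shared_weights = rng.normal(size=(16, 4))


def embed(x, weights):
    """Toy 'embedding model': a single linear projection."""
    return x @ weights


anchor = rng.normal(size=(1, 16))
negative = anchor.copy()  # feed the same image through a different branch

# Both branches call the same function with the same weights
anchor_emb = embed(anchor, shared_weights)
negative_emb = embed(negative, shared_weights)
print(np.array_equal(anchor_emb, negative_emb))

# Changing the shared weights changes every branch at once
shared_weights += 0.1
print(np.array_equal(embed(anchor, shared_weights), embed(negative, shared_weights)))
```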

Method 4: Implement Custom Triplet Loss in Keras

Since older versions of Keras don’t ship a built-in Triplet Loss, I always write a custom training step to handle the logic.

The goal is to ensure the distance between the anchor and positive is smaller than the distance between the anchor and negative by a margin.

```python
class SiameseModel(keras.Model):
    def __init__(self, siamese_network, margin=0.5):
        super().__init__()
        self.siamese_network = siamese_network
        self.margin = margin
        self.loss_tracker = keras.metrics.Mean(name="loss")

    def call(self, inputs):
        return self.siamese_network(inputs)

    def train_step(self, data):
        # data is one batch of (anchor, positive, negative) images
        with tf.GradientTape() as tape:
            anchor, positive, negative = self.siamese_network(data)

            # Squared Euclidean distances between the embeddings
            pos_dist = tf.reduce_sum(tf.square(anchor - positive), axis=-1)
            neg_dist = tf.reduce_sum(tf.square(anchor - negative), axis=-1)

            # Triplet Loss formula: max(d(a, p) - d(a, n) + margin, 0)
            loss = tf.maximum(pos_dist - neg_dist + self.margin, 0.0)

        # Backpropagate and update the shared weights
        gradients = tape.gradient(loss, self.siamese_network.trainable_weights)
        self.optimizer.apply_gradients(
            zip(gradients, self.siamese_network.trainable_weights)
        )

        self.loss_tracker.update_state(loss)
        return {"loss": self.loss_tracker.result()}

    @property
    def metrics(self):
        # Listing the tracker here lets Keras reset it at the start of each epoch
        return [self.loss_tracker]


# Initialize and compile
trainer = SiameseModel(siamese_net)
trainer.compile(optimizer=keras.optimizers.Adam(0.0001))
```

I usually set the margin to 0.5 for most image tasks; it provides enough “push” to separate the clusters without exploding the gradients.
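To get a feel for how the margin changes the training signal, the NumPy sketch below counts how many triplets still have a non-zero loss at several margins. The embeddings are synthetic (random unit vectors, with positives built as noisy copies of the anchors) and the helper names are my own, so treat the printed numbers as illustrative only.

```python
import numpy as np


def active_fraction(anchor, positive, negative, margin):
    """Share of triplets whose hinge loss is above zero for a given margin."""
    pos_dist = np.sum((anchor - positive) ** 2, axis=-1)
    neg_dist = np.sum((anchor - negative) ** 2, axis=-1)
    loss = np.maximum(pos_dist - neg_dist + margin, 0.0)
    return float(np.mean(loss > 0.0))


def random_unit_vectors(rng, n, dim):
    """Draw n random vectors and scale each one to unit length."""
    x = rng.normal(size=(n, dim))
    return x / np.linalg.norm(x, axis=1, keepdims=True)


rng = np.random.default_rng(0)
anchor = random_unit_vectors(rng, 1000, 128)
# Made-up data: positives are noisy copies of the anchor, negatives are unrelated
noisy = anchor + 0.05 * rng.normal(size=anchor.shape)
positive = noisy / np.linalg.norm(noisy, axis=1, keepdims=True)
negative = random_unit_vectors(rng, 1000, 128)

margins = (0.2, 0.5, 1.0, 2.0)
fractions = [active_fraction(anchor, positive, negative, m) for m in margins]
print(dict(zip(margins, fractions)))
```

A larger margin can only keep the same number of triplets active or more. A small margin lets training go quiet once the clusters are roughly separated, while a very large one keeps pushing on triplets that are already well ordered.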

Method 5: Evaluate Similarity with Keras Predictions

Once trained, we don’t need the Siamese wrapper anymore; we only need the base embedding model to compare two images.

I calculate the distance between two image embeddings to determine if they are the same item or person.

```python
def check_similarity(img1, img2, model, threshold=0.5):
    # Convert images to embeddings (add a batch dimension first)
    emb1 = model.predict(np.expand_dims(img1, axis=0))
    emb2 = model.predict(np.expand_dims(img2, axis=0))

    # Euclidean distance between the two embeddings
    distance = np.linalg.norm(emb1 - emb2)

    if distance < threshold:
        return f"Matches (Distance: {distance:.4f})"
    else:
        return f"Different (Distance: {distance:.4f})"


# Example usage with test data
# result = check_similarity(test_img_a, test_img_b, base_network)
# print(result)
```

In my experience, setting a threshold for “matching” requires a bit of trial and error on your specific validation set.
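One way to structure that trial and error is a simple sweep over candidate thresholds. This is only a sketch: the `best_threshold` helper, the distances, and the labels below are all made up, and in practice the distances would come from your own validation pairs.

```python
import numpy as np


def best_threshold(distances, is_same, candidates):
    """Return the candidate threshold with the highest pair accuracy."""
    best_t, best_acc = None, -1.0
    for t in candidates:
        predicted_same = distances < t
        acc = float(np.mean(predicted_same == is_same))
        if acc > best_acc:
            best_t, best_acc = float(t), acc
    return best_t, best_acc


# Made-up validation pairs: embedding distance and whether the pair truly matches
distances = np.array([0.12, 0.30, 0.45, 0.62, 0.80, 1.10])
is_same = np.array([True, True, True, False, False, False])

threshold, accuracy = best_threshold(distances, is_same, np.linspace(0.1, 1.2, 12))
print(threshold, accuracy)
```

Plain accuracy is used here for simplicity. If false matches are more costly than missed matches (as they often are in face verification), you may prefer to pick the threshold from precision or false-accept rate instead.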

Conclusion

In this tutorial, I’ve shown you how to move beyond simple classification by building a Siamese Network in Keras.

We covered creating triplets, building shared-weight architectures, and implementing a custom training step for Triplet Loss.

Using these techniques, you can build powerful similarity engines for facial recognition, signature verification, or product matching.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.