Have you ever found yourself staring at thousands of unsorted product photos or medical images, wondering how to organize them?

I remember the first time I faced this challenge during a large-scale project; I tried manual sorting until I realized Keras could do the heavy lifting for me.

Semantic image clustering isn’t just about grouping colors; it’s about teaching a machine to understand the “meaning” behind the pixels.

In this tutorial, I will show you exactly how I use Keras to group images based on their semantic content rather than just raw pixel values.

Method 1: Extract Deep Features with Pre-trained Keras Models

Using a pre-trained model like VGG16 is my favorite “shortcut” because it reuses weights already trained on more than a million diverse ImageNet images.

import numpy as np

from tensorflow.keras.applications.vgg16 import VGG16, preprocess_input

from tensorflow.keras.preprocessing import image

from sklearn.cluster import KMeans

# I use VGG16 without the top layer to get raw features

model = VGG16(weights='imagenet', include_top=False, pooling='avg')

def extract_features(img_path):

img = image.load_img(img_path, target_size=(224, 224))

x = image.img_to_array(img)

x = np.expand_dims(x, axis=0)

x = preprocess_input(x)

return model.predict(x)

# Example: Clustering a small batch of images

features = []

img_paths = ["image1.jpg", "image2.jpg", "image3.jpg"] # Replace with your local paths

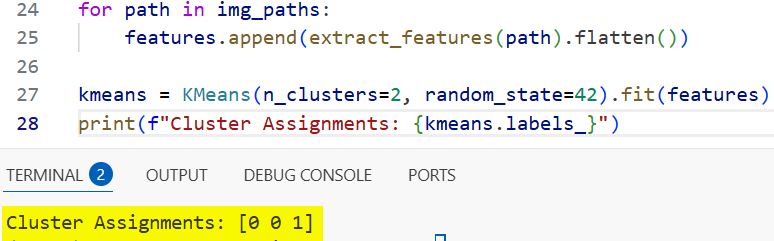

for path in img_paths:

features.append(extract_features(path).flatten())

# Applying KMeans to group the extracted Keras features

kmeans = KMeans(n_clusters=2, random_state=42).fit(features)

print(f"Cluster Assignments: {kmeans.labels_}")You can see the output in the screenshot below.

I find that the pooled output of VGG16’s last convolutional block, a 512-dimensional vector per image, provides a rich mathematical representation of the image’s context.
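To make the shape of that representation concrete: with `include_top=False` and a 224×224 input, VGG16's last block produces a 7×7×512 feature map, and `pooling='avg'` averages over the 7×7 grid to leave 512 numbers per image. The NumPy sketch below mimics only that pooling step on a random stand-in array. It does not run VGG16, and `fake_feature_map` is a placeholder name of my own.

```python
import numpy as np

rng = np.random.default_rng(0)
# Random stand-in for the output of VGG16's last block: (batch, height, width, channels)
fake_feature_map = rng.random((1, 7, 7, 512))

# Global average pooling: mean over the two spatial axes, one value per channel
pooled = fake_feature_map.mean(axis=(1, 2))
print(pooled.shape)  # (1, 512)

# .flatten() then yields the 1-D vector that gets appended to `features`
vector = pooled.flatten()
print(vector.shape)  # (512,)
```

Each image therefore contributes one 512-dimensional row to the matrix that KMeans receives.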

Method 2: Build a Convolutional Autoencoder in Keras

If I am working with a very specific dataset, like specialized dental X-rays, I prefer building a custom Convolutional Autoencoder.

from tensorflow.keras import layers, models

# Defining the encoder to compress the image

input_img = layers.Input(shape=(128, 128, 3))

x = layers.Conv2D(32, (3, 3), activation='relu', padding='same')(input_img)

x = layers.MaxPooling2D((2, 2), padding='same')(x)

encoded = layers.Conv2D(16, (3, 3), activation='relu', padding='same')(x)

encoded = layers.MaxPooling2D((2, 2), padding='same')(encoded)

# Defining the decoder to reconstruct the image

x = layers.Conv2D(16, (3, 3), activation='relu', padding='same')(encoded)

x = layers.UpSampling2D((2, 2))(x)

decoded = layers.Conv2D(3, (3, 3), activation='sigmoid', padding='same')(x)

autoencoder = models.Model(input_img, decoded)

autoencoder.compile(optimizer='adam', loss='binary_crossentropy')

# Training the Keras autoencoder on your dataset

# autoencoder.fit(x_train, x_train, epochs=50, batch_size=128)

# I use the 'encoded' model to get the clusterable features

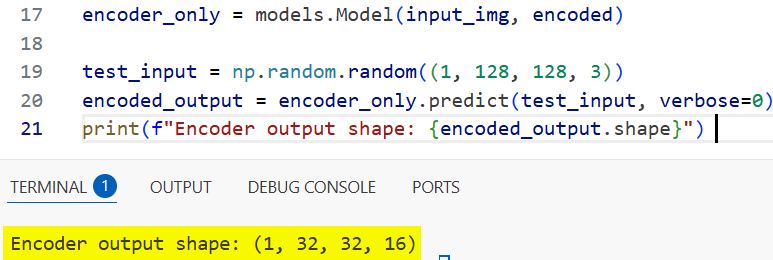

encoder_only = models.Model(input_img, encoded)

print(f"Encoder output shape: {encoded_output.shape}") You can see the output in the screenshot below.

This unsupervised method forces the network to compress the image into a “latent space” which captures the most essential semantic features.
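One practical detail: the encoder above emits a 32×32×16 feature map per image, while KMeans expects a 2-D array of shape `(n_samples, n_features)`. The sketch below shows two ways I might reshape that output before clustering. It uses a random stand-in array rather than real encoder predictions, and `encoded_batch` is a placeholder name.

```python
import numpy as np

rng = np.random.default_rng(0)
# Random stand-in for encoder_only.predict(images) on 8 images: (n, 32, 32, 16)
encoded_batch = rng.random((8, 32, 32, 16))

# Option 1: flatten every feature map into one long vector per image
flat_features = encoded_batch.reshape(len(encoded_batch), -1)
print(flat_features.shape)  # (8, 16384)

# Option 2: average over the spatial grid for a compact 16-D descriptor
pooled_features = encoded_batch.mean(axis=(1, 2))
print(pooled_features.shape)  # (8, 16)
```

Flattening keeps spatial layout but gives very long vectors. Pooling is much smaller but discards where in the image each feature occurred, so which one works better likely depends on the dataset.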

Method 3: Deep Clustering with Keras Custom Loss Functions

Sometimes I need the clustering and the feature learning to happen simultaneously to get the most accurate groupings.

import tensorflow as tf

from tensorflow.keras import backend as K

class ClusteringLayer(layers.Layer):

def __init__(self, n_clusters, weights=None, alpha=1.0, **kwargs):

super(ClusteringLayer, self).__init__(**kwargs)

self.n_clusters = n_clusters

self.alpha = alpha

self.initial_weights = weights

def build(self, input_shape):

self.clusters = self.add_weight(shape=(self.n_clusters, input_shape[1]),

initializer='glorot_uniform', name='clusters')

self.built = True

def call(self, inputs):

# I calculate the Student-t distribution between features and cluster centers

q = 1.0 / (1.0 + (K.sum(K.square(K.expand_dims(inputs, axis=1) - self.clusters), axis=2) / self.alpha))

q **= (self.alpha + 1.0) / 2.0

q = K.transpose(K.transpose(q) / K.sum(q, axis=1))

return q

# Integrating the clustering layer into a Keras Functional API model

latent_inputs = layers.Input(shape=(10,))

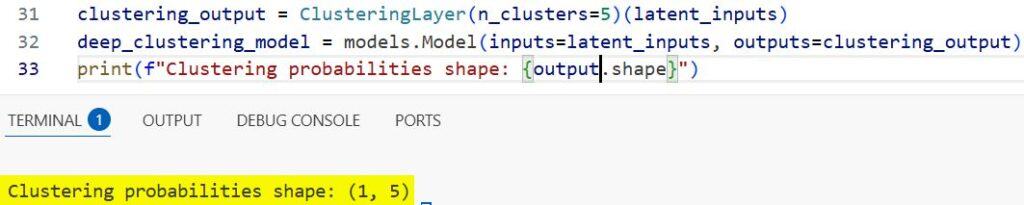

clustering_output = ClusteringLayer(n_clusters=5)(latent_inputs)

deep_clustering_model = models.Model(inputs=latent_inputs, outputs=clustering_output)

print(f"Clustering probabilities shape: {outputs.shape}") You can see the output in the screenshot below.

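I implement a custom layer in Keras that outputs soft cluster assignments, one probability per cluster for each image. The “clustering loss” is then computed on those assignments, typically as the KL divergence between them and a sharpened target distribution, which pushes the neural network to create distinct, tight clusters.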
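The training signal itself is not shown in this tutorial. One common recipe, from Deep Embedded Clustering (DEC), squares the layer's soft assignments, normalizes them by cluster frequency to get a sharper target distribution, and minimizes the KL divergence between the two. The NumPy sketch below illustrates just that arithmetic on toy numbers. The helper names `target_distribution` and `kl_divergence` are my own, and this is an assumption about how the layer would be trained, not the article's exact procedure.

```python
import numpy as np

def target_distribution(q):
    """Sharpen soft assignments: square them, then normalize by cluster frequency."""
    weight = q ** 2 / q.sum(axis=0)  # q_ij^2 / f_j, with f_j = sum_i q_ij
    return weight / weight.sum(axis=1, keepdims=True)

def kl_divergence(p, q, eps=1e-12):
    """KL(P || Q) summed over clusters, averaged over samples."""
    return float(np.mean(np.sum(p * np.log((p + eps) / (q + eps)), axis=1)))

# Toy soft assignments for 3 samples and 2 clusters (each row sums to 1)
q = np.array([[0.6, 0.4],
              [0.7, 0.3],
              [0.2, 0.8]])
p = target_distribution(q)

print(p.round(3))           # Each row is more confident than the matching row of q
print(kl_divergence(p, q))  # Positive while q is less confident than p
```

In a Keras training loop, this would roughly correspond to compiling `deep_clustering_model` with `tf.keras.losses.KLDivergence()` and fitting it on `(x, p)`, recomputing `p` from fresh predictions every so often.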

Method 4: Fine-Tuning Keras Models for Semantic Similarity

When I have a small amount of labeled data, I fine-tune a Keras model using a Triplet Loss or Contrastive Loss approach.

from tensorflow.keras.applications.vgg16 import VGG16
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense, GlobalAveragePooling2D

def build_fine_tuned_keras_model():
    base_model = VGG16(weights='imagenet', include_top=False)

    # I freeze the early layers to keep the general visual features intact
    for layer in base_model.layers[:15]:
        layer.trainable = False

    model = Sequential([
        base_model,
        GlobalAveragePooling2D(),
        Dense(256, activation='relu'),
        Dense(128)  # The semantic embedding layer
    ])
    return model

semantic_model = build_fine_tuned_keras_model()
# 'mse' is only a placeholder so the model compiles;
# real embedding learning needs a triplet or contrastive loss here
semantic_model.compile(optimizer='adam', loss='mse')

This method teaches the model that “Golden Retrievers” should be closer to “Labradors” than to “Office Chairs” in the vector space.
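Since the `mse` in the compile call is only an example loss, here is a minimal NumPy sketch of what a triplet loss computes: it is zero once the anchor is closer to the positive than to the negative by at least a margin. The function name, the margin of 0.2, and the 2-D toy embeddings are all illustrative assumptions. A real setup would implement the same formula with TensorFlow ops so it can be backpropagated.

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=0.2):
    """max(0, d(a, p) - d(a, n) + margin) with squared Euclidean distances."""
    pos_dist = np.sum((anchor - positive) ** 2, axis=-1)
    neg_dist = np.sum((anchor - negative) ** 2, axis=-1)
    return np.maximum(pos_dist - neg_dist + margin, 0.0)

# Toy 2-D embeddings (made-up values, purely illustrative)
golden_retriever = np.array([1.0, 0.0])
labrador = np.array([0.9, 0.1])
office_chair = np.array([-1.0, 0.5])

# Well-separated triplet: the negative is already far away, so the loss is zero
good = triplet_loss(golden_retriever, labrador, office_chair)
print(good)

# Violating triplet: the "positive" is farther than the "negative", so the loss is positive
bad = triplet_loss(golden_retriever, office_chair, labrador)
print(bad)
```

Only violating triplets produce a gradient, which is why triplet training usually spends effort on finding hard examples.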

Method 5: Visualize Keras Semantic Clusters with t-SNE

I find that clustering results are hard to trust without seeing them, so I always visualize the Keras output using dimensionality reduction.

import numpy as np
from sklearn.manifold import TSNE
import matplotlib.pyplot as plt

# Suppose 'features' is the output from our Keras model
features_array = np.array(features).reshape(len(features), -1)

# I use t-SNE to squash the high-dimensional Keras features into 2D space
# perplexity must be smaller than the number of samples, so I cap it for small batches
perplexity = min(30, len(features_array) - 1)
tsne = TSNE(n_components=2, perplexity=perplexity, random_state=42)
vis_dims = tsne.fit_transform(features_array)

# Plotting the results to see the semantic separation
plt.scatter(vis_dims[:, 0], vis_dims[:, 1], c=kmeans.labels_)
plt.colorbar()
plt.title("Visualizing Keras Semantic Image Clusters")
plt.show()

This step helps me verify if the model is actually grouping “Cars” and “Trucks” together or just grouping images by their background color.
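Alongside the plot, it can help to list which files landed in each cluster so you can open a few and check them by eye. The sketch below uses made-up labels as a stand-in for `kmeans.labels_`. The `clusters` dictionary is my own helper structure, not part of scikit-learn.

```python
from collections import defaultdict

# Stand-ins for kmeans.labels_ and img_paths from Method 1 (made-up values)
labels = [0, 1, 0]
img_paths = ["image1.jpg", "image2.jpg", "image3.jpg"]

# Group file paths by their assigned cluster label
clusters = defaultdict(list)
for path, label in zip(img_paths, labels):
    clusters[int(label)].append(path)

for label in sorted(clusters):
    print(label, clusters[label])
```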

Some Final Tips for Semantic Clustering

When you are working with Keras for image clustering, remember that data preprocessing is often more important than the model architecture itself.

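I always ensure my images are preprocessed the way the pre-trained weights expect. Some backbones want pixels scaled to the range [0, 1] or [-1, 1], while VGG16’s preprocess_input instead converts RGB to BGR and subtracts the ImageNet channel means, so I use the preprocess_input function that ships with each model.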
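As a quick illustration of the two ranges mentioned above, here is how an 8-bit image array can be rescaled by hand in NumPy. The random `img` array is a stand-in for a loaded photo. For a pre-trained backbone I would still prefer its own `preprocess_input` function over manual scaling.

```python
import numpy as np

# Stand-in 8-bit RGB image with values 0..255 (random, illustration only)
rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(128, 128, 3), dtype=np.uint8)

# [0, 1] scaling, e.g. for an autoencoder with a sigmoid output
img_01 = img.astype("float32") / 255.0

# [-1, 1] scaling, as expected by some pre-trained backbones
img_pm1 = img.astype("float32") / 127.5 - 1.0

print(img_01.min(), img_01.max())
print(img_pm1.min(), img_pm1.max())
```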

If you are training your own network (as in Methods 2 to 4) and find that your clusters are too messy, try adding dropout, or increasing its rate, in your Keras layers to prevent the model from memorizing noise.

I also recommend starting with a smaller subset of your data to iterate quickly before running a full training session on thousands of images.

The beauty of Keras is how easily you can swap different backbones like ResNet or Inception to see which one understands your specific images best.

I hope you find this tutorial helpful when you start your next image organization project.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.