I’ve often felt like I was working with a “black box.” It’s one thing to get 98% accuracy on a dataset, but it’s another thing entirely to know why the model made that choice.

I remember working on a project for a local botanical garden where the model was identifying invasive plant species. I needed to be sure it was looking at the leaf shape and not just the background soil.

That is when I started using Grad-CAM (Gradient-weighted Class Activation Mapping). It produces “heatmaps” over your images, showing which regions of the image influenced the final prediction the most.

In this tutorial, I’ll show you how to implement Grad-CAM in Keras so you can start “seeing” through the eyes of your neural networks.

The Logic Behind Keras Grad-CAM Visualization

Grad-CAM works by using the gradients of any target concept flowing into the final convolutional layer. It produces a coarse localization map highlighting the important regions in the image.

I find this incredibly useful for debugging. If your model is supposed to identify a Ford Mustang but the heatmap highlights the sky, you know your training data might be biased.
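To make the idea concrete before touching a real model, here is a minimal NumPy sketch of the arithmetic on toy arrays. The function name `gradcam_from_arrays` and the 2x2 values are my own illustrative assumptions, not part of Keras: each channel's gradient is averaged over space to get a weight, the feature maps are summed with those weights, and negative values are clipped.

```python
import numpy as np

def gradcam_from_arrays(feature_maps, grads):
    """Toy Grad-CAM on plain arrays; both inputs have shape (H, W, C)."""
    # Channel importance: average each channel's gradient over the spatial grid
    weights = grads.mean(axis=(0, 1))        # shape (C,)
    # Weighted sum of the feature maps across channels
    cam = feature_maps @ weights             # shape (H, W)
    # Keep only regions that push the class score up
    cam = np.maximum(cam, 0.0)
    peak = cam.max()
    return cam / peak if peak > 0 else cam

# Hypothetical 2x2 feature maps with two channels
feature_maps = np.zeros((2, 2, 2))
feature_maps[0, 0, 0] = 4.0    # channel 0 fires in the top-left cell
feature_maps[1, 1, 1] = 2.0    # channel 1 fires in the bottom-right cell

grads = np.zeros((2, 2, 2))
grads[..., 0] = 1.0            # raising channel 0 raises the class score
grads[..., 1] = -1.0           # raising channel 1 lowers it

toy_cam = gradcam_from_arrays(feature_maps, grads)
print(toy_cam)
```

Only the top-left cell survives, because the bottom-right activation belongs to a channel that argues against the class.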

Set Up Your Keras Environment for Visualization

Before we dive into the math and the heatmaps, we need to ensure our environment is ready with the necessary libraries.

I always prefer using TensorFlow’s built-in Keras implementation because it is stable and highly optimized for these types of gradient calculations.

    import numpy as np
    import tensorflow as tf
    from tensorflow import keras
    import matplotlib.pyplot as plt
    import matplotlib.cm as cm

    # Displaying versions to ensure compatibility
    print(f"TensorFlow version: {tf.__version__}")

Load a Pre-trained Model for Grad-CAM

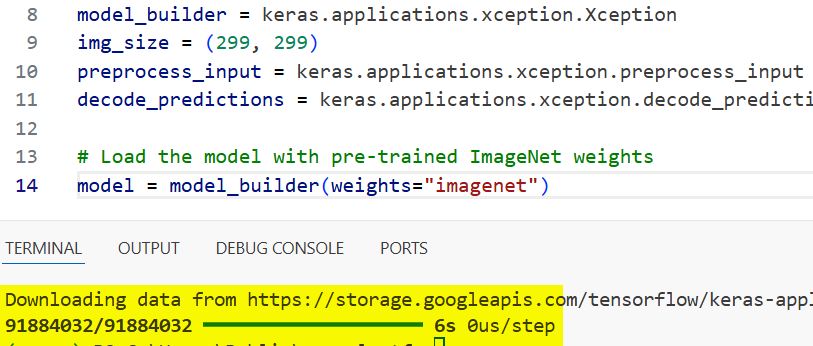

To make this example practical, I’ll use the Xception model pre-trained on ImageNet. It’s a robust architecture that handles high-resolution images very well.

    # We use Xception as our base model
    model_builder = keras.applications.xception.Xception
    img_size = (299, 299)
    preprocess_input = keras.applications.xception.preprocess_input
    decode_predictions = keras.applications.xception.decode_predictions

    # Load the model with pre-trained ImageNet weights
    model = model_builder(weights="imagenet")

You can refer to the screenshot below to see the output.

In this case, let’s imagine we are classifying an image of a Golden Retriever, a popular family dog across many American households.

Prepare the Image for Keras Class Activation

You can’t just throw a raw JPEG into a neural network. You need to resize it and expand the dimensions to create a “batch” of one.

    def get_img_array(img_path, size):
        # Load the image and resize it to the model's expected input size
        img = keras.preprocessing.image.load_img(img_path, target_size=size)
        array = keras.preprocessing.image.img_to_array(img)
        # Expand dimensions to transform (299, 299, 3) into (1, 299, 299, 3)
        array = np.expand_dims(array, axis=0)
        return array

    # Replace with a local path to a dog image
    img_path = "golden_retriever_california.jpg"
    img_array = preprocess_input(get_img_array(img_path, size=img_size))
    print(img_array.shape)

You can refer to the screenshot below to see the output.

I usually write a small helper function for this to keep my workspace clean and repeatable across different projects.
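If you want to see what the preprocessing step does to the numbers, here is a small sketch that needs no image file. As far as I know, Xception's preprocess_input scales 0-255 pixels to the range [-1, 1]; the helper `scale_like_xception` and the random stand-in image are my own assumptions for illustration, not Keras functions.

```python
import numpy as np

def scale_like_xception(pixels):
    """Map 0-255 pixel values to [-1, 1] (assumed to mirror Xception's preprocessing)."""
    return pixels.astype("float32") / 127.5 - 1.0

# Hypothetical stand-in for a loaded 299x299 RGB image
rng = np.random.default_rng(0)
fake_image = rng.integers(0, 256, size=(299, 299, 3))

# Add the batch dimension, exactly like np.expand_dims in get_img_array
batch = np.expand_dims(scale_like_xception(fake_image), axis=0)
print(batch.shape, batch.min(), batch.max())
```

If the values reaching your model fall outside the range it was trained on, the heatmaps will be as unreliable as the predictions.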

Create the Keras Grad-CAM Heatmap Function

This is the core of the process. We create a model that maps the input image to the activations of the last conv layer and the output predictions.

    def make_gradcam_heatmap(img_array, model, last_conv_layer_name, pred_index=None):
        # Create a model that maps input to the activations of the last conv layer
        # and to the output predictions (model.inputs is already a list of input tensors)
        grad_model = tf.keras.models.Model(
            model.inputs, [model.get_layer(last_conv_layer_name).output, model.output]
        )

        # Compute the gradient of the top predicted class for our input image
        with tf.GradientTape() as tape:
            last_conv_layer_output, preds = grad_model(img_array)
            if pred_index is None:
                pred_index = tf.argmax(preds[0])
            class_channel = preds[:, pred_index]

        # This is the gradient of the output neuron with regard to the output feature map
        grads = tape.gradient(class_channel, last_conv_layer_output)

        # Vector of mean intensity of the gradient over a specific feature map channel
        pooled_grads = tf.reduce_mean(grads, axis=(0, 1, 2))

        # We multiply each channel in the feature map array by "how important this channel is"
        last_conv_layer_output = last_conv_layer_output[0]
        heatmap = last_conv_layer_output @ pooled_grads[..., tf.newaxis]
        heatmap = tf.squeeze(heatmap)

        # For visualization, we normalize the heatmap between 0 & 1
        heatmap = tf.maximum(heatmap, 0) / tf.reduce_max(heatmap)
        return heatmap.numpy()

I use tf.GradientTape to record the gradients of the top predicted class with respect to the activations of the last convolutional layer.
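One edge case worth knowing about: if no region of the image supports the class, the raw map has no positive values and dividing by its maximum produces NaNs or negative zeros. Below is a hedged NumPy sketch of a guarded normalization. The helper name `normalize_heatmap` and the `eps` threshold are my own choices, not part of Keras or TensorFlow.

```python
import numpy as np

def normalize_heatmap(raw, eps=1e-8):
    """Clip negatives, then scale to [0, 1]; return all zeros if nothing is positive."""
    positive = np.maximum(raw, 0.0)
    peak = positive.max()
    if peak < eps:
        # No positive evidence anywhere: avoid dividing by zero
        return np.zeros_like(positive)
    return positive / peak

mixed_map = normalize_heatmap(np.array([[2.0, 4.0], [-1.0, 0.0]]))
empty_map = normalize_heatmap(np.array([[-1.0, -2.0], [-3.0, -0.5]]))
print(mixed_map)
print(empty_map)
```

You could apply the same guard to the array returned by the heatmap function before visualizing it.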

Generate the Visualization Map in Keras

Once we have the raw heatmap, we need to scale it back up to the original image size so it lines up with the object in the photo. For Xception with a 299×299 input, the raw map is only 10×10.

    def save_and_display_gradcam(img_path, heatmap, cam_path="cam.jpg", alpha=0.4):
        # Load the original image
        img = keras.preprocessing.image.load_img(img_path)
        img = keras.preprocessing.image.img_to_array(img)

        # Rescale heatmap to a range 0-255
        heatmap = np.uint8(255 * heatmap)

        # Use jet colormap to colorize heatmap
        jet = plt.get_cmap("jet")

        # Use RGB values of the colormap
        jet_colors = jet(np.arange(256))[:, :3]
        jet_heatmap = jet_colors[heatmap]

        # Create an image with RGB colorized heatmap
        jet_heatmap = keras.preprocessing.image.array_to_img(jet_heatmap)
        jet_heatmap = jet_heatmap.resize((img.shape[1], img.shape[0]))
        jet_heatmap = keras.preprocessing.image.img_to_array(jet_heatmap)

        # Superimpose the heatmap on original image
        superimposed_img = jet_heatmap * alpha + img
        superimposed_img = keras.preprocessing.image.array_to_img(superimposed_img)

        # Save and display the result
        superimposed_img.save(cam_path)
        plt.imshow(superimposed_img)
        plt.axis('off')
        plt.show()

    # Execute the visualization
    last_conv_layer_name = "block14_sepconv2_act"
    heatmap = make_gradcam_heatmap(img_array, model, last_conv_layer_name)
    save_and_display_gradcam(img_path, heatmap)

I personally like using the “jet” colormap because it provides a high-contrast visual that is easy to present to stakeholders or non-technical clients.
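If you want to experiment with the overlay step without loading a photo, the sketch below shows the two operations on toy arrays: stretching a small map to image size and mixing it with the picture. Note that it is a variant of the code above, not a copy. It uses nearest-neighbor upscaling and a weighted average so values stay within 0-255, and the helpers `upscale_nearest` and `blend` are hypothetical names of my own.

```python
import numpy as np

def upscale_nearest(small, factor):
    """Repeat every cell `factor` times along height and width."""
    return np.repeat(np.repeat(small, factor, axis=0), factor, axis=1)

def blend(image, overlay, alpha=0.4):
    """Weighted average of image and overlay, returned as uint8."""
    mixed = (1 - alpha) * image.astype("float32") + alpha * overlay.astype("float32")
    return np.clip(np.rint(mixed), 0, 255).astype("uint8")

# Hypothetical 4x4 grey image and a 2x2 colored map with one red cell
image = np.full((4, 4, 3), 100, dtype="uint8")
small_overlay = np.zeros((2, 2, 3), dtype="uint8")
small_overlay[0, 0] = [255, 0, 0]

overlay = upscale_nearest(small_overlay, factor=2)
blended = blend(image, overlay)
print(blended[0, 0], blended[3, 3])
```

A higher `alpha` makes the heatmap dominate; a lower one keeps more of the original photo visible.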

Troubleshoot Common Keras Grad-CAM Issues

During my time implementing this, I’ve noticed that choosing the wrong “last convolutional layer” name is the most common error.

You must ensure the layer name matches exactly what is in model.summary(). Otherwise, model.get_layer() raises a ValueError while the gradient model is being built, before any gradients are recorded.
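A small check up front can turn that error into a more helpful message. The sketch below is only an illustration under my own assumptions: `pick_gradcam_layer` is a hypothetical helper, and the layers are stand-in objects with a `name` and a `shape` attribute. With a real Keras model you would read the name from `layer.name` and, I believe, the shape from `layer.output.shape`.

```python
from types import SimpleNamespace

def pick_gradcam_layer(layers, requested=None):
    """Validate a requested layer name, or fall back to the last 4D layer."""
    names = [layer.name for layer in layers]
    if requested is not None:
        if requested not in names:
            raise ValueError(f"No layer named {requested!r}. Available: {names}")
        return requested
    # Fall back to the last layer whose output is (batch, height, width, channels)
    for layer in reversed(layers):
        if len(layer.shape) == 4:
            return layer.name
    raise ValueError("No layer with a 4D output found.")

# Hypothetical stand-ins for the tail of an Xception-like model
layers = [
    SimpleNamespace(name="block14_sepconv2_act", shape=(None, 10, 10, 2048)),
    SimpleNamespace(name="avg_pool", shape=(None, 2048)),
    SimpleNamespace(name="predictions", shape=(None, 1000)),
]

print(pick_gradcam_layer(layers))
```

Checking the output rank instead of searching for "conv" in the name also covers custom models whose layers were named differently.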

Apply Grad-CAM to Custom Keras Models

If you aren’t using a pre-trained model like Xception, the process remains the same as long as you have convolutional layers.

I often use this for medical imaging models built in-house, where showing a doctor exactly which part of an X-ray the AI flagged is mandatory for trust.

    # For a custom model, find the name of the last conv layer
    for layer in reversed(model.layers):
        if 'conv' in layer.name.lower():
            print(f"Recommended layer for Grad-CAM: {layer.name}")
            break

Interpret the Results of Keras Visualization

When you look at the final image, the “red” areas are the parts of the image that most heavily influenced the model’s decision.

If you are classifying a baseball game photo and the “red” is on the bat, your model has learned the correct features; if it’s on the grass, you might need to retrain.
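If you would rather have a number than eyeball the colors, one rough option is to measure how much of the heatmap falls inside a region you consider correct. This is a sketch under my own assumptions: `heat_inside_box` is a hypothetical helper, the box is a hand-drawn annotation given as row and column indices, and the heatmap is assumed to be already resized to the image.

```python
import numpy as np

def heat_inside_box(heatmap, box):
    """Share of total heatmap intensity inside box = (row0, row1, col0, col1), end-exclusive."""
    total = heatmap.sum()
    if total == 0:
        return 0.0
    row0, row1, col0, col1 = box
    return float(heatmap[row0:row1, col0:col1].sum() / total)

# Hypothetical 4x4 heatmap: a hot 2x2 center plus one stray hot corner
toy_heatmap = np.zeros((4, 4))
toy_heatmap[1:3, 1:3] = 1.0
toy_heatmap[0, 0] = 1.0

share = heat_inside_box(toy_heatmap, box=(1, 3, 1, 3))
print(f"{share:.0%} of the heat is inside the box")
```

A consistently low share across many images is a hint that the model is leaning on the background rather than the object.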

Using Grad-CAM has completely changed the way I develop and debug deep learning models. It provides a level of transparency that standard metrics simply cannot match.

Whether you are working on a commercial computer vision product or a personal research project, visualizing your class activations is a step you shouldn’t skip.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.