Keypoint detection is a fascinating computer vision task with applications in pose estimation, facial landmark detection, and more. Over the past four years working with Keras and Python, I’ve found transfer learning to be a powerful way to build accurate keypoint detectors without starting from scratch.

In this tutorial, I’ll walk you through how to implement keypoint detection using transfer learning in Keras. I’ll provide complete code examples for each step so you can follow along easily and build your own model.

What is Keypoint Detection in Python Keras?

Keypoint detection involves identifying specific points of interest on an object, such as the corners of the eyes or the tips of fingers. In Keras, we can train models to detect these points precisely by leveraging pre-trained networks and fine-tuning them on our dataset.

Using transfer learning speeds up training and improves accuracy because the model already understands general image features.

Prepare the Dataset for Keypoint Detection

Before training, you need a dataset with images and their corresponding keypoint coordinates. For example, a face dataset might have images labeled with nose tip, eye corners, etc.

Here’s a simple method to load and preprocess such data:

import numpy as np

import tensorflow as tf

from tensorflow.keras.preprocessing.image import load_img, img_to_array

def load_and_preprocess(image_path, keypoints, img_size=(224, 224)):

# Load image and resize

img = load_img(image_path, target_size=img_size)

img_array = img_to_array(img) / 255.0 # Normalize pixel values

# Normalize keypoints to be between 0 and 1 relative to image size

keypoints = np.array(keypoints) / np.array([img_size[1], img_size[0]])

return img_array, keypointsThis method ensures your images and keypoints are ready for training with Keras.

Build a Keypoint Detection Model with Transfer Learning in Keras

I prefer using a pre-trained convolutional base like MobileNetV2 for feature extraction. Then, I add custom layers to predict keypoints.

Here’s an easy way to build the model:

from tensorflow.keras.applications import MobileNetV2

from tensorflow.keras import layers, models

def build_keypoint_model(input_shape=(224, 224, 3), num_keypoints=10):

base_model = MobileNetV2(include_top=False, input_shape=input_shape, weights='imagenet')

base_model.trainable = False # Freeze base layers

model = models.Sequential([

base_model,

layers.GlobalAveragePooling2D(),

layers.Dense(512, activation='relu'),

layers.Dropout(0.2),

layers.Dense(num_keypoints * 2, activation='sigmoid') # x,y for each keypoint normalized

])

return model

model = build_keypoint_model()

model.summary()This model outputs normalized (x, y) coordinates for each keypoint.

Compile and Train the Model in Keras

For keypoint detection, mean squared error (MSE) is a good loss function since we want to minimize the distance between predicted and true points.

Here’s how I compile and train the model:

model.compile(optimizer='adam', loss='mse', metrics=['mae'])

# Assuming X_train and y_train are your images and keypoints arrays

history = model.fit(X_train, y_train, epochs=25, batch_size=32, validation_split=0.2)This trains the model while monitoring mean absolute error (MAE) for performance.

Fine-Tuning the Transfer Learning Model in Keras

After initial training, unfreeze some layers of the base model to fine-tune and improve accuracy.

base_model = model.layers[0]

base_model.trainable = True

# Freeze first few layers, unfreeze last 20 layers for fine-tuning

for layer in base_model.layers[:-20]:

layer.trainable = False

model.compile(optimizer=tf.keras.optimizers.Adam(1e-5), loss='mse', metrics=['mae'])

history_fine = model.fit(X_train, y_train, epochs=10, batch_size=32, validation_split=0.2)Fine-tuning helps the model adapt better to your specific dataset.

Evaluate the Keypoint Detection Model

To evaluate, I plot predicted keypoints on test images and calculate error metrics like MAE.

import matplotlib.pyplot as plt

def plot_keypoints(image, true_points, pred_points):

plt.imshow(image)

plt.scatter(true_points[:, 0] * image.shape[1], true_points[:, 1] * image.shape[0], c='g', label='True')

plt.scatter(pred_points[:, 0] * image.shape[1], pred_points[:, 1] * image.shape[0], c='r', label='Predicted')

plt.legend()

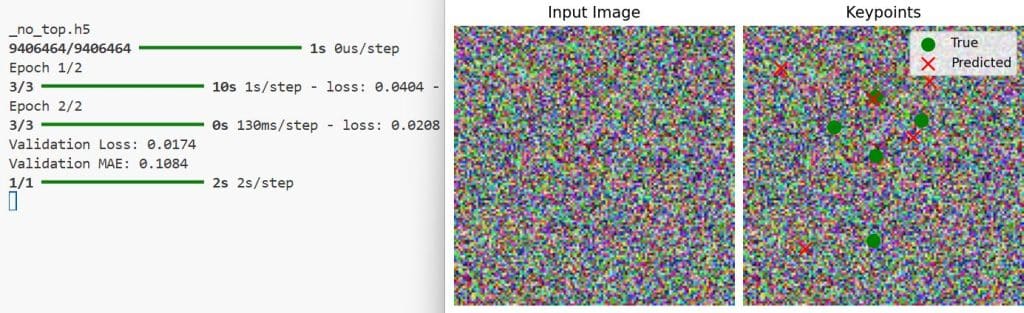

plt.show()You can see the output in the screenshot below.

Visualizing predictions helps understand model performance intuitively.

Using transfer learning in Keras for keypoint detection saves time and leverages powerful pre-trained models. With the methods above, you can build, train, fine-tune, and evaluate your own keypoint detector.

The key is preparing your dataset properly and experimenting with model architectures and training strategies. I hope this guide helps you get started with your project confidently.

If you want to explore further, check out the official Keras example on keypoint detection here.

You may also like to read:

- Multiclass Semantic Segmentation Using DeepLabV3+ in Keras

- Highly Accurate Boundary Segmentation Using BASNet in Keras

- Image Segmentation Using Composable Fully-Convolutional Networks in Keras

- Mastering Object Detection with RetinaNet in Keras

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.