Object detection is one of the most exciting problems in computer vision. It involves identifying and localizing multiple objects within an image. Over the years, I’ve worked extensively with Keras in Python to build robust object detection models, and RetinaNet has always stood out for its balance between speed and accuracy.

RetinaNet is a single-stage detector that uses a focal loss function to handle class imbalance during training: the vast majority of candidate regions in an image are easy background, and only a few contain objects. Focal loss keeps those few foreground examples from being swamped by the background ones. In this tutorial, I’ll walk you through how to build and train a RetinaNet model using the Keras framework with complete code examples.

What is RetinaNet in Python Keras?

RetinaNet is a deep learning model designed for object detection tasks. Unlike two-stage detectors like Faster R-CNN, RetinaNet performs detection in a single pass, making it faster without compromising much on accuracy. The key innovation is the focal loss, which reduces the impact of easy negatives and focuses training on hard, misclassified examples.

Set Up Your Environment

Before jumping into the code, ensure you have the necessary libraries installed. You can install TensorFlow (which includes Keras) and other dependencies using:

    !pip install tensorflow numpy matplotlib

Build RetinaNet Model in Keras

I always start by using a backbone network like ResNet50 for feature extraction. Here’s how you can build the RetinaNet model with Keras:

    import tensorflow as tf
    from tensorflow.keras import layers, models
    from tensorflow.keras.applications import ResNet50

    def build_retinanet(input_shape=(None, None, 3), num_classes=80):
        # Input height and width should be multiples of 32 so that each
        # upsampled pyramid level matches the level it is added to.
        backbone = ResNet50(include_top=False, input_shape=input_shape)

        # Freeze backbone layers for transfer learning
        for layer in backbone.layers:
            layer.trainable = False

        # Feature Pyramid Network (FPN): backbone outputs at strides 8, 16, 32
        c3_output = backbone.get_layer("conv3_block4_out").output
        c4_output = backbone.get_layer("conv4_block6_out").output
        c5_output = backbone.get_layer("conv5_block3_out").output

        def conv_block(x, filters, kernel_size, strides=1):
            x = layers.Conv2D(filters, kernel_size, strides=strides, padding='same')(x)
            x = layers.BatchNormalization()(x)
            return layers.ReLU()(x)

        # Build FPN layers
        p5 = conv_block(c5_output, 256, 1)
        p4 = conv_block(c4_output, 256, 1)
        p3 = conv_block(c3_output, 256, 1)

        # Upsample p5 and add to p4
        p5_upsampled = layers.UpSampling2D()(p5)
        p4 = layers.Add()([p4, p5_upsampled])

        # Upsample p4 and add to p3
        p4_upsampled = layers.UpSampling2D()(p4)
        p3 = layers.Add()([p3, p4_upsampled])

        # Classification and box regression subnetworks
        def classification_subnet(num_classes, num_anchors=9):
            inputs = layers.Input(shape=(None, None, 256))
            x = inputs
            for _ in range(4):
                x = conv_block(x, 256, 3)
            outputs = layers.Conv2D(num_classes * num_anchors, 3, padding='same', activation='sigmoid')(x)
            return models.Model(inputs=inputs, outputs=outputs, name='classification_subnet')

        def box_regression_subnet(num_anchors=9):
            inputs = layers.Input(shape=(None, None, 256))
            x = inputs
            for _ in range(4):
                x = conv_block(x, 256, 3)
            outputs = layers.Conv2D(num_anchors * 4, 3, padding='same')(x)
            return models.Model(inputs=inputs, outputs=outputs, name='box_regression_subnet')

        cls_subnet = classification_subnet(num_classes)
        box_subnet = box_regression_subnet()

        # Apply the shared heads to every level, then flatten each result to
        # (batch, anchors, ...). The levels have different spatial sizes, so
        # the raw feature maps cannot be concatenated along axis 1 directly.
        p3_cls = layers.Reshape((-1, num_classes))(cls_subnet(p3))
        p4_cls = layers.Reshape((-1, num_classes))(cls_subnet(p4))
        p5_cls = layers.Reshape((-1, num_classes))(cls_subnet(p5))

        p3_box = layers.Reshape((-1, 4))(box_subnet(p3))
        p4_box = layers.Reshape((-1, 4))(box_subnet(p4))
        p5_box = layers.Reshape((-1, 4))(box_subnet(p5))

        # Concatenate outputs across pyramid levels
        classification = layers.Concatenate(axis=1)([p3_cls, p4_cls, p5_cls])
        box_regression = layers.Concatenate(axis=1)([p3_box, p4_box, p5_box])

        model = models.Model(inputs=backbone.input, outputs=[classification, box_regression])
        return model

    model = build_retinanet()
    model.summary()

This builds a simplified version of the core RetinaNet architecture using ResNet50 as the backbone. It uses pyramid levels P3 to P5 only; the original RetinaNet design also adds coarser P6 and P7 levels.
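To get a feel for how many predictions this model makes, here is a small sketch of the arithmetic. It assumes the three ResNet50 levels used above sit at strides 8, 16, and 32 and that each grid cell carries 9 anchors; the helper name `count_anchors` is just an illustration, not part of Keras.

```python
def count_anchors(height, width, strides=(8, 16, 32), anchors_per_cell=9):
    """Count anchor predictions for an input whose sides are multiples of 32."""
    total = 0
    for stride in strides:
        # Each pyramid level has one grid cell per `stride` pixels per side.
        cells = (height // stride) * (width // stride)
        total += cells * anchors_per_cell
    return total


# 512x512 input: (64*64 + 32*32 + 16*16) cells * 9 anchors
print(count_anchors(512, 512))  # 48384
```

Almost all of these anchors cover background in a typical image, which is the imbalance focal loss is meant to address.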

Implement Focal Loss in Keras

The focal loss is crucial for RetinaNet. It focuses training on hard examples, reducing the effect of easy negatives.

    import tensorflow.keras.backend as K

    def focal_loss(gamma=2.0, alpha=0.25):
        def focal_loss_fixed(y_true, y_pred):
            # Element-wise binary cross-entropy on the sigmoid outputs
            cross_entropy = K.binary_crossentropy(y_true, y_pred)
            # p_t is the probability the model assigns to the true label
            p_t = y_true * y_pred + (1 - y_true) * (1 - y_pred)
            # alpha balances positives against negatives
            alpha_factor = y_true * alpha + (1 - y_true) * (1 - alpha)
            # (1 - p_t)^gamma shrinks the loss of well-classified examples
            modulating_factor = K.pow((1 - p_t), gamma)
            return K.mean(alpha_factor * modulating_factor * cross_entropy)

        return focal_loss_fixed

Use this loss function when compiling your model to improve detection performance.
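To see what the modulating factor does in numbers, here is a minimal NumPy sketch. It leaves out the alpha weighting and looks only at the `(1 - p_t) ** gamma` term with gamma = 2; the helper names `focal_weight` and `focal_term` are made up for this illustration.

```python
import numpy as np


def focal_weight(p_t, gamma=2.0):
    """Modulating factor (1 - p_t) ** gamma applied on top of cross-entropy."""
    return (1.0 - np.asarray(p_t, dtype=float)) ** gamma


def focal_term(p_t, gamma=2.0):
    """Focal loss without alpha: -(1 - p_t) ** gamma * log(p_t)."""
    p_t = np.asarray(p_t, dtype=float)
    return -focal_weight(p_t, gamma) * np.log(p_t)


# p_t is the probability assigned to the correct label.
easy, hard = 0.9, 0.1
print(focal_weight(easy))  # about 0.01: the easy example is scaled down ~100x
print(focal_weight(hard))  # about 0.81: the hard example keeps most of its loss
# The hard example outweighs the easy one by roughly 1770x,
# compared with about 22x under plain cross-entropy.
print(focal_term(hard) / focal_term(easy))
```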

Prepare Your Dataset for Training

For training, you’ll need images and annotations in COCO or Pascal VOC format. I recommend using a dataset like Open Images or a custom dataset labeled with bounding boxes.
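Whichever format you pick, the boxes usually need two small transformations before training: converting them to one convention and rescaling them when the image is resized. The sketch below assumes COCO-style boxes given as [x_min, y_min, width, height] in pixels and a fixed 512x512 target size; the helper names are hypothetical, and any pixel-indexing offsets a particular dataset may use are ignored.

```python
def coco_to_corners(box):
    """Convert [x_min, y_min, width, height] to [x_min, y_min, x_max, y_max]."""
    x_min, y_min, width, height = box
    return [x_min, y_min, x_min + width, y_min + height]


def scale_boxes(boxes, original_size, target_size=(512, 512)):
    """Rescale corner-format pixel boxes to match a resized image.

    Sizes are (height, width) tuples.
    """
    orig_h, orig_w = original_size
    new_h, new_w = target_size
    sx, sy = new_w / orig_w, new_h / orig_h
    return [[x0 * sx, y0 * sy, x1 * sx, y1 * sy] for x0, y0, x1, y1 in boxes]


corners = coco_to_corners([100, 50, 200, 150])
print(corners)  # [100, 50, 300, 200]

# An image 256 pixels high and 1024 wide, resized to 512x512
print(scale_boxes([corners], original_size=(256, 1024)))
# [[50.0, 100.0, 150.0, 400.0]]
```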

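You can preprocess your dataset using TensorFlow’s tf.data API or Keras ImageDataGenerator. Note that ImageDataGenerator is deprecated in recent TensorFlow releases and does not transform bounding boxes along with the images, so tf.data is usually the better fit for detection. Here’s a simple example of loading and resizing images: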

    import tensorflow as tf

    def preprocess_image(image_path, target_size=(512, 512)):
        image = tf.io.read_file(image_path)
        image = tf.image.decode_jpeg(image, channels=3)
        image = tf.image.resize(image, target_size)
        image = image / 255.0  # normalize
        return image

Train the RetinaNet Model in Keras

Once your data pipeline is ready, compile and train your model:

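    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss=[focal_loss(), 'mean_squared_error'],  # classification and box regression losses
    )

    # Assuming you have train_dataset and val_dataset prepared as batched
    # tf.data.Dataset objects, e.g. with .batch(8). Set the batch size on
    # the dataset; do not pass batch_size to fit() for dataset inputs.
    history = model.fit(
        train_dataset,
        validation_data=val_dataset,
        epochs=20,
    )

This trains the RetinaNet model using focal loss for classification and mean squared error for bounding box regression. Each dataset must yield batches of (image, (class_targets, box_targets)), with the targets encoded per anchor so that their shapes match the two model outputs.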
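Mean squared error works, but it lets a few badly matched boxes dominate the gradient. The original RetinaNet uses a smooth L1 loss for box regression instead, which is closely related to the Huber loss. The NumPy sketch below is only meant to show the difference in behaviour; the `huber` helper is written for this illustration, and in Keras you would more likely try `tf.keras.losses.Huber()` in place of 'mean_squared_error'.

```python
import numpy as np


def huber(error, delta=1.0):
    """Element-wise Huber loss: quadratic near zero, linear for large errors."""
    abs_err = np.abs(np.asarray(error, dtype=float))
    quadratic = 0.5 * abs_err ** 2
    linear = delta * (abs_err - 0.5 * delta)
    return np.where(abs_err <= delta, quadratic, linear)


errors = np.array([0.5, 4.0])
print(errors ** 2)    # squared error: 0.25 and 16.0
print(huber(errors))  # Huber: 0.125 and 3.5, so the outlier counts far less
```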

Make Predictions and Visualize Results

After training, you can use the model to predict bounding boxes on new images:

import numpy as np

import matplotlib.pyplot as plt

def visualize_predictions(model, image_path):

image = preprocess_image(image_path)

image_expanded = tf.expand_dims(image, axis=0)

cls_preds, box_preds = model.predict(image_expanded)

# Post-processing to decode boxes and apply NMS would go here

# For simplicity, just displaying the image

plt.imshow(image)

plt.axis('off')

plt.show()

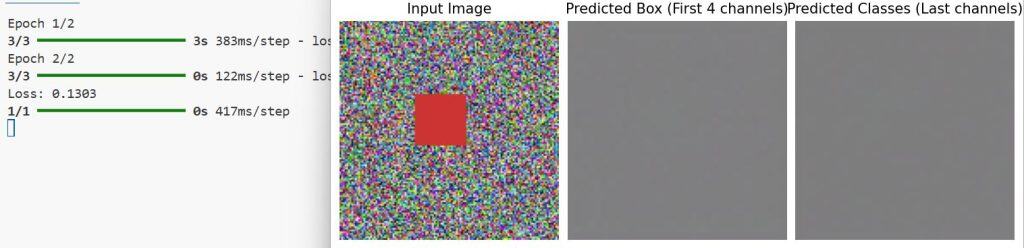

visualize_predictions(model, 'test_image.jpg')You can refer to the screenshot below to see the output.

You can extend this by adding non-maximum suppression (NMS) to filter overlapping boxes.
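As a rough idea of what that step looks like, here is a minimal greedy NMS sketch in NumPy. It assumes the boxes have already been decoded to [x_min, y_min, x_max, y_max] and that each box has a single confidence score; the function names and the 0.5 threshold are choices made for this example.

```python
import numpy as np


def iou_one_to_many(box, boxes):
    """IoU between one [x_min, y_min, x_max, y_max] box and an (N, 4) array."""
    x0 = np.maximum(box[0], boxes[:, 0])
    y0 = np.maximum(box[1], boxes[:, 1])
    x1 = np.minimum(box[2], boxes[:, 2])
    y1 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x1 - x0, 0, None) * np.clip(y1 - y0, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the best box, drop boxes that overlap it, repeat."""
    boxes = np.asarray(boxes, dtype=float)
    order = np.argsort(scores)[::-1]  # indices sorted by descending score
    keep = []
    while order.size > 0:
        best = order[0]
        keep.append(int(best))
        rest = order[1:]
        overlaps = iou_one_to_many(boxes[best], boxes[rest])
        order = rest[overlaps <= iou_threshold]
    return keep


boxes = [[0, 0, 10, 10], [1, 1, 11, 11], [50, 50, 60, 60]]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

In practice you would probably reach for TensorFlow’s built-in `tf.image.non_max_suppression`, which documents its boxes as [y1, x1, y2, x2], rather than maintaining your own version.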

RetinaNet in Keras offers a powerful yet accessible way to tackle object detection. The balance of accuracy and speed makes it suitable for many real-world applications, from surveillance to automated retail.

With this guide, you now have a solid foundation to build your own RetinaNet models using Python Keras. Experiment with different backbones, datasets, and hyperparameters to fit your specific needs.

If you want to dive deeper, explore libraries like KerasCV, which provide pre-built RetinaNet implementations that can speed up your development.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.