Depth estimation from a single image is a challenging yet fascinating problem in computer vision. From my experience as a Python Keras developer, building a monocular depth estimation model can unlock many applications like robotics, AR, and autonomous navigation.

In this tutorial, I’ll show you how to create a depth estimation model using Keras. I’ll provide complete code snippets for each step, making it easy for you to follow and apply.

What is Monocular Depth Estimation in Python Keras?

Monocular depth estimation predicts, for every pixel of a single RGB image, how far the corresponding point in the scene is from the camera. Stereo methods, by contrast, rely on two cameras. With Keras, you can build convolutional encoder-decoder networks that learn to infer these dense depth maps.

This approach is practical and widely used in real-world applications where only one camera is available.
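Concretely, the training target is a depth map: a single-channel array that holds one distance value per pixel. Both models in this tutorial end in a sigmoid layer, so their predictions lie in [0, 1]. That means metric depths need to be scaled into that range before training and scaled back afterwards. The sketch below assumes depths in meters and a hypothetical maximum range of 10 m; `MAX_DEPTH`, `normalize_depth`, and `denormalize_depth` are illustrative names, not part of Keras.

```python
import numpy as np

# Hypothetical maximum sensing range in meters; choose one that fits your dataset
MAX_DEPTH = 10.0


def normalize_depth(depth_m, max_depth=MAX_DEPTH):
    """Clip metric depth to [0, max_depth] and scale it into [0, 1]."""
    depth_m = np.asarray(depth_m, dtype=np.float32)
    return np.clip(depth_m, 0.0, max_depth) / max_depth


def denormalize_depth(depth_01, max_depth=MAX_DEPTH):
    """Map a [0, 1] prediction back to meters."""
    return np.asarray(depth_01, dtype=np.float32) * max_depth


# A toy 2x3 depth map in meters (one value per pixel); 12.0 exceeds the range
depth = np.array([[0.5, 2.0, 4.0],
                  [5.0, 8.0, 12.0]])
target = normalize_depth(depth)[..., np.newaxis]  # add a channel axis
print(target.shape)  # (2, 3, 1)
```

Out-of-range values such as the 12.0 above are clipped to 1.0, so the model never sees targets the sigmoid cannot produce.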

Method 1: Build a Simple Encoder-Decoder Depth Estimation Model in Keras

This method uses a convolutional encoder-decoder architecture to predict depth maps from input images.
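Because the loss compares predictions with targets pixel by pixel, the decoder has to undo the encoder's downsampling exactly. The short trace below is plain Python (`trace_spatial_size` is a hypothetical helper, not a Keras function). It follows the feature-map height through the three 2x2 max-pooling layers and the three stride-2 `Conv2DTranspose` layers used in this method, assuming `padding='same'` on the transposed convolutions, where a stride of 2 doubles the size.

```python
def trace_spatial_size(size, ops):
    """Return the feature-map size after each op.

    'pool' models MaxPooling2D(2) with its default 'valid' padding: floor(n / 2).
    'up' models Conv2DTranspose(strides=2, padding='same'): n * 2.
    Stride-1 convolutions with padding='same' keep the size, so they are omitted.
    """
    sizes = [size]
    for op in ops:
        if op == 'pool':
            size = size // 2
        elif op == 'up':
            size = size * 2
        else:
            raise ValueError(f"unknown op: {op}")
        sizes.append(size)
    return sizes


ops = ['pool', 'pool', 'pool', 'up', 'up', 'up']
print(trace_spatial_size(128, ops))  # [128, 64, 32, 16, 32, 64, 128]
```

An input whose side is not divisible by 8, such as 100, would come back as 96 (100, 50, 25, 12, 24, 48, 96) and no longer match its target. That is why the example sticks to 128x128 inputs.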

Step 1: Import Required Libraries

Import the core TensorFlow and helper libraries needed to build the model.

```python
import tensorflow as tf
from tensorflow.keras import layers, models
import numpy as np
```

Step 2: Define the Encoder-Decoder Model

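Define a simple encoder-decoder network. The encoder downsamples the image three times to extract features, and the decoder upsamples them back to a full-resolution, single-channel depth map.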

```python
def create_depth_estimation_model(input_shape=(128, 128, 3)):
    inputs = layers.Input(shape=input_shape)

    # Encoder: convolutions extract features; each pooling halves the resolution (128 -> 64 -> 32 -> 16)
    x = layers.Conv2D(64, 3, activation='relu', padding='same')(inputs)
    x = layers.MaxPooling2D(2)(x)
    x = layers.Conv2D(128, 3, activation='relu', padding='same')(x)
    x = layers.MaxPooling2D(2)(x)
    x = layers.Conv2D(256, 3, activation='relu', padding='same')(x)
    x = layers.MaxPooling2D(2)(x)

    # Decoder: each stride-2 transposed convolution doubles the resolution (16 -> 32 -> 64 -> 128)
    x = layers.Conv2DTranspose(256, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(128, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(64, 3, strides=2, activation='relu', padding='same')(x)

    # Output: single-channel (grayscale) depth map; sigmoid keeps values in [0, 1]
    outputs = layers.Conv2D(1, 3, activation='sigmoid', padding='same')(x)

    model = models.Model(inputs, outputs)
    return model


model = create_depth_estimation_model()
model.summary()
```

Step 3: Compile the Model

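Compile the model with the Adam optimizer and mean squared error (MSE) loss, tracking mean absolute error (MAE) as a metric. MSE is a simple per-pixel baseline; the tips at the end of this tutorial mention a depth-specific alternative.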

```python
model.compile(optimizer='adam', loss='mse', metrics=['mae'])
```

Step 4: Prepare Dummy Data for Training

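Create random training and validation arrays to demonstrate the pipeline end to end. Because the targets are random noise, the model cannot learn real depth from them; swap in real image and depth-map pairs for meaningful results.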

```python
# Generate 100 dummy RGB images (128x128x3) with pixel values in [0, 1]
X_train = np.random.rand(100, 128, 128, 3).astype(np.float32)

# Generate 100 matching dummy depth maps (128x128x1) with values in [0, 1]
y_train = np.random.rand(100, 128, 128, 1).astype(np.float32)

# A smaller validation set with the same shapes
X_val = np.random.rand(20, 128, 128, 3).astype(np.float32)
y_val = np.random.rand(20, 128, 128, 1).astype(np.float32)
```

Step 5: Train the Model

Train the model for 15 epochs with a batch size of 8, using the validation set to monitor how well it generalizes.

history = model.fit(

X_train, y_train,

validation_data=(X_val, y_val),

epochs=15,

batch_size=8

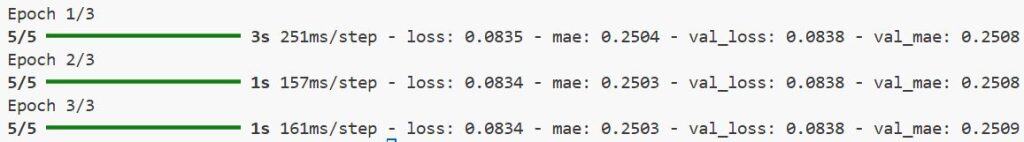

)You can refer to the screenshot below to see the output.
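Once training finishes, you can run the model on a single image. Keras models expect a batch dimension, so the sketch below wraps one HxWx3 image into a batch of one, calls `model.predict`, and squeezes the result back to a 2-D depth map. `predict_depth` is a hypothetical helper; it works with any object that exposes a Keras-style `predict` method, such as the `model` trained above.

```python
import numpy as np


def predict_depth(model, image):
    """Predict a 2-D depth map (values in [0, 1]) for one HxWx3 image."""
    image = np.asarray(image, dtype=np.float32)
    batch = image[np.newaxis, ...]           # (H, W, 3) -> (1, H, W, 3)
    pred = model.predict(batch, verbose=0)   # -> (1, H, W, 1)
    return pred[0, ..., 0]                   # drop batch and channel axes -> (H, W)
```

For example, `predict_depth(model, X_val[0])` returns a `(128, 128)` array that you can display with Matplotlib, e.g. `plt.imshow(depth_map, cmap='plasma')`.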

Method 2: Use a Pretrained Encoder for Better Feature Extraction

Using a backbone pretrained on ImageNet, such as MobileNetV2, typically yields richer features than a small encoder trained from scratch and helps the model converge faster.

Step 1: Load Pretrained MobileNetV2 as Encoder

In this step, we load MobileNetV2 as a pretrained encoder to extract high-level image features, giving the depth-estimation model a stronger starting point and reducing the training effort.

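```python
def create_depth_model_with_mobilenet(input_shape=(128, 128, 3)):
    base_model = tf.keras.applications.MobileNetV2(
        input_shape=input_shape,
        include_top=False,
        weights='imagenet'
    )

    # Freeze encoder weights so only the decoder is trained at first
    base_model.trainable = False

    inputs = layers.Input(shape=input_shape)
    # MobileNetV2's ImageNet weights expect pixels in [-1, 1]; our data is in [0, 1]
    x = layers.Rescaling(scale=2.0, offset=-1.0)(inputs)
    # MobileNetV2 downsamples by 32: a 128x128 input becomes a 4x4x1280 feature map
    x = base_model(x, training=False)

    # Decoder: five stride-2 transposed convolutions upsample 4 -> 8 -> 16 -> 32 -> 64 -> 128,
    # so the output matches the 128x128 depth targets (four layers would stop at 64x64)
    x = layers.Conv2DTranspose(256, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(128, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(64, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(32, 3, strides=2, activation='relu', padding='same')(x)
    x = layers.Conv2DTranspose(16, 3, strides=2, activation='relu', padding='same')(x)

    # Output: single-channel depth map in [0, 1]
    outputs = layers.Conv2D(1, 3, activation='sigmoid', padding='same')(x)

    model = models.Model(inputs, outputs)
    return model


model = create_depth_model_with_mobilenet()
model.summary()
```

Step 2: Compile and Train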

After building the model, we compile it with an appropriate optimizer and loss function, then train it using our dataset to learn the mapping from RGB images to depth maps.

model.compile(optimizer='adam', loss='mse', metrics=['mae'])

model.fit(

X_train, y_train,

validation_data=(X_val, y_val),

epochs=15,

batch_size=8

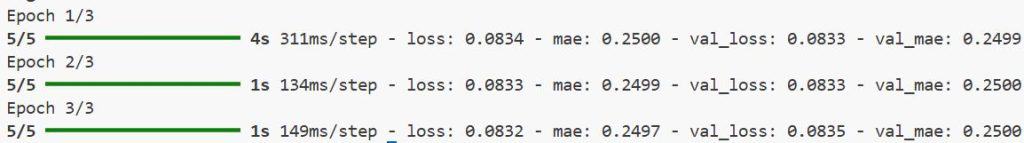

)You can refer to the screenshot below to see the output.
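Because the MobileNetV2 encoder above stays frozen, only the decoder learns. A common second stage is to unfreeze the top of the encoder and keep training with a much smaller learning rate. The sketch below is one way to do that, not the tutorial's own method. `unfreeze_encoder_top` is a hypothetical helper that finds the nested backbone (the layer that is itself a Keras `Model`), unfreezes only its last `num_layers` layers, and recompiles; 30 layers and a learning rate of 1e-5 are illustrative values. Recompiling is required for changes to `trainable` to take effect, and because the backbone was called with `training=False`, its BatchNormalization layers stay in inference mode, as the Keras transfer-learning guide recommends.

```python
import tensorflow as tf


def unfreeze_encoder_top(model, num_layers=30, learning_rate=1e-5):
    """Unfreeze the last `num_layers` layers of the nested backbone, then recompile."""
    # The pretrained encoder shows up as a single nested Model inside the outer model
    backbone = next(
        (layer for layer in model.layers if isinstance(layer, tf.keras.Model)), None
    )
    if backbone is None:
        raise ValueError("No nested backbone model found in model.layers")

    # Make the whole backbone trainable, then re-freeze all but its top layers
    backbone.trainable = True
    for layer in backbone.layers[:-num_layers]:
        layer.trainable = False

    # Recompile so the new trainable flags take effect; a small learning rate
    # helps avoid overwriting the pretrained features
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss='mse',
        metrics=['mae'],
    )
    return backbone
```

After the first `fit` call, you would run `unfreeze_encoder_top(model)` and then call `model.fit(...)` again for a few more epochs.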

Tips for Improving Monocular Depth Estimation in Keras

- Use data augmentation such as random horizontal flips (applied identically to the image and its depth map) and brightness changes (applied to the image only) to improve robustness.

- Experiment with different loss functions, such as scale-invariant loss, for better depth accuracy.

- Fine-tune pretrained encoders after initial training for improved performance.
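The scale-invariant loss mentioned in the tips was introduced by Eigen et al. (2014) for single-image depth prediction. For each image, with d = log(y_pred) - log(y_true) computed per pixel, it is mean(d^2) - lambda * mean(d)^2; lambda = 0.5 is the value used in that paper. The sketch below is one possible Keras-compatible implementation using TensorFlow ops. The small `eps` is an assumption added here to avoid log(0), since both the sigmoid outputs and normalized targets can reach 0.

```python
import tensorflow as tf


def scale_invariant_loss(y_true, y_pred, lam=0.5, eps=1e-6):
    """Scale-invariant log loss, mean(d^2) - lam * mean(d)^2, computed per image."""
    y_true = tf.cast(y_true, y_pred.dtype)
    # Per-pixel log difference between prediction and target
    d = tf.math.log(y_pred + eps) - tf.math.log(y_true + eps)
    # Flatten each image so the means run over all of its pixels
    d = tf.reshape(d, [tf.shape(d)[0], -1])
    mean_sq = tf.reduce_mean(tf.square(d), axis=1)
    sq_mean = tf.square(tf.reduce_mean(d, axis=1))
    # Shape (batch,); Keras averages these per-sample values over the batch
    return mean_sq - lam * sq_mean
```

To try it, pass the function instead of `'mse'`: `model.compile(optimizer='adam', loss=scale_invariant_loss, metrics=['mae'])`. With `lam=1.0` the loss ignores a global scale error entirely (predicting every depth 1.5x too far costs nothing), while `lam=0.0` reduces it to a plain squared log error; a non-default value can be passed with a wrapper such as `loss=lambda t, p: scale_invariant_loss(t, p, lam=1.0)`.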

Monocular depth estimation in Keras is achievable with simple encoder-decoder models or by leveraging pretrained backbones. The examples I shared provide a solid foundation for your projects.

Feel free to adapt the architectures to your dataset and experiment with training strategies for the best results.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.