In my years of developing data pipelines, I’ve found that many people jump straight into DataFrames without truly mastering the Series.

The pandas.core.series.Series is actually the backbone of almost every operation you perform in the library.

I remember struggling with alignment issues early in my career, only to realize I didn’t understand how the Series index worked.

Once I grasped the core mechanics of the Series, my data cleaning tasks became significantly faster and my code much cleaner.

In this tutorial, I will show you exactly how to work with the Pandas Series using practical, real-world examples.

What is a Pandas Core Series?

At its simplest, a Pandas Series is a one-dimensional labeled array capable of holding any data type.

Think of it as a single column in an Excel sheet, but with much more power and flexibility.

The “core” part refers to the internal module pandas.core.series where this object is defined within the library.

Every time you pull a single column out of a DataFrame, you are interacting with a pandas.core.series.Series object.

It consists of two main components: the actual data and a set of identifiers called the Index.

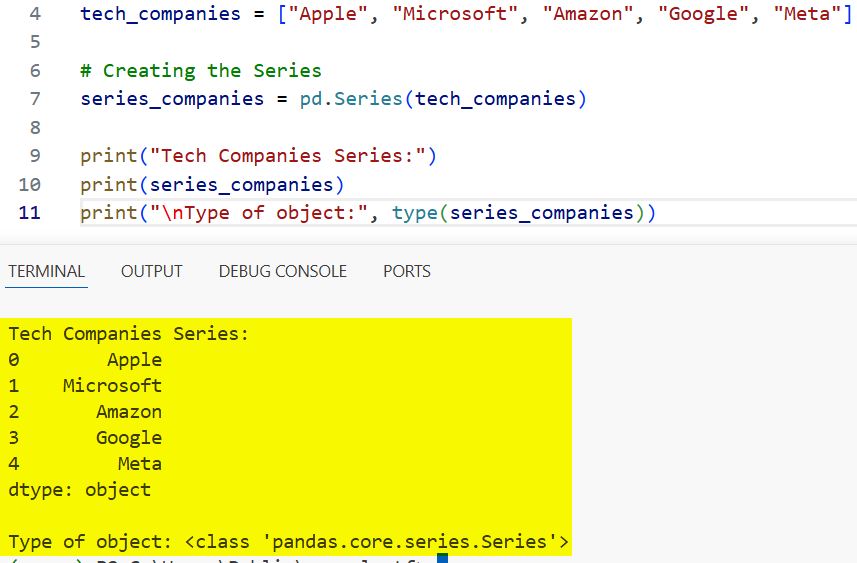
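To make the two components concrete, here is a minimal sketch. The DataFrame and its column names (`state`, `population_m`) are invented for illustration and do not come from a real dataset.

```python
import pandas as pd

# Hypothetical two-column DataFrame, used only for illustration
df = pd.DataFrame({
    "state": ["Texas", "Ohio", "Utah"],
    "population_m": [30.0, 11.8, 3.4],
})

# Selecting a single column returns a Series
column = df["population_m"]
print(type(column))

# The two components: the index (labels) and the data (values)
print(column.index)
print(column.to_numpy())
```

The column keeps the DataFrame's row labels as its own index, which is what lets Pandas line values up by label later on.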

Method 1: Create a Series from a List

I often use this method when I have a small set of data, like a list of US Tech companies or state populations.

When you create a Series from a list, Pandas automatically assigns a numeric index starting from zero.

Here is how I typically handle this:

import pandas as pd

# List of major US tech companies

tech_companies = ["Apple", "Microsoft", "Amazon", "Google", "Meta"]

# Creating the Series

series_companies = pd.Series(tech_companies)

print("Tech Companies Series:")

print(series_companies)

print("\nType of object:", type(series_companies))

You can refer to the screenshot below to see the output.

In this example, the index 0 to 4 is automatically generated, which is helpful but not always what we need for complex analysis.

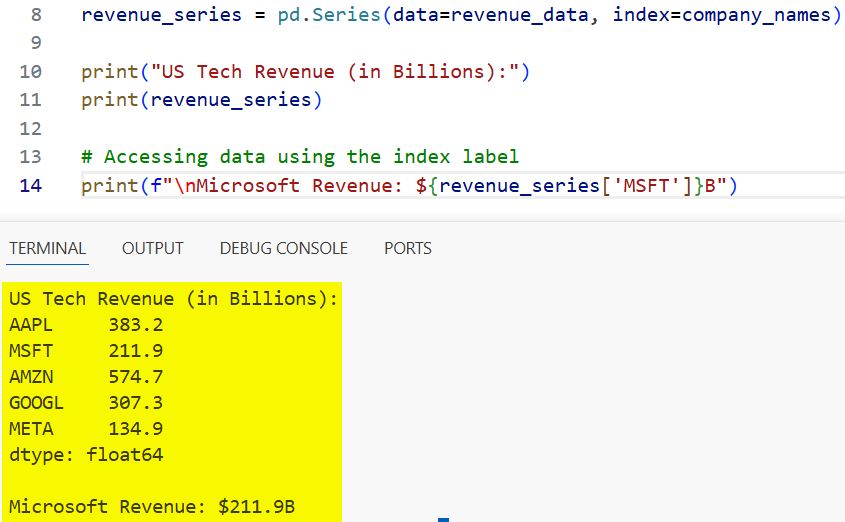
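If you want to inspect the automatically generated index yourself, a short sketch like the following should work. It reuses the company list from the example above.

```python
import pandas as pd

series_companies = pd.Series(["Apple", "Microsoft", "Amazon", "Google", "Meta"])

# The auto-generated index is a RangeIndex running from 0 to 4
print(series_companies.index)

# With the default index, label and position coincide
print(series_companies[0])        # label-based lookup
print(series_companies.iloc[-1])  # position-based lookup
```

Using `.iloc` for positional access keeps the intent clear once you switch to a custom index, where labels and positions no longer match.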

Method 2: Create a Series with a Custom Index

When I am working with financial data, I prefer to use specific identifiers as the index instead of default integers.

Using a custom index allows you to access data points by a meaningful name, such as a state code or a date.

This makes your code more readable, and the Series behaves much like a dictionary, but with the benefits of vectorization.

import pandas as pd

# 2023 Revenue in billions (Approximate)

revenue_data = [383.2, 211.9, 574.7, 307.3, 134.9]

company_names = ["AAPL", "MSFT", "AMZN", "GOOGL", "META"]

# Creating Series with custom index

revenue_series = pd.Series(data=revenue_data, index=company_names)

print("US Tech Revenue (in Billions):")

print(revenue_series)

# Accessing data using the index label

print(f"\nMicrosoft Revenue: ${revenue_series['MSFT']}B")

You can refer to the screenshot below to see the output.

I find that using ticker symbols as indices saves me a lot of time when I need to perform lookups across different datasets.

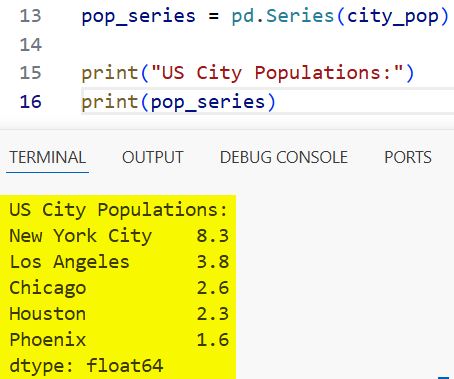
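The lookup benefit is easiest to see when two Series share labels but not order. The sketch below assumes a second Series, `costs`, whose figures are invented purely for illustration; only the revenue numbers come from the example above.

```python
import pandas as pd

# Revenue figures from the example above (billions of USD)
revenue = pd.Series([383.2, 211.9, 574.7], index=["AAPL", "MSFT", "AMZN"])

# Invented cost figures, deliberately listed in a different order
costs = pd.Series([400.0, 150.0, 250.0], index=["AMZN", "MSFT", "AAPL"])

# Subtraction matches values by label, not by position
profit = revenue - costs
print(profit)

# A label present in only one Series produces NaN in the result
partial = revenue + pd.Series([1.0], index=["NFLX"])
print(partial)
```

This label-based alignment is the behavior that caused my early confusion: results follow the index, so a mismatched label silently becomes NaN rather than raising an error.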

Method 3: Initialize a Series from a Dictionary

This is my favorite “shortcut” when I already have mapped data in Python.

Pandas is smart enough to take the dictionary keys and turn them into the index automatically.

If you are pulling data from a JSON API and the parsed response is a flat dictionary, passing it straight to pd.Series is usually the most direct way to convert it.

import pandas as pd

# US City Population estimates (in millions)

city_pop = {

"New York City": 8.3,

"Los Angeles": 3.8,

"Chicago": 2.6,

"Houston": 2.3,

"Phoenix": 1.6

}

# Converting dictionary to Series

pop_series = pd.Series(city_pop)

print("US City Populations:")

print(pop_series)

You can refer to the screenshot below to see the output.

One thing I’ve noticed is that if you provide a dictionary, Pandas respects the insertion order (for Python 3.7+).
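If you need a different order, or only some of the keys, you can pass `index=` alongside the dictionary. A minimal sketch, where "Dallas" is deliberately absent from the dictionary to show what happens to a missing key:

```python
import pandas as pd

city_pop = {"New York City": 8.3, "Los Angeles": 3.8, "Chicago": 2.6}

# Passing index= selects and orders values by the dictionary keys
wanted = ["Chicago", "New York City", "Dallas"]
subset = pd.Series(city_pop, index=wanted)
print(subset)
```

Keys that are not in the dictionary come back as NaN, and keys that are not in `index` are dropped.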

Essential Attributes of the Pandas Series

To truly understand the “Core” of a Series, you need to know how to inspect it.

I frequently use attributes like .values, .index, and .dtype to debug my data before processing it.

Knowing the data type is especially crucial when dealing with mixed datasets to avoid calculation errors.

import pandas as pd

# Gas prices in different US regions

gas_prices = pd.Series([3.45, 4.81, 3.22], index=["Texas", "California", "Florida"])

print(f"Data Type: {gas_prices.dtype}")

print(f"Index Labels: {gas_prices.index}")

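print(f"Underlying Numpy Array: {gas_prices.values}")

For a numeric Series like this one, the .values attribute returns a NumPy ndarray, which is a large part of why Pandas is so fast for mathematical operations. The pandas documentation now recommends .to_numpy() when you explicitly need a NumPy array.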
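A few more attributes are worth checking while debugging. The sketch below reuses the gas price data; the `name` value is a hypothetical label I picked for this example.

```python
import pandas as pd

gas_prices = pd.Series(
    [3.45, 4.81, 3.22],
    index=["Texas", "California", "Florida"],
    name="price_usd_per_gallon",  # hypothetical name, chosen for this sketch
)

print(gas_prices.shape)  # tuple with one element: the number of rows
print(gas_prices.size)   # total number of elements
print(gas_prices.name)   # label that becomes the column name in a DataFrame

# to_numpy() converts the Series data to a NumPy array
arr = gas_prices.to_numpy()
print(type(arr), arr.dtype)
```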

Handle Missing Data in a Series

In real-world data science, you will rarely get a perfect dataset.

In my experience, US Census data or housing market data often contains missing values (NaN).

The Pandas Series has built-in methods to detect and handle these “holes” in your data.

import pandas as pd

import numpy as np

# Average house prices (in thousands of USD) with some missing data

house_prices = pd.Series([450, np.nan, 320, 850, np.nan],

index=["Austin", "Seattle", "Nashville", "San Francisco", "Denver"])

# Checking for nulls

print("Missing values mask:")

print(house_prices.isnull())

# Filling missing values with the average

mean_price = house_prices.mean()

house_prices_filled = house_prices.fillna(mean_price)

print(f"\nFilled Prices (Average used: {mean_price}):")

print(house_prices_filled)

I always recommend checking for nulls before performing any aggregation like .sum() or .mean(), because both skip NaN values by default and will not warn you that data is missing.
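Here is a small sketch of that check, using the same house price data. It counts the gaps, shows how `skipna` changes the result of `.mean()`, and uses `.dropna()` as an alternative to filling.

```python
import numpy as np
import pandas as pd

house_prices = pd.Series(
    [450, np.nan, 320, 850, np.nan],
    index=["Austin", "Seattle", "Nashville", "San Francisco", "Denver"],
)

# Count missing entries before aggregating
n_missing = house_prices.isna().sum()
print(f"Missing entries: {n_missing}")

# mean() skips NaN by default; skipna=False makes the gap visible
print(house_prices.mean())
print(house_prices.mean(skipna=False))

# dropna() removes the incomplete entries instead of filling them
complete = house_prices.dropna()
print(complete)
```

Whether to fill or drop depends on the analysis; filling with the mean keeps every city but pulls the filled values toward the average.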

Vectorized Operations with Series

One reason I stick with Pandas for high-performance tasks is vectorization.

Instead of writing a for loop to multiply every value by 100, you can just multiply the Series itself.

This is much faster and follows the syntax of standard mathematics.

import pandas as pd

# Hourly wages for five US employees

wages = pd.Series([25, 30, 45, 18, 55])

# Applying a 10% raise across the board

new_wages = wages * 1.10

print("Original Wages:")

print(wages.values)

print("\nWages after 10% Raise:")

print(new_wages.values)

I used to use loops for these calculations, but switching to vectorized operations reduced my execution time by nearly 90%.
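To see that the two approaches agree, the sketch below computes the raise both ways and compares them. The `hours` Series is hypothetical data added to show arithmetic between two Series.

```python
import pandas as pd

wages = pd.Series([25, 30, 45, 18, 55])

# Loop version: builds the result one element at a time
looped = pd.Series([w * 1.10 for w in wages])

# Vectorized version: one expression over the whole Series
vectorized = wages * 1.10

# Largest absolute difference between the two results
print((looped - vectorized).abs().max())

# Arithmetic between two Series is also element-wise
hours = pd.Series([40, 35, 40, 20, 45])  # hypothetical weekly hours
weekly_pay = wages * hours
print(weekly_pay.tolist())
```

The speed difference only becomes noticeable on large Series, so measure it on your own data before relying on a specific figure.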

Slice and Filter Data

Filtering a Series based on a condition is something I do dozens of times a day.

For instance, if you have a Series of temperatures across the US, you can filter for only the “freezing” cities in one line.

This is often called “Boolean Indexing,” and it’s a fundamental skill for any data professional.

import pandas as pd

# Temperatures in Fahrenheit

temps = pd.Series([72, 85, 30, 12, 55, 98, 28],

index=["Miami", "Dallas", "Chicago", "Minneapolis", "Atlanta", "Phoenix", "Boston"])

# Filter for temperatures below freezing (32°F)

freezing_cities = temps[temps < 32]

print("Cities experiencing freezing temperatures:")

print(freezing_cities)

This syntax is incredibly intuitive once you realize that temps < 32 creates a Series of True/False values.
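The same idea extends to combined conditions and to slicing by label. A short sketch with the temperature data from above; the thresholds are arbitrary values chosen for the example.

```python
import pandas as pd

temps = pd.Series(
    [72, 85, 30, 12, 55, 98, 28],
    index=["Miami", "Dallas", "Chicago", "Minneapolis", "Atlanta", "Phoenix", "Boston"],
)

# The comparison alone yields a boolean Series
mask = temps < 32
print(mask)

# Combine conditions with & (and) or | (or); each needs its own parentheses
mild = temps[(temps >= 50) & (temps <= 80)]
extreme = temps[(temps < 20) | (temps > 95)]
print(mild)
print(extreme)

# Label-based slicing with .loc includes both endpoints
middle = temps.loc["Dallas":"Minneapolis"]
print(middle)
```

The parentheses matter because `&` and `|` bind more tightly than comparison operators in Python.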

String Operations in a Series

The Pandas Series has a special .str accessor that allows you to perform string manipulations on every element.

I find this extremely helpful when cleaning up messy text data, such as standardizing US State names.

Whether you need to change the case or check for a specific substring, the .str accessor is your best friend.

import pandas as pd

# Messy state names

states = pd.Series([" new york", "texas ", "CALIFORNIA", "florida"])

# Cleaning: strip whitespace and convert to title case

clean_states = states.str.strip().str.title()

print("Cleaned State Names:")

print(clean_states)

I can’t tell you how many times this has saved me from manual data entry errors in large spreadsheets.
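For the substring check mentioned earlier, a minimal sketch using the same state names might look like this:

```python
import pandas as pd

states = pd.Series([" new york", "texas ", "CALIFORNIA", "florida"])
clean = states.str.strip().str.title()

# Substring check returns a boolean Series usable as a filter
has_new = clean.str.contains("New")
print(clean[has_new])

# Other element-wise string methods follow the same pattern
print(clean.str.upper())
print(clean.str.len())
```

Because `.str.contains` returns booleans, it plugs directly into the Boolean Indexing pattern from the previous section.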

Sort a Series

Sometimes the order of your data matters, especially when identifying the “top 5” or “bottom 5” performers.

You can sort a Series by its values or by its index labels.

I usually sort by values when I want to see the highest numbers at the top of my list.

import pandas as pd

# US Broadband speeds by provider (Mbps)

speeds = pd.Series([1200, 500, 940, 300],

index=["Xfinity", "AT&T", "Verizon", "Cox"])

# Sorting by speed descending

fastest = speeds.sort_values(ascending=False)

print("Broadband Providers (Fastest to Slowest):")

print(fastest)

Sorting is a simple step, but it’s essential for creating meaningful visualizations later on.
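Sorting by index labels and picking the top or bottom entries work in much the same way. A sketch with the same broadband data:

```python
import pandas as pd

speeds = pd.Series(
    [1200, 500, 940, 300],
    index=["Xfinity", "AT&T", "Verizon", "Cox"],
)

# Sort alphabetically by label instead of by value
by_name = speeds.sort_index()
print(by_name)

# nlargest / nsmallest return the n highest or lowest values
top_two = speeds.nlargest(2)
bottom_two = speeds.nsmallest(2)
print(top_two)
print(bottom_two)
```

For a quick "top 5" or "bottom 5", `nlargest` and `nsmallest` state the intent more directly than sorting and then slicing.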

Practical Example: Analyze US Sales Data

Let’s put everything together into a more complex scenario.

Imagine you are a regional manager for a retail chain and you have sales data for different US regions.

We will create a Series, filter it, apply a 5% operational cost adjustment, and sort the final result.

import pandas as pd

# Sales data by region (in USD)

regional_sales = pd.Series({

"NorthEast": 150000,

"SouthEast": 125000,

"MidWest": 98000,

"West": 210000,

"Pacific": 185000

})

# 1. Filter regions with sales over $100,000

high_performing = regional_sales[regional_sales > 100000]

# 2. Apply a 5% regional operational cost reduction

net_sales = high_performing * 0.95

# 3. Sort by net sales

final_report = net_sales.sort_values(ascending=False)

print("Final Sales Performance Report (Net):")

for region, amount in final_report.items():
    print(f"{region}: ${amount:,.2f}")

This workflow is the exact logic I use when preparing weekly reports for stakeholders.
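The same three steps can also be written as a single method chain. This is one possible style, not a required one; it assumes the same sales figures and the same 0.95 multiplier as the example above.

```python
import pandas as pd

regional_sales = pd.Series({
    "NorthEast": 150000,
    "SouthEast": 125000,
    "MidWest": 98000,
    "West": 210000,
    "Pacific": 185000,
})

# Same three steps as one chain: filter, scale by 0.95, sort descending
final_report = (
    regional_sales
    .loc[lambda s: s > 100000]
    .mul(0.95)
    .sort_values(ascending=False)
)
print(final_report.round(2))
```

Chaining avoids intermediate variable names, while the step-by-step version is easier to debug because each stage can be printed separately.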

In this tutorial, we have looked at the various ways to create and manipulate a Pandas Core Series.

Whether you are building a Series from scratch or extracting it from a larger dataset, these skills are the foundation of Python data analysis.

I’ve found that taking the time to master these individual components makes working with complex DataFrames much more intuitive.

I hope you found this guide helpful and can apply these techniques to your next project!


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.