Filtering data is one of those tasks I find myself doing every single day. It is the bread and butter of data analysis.

When I first started using Pandas, I often struggled with the syntax for complex filters. It felt a bit different from standard Python logic.

Once you get the hang of using the right operators and brackets, it becomes second nature. It’s like having a superpower for your datasets.

In this tutorial, I’ll show you exactly how I handle multiple conditions in Pandas. We’ll use real-world scenarios so you can see how this works in practice.

The Core Operators for Multiple Conditions

To filter a DataFrame based on more than one criterion, you need to use bitwise operators.

In standard Python, we use and, or, and not. However, in Pandas, we use:

- & for AND (both conditions must be true)

- | for OR (at least one condition must be true)

- ~ for NOT (the condition must be false)

I’ve learned the hard way that you must wrap each condition in parentheses (). If you forget them, Pandas gets confused about the order of operations and throws an error.

Method 1: Use the Boolean Indexing with & (AND)

I use this method most frequently when I need to narrow down a dataset based on strict requirements.

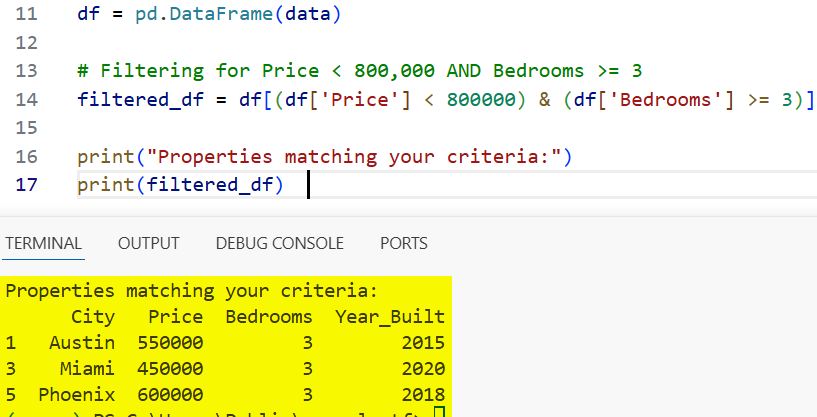

Imagine we have a dataset of California real estate listings. We want to find homes that are priced under $800,000 AND have at least 3 bedrooms.

Here is how I would write the code for this:

import pandas as pd

# Creating a sample US Real Estate dataset

data = {

'City': ['San Francisco', 'Austin', 'Seattle', 'Miami', 'Dallas', 'Phoenix'],

'Price': [1200000, 550000, 850000, 450000, 350000, 600000],

'Bedrooms': [2, 3, 4, 3, 2, 3],

'Year_Built': [1950, 2015, 1998, 2020, 2010, 2018]

}

df = pd.DataFrame(data)

# Filtering for Price < 800,000 AND Bedrooms >= 3

filtered_df = df[(df['Price'] < 800000) & (df['Bedrooms'] >= 3)]

print("Properties matching your criteria:")

print(filtered_df)You can see the output in the screenshot below.

In this example, the & operator ensures that only the rows meeting both constraints are returned.

Method 2: Filter with the | (OR) Operator

Sometimes your criteria are more flexible. You might want to see data that hits one target or another.

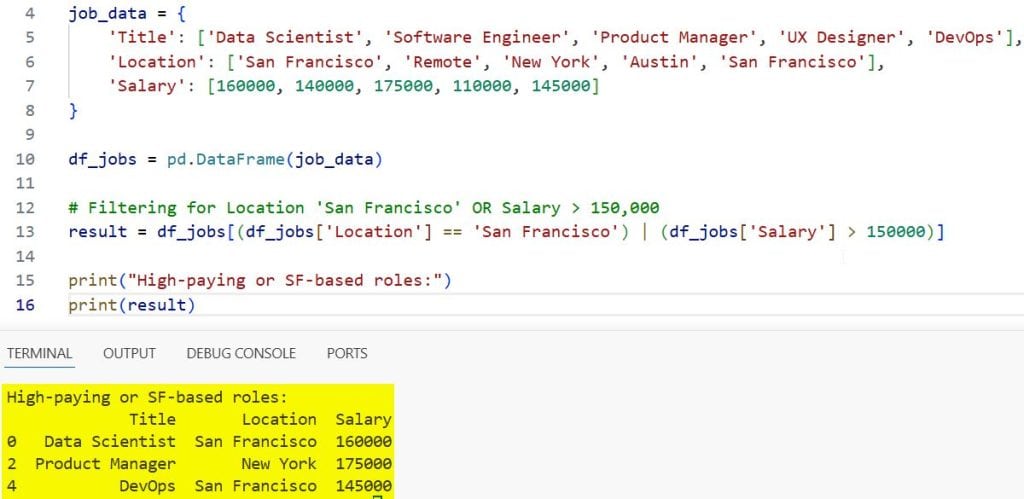

Suppose you are looking at a list of tech job openings in the US. You want to see roles that are either in “San Francisco” OR pay more than $150,000.

I find the | operator perfect for building these “inclusive” lists.

import pandas as pd

# Sample Tech Salaries dataset

job_data = {

'Title': ['Data Scientist', 'Software Engineer', 'Product Manager', 'UX Designer', 'DevOps'],

'Location': ['San Francisco', 'Remote', 'New York', 'Austin', 'San Francisco'],

'Salary': [160000, 140000, 175000, 110000, 145000]

}

df_jobs = pd.DataFrame(job_data)

# Filtering for Location 'San Francisco' OR Salary > 150,000

result = df_jobs[(df_jobs['Location'] == 'San Francisco') | (df_jobs['Salary'] > 150000)]

print("High-paying or SF-based roles:")

print(result)You can see the output in the screenshot below.

The pipe | The symbol tells Pandas to include the row if either side of the expression is true.

Method 3: Use the .query() Method for Better Readability

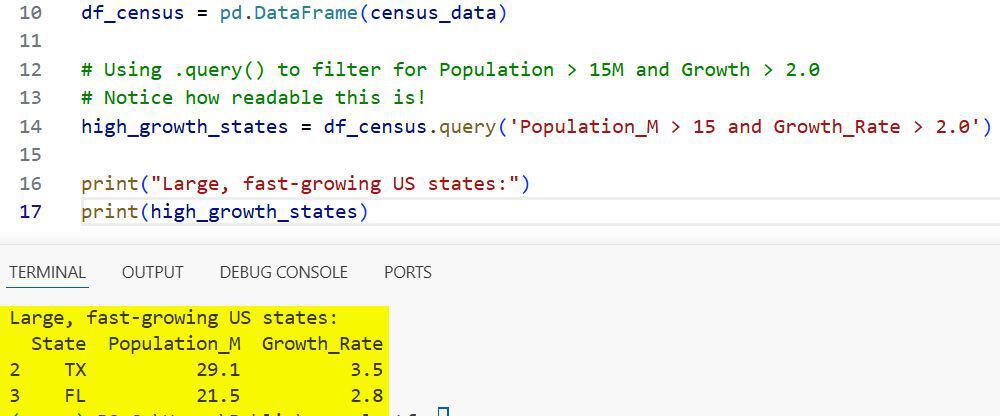

As my DataFrames get larger and my conditions get more complex, the syntax with brackets and quotes can get messy.

This is where I prefer using the .query() method. It allows you to write your conditions as a string, which looks much cleaner.

One thing I love about query() is that you can use the literal words and and or instead of & and |.

import pandas as pd

# Sample US Census style data

census_data = {

'State': ['NY', 'CA', 'TX', 'FL', 'WA', 'IL'],

'Population_M': [19.5, 39.2, 29.1, 21.5, 7.7, 12.6],

'Growth_Rate': [0.5, 1.2, 3.5, 2.8, 1.1, -0.2]

}

df_census = pd.DataFrame(census_data)

# Using .query() to filter for Population > 15M and Growth > 2.0

# Notice how readable this is!

high_growth_states = df_census.query('Population_M > 15 and Growth_Rate > 2.0')

print("Large, fast-growing US states:")

print(high_growth_states)You can see the output in the screenshot below.

The code above is much easier for a teammate to read during a code review. It feels almost like writing SQL.

Method 4: Filter with .isin() for Multiple Values

I often run into situations where I need to filter a column against a list of specific values.

Instead of writing five different OR conditions, I use the .isin() method. It is much more efficient.

Let’s say we have a dataset of US retail stores and we only want to see data for stores located in “New York”, “Texas”, or “California”.

import pandas as pd

# Sample Retail Store data

retail_data = {

'StoreID': [101, 102, 103, 104, 105, 106],

'State': ['NY', 'NJ', 'TX', 'CA', 'FL', 'TX'],

'Sales_k': [500, 300, 450, 700, 250, 400]

}

df_retail = pd.DataFrame(retail_data)

# List of states we are interested in

target_states = ['NY', 'TX', 'CA']

# Filtering using .isin()

filtered_retail = df_retail[df_retail['State'].isin(target_states)]

print("Sales data for target states:")

print(filtered_retail)If you combine this with other conditions, you can create very powerful filters without the code becoming a wall of text.

Method 5: Use the ~ (NOT) Operator to Exclude Data

There are times when it’s easier to define what you don’t want. I use the tilde ~ operator to negate a condition.

Imagine you have a list of US flight data and you want to exclude any flights that were “Cancelled” or “Delayed”.

import pandas as pd

# Sample Flight Status data

flight_data = {

'FlightNum': ['AA101', 'DL202', 'UA303', 'SW404', 'B6505'],

'Status': ['On Time', 'Delayed', 'On Time', 'Cancelled', 'On Time'],

'Destination': ['JFK', 'LAX', 'ORD', 'DFW', 'SFO']

}

df_flights = pd.DataFrame(flight_data)

# Exclude Delayed and Cancelled flights

# We use ~ to say "NOT in this list"

active_flights = df_flights[~df_flights['Status'].isin(['Delayed', 'Cancelled'])]

print("Flights currently on schedule:")

print(active_flights)The ~ operator simply flips the True/False values of your filter.

Advanced Tip: Filter with String Methods

I frequently have to filter based on partial text. For example, finding all US cities that start with the word “San”.

You can combine string methods like .str.startswith() with other numerical conditions.

import pandas as pd

city_data = {

'City': ['San Diego', 'San Jose', 'Los Angeles', 'San Francisco', 'Sacramento'],

'Population': [1381000, 1013000, 3898000, 815000, 524000]

}

df_cities = pd.DataFrame(city_data)

# Filter for cities starting with 'San' AND population over 1 million

san_cities = df_cities[(df_cities['City'].str.startswith('San')) & (df_cities['Population'] > 1000000)]

print("Large 'San' cities in California:")

print(san_cities)Deal with Null Values in Multiple Filters

One thing that tripped me up early on was how Pandas handles NaN (null) values during filtering.

If a column has missing data, your condition might return NaN instead of True or False, which can lead to empty results or errors.

I always recommend using .fillna() or checking for nulls with .notnull() if you suspect your dataset is “dirty.”

Performance Considerations for Large Datasets

When working with millions of rows of US financial data, I’ve noticed that .query() can sometimes be faster than traditional boolean indexing.

This is because .query() uses a library called numexpr under the hood, which is optimized for large-scale element-wise operations.

However, for small to medium datasets, the difference is negligible. I usually choose the method that makes the code the most readable.

I hope this guide helps you feel more confident when slicing and dicing your data in Pandas.

Filtering with multiple conditions is something you will do constantly. My advice is to keep your code clean and always double-check those parentheses!

You may read:

- How to Use Pandas pivot_table in Python

- How to Check if a Column Exists in a Pandas DataFrame

- How to Create a Stacked Bar Plot in Pandas

- How to Create a Scatter Plot in Pandas

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.