In my years of working with Python and data analysis, I’ve found that data rarely comes in a perfect format. Most of the time, I receive data from web APIs or JSON files as a list of dictionaries.

Converting this data into a structured format is the first step in any real-world project. Pandas makes this process incredibly easy, and today I’ll show you exactly how I handle it.

The Standard Approach Using pd.DataFrame()

Whenever I need to move quickly, I rely on the built-in DataFrame constructor.

It is the most direct way to transform your list into a tabular format.

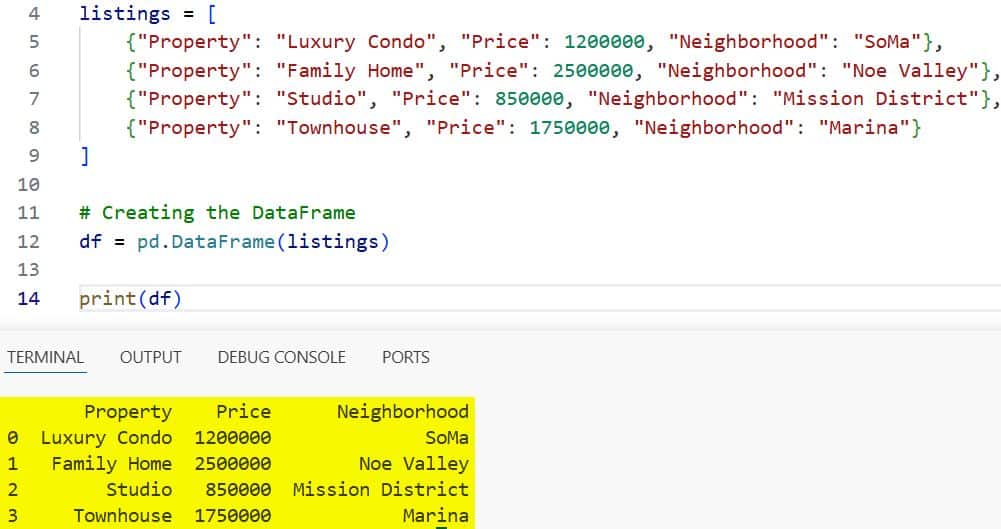

Suppose we are tracking real estate listings in San Francisco. Each dictionary represents a single property.

```python
import pandas as pd

# List of dictionaries representing SF Real Estate
listings = [
    {"Property": "Luxury Condo", "Price": 1200000, "Neighborhood": "SoMa"},
    {"Property": "Family Home", "Price": 2500000, "Neighborhood": "Noe Valley"},
    {"Property": "Studio", "Price": 850000, "Neighborhood": "Mission District"},
    {"Property": "Townhouse", "Price": 1750000, "Neighborhood": "Marina"},
]

# Creating the DataFrame
df = pd.DataFrame(listings)

print(df)
```

You can refer to the screenshot below to see the output.

In this example, Pandas automatically uses the keys of the dictionaries as column headers. I like this method because it requires very little configuration to get started.
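To sanity-check a conversion, you can turn the DataFrame back into the same shape with `to_dict(orient="records")`. This short sketch reuses the listings data from above. It also prints the shape and column labels pandas inferred, which is a quick way to confirm that each dictionary became one row and each key one column.

```python
import pandas as pd

listings = [
    {"Property": "Luxury Condo", "Price": 1200000, "Neighborhood": "SoMa"},
    {"Property": "Family Home", "Price": 2500000, "Neighborhood": "Noe Valley"},
    {"Property": "Studio", "Price": 850000, "Neighborhood": "Mission District"},
    {"Property": "Townhouse", "Price": 1750000, "Neighborhood": "Marina"},
]

df = pd.DataFrame(listings)

# One row per dictionary, one column per key (in first-seen order)
print(df.shape)          # (4, 3)
print(list(df.columns))  # ['Property', 'Price', 'Neighborhood']

# Convert back to a list of dictionaries, e.g. to send to an API
records = df.to_dict(orient="records")
print(records[0])
```

The round trip is handy when the DataFrame is only an intermediate step and the next system expects JSON-like records again.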

Handle Missing Data in Dictionaries

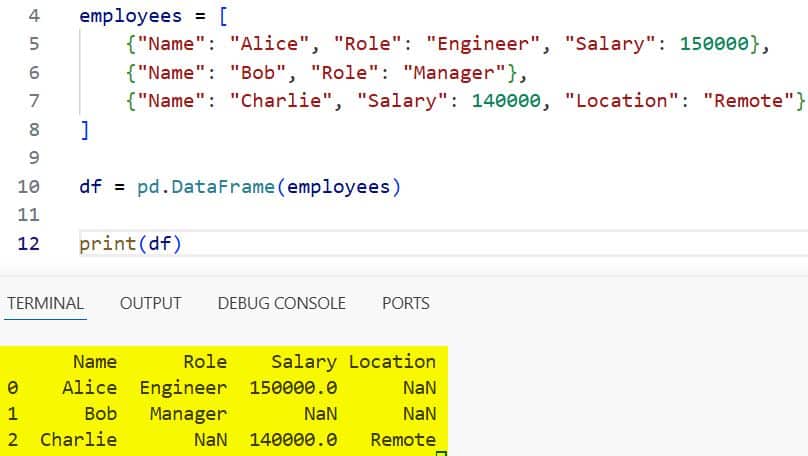

In real-world datasets, not every dictionary will have the same keys. I often encounter “jagged” data where some entries are missing specific attributes.

Let’s look at a scenario involving tech employee records in Seattle.

```python
import pandas as pd

# Data with missing keys
employees = [
    {"Name": "Alice", "Role": "Engineer", "Salary": 150000},
    {"Name": "Bob", "Role": "Manager"},
    {"Name": "Charlie", "Salary": 140000, "Location": "Remote"},
]

df = pd.DataFrame(employees)

print(df)
```

You can refer to the screenshot below to see the output.

Pandas builds the columns from the union of all keys, in the order they first appear, and fills every missing value with NaN (Not a Number). I find this helpful because it preserves the integrity of the dataset without crashing the script.
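One side effect is worth knowing. A numeric column with gaps is upcast to float64, because NaN is a float. Here is a sketch of how you might tidy the employee data afterwards. It uses pandas' nullable `Int64` dtype to keep Salary as whole numbers, and `fillna` with a per-column dictionary for the text columns. The fill values "Unknown" and "On-site" are placeholders chosen for illustration, not part of the original data.

```python
import pandas as pd

employees = [
    {"Name": "Alice", "Role": "Engineer", "Salary": 150000},
    {"Name": "Bob", "Role": "Manager"},
    {"Name": "Charlie", "Salary": 140000, "Location": "Remote"},
]

df = pd.DataFrame(employees)
print(df["Salary"].dtype)  # float64, because Bob's salary is NaN

# Nullable integer dtype: whole numbers plus <NA> for the gap
df["Salary"] = df["Salary"].astype("Int64")

# Fill text gaps per column (placeholder values, for illustration only)
df = df.fillna({"Role": "Unknown", "Location": "On-site"})

print(df)
```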

Create a DataFrame from a List of Dictionaries Using from_dict

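Sometimes I need more control over how the data is oriented. Strictly speaking, from_dict is built for a dictionary rather than a list. Its orient parameter decides whether the outer keys become column labels (the default, orient="columns") or row labels (orient="index").

When you pass it a list of dictionaries, as in the example below, it simply hands the data to the regular constructor. The result is identical to pd.DataFrame(sales_data).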

```python
import pandas as pd

# E-commerce sales data for NYC
sales_data = [
    {"Order_ID": 101, "Item": "Laptop", "Amount": 1200},
    {"Order_ID": 102, "Item": "Monitor", "Amount": 300},
    {"Order_ID": 103, "Item": "Keyboard", "Amount": 100},
]

df = pd.DataFrame.from_dict(sales_data)

print(df)
```

While it looks similar to the standard constructor, its columns parameter is not a filter. With the default orient it raises a ValueError, and it is only accepted together with orient="index". To filter columns, pass columns to pd.DataFrame() instead.
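from_dict earns its place when the data is a dictionary keyed by an ID. In this sketch, the `orders` dictionary is the NYC sales data reshaped for illustration. `orient="index"` turns each key into a row label, and `columns` names the positional values. The last part shows the ValueError raised when `columns` is combined with the default orientation.

```python
import pandas as pd

# Same NYC sales, keyed by Order_ID: {id: [Item, Amount]}
orders = {
    101: ["Laptop", 1200],
    102: ["Monitor", 300],
    103: ["Keyboard", 100],
}

# Keys become the index; columns names the positional values
df = pd.DataFrame.from_dict(orders, orient="index", columns=["Item", "Amount"])
df.index.name = "Order_ID"
print(df)

# With the default orient="columns", the columns parameter is rejected
rejected = False
try:
    pd.DataFrame.from_dict(orders, columns=["Item", "Amount"])
except ValueError as err:
    rejected = True
    print("ValueError:", err)
```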

Specify Column Order and Filtering

I often find that my dictionaries contain more data than I actually need for an analysis. You can pass a specific list of columns to the constructor to filter and reorder them.

Let’s use an example of national park visitors in the USA.

```python
import pandas as pd

parks = [
    {"Park": "Yellowstone", "State": "WY", "Visitors": 3800000, "Established": 1872},
    {"Park": "Zion", "State": "UT", "Visitors": 4500000, "Established": 1919},
    {"Park": "Grand Canyon", "State": "AZ", "Visitors": 5900000, "Established": 1919},
]

# I only want Park and Visitors, and I want Visitors first
df = pd.DataFrame(parks, columns=["Visitors", "Park"])

print(df)
```

By defining the columns, I can ignore the “State” and “Established” keys entirely.
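One caveat: the columns list does not have to match the keys. A name that appears in none of the dictionaries does not raise an error. That could be a typo, or the hypothetical "Acres" field below. Pandas silently adds it as an all-NaN column instead. This sketch shows the behavior and a simple guard that compares the requested names against the keys actually present.

```python
import pandas as pd

parks = [
    {"Park": "Yellowstone", "State": "WY", "Visitors": 3800000, "Established": 1872},
    {"Park": "Zion", "State": "UT", "Visitors": 4500000, "Established": 1919},
    {"Park": "Grand Canyon", "State": "AZ", "Visitors": 5900000, "Established": 1919},
]

# "Acres" is not a key in any dictionary (hypothetical field)
wanted = ["Park", "Acres"]
df = pd.DataFrame(parks, columns=wanted)
print(df)  # Acres comes back as NaN in every row

# Optional guard: report requested columns that are absent from the data
available = set().union(*(p.keys() for p in parks))
missing = [c for c in wanted if c not in available]
print("Missing columns:", missing)  # Missing columns: ['Acres']
```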

Deal with Nested Dictionaries

One challenge I frequently face is when a dictionary contains another dictionary. This is common when working with cloud service logs or complex JSON.

To handle this, I use json_normalize from the Pandas library.

```python
import pandas as pd

# Cloud Server Logs
logs = [
    {"Instance": "Server-01", "Details": {"Region": "US-East", "Status": "Active"}},
    {"Instance": "Server-02", "Details": {"Region": "US-West", "Status": "Maintenance"}},
]

# Flattening the nested structure
df = pd.json_normalize(logs)

print(df)
```

This “flattens” the data, creating columns like Details.Region and Details.Status. In my experience, this is the cleanest way to avoid manual loops to extract nested values.
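Two json_normalize parameters are worth knowing here. `sep` replaces the dot between parent and child keys; names like `Details_Region` are easier to type and work with attribute access. When the nested part is a list of records rather than a single dictionary, `record_path` expands it into one row per record, and `meta` copies parent fields onto each row. The `orders` data below is made up for illustration.

```python
import pandas as pd

logs = [
    {"Instance": "Server-01", "Details": {"Region": "US-East", "Status": "Active"}},
    {"Instance": "Server-02", "Details": {"Region": "US-West", "Status": "Maintenance"}},
]

# Use "_" instead of "." between parent and child keys
flat = pd.json_normalize(logs, sep="_")
print(list(flat.columns))  # ['Instance', 'Details_Region', 'Details_Status']

# Illustrative data: each order holds a list of line items
orders = [
    {"Customer": "Acme", "Items": [{"SKU": "A1", "Qty": 2}, {"SKU": "B2", "Qty": 1}]},
    {"Customer": "Globex", "Items": [{"SKU": "C3", "Qty": 5}]},
]

# One row per line item; meta copies the parent Customer onto each row
items = pd.json_normalize(orders, record_path="Items", meta=["Customer"])
print(items)
```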

Performance Considerations for Large Lists

When I am dealing with millions of records, performance becomes a priority.

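Building a DataFrame from a list of dictionaries is often slower than building it from a list of lists or a NumPy array. Part of the reason is that pandas has to look up every key in every row.

However, if you already have the list of dicts, passing it directly to pd.DataFrame() is usually the simplest option, and pandas handles it reasonably efficiently.

If I notice a bottleneck, I sometimes convert the list to a dictionary of lists first.

This “columnar” format, with one list per column, is closer to how pandas stores data, so the constructor itself has less work to do. The conversion loop costs time too, though. The biggest gain comes when you can collect the data column by column from the start.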

```python
import pandas as pd

# Large dataset simulation
data = [
    {"ID": 1, "Val": 10},
    {"ID": 2, "Val": 20},
    # Imagine 1 million rows here...
]

# Faster if converted to dict of lists
fast_data = {
    "ID": [d["ID"] for d in data],
    "Val": [d["Val"] for d in data],
}

df = pd.DataFrame(fast_data)
```

I only suggest this if you are working with extremely large datasets where every second counts.
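Your keys may not be known in advance. In that case, a small helper like the hypothetical `records_to_columns` below can build the dict of lists generically. It uses `dict.get`, so a missing key becomes None, which pandas shows as NaN in numeric columns. Because the conversion itself takes time, the sketch times both paths with `timeit` so you can judge on your own data. Results vary by machine and pandas version, so treat them as a measurement rather than a guarantee.

```python
import timeit

import pandas as pd


def records_to_columns(records):
    """Hypothetical helper: turn a list of dicts into a dict of lists."""
    # Collect every key, keeping the order in which keys first appear
    keys = list(dict.fromkeys(key for row in records for key in row))
    # dict.get returns None for a missing key
    return {key: [row.get(key) for row in records] for key in keys}


# Simulated records; increase n to approach your real data size
n = 100_000
data = [{"ID": i, "Val": i * 10} for i in range(n)]

df_rows = pd.DataFrame(data)
df_cols = pd.DataFrame(records_to_columns(data))
print(df_rows.equals(df_cols))  # True

if __name__ == "__main__":
    # Time the direct constructor vs. conversion + constructor
    t_rows = timeit.timeit(lambda: pd.DataFrame(data), number=3)
    t_cols = timeit.timeit(lambda: pd.DataFrame(records_to_columns(data)), number=3)
    print(f"list of dicts:        {t_rows:.3f} s")
    print(f"convert then columns: {t_cols:.3f} s")
```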

Handle Date Strings During Conversion

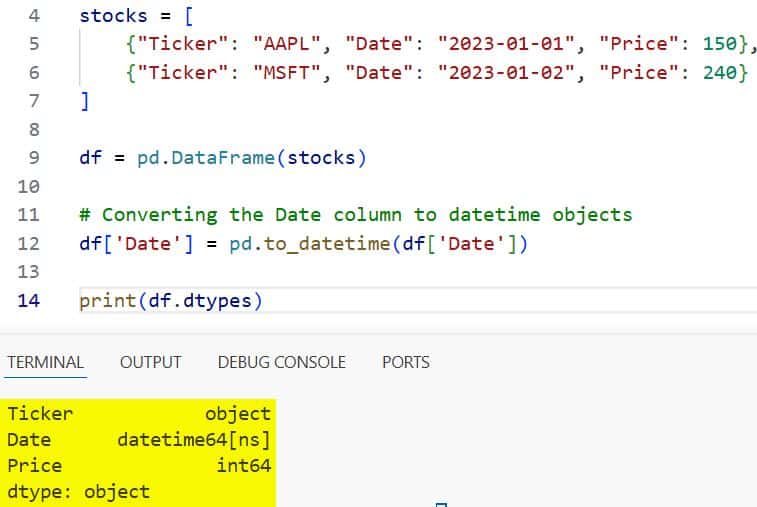

By default, pandas does not parse date strings. A Date column built from a list of dicts keeps plain strings (dtype object in most pandas versions). When I create a DataFrame from a list of dicts containing dates, I always add an extra step.

Using pd.to_datetime ensures that I can perform time-series analysis later.

```python
import pandas as pd

# Stock market data
stocks = [
    {"Ticker": "AAPL", "Date": "2023-01-01", "Price": 150},
    {"Ticker": "MSFT", "Date": "2023-01-02", "Price": 240},
]

df = pd.DataFrame(stocks)

# Converting the Date column to datetime objects
df['Date'] = pd.to_datetime(df['Date'])

print(df.dtypes)
```

You can refer to the screenshot below to see the output.

Doing this right at the beginning saves me from troubleshooting errors when I try to plot the data.
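Real feeds are rarely this clean. Here is a sketch, assuming the dates follow the ISO YYYY-MM-DD layout. Passing `format` makes the parsing explicit. `errors="coerce"` turns an unparseable entry into NaT instead of raising; the made-up "N/A" row below shows this. Once the column is datetime64, the `.dt` accessor exposes parts like the weekday.

```python
import pandas as pd

stocks = [
    {"Ticker": "AAPL", "Date": "2023-01-01", "Price": 150},
    {"Ticker": "MSFT", "Date": "2023-01-02", "Price": 240},
    {"Ticker": "GOOG", "Date": "N/A", "Price": 90},  # illustrative bad value
]

df = pd.DataFrame(stocks)

# Parse with an explicit format; bad strings become NaT instead of raising
df["Date"] = pd.to_datetime(df["Date"], format="%Y-%m-%d", errors="coerce")

print(df.dtypes)
# 2023-01-01 was a Sunday; the NaT row gives NaN
print(df["Date"].dt.day_name())
```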

Set an Index from the List of Dictionaries

Often, one of the keys in my dictionary is a unique identifier, like an SSN or a Serial Number.

Instead of having a default numeric index, I prefer to set one of the columns as the index.

```python
import pandas as pd

# US Car Manufacturing Data
cars = [
    {"VIN": "1A2B", "Make": "Tesla", "Model": "Model 3"},
    {"VIN": "3C4D", "Make": "Ford", "Model": "F-150"},
    {"VIN": "5E6F", "Make": "Chevrolet", "Model": "Silverado"},
]

df = pd.DataFrame(cars).set_index("VIN")

print(df)
```

This makes it much easier for me to look up specific rows later in my workflow.
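Here is what that lookup looks like with the cars data above: `.loc` takes the VIN directly. By default, set_index does not insist on unique labels. When the key is supposed to be unique, passing `verify_integrity=True` makes duplicates fail loudly. The duplicate VIN in the second list is contrived to show the error.

```python
import pandas as pd

cars = [
    {"VIN": "1A2B", "Make": "Tesla", "Model": "Model 3"},
    {"VIN": "3C4D", "Make": "Ford", "Model": "F-150"},
    {"VIN": "5E6F", "Make": "Chevrolet", "Model": "Silverado"},
]

df = pd.DataFrame(cars).set_index("VIN", verify_integrity=True)

# Look up a row by its VIN instead of a numeric position
print(df.loc["3C4D"])
print(df.loc["3C4D", "Make"])  # Ford

# Contrived duplicate VIN to show what verify_integrity catches
dupes = cars + [{"VIN": "1A2B", "Make": "Tesla", "Model": "Model Y"}]
duplicate_rejected = False
try:
    pd.DataFrame(dupes).set_index("VIN", verify_integrity=True)
except ValueError as err:
    duplicate_rejected = True
    print("Duplicate key:", err)
```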

Convert Data Types on the Fly

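Sometimes the data types pandas infers aren’t what I intended. ZIP codes are the classic case. Stored as an integer, 02108 becomes 2108, and the leading zero is lost before pandas ever sees it. That is why the ZIP codes below are kept as strings in the source data; pandas preserves them as strings.

I still use the astype method immediately after creating the DataFrame to make the intended type explicit.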

```python
import pandas as pd

# Customer data with Zip Codes (stored as strings to keep leading zeros)
customers = [
    {"User": "John", "Zip": "02108"},
    {"User": "Jane", "Zip": "90210"},
]

df = pd.DataFrame(customers)

# Zip is already a string; astype(str) makes the intended type explicit
df['Zip'] = df['Zip'].astype(str)

print(df)
```

In my work, ensuring data types are correct from the start prevents major headaches during the reporting phase.
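If the ZIP codes have already arrived as integers, the zero has to be restored rather than preserved. Here is a sketch, assuming five-digit US ZIP codes: convert to string, then left-pad with `str.zfill(5)`.

```python
import pandas as pd

# ZIP codes that were stored as integers upstream: 02108 is now 2108
customers = [
    {"User": "John", "Zip": 2108},
    {"User": "Jane", "Zip": 90210},
]

df = pd.DataFrame(customers)
print(df["Zip"].dtype)  # int64

# astype(str) alone gives "2108"; zfill(5) pads it back to five digits
df["Zip"] = df["Zip"].astype(str).str.zfill(5)

print(df["Zip"].tolist())  # ['02108', '90210']
```

`astype(str)` by itself cannot recover the missing digit, because an integer has no memory of leading zeros. The padding step is what restores them.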

Add New Columns After Creation

It is very rare that I stop once the DataFrame is created. Usually, I need to add calculated columns based on the existing dictionary data.

Suppose we are calculating the tax for retail sales in Texas.

```python
import pandas as pd

# Retail Transactions
transactions = [
    {"Item": "Desk", "Price": 500},
    {"Item": "Chair", "Price": 150},
]

df = pd.DataFrame(transactions)

# Adding an 8.25% Sales Tax column
df['Tax'] = df['Price'] * 0.0825

print(df)
```

The flexibility of Pandas allows me to grow the dataset as the project requirements change.
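When you add several derived columns, you can chain them with `assign`. It returns a new DataFrame, and a later keyword can refer to a column defined earlier in the same call. The `TAX_RATE` constant and the `Total` column are illustrative additions. `round(2)` keeps the tax in whole cents.

```python
import pandas as pd

TAX_RATE = 0.0825  # the 8.25% Texas rate used above

transactions = [
    {"Item": "Desk", "Price": 500},
    {"Item": "Chair", "Price": 150},
]

df = pd.DataFrame(transactions).assign(
    # Tax rounded to cents
    Tax=lambda d: (d["Price"] * TAX_RATE).round(2),
    # Total can use Tax because assign evaluates keywords in order
    Total=lambda d: d["Price"] + d["Tax"],
)

print(df)
```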

Conclusion

Creating a Pandas DataFrame from a list of dictionaries is a fundamental skill for any Python developer.

Whether you are handling missing values, flattening nested data, or optimizing for speed, the tools are built right in.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.