In my Python developer journey, I have spent a massive amount of time cleaning messy datasets. One of the most frequent tasks I encounter is removing unnecessary data to keep my analysis focused.

In Pandas, dropping rows based on a specific column value is a fundamental skill that every data professional needs to master.

I have found that there isn’t just one way to do this; the “best” method usually depends on how complex your conditions are.

In this tutorial, I will walk you through the various techniques I use daily to filter and drop rows efficiently.

Use the Boolean Indexing Method (My Go-To Approach)

When I need to quickly remove rows, I usually reach for Boolean indexing first. It is the most “Pandas-native” way to handle filtering.

Instead of thinking about “deleting,” I think about “selecting” the data I want to keep.

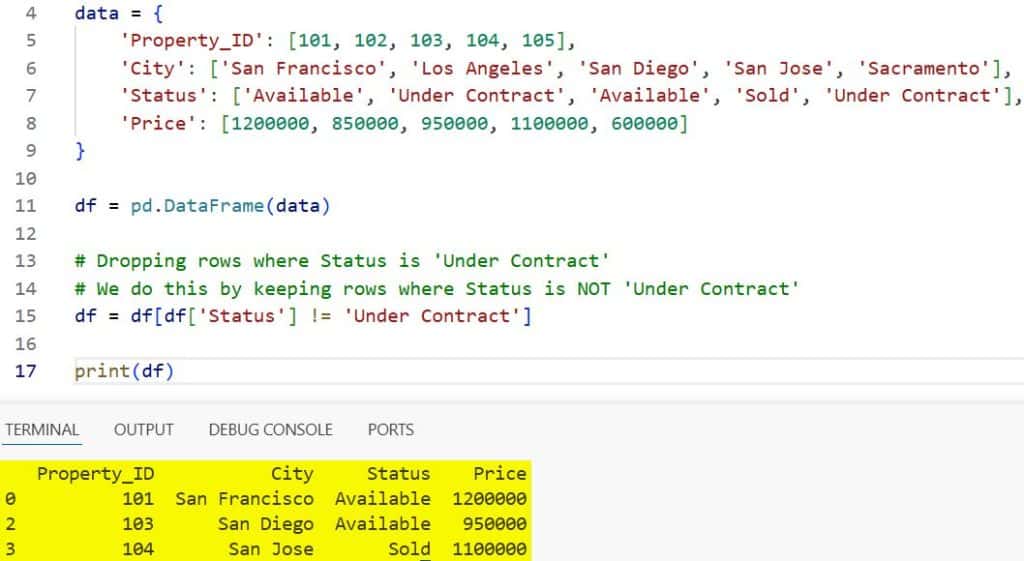

Suppose you have a dataset of California real estate listings, and you want to remove all properties that are currently “Under Contract.”

import pandas as pd

# Creating a sample dataset of US Real Estate

data = {

'Property_ID': [101, 102, 103, 104, 105],

'City': ['San Francisco', 'Los Angeles', 'San Diego', 'San Jose', 'Sacramento'],

'Status': ['Available', 'Under Contract', 'Available', 'Sold', 'Under Contract'],

'Price': [1200000, 850000, 950000, 1100000, 600000]

}

df = pd.DataFrame(data)

# Dropping rows where Status is 'Under Contract'

# We do this by keeping rows where Status is NOT 'Under Contract'

df = df[df['Status'] != 'Under Contract']

print(df)I executed the above example code and added the screenshot below.

In this example, the != operator tells Pandas to keep everything except the rows we want to drop.
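When the condition spans more than one column, the same pattern extends with & (and) and | (or), with each comparison wrapped in parentheses. Here is a minimal sketch that reuses the sample listings; the 700,000 price cutoff and the names to_drop and filtered are my own assumptions for illustration.

```python
import pandas as pd

df = pd.DataFrame({
    'Property_ID': [101, 102, 103, 104, 105],
    'Status': ['Available', 'Under Contract', 'Available', 'Sold', 'Under Contract'],
    'Price': [1200000, 850000, 950000, 1100000, 600000],
})

# Rows to drop: under contract AND priced below a hypothetical cutoff
to_drop = (df['Status'] == 'Under Contract') & (df['Price'] < 700000)

# Keep every row that does not match the drop condition
filtered = df[~to_drop]
print(filtered)
```

Building the mask in its own variable first makes it easy to inspect which rows match before anything is removed.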

Drop Rows Using the .drop() Index Method

Sometimes, I prefer to identify the index of the rows that meet a condition and then drop them explicitly.

This is very helpful when I want to see which rows are about to be deleted before I actually execute the command.

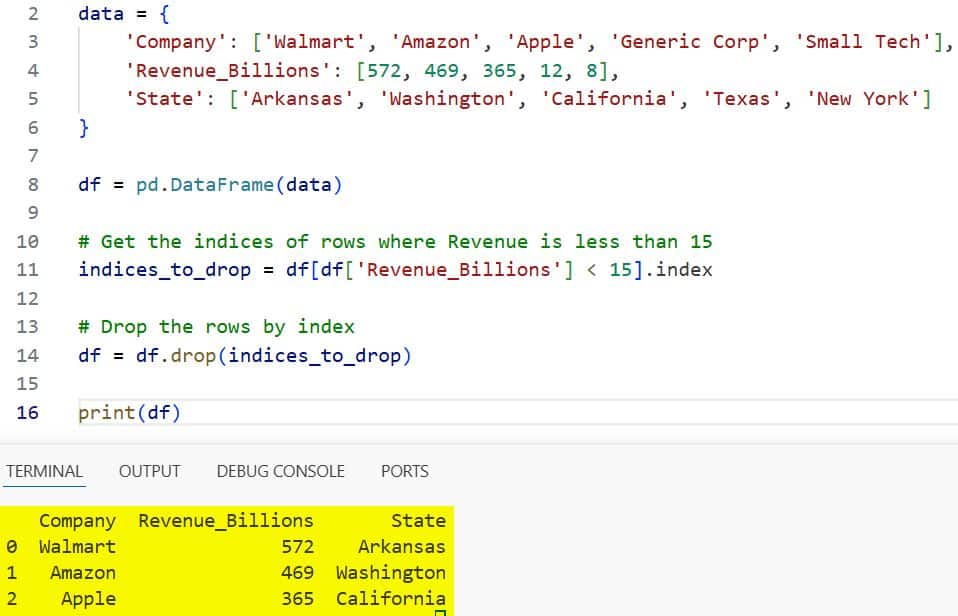

Let’s say we are looking at a list of Fortune 500 companies and want to remove any company with a revenue of less than $15 Billion.

# Sample Fortune 500 Data

data = {

'Company': ['Walmart', 'Amazon', 'Apple', 'Generic Corp', 'Small Tech'],

'Revenue_Billions': [572, 469, 365, 12, 8],

'State': ['Arkansas', 'Washington', 'California', 'Texas', 'New York']

}

df = pd.DataFrame(data)

# Get the indices of rows where Revenue is less than 15

indices_to_drop = df[df['Revenue_Billions'] < 15].index

# Drop the rows by index

df = df.drop(indices_to_drop)

print(df)I executed the above example code and added the screenshot below.

I like this method because it separates the logic of “finding” the data from the action of “removing” it.
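To make the “look before you delete” step concrete, here is a small sketch on the same sample data. The variable name remaining and the optional reset_index call are my additions, not part of the example above.

```python
import pandas as pd

df = pd.DataFrame({
    'Company': ['Walmart', 'Amazon', 'Apple', 'Generic Corp', 'Small Tech'],
    'Revenue_Billions': [572, 469, 365, 12, 8],
})

indices_to_drop = df[df['Revenue_Billions'] < 15].index

# Inspect the rows that are about to be removed
print(df.loc[indices_to_drop])

# Drop them, then rebuild a clean 0..n-1 index (optional)
remaining = df.drop(indices_to_drop).reset_index(drop=True)
print(remaining)
```

Keep in mind that .drop() works on index labels, so if the index contains duplicate labels, every row sharing a dropped label is removed.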

Handle Multiple Values with .isin()

In many of my projects, I don’t just want to drop one specific value; I want to drop a whole list of them.

Chaining several “or” conditions with the | operator can make your code cluttered and hard to read.

If I am analyzing US Census data and want to exclude specific states like ‘New York’ and ‘Florida’, I use the .isin() method combined with the tilde (~) operator.

import pandas as pd

# Sample US Census Data
data = {
    'Name': ['John', 'Sarah', 'Mike', 'Emma', 'David'],
    'State': ['New York', 'Texas', 'Florida', 'California', 'Illinois'],
    'Age': [28, 34, 45, 31, 52]
}

df = pd.DataFrame(data)

# Define the states to exclude
exclude_states = ['New York', 'Florida']

# Use ~ to keep rows NOT in the exclude list
df = df[~df['State'].isin(exclude_states)]

print(df)

The ~ operator inverts a Boolean mask (an element-wise “NOT”), which keeps this code clean and easy for others to understand.
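For comparison, here is a rough sketch of the chained version next to the .isin() version on the same sample data. The names chained and compact are placeholders I chose for this illustration.

```python
import pandas as pd

df = pd.DataFrame({
    'Name': ['John', 'Sarah', 'Mike', 'Emma', 'David'],
    'State': ['New York', 'Texas', 'Florida', 'California', 'Illinois'],
})

# Chained version: one comparison per value, joined with &
chained = df[(df['State'] != 'New York') & (df['State'] != 'Florida')]

# .isin() version: the list can grow without adding more conditions
compact = df[~df['State'].isin(['New York', 'Florida'])]

# Both approaches select the same rows
print(chained.equals(compact))
```

With two values the difference is small, but every extra value adds another parenthesized comparison to the chained form, while the .isin() form only needs a longer list.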

Use the .query() Method for Readability

If you are coming from a SQL background, you might find the standard Pandas syntax a bit jarring.

I often use the .query() method when I want my code to read more like a natural English sentence.

Imagine we are looking at a dataset of internal flights within the USA, and we want to drop any flight that has a delay of more than 60 minutes.

import pandas as pd

# Sample Flight Delay Data
data = {
    'Flight_No': ['UA123', 'DL456', 'AA789', 'WN101', 'B6202'],
    'Origin': ['ORD', 'LAX', 'JFK', 'DFW', 'BOS'],
    'Delay_Minutes': [10, 75, 5, 120, 0]
}

df = pd.DataFrame(data)

# Use query to keep rows where Delay_Minutes is 60 or less
df = df.query("Delay_Minutes <= 60")

print(df)

This is particularly useful when you have many columns and complex filtering logic.
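As a sketch of what more complex logic can look like, .query() lets you combine conditions with and/or and reference Python variables with the @ prefix. The variables max_delay and excluded_origins below are hypothetical values I made up for this example.

```python
import pandas as pd

df = pd.DataFrame({
    'Flight_No': ['UA123', 'DL456', 'AA789', 'WN101', 'B6202'],
    'Origin': ['ORD', 'LAX', 'JFK', 'DFW', 'BOS'],
    'Delay_Minutes': [10, 75, 5, 120, 0],
})

# Hypothetical filter settings kept outside the query string
max_delay = 60
excluded_origins = ['JFK']

# Keep flights within the delay limit that do not depart from an excluded airport
kept = df.query("Delay_Minutes <= @max_delay and Origin not in @excluded_origins")
print(kept)
```

Keeping the thresholds in variables means the query string stays the same when the limits change.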

Drop Rows Based on String Patterns

There are times when you don’t have an exact match. You might need to drop rows based on a partial string.

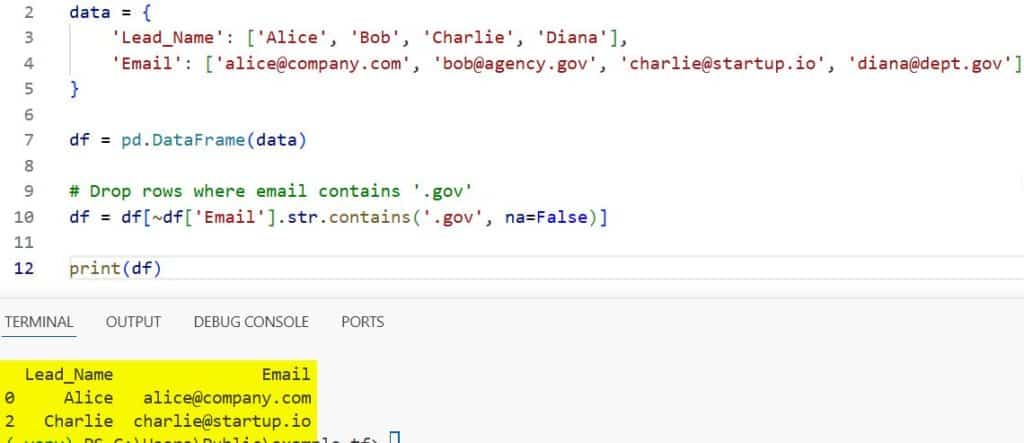

I once worked on a marketing dataset where I had to remove any email address that came from a “.gov” domain to focus on private sector leads.

# Sample Lead Data

data = {

'Lead_Name': ['Alice', 'Bob', 'Charlie', 'Diana'],

'Email': ['alice@company.com', 'bob@agency.gov', 'charlie@startup.io', 'diana@dept.gov']

}

df = pd.DataFrame(data)

# Drop rows where email contains '.gov'

df = df[~df['Email'].str.contains('.gov', na=False)]

print(df)I executed the above example code and added the screenshot below.

Using .str.contains() allows for powerful pattern matching, including the use of Regular Expressions (regex) if needed.
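Here is a minimal sketch of the regex form. The lead list is hypothetical (I added a “.mil” address and a tricky “megovern.com” address on purpose), and the pattern is just one reasonable way to anchor the match at the end of the address.

```python
import pandas as pd

df = pd.DataFrame({
    'Lead_Name': ['Alice', 'Bob', 'Charlie', 'Diana', 'Evan'],
    'Email': ['alice@company.com', 'bob@agency.gov', 'charlie@startup.io',
              'diana@dept.mil', 'evan@megovern.com'],
})

# Regex: a literal dot, then gov or mil, anchored at the end of the string
pattern = r'\.(?:gov|mil)$'

# na=False treats missing emails as non-matching, so those rows are kept
private_leads = df[~df['Email'].str.contains(pattern, regex=True, na=False)]
print(private_leads)
```

Escaping the dot matters here: an unescaped '.gov' pattern would also match “egov” inside “megovern.com” and drop Evan by mistake.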

Drop Rows with Null Values in Specific Columns

Sometimes a “value” isn’t a number or a string; it’s simply missing data.

If I am looking at US Social Security data and a specific record is missing the “Birth_Year,” that row is useless for my analysis.

Instead of dropping all rows with any missing values, I target the specific column.

import numpy as np
import pandas as pd

# Sample Data with NaNs
data = {
    'Person': ['James', 'Mary', 'Robert', 'Patricia'],
    'Birth_Year': [1985, np.nan, 1992, np.nan],
    'City': ['Phoenix', 'Philadelphia', 'San Antonio', 'San Diego']
}

df = pd.DataFrame(data)

# Drop rows where 'Birth_Year' is null
df = df.dropna(subset=['Birth_Year'])

print(df)

This ensures I don’t accidentally delete rows that have missing data in other, less important columns.
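To see the difference, here is a small sketch comparing a plain .dropna() with the targeted version. The Middle_Name column is a hypothetical “less important” column that I added for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'Person': ['James', 'Mary', 'Robert', 'Patricia'],
    'Birth_Year': [1985, np.nan, 1992, 1970],
    'Middle_Name': [np.nan, 'Ann', np.nan, 'Lee'],  # hypothetical optional column
})

# Plain dropna() removes a row if ANY column is missing
all_nulls_dropped = df.dropna()

# subset= only looks at the columns that matter for the analysis
targeted = df.dropna(subset=['Birth_Year'])

print(len(all_nulls_dropped), len(targeted))
```

In this sample the plain call keeps a single row, while the targeted call keeps every person with a known birth year.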

Drop Rows Based on Numeric Thresholds (Quantiles)

In more advanced data science tasks, I often drop outliers based on statistical thresholds.

For example, if you are looking at credit card transactions in the US, you might want to drop the highest-value transactions, say the top 10%, to avoid skewing your “average” spending report.

import pandas as pd

# Sample Transaction Data
data = {
    'Transaction_ID': range(1, 11),
    'Amount': [20, 50, 15, 3000, 45, 60, 12, 10000, 35, 55]
}

df = pd.DataFrame(data)

# Calculate the 90th percentile
threshold = df['Amount'].quantile(0.90)

# Keep only rows below the threshold
df = df[df['Amount'] < threshold]

print(df)

This is a dynamic way to drop rows without hardcoding a specific value.
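If you need to trim both ends of the distribution, the same idea works with two quantiles. This is only a sketch: the 10% and 90% cutoffs are arbitrary choices of mine, and the right values depend on your data.

```python
import pandas as pd

df = pd.DataFrame({
    'Transaction_ID': range(1, 11),
    'Amount': [20, 50, 15, 3000, 45, 60, 12, 10000, 35, 55],
})

# Lower and upper cutoffs computed from the data itself
low = df['Amount'].quantile(0.10)
high = df['Amount'].quantile(0.90)

# between() is inclusive on both ends by default
trimmed = df[df['Amount'].between(low, high)]
print(trimmed)
```

On this sample, the smallest and the largest transaction fall outside the range and are removed.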

Common Mistakes to Avoid

One mistake I made frequently as a beginner was forgetting that the filtering operations shown here (Boolean indexing, .drop(), .query(), and .dropna()) return a new DataFrame instead of modifying the original.

If you don’t assign the result back to the variable (e.g., df = df[…]), your rows won’t actually be dropped in the original DataFrame.

While some functions offer an inplace=True parameter, the Pandas developers generally discourage it and have discussed deprecating it for most methods.

I now always recommend re-assigning the variable to keep your code future-proof and clear.
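Here is a tiny sketch of that mistake on a made-up three-row DataFrame. The variable names are placeholders I chose for this illustration.

```python
import pandas as pd

df = pd.DataFrame({'Status': ['Available', 'Sold', 'Available']})

# The filtered result is computed and then discarded; df is unchanged
df[df['Status'] != 'Sold']
rows_without_assignment = len(df)

# Re-assigning the result is what actually removes the row
df = df[df['Status'] != 'Sold']
rows_with_assignment = len(df)

print(rows_without_assignment, rows_with_assignment)
```

The first count still includes the “Sold” row, while the second does not.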

Another tip: always check the shape of your DataFrame (df.shape) before and after dropping rows.

It’s a simple habit that has saved me from accidentally deleting my entire dataset more times than I can count!
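One way to turn that habit into code is sketched below. The final assert is my own suggestion for a guard, not something Pandas requires.

```python
import pandas as pd

df = pd.DataFrame({'Delay_Minutes': [10, 75, 5, 120, 0]})

rows_before = df.shape[0]
df = df[df['Delay_Minutes'] <= 60]
rows_after = df.shape[0]

print(f"Dropped {rows_before - rows_after} of {rows_before} rows")

# Guard against a filter that accidentally removes everything
assert rows_after > 0, "Filter removed every row; check the condition"
```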

Final Thoughts on Pandas Row Deletion

Working with data in Python is incredibly rewarding once you get the hang of these filtering techniques.

Whether you are cleaning up a small CSV or processing millions of rows of US financial data, these methods will serve as your toolkit.

I recommend starting with boolean indexing for most tasks and moving to .query() or .isin() as your logic gets more complex.

I hope this tutorial helped you understand the different ways to drop rows in Pandas based on column values.

It is one of those skills that you will use in almost every single data project you touch.

If you found this helpful, be sure to check out my other guides on Pandas and Python data manipulation.

You may also like to read other Pandas tutorials:

- How to Merge Two Columns in Pandas

- Pandas Series vs DataFrame

- How to Change Column Type in Pandas

- How to Create a Pandas DataFrame from a List of Dictionaries

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.