In my years of working as a Python developer, I have spent a significant amount of time cleaning messy datasets.

One of the most common tasks I face is updating or correcting specific values within a column.

Whether it is fixing inconsistent state abbreviations or updating pricing categories, knowing how to replace values efficiently is a core skill.

I have found that there isn’t just one way to do it; the “best” way usually depends on the size of your data and the complexity of the replacement.

In this guide, I will share the exact methods I use in my daily workflow to handle value replacements in Pandas.

The Dataset We Will Use

Before we jump into the methods, let’s create a dataset that reflects a real-world scenario.

I have put together a small DataFrame representing a list of employees in various tech hubs across the USA.

import pandas as pd
import numpy as np

# Creating a sample dataset of USA-based tech employees
data = {
    'Employee_Name': ['Alice Smith', 'Bob Jones', 'Charlie Brown', 'David Miller', 'Eve Davis'],
    'State': ['NY', 'CA', 'Texas', 'california', 'NY'],
    'Department': ['IT', 'HR', 'Admin', 'IT', 'Exec'],
    'Salary': [105000, 95000, 88000, 112000, 150000],
    'Remote': ['Yes', 'No', 'n', 'yes', 'No']
}

df = pd.DataFrame(data)

print("Original DataFrame:")
print(df)

1. Use the replace() Method for Simple Matches

The replace() function is the most direct tool in my arsenal for simple value updates. I use this when I have a specific value that needs to be changed to something else across the entire column.

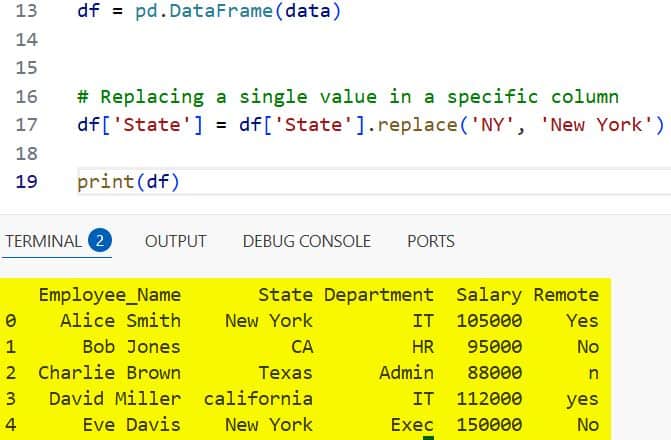

In our dataset, the “State” column has “NY” which I want to expand to “New York” for better reporting.

# Replacing a single value in a specific column
df['State'] = df['State'].replace('NY', 'New York')
print(df)

You can refer to the screenshot below to see the output.

I like this method because it is readable and explicit. It tells anyone reading my code exactly what is happening without needing complex logic.
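One detail worth knowing is that replace() on a Series matches whole cell values by default, not substrings. The sketch below uses a small made-up DataFrame (the 'NYC' entry and the 'Office' column are assumptions added for illustration) to show this, along with the nested-dictionary form of DataFrame.replace() that limits a replacement to one column.

```python
import pandas as pd

# Hypothetical sample: 'NYC' is included only to show that it is left alone
df_demo = pd.DataFrame({
    'State': ['NY', 'NYC', 'CA'],
    'Office': ['NY', 'LA', 'SF'],
})

# Series.replace() compares the whole value, so 'NYC' is not touched
states = df_demo['State'].replace('NY', 'New York')
print(states.tolist())

# Nested dict {column: {old: new}} restricts the replacement to 'State',
# so the 'NY' entry in 'Office' stays as it is
scoped = df_demo.replace({'State': {'NY': 'New York'}})
print(scoped)
```

The nested form is handy when the same raw value means different things in different columns.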

2. Replace Multiple Values Using a Dictionary

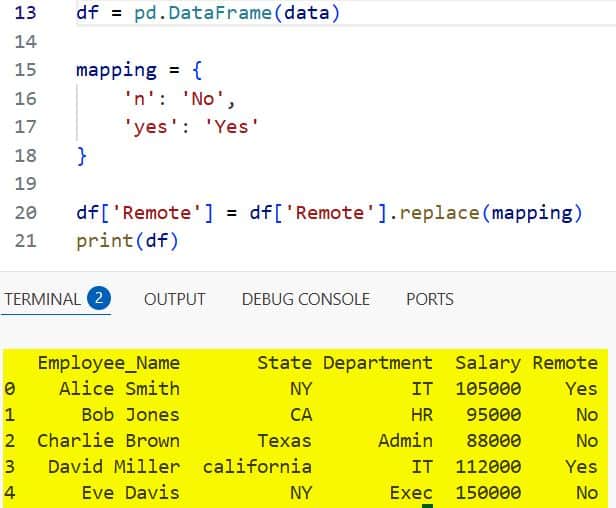

Often, I find myself needing to clean up multiple values at once. For example, our “Remote” column has inconsistent entries like ‘n’ for ‘No’ and ‘yes’ for ‘Yes’.

Instead of calling the replace function multiple times, I pass a dictionary.

# Using a dictionary to clean up inconsistent entries
mapping = {
    'n': 'No',
    'yes': 'Yes'
}

df['Remote'] = df['Remote'].replace(mapping)
print(df)

You can refer to the screenshot below to see the output.

This approach keeps my code clean. I usually define the mapping dictionary at the top of my script if I plan to reuse it across multiple DataFrames.
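As a rough sketch of that reuse pattern, the example below defines one mapping and applies it to two DataFrames. The names REMOTE_MAP, team_a and team_b are hypothetical and exist only for this illustration.

```python
import pandas as pd

# Hypothetical mapping, defined once near the top of a script
REMOTE_MAP = {'n': 'No', 'no': 'No', 'y': 'Yes', 'yes': 'Yes'}

# Two made-up DataFrames that share the same messy column
team_a = pd.DataFrame({'Remote': ['Yes', 'n', 'yes']})
team_b = pd.DataFrame({'Remote': ['no', 'y', 'No']})

for frame in (team_a, team_b):
    # Values that are not keys in the mapping are left unchanged
    frame['Remote'] = frame['Remote'].replace(REMOTE_MAP)

print(team_a['Remote'].tolist())
print(team_b['Remote'].tolist())
```

Because replace() leaves unmatched values alone, entries that are already correct ('Yes', 'No') pass through untouched.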

3. Use loc for Conditional Replacements

Sometimes, I don’t want to replace every instance of a value; I only want to replace it if it meets a certain condition.

I use .loc when the replacement depends on logic rather than just a simple match. Let’s say I want to relabel everyone in the ‘IT’ department as ‘Technical’.

# Using loc to replace values based on a condition
df.loc[df['Department'] == 'IT', 'Department'] = 'Technical'
print(df)

I prefer .loc because it is efficient and, by selecting the rows and the column in a single step, it avoids the chained indexing (such as df[mask]['Department'] = ...) that triggers the “SettingWithCopyWarning” that often plagues beginners.

It ensures I am modifying the original DataFrame directly.
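The condition does not have to come from the column being changed. Here is a minimal sketch, using a made-up DataFrame with a hypothetical 'Level' column, where the test is on one column and the replacement happens in another.

```python
import pandas as pd

# Hypothetical data; 'Level' is an invented column for this illustration
staff = pd.DataFrame({
    'Department': ['IT', 'HR', 'IT', 'Exec'],
    'Salary': [105000, 95000, 88000, 150000],
    'Level': ['Mid', 'Mid', 'Mid', 'Mid'],
})

# Condition on 'Salary', replacement in 'Level'
staff.loc[staff['Salary'] >= 100000, 'Level'] = 'Senior'

# Combine conditions with & (and) or | (or); each one needs its own parentheses
mask = (staff['Department'] == 'IT') & (staff['Salary'] < 100000)
staff.loc[mask, 'Level'] = 'Junior'

print(staff)
```

Storing the boolean mask in a variable keeps longer conditions readable and lets you reuse it.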

4. Handle Case Sensitivity with String Methods

Data entry errors often lead to inconsistent casing, such as ‘CA’ and ‘california’ in our state column.

I have found that the .str.replace() method is great when I need to replace part of a string rather than the whole value.

However, a more robust way is to normalize the case first.

# Standardizing state names to Title Case
df['State'] = df['State'].replace('california', 'California')

# Alternatively, use a regex-based string replace.
# The anchors ^ and $ limit the match to values that are exactly 'CA';
# without them, 'CA' would be replaced anywhere it appears inside a string.
df['State'] = df['State'].str.replace(r'^CA$', 'California', regex=True)
print(df)

In my experience, cleaning string data is a large part of the battle in data science. Always check for trailing spaces or case differences before you assume your replace() call failed.
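Normalizing first can be sketched as follows: strip whitespace, lower-case everything, then replace against a lookup keyed by the lower-case variants. The Series and the STATE_NAMES lookup are made up for this example.

```python
import pandas as pd

# Hypothetical raw values with mixed case and stray spaces
raw_states = pd.Series(['NY', ' ca', 'Texas ', 'california', 'ny'])

# Hypothetical lookup keyed by lower-case, whitespace-free variants
STATE_NAMES = {
    'ny': 'New York',
    'ca': 'California',
    'california': 'California',
    'texas': 'Texas',
}

# Strip whitespace and lower-case first, then replace against the lookup
normalized = raw_states.str.strip().str.lower()
clean_states = normalized.replace(STATE_NAMES)

print(clean_states.tolist())
```

With this approach the lookup needs one key per spelling, not one per spelling-and-casing combination.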

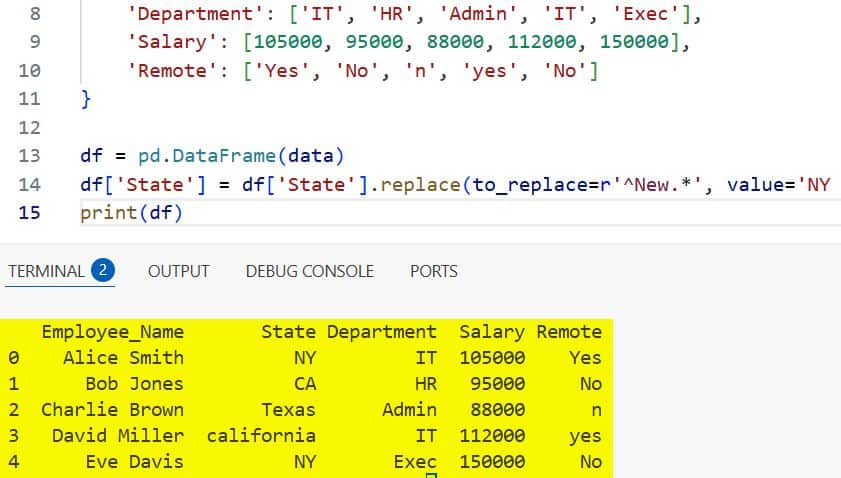

5. Replace Values Using Regular Expressions (Regex)

When I need to match patterns rather than exact strings, I turn to Regex.

Suppose our “Employee_Name” column had some extra symbols or digits that shouldn’t be there.

Regex allows me to strip out unwanted characters across an entire column in a single call. The same idea works for rewriting whole values that match a pattern, which is what the example on the “State” column does.

# Using Regex to replace patterns
# Replace any value that starts with 'New' (here, 'New York') with 'NY (Tri-State Area)'.
# Note that the pattern ^New.* would also match values such as 'New Jersey'.
df['State'] = df['State'].replace(to_replace=r'^New.*', value='NY (Tri-State Area)', regex=True)
print(df)

You can refer to the screenshot below to see the output.

I use regex=True sparingly because it can be slower on very large datasets. But for complex text cleaning, it is an absolute lifesaver.
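For the name-cleaning scenario mentioned above, a minimal sketch could look like this. The messy names are invented for illustration, and the character class is one assumption about what counts as a valid name character (letters, whitespace, apostrophes and hyphens).

```python
import pandas as pd

# Hypothetical names with stray symbols and digits
names = pd.Series(['Alice Smith#', 'Bob Jones 42', '*Charlie Brown*'])

# Remove every character that is not a letter, whitespace, apostrophe or hyphen
cleaned = names.str.replace(r"[^A-Za-z\s'-]", '', regex=True)

# Trim whitespace left behind at the start or end
cleaned = cleaned.str.strip()

print(cleaned.tolist())
```

Passing regex=True explicitly is worth the extra keystrokes, since the default for .str.replace() has changed between pandas versions.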

6. Use the map() Function for Exhaustive Replacement

When I want to transform every single value in a column, and I have a complete map of what those values should be, I use map().

Be careful with map(), though. If a value isn’t in your dictionary, it will be turned into NaN.

I use this when I am converting categorical data into numeric codes for machine learning.

# Mapping departments to specific ID numbers
dept_map = {
    'Technical': 101,
    'HR': 102,
    'Admin': 103,
    'Exec': 104
}

df['Dept_ID'] = df['Department'].map(dept_map)
print(df)

I find map() to be faster than replace() when the dictionary is large. It is my go-to for feature engineering.
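To make the NaN caveat concrete, here is a small sketch with an incomplete dictionary and two possible ways to handle the gap. The 'Legal' department and the -1 sentinel are assumptions chosen for the example.

```python
import pandas as pd

# Hypothetical data: 'Legal' deliberately has no entry in the dictionary
departments = pd.Series(['Technical', 'HR', 'Legal'])
dept_ids = {'Technical': 101, 'HR': 102}

# map() returns NaN for 'Legal' because the key is missing
mapped = departments.map(dept_ids)
print(mapped.tolist())

# Option 1: fill the gaps with a sentinel value (-1 is an arbitrary choice)
with_default = mapped.fillna(-1).astype(int)
print(with_default.tolist())

# Option 2: fall back to the original value when there is no mapping
keep_original = departments.map(lambda d: dept_ids.get(d, d))
print(keep_original.tolist())
```

Checking mapped.isna().sum() right after the call is a quick way to catch keys you forgot.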

7. Replace Values Based on Numeric Thresholds with NumPy

When I deal with financial data, like the “Salary” column in our example, I often need to bin values.

If I want to replace salaries over $100,000 with the label ‘High’ and others with ‘Standard’, I use np.where.

It is essentially an “IF” statement for your DataFrame.

# Using NumPy where to replace values based on a threshold
df['Salary_Bracket'] = np.where(df['Salary'] > 100000, 'High', 'Standard')
print(df)

This is typically much faster than using apply() with a custom lambda function or a Python loop, because np.where evaluates the whole column in one vectorized operation. In my professional work, performance matters, and np.where is built for speed.
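np.where covers two outcomes. When there are more than two brackets, one option (not part of the method above, but closely related) is np.select, which takes a list of conditions and a matching list of labels. The thresholds and the 'Executive' label below are made up for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical salaries
pay = pd.DataFrame({'Salary': [88000, 105000, 150000]})

# Conditions are checked in order; the first one that is True wins
conditions = [pay['Salary'] >= 140000, pay['Salary'] > 100000]
labels = ['Executive', 'High']

# Rows that match no condition get the default label
pay['Salary_Bracket'] = np.select(conditions, labels, default='Standard')

print(pay)
```

Order matters here: the stricter condition has to come first, otherwise the 150000 row would be labeled 'High'.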

8. Fill Missing Values with fillna()

Technically, replacing a null value is a form of replacement. I often encounter datasets where some entries are missing.

If a “Remote” status was missing, I might want to default it to “No”.

# Adding a row with a missing value for demonstration
# (values follow the column order, including Dept_ID and Salary_Bracket from the earlier steps)
df.loc[5] = ['John Doe', 'FL', 'Technical', 90000, np.nan, 101, 'Standard']

# Replacing NaN values with a default
df['Remote'] = df['Remote'].fillna('No')
print(df)

I always check for NaN values before performing other replacements. Missing data can often break your logic if you aren’t expecting it.
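A quick way to do that check, and to set different defaults per column in one call, is sketched below. The DataFrame is invented for the example, and using 0 as the salary default is an arbitrary assumption.

```python
import numpy as np
import pandas as pd

# Hypothetical records with one gap in each column
records = pd.DataFrame({
    'Remote': ['Yes', np.nan, 'No'],
    'Salary': [90000.0, 85000.0, np.nan],
})

# Count missing values per column before replacing anything
missing = records.isna().sum()
print(missing)

# A dict passed to fillna() sets a different default for each column
filled = records.fillna({'Remote': 'No', 'Salary': 0})
print(filled)
```

fillna() returns a new DataFrame here, so the original records stay available for comparison.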

Summary of When to Use Which Method

Throughout my career, I’ve developed a bit of a mental flowchart for this:

- Use replace(): For simple, one-to-one or many-to-one value swaps.

- Use .loc: When the replacement depends on a condition in another column.

- Use map(): When you have a dictionary and want to transform the whole column.

- Use np.where(): For numeric conditions and high-performance needs.

- Use fillna(): Specifically for handling missing data.

Mastering these methods has made my data cleaning process much smoother.

It allows me to spend less time fighting with the data and more time actually analyzing it.

I hope this guide helps you clean your USA-specific datasets (or any data!) more effectively.

Pandas is a powerful tool, and once you get comfortable with these techniques, you’ll find it incredibly flexible.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.