I’ve often been asked a tough question by stakeholders: “Why did the model make this specific decision?” Deep learning models are often seen as “black boxes,” which makes it difficult to trust them for high-stakes tasks like financial forecasting or healthcare.

I remember the first time I deployed a neural network for a real estate client; they needed to know exactly which house features were driving the price estimates.

That is when I discovered Integrated Gradients, a powerful technique that assigns an importance score to each input feature.

In this tutorial, I will show you exactly how to implement this in Keras so you can explain your model’s logic with confidence.

Set Up Your Keras Environment for Interpretability

Before we dive into the math, we need a solid foundation with the right libraries installed.

I prefer a recent TensorFlow 2.x release with its bundled Keras, because the code below relies on tf.GradientTape to compute the gradients that attribution methods need.

```python
import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import matplotlib.pyplot as plt

# Check versions to ensure stability
print(tf.__version__)
```

Prepare a Real-World USA Housing Dataset in Keras

To make this practical, let’s build a model that predicts house prices based on features like square footage, bedrooms, and local school ratings.

I’m using a synthetic dataset here that mirrors typical suburban housing trends found across the United States.

```python
# Generating synthetic USA housing data
def get_housing_data():
    # Features: [SqFt, Bedrooms, Bathrooms, School_Rating, Age_of_Home]
    # All values are drawn uniformly from [0, 1), so they are already scaled
    X = np.random.rand(1000, 5).astype(np.float32)
    # Price logic: SqFt and School_Rating have the highest impact
    y = (X[:, 0] * 50 + X[:, 3] * 30 + X[:, 1] * 10 + np.random.randn(1000) * 2)
    return X, y

X_train, y_train = get_housing_data()

# Build a simple Keras Regression Model
model = keras.Sequential([
    # An explicit Input layer replaces the older input_shape argument
    keras.Input(shape=(5,)),
    layers.Dense(16, activation='relu'),
    layers.Dense(8, activation='relu'),
    layers.Dense(1)
])

model.compile(optimizer='adam', loss='mse')
model.fit(X_train, y_train, epochs=10, verbose=0)
```

Understand the Integrated Gradients Concept in Keras

Integrated Gradients works by moving along a straight-line path from a “baseline” (like a house with zero square feet) to the actual input, accumulating the model’s gradients along the way.

I find this method superior to simple gradients because it satisfies the “completeness” axiom: the attributions add up to the difference between the model’s prediction for the input and its prediction for the baseline.
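For reference, the idea can be written compactly as follows. The notation is introduced here and is not taken from the tutorial's code: F is the model, x the input, x' the baseline, i the feature index, and m the number of interpolation steps. The second line is a Riemann-sum approximation of the integral; the code in this tutorial uses the closely related trapezoidal rule.

```latex
\begin{align}
\mathrm{IG}_i(x) &= (x_i - x'_i) \int_0^1 \frac{\partial F\big(x' + \alpha\,(x - x')\big)}{\partial x_i}\, d\alpha \\
&\approx (x_i - x'_i) \cdot \frac{1}{m} \sum_{k=1}^{m} \frac{\partial F\big(x' + \tfrac{k}{m}\,(x - x')\big)}{\partial x_i} \\
\sum_i \mathrm{IG}_i(x) &= F(x) - F(x')
\end{align}
```

The last line is the completeness property: summing the attributions over all features recovers the prediction difference between the input and the baseline.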

Method 1: Calculate Gradients at Specific Steps in Keras

The first step in my workflow is to generate “interpolated” images or data points between the baseline and our target input.

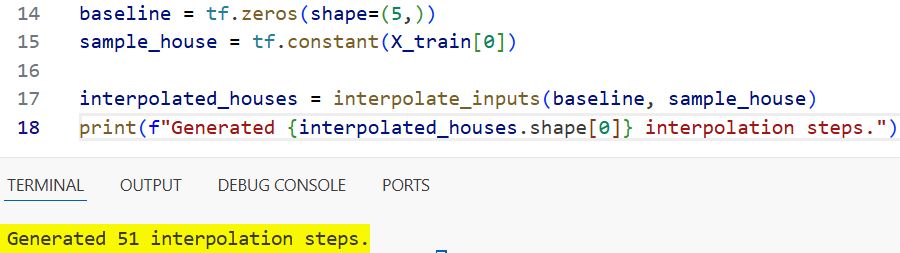

def interpolate_inputs(baseline, target, steps=50):

# Create a list of linear steps between baseline and input

alphas = tf.linspace(start=0.0, stop=1.0, num=steps+1)

delta = target - baseline

interpolated = baseline + alphas[:, tf.newaxis] * delta

return interpolated

# Define a baseline (all zeros) and a sample house

baseline = tf.zeros(shape=(5,))

sample_house = tf.constant(X_train[0])

interpolated_houses = interpolate_inputs(baseline, sample_house)

print(f"Generated {interpolated_houses.shape[0]} interpolation steps.")You can see the output in the screenshot below.

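I use simple linear interpolation with 50 steps (51 points, counting both endpoints), which is usually enough for a small model like this one. The completeness check in Method 4 tells you whether the approximation has converged or needs more steps.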
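To see what the interpolation produces without running TensorFlow, here is a minimal pure-Python sketch of the same idea. The helper name interpolate_path and the two-feature example values are assumptions made for illustration; they are not part of the tutorial's model.

```python
def interpolate_path(baseline, target, steps=4):
    """Return steps + 1 points spaced evenly from baseline to target."""
    path = []
    for k in range(steps + 1):
        alpha = k / steps  # 0.0 at the baseline, 1.0 at the target
        point = [b + alpha * (t - b) for b, t in zip(baseline, target)]
        path.append(point)
    return path


# Hypothetical two-feature house: [scaled SqFt, scaled School_Rating]
path = interpolate_path(baseline=[0.0, 0.0], target=[0.8, 0.4], steps=4)
for point in path:
    print(point)
```

The first printed point is the baseline and the last is the target. Every point in between moves each feature by the same fraction of its total distance, which is what makes the path a straight line.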

Method 2: Compute the Integrated Gradients Formula in Keras

Now, I calculate the gradient of the model’s output with respect to each of those interpolated points.

def compute_gradients(model, inputs):

with tf.GradientTape() as tape:

tape.watch(inputs)

predictions = model(inputs)

return tape.gradient(predictions, inputs)

def get_integrated_gradients(model, baseline, target, steps=50):

# 1. Get interpolated points

inputs = interpolate_inputs(baseline, target, steps)

# 2. Compute gradients for all points

grads = compute_gradients(model, inputs)

# 3. Average the gradients (Trapezoidal rule approximation)

avg_grads = tf.reduce_mean(grads[:-1] + grads[1:], axis=0) / 2.0

# 4. Multiply by (input - baseline)

integrated_grads = (target - baseline) * avg_grads

return integrated_grads

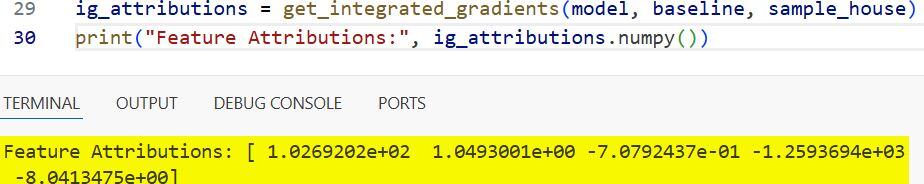

ig_attributions = get_integrated_gradients(model, baseline, sample_house)

print("Feature Attributions:", ig_attributions.numpy())You can see the output in the screenshot below.

I then average these gradients and multiply them by the difference between the input and the baseline to get the final “importance” scores.
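The averaging and scaling steps can be checked by hand on a function whose gradient is known exactly. The sketch below is framework-free Python; toy_model, toy_gradient, and the input values are hypothetical stand-ins chosen for illustration, not the tutorial's Keras model.

```python
def toy_model(x):
    """Hypothetical stand-in for the Keras model: F(x) = x0^2 + 3*x0*x1."""
    return x[0] ** 2 + 3.0 * x[0] * x[1]


def toy_gradient(x):
    """Analytic gradient of toy_model with respect to each input."""
    return [2.0 * x[0] + 3.0 * x[1], 3.0 * x[0]]


def integrated_gradients(grad_fn, baseline, target, steps=50):
    """Trapezoidal-rule approximation of Integrated Gradients."""
    n = len(target)
    delta = [t - b for b, t in zip(baseline, target)]
    # Gradient at each of the steps + 1 points on the straight-line path
    grads = []
    for k in range(steps + 1):
        alpha = k / steps
        point = [b + alpha * d for b, d in zip(baseline, delta)]
        grads.append(grad_fn(point))
    # Average the gradients of neighbouring points, then average over the path
    avg = [0.0] * n
    for left, right in zip(grads[:-1], grads[1:]):
        for i in range(n):
            avg[i] += (left[i] + right[i]) / 2.0 / steps
    # Scale by (input - baseline)
    return [d * a for d, a in zip(delta, avg)]


baseline, target = [0.0, 0.0], [1.0, 2.0]
attributions = integrated_gradients(toy_gradient, baseline, target)
print("Attributions:", attributions)
print("Sum of attributions:", sum(attributions))
print("Prediction difference:", toy_model(target) - toy_model(baseline))
```

For this toy function the attributions come out at approximately 4 and 3, and their sum matches the prediction difference of 7, which is the completeness property in action.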

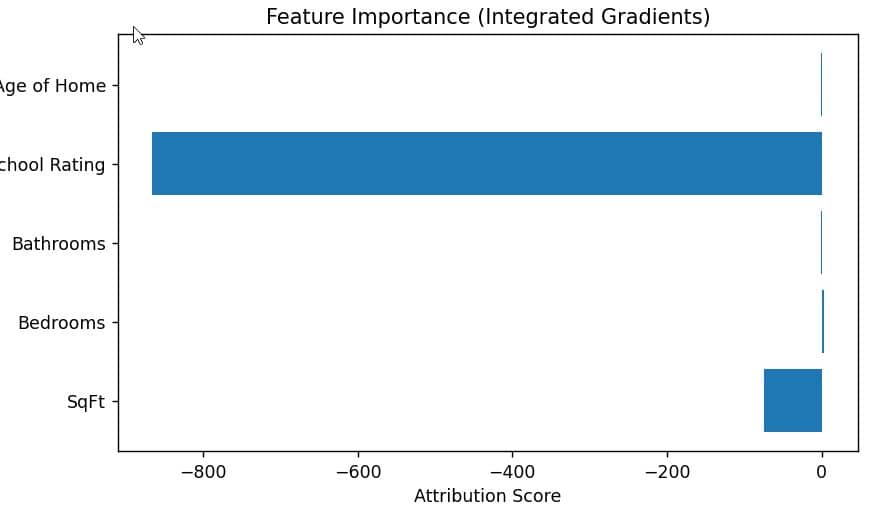

Method 3: Visualize Feature Importance for Keras Models

In my experience, showing a client a raw array of numbers is never effective; you need a clear bar chart.

def plot_attributions(attributions, feature_names):

plt.figure(figsize=(10, 6))

plt.barh(feature_names, attributions)

plt.title('Feature Importance (Integrated Gradients)')

plt.xlabel('Attribution Score')

plt.ylabel('Housing Features')

plt.show()

features = ["SqFt", "Bedrooms", "Bathrooms", "School Rating", "Age of Home"]

plot_attributions(ig_attributions.numpy(), features)You can see the output in the screenshot below.

I use Matplotlib to visualize which features (like Square Footage or School Rating) the Keras model valued most for a specific house.
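Alongside the chart, a short ranked text summary can be handy for reports or logs. The following is a minimal sketch in plain Python; the helper name summarize_attributions and the attribution scores are hypothetical and are not output from the tutorial's model.

```python
def summarize_attributions(attributions, feature_names):
    """Rank features by absolute attribution and report each one's share."""
    total = sum(abs(a) for a in attributions)
    ranked = sorted(zip(feature_names, attributions),
                    key=lambda pair: abs(pair[1]), reverse=True)
    rows = []
    for name, score in ranked:
        share = abs(score) / total if total else 0.0
        rows.append((name, score, share))
    return rows


features = ["SqFt", "Bedrooms", "Bathrooms", "School Rating", "Age of Home"]
# Hypothetical attribution scores, not output from the tutorial's model
scores = [21.3, 4.1, 0.2, 12.6, -0.4]

rows = summarize_attributions(scores, features)
for name, score, share in rows:
    print(f"{name:<14} {score:>7.2f}  {share:>6.1%}")
```

Ranking by absolute value keeps features with strong negative attributions near the top, while the signed score still shows the direction of the effect.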

Method 4: Validate Attributions with the Keras Accuracy Check

One thing I always check is whether the sum of my Integrated Gradients matches the difference between the baseline prediction and the target prediction.

```python
def validate_ig(model, baseline, target, attributions):
    pred_baseline = model(tf.expand_dims(baseline, 0))
    pred_target = model(tf.expand_dims(target, 0))
    diff = pred_target - pred_baseline
    sum_attributions = tf.reduce_sum(attributions)
    print(f"Actual Prediction Difference: {diff.numpy()[0][0]:.4f}")
    print(f"Sum of IG Attributions: {sum_attributions.numpy():.4f}")

validate_ig(model, baseline, sample_house, ig_attributions)
```

If these two values are close, that is strong evidence that your implementation is correct. A large gap usually points to either a bug or too few interpolation steps.
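To turn "close" into a number, you could measure the gap relative to the prediction difference. This is a minimal sketch in plain Python: the helper name completeness_gap, the 5% tolerance, and the example values are all assumptions for illustration, not results from the tutorial's model.

```python
def completeness_gap(attributions, pred_target, pred_baseline):
    """Return (absolute_gap, relative_gap) for the completeness check."""
    expected = pred_target - pred_baseline
    absolute_gap = abs(sum(attributions) - expected)
    # Guard against division by zero when both predictions coincide
    relative_gap = absolute_gap / max(abs(expected), 1e-12)
    return absolute_gap, relative_gap


# Hypothetical numbers, not output from the tutorial's model
attributions = [21.3, 4.1, 0.2, 12.6, -0.4]
abs_gap, rel_gap = completeness_gap(attributions, pred_target=40.1, pred_baseline=2.0)

TOLERANCE = 0.05  # assumed threshold: 5% of the prediction difference
print(f"Absolute gap: {abs_gap:.4f}")
print(f"Relative gap: {rel_gap:.2%}")
print("Within tolerance:", rel_gap <= TOLERANCE)
```

To use this with the Keras model, convert the attributions and predictions to plain Python numbers first (for example with .numpy()). If the relative gap exceeds your tolerance, increasing steps is the first thing to try.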

Advanced Implementation: Handling Image Data in Keras

While the housing example is great for tabular data, I often use Integrated Gradients for computer vision tasks as well.

The logic remains identical, but you’ll treat the baseline as a black image and calculate gradients for every single pixel.

```python
# Example logic for an image-based Keras model
def explain_image_prediction(model, image):
    # Baseline for images is usually an all-black image
    img_baseline = tf.zeros_like(image)
    # Compute IG for the image pixels.
    # Note: interpolate_inputs must broadcast alphas over all image axes (H, W, C),
    # and for a multi-class model you would differentiate a single class score.
    img_attributions = get_integrated_gradients(model, img_baseline, image, steps=100)
    # Visualize the attribution mask (sum of absolute attributions over channels)
    attribution_mask = tf.reduce_sum(tf.abs(img_attributions), axis=-1)
    plt.imshow(attribution_mask.numpy(), cmap='hot')
    plt.show()

# (Assuming 'img' is a preprocessed Keras image tensor)
# explain_image_prediction(vision_model, img)
```

Using Integrated Gradients has completely changed how I debug my Keras models over the last few years.

It moves you away from guessing and allows you to provide data-backed explanations for every prediction your model makes.

Whether you are working with suburban housing data or complex medical imagery, this method provides a reliable path to transparency.

I hope you found this tutorial helpful! In this article, we looked at how to implement Integrated Gradients for a Keras regression model using a synthetic housing dataset.

We also covered how to visualize these importance scores and validate them against the model’s actual predictions to ensure accuracy.

I am Bijay Kumar, a Microsoft MVP in SharePoint. Apart from SharePoint, I have been working with Python, machine learning, and artificial intelligence for the last 5 years. During this time I have gained expertise in various Python libraries such as Tkinter, Pandas, NumPy, Turtle, Django, Matplotlib, TensorFlow, SciPy, and Scikit-Learn, working for clients in the United States, Canada, the United Kingdom, Australia, New Zealand, and elsewhere.