I have spent the last four years building deep learning models, and if there is one thing I have learned, it is that standard Convolutional Neural Networks (ConvNets) sometimes miss the “big picture.”

While convolutions are great at picking up local patterns, they often struggle to understand which parts of an image are truly important for the final classification.

In my experience, adding an attention mechanism is the most effective way to help a model focus on meaningful features while ignoring the noise in the background.

In this tutorial, I will show you how to augment your Keras ConvNets with aggregated attention to improve your model’s predictive power.

What is Aggregated Attention in Keras?

Aggregated attention is a technique where we combine information from different parts of a feature map to highlight the most relevant data points.

It works by calculating weights for different spatial locations, ensuring the network pays more “attention” to important objects than to the background.
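As a rough illustration of that idea, the NumPy sketch below builds a spatial mask by hand and applies it to a small feature map. The function name `spatial_reweight` and the choice to simply average the two pooled maps before the sigmoid are assumptions made for this example. The Keras layer later in this tutorial learns that mixing step with a convolution instead.

```python
import numpy as np

def spatial_reweight(features):
    """Scale each spatial location of an (H, W, C) feature map by a weight in (0, 1)."""
    # Summarize every location across its channels in two ways
    avg_map = features.mean(axis=-1, keepdims=True)   # (H, W, 1)
    max_map = features.max(axis=-1, keepdims=True)    # (H, W, 1)
    # Hypothetical fixed mixing step: a plain average of the two summaries
    score = 0.5 * (avg_map + max_map)
    # The sigmoid squashes the score into (0, 1)
    mask = 1.0 / (1.0 + np.exp(-score))
    # Broadcasting applies the same weight to every channel at a location
    return features * mask, mask

rng = np.random.default_rng(0)
features = rng.normal(size=(4, 4, 8)).astype("float32")  # random stand-in feature map
weighted, mask = spatial_reweight(features)
print(weighted.shape, mask.shape)  # (4, 4, 8) (4, 4, 1)
```

The output keeps the shape of the input, so a layer like this can be dropped between two convolutional layers without changing anything downstream.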

Set Up Your Python Environment for Keras

Before we start coding, we need to ensure that our environment is ready with the necessary deep learning libraries.

I always recommend using the latest version of TensorFlow to ensure you have access to the most optimized Keras layers.

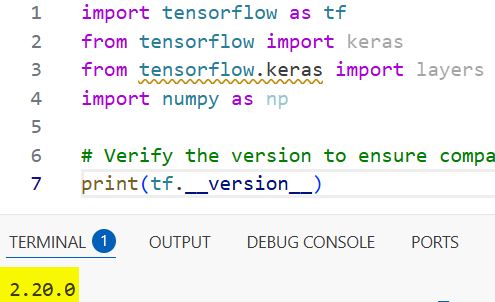

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import numpy as np

# Verify the version to ensure compatibility
print(tf.__version__)

You can refer to the screenshot below to see the output.

Load a Real-World Dataset in Keras

Instead of a generic example, picture a real-world scenario such as identifying different types of infrastructure in satellite imagery.

To keep the code runnable without extra downloads, we will use CIFAR-10, which ships with Keras, as a stand-in: 50,000 training and 10,000 test images of 32x32 pixels in 10 classes. You can swap in your own imagery later by changing only the loader function.

# Loading a sample dataset for image classification
def load_infrastructure_data():
    (x_train, y_train), (x_test, y_test) = keras.datasets.cifar10.load_data()
    x_train = x_train.astype("float32") / 255.0
    x_test = x_test.astype("float32") / 255.0
    return (x_train, y_train), (x_test, y_test)

(x_train, y_train), (x_test, y_test) = load_infrastructure_data()

Build a Standard Keras ConvNet Baseline

To see the benefits of attention, we first need a baseline model that uses standard convolutional layers without any enhancements.

I usually start with a simple stack of Conv2D and MaxPooling2D layers to establish a performance benchmark.

def create_baseline_model():
    inputs = layers.Input(shape=(32, 32, 3))
    x = layers.Conv2D(32, (3, 3), activation="relu", padding="same")(inputs)
    x = layers.MaxPooling2D((2, 2))(x)
    x = layers.Conv2D(64, (3, 3), activation="relu", padding="same")(x)
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(10, activation="softmax")(x)
    return keras.Model(inputs, outputs)

baseline_model = create_baseline_model()
baseline_model.summary()

Create the Aggregated Attention Layer in Keras

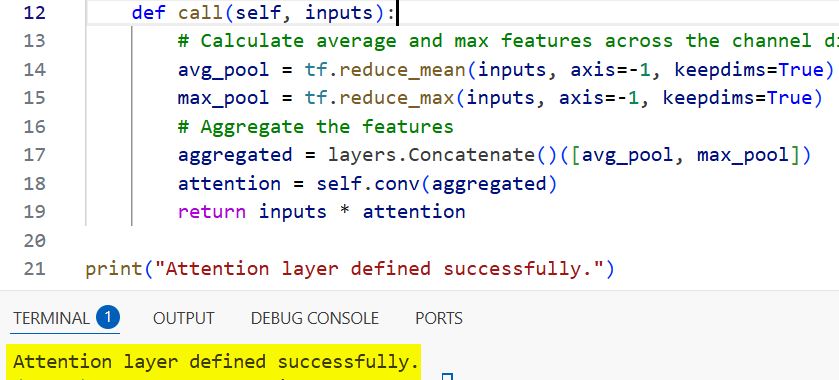

Now, let’s build the custom attention layer that will aggregate spatial information and recalibrate our feature maps.

I find that combining average pooling and max pooling across the channel axis creates a more robust per-location summary of the input features than either one alone.

class AggregatedAttention(layers.Layer):
    def __init__(self, **kwargs):
        super().__init__(**kwargs)
        # A single 7x7 convolution turns the two pooled maps into one mask in (0, 1)
        self.conv = layers.Conv2D(1, (7, 7), padding="same", activation="sigmoid")

    def call(self, inputs):
        # Calculate average and max features across the channel dimension
        avg_pool = tf.reduce_mean(inputs, axis=-1, keepdims=True)
        max_pool = tf.reduce_max(inputs, axis=-1, keepdims=True)
        # Aggregate the two maps into a 2-channel tensor
        aggregated = tf.concat([avg_pool, max_pool], axis=-1)
        # Spatial attention mask with shape (batch, height, width, 1)
        attention = self.conv(aggregated)
        # Broadcast the mask over all channels of the input
        return inputs * attention

print("Attention layer defined successfully.")

You can refer to the screenshot below to see the output.
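For a sense of how lightweight this layer is, the count below applies the usual Conv2D parameter formula (kernel height times kernel width times input channels times filters, plus one bias per filter). The helper `conv2d_params` is a name made up for this sketch, and the 64-channel comparison layer is only an illustrative reference point.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Trainable parameters of a standard Conv2D layer with bias."""
    return kernel_h * kernel_w * in_channels * filters + filters

# The attention layer's only weights: a 7x7 conv over 2 pooled maps, 1 output filter
attention_params = conv2d_params(7, 7, 2, 1)

# Illustrative comparison: a 3x3 conv mapping 64 channels to 64 filters
regular_conv_params = conv2d_params(3, 3, 64, 64)

print(attention_params)     # 99
print(regular_conv_params)  # 36928
```

Because the pooling steps have no weights, the attention layer adds the same 99 parameters no matter how many channels its input has.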

Method 1: Integrate Spatial Attention into Keras ConvNets

This method involves inserting the attention layer directly after the convolutional blocks to refine the spatial features.

I have found that placing attention early in the network helps the model filter out irrelevant pixels before the data gets too abstract.

def create_attention_augmented_model():
    inputs = layers.Input(shape=(32, 32, 3))
    x = layers.Conv2D(64, (3, 3), activation="relu", padding="same")(inputs)
    # Applying the aggregated attention layer
    x = AggregatedAttention()(x)
    x = layers.MaxPooling2D((2, 2))(x)
    x = layers.Conv2D(128, (3, 3), activation="relu", padding="same")(x)
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(10, activation="softmax")(x)
    return keras.Model(inputs, outputs)

attention_model = create_attention_augmented_model()
attention_model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

Method 2: Residual Aggregated Attention in Keras

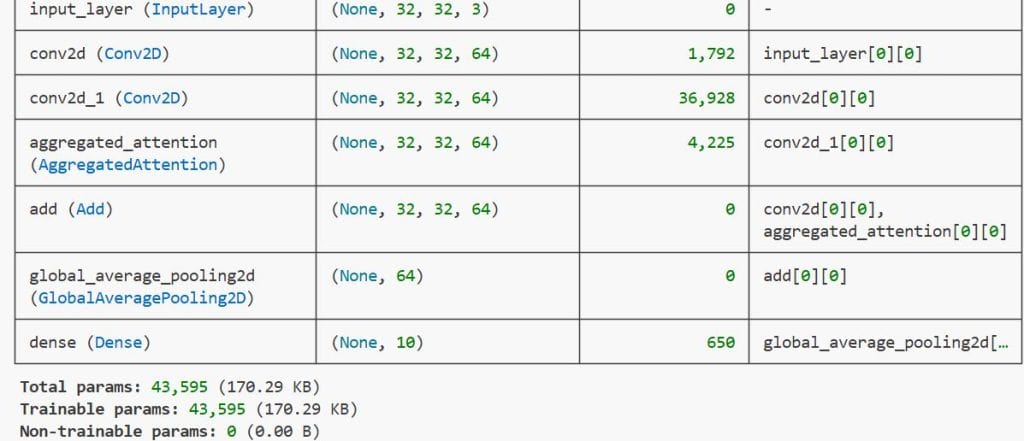

In this method, we wrap the attention mechanism inside a residual block to ensure that the original signal is not lost during the process.

Using residual connections is my favorite way to prevent vanishing gradients while still getting the benefits of the attention weights.
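Here is a simplified NumPy sketch of why the shortcut helps. The function names `attended` and `residual_attended` are hypothetical, and the sketch applies the mask straight to the block input, whereas the block in this section runs a convolution before the attention step.

```python
import numpy as np

def attended(features, mask):
    """Plain attention: the mask can scale a location all the way down to zero."""
    return features * mask

def residual_attended(features, mask):
    """Residual attention: the shortcut keeps the input even where the mask is zero."""
    return features + features * mask

features = np.ones((2, 2, 3), dtype="float32")
# Hand-picked mask of shape (2, 2, 1) with two fully suppressed locations
mask = np.array([[[0.0], [0.5]], [[1.0], [0.0]]], dtype="float32")

plain = attended(features, mask)
residual = residual_attended(features, mask)
print(plain[..., 0])
print(residual[..., 0])
```

Where the mask is 0, the plain version wipes the feature out, while the residual version still passes the original value of 1 through. That is the sense in which the original signal is not lost.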

def residual_attention_block(inputs):
    shortcut = inputs
    x = layers.Conv2D(64, (3, 3), padding="same", activation="relu")(inputs)
    x = AggregatedAttention()(x)
    # Adding the original input back to the attended output
    return layers.Add()([shortcut, x])

# Constructing the model with residual attention
inputs = layers.Input(shape=(32, 32, 3))
init_conv = layers.Conv2D(64, (3, 3), padding="same", activation="relu")(inputs)
res_attention = residual_attention_block(init_conv)
gap = layers.GlobalAveragePooling2D()(res_attention)
final_output = layers.Dense(10, activation="softmax")(gap)

res_attention_model = keras.Model(inputs, final_output)
res_attention_model.summary()

You can refer to the screenshot below to see the output.

Train the Keras Model with Aggregated Attention

Training a model with attention usually takes a bit more time per epoch because of the extra pooling and convolution. In return it can reach a given accuracy in fewer epochs, although that is not guaranteed for every dataset.

I always use a validation split to monitor how well the attention mechanism is generalizing to unseen images.
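One detail worth knowing: when you pass `validation_split` to `fit`, Keras takes the last fraction of the arrays before any shuffling. If your data is ordered (for example, sorted by class), a shuffled hold-out is safer. The sketch below is one way to do that with NumPy; the function name `shuffled_holdout` and the zero-filled stand-in arrays are assumptions for illustration.

```python
import numpy as np

def shuffled_holdout(x, y, val_fraction=0.1, seed=0):
    """Split arrays into train and validation parts after a shared shuffle."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(x))
    n_val = int(round(len(x) * val_fraction))
    val_idx, train_idx = order[:n_val], order[n_val:]
    return (x[train_idx], y[train_idx]), (x[val_idx], y[val_idx])

# Stand-in arrays with the same layout as CIFAR-10 (not real data)
x = np.zeros((100, 32, 32, 3), dtype="float32")
y = np.arange(100).reshape(-1, 1) % 10

(x_tr, y_tr), (x_val, y_val) = shuffled_holdout(x, y)
print(x_tr.shape, x_val.shape)  # (90, 32, 32, 3) (10, 32, 32, 3)
```

The validation arrays can then be handed to `fit` through `validation_data=(x_val, y_val)`.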

# Training the model on our loaded dataset
res_attention_model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# fit() returns the History object; compile() returns None
history = res_attention_model.fit(
    x_train, y_train,
    # Hold out the last 10% of the training set so the test set stays unseen
    validation_split=0.1,
    epochs=10,
    batch_size=64,
)

Visualize Keras Attention Maps

To truly understand what the model is looking at, we can recompute the spatial mask that our custom AggregatedAttention layer applies to its input.

Visualizing these maps allows me to verify that the model is focusing on the actual object (like a car or a building) and not just random noise.
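Once you have a 2-D mask for an image, one simple way to inspect it is to dim the image wherever the mask is low. The sketch below assumes an image scaled to [0, 1] and a mask of the same height and width. The function name `overlay_attention`, the `floor` parameter, and the random stand-in arrays are made up for this example.

```python
import numpy as np

def overlay_attention(image, attention_map, floor=0.3):
    """Dim an (H, W, 3) image in [0, 1] where an (H, W) attention map is low."""
    # Min-max normalize so the strongest location is 1 and the weakest is 0
    span = attention_map.max() - attention_map.min()
    normalized = (attention_map - attention_map.min()) / (span + 1e-8)
    # Keep a floor of brightness so low-attention regions stay visible
    weights = floor + (1.0 - floor) * normalized
    return image * weights[..., np.newaxis]

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3)).astype("float32")        # stand-in image
attention_map = rng.random((32, 32)).astype("float32")   # stand-in mask

overlay = overlay_attention(image, attention_map)
print(overlay.shape)  # (32, 32, 3)
```

The result is still a valid image in [0, 1], so it can be displayed with any image viewer, for example matplotlib's `imshow`.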

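def get_attention_map(model, image):
    # Find the attention layer by type instead of relying on a fixed index
    attention_layer = next(
        layer for layer in model.layers if isinstance(layer, AggregatedAttention)
    )
    # Creating a model that outputs the feature map fed into the attention layer
    feature_model = keras.Model(inputs=model.input, outputs=attention_layer.input)
    features = feature_model.predict(np.expand_dims(image, axis=0), verbose=0)
    # Recompute the mask the same way the layer does in call()
    avg_pool = tf.reduce_mean(features, axis=-1, keepdims=True)
    max_pool = tf.reduce_max(features, axis=-1, keepdims=True)
    attention = attention_layer.conv(tf.concat([avg_pool, max_pool], axis=-1))
    # Drop the batch and channel axes to get a (height, width) map
    return np.asarray(attention)[0, ..., 0]

# Use an image from the test set
sample_map = get_attention_map(res_attention_model, x_test[0])
print("Attention map generated for visualization.")

Evaluate the Performance of Keras Attention Models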

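After training, I always compare the test accuracy of the attention-augmented model against my initial baseline, which means compiling and training the baseline with the same settings first.

Any gain from aggregated attention tends to show up on images with cluttered backgrounds, but measure it rather than assume it. Keep in mind that the Method 1 model uses wider convolutions (64 and 128 filters) than the baseline (32 and 64), so match the filter counts if you want to isolate the effect of attention.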
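A single accuracy number can hide where the two models differ, so a per-class breakdown is often more informative. The sketch below is one way to compute it with NumPy. The function name `per_class_accuracy` is hypothetical, and the labels and predictions are a tiny hand-made example, not output from the models in this tutorial.

```python
import numpy as np

def per_class_accuracy(y_true, y_pred, num_classes=10):
    """Return the fraction of correct predictions for each class id."""
    y_true = np.asarray(y_true).reshape(-1)
    y_pred = np.asarray(y_pred).reshape(-1)
    accuracy = np.full(num_classes, np.nan)  # NaN marks classes with no samples
    for cls in range(num_classes):
        in_class = y_true == cls
        if in_class.any():
            accuracy[cls] = (y_pred[in_class] == cls).mean()
    return accuracy

# Hand-made labels in the (N, 1) layout CIFAR-10 uses, and flat predictions
y_true = np.array([[0], [0], [1], [1], [2], [2]])
y_pred = np.array([0, 1, 1, 1, 2, 0])

class_acc = per_class_accuracy(y_true, y_pred, num_classes=3)
print(class_acc)
```

With a trained model, the predicted class ids would come from taking the argmax over the softmax outputs of `model.predict(x_test)`. Running the same function on both models shows which classes, if any, benefit from attention.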

test_loss, test_acc = res_attention_model.evaluate(x_test, y_test)
print(f"Test Accuracy with Aggregated Attention: {test_acc:.4f}")

In this tutorial, I have shown you how to implement and integrate aggregated attention layers within Keras. Using these techniques allows your ConvNets to focus on critical features, leading to higher accuracy in real-world visual tasks.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.