I have spent years working with Pandas for data analysis. It is my go-to tool when I am working on my local machine.

However, there comes a point in every developer’s life where the data outgrows the memory. This is when I usually make the jump to Apache Spark.

Converting a Pandas DataFrame to a PySpark DataFrame is a common task I perform when scaling up my data pipelines.

In this tutorial, I will show you the exact methods I use to move data from Pandas to Spark.

Method 1: Use the createDataFrame() Method

This is the simplest way to handle the conversion. I use the createDataFrame() method provided by the SparkSession.

When I use this method, Spark tries to guess the data types for me. This is usually fine for smaller, simple datasets.

In this example, I am using a dataset of real estate listings in Texas. This is a common scenario I encounter when analyzing US housing market trends.

import pandas as pd

from pyspark.sql import SparkSession

# Initialize a Spark Session

spark = SparkSession.builder.appName("TexasRealEstate").getOrCreate()

# Create a Pandas DataFrame with Texas housing data

data = {

'City': ['Austin', 'Dallas', 'Houston', 'San Antonio', 'Fort Worth'],

'Average_Price': [550000, 420000, 390000, 310000, 340000],

'Available_Units': [1200, 2500, 3100, 1800, 950]

}

pandas_df = pd.DataFrame(data)

# Convert Pandas DataFrame to PySpark DataFrame

spark_df = spark.createDataFrame(pandas_df)

# Check the results

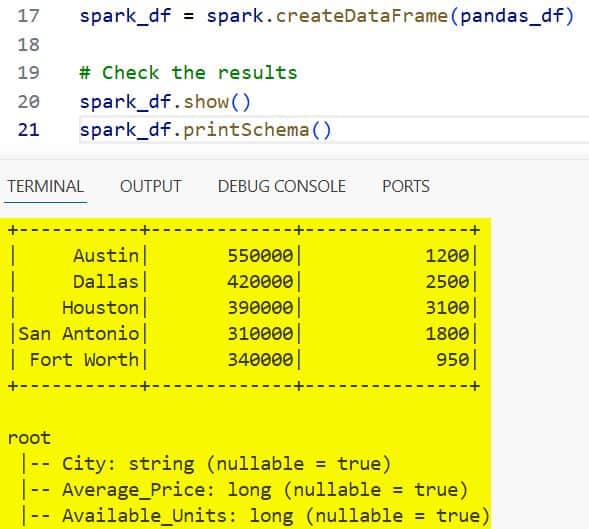

spark_df.show()

spark_df.printSchema()You can see the output in the screenshot below.

When I run this, Spark creates a distributed version of my local data. It is important to note that the column names are preserved during this process.
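To anticipate what Spark will infer, it helps to look at the Pandas dtypes before converting. The sketch below is a simplified lookup table, not PySpark's actual inference code. The name `SPARK_TYPE_BY_PANDAS_DTYPE` is hypothetical, it covers only a handful of common dtypes, and the type names are the ones printSchema() typically reports.

```python
import pandas as pd

# Simplified, hand-written lookup (an assumption for illustration, not a PySpark API).
SPARK_TYPE_BY_PANDAS_DTYPE = {
    "bool": "boolean",
    "int64": "long",
    "float64": "double",
    "datetime64[ns]": "timestamp",
    "object": "string",  # assumes the column holds only Python str values
}

pandas_df = pd.DataFrame({
    "City": ["Austin", "Dallas", "Houston"],
    "Average_Price": [550000, 420000, 390000],
    "Available_Units": [1200, 2500, 3100],
})

# Map each column's dtype name to the Spark type I would expect to see.
expected = {
    column: SPARK_TYPE_BY_PANDAS_DTYPE.get(str(dtype), "unknown")
    for column, dtype in pandas_df.dtypes.items()
}
print(expected)
```

If a column shows up as "unknown" here, or the expected type is not the one you want, that is a good sign an explicit schema is worth the effort.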

Method 2: Conversion with a Defined Schema

Sometimes Spark doesn’t guess the data types correctly. I have seen cases where a zip code is treated as an integer when it should be a string.

To avoid these headaches, I prefer to define the schema manually. This gives me full control over the data structure.
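The zip code problem is easy to reproduce on the Pandas side before Spark is involved at all. This is a minimal sketch with made-up listings (the `listings` DataFrame and its values are assumptions for illustration). It casts the integer column to a five-character string so it can be paired with a StringType field in the schema.

```python
import pandas as pd

# Hypothetical listings; Boston's zip code starts with a zero.
listings = pd.DataFrame({
    "City": ["Boston", "Austin", "New York"],
    "Zip_Code": [2108, 73301, 10001],  # stored as integers, so "02108" has lost its zero
})

# Cast to string and restore the five-digit width before handing the data to Spark.
listings["Zip_Code"] = listings["Zip_Code"].astype(str).str.zfill(5)

print(listings["Zip_Code"].tolist())  # ['02108', '73301', '10001']
```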

Here is how I convert a dataset of US employee records while enforcing specific data types for each column.

from pyspark.sql.types import StructType, StructField, StringType, IntegerType, DoubleType

# Define the schema manually

my_schema = StructType([

StructField("Employee_Name", StringType(), True),

StructField("State", StringType(), True),

StructField("Salary", IntegerType(), True),

StructField("Bonus_Percentage", DoubleType(), True)

])

# Pandas DataFrame with employee data

employee_data = {

'Employee_Name': ['Alice Smith', 'Bob Johnson', 'Charlie Davis'],

'State': ['NY', 'CA', 'TX'],

'Salary': [110000, 125000, 95000],

'Bonus_Percentage': [10.5, 12.0, 8.5]

}

pd_employee_df = pd.DataFrame(employee_data)

# Convert using the manual schema

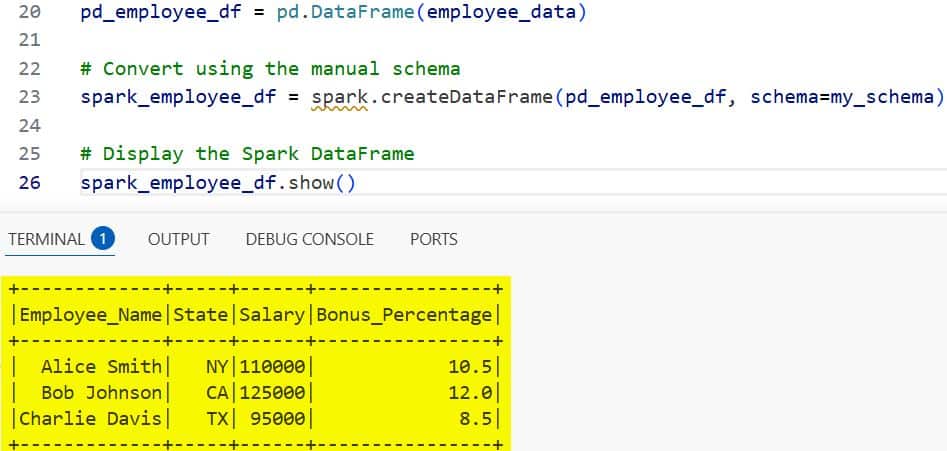

spark_employee_df = spark.createDataFrame(pd_employee_df, schema=my_schema)

# Display the Spark DataFrame

spark_employee_df.show()You can see the output in the screenshot below.

Defining the schema ahead of time is a best practice I highly recommend. It helps prevent data type mismatch errors later in your Spark transformations.

Method 3: Optimize with Apache Arrow

When I am dealing with millions of rows, the standard conversion can be very slow. This is because Spark has to serialize the data row by row.

To speed this up, I use Apache Arrow. It allows for high-performance data transfer between Python and the Spark JVM.

I always enable this when I am working with large US census datasets or financial records.

```python
import numpy as np

# Enable Apache Arrow optimization
spark.conf.set("spark.sql.execution.arrow.pyspark.enabled", "true")

# Create a large Pandas DataFrame (simulating US Census data)
census_data = {
    'County_ID': np.random.randint(1000, 9999, size=10000),
    'Population': np.random.randint(50000, 1000000, size=10000),
    'Median_Income': np.random.uniform(40000, 120000, size=10000)
}
pd_census_df = pd.DataFrame(census_data)

# Convert using Arrow optimization
spark_census_df = spark.createDataFrame(pd_census_df)

# Show the first few rows
spark_census_df.show(5)
```

By setting the configuration to true, I have seen conversion times drop significantly. You will need to have pyarrow installed in your environment for this to work.
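Since the Arrow path depends on pyarrow, one option is to check for the package before turning the setting on. The sketch below is only one way to do it: `arrow_settings` is a hypothetical helper, not part of PySpark. It also sets `spark.sql.execution.arrow.pyspark.fallback.enabled`, which lets Spark fall back to the regular conversion when an Arrow conversion fails.

```python
from importlib.util import find_spec


def arrow_settings() -> dict:
    """Build Spark conf values for Arrow (hypothetical helper, not a PySpark API)."""
    has_pyarrow = find_spec("pyarrow") is not None
    return {
        # Only turn Arrow on when pyarrow can actually be imported.
        "spark.sql.execution.arrow.pyspark.enabled": "true" if has_pyarrow else "false",
        # Let Spark fall back to the non-Arrow path if an Arrow conversion fails.
        "spark.sql.execution.arrow.pyspark.fallback.enabled": "true",
    }


settings = arrow_settings()
print(settings)

# With an active SparkSession named `spark`, the values could be applied like this:
# for key, value in settings.items():
#     spark.conf.set(key, value)
```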

Handle Timezones in Conversions

One trick I have learned over the years is that Spark and Pandas handle timezones differently.

If your Pandas DataFrame has localized timestamps (like US/Eastern), the times can appear shifted after conversion, because Spark displays timestamps in its session time zone rather than in the original one.

I usually convert my Pandas datetime columns to UTC before I move them into Spark. This ensures that my timestamps remain consistent across the cluster.
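One detail worth spelling out: tz_convert() only works on timezone-aware columns. If the timestamps are naive wall-clock values, they need a zone attached with tz_localize() first. Here is a Pandas-only sketch of both steps, using made-up event times and assuming they were recorded in America/New_York (the zone behind US/Eastern).

```python
import pandas as pd

# Hypothetical event times recorded as naive wall-clock values in New York.
events = pd.DataFrame({
    "Timestamp": pd.to_datetime(["2024-01-15 09:00:00", "2024-07-15 09:00:00"]),
})

# tz_convert raises a TypeError on naive timestamps, so attach the source zone first.
events["Timestamp"] = events["Timestamp"].dt.tz_localize("America/New_York")

# Now the column is timezone-aware and can be converted to UTC.
events["Timestamp"] = events["Timestamp"].dt.tz_convert("UTC")

print(events["Timestamp"].dt.strftime("%Y-%m-%d %H:%M").tolist())
# ['2024-01-15 14:00', '2024-07-15 13:00']
```

The January row moves by five hours and the July row by four, because daylight saving time is in effect in July.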

```python
# Convert to UTC before moving to Spark
# (assumes pandas_df has a timezone-aware 'Timestamp' column)
pandas_df['Timestamp'] = pandas_df['Timestamp'].dt.tz_convert('UTC')
spark_df = spark.createDataFrame(pandas_df)
```

Why Convert Pandas to Spark?

In my experience, Pandas is excellent for exploration, but it lives on a single machine. Spark is a distributed engine.

If you are working with data from the US Bureau of Labor Statistics that spans several gigabytes, Pandas will likely crash your machine.

By converting to Spark, you can distribute that data across multiple nodes in a cluster. This allows you to perform complex aggregations in seconds rather than minutes.

Common Issues to Avoid

I made plenty of mistakes when I first started doing this. One major issue is memory overhead.

Even though Spark is distributed, the createDataFrame() method still requires the Pandas DataFrame to be in the memory of the “Driver” node.

If your local Pandas DataFrame is 20GB and your Driver only has 16GB of RAM, you will get an Out of Memory (OOM) error.

Always make sure your Driver has enough resources to hold the initial Pandas object before the conversion starts.
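A quick way to sanity-check this is to measure the Pandas DataFrame before converting it. The sketch below is a rough rule of thumb rather than an exact calculation: `fits_on_driver` and its 50% headroom default are my own assumptions, and the real driver limit comes from your `spark.driver.memory` setting.

```python
import pandas as pd


def fits_on_driver(df: pd.DataFrame, driver_memory_bytes: int, headroom: float = 0.5) -> bool:
    """Return True if df uses at most `headroom` of the driver memory (hypothetical rule of thumb)."""
    # deep=True also counts the Python string objects held in object columns.
    df_bytes = int(df.memory_usage(deep=True).sum())
    return df_bytes <= driver_memory_bytes * headroom


# Small made-up DataFrame checked against a 16 GB driver.
small_df = pd.DataFrame({"County_ID": range(1000), "Population": range(1000)})
print(fits_on_driver(small_df, driver_memory_bytes=16 * 1024**3))
```

The headroom matters because the conversion itself needs extra memory on top of the original Pandas object.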

Conclusion

In this tutorial, I showed you how to convert a Pandas DataFrame to a PySpark DataFrame using three different methods.

I personally prefer using Method 2 with a defined schema for most projects. It saves me from a lot of debugging later on. If you are working with very large datasets, Method 3 with Apache Arrow is the way to go.

I am Bijay Kumar, a Microsoft MVP in SharePoint. Apart from SharePoint, I have been working with Python, machine learning, and artificial intelligence for the last 5 years. During this time I have gained expertise in various Python libraries such as Tkinter, Pandas, NumPy, Turtle, Django, Matplotlib, TensorFlow, SciPy, and Scikit-Learn, working for clients in the United States, Canada, the United Kingdom, Australia, and New Zealand.