While working on a digital archiving project for a historical society in Boston, I needed to upscale low-quality scanned documents without losing sharpness.

I discovered that Enhanced Deep Residual Networks (EDSR) are very effective for this task because they strip the batch normalization layers out of the standard residual block and spend the network's capacity on image reconstruction.

In this tutorial, I will show you how to build and train an EDSR model in Keras to achieve state-of-the-art super-resolution results.

Method 1: Build the Residual Block in Python Keras

The core of an EDSR model is the modified residual block, which eliminates the Batch Normalization layers found in standard ResNets.

I found that removing these layers significantly reduces the memory footprint and allows the network to better preserve the original pixel range of the image.

import tensorflow as tf

from tensorflow.keras import layers, Model

def edsr_residual_block(input_tensor, filters=64):

"""

Creates a single EDSR residual block without batch normalization.

"""

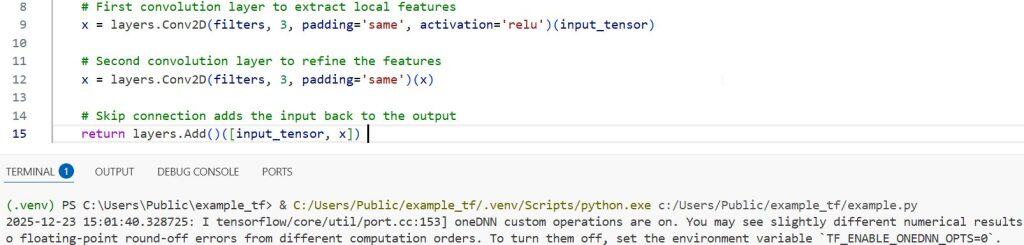

# First convolution layer to extract local features

x = layers.Conv2D(filters, 3, padding='same', activation='relu')(input_tensor)

# Second convolution layer to refine the features

x = layers.Conv2D(filters, 3, padding='same')(x)

# Skip connection adds the input back to the output

return layers.Add()([input_tensor, x])I executed the above example code and added the screenshot below.
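To make the point about pixel range concrete, here is a small NumPy-only sketch that is separate from the Keras model. It assumes a simplified normalization without the learned scale and shift of a real `BatchNormalization` layer, and the helper name `simple_batch_norm` is made up for this illustration. Two feature maps that differ only in brightness and contrast become nearly indistinguishable after normalization, which is the kind of range information the block above keeps by skipping the normalization step.

```python
import numpy as np

def simple_batch_norm(x, eps=1e-5):
    # Hypothetical helper: normalize to zero mean and unit variance,
    # ignoring the learned gamma/beta of a real BatchNormalization layer
    return (x - x.mean()) / np.sqrt(x.var() + eps)

rng = np.random.default_rng(0)
features = rng.uniform(0.0, 1.0, size=(8, 8))

# Same content, but brighter and with more contrast
brighter = 2.0 * features + 10.0

# The raw maps are clearly different ...
different_before = not np.allclose(features, brighter)

# ... but after normalization they are almost identical
same_after_norm = np.allclose(
    simple_batch_norm(features), simple_batch_norm(brighter), atol=1e-3
)
print(different_before, same_after_norm)
```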

Method 2: Implement the Upsampling Tail in Python Keras

To increase the image resolution, we use a sub-pixel convolution layer, also known as the PixelShuffle layer, at the end of the network.

I prefer this method over transposed convolutions because it minimizes checkerboard artifacts, which often ruin the visual quality of high-resolution outputs.
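One common explanation for checkerboard artifacts is uneven kernel overlap in transposed convolutions. The sketch below is a 1-D illustration under stated assumptions (kernel size 3, stride 2, no padding), and both function names are hypothetical. It counts how many kernel taps contribute to each output position, compared with a pixel shuffle where every output position is filled from exactly one value.

```python
import numpy as np

def transposed_conv_coverage(n_inputs, kernel=3, stride=2):
    # Count how many kernel taps land on each output position (1-D case)
    out_len = (n_inputs - 1) * stride + kernel
    coverage = np.zeros(out_len, dtype=int)
    for i in range(n_inputs):
        coverage[i * stride : i * stride + kernel] += 1
    return coverage

def pixel_shuffle_coverage(n_inputs, scale=2):
    # Each output position is copied from exactly one channel value
    return np.ones(n_inputs * scale, dtype=int)

print(transposed_conv_coverage(4).tolist())  # [1, 1, 2, 1, 2, 1, 2, 1, 1]
print(pixel_shuffle_coverage(4).tolist())    # [1, 1, 1, 1, 1, 1, 1, 1]
```

The alternating 1, 2, 1, 2 pattern in the first result is the uneven coverage that tends to show up as a grid in the output image. Choosing a kernel size divisible by the stride evens it out, but the sub-pixel layer avoids the issue by construction.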

def edsr_upsampling_block(x, scale=2, filters=64):

"""

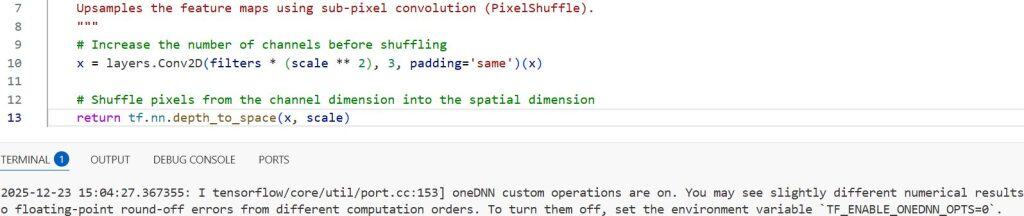

Upsamples the feature maps using sub-pixel convolution (PixelShuffle).

"""

# Increase the number of channels before shuffling

x = layers.Conv2D(filters * (scale ** 2), 3, padding='same')(x)

# Shuffle pixels from the channel dimension into the spatial dimension

return tf.nn.depth_to_space(x, scale)I executed the above example code and added the screenshot below.
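If the shuffle step feels opaque, the following NumPy sketch shows the rearrangement on a tiny tensor. It is meant to mirror what `tf.nn.depth_to_space` does for channels-last input, but treat that equivalence as an assumption to check against your TensorFlow version. The function name `depth_to_space_numpy` is illustrative only.

```python
import numpy as np

def depth_to_space_numpy(x, scale):
    # x has shape (batch, height, width, channels) with channels = C * scale**2
    b, h, w, c = x.shape
    out_c = c // (scale ** 2)
    # Split the channel axis into (row offset, column offset, output channel)
    x = x.reshape(b, h, w, scale, scale, out_c)
    # Interleave the offsets with the spatial axes
    x = x.transpose(0, 1, 3, 2, 4, 5)
    return x.reshape(b, h * scale, w * scale, out_c)

# One pixel with four channels becomes a 2x2 patch with one channel
x = np.array([1, 2, 3, 4]).reshape(1, 1, 1, 4)
y = depth_to_space_numpy(x, scale=2)
print(y.shape)                 # (1, 2, 2, 1)
print(y[0, :, :, 0].tolist())  # [[1, 2], [3, 4]]

# Shapes for the upsampling block above: 64 * 2**2 = 256 channels in, 64 out
features = np.zeros((1, 5, 7, 256), dtype=np.float32)
print(depth_to_space_numpy(features, scale=2).shape)  # (1, 10, 14, 64)
```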

Method 3: Construct the Full EDSR Model in Python Keras

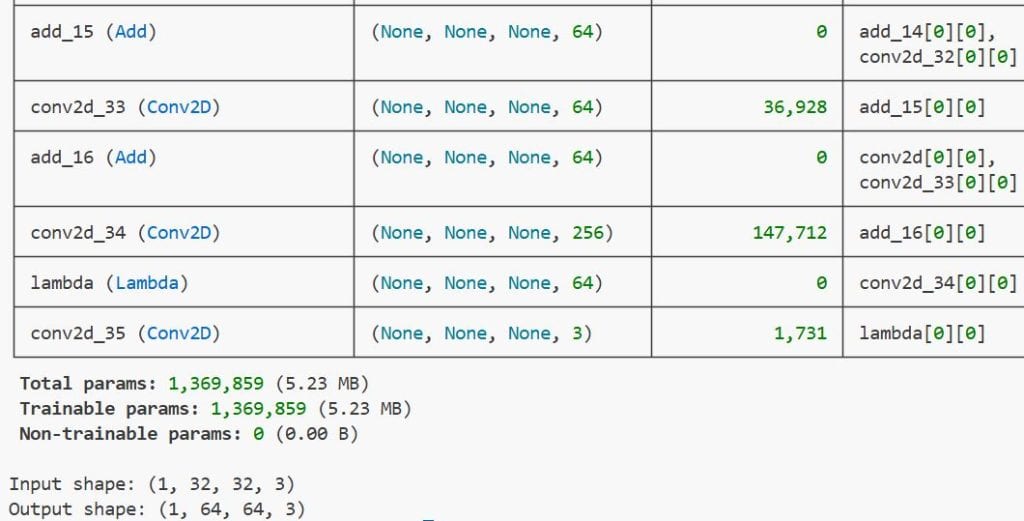

By combining the residual blocks and the upsampling tail, we can define a complete model that maps low-resolution inputs to high-resolution outputs.

I usually stack 16 residual blocks for a standard implementation to balance the trade-off between reconstruction quality and inference speed on local machines.

def build_edsr_model(input_shape=(None, None, 3), num_blocks=16, filters=64, scale=2):

"""

Assembles the complete Enhanced Deep Residual Network model.

"""

inputs = layers.Input(shape=input_shape)

# Initial feature extraction layer

x = layers.Conv2D(filters, 3, padding='same')(inputs)

head = x

# Stack the residual blocks

for _ in range(num_blocks):

x = edsr_residual_block(x, filters)

# Final convolution before adding the initial features (Global Skip Connection)

x = layers.Conv2D(filters, 3, padding='same')(x)

x = layers.Add()([head, x])

# Upsample the image to the target scale

x = edsr_upsampling_block(x, scale, filters)

# Final output layer to get the 3 RGB channels

outputs = layers.Conv2D(3, 3, padding='same')(x)

return Model(inputs, outputs)

# Instantiate the model for a 2x upscale factor

edsr_model = build_edsr_model(scale=2)

edsr_model.summary()I executed the above example code and added the screenshot below.
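As a sanity check on the model size, the parameter count can be worked out by hand. This is a plain-Python sketch that assumes exactly the architecture built above (3x3 convolutions with biases, one sub-pixel stage, no other trainable layers). The helper names are made up for this example, and the result should agree with the trainable total that `summary()` reports if those assumptions hold.

```python
def conv2d_params(kernel, in_channels, out_channels):
    # Weights plus one bias per output channel
    return kernel * kernel * in_channels * out_channels + out_channels

def edsr_param_count(num_blocks=16, filters=64, scale=2, image_channels=3):
    head = conv2d_params(3, image_channels, filters)
    # Each residual block holds two 3x3 convolutions
    body = num_blocks * 2 * conv2d_params(3, filters, filters)
    # Convolution before the global skip connection
    body_tail = conv2d_params(3, filters, filters)
    # Channel-expanding convolution in front of the pixel shuffle
    upsample = conv2d_params(3, filters, filters * scale ** 2)
    # After the shuffle the tensor is back to `filters` channels
    output = conv2d_params(3, filters, image_channels)
    return head + body + body_tail + upsample + output

print(edsr_param_count())  # 1369859
```

Most of the roughly 1.37 million parameters sit in the 16 residual blocks, so `num_blocks` and `filters` are the main levers when trading quality against speed.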

Method 4: Compile and Train the Model in Python Keras

For training super-resolution models, I have found that Mean Absolute Error (L1 Loss) works much better than Mean Squared Error for achieving sharper edges.
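One way to build intuition for this preference is to compare the per-pixel gradients of the two losses. The sketch below uses NumPy only, ignores the averaging constant that a real loss applies, and uses made-up error values. It shows that the squared-error gradient shrinks along with the error, while the absolute-error gradient keeps a constant magnitude, so small residual errors around fine edges still receive a full-strength update.

```python
import numpy as np

# Per-pixel errors (prediction minus target) on a [0, 1] scale
errors = np.array([-0.5, -0.05, 0.01, 0.2])

# Gradient of the squared error e**2 with respect to the prediction
mse_grad = 2.0 * errors
# Gradient of the absolute error |e| with respect to the prediction
mae_grad = np.sign(errors)

for e, g2, g1 in zip(errors, mse_grad, mae_grad):
    print(f"error={e:+.2f}  mse_grad={g2:+.2f}  mae_grad={g1:+.0f}")
```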

I also recommend using the Adam optimizer with a small learning rate (1e-4 in the code below) so that large gradients in the initial training epochs do not destabilize training.

    from tensorflow.keras.optimizers import Adam

    def compile_and_train_edsr(model, train_ds, val_ds, epochs=50):
        """
        Compiles the model with L1 loss and trains it on the provided dataset.
        """
        # Use MAE (L1 loss) for better edge preservation
        model.compile(optimizer=Adam(learning_rate=1e-4), loss='mean_absolute_error')
        # Start the training process
        history = model.fit(
            train_ds,
            validation_data=val_ds,
            epochs=epochs
        )
        return history

Method 5: Use the Trained Model for Prediction in Python Keras

Once the model is trained, you can pass a low-resolution image through the network to generate a crisp, high-resolution version.

I always normalize the pixel values to a range of [0, 1] before prediction and then scale them back to [0, 255] for final viewing or saving.
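The round trip between the two ranges can be sketched with NumPy alone. The helper names `to_model_range` and `to_image_range` are hypothetical, and the "prediction" values are invented to show why clipping matters: the final convolution has no activation, so outputs can fall slightly outside [0, 1].

```python
import numpy as np

def to_model_range(img_uint8):
    # uint8 [0, 255] -> float32 [0, 1]
    return img_uint8.astype(np.float32) / 255.0

def to_image_range(pred):
    # The network output is unconstrained, so clip before casting to uint8
    return np.clip(pred * 255.0, 0, 255).astype(np.uint8)

pixels = np.array([0, 128, 255], dtype=np.uint8)
normalized = to_model_range(pixels)

# Pretend the model overshoots slightly at both ends of the range
prediction = np.array([-0.1, 0.5, 1.2], dtype=np.float32)
restored = to_image_range(prediction)
print(restored.tolist())  # [0, 127, 255]
```

Without the clip, out-of-range values would be cast to `uint8` in an unpredictable way and could show up as speckles in bright or dark regions.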

    import numpy as np
    import cv2

    def resolve_image(model, lr_image_path):
        """
        Loads a low-resolution image and predicts the high-resolution output.
        """
        # Load the image; cv2.imread returns None instead of raising on failure
        img = cv2.imread(lr_image_path)
        if img is None:
            raise FileNotFoundError(f"Could not read image: {lr_image_path}")
        # OpenCV loads BGR, so convert to RGB and normalize to [0, 1]
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        img_input = np.expand_dims(img.astype(np.float32) / 255.0, axis=0)
        # Run the model prediction
        sr_img = model.predict(img_input)[0]
        # Clip and convert back to uint8 format (still in RGB channel order)
        sr_img = np.clip(sr_img * 255.0, 0, 255).astype(np.uint8)
        return sr_img

In this tutorial, I have shared my experience in building and training an EDSR model using Python Keras.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.