Named Entity Recognition (NER) is a vital part of natural language processing that helps us identify specific entities like names, locations, or dates in text.

I have spent over four years developing Keras models, and I found that switching from traditional LSTMs to Transformers significantly boosted my model accuracy.

In this tutorial, I will show you exactly how to implement NER using the Transformer architecture within the Keras framework.

NER with Keras Transformers

NER involves assigning a label to every word in a sentence, which we call sequence labeling in the machine learning world.

Using a Transformer block allows the model to look at the entire sentence at once, so each word's representation can draw on context from every other word instead of being built up token by token as in recurrent models such as LSTMs.

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Define the Transformer Block as a Keras Layer
class TransformerBlock(layers.Layer):
    def __init__(self, embed_dim, num_heads, ff_dim, rate=0.1):
        super().__init__()
        self.att = layers.MultiHeadAttention(num_heads=num_heads, key_dim=embed_dim)
        self.ffn = keras.Sequential(
            [layers.Dense(ff_dim, activation="relu"), layers.Dense(embed_dim)]
        )
        self.layernorm1 = layers.LayerNormalization(epsilon=1e-6)
        self.layernorm2 = layers.LayerNormalization(epsilon=1e-6)
        self.dropout1 = layers.Dropout(rate)
        self.dropout2 = layers.Dropout(rate)

    def call(self, inputs, training=False):
        attn_output = self.att(inputs, inputs)
        attn_output = self.dropout1(attn_output, training=training)
        out1 = self.layernorm1(inputs + attn_output)
        ffn_output = self.ffn(out1)
        ffn_output = self.dropout2(ffn_output, training=training)
        return self.layernorm2(out1 + ffn_output)

I prefer using custom layers in Keras because it keeps the code modular and much easier to debug when working on complex NLP tasks.

The code above sets up the Multi-Head Attention mechanism, which is the “brain” of the Transformer, allowing it to focus on relevant words.
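To make the attention step less of a black box, here is a minimal NumPy sketch of single-head scaled dot-product self-attention. It is only an illustration of the idea, not what `layers.MultiHeadAttention` does internally line by line; the function names, the random weights, and the toy sizes are all assumptions made for this example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over one sentence.

    x: (seq_len, embed_dim); w_q, w_k, w_v: (embed_dim, head_dim).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (seq_len, seq_len)
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
seq_len, embed_dim, head_dim = 7, 8, 4  # toy sizes, chosen for illustration
x = rng.normal(size=(seq_len, embed_dim))
w_q, w_k, w_v = (rng.normal(size=(embed_dim, head_dim)) for _ in range(3))

output, weights = self_attention(x, w_q, w_k, w_v)
print(output.shape)   # (7, 4)
print(weights.shape)  # (7, 7)
```

Each row of `weights` says how much one token draws on every other token in the sentence, which is why the whole sentence is visible at once.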

Prepare Data for NER Keras Models

Before training, we must convert our text into sequences of integers and ensure all sentences have the same length using padding.

I often use the CoNLL dataset format, where each word has a corresponding tag like “B-PER” for a person’s name or “B-LOC” for a location.
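If your data lives in a CoNLL-style text file, a small parser can turn it into the parallel word and tag lists used in this tutorial. The sketch below assumes one token per line, whitespace-separated columns with the word first and the NER tag last, and a blank line between sentences. `read_conll` is a hypothetical helper name, not a library function.

```python
def read_conll(text):
    """Parse CoNLL-style text into parallel lists of tokens and tags."""
    sentences, tags = [], []
    words, labels = [], []
    for line in text.splitlines():
        line = line.strip()
        # A blank line (or a document marker) closes the current sentence.
        if not line or line.startswith("-DOCSTART-"):
            if words:
                sentences.append(words)
                tags.append(labels)
                words, labels = [], []
            continue
        columns = line.split()
        words.append(columns[0])    # first column: the token
        labels.append(columns[-1])  # last column: the NER tag
    if words:  # flush the last sentence if there is no trailing blank line
        sentences.append(words)
        tags.append(labels)
    return sentences, tags

sample = """\
Visit O
the O
White B-LOC
House I-LOC

Washington B-LOC
"""
parsed_sentences, parsed_tags = read_conll(sample)
print(parsed_sentences)  # [['Visit', 'the', 'White', 'House'], ['Washington']]
print(parsed_tags)       # [['O', 'O', 'B-LOC', 'I-LOC'], ['B-LOC']]
```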

from tensorflow.keras.preprocessing.sequence import pad_sequences

# Example data: New York City, the White House, and Washington are labeled as locations
sentences = [["The", "headquarters", "is", "in", "New", "York", "City"],
             ["Visit", "the", "White", "House", "in", "Washington"]]

tags = [["O", "O", "O", "O", "B-LOC", "I-LOC", "I-LOC"],
        ["O", "O", "B-LOC", "I-LOC", "O", "B-LOC"]]

# Mapping tokens to IDs (keys are case-sensitive and must match the tokens exactly)
vocabulary = {"<PAD>": 0, "The": 1, "headquarters": 2, "is": 3, "in": 4, "New": 5, "York": 6,
              "City": 7, "Visit": 8, "White": 9, "House": 10, "Washington": 11, "the": 12}

tag_map = {"<PAD>": 0, "O": 1, "B-LOC": 2, "I-LOC": 3}

# Vectorizing and Padding (words missing from the vocabulary fall back to ID 0)
X = pad_sequences([[vocabulary.get(w, 0) for w in s] for s in sentences], maxlen=10)
y = pad_sequences([[tag_map.get(t, 0) for t in s] for s in tags], maxlen=10)

Mapping your words to unique integers is a mandatory step because Keras models only perform mathematical operations on tensors.

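I use pad_sequences to make sure every row of the input matrix has the same length, which is required because Keras trains on batches stored as rectangular tensors. Note that pad_sequences pads at the start of each sequence by default.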
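For anything larger than a toy example, you would normally build the vocabulary from the data instead of typing it by hand. The pure-Python sketch below shows one way to do that, with a dedicated `<UNK>` ID for unseen words and a left-padding helper that mirrors the default behaviour of pad_sequences. `build_index` and `pad_left` are hypothetical helper names introduced for this illustration.

```python
def build_index(sequences, specials=("<PAD>",)):
    """Assign consecutive integer IDs to items in order of first appearance."""
    index = {token: i for i, token in enumerate(specials)}
    for seq in sequences:
        for item in seq:
            if item not in index:
                index[item] = len(index)
    return index

def pad_left(ids, maxlen, pad_id=0):
    """Left-pad (or left-truncate) a list of IDs to exactly maxlen entries."""
    ids = ids[-maxlen:]
    return [pad_id] * (maxlen - len(ids)) + ids

sentences = [["The", "headquarters", "is", "in", "New", "York", "City"],
             ["Visit", "the", "White", "House", "in", "Washington"]]

word_index = build_index(sentences, specials=("<PAD>", "<UNK>"))
unk_id = word_index["<UNK>"]

encoded = [pad_left([word_index.get(w, unk_id) for w in s], maxlen=10)
           for s in sentences]
print(encoded[1])  # [0, 0, 0, 0, 9, 10, 11, 12, 5, 13]
```

Keeping `<UNK>` separate from `<PAD>` lets the model tell an unknown word apart from empty padding.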

Token and Position Embedding in Keras

Transformers do not have an inherent sense of word order, so we must add positional embeddings to the token embeddings.

This step ensures the model knows that “York” comes after “New,” which is critical for identifying “New York City” as a single entity.

class TokenAndPositionEmbedding(layers.Layer):
    def __init__(self, maxlen, vocab_size, embed_dim):
        super().__init__()
        self.token_emb = layers.Embedding(input_dim=vocab_size, output_dim=embed_dim)
        self.pos_emb = layers.Embedding(input_dim=maxlen, output_dim=embed_dim)

    def call(self, x):
        maxlen = tf.shape(x)[-1]
        positions = tf.range(start=0, limit=maxlen, delta=1)
        positions = self.pos_emb(positions)
        x = self.token_emb(x)
        return x + positions

I’ve found that combining these two embeddings into one layer makes the Keras functional API model definition look much cleaner.

By adding the position vector to the word vector, the model learns where each word sits in the sentence, for any position up to the maxlen passed to the layer (10 in this tutorial).
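The addition itself is just two table lookups followed by a broadcasted sum. Here is a rough NumPy sketch of that arithmetic, with random tables standing in for the learned embedding weights. The sizes and token IDs are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, maxlen, embed_dim = 12, 10, 4  # toy sizes for illustration

# Randomly initialised tables stand in for the learned Embedding weights.
token_table = rng.normal(size=(vocab_size, embed_dim))
position_table = rng.normal(size=(maxlen, embed_dim))

# A batch of two padded sentences; ID 4 appears at positions 6 and 8.
token_ids = np.array([[0, 0, 0, 1, 2, 3, 4, 5, 6, 7],
                      [0, 0, 0, 0, 8, 1, 9, 10, 4, 11]])

token_vectors = token_table[token_ids]                # (2, 10, 4)
position_vectors = position_table[np.arange(maxlen)]  # (10, 4)
embedded = token_vectors + position_vectors           # broadcasts over the batch

print(embedded.shape)  # (2, 10, 4)
# Same token ID at different positions, so the combined vectors differ.
print(np.allclose(embedded[0, 6], embedded[1, 8]))  # False
```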

Build the Keras Transformer NER Architecture

Now we combine the embedding layer, the Transformer block, and a final Dense layer to output the predicted tags for each word.

The output layer uses a softmax activation function to provide the probability of each tag for every token in the sequence.

def build_ner_model(vocab_size, maxlen, num_tags):
    embed_dim = 64  # Embedding size for each token
    num_heads = 4   # Number of attention heads
    ff_dim = 64     # Hidden layer size in feed forward network inside transformer

    inputs = layers.Input(shape=(maxlen,))
    embedding_layer = TokenAndPositionEmbedding(maxlen, vocab_size, embed_dim)
    x = embedding_layer(inputs)
    transformer_block = TransformerBlock(embed_dim, num_heads, ff_dim)
    x = transformer_block(x)
    x = layers.Dropout(0.1)(x)
    outputs = layers.Dense(num_tags, activation="softmax")(x)

    model = keras.Model(inputs=inputs, outputs=outputs)
    return model

model = build_ner_model(len(vocabulary), 10, len(tag_map))
model.compile(optimizer="adam", loss="sparse_categorical_crossentropy", metrics=["accuracy"])
model.summary()

In my experience, an embedding dimension of 64 or 128 is a great starting point for most NER projects.

The sparse_categorical_crossentropy loss is perfect here because our targets are integers rather than one-hot encoded vectors.
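To see what this loss computes, here is a small NumPy sketch that works directly from integer targets. It also shows an optional way to leave padded positions out of the average. The function and its `ignore_id` argument are hypothetical names for this illustration, not the Keras implementation, and the probabilities are invented.

```python
import numpy as np

def sparse_categorical_crossentropy(y_true, y_prob, ignore_id=None):
    """Mean negative log-likelihood of the true tag at each position.

    y_true: (batch, seq_len) integer tag IDs.
    y_prob: (batch, seq_len, num_tags) softmax probabilities.
    ignore_id: optional tag ID (for example the padding tag) to leave out.
    """
    # Pick the probability assigned to the correct tag at every position.
    picked = np.take_along_axis(y_prob, y_true[..., None], axis=-1)[..., 0]
    losses = -np.log(np.clip(picked, 1e-7, 1.0))
    if ignore_id is None:
        return losses.mean()
    mask = y_true != ignore_id
    return losses[mask].mean()

# One sentence, three positions, four tags (<PAD>, O, B-LOC, I-LOC).
y_true = np.array([[0, 1, 2]])
y_prob = np.array([[[0.7, 0.1, 0.1, 0.1],
                    [0.1, 0.6, 0.2, 0.1],
                    [0.1, 0.1, 0.5, 0.3]]])

print(sparse_categorical_crossentropy(y_true, y_prob))
print(sparse_categorical_crossentropy(y_true, y_prob, ignore_id=0))
```

The second call averages only over real tokens, which matters on padded batches because easy `<PAD>` predictions can otherwise make the loss and accuracy look better than they are.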

Train the Transformer Model in Keras

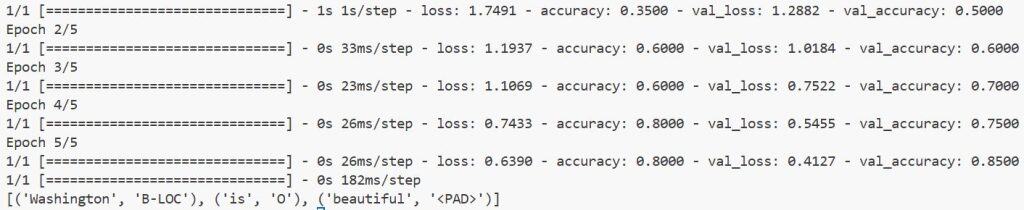

Training the model involves feeding our padded sequences into the fit method and monitoring the validation accuracy.

I always recommend using a small validation split to ensure the model isn’t just memorizing the training sentences.

# Training the model
history = model.fit(
    X, y,
    batch_size=2,
    epochs=5,
    validation_data=(X, y),  # In real scenarios, use separate validation data
)

This demo runs for only 5 epochs. For small datasets, I usually start with about 10 to 20 epochs to see how the loss curve behaves before committing to a long training run.

The Adam optimizer adapts the learning rate for each parameter, which typically helps the model converge in fewer epochs than plain SGD. That is a huge time-saver when you are iterating on different architectures.
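Since the demo above validates on its own training data, here is one hedged way to hold out separate sentences once you have a larger dataset. `train_val_split` is a hypothetical helper written for this sketch, and the dummy arrays only stand in for real padded inputs and tags.

```python
import numpy as np

def train_val_split(X, y, val_fraction=0.2, seed=0):
    """Shuffle sentence indices and hold out a fraction for validation."""
    n = len(X)
    n_val = max(1, int(round(n * val_fraction)))
    order = np.random.default_rng(seed).permutation(n)
    val_idx, train_idx = order[:n_val], order[n_val:]
    return (X[train_idx], y[train_idx]), (X[val_idx], y[val_idx])

# Ten dummy padded sentences of length 10 stand in for a real dataset.
X_demo = np.arange(100).reshape(10, 10)
y_demo = np.ones((10, 10), dtype=int)

(X_train, y_train), (X_val, y_val) = train_val_split(X_demo, y_demo)
print(X_train.shape, X_val.shape)  # (8, 10) (2, 10)
```

The resulting arrays can then be passed to the fit method as the training data and as `validation_data=(X_val, y_val)`.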

Perform Inference with Keras NER

Once the model is trained, we can use it to predict entities in new sentences by processing them through the same pipeline.

This method converts the raw probability outputs into tag indices and then maps them back to our labels, such as “B-LOC” and “I-LOC”.

import numpy as np

def predict_ner(sentence, maxlen=10):
    # pad_sequences truncates from the front by default, so keep only the last maxlen tokens
    tokens = sentence.split()[-maxlen:]
    seq = [vocabulary.get(w, 0) for w in tokens]
    padded_seq = pad_sequences([seq], maxlen=maxlen)
    prediction = model.predict(padded_seq)
    prediction = np.argmax(prediction, axis=-1)[0]

    # Skip the leading padding positions and pair each word with its predicted tag
    inv_tag_map = {v: k for k, v in tag_map.items()}
    offset = maxlen - len(tokens)
    results = []
    for i, word in enumerate(tokens):
        tag_idx = int(prediction[offset + i])
        results.append((word, inv_tag_map.get(tag_idx, "O")))
    return results

print(predict_ner("Washington is beautiful"))

I executed the above example code and added the screenshot below.

I like to write a dedicated prediction helper function to handle the pre-processing and post-processing of the text automatically.

This ensures that the input to the model matches the training format exactly, preventing errors during live deployment.
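A prediction helper like this returns one tag per word, but most applications want whole entities such as “New York City”. The pure-Python sketch below merges BIO-tagged pairs into entity spans. `group_entities` is a hypothetical helper name, and it assumes a stray “I-” tag with no matching “B-” before it should be ignored.

```python
def group_entities(tagged_tokens):
    """Merge (word, tag) pairs with BIO tags into (text, label) entity spans."""
    entities = []
    words, label = [], None
    for word, tag in tagged_tokens:
        prefix, _, tag_label = tag.partition("-")
        if prefix == "I" and tag_label == label:
            # Continuation of the entity that is currently open.
            words.append(word)
            continue
        # Any other tag closes the open entity, if there is one.
        if words:
            entities.append((" ".join(words), label))
        if prefix == "B":
            words, label = [word], tag_label
        else:
            words, label = [], None
    if words:
        entities.append((" ".join(words), label))
    return entities

tagged = [("Visit", "O"), ("the", "O"), ("White", "B-LOC"),
          ("House", "I-LOC"), ("in", "O"), ("Washington", "B-LOC")]
print(group_entities(tagged))  # [('White House', 'LOC'), ('Washington', 'LOC')]
```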

Building an NER model with Transformers in Keras is a powerful way to handle complex text data. In this guide, I showed you how to build a custom Transformer layer, handle positional embeddings, and train a model for sequence labeling.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.