In this tutorial, I will show you how to leverage pre-trained word embeddings in Keras to boost your NLP model performance.

I have spent over four years building Keras models, and I have found that using pre-trained weights is a game-changer for small datasets.

It allows your model to start with a deep understanding of language patterns instead of learning every word from scratch.

Use Pre-trained Word Embeddings in Keras

Word embeddings represent words as dense vectors, with similar words placed closer together in a multi-dimensional space.
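Closeness between embeddings is usually measured with cosine similarity. The sketch below uses tiny, made-up 3-dimensional vectors (hypothetical values, not real GloVe or Word2Vec embeddings) just to show the computation:

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical toy embeddings; real GloVe vectors have 50 to 300 dimensions
toy_vectors = {
    "coffee": np.array([0.9, 0.8, 0.1]),
    "latte": np.array([0.85, 0.75, 0.2]),
    "ticket": np.array([0.1, 0.2, 0.95]),
}

print(cosine_similarity(toy_vectors["coffee"], toy_vectors["latte"]))   # close to 1
print(cosine_similarity(toy_vectors["coffee"], toy_vectors["ticket"]))  # much lower
```

Related words end up pointing in similar directions, which is what "closer together" means in practice.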

By using pre-trained embeddings like GloVe or Word2Vec, you reuse knowledge learned from corpora of billions of words, such as Wikipedia and news text.

Set Up Your Environment for Keras Word Embeddings

Before we dive into the code, you need to ensure your Python environment is ready with the necessary libraries.

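import numpy as np
import tensorflow as tf
from tensorflow.keras.preprocessing.text import Tokenizer
from tensorflow.keras.preprocessing.sequence import pad_sequences
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Embedding, Flatten, Dense

I personally use the TensorFlow implementation of Keras (tf.keras) for its stability and its integration with GPU acceleration. Note that recent TensorFlow releases mark Tokenizer as deprecated in favor of the TextVectorization layer, so this tutorial assumes a TensorFlow 2.x installation where these legacy utilities are still available.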

Prepare the Dataset for Keras NLP Models

For this example, let’s use reviews of a fictional coffee shop chain in the United States to make the data relatable.

# Sample data: reviews of a fictional US coffee shop
reviews = [
    'I love the morning latte from this place',
    'The blueberry muffin was dry and tasteless',
    'Great customer service at the New York branch',
    'Long lines and cold coffee in the Chicago store',
    'The seasonal pumpkin spice is the best in town',
    'Terrible experience with the mobile app ordering'
]

# Labels: 1 for positive, 0 for negative
labels = np.array([1, 0, 1, 0, 1, 0])

This gives us a small set of text data and the corresponding sentiment labels (1 for positive, 0 for negative), matched by position.

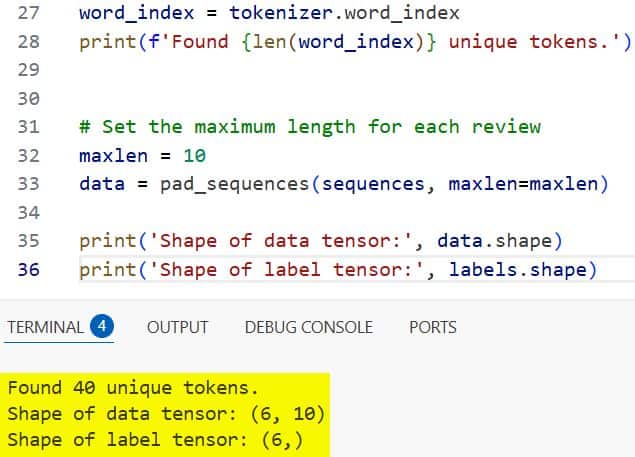

Tokenize Text Data in Keras

Tokenization is the process of converting each sentence into a sequence of integers, where each integer represents a specific word.

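# Initialize the tokenizer; sequences keep only the most frequent words (up to num_words - 1)
tokenizer = Tokenizer(num_words=1000)
tokenizer.fit_on_texts(reviews)
sequences = tokenizer.texts_to_sequences(reviews)

# Map words to their unique integer indices (index 0 is reserved and never assigned to a word)
word_index = tokenizer.word_index
print(f'Found {len(word_index)} unique tokens.')

I always limit my vocabulary size to the most frequent words to keep the model efficient and reduce overfitting. Note that num_words only filters the output of texts_to_sequences; word_index still lists every word the tokenizer has seen.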
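Conceptually, fit_on_texts ranks words by frequency and assigns indices starting at 1, and texts_to_sequences replaces each word with its index. The following is a simplified pure-Python approximation of that behavior, assuming lowercase text split on spaces; the helper names fit_word_index and to_sequences are hypothetical, and the sketch skips Keras's punctuation filtering and OOV-token options:

```python
from collections import Counter

def fit_word_index(texts):
    """Assign indices from 1 upward, most frequent word first (ties keep first-seen order)."""
    counts = Counter()
    for text in texts:
        counts.update(text.lower().split())
    # sorted() stays stable with reverse=True, so equal counts keep insertion order
    ranked = sorted(counts, key=counts.get, reverse=True)
    return {word: i for i, word in enumerate(ranked, start=1)}

def to_sequences(texts, word_index, num_words=None):
    """Replace each known word with its index; unknown or too-rare words are dropped."""
    sequences = []
    for text in texts:
        seq = []
        for word in text.lower().split():
            i = word_index.get(word)
            # Skip words never seen, or ranked at or beyond num_words
            if i is None or (num_words is not None and i >= num_words):
                continue
            seq.append(i)
        sequences.append(seq)
    return sequences

word_index = fit_word_index(['I love the morning latte', 'The latte was cold'])
print(word_index)
print(to_sequences(['the latte was amazing'], word_index))
```

Words missing from the index are simply skipped, which mirrors what Tokenizer does when no oov_token is set.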

Pad Sequences for Uniform Keras Input

Keras requires all input sequences in a batch to have the same length, so we must pad shorter reviews with zeros (and truncate any review longer than the limit).

# Set the maximum length for each review
maxlen = 10
data = pad_sequences(sequences, maxlen=maxlen)
print('Shape of data tensor:', data.shape)
print('Shape of label tensor:', labels.shape)

I executed the above example code and added the screenshot below.

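By default, pad_sequences adds the zeros at the start of each sequence (padding='pre'), which is what the code above does. I prefer “post” padding because it feels more intuitive to have the actual data at the beginning of the vector; to use it, pass padding='post' to pad_sequences, and use the same setting again when you pad new text for prediction.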

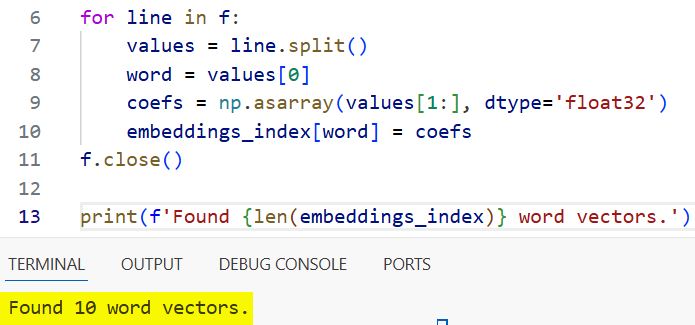
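pad_sequences both pads and truncates, and by default both happen at the start of a sequence. This pure-Python sketch (the function name pad is hypothetical) reproduces that default and the 'post' alternatives for plain lists of integers:

```python
def pad(sequences, maxlen, padding="pre", truncating="pre", value=0):
    """Pad or truncate each integer sequence to exactly maxlen items (maxlen >= 1)."""
    result = []
    for seq in sequences:
        seq = list(seq)
        if len(seq) > maxlen:
            # 'pre' drops items from the start, 'post' drops them from the end
            seq = seq[-maxlen:] if truncating == "pre" else seq[:maxlen]
        fill = [value] * (maxlen - len(seq))
        # 'pre' puts the zeros in front of the data, 'post' after it
        result.append(fill + seq if padding == "pre" else seq + fill)
    return result

print(pad([[5, 2, 9], [7]], maxlen=4))                  # [[0, 5, 2, 9], [0, 0, 0, 7]]
print(pad([[5, 2, 9], [7]], maxlen=4, padding="post"))  # [[5, 2, 9, 0], [7, 0, 0, 0]]
```

Whichever option you choose, the same settings must be used for training and inference so that word positions line up.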

Method 1: Load GloVe Pre-trained Embeddings in Keras

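A common way to use pre-trained embeddings is to download a GloVe (Global Vectors for Word Representation) file from the Stanford NLP GloVe project page. The glove.6B archive, trained on about 6 billion tokens of Wikipedia and Gigaword text, includes 50-, 100-, 200-, and 300-dimensional versions.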

# Assuming 'glove.6B.100d.txt' is downloaded to your local directory
embeddings_index = {}
with open('glove.6B.100d.txt', encoding='utf-8') as f:
    for line in f:
        # Each line is a word followed by its 100 vector components
        values = line.split()
        word = values[0]
        coefs = np.asarray(values[1:], dtype='float32')
        embeddings_index[word] = coefs

print(f'Found {len(embeddings_index)} word vectors.')

I executed the above example code and added the screenshot below.

I usually use the 100-dimensional version because it offers a good balance between accuracy and computational speed.
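Each line of a GloVe text file is a word followed by its vector components, separated by spaces. The sketch below wraps the loading loop in a hypothetical load_glove_vectors function that accepts any iterable of lines, so it can be tried on an in-memory sample. Splitting from the right with rsplit is a defensive choice, assuming some files may contain tokens with spaces; for glove.6B, the plain line.split() above works.

```python
import io
import numpy as np

def load_glove_vectors(lines, dim):
    """Parse GloVe-format lines ('word v1 ... v_dim') into {word: float32 vector}."""
    index = {}
    for line in lines:
        # Split off exactly `dim` numbers from the right; whatever remains is the token
        parts = line.rstrip().rsplit(" ", dim)
        if len(parts) != dim + 1:
            continue  # skip blank or malformed lines
        index[parts[0]] = np.asarray(parts[1:], dtype="float32")
    return index

# A tiny in-memory sample in GloVe format (made-up values, 3 dimensions)
sample = io.StringIO("the 0.1 0.2 0.3\ncoffee 0.5 -0.1 0.4\nnew york 0.2 0.2 0.9\n")
vectors = load_glove_vectors(sample, dim=3)
print(sorted(vectors))
```

With the real file, you would call it inside the same with open(...) block, for example load_glove_vectors(f, dim=100).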

Create the Keras Embedding Matrix

Now we need to create a matrix that maps our specific vocabulary indices to the vectors found in the GloVe file.

# Must match the dimension of the GloVe file loaded above
embedding_dim = 100

# Row i holds the vector for the word with index i; row 0 stays zero for padding
embedding_matrix = np.zeros((len(word_index) + 1, embedding_dim))
for word, i in word_index.items():
    embedding_vector = embeddings_index.get(word)
    if embedding_vector is not None:
        embedding_matrix[i] = embedding_vector
    # Words not found in the embedding index keep an all-zero row

This matrix will be loaded directly into the Keras Embedding layer to serve as the weights for our model.
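It is worth checking how much of your vocabulary GloVe actually covers, since every missing word keeps an all-zero row. This sketch repeats the loop above inside a hypothetical build_embedding_matrix helper that also returns the missing words; the toy dictionaries stand in for word_index and embeddings_index:

```python
import numpy as np

def build_embedding_matrix(word_index, embeddings_index, embedding_dim):
    """Return (matrix, missing_words); row i is the vector for the word with index i."""
    matrix = np.zeros((len(word_index) + 1, embedding_dim), dtype="float32")
    missing = []
    for word, i in word_index.items():
        vector = embeddings_index.get(word)
        if vector is None:
            missing.append(word)  # this row stays all-zeros
        else:
            matrix[i] = vector
    return matrix, missing

# Toy inputs standing in for the tutorial's word_index and GloVe dictionary
toy_word_index = {"coffee": 1, "latte": 2, "brewtastic": 3}
toy_glove = {"coffee": np.array([0.5, -0.1], dtype="float32"),
             "latte": np.array([0.4, 0.0], dtype="float32")}

matrix, missing = build_embedding_matrix(toy_word_index, toy_glove, 2)
coverage = 1 - len(missing) / len(toy_word_index)
print(f"Coverage: {coverage:.0%}, missing: {missing}")
```

A low coverage figure usually points to a tokenization mismatch (for example casing) or to domain-specific vocabulary that GloVe never saw.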

Build the Keras Model with Pre-trained Weights

With our matrix ready, we can define the architecture and load the pre-trained weights into the Embedding layer.

model = Sequential()
model.add(Embedding(len(word_index) + 1, embedding_dim, input_length=maxlen))
model.add(Flatten())
model.add(Dense(32, activation='relu'))
model.add(Dense(1, activation='sigmoid'))

# Load the matrix into the layer and freeze it
model.layers[0].set_weights([embedding_matrix])
model.layers[0].trainable = False

I set the trainable attribute to False before compiling so that the large gradient updates coming from the randomly initialized Dense layers do not overwrite the pre-trained vectors during the first epochs.
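Conceptually, the Embedding layer is a lookup table: each integer index selects one row of embedding_matrix, and Flatten then concatenates the maxlen vectors of a review into one long vector for the Dense layers. The NumPy sketch below reproduces those shapes with random stand-in weights (41 rows assumes 40 vocabulary words plus padding row 0; the batch values are made up):

```python
import numpy as np

# Hypothetical sizes matching the tutorial's settings
vocab_rows, embedding_dim, maxlen = 41, 100, 10
rng = np.random.default_rng(0)
embedding_matrix = rng.normal(size=(vocab_rows, embedding_dim)).astype("float32")

# A batch of two padded sequences of word indices (0 = padding)
batch = np.array([[0, 0, 0, 0, 5, 12, 3, 7, 1, 9],
                  [0, 0, 0, 0, 0, 0, 2, 2, 40, 1]])

# What the Embedding layer computes: one matrix row per token
embedded = embedding_matrix[batch]            # shape (2, 10, 100)
# What Flatten does next: concatenate the 10 vectors of each sample
flattened = embedded.reshape(len(batch), -1)  # shape (2, 1000)
print(embedded.shape, flattened.shape)
```

In the real model, row 0 of the matrix is all zeros, so padding positions contribute nothing beyond zeros in the flattened vector.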

Compile and Train the Keras NLP Model

Now we compile the model using the binary crossentropy loss function, which is standard for sentiment classification tasks.
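For reference, binary cross-entropy is the mean of -[y * log(p) + (1 - y) * log(1 - p)], where y is the 0/1 label and p is the sigmoid output. A NumPy sketch, where the small clipping constant eps is an assumption chosen to avoid log(0):

```python
import numpy as np

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean of -[y*log(p) + (1-y)*log(1-p)] over all samples."""
    y_true = np.asarray(y_true, dtype="float64")
    # Clip predictions so log() never receives exactly 0 or 1
    y_pred = np.clip(np.asarray(y_pred, dtype="float64"), eps, 1 - eps)
    return float(-np.mean(y_true * np.log(y_pred) + (1 - y_true) * np.log(1 - y_pred)))

print(binary_crossentropy([1, 0], [0.9, 0.1]))  # confident and correct: small loss
print(binary_crossentropy([1, 0], [0.1, 0.9]))  # confident and wrong: large loss
```

The loss punishes confident mistakes heavily, which is why it pairs naturally with a single sigmoid output.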

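model.compile(optimizer='rmsprop',
              loss='binary_crossentropy',
              metrics=['acc'])
model.summary()

history = model.fit(data, labels,
                    epochs=10,
                    batch_size=2,
                    validation_split=0.2)

With validation_split=0.2, Keras holds out the last 20% of the samples (here, the last two of the six reviews) for validation, so the model trains on only four examples. On a dataset this tiny, the accuracy numbers are noisy and should not be read as a measure of quality; the run demonstrates the workflow. The advantage of pre-trained embeddings shows up on realistic small datasets, where the model starts from vectors that already place related words close together.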
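model.summary() lists the parameter count of each layer, and because the embedding is frozen, its weights appear as non-trainable parameters. The counts follow from simple arithmetic, sketched below with a hypothetical model_param_counts helper. The vocabulary size is computed from the six sample reviews; they contain no punctuation, so a plain lowercase split yields the same vocabulary the Tokenizer builds here.

```python
def dense_params(n_inputs, n_units):
    """A Dense layer has one weight per input-unit pair plus one bias per unit."""
    return n_inputs * n_units + n_units

def model_param_counts(vocab_size, embedding_dim=100, maxlen=10, hidden_units=32):
    """Parameter counts for Embedding -> Flatten -> Dense(hidden_units) -> Dense(1)."""
    embedding = (vocab_size + 1) * embedding_dim                 # +1 for padding row 0
    hidden = dense_params(maxlen * embedding_dim, hidden_units)  # Flatten output feeds Dense(32)
    output = dense_params(hidden_units, 1)                       # Dense(1, sigmoid)
    return {"embedding": embedding, "hidden_dense": hidden,
            "output_dense": output, "total": embedding + hidden + output}

reviews = [
    'I love the morning latte from this place',
    'The blueberry muffin was dry and tasteless',
    'Great customer service at the New York branch',
    'Long lines and cold coffee in the Chicago store',
    'The seasonal pumpkin spice is the best in town',
    'Terrible experience with the mobile app ordering',
]
vocab_size = len(set(" ".join(reviews).lower().split()))
print(vocab_size, model_param_counts(vocab_size))
```

For the sample data this gives 40 words, so the Embedding layer holds 41 * 100 = 4,100 frozen weights, while the two Dense layers contribute 32,032 and 33 trainable parameters. Flatten itself has no parameters.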

Method 2: Use Keras Embedding Layer with Fine-Tuning

Another approach is to allow the pre-trained weights to be updated during training to better fit your specific niche.

# Unfreeze the embedding layer for fine-tuning
model.layers[0].trainable = True

# Recompile so the change to `trainable` takes effect
model.compile(optimizer=tf.keras.optimizers.RMSprop(learning_rate=1e-5),
              loss='binary_crossentropy',
              metrics=['acc'])

# Train again with a lower learning rate
model.fit(data, labels, epochs=5, batch_size=2)

I recommend doing this only if you have a large enough dataset; otherwise, the updates can overfit the vectors of the few words in your training data and erode the general knowledge the embeddings started with.
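During fine-tuning, an embedding row only receives a gradient when its word appears in a training batch, so the vectors of your training words drift while rows for words that never appear in a batch keep their GloVe values. The sketch below shows one plain SGD step (not the RMSprop optimizer used above) for an embedding lookup; embedding_sgd_step and the toy values are hypothetical:

```python
import numpy as np

def embedding_sgd_step(weights, token_ids, upstream_grad, learning_rate):
    """Apply one plain-SGD update to an embedding matrix after looking up token_ids.

    token_ids: int array of shape (batch, maxlen)
    upstream_grad: gradient of the loss w.r.t. the looked-up vectors, shape (batch, maxlen, dim)
    """
    grad = np.zeros_like(weights)
    # Scatter-add: every occurrence of a token adds its gradient to that token's row
    np.add.at(grad, token_ids, upstream_grad)
    return weights - learning_rate * grad

weights = np.ones((5, 2))          # 5 vocabulary rows, 2 dimensions (toy sizes)
token_ids = np.array([[1, 1, 3]])  # token 1 appears twice, token 3 once
upstream = np.ones((1, 3, 2))      # made-up gradient values
updated = embedding_sgd_step(weights, token_ids, upstream, learning_rate=0.1)
print(updated)
```

Rows 0, 2, and 4 are untouched, while row 1 moves twice as far as row 3 because its token occurred twice.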

Save and Test Your Keras Embedding Model

Once training is complete, it is vital to save your model and test it on new, unseen phrases to verify its performance.
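The saved model contains the network and its weights but not the tokenizer, so a later session also needs the same word-to-index mapping to turn new text into matching integer sequences. Keras's Tokenizer provides to_json() for this; as a stdlib-only alternative, here is a sketch that persists word_index with json (the helper names and file location are hypothetical):

```python
import json
import os
import tempfile

def save_word_index(word_index, path):
    """Write the word-to-index mapping to a JSON file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(word_index, f)

def load_word_index(path):
    """Read the mapping back; JSON keeps string keys and integer values intact."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)

# Hypothetical round trip using a temporary directory
word_index = {"the": 1, "and": 2, "latte": 3}
path = os.path.join(tempfile.mkdtemp(), "word_index.json")
save_word_index(word_index, path)
restored = load_word_index(path)
print(restored == word_index)
```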

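# Save the model (HDF5 format)
model.save('keras_glove_model.h5')

# Test on a new review, tokenized and padded exactly like the training data
test_review = ['The breakfast burrito was amazing']
test_seq = tokenizer.texts_to_sequences(test_review)
test_padded = pad_sequences(test_seq, maxlen=maxlen)
prediction = model.predict(test_padded)
print(f'Sentiment score: {prediction[0][0]}')

I like to test with specific US-centric terms, but keep in mind that the tokenizer only knows the words it saw in the training reviews. Here, "breakfast", "burrito", and "amazing" are not in word_index, so texts_to_sequences silently drops them and the model only sees "the" and "was". For the GloVe vectors of such words to reach the model, they have to be part of the tokenizer's vocabulary, and therefore of the embedding matrix, when you build it.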
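A quick way to see how much of a test phrase the model can actually use is to check each word against the tokenizer's vocabulary. In this sketch, the hypothetical split_known_unknown helper takes any word-to-index dictionary; a dictionary built from the six sample reviews stands in for tokenizer.word_index, since only membership matters:

```python
def split_known_unknown(text, word_index):
    """Split a text's words into those the tokenizer knows and those it would drop."""
    words = text.lower().split()
    known = [w for w in words if w in word_index]
    unknown = [w for w in words if w not in word_index]
    return known, unknown

reviews = [
    'I love the morning latte from this place',
    'The blueberry muffin was dry and tasteless',
    'Great customer service at the New York branch',
    'Long lines and cold coffee in the Chicago store',
    'The seasonal pumpkin spice is the best in town',
    'Terrible experience with the mobile app ordering',
]
# Hypothetical stand-in for tokenizer.word_index (indices differ, membership is the same)
word_index = {w: i for i, w in enumerate(dict.fromkeys(" ".join(reviews).lower().split()), start=1)}

known, unknown = split_known_unknown("The breakfast burrito was amazing", word_index)
print("known:", known, "dropped:", unknown)
```

With the real model, pass tokenizer.word_index instead of the stand-in dictionary.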

In this tutorial, I showed you how to integrate pre-trained word embeddings into your Keras workflow.

Using these methods can noticeably improve your text classification results, especially when you are short on labeled data.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.