I have found that understanding context is everything when processing natural language.

Standard LSTMs process text from left to right, but often, the meaning of a word depends on what comes after it.

I remember the first time I switched from a simple LSTM to a Bidirectional LSTM; the accuracy jump on sentiment tasks was immediate and impressive.

In this tutorial, I will show you how to leverage Keras to build a powerful Bidirectional LSTM model using the classic IMDB movie review dataset.

Set Up Your Keras Environment for Bidirectional LSTM

Before we dive into the architecture, we need to ensure our environment is ready with the necessary libraries.

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras.datasets import imdb
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense, LSTM, Bidirectional, Embedding, Dropout
from tensorflow.keras.preprocessing import sequence

# Checking the version to ensure compatibility
print(tf.__version__)
```

I always prefer using the latest version of TensorFlow to ensure I have access to optimized GPU kernels for faster training.

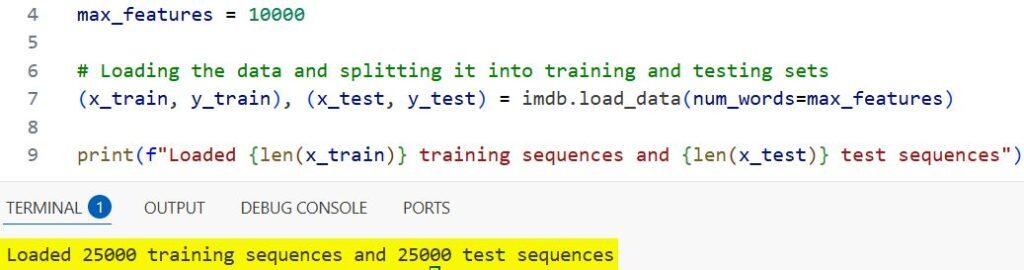

Load the IMDB Dataset for Keras LSTM Training

The IMDB dataset is a gold mine for sentiment analysis, containing 50,000 highly polar movie reviews.

```python
# Defining the number of words to consider as features
max_features = 10000

# Loading the data and splitting it into training and testing sets
(x_train, y_train), (x_test, y_test) = imdb.load_data(num_words=max_features)

print(f"Loaded {len(x_train)} training sequences and {len(x_test)} test sequences")
```

You can see the output in the screenshot below.

I typically limit the vocabulary to the top 10,000 words to prevent the model from getting bogged down by rare “noise” words.

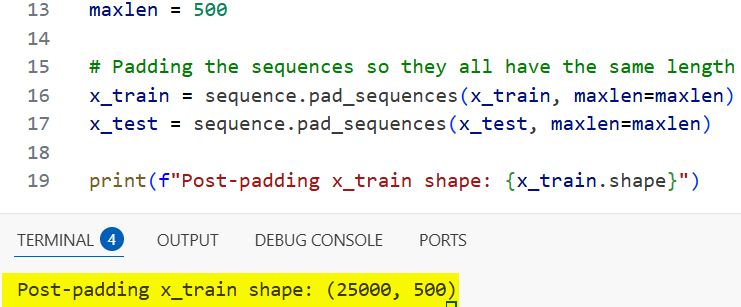
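To make the vocabulary cap concrete, here is a minimal sketch of what I understand num_words to do: any word index at or above the cutoff is replaced by the out-of-vocabulary index (2 by default in imdb.load_data). The function name cap_vocabulary and the toy review are made up for illustration; this is not the Keras source.

```python
def cap_vocabulary(encoded_review, num_words, oov_index=2):
    """Replace word indices at or above num_words with the OOV index."""
    return [idx if idx < num_words else oov_index for idx in encoded_review]

# Toy review with hypothetical indices: 1 is the start token, 25000 is a rare word
toy_review = [1, 14, 22, 25000, 9999, 10000]
capped = cap_vocabulary(toy_review, num_words=10000)
print(capped)
```

Rare words are not dropped; they all collapse into the same "unknown" token, so the review keeps its length and word order.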

Pad Sequences for Uniform Keras LSTM Input

Neural networks require input arrays of the same shape, but movie reviews vary greatly in length.

```python
# Setting the maximum sequence length
maxlen = 500

# Padding the sequences so they all have the same length
x_train = sequence.pad_sequences(x_train, maxlen=maxlen)
x_test = sequence.pad_sequences(x_test, maxlen=maxlen)

print(f"Post-padding x_train shape: {x_train.shape}")
```

You can see the output in the screenshot below.

I use the sequence.pad_sequences utility to ensure every review is exactly 500 tokens long. With its default settings, it truncates longer reviews from the start (keeping the last 500 tokens) and pads shorter ones with zeros at the start.
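The default padding behavior can be sketched in plain Python. This is only an illustration of the "pre" padding and "pre" truncation defaults, assuming a pad value of 0; pad_pre is a hypothetical helper, not part of Keras.

```python
def pad_pre(tokens, maxlen, pad_value=0):
    """Mimic the pad_sequences defaults: truncate and pad at the start."""
    if len(tokens) > maxlen:
        # Keep only the last maxlen tokens
        tokens = tokens[len(tokens) - maxlen:]
    return [pad_value] * (maxlen - len(tokens)) + list(tokens)

short_review = [1, 14, 22]
long_review = [1, 14, 22, 16, 43, 530]

print(pad_pre(short_review, maxlen=5))  # zeros are added in front
print(pad_pre(long_review, maxlen=4))   # the earliest tokens are dropped
```

Padding at the front keeps the real tokens next to the end of the sequence, which is where a forward LSTM produces its final state.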

Define the Bidirectional LSTM Layer in Keras

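The magic happens here, where the Bidirectional wrapper duplicates the LSTM layer to process input in both directions. One copy reads the sequence forward, the other reads it backward, and by default their outputs are concatenated, so an LSTM with 64 units produces a 128-dimensional vector.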

```python
# Initializing the sequential model
model = Sequential()

# Adding the Embedding layer to turn integers into dense vectors
# (newer Keras versions may warn that input_length is deprecated;
# the argument can be dropped there)
model.add(Embedding(max_features, 128, input_length=maxlen))

# Adding the Bidirectional LSTM layer
model.add(Bidirectional(LSTM(64)))
```

In my experience, this “double-look” at the data helps the model catch sarcasm or nuanced critiques that a forward-only pass might miss.
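To show the idea without any deep learning machinery, here is a toy sketch. It swaps the LSTM for a deliberately trivial recurrence (state = decay * previous state + input), so it is an analogy and not how an LSTM cell works internally. The function names are hypothetical.

```python
def run_recurrence(inputs, decay=0.5):
    """Toy recurrent cell: state = decay * previous_state + current_input."""
    state = 0.0
    for x in inputs:
        state = decay * state + x
    return state

def bidirectional_summary(inputs, decay=0.5):
    """Run the toy cell in both directions and concatenate the final states."""
    forward_state = run_recurrence(inputs, decay)
    backward_state = run_recurrence(list(reversed(inputs)), decay)
    return [forward_state, backward_state]

sequence_values = [1.0, 2.0, 4.0]
summary = bidirectional_summary(sequence_values)
print(summary)
```

The forward state is dominated by the last input and the backward state by the first, so the concatenated summary carries information that neither direction would emphasize on its own.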

Add Dropout and Dense Layers to Keras Model

To prevent overfitting, a common headache in sentiment models, I always inject a Dropout layer before the final output.

```python
# Adding dropout to reduce overfitting during training
model.add(Dropout(0.5))

# Final output layer for binary classification
model.add(Dense(1, activation='sigmoid'))

# Compiling the model with Adam optimizer
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
```

The final Dense layer uses a sigmoid activation function to output a probability score between 0 (negative) and 1 (positive).

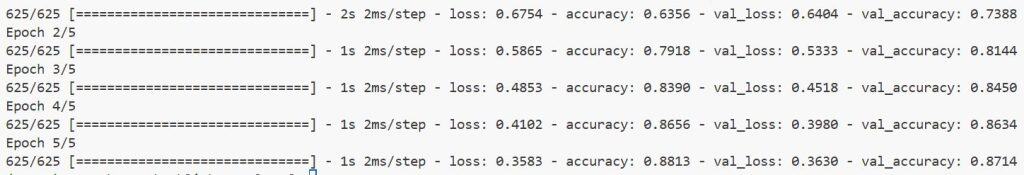
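As a sanity check on the architecture, the trainable parameter counts can be worked out by hand. The sketch below assumes a standard LSTM cell (four gates, each with input weights, recurrent weights, and a bias) and the layer sizes used in this tutorial. I would expect model.summary() to report matching numbers, though I have not reproduced its output here.

```python
def lstm_param_count(input_dim, units):
    """Parameters of one standard LSTM layer: 4 gates, each with
    input weights, recurrent weights, and a bias vector."""
    return 4 * (units * (input_dim + units) + units)

vocab_size, embedding_dim, lstm_units = 10000, 128, 64

embedding_params = vocab_size * embedding_dim
# The Bidirectional wrapper holds two independent LSTM copies
bidirectional_params = 2 * lstm_param_count(embedding_dim, lstm_units)
# Dense(1) sees the 128 concatenated LSTM outputs plus one bias
dense_params = 2 * lstm_units * 1 + 1

total_params = embedding_params + bidirectional_params + dense_params
print(embedding_params, bidirectional_params, dense_params, total_params)
```

Most of the parameters sit in the Embedding layer, which is one reason capping the vocabulary keeps the model manageable.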

Method 1: Train the Keras Bidirectional LSTM with Validation Split

This is the standard approach I use when I want to monitor how well the model generalizes to unseen data during the training process.

```python
# Training the model with a clear view of validation performance
history = model.fit(x_train, y_train,
                    batch_size=32,
                    epochs=5,
                    validation_split=0.2)
```

You can see the output in the screenshot below.

I find that using a 20% validation split gives a reliable indicator of whether the model is beginning to overfit.
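It helps to know which samples end up in the validation set. As I understand the Keras documentation, validation_split holds out the last fraction of the arrays, taken before any shuffling. The sketch below mirrors that slicing in plain Python; split_for_validation is a hypothetical helper, not a Keras function.

```python
import math

def split_for_validation(samples, validation_split):
    """Hold out the last fraction of samples, mirroring validation_split."""
    split_at = int(math.floor(len(samples) * (1.0 - validation_split)))
    return samples[:split_at], samples[split_at:]

toy_samples = list(range(10))
train_part, val_part = split_for_validation(toy_samples, validation_split=0.2)
print(train_part)
print(val_part)
```

With 25,000 training reviews and a 0.2 split, this leaves 20,000 reviews for training and 5,000 for validation. If your own dataset is sorted by label, shuffle it first, or the held-out slice will be skewed.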

Method 2: Utilize Early Stopping in Keras LSTM Training

Training for a fixed number of epochs can waste compute and, once the model starts to overfit, degrade performance on unseen data.

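```python
from tensorflow.keras.callbacks import EarlyStopping

# Stop once val_loss has failed to improve for 2 consecutive epochs,
# then roll back to the weights from the best epoch
early_stop = EarlyStopping(monitor='val_loss', patience=2, restore_best_weights=True)

# Training with the callback enabled; validating on a held-out slice of the
# training data keeps the test set untouched for the final evaluation
model.fit(x_train, y_train,
          batch_size=32,
          epochs=10,
          validation_split=0.2,
          callbacks=[early_stop])
```

I use the EarlyStopping callback to halt training once the validation loss has stopped improving for two consecutive epochs (the patience value), saving both time and compute resources.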
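The patience logic is easy to trace by hand. Below is a minimal simulation of the rule as I understand it: training stops once the monitored loss has gone patience epochs without beating its best value. The loss values are invented for illustration, and early_stopping_epoch is a hypothetical helper, not the Keras implementation.

```python
def early_stopping_epoch(val_losses, patience):
    """Return the 0-based epoch at which training would stop, or None."""
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None

# Hypothetical validation losses, one per epoch
hypothetical_losses = [0.40, 0.35, 0.36, 0.37, 0.30]
stop_epoch = early_stopping_epoch(hypothetical_losses, patience=2)
print(stop_epoch)
```

In this made-up run, training stops before it ever reaches the final 0.30. That is the trade-off behind patience: a small value saves time but can cut off a late improvement.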

Evaluate the Keras Bidirectional LSTM Performance

Once the training is complete, it is vital to see how the model performs on the test set it has never seen before.

```python
# Evaluating the model on the test dataset
loss, accuracy = model.evaluate(x_test, y_test, batch_size=32)

print(f"Test Loss: {loss:.4f}")
print(f"Test Accuracy: {accuracy:.4f}")
```

I always look for a balance between loss and accuracy to ensure the model isn’t just “guessing” based on high-frequency words.
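Loss and accuracy measure different things, which is why it is worth reading both. The sketch below computes mean binary cross-entropy and thresholded accuracy in plain Python for two sets of invented predictions. The clipping constant and the helper names are assumptions for illustration, not the Keras internals.

```python
import math

def binary_crossentropy(labels, probabilities, eps=1e-7):
    """Mean binary cross-entropy, clipping probabilities for stability."""
    total = 0.0
    for y, p in zip(labels, probabilities):
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)

def thresholded_accuracy(labels, probabilities, threshold=0.5):
    """Fraction of samples whose thresholded prediction matches the label."""
    hits = sum(int(p > threshold) == y for y, p in zip(labels, probabilities))
    return hits / len(labels)

labels = [1, 0, 1, 0]
confident = [0.95, 0.05, 0.90, 0.10]  # hypothetical model outputs
hesitant = [0.55, 0.45, 0.60, 0.40]   # same decisions, weaker margins

print(thresholded_accuracy(labels, confident), binary_crossentropy(labels, confident))
print(thresholded_accuracy(labels, hesitant), binary_crossentropy(labels, hesitant))
```

Both sets of predictions score the same accuracy, but the hesitant one has a much higher loss. A model with good accuracy and a poor loss is making the right calls with little confidence.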

Visualize Keras Bidirectional LSTM Accuracy Trends

Numbers are great, but I find that plotting the training history helps me visualize the learning curve of the network.

```python
import matplotlib.pyplot as plt

# Plotting the accuracy over epochs
plt.plot(history.history['accuracy'], label='train')
plt.plot(history.history['val_accuracy'], label='validation')
plt.title('Bidirectional LSTM Accuracy')
plt.legend()
plt.show()
```

A smooth convergence between the training and validation lines usually indicates a well-tuned model.
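If you prefer numbers alongside the plot, the same history dictionary can be summarized directly. This is a small sketch with a hypothetical helper and invented accuracy values; in practice you would pass history.history from the training run.

```python
def summarize_history(history_dict):
    """Report the best validation epoch and the final train/validation gap."""
    val_acc = history_dict["val_accuracy"]
    train_acc = history_dict["accuracy"]
    best_epoch = max(range(len(val_acc)), key=lambda i: val_acc[i])
    final_gap = train_acc[-1] - val_acc[-1]
    return best_epoch, final_gap

# Hypothetical numbers, not results from the model above
example_history = {
    "accuracy": [0.78, 0.88, 0.92, 0.95, 0.97],
    "val_accuracy": [0.84, 0.87, 0.88, 0.87, 0.86],
}
best_epoch, final_gap = summarize_history(example_history)
print(f"Best validation epoch: {best_epoch + 1}, final gap: {final_gap:.2f}")
```

A validation peak several epochs before the end, combined with a widening gap, is the usual sign that the later epochs were mostly memorizing the training set.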

Make Predictions with Keras Bidirectional LSTM

The ultimate test of any sentiment model is feeding it a custom review and seeing if it correctly identifies the mood.

I wrote this small helper function to convert raw text into the format our Keras model expects.

```python
# Load the word index once instead of on every call
word_to_id = imdb.get_word_index()

def predict_sentiment(review_text):
    # This is a simplified preprocessing step for demonstration
    words = review_text.lower().split()
    # IMDB ids are offset by 3 (0 = padding, 1 = start, 2 = unknown);
    # words missing from the index map straight to 2
    ids = [word_to_id[w] + 3 if w in word_to_id else 2 for w in words]
    ids = [i if i < max_features else 2 for i in ids]
    # Prepend the start token (1), as load_data does for the training reviews
    padded = sequence.pad_sequences([[1] + ids], maxlen=maxlen)
    # predict returns an array of shape (1, 1); extract the scalar probability
    prediction = model.predict(padded)[0][0]
    return "Positive" if prediction > 0.5 else "Negative"

# Example usage with a sample review
sample_review = "The cinematography was stunning and the acting was top notch."
print(f"Review: {sample_review} -> Sentiment: {predict_sentiment(sample_review)}")
```

Building a Bidirectional LSTM in Keras is a simple process that yields powerful results for NLP tasks.

I have found that this architecture is particularly robust for sentiment analysis because it captures the context of words from both directions.

While the IMDB dataset is a great starting point, the same principles apply to more complex datasets like customer feedback or social media monitoring.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.