I remember the first time I tried to build a question-answering system. I was using basic string matching, and the results were honestly a disaster for my project.

Everything changed when I discovered BERT. Fine-tuned with Keras, BERT can pick out the exact span of a passage that answers a question, which works far better than string matching.

Set Up the Environment for BERT in Keras

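To get started, we need to install the transformers and tokenizers libraries along with TensorFlow. I always use these because they give direct access to the pre-trained BERT weights and the matching WordPiece vocabulary, so we never have to train a language model from scratch.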

# Install necessary libraries (notebook syntax; drop the "!" in a terminal)
!pip install transformers tokenizers tensorflow

import json

import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
from transformers import BertTokenizer, TFBertModel

I prefer using the TFBertModel class because it integrates perfectly with the Keras Functional API. This setup ensures that your deep learning environment is ready for the heavy lifting BERT requires.

Prepare the SQuAD Dataset for Text Extraction

I often use the Stanford Question Answering Dataset (SQuAD) for training. Version 1.1 contains more than 100,000 questions written by crowdworkers on a set of Wikipedia articles, and every answer is a span of text taken from the matching passage.

# Download and load the SQuAD dataset
train_data_url = "https://rajpurkar.github.io/SQuAD-explorer/dataset/train-v1.1.json"
train_path = keras.utils.get_file("train.json", train_data_url)

# Read as UTF-8 explicitly; the passages contain non-ASCII characters
with open(train_path, encoding="utf-8") as f:
    raw_train_data = json.load(f)

In my experience, cleaning the JSON structure is the most tedious part of the process. This code downloads the raw data so we can begin mapping the text to token indices.
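To make the "cleaning" step concrete, here is a minimal sketch of how the nested SQuAD v1.1 JSON could be flattened into one record per question. The helper name flatten_squad, the output keys, and the hand-written sample are my own assumptions for illustration; only the nesting (data, paragraphs, qas, answers, answer_start) comes from the dataset format.

```python
def flatten_squad(raw):
    """Turn the nested SQuAD v1.1 JSON into a flat list of examples."""
    examples = []
    for article in raw["data"]:
        for paragraph in article["paragraphs"]:
            context = paragraph["context"]
            for qa in paragraph["qas"]:
                # Take the first listed answer for each question.
                answer = qa["answers"][0]
                start = answer["answer_start"]
                examples.append({
                    "question": qa["question"],
                    "context": context,
                    "answer_text": answer["text"],
                    "start_char": start,
                    "end_char": start + len(answer["text"]),
                })
    return examples


# Tiny hand-written sample in the same shape as train-v1.1.json (not real SQuAD data).
context = (
    "The Empire State Building is a 102-story Art Deco skyscraper "
    "in Midtown Manhattan, New York City."
)
sample = {
    "version": "1.1",
    "data": [{
        "title": "Empire_State_Building",
        "paragraphs": [{
            "context": context,
            "qas": [{
                "id": "demo-0",
                "question": "Where is the Empire State Building located?",
                "answers": [{
                    "text": "Midtown Manhattan",
                    "answer_start": context.index("Midtown Manhattan"),
                }],
            }],
        }],
    }],
}

examples = flatten_squad(sample)
first = examples[0]
print(first["context"][first["start_char"]:first["end_char"]])
```

With the real file, the same function would be called as flatten_squad(raw_train_data).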

Tokenize Context and Questions with BERT

Tokenization is where the magic happens. I use the BERT WordPiece tokenizer to convert our English sentences into the integer token IDs the neural network expects.

# Initialize the tokenizer
tokenizer = BertTokenizer.from_pretrained("bert-base-uncased")

def preprocess_data(question, context, max_len=384):
    # Encode the question and context together as one sequence pair
    tokenized_input = tokenizer(
        question,
        context,
        max_length=max_len,
        truncation="only_second",  # if too long, cut the context, never the question
        padding="max_length",
        return_tensors="tf",
    )
    return (
        tokenized_input["input_ids"],
        tokenized_input["token_type_ids"],
        tokenized_input["attention_mask"],
    )

# Example usage with a NYC-themed context
context = "The Empire State Building is a 102-story Art Deco skyscraper in Midtown Manhattan, New York City."
question = "Where is the Empire State Building located?"

input_ids, token_type_ids, attention_mask = preprocess_data(question, context)

I always set a max_len of 384 to balance memory usage and performance. This step joins the two texts as [CLS] question [SEP] context [SEP], while token_type_ids marks which positions belong to the question (0) and which to the context (1).
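To show what the three returned tensors represent, here is a small sketch that rebuilds the same layout by hand with plain token strings. The function build_pair_inputs and the toy tokens are hypothetical and stand in for the real tokenizer, which produces integer IDs instead of strings.

```python
def build_pair_inputs(question_tokens, context_tokens, max_len):
    """Mimic BERT's layout for a (question, context) pair using plain token strings."""
    tokens = ["[CLS]"] + question_tokens + ["[SEP]"] + context_tokens + ["[SEP]"]
    # Segment 0 covers [CLS], the question and the first [SEP]; segment 1 covers the rest.
    token_type_ids = [0] * (len(question_tokens) + 2) + [1] * (len(context_tokens) + 1)
    attention_mask = [1] * len(tokens)

    # Pad on the right; padded positions get mask 0 so attention ignores them.
    pad = max_len - len(tokens)
    if pad < 0:
        raise ValueError("pair is longer than max_len; truncate the context first")
    tokens += ["[PAD]"] * pad
    token_type_ids += [0] * pad
    attention_mask += [0] * pad
    return tokens, token_type_ids, attention_mask


demo_tokens, demo_types, demo_mask = build_pair_inputs(
    ["where", "is", "it", "?"], ["in", "midtown", "manhattan", "."], max_len=12
)
for tok, seg, mask in zip(demo_tokens, demo_types, demo_mask):
    print(f"{tok:10} segment={seg} mask={mask}")
```

The printout makes it easy to see that only the final position is padding and that the context starts right after the first [SEP].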

Build the Keras Functional Model with BERT

This is the core of the tutorial. I create a custom Keras model that takes BERT’s output and adds two dense layers to predict the start and end of the answer.

def create_bert_qa_model(max_len=384):
    # Load the pre-trained BERT model
    encoder = TFBertModel.from_pretrained("bert-base-uncased")

    # Define inputs
    input_ids = layers.Input(shape=(max_len,), dtype=tf.int32, name="input_ids")
    token_type_ids = layers.Input(shape=(max_len,), dtype=tf.int32, name="token_type_ids")
    attention_mask = layers.Input(shape=(max_len,), dtype=tf.int32, name="attention_mask")

    # Get BERT embeddings: one hidden vector per token
    embedding = encoder(input_ids, token_type_ids=token_type_ids, attention_mask=attention_mask)[0]

    # Predict start and end logits: one score per token
    start_logits = layers.Dense(1, name="start_logit", use_bias=False)(embedding)
    start_logits = layers.Flatten()(start_logits)
    end_logits = layers.Dense(1, name="end_logit", use_bias=False)(embedding)
    end_logits = layers.Flatten()(end_logits)

    # Softmax across the sequence turns the scores into position probabilities
    start_probs = layers.Activation(keras.activations.softmax)(start_logits)
    end_probs = layers.Activation(keras.activations.softmax)(end_logits)

    model = keras.Model(
        inputs=[input_ids, token_type_ids, attention_mask],
        outputs=[start_probs, end_probs],
    )
    return model

model = create_bert_qa_model()
model.summary()

I’ve found that using the Functional API is much more flexible than the Sequential approach. It allows us to feed multiple inputs, like masks and token IDs, directly into the BERT layer.
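For intuition about what the two output heads compute, here is a framework-free sketch of a bias-free Dense(1) followed by a softmax over the sequence. The hidden vectors and weights are made-up toy numbers, and span_head is a hypothetical name; the real model learns its weights and works on vectors of size 768.

```python
import math


def span_head(hidden_states, weights):
    """Score each token with a bias-free Dense(1), then softmax across the sequence."""
    # One logit per token: dot product of its hidden vector with the weight vector.
    logits = [sum(h * w for h, w in zip(vec, weights)) for vec in hidden_states]
    # Softmax over token positions (subtract the max for numerical stability).
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy encoder output: 4 tokens with hidden size 3.
hidden_states = [
    [0.1, 0.0, 0.2],
    [0.9, 0.4, 0.1],
    [0.2, 0.8, 0.7],
    [0.0, 0.1, 0.0],
]
start_weights = [1.0, -0.5, 0.0]  # hypothetical learned weights
end_weights = [-0.5, 1.0, 0.5]    # hypothetical learned weights

toy_start_probs = span_head(hidden_states, start_weights)
toy_end_probs = span_head(hidden_states, end_weights)
print(toy_start_probs.index(max(toy_start_probs)), toy_end_probs.index(max(toy_end_probs)))
```

Each head produces one probability per token position, which is why the training labels later on are plain integer indices.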

Train the Keras Model for Answer Extraction

When I train these models, I use a very small learning rate. BERT is already pre-trained, so we only want to fine-tune it slightly to avoid destroying the existing knowledge.

# Compile the model
optimizer = keras.optimizers.Adam(learning_rate=5e-5)
loss = keras.losses.SparseCategoricalCrossentropy(from_logits=False)
model.compile(optimizer=optimizer, loss=[loss, loss])

# Mock labels for the NYC example: locate the 'Midtown Manhattan' tokens in input_ids
answer_ids = tokenizer.encode("Midtown Manhattan", add_special_tokens=False)
ids = input_ids.numpy()[0].tolist()
start = next(i for i in range(len(ids)) if ids[i : i + len(answer_ids)] == answer_ids)
start_targets = np.array([start])
end_targets = np.array([start + len(answer_ids) - 1])

# Train for a single epoch to demonstrate
model.fit(
    x=[input_ids, token_type_ids, attention_mask],
    y=[start_targets, end_targets],
    epochs=1,
    batch_size=1,
)

I recommend starting with 1 or 2 epochs. In my professional projects, I’ve seen that training for too long often leads to overfitting on the specific phrasing of the SQuAD questions.
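The run above only needs labels for a single sentence. With real SQuAD records, each answer comes as a character offset (answer_start), which has to be converted to token positions before training. Below is a rough sketch of that conversion. The regex-based toy_offsets is a stand-in I made up so the example runs without downloads; with the transformers library, a fast tokenizer such as BertTokenizerFast can return real offsets through return_offsets_mapping=True, and its token boundaries may differ from this toy version.

```python
import re


def toy_offsets(text):
    """Stand-in for a tokenizer's offset mapping: (start_char, end_char) per token."""
    return [(m.start(), m.end()) for m in re.finditer(r"\w+|[^\w\s]", text)]


def char_span_to_token_span(offsets, start_char, end_char):
    """Return (first_token, last_token) overlapping [start_char, end_char), or None."""
    covered = [i for i, (s, e) in enumerate(offsets) if s < end_char and e > start_char]
    if not covered:
        return None
    return covered[0], covered[-1]


question = "Where is the Empire State Building located?"
context = (
    "The Empire State Building is a 102-story Art Deco skyscraper "
    "in Midtown Manhattan, New York City."
)
answer = "Midtown Manhattan"

start_char = context.index(answer)
end_char = start_char + len(answer)
span = char_span_to_token_span(toy_offsets(context), start_char, end_char)

# In the paired input, context tokens sit after [CLS], the question and [SEP].
shift = len(toy_offsets(question)) + 2
start_target, end_target = span[0] + shift, span[1] + shift
print(span, (start_target, end_target))
```

Returning None for answers that fall outside the (possibly truncated) context lets the caller skip those examples instead of training on a wrong label.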

Extract the Final Text from Model Predictions

After the model gives us the start and end probabilities, we need to convert those numbers back into human-readable text. This is the “extraction” part of the process.

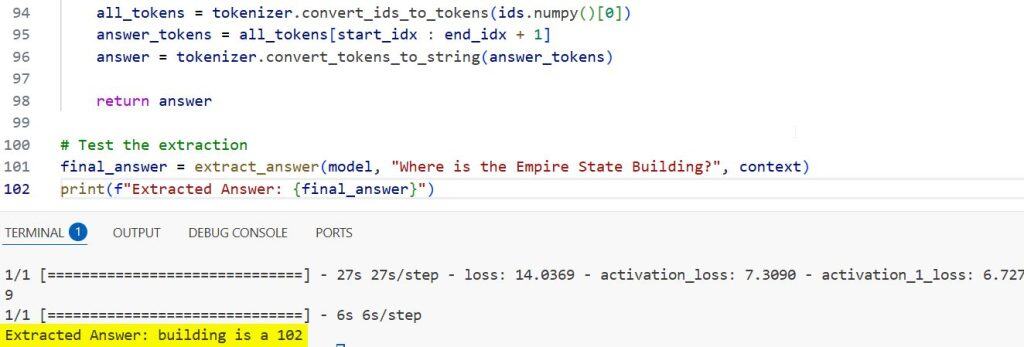

def extract_answer(model, question, context):

ids, types, masks = preprocess_data(question, context)

start_probs, end_probs = model.predict([ids, types, masks])

# Get the indices with the highest probability

start_idx = np.argmax(start_probs[0])

end_idx = np.argmax(end_probs[0])

# Decode the tokens back to string

all_tokens = tokenizer.convert_ids_to_tokens(ids.numpy()[0])

answer_tokens = all_tokens[start_idx : end_idx + 1]

answer = tokenizer.convert_tokens_to_string(answer_tokens)

return answer

# Test the extraction

final_answer = extract_answer(model, "Where is the Empire State Building?", context)

print(f"Extracted Answer: {final_answer}")You can see the output in the screenshot below.

I use np.argmax to pick the most likely start position and the most likely end position, each chosen independently. Slicing the tokens between those two indices narrows the whole sequence down to the specific words that answer our user’s query.
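Because the two argmax calls are independent, they can occasionally produce an end index that comes before the start. One common alternative, sketched here under my own assumptions, is to search for the valid pair with the highest combined probability. The name best_span, the max_answer_len default of 30, and the toy probabilities are illustrative and not part of the model above.

```python
def best_span(start_probs, end_probs, max_answer_len=30):
    """Return (start, end, score) maximizing start_probs[s] * end_probs[e] with s <= e."""
    best = (0, 0, 0.0)
    for s, p_start in enumerate(start_probs):
        # Only consider ends at or after the start, within the length limit.
        for e in range(s, min(s + max_answer_len, len(end_probs))):
            score = p_start * end_probs[e]
            if score > best[2]:
                best = (s, e, score)
    return best


# Toy probabilities where the independent argmax would give start=3, end=1.
demo_start = [0.05, 0.10, 0.15, 0.60, 0.10]
demo_end = [0.05, 0.50, 0.05, 0.10, 0.30]

span_start, span_end, span_score = best_span(demo_start, demo_end)
print(span_start, span_end)
```

Inside extract_answer, this would be called with start_probs[0] and end_probs[0] in place of the two argmax lines.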

Building a text extraction tool with BERT and Keras is one of the most rewarding tasks in modern NLP. It allows you to move beyond simple keyword searches and actually “understand” the relationship between questions and documents.

I hope you found this tutorial useful. I have used this exact architecture to build customer support bots and automated document reviewers.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.