During my years building data pipelines for retail analytics firms in Chicago, I’ve often run into a common “silent” bug.

You write a perfect script to pull quarterly sales data, but the source file is missing or filtered down to nothing.

If your code keeps running on an empty DataFrame, it often fails further down with a messy KeyError, or quietly produces NaN results that are hard to trace.

In this tutorial, I will show you exactly how I check if a Pandas DataFrame is empty using several reliable methods.

Why Checking for Empty DataFrames Matters

When I’m processing large datasets, like tracking inventory across California warehouses, I can’t always assume the data is there.

An empty DataFrame isn’t just a “null” object; it’s a container that exists but happens to have no rows or columns.

Checking for this early in your script (often called a “guard clause”) saves you from wasting computing time on empty objects.
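A guard clause of this kind might look like the following minimal sketch. The function name total_sales and the Amount column are hypothetical, chosen only for illustration.

```python
import pandas as pd


def total_sales(df: pd.DataFrame) -> float:
    """Return the sum of the hypothetical 'Amount' column, or 0.0 if there is no data."""
    # Guard clause: exit early instead of computing on an empty DataFrame
    if df.empty:
        return 0.0
    return float(df["Amount"].sum())


# A filter that matches nothing leaves the columns in place but no rows
sales = pd.DataFrame({"Region": ["West", "East"], "Amount": [1200.0, 950.0]})
no_matches = sales[sales["Region"] == "North"]

print(total_sales(sales))       # 2150.0
print(total_sales(no_matches))  # 0.0
```

Returning early keeps the main calculation free of nested if/else blocks, which is the main appeal of the pattern.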

Method 1: Use the DataFrame.empty Property

This is my go-to method. It is the most “Pythonic” and readable way to verify if your data is missing.

The .empty attribute returns a simple Boolean value: True if the DataFrame has no data, and False otherwise.

I prefer this because it accounts for DataFrames that might have column names but zero actual records.

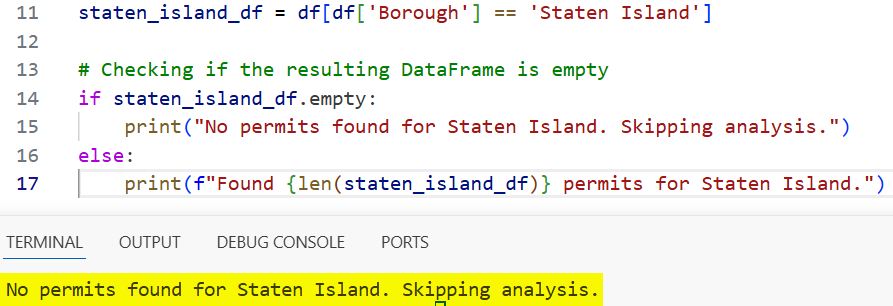

Real-World Example: Analyze NYC Housing Starts

Imagine we are filtering a dataset of New York City housing permits to find projects specifically in a neighborhood that hasn’t seen new builds this year.

```python
import pandas as pd

# Simulating a dataset of NYC housing permits
data = {
    'Borough': ['Manhattan', 'Brooklyn', 'Queens'],
    'Permits_Issued': [120, 450, 310]
}
df = pd.DataFrame(data)

# Filtering for a borough not in our list
staten_island_df = df[df['Borough'] == 'Staten Island']

# Checking if the resulting DataFrame is empty
if staten_island_df.empty:
    print("No permits found for Staten Island. Skipping analysis.")
else:
    print(f"Found {len(staten_island_df)} permits for Staten Island.")

# Output: No permits found for Staten Island. Skipping analysis.
```

The screenshot above shows the output.

I like this method because the code reads like an English sentence: “If the DataFrame is empty, do this.”
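To make the behavior of .empty concrete, here is a small sketch comparing three frames. The variable names are illustrative only; the point is that headers alone do not count as data, while NaN values do.

```python
import numpy as np
import pandas as pd

nothing_at_all = pd.DataFrame()                                # no rows, no columns
headers_only = pd.DataFrame(columns=["Borough", "Permits"])    # columns but no rows
all_nan = pd.DataFrame({"Permits": [np.nan, np.nan]})          # two rows of NaN

print(nothing_at_all.empty)  # True
print(headers_only.empty)    # True
print(all_nan.empty)         # False: NaN values still count as data
```

If a frame full of NaN should also be treated as "no data" in your pipeline, .empty alone will not catch it.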

Method 2: Use the len() Function

If you come from a traditional programming background, you might instinctively reach for the len() function.

In Pandas, calling len(df) returns the number of rows in the DataFrame. If the length is 0, it means the DataFrame has no rows.

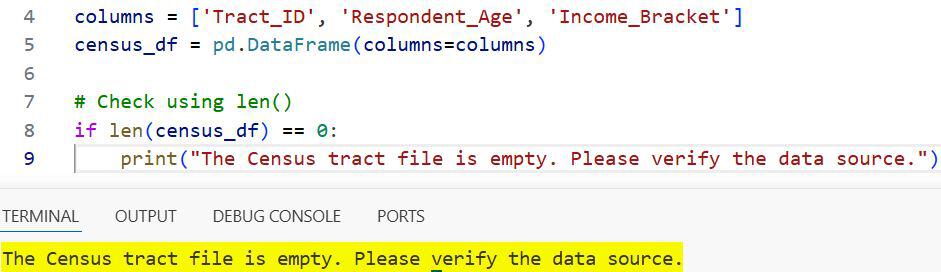

Example: Check US Census Data Participation

Let’s look at a scenario where we are analyzing survey responses from a specific US Census tract.

```python
import pandas as pd

# Create an empty DataFrame with headers
columns = ['Tract_ID', 'Respondent_Age', 'Income_Bracket']
census_df = pd.DataFrame(columns=columns)

# Check using len()
if len(census_df) == 0:
    print("The Census tract file is empty. Please verify the data source.")
```

The screenshot above shows the output.

While this works perfectly, keep in mind that len() only checks the row count.

In most cases, this is exactly what you want, as a DataFrame with columns but no rows is functionally “empty” for analysis.
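The one case where len() and .empty disagree is a DataFrame that still has row labels but no columns. The sketch below builds such a frame by dropping its only column; the Tract_ID values are made up for illustration.

```python
import pandas as pd

# Hypothetical frame: two row labels remain, but the only column is dropped
labels_only = pd.DataFrame({"Tract_ID": [101, 102]}).drop(columns=["Tract_ID"])

print(labels_only.shape)  # (2, 0)
print(len(labels_only))   # 2
print(labels_only.empty)  # True
```

A len(df) == 0 check would let this frame through, so .empty is the safer choice when columns may have been dropped upstream.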

Method 3: Use the DataFrame.index Attribute

Another trick I’ve used in high-performance loops is checking the length of the index directly.

Accessing df.index can sometimes feel more explicit when you are dealing with complex multi-index datasets.

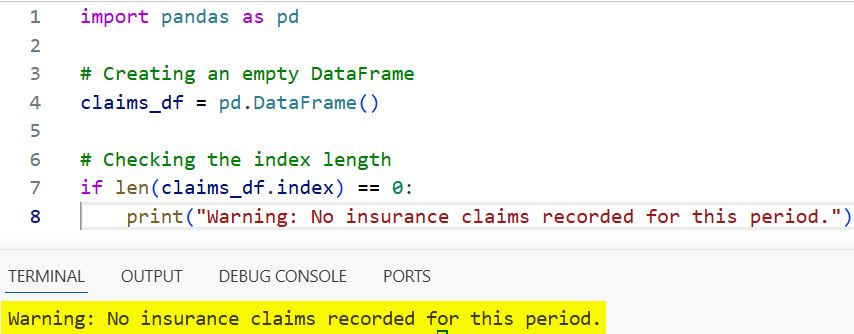

Example: Process Florida Hurricane Insurance Claims

Suppose you are iterating through daily claim files during hurricane season in Florida.

```python
import pandas as pd

# Creating an empty DataFrame
claims_df = pd.DataFrame()

# Checking the index length
if len(claims_df.index) == 0:
    print("Warning: No insurance claims recorded for this period.")
```

The screenshot above shows the output.

Using .index is essentially the same as using len(df), but some developers prefer it because it explicitly targets the row axis.
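The same check works unchanged on a multi-index DataFrame. Here is a minimal sketch, assuming hypothetical claim counts indexed by county and date.

```python
import pandas as pd

# Hypothetical claims indexed by (County, Date)
index = pd.MultiIndex.from_tuples(
    [("Miami-Dade", "2024-09-01"), ("Lee", "2024-09-01")],
    names=["County", "Date"],
)
claims = pd.DataFrame({"Claims_Filed": [42, 17]}, index=index)

# A filter that matches no rows leaves an empty MultiIndex behind
large_claims = claims[claims["Claims_Filed"] > 100]

print(len(large_claims.index))         # 0
print(list(large_claims.index.names))  # ['County', 'Date']
```

The level names survive the filter, so downstream code can still rely on the index structure even when no rows remain.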

Method 4: Check the .shape Property

The .shape attribute returns a tuple representing the dimensions of the DataFrame: (rows, columns).

You can check if the first element of that tuple (index 0) is equal to zero.

Example: High-Frequency Trading Data on Wall Street

In financial applications where you need to know exactly why a dataset is empty, .shape is very helpful.

```python
import pandas as pd

# A DataFrame with 0 rows and 5 columns
trade_df = pd.DataFrame(columns=['Ticker', 'Price', 'Volume', 'Exchange', 'Time'])

# Check shape
if trade_df.shape[0] == 0:
    print(f"Data received but contains {trade_df.shape[1]} empty columns.")
```

This method is slightly more verbose, but it gives you more context about the structure of your empty object.
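That extra context can be turned into a readable log message. The helper below is a minimal sketch; describe_emptiness is a hypothetical name, not a pandas function.

```python
import pandas as pd


def describe_emptiness(df: pd.DataFrame) -> str:
    """Explain which axis, if any, makes the DataFrame empty."""
    rows, cols = df.shape
    if rows == 0 and cols == 0:
        return "no rows and no columns"
    if rows == 0:
        return f"{cols} columns but no rows"
    if cols == 0:
        return f"{rows} rows but no columns"
    return f"{rows} rows and {cols} columns"


print(describe_emptiness(pd.DataFrame()))                             # no rows and no columns
print(describe_emptiness(pd.DataFrame(columns=["Ticker", "Price"])))  # 2 columns but no rows
```

A message like "2 columns but no rows" usually points to a filter that matched nothing, while "no rows and no columns" suggests the source never loaded.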

Method 5: Use the .any() or .all() Logic (For Truthiness)

Sometimes you don’t just want to know if the DataFrame is empty; you want to know if it’s “effectively” empty (i.e., all values are NaN).

While not technically checking for an “empty object,” this is a situation I encounter often when cleaning US Bureau of Labor Statistics data.

```python
import pandas as pd
import numpy as np

# A DataFrame filled with NaNs
labor_df = pd.DataFrame({'Unemployment_Rate': [np.nan, np.nan]})

# Check if all values are null
if labor_df.isnull().all().all():
    print("The DataFrame contains only null values.")
```

This is a deeper check that ensures your calculations won’t fail due to missing data, even if the “rows” technically exist.
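One thing to be aware of: isnull().all().all() is also True for a DataFrame with no rows at all, because "all" over nothing is vacuously true. If you want a single check that covers both cases on purpose, one option is to drop the all-NaN rows and then test .empty. The helper name is_effectively_empty below is hypothetical.

```python
import numpy as np
import pandas as pd


def is_effectively_empty(df: pd.DataFrame) -> bool:
    """True if nothing remains once rows that are entirely NaN are dropped."""
    return df.dropna(how="all").empty


labor_df = pd.DataFrame({"Unemployment_Rate": [np.nan, np.nan]})
partial_df = pd.DataFrame({"Unemployment_Rate": [np.nan, 3.9]})

print(is_effectively_empty(labor_df))        # True
print(is_effectively_empty(partial_df))      # False
print(is_effectively_empty(pd.DataFrame()))  # True
```

Using dropna(how="all") keeps rows that have at least one real value, so partially filled records are still treated as data.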

Comparing the Methods

I put together this table to help you decide which method fits your specific Python project.

| Method | Syntax | Best Use Case |
| --- | --- | --- |
| .empty | df.empty | Standard and most readable choice for general use. |
| len() | len(df) == 0 | Quick check when you only care about row count. |
| .index | len(df.index) == 0 | Explicitly checking the row index length. |
| .shape | df.shape[0] == 0 | Useful if you also need to check column counts. |

Important Considerations for Real-World Data

When working with DataFrames in a production environment, there are a few “gotchas” I’ve learned the hard way.

Handle None vs. Empty DataFrame

A variable might be None instead of an empty DataFrame. I always recommend a double check if you aren’t sure of the source.

```python
if df is not None and not df.empty:
    # Proceed with data processing
    pass
```

Performance Notes

For most applications, the performance difference between .empty and len(df) is negligible.

In fact, .empty has no built-in speed advantage: it checks whether any axis has length zero, so it reads the same axis lengths that len() does. None of these checks scans the data itself, so choose based on readability.
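Timing claims like these are easy to measure on your own machine. The sketch below uses the standard-library timeit module; the one-million-row frame and the call count are arbitrary choices, and the numbers will vary with your hardware and pandas version.

```python
import timeit

import pandas as pd

df = pd.DataFrame({"Price": range(1_000_000)})

checks = {
    "df.empty": lambda: df.empty,
    "len(df) == 0": lambda: len(df) == 0,
    "len(df.index) == 0": lambda: len(df.index) == 0,
    "df.shape[0] == 0": lambda: df.shape[0] == 0,
}

timings = {}
for label, check in checks.items():
    # Total seconds for 100,000 calls of each check
    timings[label] = timeit.timeit(check, number=100_000)
    print(f"{label:<20} {timings[label]:.4f} s")
```

Whatever ordering you see, the per-call cost is typically on the order of microseconds, which is why readability is the better deciding factor.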

Checking for an empty DataFrame is a small but critical step in writing robust Python code.

Whether you are analyzing Tesla stock prices or managing customer data for a Texas-based retailer, using the .empty property will make your scripts cleaner.

I’ve found that being proactive with these checks prevents 90% of the runtime errors I used to face early in my career.

I hope you found this tutorial helpful!

How do you usually handle empty datasets in your workflows? Do you prefer a specific method for a particular reason?

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.