In my years of working as a Python developer, I’ve found that handling dates is one of the most common, yet frustrating, tasks you’ll face.

Whether I’m analyzing Wall Street stock trends or processing retail sales from a Chicago warehouse, the data rarely arrives in the right format.

Usually, the dates arrive as strings or integers. In that form you can't do date arithmetic, run time-series analysis, or filter by month.

In this tutorial, I’ll show you exactly how I convert Pandas columns to datetime objects using real-world US-based examples.

The Problem with String Dates

When you load a CSV file, Pandas often treats date columns as “object” types (which are essentially strings).

If you try to calculate the number of days between two sales transactions while the dates are still strings, pandas raises a TypeError, because strings don't support subtraction.

By converting these to a proper datetime format, you unlock powerful features like extracting the day of the week or calculating quarterly growth.
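Here's a minimal sketch that makes this concrete. It uses two hypothetical transaction columns, and the column names and dates are made up for illustration. Subtracting the string versions raises a TypeError. Once the columns are converted, the same subtraction gives day counts, and the .dt accessor gives weekday names and quarters:

```python
import pandas as pd

# Hypothetical transaction dates, kept as object (string) columns,
# the way they look after a plain CSV load
sales = pd.DataFrame(
    {
        'First_Sale': ['2024-03-01', '2024-07-15'],
        'Second_Sale': ['2024-03-11', '2024-09-30'],
    },
    dtype=object,
)

# Subtracting string columns fails: Python strings don't support "-"
try:
    sales['Second_Sale'] - sales['First_Sale']
    string_math_failed = False
except TypeError as exc:
    string_math_failed = True
    print(f"String subtraction failed: {exc}")

# Convert both columns, then date arithmetic works
for col in ['First_Sale', 'Second_Sale']:
    sales[col] = pd.to_datetime(sales[col])

sales['Days_Between'] = (sales['Second_Sale'] - sales['First_Sale']).dt.days
sales['Weekday'] = sales['Second_Sale'].dt.day_name()
sales['Quarter'] = sales['Second_Sale'].dt.quarter
print(sales)
```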

Method 1: Use the pd.to_datetime() Function

This is my “go-to” method for almost every project I handle. It is incredibly flexible and usually guesses the format correctly.

Let’s look at a dataset involving public holidays in the United States.

import pandas as pd

# Creating a dataset of US Holidays

data = {

'Holiday': ['New Year\'s Day', 'Independence Day', 'Labor Day', 'Veterans Day'],

'Date_String': ['2025-01-01', '2025-07-04', '2025-09-01', '2025-11-11']

}

df = pd.DataFrame(data)

# Checking the data types before conversion

print("Before Conversion:")

print(df.dtypes)

# Converting the column to datetime

df['Date_String'] = pd.to_datetime(df['Date_String'])

print("\nAfter Conversion:")

print(df.dtypes)

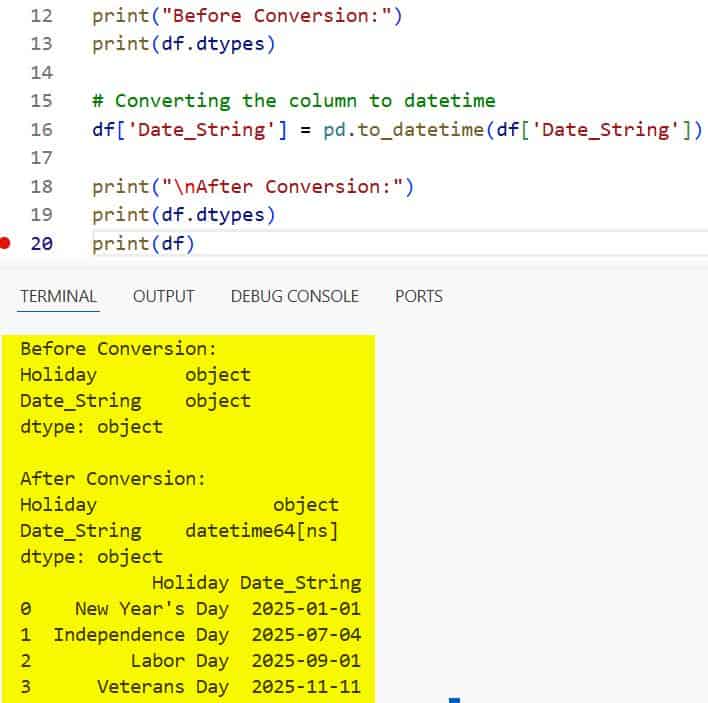

print(df)You can see the output in the screenshot below.

In this example, I used pd.to_datetime() to transform the Date_String column. Notice how the Dtype changes from object to datetime64[ns].
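If the dates come from a CSV file, you can also convert them while the file loads. This sketch stores the same holiday data as CSV text, with io.StringIO standing in for a real file path, and passes the column name to the parse_dates argument of pd.read_csv():

```python
import io

import pandas as pd

# Hypothetical CSV content standing in for a file on disk
csv_text = """Holiday,Date_String
New Year's Day,2025-01-01
Independence Day,2025-07-04
"""

# parse_dates tells read_csv to convert the listed columns while loading
df_csv = pd.read_csv(io.StringIO(csv_text), parse_dates=['Date_String'])
print(df_csv.dtypes)
```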

Method 2: Handle US Date Formats (MM/DD/YYYY)

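In the United States, we typically use the Month-Day-Year format. A date is ambiguous whenever the day is 12 or less. For example, 03/04/2025 could mean March 4 or April 3. Pandas assumes month-first by default, but data that mixes conventions can still be misread.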

I’ve learned the hard way that it’s always safer to explicitly define the format to avoid data corruption.

# Sales data from a New York retail store

sales_data = {

'Store_ID': [101, 102, 103],

'Sale_Date': ['12/25/2024', '01/15/2025', '02/20/2025'] # MM/DD/YYYY

}

df_sales = pd.DataFrame(sales_data)

# Specifying the format to ensure accuracy

df_sales['Sale_Date'] = pd.to_datetime(df_sales['Sale_Date'], format='%m/%d/%Y')

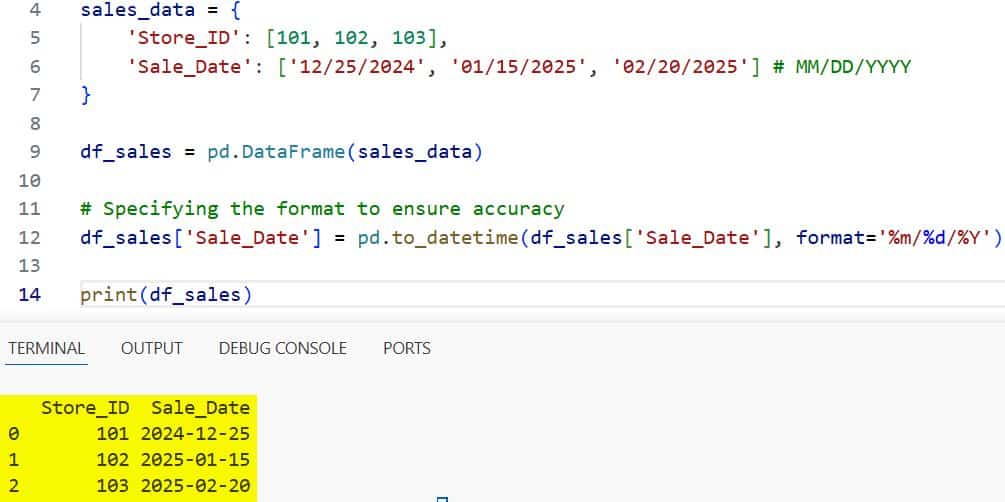

print(df_sales)You can see the output in the screenshot below.

Using %m/%d/%Y tells Pandas exactly what to expect. This is a practice I follow to ensure my reports for US stakeholders are always accurate.
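Here's a small sketch of why the explicit format matters. It uses a made-up date that is valid under both conventions, plus a day-first value that the US format rejects instead of silently swapping:

```python
import pandas as pd

# 03/04/2025 is valid under both conventions, so only the format decides
ambiguous = pd.Series(['03/04/2025'])

us_style = pd.to_datetime(ambiguous, format='%m/%d/%Y')   # March 4, 2025
day_first = pd.to_datetime(ambiguous, format='%d/%m/%Y')  # April 3, 2025
print(us_style.iloc[0].date(), day_first.iloc[0].date())

# A day-first value slipped into US data fails loudly instead of being swapped
try:
    pd.to_datetime(pd.Series(['25/12/2024']), format='%m/%d/%Y')
    mismatch_raised = False
except ValueError as exc:
    mismatch_raised = True
    print(f"Rejected: {exc}")
```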

Method 3: Convert Multiple Columns at Once

Sometimes I deal with large financial datasets where I have “Start Date” and “End Date” columns. Converting them one by one is tedious.

Instead, I use a more efficient approach to handle multiple columns in a single line of code.

# Project tracking for a tech firm in Silicon Valley

projects = {

'Project_Name': ['Cloud Migration', 'AI Integration'],

'Start_Date': ['2024-05-01', '2024-06-15'],

'End_Date': ['2024-12-01', '2025-03-20']

}

df_projs = pd.DataFrame(projects)

# Converting multiple columns

cols_to_fix = ['Start_Date', 'End_Date']

df_projs[cols_to_fix] = df_projs[cols_to_fix].apply(pd.to_datetime)

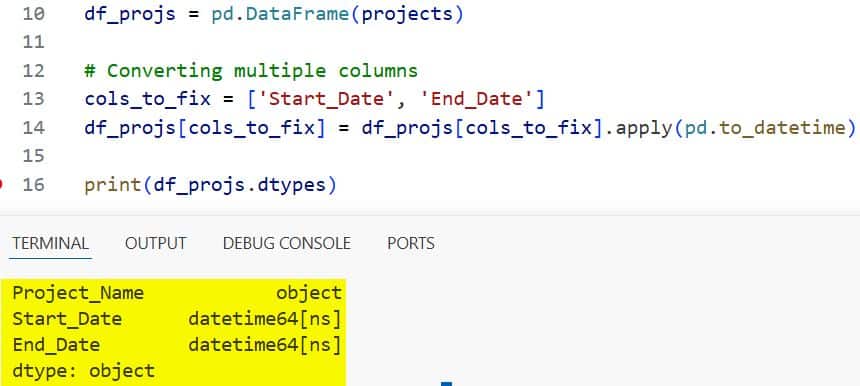

print(df_projs.dtypes)You can see the output in the screenshot below.
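Once both columns are datetimes, subtracting them gives a duration. This sketch rebuilds the same project data and passes format through apply(), which forwards extra keyword arguments to pd.to_datetime. It then adds a hypothetical Duration_Days column:

```python
import pandas as pd

projects = {
    'Project_Name': ['Cloud Migration', 'AI Integration'],
    'Start_Date': ['2024-05-01', '2024-06-15'],
    'End_Date': ['2024-12-01', '2025-03-20']
}
df_projs = pd.DataFrame(projects)

cols_to_fix = ['Start_Date', 'End_Date']
# Keyword arguments given to apply() are passed on to pd.to_datetime
df_projs[cols_to_fix] = df_projs[cols_to_fix].apply(pd.to_datetime, format='%Y-%m-%d')

# End minus start yields Timedelta values; .dt.days extracts whole days
df_projs['Duration_Days'] = (df_projs['End_Date'] - df_projs['Start_Date']).dt.days
print(df_projs)
```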

Method 4: Deal with Dirty Data (The errors Parameter)

In real-world data science, columns often contain “garbage” values like “N/A” or “TBD”. I’ve seen this happen a lot with manual entry logs in logistics.

By default (errors='raise'), pd.to_datetime() raises an error as soon as it hits a value it can't parse. I use the errors parameter to control this.

```python
# Shipping data with some invalid entries
shipping_data = {
    'Package_ID': ['A1', 'B2', 'C3'],
    'Ship_Date': ['2024-10-10', 'Missing', '2024-12-25']
}

df_ship = pd.DataFrame(shipping_data)

# Using 'coerce' to turn invalid strings into NaT (Not a Time)
df_ship['Ship_Date'] = pd.to_datetime(df_ship['Ship_Date'], errors='coerce')

print(df_ship)
```

Setting errors='coerce' is a lifesaver. It turns the “Missing” string into NaT, which stands for “Not a Time,” and lets the rest of the column convert smoothly.
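Before coercing, it helps to see the default behavior and to record which rows ended up as NaT. This sketch uses the same shipping data. Note that if the column already held missing values, those rows would also appear in the hypothetical bad_rows frame:

```python
import pandas as pd

shipping_data = {
    'Package_ID': ['A1', 'B2', 'C3'],
    'Ship_Date': ['2024-10-10', 'Missing', '2024-12-25']
}
df_ship = pd.DataFrame(shipping_data)

# With the default errors='raise', one bad value stops the whole conversion
try:
    pd.to_datetime(df_ship['Ship_Date'])
    raised_by_default = False
except ValueError as exc:
    raised_by_default = True
    print(f"Conversion failed: {exc}")

# Coerce, then list the rows that could not be parsed so they can be reviewed
df_ship['Ship_Date'] = pd.to_datetime(df_ship['Ship_Date'], errors='coerce')
bad_rows = df_ship[df_ship['Ship_Date'].isna()]
print(bad_rows)
```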

Method 5: Convert Unix Timestamps

I often work with web server logs or IoT sensor data from California manufacturing plants, where dates usually arrive as Unix timestamps (large integers).

Pandas makes it easy to convert these into human-readable dates.

```python
# Sensor data from a factory
sensor_data = {
    'Sensor_ID': [1, 2],
    'Timestamp': [1715347200, 1715433600]
}

df_sensor = pd.DataFrame(sensor_data)

# Converting Unix timestamp to datetime
df_sensor['Timestamp'] = pd.to_datetime(df_sensor['Timestamp'], unit='s')

print(df_sensor)
```

By specifying unit='s', I told Pandas that the numbers represent seconds since the Unix epoch (00:00:00 UTC on January 1, 1970). The result has no time zone attached, but the values are in UTC.
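Unix timestamps count from UTC, so plant-floor reports may need local time instead. This sketch passes utc=True and then converts to Pacific time with the 'America/Los_Angeles' zone. It also shows unit='ms' for millisecond timestamps, which some systems emit. The millisecond values here are just the same readings multiplied by 1,000:

```python
import pandas as pd

sensor_data = {'Sensor_ID': [1, 2], 'Timestamp': [1715347200, 1715433600]}
df_sensor = pd.DataFrame(sensor_data)

# utc=True returns timezone-aware values, since Unix time is defined in UTC
utc_times = pd.to_datetime(df_sensor['Timestamp'], unit='s', utc=True)

# Convert to California local time; daylight saving time is applied automatically
df_sensor['Local_Time'] = utc_times.dt.tz_convert('America/Los_Angeles')

# Millisecond timestamps need unit='ms' instead of unit='s'
ms_times = pd.to_datetime(df_sensor['Timestamp'] * 1000, unit='ms', utc=True)
print(df_sensor)
```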

Method 6: Handle Custom String Formats

Occasionally, I encounter less common string formats, like “2024-July-04”. It's readable, but it isn't a standard ISO 8601 layout, so I give Pandas an explicit format string.

I find that being precise with format codes prevents the parser from guessing wrong and slowing down my script.
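Month names can also be abbreviated. This small sketch uses hypothetical strings and assumes an English locale, because %b and %B match locale-dependent month names. It parses “Jul”-style names with %b and shows that a string that doesn't match an explicit format raises a ValueError instead of being guessed:

```python
import pandas as pd

# Abbreviated month names ("Jul") use %b; full names ("July") use %B
short_names = pd.Series(['2024-Jul-04', '2024-Aug-20'])
parsed = pd.to_datetime(short_names, format='%Y-%b-%d')
print(parsed)

# A string that doesn't match the explicit format raises instead of being guessed
try:
    pd.to_datetime(pd.Series(['07/04/2024']), format='%Y-%b-%d')
    mismatch_raised = False
except ValueError as exc:
    mismatch_raised = True
    print(f"Format mismatch: {exc}")
```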

```python
# Event data for a National Park tour
tours = {
    'Park': ['Yellowstone', 'Yosemite'],
    'Tour_Date': ['2024-July-04', '2024-August-20']
}

df_tours = pd.DataFrame(tours)

# Using %B for full month name
df_tours['Tour_Date'] = pd.to_datetime(df_tours['Tour_Date'], format='%Y-%B-%d')

print(df_tours)
```

Extract Features After Conversion

Once the conversion is done, the real fun begins. I usually extract specific parts of the date to improve my analysis.

For example, if I’m analyzing peak travel times for US Thanksgiving, I might want to know the day of the week.

```python
# Two holiday dates: Thanksgiving and Christmas 2024
df_dates = pd.DataFrame({'Date': pd.to_datetime(['2024-11-28', '2024-12-25'])})

# Extracting Year, Month, and Day Name
df_dates['Year'] = df_dates['Date'].dt.year
df_dates['Month'] = df_dates['Date'].dt.month
df_dates['Day_Name'] = df_dates['Date'].dt.day_name()

print(df_dates)
```

The .dt accessor is a powerful tool I use daily to slice and dice my time-related data.
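The .dt accessor also makes filtering and formatting straightforward. This sketch adds a hypothetical New Year's Day row, filters the frame to November, and writes the dates back out in US format along with their quarter:

```python
import pandas as pd

df_dates = pd.DataFrame({'Date': pd.to_datetime(['2024-11-28', '2024-12-25', '2025-01-01'])})

# Filter rows by month once the column is datetime
november = df_dates[df_dates['Date'].dt.month == 11]

# Format back to US-style strings for a report, and tag each date's quarter
df_dates['US_Format'] = df_dates['Date'].dt.strftime('%m/%d/%Y')
df_dates['Quarter'] = df_dates['Date'].dt.quarter

print(november)
print(df_dates)
```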

Performance Tip: infer_datetime_format and Explicit Formats

If you have a massive dataset, say millions of rows from a US Treasury report, parsing dates can be slow.

In older versions of Pandas (before 2.0), I used infer_datetime_format=True to speed things up.

In pandas 2.0 and later, that parameter is deprecated. A strict form of format inference is now the default, so passing it only triggers a warning. Providing the format string yourself is still the most predictable option and is typically the fastest.
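Here's a rough benchmark sketch for comparing the two approaches on your own machine. The 20,000 synthetic US-style dates stand in for a real report. Timings vary with your pandas version and hardware, so treat the numbers as indicative only:

```python
import time

import pandas as pd

# Synthetic MM/DD/YYYY strings, one per day starting in 1900
dates = pd.Series(pd.date_range('1900-01-01', periods=20_000, freq='D').strftime('%m/%d/%Y'))

start = time.perf_counter()
explicit = pd.to_datetime(dates, format='%m/%d/%Y')  # format given up front
explicit_secs = time.perf_counter() - start

start = time.perf_counter()
inferred = pd.to_datetime(dates)  # pandas works out the format itself
inferred_secs = time.perf_counter() - start

print(f"explicit format: {explicit_secs:.4f}s, inferred: {inferred_secs:.4f}s")
```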

Summary of Format Codes

When I’m defining formats, I always keep these common codes in mind:

- %m: Month as a number (01 to 12)
- %d: Day of the month (01 to 31)
- %Y: Four-digit year (2025)
- %y: Two-digit year (25)
- %B: Full month name (July)
- %H: Hour on a 24-hour clock (00 to 23)
- %M: Minute (00 to 59)
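The same codes work in both directions. strftime() turns a timestamp into text, and the format argument of pd.to_datetime() reads text back. Here's a quick sketch with an arbitrary timestamp:

```python
import pandas as pd

ts = pd.Timestamp('2025-07-04 14:30')

print(ts.strftime('%m/%d/%Y'))  # 07/04/2025
print(ts.strftime('%y'))        # 25
print(ts.strftime('%H:%M'))     # 14:30

# Parsing uses the same codes: two-digit year plus 24-hour time
parsed = pd.to_datetime('07/04/25 14:30', format='%m/%d/%y %H:%M')
print(parsed)
```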

In this tutorial, we looked at several ways to convert columns to datetime objects in Pandas.

I’ve covered the standard to_datetime function, handling US-specific date formats, and dealing with errors in messy datasets.

Getting your date formats right is a foundational skill in Python data analysis. It saves you from countless bugs later in your code.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.