Let me be upfront with you: this isn’t a “both are great, it depends!” article. You’ve probably read a dozen of those already. I’m going to tell you what the data actually says, what the job market looks like right now, and then give you a clear recommendation based on where you’re headed.

The short version? If you’re starting from scratch in 2026, learn PyTorch first. But TensorFlow is far from dead, and depending on what you want to build, it might actually be the better choice for you. Let me explain.

A Quick Bit of History (Bear With Me — It Matters)

Google released TensorFlow in 2015 with one clear goal: production-scale ML deployment. It was designed for enterprise systems, TPUs, and distributed training pipelines. It was powerful, but notoriously hard to work with in the early days.

Meta (Facebook) released PyTorch in 2016 with a completely different philosophy: make deep learning feel like writing normal Python. Researchers loved it immediately.

For a few years, the community was split: PyTorch for research, TensorFlow for production. Then something shifted. Researchers started shipping to production, and they brought PyTorch with them. Today, PyTorch is used in roughly 85% of published deep learning research papers, and most new AI projects, especially in generative AI and large language models, are built on it.

TensorFlow responded with TF 2.0 in 2019 (eager execution by default, Keras as the built-in high-level API), but the momentum had already shifted.

That’s the backdrop. Now, let’s get into the actual differences.

How They Actually Work Differently

The most fundamental difference between PyTorch and TensorFlow is how they build and execute computation graphs — and this shapes everything from how you debug your code to how fast you can prototype.

PyTorch uses dynamic computation graphs. This means your model runs line by line, just like regular Python. You can set a breakpoint anywhere, print tensor values mid-execution, and modify your architecture on the fly. If something breaks, the error points to the exact line. It feels natural.
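To make "dynamic" concrete, here is a minimal sketch. The `DepthByInput` model is a made-up toy, not something from a real project: its forward pass uses an ordinary Python `if` and `for` to decide how many times to apply a layer, and prints an intermediate tensor along the way.

```python
import torch
import torch.nn as nn


class DepthByInput(nn.Module):
    """Hypothetical toy model: applies its layer a data-dependent number of times."""

    def __init__(self):
        super().__init__()
        self.fc = nn.Linear(4, 4)

    def forward(self, x):
        # Ordinary Python control flow; the graph is rebuilt on every call.
        steps = 1 if x.mean() > 0 else 3
        for _ in range(steps):
            x = torch.relu(self.fc(x))
            # Tensors hold concrete values here, so print() or a debugger
            # breakpoint works in the middle of the forward pass.
            print("intermediate shape:", tuple(x.shape))
        return x, steps


torch.manual_seed(0)
model = DepthByInput()
out_pos, steps_pos = model(torch.ones(2, 4))    # positive mean: one step
out_neg, steps_neg = model(-torch.ones(2, 4))   # negative mean: three steps
print("steps taken:", steps_pos, steps_neg)
```

The same module ran two different computations depending on its input, with no special graph API involved.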

TensorFlow originally used static graphs: you’d define the entire computation graph first, then execute it. This was great for optimization but terrible for debugging. TF 2.x switched to eager execution by default, making it much more like PyTorch. But underneath, TF still leans on graphs for performance: tf.function traces your Python code into a graph (Keras does this for you inside model.fit), and the XLA compiler can optimize that graph further. That graph machinery is both TF’s biggest strength and the source of most of its complexity.
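Here is a small sketch of the graph side of TF 2.x, using `tf.function`, the decorator that traces a Python function into a graph. The `scaled_sum` function and the `trace_count` counter are illustrative names of my own; the counter only exists to show when Python actually executes the function body.

```python
import tensorflow as tf

trace_count = 0  # counts how many times Python runs the function body


@tf.function  # traces the Python function into a reusable graph
def scaled_sum(x):
    global trace_count
    trace_count += 1  # Python side effect: runs only while tracing
    return tf.reduce_sum(x * 2.0)


a = scaled_sum(tf.constant([1.0, 2.0]))  # first call: traces, then runs the graph
b = scaled_sum(tf.constant([3.0, 4.0]))  # same shape and dtype: reuses the graph
print(float(a), float(b), "traces:", trace_count)
```

The second call never re-enters the Python body, which is why `print` statements and breakpoints inside a traced function can behave in surprising ways. Passing `jit_compile=True` to `tf.function` additionally asks TensorFlow to compile the graph with XLA.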

Here’s a simple example of building the same neural network in both:

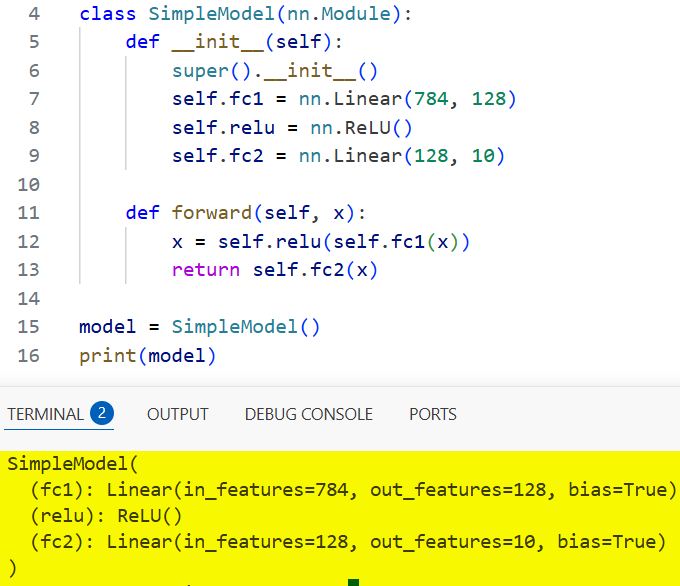

PyTorch:

    import torch
    import torch.nn as nn


    class SimpleModel(nn.Module):
        def __init__(self):
            super().__init__()
            self.fc1 = nn.Linear(784, 128)
            self.relu = nn.ReLU()
            self.fc2 = nn.Linear(128, 10)

        def forward(self, x):
            x = self.relu(self.fc1(x))
            return self.fc2(x)


    model = SimpleModel()
    print(model)

You can see the output in the screenshot below.

TensorFlow (with Keras):

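    import tensorflow as tf

    model = tf.keras.Sequential([
        # An explicit Input layer replaces the older input_shape= argument,
        # which recent Keras versions warn about.
        tf.keras.Input(shape=(784,)),
        tf.keras.layers.Dense(128, activation='relu'),
        tf.keras.layers.Dense(10),
    ])

    model.summary()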

Both are clean, but notice the difference in style. PyTorch feels like writing a regular Python class. TensorFlow with Keras is more declarative; you describe the structure and let the framework handle the internals. Neither is wrong; they just reflect different philosophies.

The Real-World Numbers in 2026

This is where the honest picture matters. Based on current data:

- 85% of deep learning research papers use PyTorch as their framework

- PyTorch is the default framework at many major AI labs, including OpenAI, Hugging Face, and Meta, and in most university research groups (Google DeepMind is the notable exception and leans heavily on JAX)

- TensorFlow still leads in mobile and edge deployment through LiteRT (formerly TensorFlow Lite)

- TensorFlow has a stronger position in enterprise production pipelines, particularly in Google Cloud and organizations already invested in TFX

- Hugging Face’s Transformers library, the backbone of most modern NLP and LLM work, has always been PyTorch-first; its TensorFlow support is being phased out in recent releases, so check the version you install

What does the job market look like? Generally:

- Startups, AI research roles, and LLM-related jobs → PyTorch preferred

- Large enterprises, Google ecosystem, ML engineering (MLOps) roles → TensorFlow still very relevant

- Most mid-sized companies in 2026 want experience in at least one framework, with PyTorch increasingly listed first


Head-to-Head: The Honest Comparison

| Dimension | PyTorch | TensorFlow |
|---|---|---|
| Learning curve | Easier: feels like Python | Steeper: Keras helps, but the full API is vast |
| Debugging | Excellent: standard Python tools work | Good: improved with eager execution |
| Research adoption | Dominant (85% of papers) | Declining in research |
| Production deployment | Good and improving (TorchServe, ONNX) | Excellent: mature ecosystem (TF Serving, TFX, LiteRT) |
| Mobile / Edge | ExecuTorch, the successor to PyTorch Mobile (improving) | LiteRT: more mature and widely deployed |
| Generative AI / LLMs | Default choice | Supported but secondary |
| TPU training | Limited (improving via PyTorch/XLA) | Native, years ahead |
| Training speed (GPU) | ~7.7 s/epoch (typical benchmark) | ~11.2 s/epoch (same setup) |
| Memory usage (training) | ~3.5 GB RAM | ~1.7 GB RAM |
| Ecosystem | Hugging Face, Lightning, TIMM | TFX, Keras, Google Cloud AI |

A couple of notes on the performance numbers above: they come from controlled benchmark environments, and real-world results vary significantly with model architecture, dataset, and hardware. The accuracy gap between the two frameworks on the same model and data is essentially zero: both averaged around 78% validation accuracy in head-to-head tests. Framework choice doesn’t change what your model learns.
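Since numbers like these depend so heavily on your own setup, it is worth measuring rather than trusting a table. Below is a framework-agnostic timing sketch. `time_epochs` and `dummy_epoch` are hypothetical helper names; swap `dummy_epoch` for a function that runs one pass over your real training data.

```python
import statistics
import time


def time_epochs(train_one_epoch, epochs=5, warmup=1):
    """Return the median wall-clock seconds per epoch, skipping warm-up runs."""
    for _ in range(warmup):
        train_one_epoch()  # warm-up: caches, lazy initialization, compilation
    durations = []
    for _ in range(epochs):
        start = time.perf_counter()
        train_one_epoch()
        durations.append(time.perf_counter() - start)
    return statistics.median(durations)


def dummy_epoch():
    # Stand-in workload; replace with one pass over your real training data.
    sum(i * i for i in range(50_000))


median_seconds = time_epochs(dummy_epoch, epochs=3)
print(f"median epoch time: {median_seconds:.4f}s")
```

One caveat if you time GPU code: GPU work is queued asynchronously, so the training function should wait for the device to finish (in PyTorch, by calling `torch.cuda.synchronize()`) before the timer stops.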

Where Each Framework Wins

PyTorch is clearly better for:

- Learning deep learning — the Pythonic API lowers the mental overhead so you can focus on understanding concepts, not fighting the framework

- Research and experimentation — dynamic graphs, easy debugging, faster prototyping cycles

- Generative AI, LLMs, and diffusion models — nearly every cutting-edge model (GPT, Llama, Stable Diffusion, BERT fine-tuning) ships with PyTorch code first

- Computer vision — libraries like TIMM (PyTorch Image Models) have become the standard for vision research

- Reinforcement learning research — when you need to modify reward functions on the fly and debug agent behavior mid-run

TensorFlow is clearly better for:

- Production ML pipelines at scale — TensorFlow Extended (TFX) is a full end-to-end pipeline tool for data validation, model versioning, and production monitoring that PyTorch simply doesn’t match yet

- Mobile and edge deployment — LiteRT (formerly TensorFlow Lite) is more mature and more widely supported across Android, iOS, and embedded hardware than PyTorch’s mobile stack (PyTorch Mobile and its successor, ExecuTorch)

- Google Cloud / TPU workloads — if you’re training at massive scale on Google’s infrastructure, TF’s native TPU support is years ahead

- Organizations already in the Google ecosystem — if your company is on Vertex AI and already has TFX pipelines, switching to PyTorch creates more work than value

- Browser-based ML — TensorFlow.js is the dominant choice for running models directly in a browser

What About Keras?

Keras deserves its own mention. It started as a separate high-level API and was eventually folded into TensorFlow. With Keras 3 (released in late 2023 and the default Keras in TensorFlow since TF 2.16), it became multi-backend, meaning you can write Keras code and run it on TensorFlow, PyTorch, or JAX.

This is actually a big deal. If you learn Keras, your code becomes framework-agnostic to a meaningful degree. For beginners who want to learn model building without committing to a framework war, starting with Keras on TensorFlow is a genuinely good approach.
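As a rough sketch of what that looks like in practice, Keras 3 reads the `KERAS_BACKEND` environment variable at import time. The snippet assumes Keras 3 and the TensorFlow backend are installed; if you have PyTorch or JAX instead, change the value to `"torch"` or `"jax"` and the model definition stays the same.

```python
import os

# Assumption: Keras 3 and the chosen backend package are installed.
# The backend has to be selected before keras is imported.
os.environ.setdefault("KERAS_BACKEND", "tensorflow")  # or "torch" / "jax"

import keras

model = keras.Sequential([
    keras.Input(shape=(784,)),
    keras.layers.Dense(128, activation="relu"),
    keras.layers.Dense(10),
])

print("backend:", keras.backend.backend())
print("parameters:", model.count_params())
```

The parameter count works out to 784 × 128 + 128 for the first layer plus 128 × 10 + 10 for the second, 101,770 in total, whichever backend runs the model.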

What About JAX?

You’ll hear JAX mentioned more and more in 2026. Developed by Google, JAX is what many researchers at Google DeepMind (which absorbed Google Brain in 2023) use for cutting-edge work. It’s essentially NumPy with GPU/TPU acceleration, JIT compilation, and automatic differentiation, with no “framework” abstraction in the way.

JAX isn’t a replacement for PyTorch or TensorFlow for most developers; it has a steep learning curve and is primarily used in research settings. But it’s worth knowing it exists. If you go deep into ML research, you’ll encounter it.
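To give a feel for the "NumPy plus automatic differentiation" description, here is a tiny sketch. The `loss` function is a toy example of my own: a plain function over arrays that `jax.grad` differentiates and `jax.jit` compiles.

```python
import jax
import jax.numpy as jnp


def loss(w, x, y):
    # Plain function over arrays: mean squared error of the fit y ≈ w * x.
    return jnp.mean((w * x - y) ** 2)


# Differentiate with respect to the first argument (w), then compile.
grad_loss = jax.jit(jax.grad(loss))

x = jnp.array([1.0, 2.0, 3.0])
y = jnp.array([2.0, 4.0, 6.0])   # generated by w = 2

g_at_1 = grad_loss(1.0, x, y)    # w is too small, so the gradient is negative
g_at_2 = grad_loss(2.0, x, y)    # perfect fit, so the gradient is zero
print(float(g_at_1), float(g_at_2))
```

There is no model class, layer object, or optimizer here, only functions and arrays. That is the appeal for researchers, and also why JAX asks more of beginners.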

My Honest Recommendation

Here’s how I’d break it down:

Learn PyTorch first if:

- You’re a beginner getting into machine learning or deep learning

- You want to work with LLMs, generative AI, or stay close to the research frontier

- You’re applying for AI/ML roles at startups or research-focused companies

- You want the fastest path from idea to working prototype

Learn TensorFlow if:

- You’re heading into ML engineering or MLOps rather than research

- Your target employer is in the Google ecosystem or uses Vertex AI

- You’re building models for mobile or edge deployment

- You’re joining a team that already has a TensorFlow codebase

Learn both if:

- You’re serious about a career in ML. Honestly, once you know one, picking up the other takes a few days, not months. The core concepts are identical; only the syntax and APIs differ.

The worst thing you can do is spend weeks paralyzed by this decision. Pick one, build something real with it, and the other framework will start to make sense naturally.

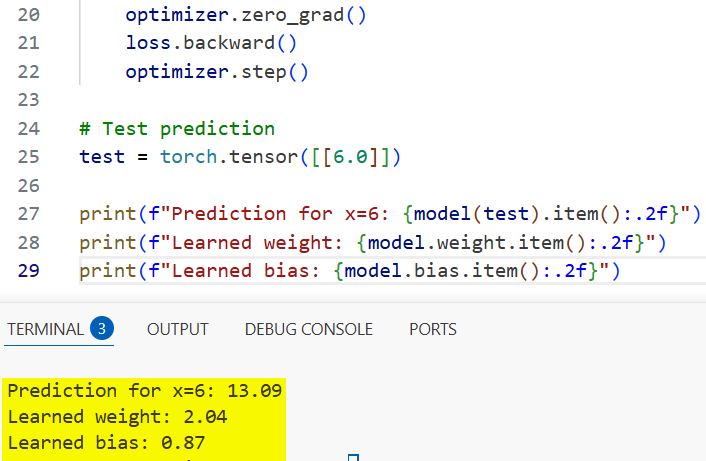

Get Started: Your First PyTorch Program

    import torch
    import torch.nn as nn
    import torch.optim as optim

    # Simple dataset: y = 2x + 1
    X = torch.tensor([[1.0], [2.0], [3.0], [4.0], [5.0]])
    y = torch.tensor([[3.0], [5.0], [7.0], [9.0], [11.0]])

    # Define a one-layer linear model
    model = nn.Linear(1, 1)
    optimizer = optim.SGD(model.parameters(), lr=0.01)
    loss_fn = nn.MSELoss()

    # Training loop
    for epoch in range(500):
        pred = model(X)          # forward pass
        loss = loss_fn(pred, y)  # mean squared error
        optimizer.zero_grad()    # clear gradients from the previous step
        loss.backward()          # backpropagate
        optimizer.step()         # update weight and bias

    # Test prediction for x = 6 (should be close to 13)
    test = torch.tensor([[6.0]])
    print(f"Prediction for x=6: {model(test).item():.2f}")
    print(f"Learned weight: {model.weight.item():.2f}")  # Should be ~2
    print(f"Learned bias: {model.bias.item():.2f}")  # Should be ~1

You can see the output in the screenshot below.

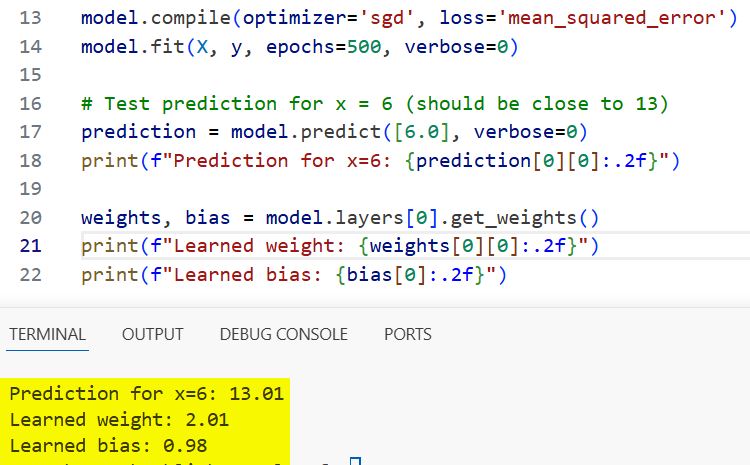

Get Started: Your First TensorFlow Program

    import numpy as np
    import tensorflow as tf

    # Same dataset: y = 2x + 1, shaped (samples, features)
    X = np.array([1.0, 2.0, 3.0, 4.0, 5.0], dtype=float).reshape(-1, 1)
    y = np.array([3.0, 5.0, 7.0, 9.0, 11.0], dtype=float).reshape(-1, 1)

    # Build the model
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(1,)),
        tf.keras.layers.Dense(units=1),
    ])
    model.compile(optimizer='sgd', loss='mean_squared_error')
    model.fit(X, y, epochs=500, verbose=0)

    # Test prediction for x = 6 (should be close to 13)
    # Pass a NumPy array: recent Keras versions reject a plain Python list here
    prediction = model.predict(np.array([[6.0]]), verbose=0)
    print(f"Prediction for x=6: {prediction[0][0]:.2f}")

    weights, bias = model.layers[0].get_weights()
    print(f"Learned weight: {weights[0][0]:.2f}")  # Should be ~2
    print(f"Learned bias: {bias[0]:.2f}")  # Should be ~1

You can see the output in the screenshot below.

Both programs do the same thing: learn the relationship y = 2x + 1 from data. Run them both, compare the code, and you’ll immediately feel the difference in style. That hands-on comparison will tell you more than any article can.
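If you’re curious what both programs are doing underneath, here is a rough framework-free sketch of the same idea: gradient descent on mean squared error, with the gradients written out by hand. It is an illustration, not what either library literally runs internally. I start from zero and use 5,000 steps, more than the examples above, so the fit converges tightly.

```python
# Same data as the two programs above: y = 2x + 1
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

w, b = 0.0, 0.0   # arbitrary starting point (the frameworks initialize randomly)
lr = 0.01         # same learning rate as the PyTorch example
n = len(xs)

for epoch in range(5000):
    # Forward pass: prediction errors for every sample
    errors = [(w * x + b) - y for x, y in zip(xs, ys)]
    # Backward pass: gradients of the mean squared error, derived by hand
    grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2 / n * sum(errors)
    # Optimizer step: plain gradient descent
    w -= lr * grad_w
    b -= lr * grad_b

print(f"w={w:.2f}, b={b:.2f}, prediction for x=6: {w * 6 + b:.2f}")
```

The three commented steps map directly onto `model(X)`, `loss.backward()`, and `optimizer.step()` in PyTorch, and onto what `model.fit` does for you in Keras.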

Frequently Asked Questions

Q: Is TensorFlow dying?

No. That narrative is overblown. TensorFlow is actively maintained by Google, still widely used in enterprise and mobile deployment, and has a huge installed base. What’s true is that PyTorch has overtaken it in research and new project starts. TensorFlow still has a strong future in production ML engineering.

Q: If I learn PyTorch, will I understand TensorFlow too?

Mostly yes. The core concepts — tensors, gradients, forward/backward passes, loss functions, optimizers — are identical. The APIs look different but the ideas are the same. After learning one well, switching to the other takes days, not months.

Q: Does it matter which framework I use for Kaggle competitions?

Both work. Kaggle’s GPU environments support both frameworks. Most top-ranked Kaggle notebooks in 2026 use PyTorch, but winning a competition is about your data pipeline, feature engineering, and model tuning — not which framework you picked.

Q: What about Hugging Face? Does it use PyTorch or TensorFlow?

Hugging Face’s Transformers library has long supported both, but PyTorch is the default for almost every model, and TensorFlow support is being phased out in recent releases. If you’re planning to work with pre-trained LLMs, BERT, GPT-style models, or diffusion models from Hugging Face, you’ll be more at home in PyTorch.

Q: Should I learn TensorFlow 1.x?

Absolutely not. TF 1.x is end-of-life. If you’re starting fresh, begin with TF 2.x (or PyTorch). If you’ve inherited a TF 1.x codebase, follow the migration guide and move it to TF 2.x.

Q: Can I switch from one to the other mid-project?

Technically, yes, but practically, it’s painful. Models, checkpoints, and training code don’t transfer directly. Pick your framework before you start, and only switch between projects — not during one.
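One small, concrete reason weights don’t transfer directly: the two frameworks lay out the same layer differently. PyTorch’s `nn.Linear` stores its weight as (out_features, in_features), while a Keras `Dense` kernel is (in_features, out_features). The sketch below uses only PyTorch to show the transpose a hand-written converter would need; `keras_style_kernel` is just an illustrative variable name, not a real conversion API.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
layer = nn.Linear(3, 2)  # weight is stored as (out_features, in_features)

weight = layer.weight.detach()
bias = layer.bias.detach()

# A Keras Dense layer keeps its kernel as (in_features, out_features),
# so a converter has to transpose every linear layer's weight.
keras_style_kernel = weight.T.contiguous()

x = torch.randn(4, 3)
manual = x @ keras_style_kernel + bias   # Dense-style computation
matches = torch.allclose(manual, layer(x).detach(), atol=1e-6)
print(tuple(weight.shape), tuple(keras_style_kernel.shape), matches)
```

Multiply that by every layer type, plus optimizer state and data pipelines, and you can see why switching mid-project is rarely worth it.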


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.