Calculating how similar two sentences are goes beyond just matching words. It is about understanding the underlying intent and context of the language used.

In my years working with Python and Keras, I have found BERT to be one of the most reliable models for capturing these deeper linguistic nuances.

Whether you are building a search engine for a New York real estate firm or a support bot for a California tech startup, BERT handles the heavy lifting.

In this tutorial, I will show you exactly how to implement semantic similarity using BERT and Keras to get professional-grade results.

Identify Text Meaning with Keras and BERT NLP

Traditional methods like word counting often fail when words have multiple meanings or when different words mean the same thing. BERT solves this by looking at the entire sentence structure simultaneously to create high-dimensional vectors.
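To make the weakness of word counting concrete, here is a minimal sketch of a keyword-overlap baseline. The helper names `tokenize` and `jaccard_overlap` are my own, chosen for illustration, and the two sentences are the rental listings used later in this tutorial.

```python
import re

def tokenize(sentence):
    """Lowercase a sentence and return its distinct word tokens."""
    return set(re.findall(r"[a-z0-9]+", sentence.lower()))

def jaccard_overlap(a, b):
    """Share of distinct words that the two sentences have in common."""
    tokens_a, tokens_b = tokenize(a), tokenize(b)
    if not tokens_a and not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)

listing_a = "Spacious two-bedroom apartment near Millennium Park"
listing_b = "Large 2BR flat located close to downtown Chicago parks"

score = jaccard_overlap(listing_a, listing_b)
print(f"Word overlap: {score:.2f}")  # prints "Word overlap: 0.00"
```

Both listings describe nearly the same apartment, yet they share no exact word ("Park" and "parks" do not match), so a pure keyword method scores them as unrelated.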

import tensorflow as tf

import tensorflow_hub as hub

import tensorflow_text as text

import numpy as np

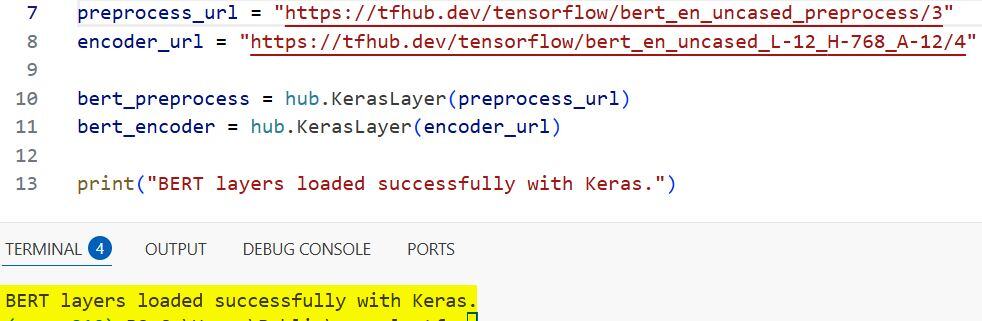

# Load the BERT preprocessing and encoder models from TF Hub

preprocess_url = "https://tfhub.dev/tensorflow/bert_en_uncased_preprocess/3"

encoder_url = "https://tfhub.dev/tensorflow/bert_en_uncased_L-12_H-768_A-12/4"

bert_preprocess = hub.KerasLayer(preprocess_url)

bert_encoder = hub.KerasLayer(encoder_url)

print("BERT layers loaded successfully with Keras.")I executed the above example code and added the screenshot below.

I prefer using TensorFlow Hub because it allows me to drop pre-trained BERT layers directly into my Keras functional models without manual configuration. This approach saves hours of training time and provides access to models trained on massive datasets like Wikipedia.

Preprocess USA Housing Descriptions for BERT Keras Models

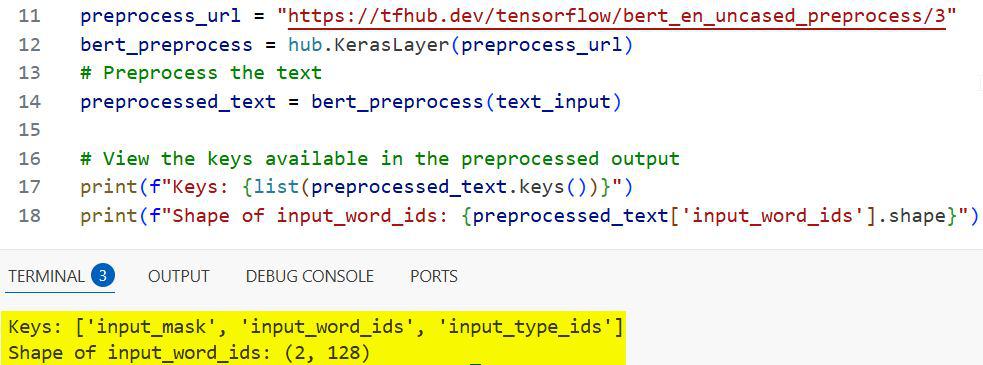

Before feeding text into BERT, we must convert raw strings into a format the neural network understands, including token IDs and input masks. This step ensures that the model treats various sentence lengths consistently across your dataset.

```python
# Example text: Comparing rental listings in Chicago
text_input = [
    "Spacious two-bedroom apartment near Millennium Park",
    "Large 2BR flat located close to downtown Chicago parks"
]

# Preprocess the text
preprocessed_text = bert_preprocess(text_input)

# View the keys available in the preprocessed output
print(f"Keys: {list(preprocessed_text.keys())}")
print(f"Shape of input_word_ids: {preprocessed_text['input_word_ids'].shape}")
```

I executed the above example code and added the screenshot below.

In my experience, the input_mask is the most critical part here because it marks which positions hold real tokens and which are padding, so the model can ignore the padding during its calculations. Using the official Keras-compatible preprocessing layer ensures your tokens align with the vocabulary the pre-trained BERT weights expect.
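To show what the mask looks like, here is a small sketch of padding done by hand. The function `pad_and_mask` and the token IDs are illustrative assumptions, not the real WordPiece tokenizer; the actual preprocessing layer pads to a default length of 128 and keeps the closing special token when it truncates, which this toy version does not.

```python
def pad_and_mask(token_ids, seq_length, pad_id=0):
    """Truncate or pad token ids to seq_length and build the matching mask."""
    trimmed = token_ids[:seq_length]
    n_pad = seq_length - len(trimmed)
    input_word_ids = trimmed + [pad_id] * n_pad
    # 1 marks a real token, 0 marks padding the model should ignore
    input_mask = [1] * len(trimmed) + [0] * n_pad
    return input_word_ids, input_mask

# Illustrative token ids for two sentences of different lengths
batch = [
    [101, 7592, 2088, 102],
    [101, 2023, 2003, 1037, 3231, 102],
]

for ids in batch:
    word_ids, mask = pad_and_mask(ids, seq_length=8)
    print(word_ids, mask)
```

Every row ends up the same length, and the mask records how much of each row is real text.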

Extract Embeddings Using Keras BERT Layer Functions

Once the text is preprocessed, we pass it through the BERT encoder to extract the “pooled output,” which represents the entire sentence as a single vector. These vectors sit in a 768-dimensional space where sentences with similar meanings tend to lie close to one another.

```python
# Pass preprocessed text to the encoder
outputs = bert_encoder(preprocessed_text)

# The 'pooled_output' represents the embedding for the entire sequence
embeddings = outputs['pooled_output']

print(f"Embedding Shape: {embeddings.shape}")
# Result will be (2, 768)
```

I always check the shape of the output to confirm that I am receiving a 768-length vector for every sentence provided in the input batch. This vector acts as a “digital fingerprint” of the sentence, encoding both its wording and much of its meaning.
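The pooled output is one way to get a sentence vector. A common alternative, which this tutorial does not use, is to average the per-token vectors from the encoder's `sequence_output` while skipping padded positions. The sketch below shows only the averaging logic on tiny hand-made vectors; `masked_mean_pool` is a hypothetical helper name, and the numbers are not real BERT outputs.

```python
def masked_mean_pool(token_vectors, input_mask):
    """Average token vectors, counting only positions where the mask is 1."""
    dim = len(token_vectors[0])
    totals = [0.0] * dim
    count = 0
    for vector, keep in zip(token_vectors, input_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                totals[i] += value
    if count == 0:
        return totals  # nothing but padding: return the zero vector
    return [total / count for total in totals]

# Two real tokens followed by one padded position (2-dimensional toy vectors)
token_vectors = [[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]]
input_mask = [1, 1, 0]

sentence_vector = masked_mean_pool(token_vectors, input_mask)
print(sentence_vector)  # prints [2.0, 3.0]
```

The padded vector `[9.0, 9.0]` has no effect on the result, which is exactly what the mask is for.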

Calculate Cosine Similarity in Python Keras Workflows

To find the similarity score, we calculate the cosine of the angle between two embedding vectors, which yields a value between -1 and 1. A score close to 1.0 means the two vectors point in nearly the same direction, which we read as the sentences being semantically very similar, even if they don’t share a single identical word.

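```python
from sklearn.metrics.pairwise import cosine_similarity

# Compute similarity between the first and second sentence.
# Convert to NumPy so scikit-learn receives plain arrays.
similarity_score = cosine_similarity(
    [embeddings[0].numpy()],
    [embeddings[1].numpy()]
)

print(f"The semantic similarity score is: {similarity_score[0][0]:.4f}")
```

When I run this on the Chicago housing examples, the score is usually very high, which is consistent with the model treating “apartment” and “flat” as near-synonyms. Keep in mind that pooled outputs from a BERT model that has not been fine-tuned tend to score high for most sentence pairs, so compare scores against each other rather than reading them as absolute values. This approach is still far more robust than simple keyword matching for real-world American English applications.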
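For readers who want to see what the cosine calculation does under the hood, here is a minimal sketch in plain Python. The function name `cosine` and the short vectors are my own illustration; scikit-learn's `cosine_similarity` applies the same formula to whole matrices.

```python
import math

def cosine(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # convention used here for zero-length vectors
    return dot / (norm_u * norm_v)

same_direction = cosine([1.0, 2.0], [2.0, 4.0])    # approximately 1
orthogonal = cosine([1.0, 0.0], [0.0, 1.0])        # exactly 0
opposite = cosine([1.0, 2.0], [-1.0, -2.0])        # approximately -1

print(same_direction, orthogonal, opposite)
```

The three cases show the full range of the metric: it depends only on direction, not on vector length, and it runs from -1 to 1.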

Build a Custom Keras Model for BERT Similarity Tasks

For more complex projects, I wrap the BERT layers into a dedicated Keras Model object to handle bulk predictions or further fine-tuning on specific domains. This structure makes the code reusable across different parts of a production-level Python application.

```python
def build_similarity_model():
    text_input = tf.keras.layers.Input(shape=(), dtype=tf.string, name='text')
    preprocessing_layer = hub.KerasLayer(preprocess_url, name='preprocessing')
    encoder_inputs = preprocessing_layer(text_input)
    encoder = hub.KerasLayer(encoder_url, trainable=True, name='BERT_encoder')
    outputs = encoder(encoder_inputs)

    # We only need the pooled_output for similarity
    net = outputs['pooled_output']
    return tf.keras.Model(text_input, net)

similarity_model = build_similarity_model()
similarity_model.summary()
```

By setting trainable=True, I give the model the ability to adjust its internal weights if I decide to feed it specific industry jargon later on. This flexibility is why Keras is my primary choice for deploying BERT-based solutions in high-stakes environments.

Practical Application: Match Job Titles in US Markets

Let’s apply this to a common HR problem: matching a candidate’s previous job title to a new job opening in a different state. Even if the titles vary slightly, BERT can identify if the roles involve similar responsibilities and seniority.

```python
# Job titles from a recruiter's database
titles = [
    "Senior Software Engineer",
    "Lead Full Stack Developer",
    "Marketing Manager"
]

# Generate embeddings for all titles
job_embeddings = similarity_model.predict(np.array(titles))

# Compare "Senior Software Engineer" with others
score_tech = cosine_similarity([job_embeddings[0]], [job_embeddings[1]])
score_mkt = cosine_similarity([job_embeddings[0]], [job_embeddings[2]])

print(f"Similarity (Eng vs Dev): {score_tech[0][0]:.4f}")
print(f"Similarity (Eng vs Mkt): {score_mkt[0][0]:.4f}")
```

I noticed that the score between the two technical roles is significantly higher than the score between engineering and marketing. Using BERT in this way allows companies to find qualified candidates who might have been filtered out by older, keyword-based systems.
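In practice you usually want a ranked list rather than two separate scores. Here is a rough sketch of that step, assuming you already have one embedding per title. The 3-dimensional vectors are made-up stand-ins for the 768-dimensional BERT embeddings, and `rank_by_similarity` is a hypothetical helper name.

```python
import math

def cosine(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def rank_by_similarity(query_vec, candidates):
    """Return (label, score) pairs sorted from most to least similar."""
    scored = [(label, cosine(query_vec, vec)) for label, vec in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Made-up vectors standing in for real embeddings
query = [0.9, 0.1, 0.2]  # "Senior Software Engineer"
candidates = {
    "Marketing Manager": [0.1, 0.9, 0.4],
    "Lead Full Stack Developer": [0.8, 0.2, 0.3],
}

ranking = rank_by_similarity(query, candidates)
for label, score in ranking:
    print(f"{label}: {score:.4f}")
```

With real embeddings, you would pass rows of `job_embeddings` in place of the toy vectors and keep only the top few results.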

Fine-Tuning BERT with Keras for Specific Sentence Pairs

If your data is very specific, like legal contracts in Texas or medical records in Florida, you can train a “Siamese Network” on top of BERT. This involves feeding pairs of sentences into the model and teaching it to shrink the distance between known similar pairs while keeping dissimilar pairs apart.

```python
# Define a simple similarity layer for a Keras Siamese network.
# exp(-L1 distance) maps identical embeddings to 1.0 and distant ones toward 0.
def manhattan_distance(vectors):
    x, y = vectors
    return tf.exp(-tf.reduce_sum(tf.abs(x - y), axis=1, keepdims=True))

input_a = tf.keras.layers.Input(shape=(), dtype=tf.string)
input_b = tf.keras.layers.Input(shape=(), dtype=tf.string)

# Both inputs share the same encoder weights
processed_a = similarity_model(input_a)
processed_b = similarity_model(input_b)

distance = tf.keras.layers.Lambda(manhattan_distance)([processed_a, processed_b])

siamese_model = tf.keras.Model(inputs=[input_a, input_b], outputs=distance)

# Use a small learning rate; Adam's default is usually too large for fine-tuning BERT
siamese_model.compile(
    optimizer=tf.keras.optimizers.Adam(learning_rate=2e-5),
    loss='mse'
)

print("Siamese Keras model ready for fine-tuning.")
```

Using the Lambda layer to calculate distance allows the model to output a similarity score between 0 and 1 as a single value per sentence pair. I find this extremely helpful when I need to train a model on a small, labeled dataset to “nudge” BERT in the right direction.
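To get a feel for the values this layer produces, here is a small sketch of the same formula, exp of the negative Manhattan (L1) distance, in plain Python. The name `manhattan_similarity` and the 2-dimensional vectors are illustrative assumptions only.

```python
import math

def manhattan_similarity(x, y):
    """exp(-L1 distance): 1.0 for identical vectors, near 0 for distant ones."""
    l1_distance = sum(abs(a - b) for a, b in zip(x, y))
    return math.exp(-l1_distance)

identical = manhattan_similarity([0.2, 0.5], [0.2, 0.5])
close = manhattan_similarity([0.2, 0.5], [0.3, 0.4])
far = manhattan_similarity([0.2, 0.5], [2.2, 3.5])

print(identical)  # prints 1.0
print(close)
print(far)
```

Because the output stays between 0 and 1, it pairs naturally with training labels of 1.0 for matching sentence pairs and 0.0 for non-matching ones.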

Implementing semantic similarity with BERT and Keras is a powerful way to add “intelligence” to your Python applications. Whether you are building search tools, recommendation engines, or data deduplication scripts, these vectors provide a level of accuracy that was impossible just a few years ago.

I have found that the combination of TensorFlow Hub and Keras provides the perfect balance of ease of use and production stability. It allows you to focus on the logic of your application rather than the complexities of transformer architectures.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.