Neural Radiance Fields (NeRF) have revolutionized 3D volumetric rendering by enabling photorealistic novel view synthesis from 2D images. As an experienced Python Keras developer, I found implementing NeRF both challenging and rewarding.

In this tutorial, I’ll walk you through building a NeRF model in Keras from scratch. You’ll get full code examples for each method, making it easy to understand and apply.

What is NeRF and Why Use Keras?

NeRF models represent 3D scenes as continuous volumetric radiance fields, parameterized by neural networks. They take 3D coordinates and viewing directions as input and output color and density values.

Keras, with its high-level layer API on top of TensorFlow, makes it straightforward to define and train the NeRF MLP.

Method 1: Implement the NeRF MLP Model in Keras

The core of NeRF is a multi-layer perceptron (MLP) that predicts color and density from encoded 3D points and view directions.

Step 1: Import Required Libraries

Import the essential libraries needed to build and run the NeRF model.

    import tensorflow as tf
    from tensorflow.keras import layers, models
    import numpy as np

Step 2: Positional Encoding Function

Positional encoding helps the MLP learn high-frequency functions by mapping inputs to a higher-dimensional space.
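To make that mapping concrete, here is a plain-Python sketch for a single 3D point. The helper names `encode_scalar` and `encode_point` are my own placeholders, and the sketch assumes the same layout as the `positional_encoding` function in this step: for each coordinate, the sines at frequencies 2^0 through 2^(num_freqs - 1), followed by the matching cosines.

```python
import math


def encode_scalar(value, num_freqs=10):
    """Return [sin(2^0 v), ..., sin(2^(L-1) v), cos(2^0 v), ..., cos(2^(L-1) v)]."""
    freqs = [2.0 ** k for k in range(num_freqs)]
    sines = [math.sin(f * value) for f in freqs]
    cosines = [math.cos(f * value) for f in freqs]
    return sines + cosines


def encode_point(point, num_freqs=10):
    """Encode every coordinate and join the results into one flat feature list."""
    encoded = []
    for coord in point:
        encoded.extend(encode_scalar(coord, num_freqs))
    return encoded


# Hypothetical sample point, chosen only for illustration.
features = encode_point((0.5, -1.0, 2.0))
print(len(features))  # 3 coordinates * 10 frequencies * 2 functions = 60
```

With the default of 10 frequencies, a 3D point grows from 3 values to 60, which is the input width the first dense layer sees.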

    def positional_encoding(x, num_freqs=10):
        # Frequencies 2^0, 2^1, ..., 2^(num_freqs - 1)
        freq_bands = 2.0 ** tf.range(num_freqs, dtype=tf.float32)
        x_expanded = tf.expand_dims(x, -1)  # shape (..., input_dims, 1)
        # sin and cos at every frequency: shape (..., input_dims, 2 * num_freqs)
        encodings = tf.concat([tf.sin(freq_bands * x_expanded), tf.cos(freq_bands * x_expanded)], axis=-1)
        # Flatten the last two axes into a single feature axis
        encodings = tf.reshape(encodings, shape=tf.concat([tf.shape(x)[:-1], [x.shape[-1] * num_freqs * 2]], axis=0))
        return encodings

Step 3: Define the NeRF MLP Model

Build the NeRF MLP that predicts color and density from encoded inputs.

    def create_nerf_mlp(num_layers=8, num_units=256, input_dims=3, view_dims=3, skips=(4,)):
        inputs_xyz = layers.Input(shape=(input_dims,))
        inputs_dir = layers.Input(shape=(view_dims,))

        # Positional encoding, wrapped in Lambda layers so the TensorFlow ops run
        # inside the Keras graph (newer Keras releases reject raw tf.* calls on
        # symbolic inputs)
        x = layers.Lambda(positional_encoding)(inputs_xyz)
        d = layers.Lambda(positional_encoding)(inputs_dir)

        h = x
        for i in range(num_layers):
            h = layers.Dense(num_units, activation='relu')(h)
            if i in skips:
                # Skip connection: re-inject the encoded position
                h = layers.Concatenate(axis=-1)([h, x])

        # Output density (sigma); ReLU keeps it non-negative
        sigma = layers.Dense(1, activation='relu')(h)

        # Feature vector for color
        feature = layers.Dense(num_units, activation='relu')(h)

        # Concatenate feature and view direction encoding
        h = layers.Concatenate(axis=-1)([feature, d])
        h = layers.Dense(num_units // 2, activation='relu')(h)

        # Output RGB color in [0, 1]
        rgb = layers.Dense(3, activation='sigmoid')(h)

        # Pack the outputs as (r, g, b, sigma)
        outputs = layers.Concatenate(axis=-1)([rgb, sigma])
        model = models.Model(inputs=[inputs_xyz, inputs_dir], outputs=outputs)
        return model

    nerf_model = create_nerf_mlp()
    nerf_model.summary()

I executed the above example code and added the screenshot below.

This completes a minimal NeRF MLP setup ready for training or extension.

Method 2: Volume Rendering Integration (Ray Marching)

NeRF requires integrating predicted colors and densities along camera rays to synthesize images.

Step 1: Sample Points Along Rays

Generate sampled points along each camera ray for NeRF integration.

    def sample_points(rays_o, rays_d, near, far, num_samples):
        # Evenly spaced depths between the near and far planes
        t_vals = tf.linspace(near, far, num_samples)
        t_vals = tf.broadcast_to(t_vals[None, :], [tf.shape(rays_o)[0], num_samples])
        # points[r, s] = origin_r + t_s * direction_r, shape (num_rays, num_samples, 3)
        points = rays_o[:, None, :] + rays_d[:, None, :] * t_vals[..., None]
        return points, t_vals

Step 2: Volume Rendering Function

Compute final color and depth by integrating densities with volume rendering.
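Written out, the quadrature that the function in this step evaluates for one ray with N samples looks like this. The symbols are my own notation: c_i and σ_i are the predicted color and density at sample i, and t_i is its depth along the ray.

```latex
\begin{aligned}
\delta_i &= t_{i+1} - t_i \quad (i < N), \qquad \delta_N = 10^{10} \\
\alpha_i &= 1 - \exp(-\sigma_i \, \delta_i) \\
T_i &= \prod_{j=1}^{i-1} (1 - \alpha_j) \\
w_i &= T_i \, \alpha_i \\
\hat{C} &= \sum_{i=1}^{N} w_i \, c_i, \qquad \hat{D} = \sum_{i=1}^{N} w_i \, t_i
\end{aligned}
```

Here α_i is the opacity of sample i, T_i is the fraction of light that survives to reach it, and the code adds a small 1e-10 term inside the product purely for numerical stability.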

def volume_render(rgb_sigma, t_vals, rays_d):

rgb = rgb_sigma[..., :3]

sigma = rgb_sigma[..., 3]

delta = t_vals[..., 1:] - t_vals[..., :-1]

delta = tf.concat([delta, tf.broadcast_to([1e10], tf.shape(delta[..., :1]))], axis=-1)

alpha = 1.0 - tf.exp(-sigma * delta)

transmittance = tf.math.cumprod(1.0 - alpha + 1e-10, axis=-1, exclusive=True)

weights = alpha * transmittance

rgb_map = tf.reduce_sum(weights[..., None] * rgb, axis=-2)

depth_map = tf.reduce_sum(weights * t_vals, axis=-1)

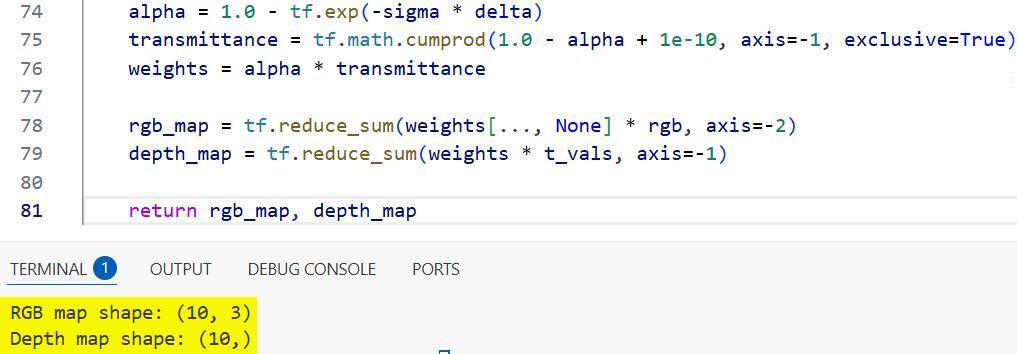

return rgb_map, depth_mapI executed the above example code and added the screenshot below.

This completes the core volume-rendering stage used to synthesize NeRF images.
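To see what the weights do, it can help to trace a single ray by hand. The sketch below repeats the alpha, transmittance, and weight steps in plain Python for one hypothetical ray with three samples. The function name `composite_ray` and the sample values are mine, and I left out the small 1e-10 stabilizer for readability.

```python
import math


def composite_ray(sigmas, t_vals, colors):
    """Composite one ray from per-sample densities, depths, and RGB colors."""
    num = len(sigmas)
    # Step sizes between samples; the last interval is treated as effectively infinite.
    deltas = [t_vals[i + 1] - t_vals[i] for i in range(num - 1)] + [1e10]
    alphas = [1.0 - math.exp(-s * d) for s, d in zip(sigmas, deltas)]

    weights = []
    transmittance = 1.0
    for alpha in alphas:
        weights.append(transmittance * alpha)  # light that reaches this sample and stops
        transmittance *= 1.0 - alpha           # light that continues past it

    rgb = [sum(w * c[ch] for w, c in zip(weights, colors)) for ch in range(3)]
    depth = sum(w * t for w, t in zip(weights, t_vals))
    return weights, rgb, depth


# Hypothetical ray: empty space, a semi-transparent red sample, then a dense blue one.
sigmas = [0.0, 1.0, 5.0]
t_vals = [2.0, 4.0, 6.0]
colors = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]

weights, rgb, depth = composite_ray(sigmas, t_vals, colors)
print("weights:", weights)
print("rgb:", rgb, "depth:", depth)
```

The empty first sample gets zero weight, the red sample absorbs most of the light, and the dense last sample takes whatever is left, so the weights sum to 1 for this ray.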

How to Use the NeRF Model in Keras

The following steps run the full pipeline end to end on synthetic rays.

Step 1: Generate Dummy Rays and Directions

Create synthetic ray origins and directions to feed into the NeRF pipeline.

    num_rays = 1024
    near = 2.0
    far = 6.0
    num_samples = 64

    rays_o = tf.random.uniform((num_rays, 3))  # ray origins
    rays_d = tf.random.uniform((num_rays, 3))  # ray directions
    rays_d = tf.math.l2_normalize(rays_d, axis=-1)

Step 2: Sample Points and Predict Colors and Densities

Sample 3D points along each ray and run them through the NeRF model.

    points, t_vals = sample_points(rays_o, rays_d, near, far, num_samples)

    # Flatten points and directions for batch prediction
    points_flat = tf.reshape(points, [-1, 3])
    dirs_flat = tf.repeat(rays_d, repeats=num_samples, axis=0)

    # Predict rgb and sigma
    raw_outputs = nerf_model([points_flat, dirs_flat])
    raw_outputs = tf.reshape(raw_outputs, [num_rays, num_samples, 4])

Step 3: Volume Render to Get Final RGB and Depth Maps

Integrate model outputs with volume rendering to produce RGB and depth maps.

    rgb_map, depth_map = volume_render(raw_outputs, t_vals, rays_d)
    print("Rendered RGB shape:", rgb_map.shape)
    print("Rendered Depth shape:", depth_map.shape)

Training NeRF in Keras

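Training NeRF means rendering pixels from the model and comparing them against the ground-truth image pixels with a photometric loss, typically the mean squared error between rendered and true RGB values. Because every ray needs one network evaluation per sample, I recommend starting with small datasets and small ray batches.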
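As a rough illustration of one optimization step, here is a minimal, self-contained sketch using `tf.GradientTape`. Everything in it is an assumption for demonstration rather than a tuned setup: `toy_model` is a tiny stand-in that maps raw 3D points to four unactivated values (no positional encoding or view directions), `render_rays` and `train_step` are hypothetical helper names, and the rays and target colors are random.

```python
import tensorflow as tf


def render_rays(model, rays_o, rays_d, near=2.0, far=6.0, num_samples=16):
    """Sample points on each ray, query the model, and composite one RGB value per ray."""
    t_vals = tf.linspace(near, far, num_samples)  # (num_samples,)
    points = rays_o[:, None, :] + rays_d[:, None, :] * t_vals[None, :, None]
    raw = model(tf.reshape(points, [-1, 3]), training=True)
    raw = tf.reshape(raw, [-1, num_samples, 4])
    rgb = tf.sigmoid(raw[..., :3])   # colors in [0, 1]
    sigma = tf.nn.relu(raw[..., 3])  # non-negative density
    delta = tf.concat([t_vals[1:] - t_vals[:-1], tf.constant([1e10])], axis=0)
    alpha = 1.0 - tf.exp(-sigma * delta)
    weights = alpha * tf.math.cumprod(1.0 - alpha + 1e-10, axis=-1, exclusive=True)
    return tf.reduce_sum(weights[..., None] * rgb, axis=-2)  # (num_rays, 3)


def train_step(model, optimizer, rays_o, rays_d, target_rgb):
    """Run one gradient step on the mean squared photometric error."""
    with tf.GradientTape() as tape:
        pred_rgb = render_rays(model, rays_o, rays_d)
        loss = tf.reduce_mean(tf.square(pred_rgb - target_rgb))
    grads = tape.gradient(loss, model.trainable_variables)
    optimizer.apply_gradients(zip(grads, model.trainable_variables))
    return loss


# Stand-in network and random data, for illustration only.
toy_model = tf.keras.Sequential([
    tf.keras.Input(shape=(3,)),
    tf.keras.layers.Dense(64, activation="relu"),
    tf.keras.layers.Dense(4),
])
optimizer = tf.keras.optimizers.Adam(learning_rate=5e-4)

rays_o = tf.zeros((32, 3))
rays_d = tf.math.l2_normalize(tf.random.normal((32, 3)), axis=-1)
target_rgb = tf.random.uniform((32, 3))

losses = [float(train_step(toy_model, optimizer, rays_o, rays_d, target_rgb)) for _ in range(3)]
print(losses)
```

To train the `nerf_model` from this tutorial instead, the rendering step would pass both the flattened points and the repeated view directions to the model and skip the extra sigmoid and ReLU, since that model already applies them.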

Implementing NeRF in Keras requires combining coordinate encoding, MLP modeling, and volume rendering. The methods I shared provide a clear path to build your own 3D volumetric renderer.

Feel free to experiment with positional encoding frequencies, network depth, and sampling strategies to improve results.
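One sampling variation you could try is stratified (jittered) sampling, which is not what the `sample_points` function in this tutorial does: instead of fixed, evenly spaced depths, it draws one random depth from each equal-width bin between the near and far planes. Below is a small plain-Python sketch of the idea. The function name `stratified_t_vals` and the seed are my own choices.

```python
import random


def stratified_t_vals(near, far, num_samples, rng):
    """Draw one depth uniformly at random from each of num_samples equal bins."""
    bin_width = (far - near) / num_samples
    return [near + (i + rng.random()) * bin_width for i in range(num_samples)]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
t_vals = stratified_t_vals(2.0, 6.0, 8, rng)
print(t_vals)
```

Because each depth stays inside its own bin, the samples remain ordered along the ray while still varying from one call to the next.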

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.