I have often struggled with information overload when analyzing lengthy corporate reports or news feeds.

Finding a way to condense these documents into short, meaningful summaries without losing the core context used to be a massive challenge for my team.

However, using the BART (Bidirectional and Auto-Regressive Transformers) model has completely changed how I approach natural language processing tasks.

It allows us to generate “abstractive” summaries, meaning the AI writes new sentences rather than just cutting and pasting existing text from the source.

In this tutorial, I will show you exactly how I use BART to build a summarization pipeline that delivers professional-grade results.

Set Up the Environment for BART in Python

Before we dive into the logic, I always make sure my environment is equipped with the right libraries to handle large-scale transformer models efficiently.

You will need the transformers and torch libraries, which integrate beautifully with Python workflows to manage the heavy lifting of deep learning.

# Install the necessary libraries
pip install transformers torch sentencepiece

I prefer using the Hugging Face transformers library because it provides a seamless interface for loading pre-trained BART weights into a Python environment.
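Before loading any weights, it can help to confirm that the packages were actually installed in the active environment. The snippet below is a minimal sketch using only the standard library; the helper name installed_versions is my own and not part of any library.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package_name: version string, or None if not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # The package is missing from the current environment.
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(["transformers", "torch"]).items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```

If either line reports NOT INSTALLED, rerun the pip command in the same environment that runs the script.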

Initialize the BART Model in Python

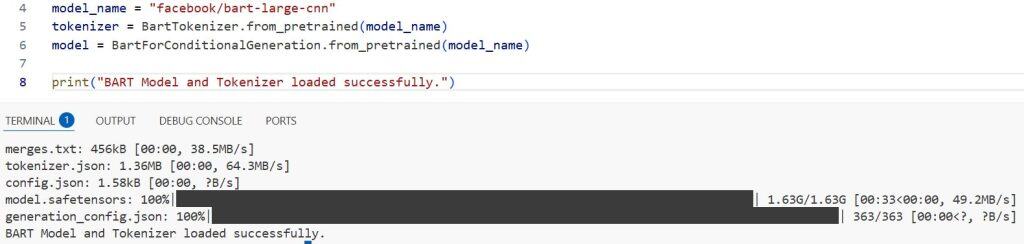

To get started, I initialize the tokenizer and the model using the facebook/bart-large-cnn checkpoint, which is specifically fine-tuned for summarization.

The tokenizer converts our English sentences into a format the neural network understands, while the model contains the pre-trained knowledge required to summarize text.

from transformers import BartTokenizer, BartForConditionalGeneration

# Loading the pre-trained BART model and tokenizer
model_name = "facebook/bart-large-cnn"
tokenizer = BartTokenizer.from_pretrained(model_name)
model = BartForConditionalGeneration.from_pretrained(model_name)

print("BART Model and Tokenizer loaded successfully.")

I executed the above example code and added the screenshot below.

I have found that using the large-cnn variant provides the best balance between processing speed and the grammatical quality of the generated summaries.

Prepare the Input Text for BART in Python

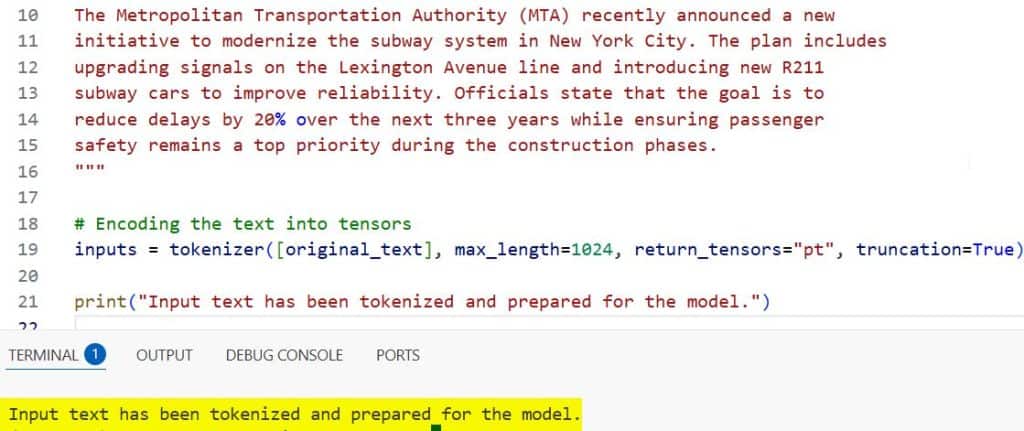

When working with real-world data, like a report on subway modernization in New York City, I ensure the text is properly cleaned and encoded before feeding it to the model.

This step involves truncating the text to fit within the model’s maximum input length (1,024 tokens for this checkpoint), which prevents errors during the inference stage when a document is too long.

# Example text: A summary of a public transportation report in New York City
original_text = """
The Metropolitan Transportation Authority (MTA) recently announced a new
initiative to modernize the subway system in New York City. The plan includes
upgrading signals on the Lexington Avenue line and introducing new R211
subway cars to improve reliability. Officials state that the goal is to
reduce delays by 20% over the next three years while ensuring passenger
safety remains a top priority during the construction phases.
"""

# Encoding the text into tensors
inputs = tokenizer([original_text], max_length=1024, return_tensors="pt", truncation=True)

print("Input text has been tokenized and prepared for the model.")

I executed the above example code and added the screenshot below.

I always set truncation=True so that an exceptionally long document is cut down to the limit instead of crashing the Python script. Keep in mind that anything beyond the limit is dropped and never seen by the model.
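When a document is longer than the limit and I do not want to lose its tail, one option is to split the token IDs into overlapping windows and summarize each window separately. The following is a rough sketch of that idea, not something the steps in this tutorial depend on. The helper chunk_token_ids is hypothetical, and I am assuming the IDs were produced without special tokens (for example with add_special_tokens=False), which is why the default window is 1022 rather than 1024.

```python
def chunk_token_ids(token_ids, max_len=1022, overlap=64):
    """Split a flat list of token IDs into windows of at most max_len tokens.

    Consecutive windows share `overlap` tokens, so text near a window
    boundary appears in both neighbouring windows.
    """
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must satisfy 0 <= overlap < max_len")

    step = max_len - overlap
    chunks = []
    for start in range(0, len(token_ids), step):
        chunks.append(token_ids[start:start + max_len])
        if start + max_len >= len(token_ids):
            break  # this window already reaches the end of the document
    return chunks


if __name__ == "__main__":
    # Tiny demonstration with integers standing in for real token IDs.
    for window in chunk_token_ids(list(range(10)), max_len=4, overlap=1):
        print(window)
```

Each window could then be decoded back to text and passed through the same summarization steps, with the partial summaries joined or summarized once more at the end.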

Generate the Summary with BART in Python

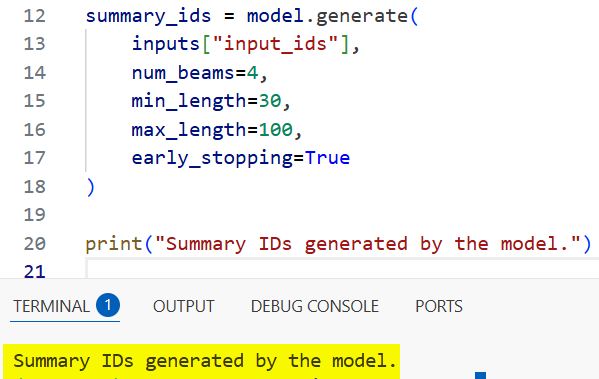

Now comes the exciting part where I instruct the model to generate the summary by tweaking parameters like num_beams, min_length, and max_length.

Using a “beam search” strategy, the model keeps several candidate word sequences alive at each step and returns the one with the highest overall score, rather than committing to the single most likely next word every time.

# Generating the summary output
summary_ids = model.generate(
    inputs["input_ids"],
    num_beams=4,
    min_length=30,
    max_length=100,
    early_stopping=True
)

print("Summary IDs generated by the model.")

I executed the above example code and added the screenshot below.

I typically use num_beams=4 because it offers a high-quality output without significantly increasing the computation time on my local machine.
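To make the idea behind num_beams more concrete, here is a toy beam search in plain Python. It is only an illustration under simplifying assumptions: the probability table is invented, the function beam_search is my own, and the real generate method also applies things like length penalties that this sketch ignores.

```python
import math


def beam_search(next_token_probs, start_token, end_token, num_beams=2, max_length=5):
    """Toy beam search over a hand-written next-token probability table.

    next_token_probs maps a token to {next_token: probability}.
    Returns the sequence with the highest cumulative log-probability.
    """
    beams = [([start_token], 0.0)]  # (sequence, cumulative log-probability)
    for _ in range(max_length):
        candidates = []
        for seq, score in beams:
            if seq[-1] == end_token:
                candidates.append((seq, score))  # finished beams carry over unchanged
                continue
            for token, prob in next_token_probs.get(seq[-1], {}).items():
                candidates.append((seq + [token], score + math.log(prob)))
        if not candidates:
            break
        # Keep only the num_beams highest-scoring sequences.
        beams = sorted(candidates, key=lambda c: c[1], reverse=True)[:num_beams]
        if all(seq[-1] == end_token for seq, _ in beams):
            break
    return beams[0][0]


# Invented probabilities: "the" looks best at first, but "a train" wins overall.
table = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"train": 0.55, "bus": 0.45},
    "a": {"train": 0.9, "bus": 0.1},
    "train": {"</s>": 1.0},
    "bus": {"</s>": 1.0},
}

if __name__ == "__main__":
    print(beam_search(table, "<s>", "</s>", num_beams=1))  # greedy choice
    print(beam_search(table, "<s>", "</s>", num_beams=2))  # two beams
```

With a single beam the search commits to "the" (0.6 × 0.55 = 0.33 overall), while two beams keep "a" alive long enough to find the more probable sequence (0.4 × 0.9 = 0.36). That is the trade-off behind the setting: more beams explore more alternatives at the cost of extra computation.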

Decode the Output in Python

The output from the model is a tensor of integer token IDs, so I use the tokenizer again to decode these back into a human-readable string.

I make sure to use skip_special_tokens=True so that the final result doesn’t contain any technical markers like <s> or </s>.

# Decoding the summary IDs back into text
summary = tokenizer.decode(summary_ids[0], skip_special_tokens=True)

print("Generated Summary:")
print(summary)

In my experience, this final string is usually ready for use in a production application or a daily briefing report immediately.

Implement Batch Summarization in Python

If you have a collection of documents, such as customer feedback from a California-based retail chain, you can process them one after another in a loop.

I create a dedicated function that takes a list of strings and returns a list of summaries, making the code reusable across different projects.

def summarize_batch(text_list):
    summaries = []
    for text in text_list:
        # Tokenize, generate, and decode one document at a time
        inputs = tokenizer([text], max_length=1024, return_tensors="pt", truncation=True)
        summary_ids = model.generate(inputs["input_ids"], num_beams=4, max_length=80)
        summaries.append(tokenizer.decode(summary_ids[0], skip_special_tokens=True))
    return summaries

# Example usage with multiple reports
reports = [
    "The Grand Canyon National Park is seeing record visitors this summer.",
    "Real estate prices in Austin, Texas continue to show steady growth."
]

results = summarize_batch(reports)

for i, res in enumerate(results):
    print(f"Summary {i+1}: {res}")

This modular approach is what I use when I need to scale my Python scripts to handle thousands of entries from a database.
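The loop above calls the model once per document. When throughput matters, a possible alternative is to tokenize several texts together with padding=True and call generate once per group. The sketch below shows one way this could look; the helper names (split_into_batches, make_bart_batch_summarizer, summarize_in_batches) and the batch size of 8 are my own assumptions, and the tokenizer and model are passed in rather than loaded here.

```python
def split_into_batches(items, batch_size):
    """Yield consecutive slices of `items`, each with at most batch_size elements."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def make_bart_batch_summarizer(tokenizer, model, num_beams=4, max_length=80):
    """Build a function that summarizes a list of texts with one generate() call."""
    def summarize(texts):
        # padding=True pads shorter texts so the batch forms one rectangular tensor;
        # the attention mask passed via **inputs tells the model to ignore the padding.
        inputs = tokenizer(texts, max_length=1024, truncation=True,
                           padding=True, return_tensors="pt")
        summary_ids = model.generate(**inputs, num_beams=num_beams, max_length=max_length)
        return tokenizer.batch_decode(summary_ids, skip_special_tokens=True)
    return summarize


def summarize_in_batches(texts, summarize_fn, batch_size=8):
    """Apply summarize_fn to each batch and return the summaries in input order."""
    summaries = []
    for batch in split_into_batches(texts, batch_size):
        summaries.extend(summarize_fn(batch))
    return summaries
```

With the tokenizer and model from earlier, usage would be along the lines of summarize_in_batches(reports, make_bart_batch_summarizer(tokenizer, model)). The right batch size depends on available memory, so I would start small and increase it gradually.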

Save the Summarization Results in Python

Finally, I like to save the output to a text file so that it can be shared with team members who may not be comfortable running Python code.

Using a simple file handling context manager ensures that the data is written safely and the file is closed properly after the operation.

# Writing the summary to a local file
output_file = "summary_report.txt"

# An explicit encoding avoids platform-dependent defaults
with open(output_file, "w", encoding="utf-8") as f:
    f.write("BART Generated Summary:\n")
    f.write(summary)

print(f"Summary saved successfully to {output_file}")

Writing results to a file is a standard practice in my workflow to keep a history of all the AI-generated insights for future reference.
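Because opening a file in "w" mode overwrites it on every run, keeping an actual history needs an append-style format. One possibility, sketched here with only the standard library, is a JSON Lines file with one record per summary. The function names and the record fields (created_at, source, summary) are illustrative choices of mine, not a fixed convention.

```python
import json
from datetime import datetime, timezone


def append_summaries(path, sources, summaries):
    """Append one JSON object per summary to a JSON Lines file."""
    if len(sources) != len(summaries):
        raise ValueError("sources and summaries must have the same length")
    timestamp = datetime.now(timezone.utc).isoformat()
    # Mode "a" adds to the end of the file instead of overwriting it.
    with open(path, "a", encoding="utf-8") as f:
        for source, summary in zip(sources, summaries):
            record = {"created_at": timestamp, "source": source, "summary": summary}
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def load_summaries(path):
    """Read the history file back into a list of dictionaries."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Calling append_summaries("summary_history.jsonl", reports, results) after each run would grow the file over time, and load_summaries returns the full history for a dashboard or report.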

In this tutorial, I showed you how to use BART for abstractive text summarization. This is a powerful way to handle large amounts of text data efficiently.

I use this method whenever I need to create quick summaries for my reports or dashboards. It saves a lot of time and ensures the summaries are coherent.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.