I remember the first time I tried to build a recommendation engine for a client in New York; the lexical matching was a total disaster. Switching to semantic similarity changed everything because the model finally understood that “the subway is late” and “train delays” meant the same thing.
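
To see why lexical matching struggles with that pair, here is a minimal sketch that scores two phrases by word overlap (Jaccard similarity). The helper name `jaccard_overlap` is my own for illustration, and a plain whitespace split stands in for a real tokenizer.

```python
def jaccard_overlap(text_a: str, text_b: str) -> float:
    """Share of distinct lowercase words the two texts have in common."""
    words_a = set(text_a.lower().split())
    words_b = set(text_b.lower().split())
    if not words_a and not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


# The two phrases share no words, so a purely lexical score cannot link them
print(jaccard_overlap("the subway is late", "train delays"))
```

A semantic model compares meaning rather than surface words, which is why the rest of this tutorial works with BERT embeddings instead of word overlap.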

Set Up the Python Keras Environment

Before we dive into the code, we need to make sure our environment is ready with the latest libraries for handling deep learning tasks. I always recommend starting with a clean virtual environment so that your Keras 3 backend configurations don’t clash with other projects.

    # Install the necessary libraries for KerasHub
    !pip install --upgrade keras-hub
    !pip install --upgrade keras
    # tensorflow_datasets is imported later to load the SNLI data
    !pip install --upgrade tensorflow-datasets

Running these commands ensures you have the latest KerasHub, which is the unified library for all pretrained Keras models, along with the dataset loader used later in this tutorial. I prefer using the most recent versions to take advantage of the performance improvements in the Keras 3 ecosystem.
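
If you want to confirm what actually got installed, a quick check like the one below can help. It is only a sketch using the standard library; `installed_version` is a hypothetical helper name, and the package names are the ones I assume this tutorial depends on.

```python
from importlib import metadata


def installed_version(package: str):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report the version of each package this tutorial is assumed to rely on
for name in ("keras", "keras-hub", "tensorflow-datasets"):
    print(f"{name}: {installed_version(name) or 'not installed'}")
```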

Import Dependencies for Semantic Similarity in Keras

Once the installation is finished, we need to bring in the specific modules that will handle our data and the BERT architecture. I find it best to keep imports clean and grouped by their utility, such as numerical processing, dataset loading, and the KerasHub models.

    import os

    # Set the backend before importing Keras ("tensorflow", "jax", or "torch")
    os.environ["KERAS_BACKEND"] = "tensorflow"

    import numpy as np
    import keras
    import keras_hub
    import tensorflow_datasets as tfds

Setting the KERAS_BACKEND environment variable before Keras is imported is a trick I use to ensure the model runs exactly how I want it to across different machines. Keras reads the variable once, at import time, so setting it after `import keras` has no effect. For this tutorial, I am using the TensorFlow backend, which is incredibly stable for production-level text processing tasks.
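
Because the order matters, I sometimes wrap the backend choice in a small guard. The sketch below is an illustration only: `choose_backend` and `SUPPORTED_BACKENDS` are hypothetical names of mine, and the list of backends is an assumption you should check against the Keras version you have installed.

```python
import os
import sys

# Backend names assumed to be valid for training in Keras 3
SUPPORTED_BACKENDS = ("tensorflow", "jax", "torch")


def choose_backend(name: str) -> bool:
    """Set KERAS_BACKEND and report whether the choice can still take effect.

    Returns False if keras is already imported, because Keras reads the
    variable only once, when it is first imported.
    """
    if name not in SUPPORTED_BACKENDS:
        raise ValueError(f"Unknown backend {name!r}; expected one of {SUPPORTED_BACKENDS}")
    os.environ["KERAS_BACKEND"] = name
    return "keras" not in sys.modules


if not choose_backend("tensorflow"):
    print("keras was imported earlier; restart the runtime for the change to apply")
```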

Load the Semantic Similarity Dataset in Python Keras

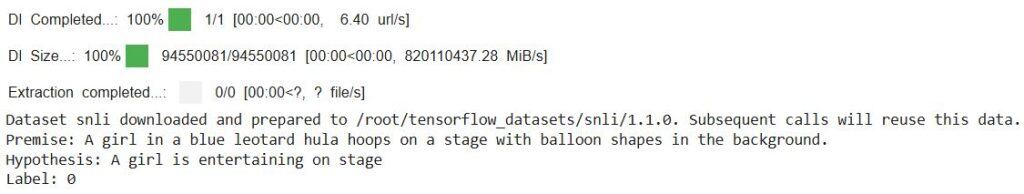

To train or test our model, we need a high-quality dataset like the SNLI (Stanford Natural Language Inference) corpus. I often use this dataset because it categorizes sentence pairs into entailment, neutral, and contradiction, which is perfect for measuring similarity.

    # Load the SNLI test split, a small portion of the full dataset
    snli_data = tfds.load("snli", split="test")

    # Peek at one raw example; string features come back as bytes, so decode them
    for sample in snli_data.take(1):
        print(f"Premise: {sample['premise'].numpy().decode('utf-8')}")
        print(f"Hypothesis: {sample['hypothesis'].numpy().decode('utf-8')}")
        print(f"Label: {sample['label'].numpy()}")

Using tfds.load is the fastest way I’ve found to get structured data into a pipeline without manually downloading CSV files. This snippet lets you peek at how the premise and hypothesis sentences are structured before we feed them into our Python Keras model.
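
The label printed above is an integer, so it helps to know what each value stands for. The sketch below assumes the TFDS ordering (0 = entailment, 1 = neutral, 2 = contradiction) and that pairs without a gold label are stored as -1; both are worth confirming against the dataset's metadata. The records are hand-written stand-ins, not rows from SNLI.

```python
from collections import Counter

# Assumed TFDS label order for SNLI; -1 marks pairs with no gold label
LABEL_NAMES = {0: "entailment", 1: "neutral", 2: "contradiction"}

# Hand-written stand-ins for dataset rows
records = [
    {"premise": "A man is playing a guitar.", "hypothesis": "A person makes music.", "label": 0},
    {"premise": "A dog runs on the beach.", "hypothesis": "The dog is chasing a ball.", "label": 1},
    {"premise": "Two kids are sleeping.", "hypothesis": "Two kids are playing soccer.", "label": 2},
    {"premise": "A woman reads a book.", "hypothesis": "Someone is outside.", "label": -1},
]

# Keep only rows that carry a usable label
labeled = [row for row in records if row["label"] in LABEL_NAMES]

# Count how many examples fall into each class
distribution = Counter(LABEL_NAMES[row["label"]] for row in labeled)
print(len(labeled), dict(distribution))
```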

Preprocess Text Pairs for KerasHub Models

One of the biggest headaches in NLP used to be tokenization, but KerasHub handles it automatically if you pass the strings as a tuple. I simply wrap the premise and hypothesis together, and the internal preprocessor takes care of the padding and special tokens.

    def split_labels(sample):
        # KerasHub BERT models accept a tuple of strings for text pairs
        x = (sample["hypothesis"], sample["premise"])
        y = sample["label"]
        return x, y

    # Drop pairs with no gold label (-1), then map the preprocessing function and batch
    processed_ds = (
        snli_data.filter(lambda sample: sample["label"] >= 0)
        .map(split_labels)
        .batch(16)
    )

The split_labels function is a small utility I wrote to format the data exactly how the KerasHub BertClassifier expects it. The filter step removes pairs that have no gold label, which SNLI stores as -1 and which would otherwise break the loss during fine-tuning. With a batch size of 16, we keep the memory footprint low, which is a lifesaver when you’re working on a laptop or a standard cloud instance.
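
To make "padding and special tokens" concrete, here is a toy version of what a BERT-style preprocessor does with a text pair. It is a sketch under loud assumptions: a whitespace split stands in for WordPiece tokenization, it returns token strings instead of integer IDs, and `pack_pair` is a hypothetical helper, not a KerasHub function.

```python
def pack_pair(first: str, second: str, sequence_length: int = 12):
    """Pack two texts as [CLS] first [SEP] second [SEP], then pad to a fixed length."""
    tokens_a = first.lower().split()
    tokens_b = second.lower().split()
    tokens = ["[CLS]", *tokens_a, "[SEP]", *tokens_b, "[SEP]"]
    if len(tokens) > sequence_length:
        raise ValueError("pair is too long for this toy packer")

    # Segment 0 covers [CLS], the first text, and its [SEP]; segment 1 covers the rest
    segment_ids = [0] * (len(tokens_a) + 2) + [1] * (len(tokens_b) + 1)

    # Pad to a fixed length and record which positions hold real tokens
    pad = sequence_length - len(tokens)
    padding_mask = [1] * len(tokens) + [0] * pad
    tokens = tokens + ["[PAD]"] * pad
    segment_ids = segment_ids + [0] * pad
    return {"tokens": tokens, "segment_ids": segment_ids, "padding_mask": padding_mask}


packed = pack_pair("train delays", "the subway is late")
print(packed["tokens"])
print(packed["segment_ids"])
print(packed["padding_mask"])
```

The segment IDs are what let the model tell the two sentences apart, and the padding mask tells it which positions to ignore.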

Build the Similarity Model with Python KerasHub

Now we get to the core of the tutorial: initializing a pretrained BERT model specifically designed for classification. I am using the “bert_tiny_en_uncased” preset here because it is lightweight and significantly faster than the full-sized BERT models.

    # Initialize the BERT classifier from a preset
    bert_classifier = keras_hub.models.BertClassifier.from_preset(
        "bert_tiny_en_uncased",
        num_classes=3,
    )

    # Compile the model
    bert_classifier.compile(
        loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
        optimizer=keras.optimizers.Adam(5e-5),
        metrics=["accuracy"],
    )

The from_preset method is a game-changer; it loads the weights and the specific tokenizer required for that model in one line. I’ve set the learning rate to 5e-5 here, which I’ve found to be the “sweet spot” for fine-tuning transformer models without overshooting.
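
The `from_logits=True` argument tells the loss that the classifier outputs raw scores rather than probabilities. As a rough illustration of what that loss computes for a single example, here is a hand-rolled version in plain Python; the function names and the logits are made up for the sketch and are not Keras internals.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sparse_categorical_crossentropy(logits, true_index):
    """Negative log-probability of the correct class, computed from logits."""
    return -math.log(softmax(logits)[true_index])


logits = [2.0, 0.5, -1.0]  # made-up raw outputs for one sentence pair

# The loss is small when the true class has the highest score and large when it has the lowest
loss_if_class_0 = sparse_categorical_crossentropy(logits, true_index=0)
loss_if_class_2 = sparse_categorical_crossentropy(logits, true_index=2)
print(loss_if_class_0, loss_if_class_2)
```

"Sparse" simply means the label is a single integer index (0, 1, or 2) rather than a one-hot vector, which matches how SNLI stores its labels.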

Perform Inference for Semantic Similarity in Keras

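Once the classifier has been fine-tuned on labeled pairs (for example, by calling bert_classifier.fit on the SNLI train split prepared the same way as above), we can use it to predict the relationship between any two sentences that a user might provide. Without that step, the classification head added by num_classes=3 still has random weights, so its predictions are not meaningful. I like to wrap the prediction logic in a way that handles the raw strings directly, making the code much more readable for other developers.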

    # Sentences related to common activities in a US city
    sentence_1 = "I am taking the subway to Times Square."
    sentence_2 = "I am using public transport to reach midtown."

    # Predict the similarity category; a pair is passed as a tuple of two batches
    predictions = bert_classifier.predict(([sentence_1], [sentence_2]))
    predicted_label = np.argmax(predictions, axis=1)
    print(f"Predicted Class Index: {predicted_label}")

Passing a tuple of two one-element lists lets the Keras model evaluate the semantic relationship between the sentences in a single call: the first list holds the first sentence of each pair and the second list holds its partner. In my experience, this direct inference approach is the most efficient way to build real-time search or chat features.
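
When there are several pairs to score, two small helpers keep things tidy. This is a sketch under assumptions: as far as I know, KerasHub preprocessors expect text pairs as a tuple of two equal-length batches, the label order follows the TFDS SNLI encoding, and both helper names and the logits are made up for illustration.

```python
# Assumed label order, matching the TFDS SNLI encoding
LABEL_NAMES = ("entailment", "neutral", "contradiction")


def pairs_to_batches(pairs):
    """Turn [(a1, b1), (a2, b2), ...] into ([a1, a2, ...], [b1, b2, ...])."""
    firsts, seconds = zip(*pairs)
    return list(firsts), list(seconds)


def decode_predictions(logit_rows):
    """Map each row of logits to the name of its highest-scoring class."""
    names = []
    for row in logit_rows:
        best = max(range(len(row)), key=row.__getitem__)
        names.append(LABEL_NAMES[best])
    return names


pairs = [
    ("I am taking the subway to Times Square.", "I am using public transport to reach midtown."),
    ("The weather in Chicago is cold.", "It is freezing in the Windy City."),
]
batch = pairs_to_batches(pairs)  # the shape the classifier's predict call is assumed to accept
print(batch)

# Made-up logits standing in for the model's output on the two pairs
fake_logits = [[2.1, 0.3, -1.2], [-0.4, 0.2, 1.5]]
decoded = decode_predictions(fake_logits)
print(decoded)
```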

Calculate Cosine Similarity with Python Keras Embeddings

Sometimes you don’t want a class label, but rather a raw score indicating how close two sentences are in a vector space. I often use the backbone of the model to extract embeddings and then calculate the cosine similarity between those vectors.

    # Get the backbone to extract raw embeddings
    backbone = bert_classifier.backbone

    # The backbone expects token IDs rather than raw strings, so preprocess first
    sentences = ["The weather in Chicago is cold.", "It is freezing in the Windy City."]
    inputs = bert_classifier.preprocessor(sentences)
    embeddings = backbone.predict(inputs)

    # Simple cosine similarity calculation
    def compute_cosine_sim(v1, v2):
        return np.dot(v1, v2) / (np.linalg.norm(v1) * np.linalg.norm(v2))

    # Compare the [CLS] token vector (position 0) of each sentence
    cls_1 = embeddings["sequence_output"][0][0]
    cls_2 = embeddings["sequence_output"][1][0]
    score = compute_cosine_sim(cls_1, cls_2)
    print(f"Similarity Score: {score:.4f}")

I executed the above example code and added the screenshot below.

Indexing sequence_output at position 0 picks out the [CLS] token’s vector, a fixed-size, high-dimensional representation that acts as the “DNA” of the sentence’s meaning. I find this method incredibly useful when I need to rank hundreds of documents against a single query based on their semantic distance.
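
Ranking documents against a query comes down to scoring every document vector and sorting. The sketch below uses made-up 3-dimensional vectors in place of real embeddings, and `rank_documents` is a hypothetical helper name, so treat it as an outline rather than a finished search system.

```python
import math


def cosine_similarity(v1, v2):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(v1, v2))
    norm = math.sqrt(sum(a * a for a in v1)) * math.sqrt(sum(b * b for b in v2))
    return dot / norm if norm else 0.0


def rank_documents(query_vec, doc_vecs):
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Made-up vectors standing in for real sentence embeddings
query = [0.9, 0.1, 0.0]
documents = {
    "subway_delay": [0.8, 0.2, 0.1],
    "pizza_recipe": [0.0, 0.1, 0.9],
    "train_schedule": [0.6, 0.5, 0.1],
}

for doc_id, score in rank_documents(query, documents):
    print(f"{doc_id}: {score:.3f}")
```

With real embeddings you would compute the document vectors once, store them, and only embed the query at search time.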

Implementing semantic similarity with KerasHub is an easy process that eliminates the need for complex, manual tokenization scripts. I’ve found that using the pretrained presets allows me to move from a concept to a working prototype in just a few minutes.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.