If you have ever tried to compare two sentences for similarity, you know that a simple keyword match usually fails. It doesn’t capture the actual meaning behind the words.
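To make that failure concrete, here is a minimal sketch that scores two paraphrases by plain word overlap. The helper name keyword_overlap and the choice of Jaccard overlap are my own illustration, not part of any library.

```python
import re

def keyword_overlap(a: str, b: str) -> float:
    """Jaccard overlap between the lowercase word sets of two sentences."""
    words_a = set(re.findall(r"[a-z']+", a.lower()))
    words_b = set(re.findall(r"[a-z']+", b.lower()))
    return len(words_a & words_b) / len(words_a | words_b)

sentence1 = "The quarterly earnings report exceeded Wall Street expectations."
sentence2 = "The company's financial results were better than what analysts predicted."

# The two sentences mean nearly the same thing but share only the word "the".
overlap = keyword_overlap(sentence1, sentence2)
print(f"Keyword overlap: {overlap:.3f}")
```

The score is close to zero even though a human reader would call these sentences paraphrases, which is the gap sentence embeddings are meant to close.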

In my four years of developing Python Keras models, I’ve found that Siamese RoBERTa-networks are the most reliable way to generate deep, meaningful sentence embeddings.

Set Up the Python Keras Environment for RoBERTa

Before we dive into the architecture, we need to install the libraries that load the RoBERTa weights and provide the Siamese structure. I prefer the sentence-transformers library for this. Note that it runs on PyTorch and Hugging Face transformers, so tensorflow is only needed if you later feed the embeddings into a Keras model.

# Install necessary libraries

!pip install sentence-transformers transformers tensorflow

The leading ! runs the command from a notebook cell; drop it when installing from a terminal. With these packages in place, sentence-transformers can download the pre-trained RoBERTa weights from the Hugging Face Hub, which is the model we load for our Siamese network.

Build the Siamese Architecture in Python Keras

A Siamese network consists of two identical sub-networks that share the same weights. We feed one sentence into the first RoBERTa branch and the second sentence into the other branch.

from sentence_transformers import SentenceTransformer, util

# Load the pre-trained Siamese RoBERTa model

model = SentenceTransformer('all-distilroberta-v1')

# Define sentences representing common US business scenarios

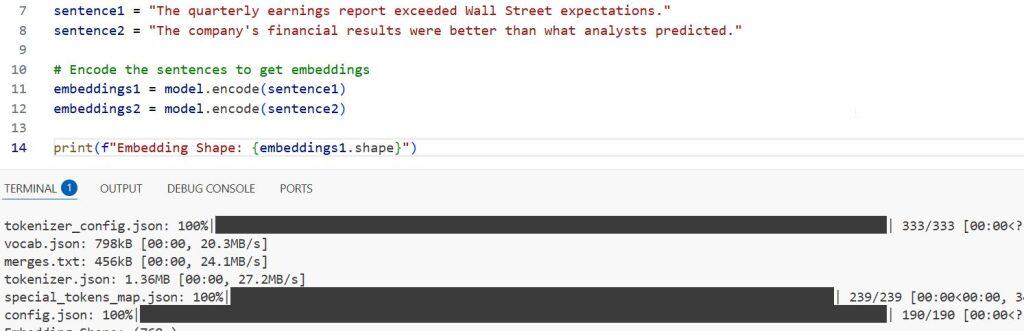

sentence1 = "The quarterly earnings report exceeded Wall Street expectations."

sentence2 = "The company's financial results were better than what analysts predicted."

# Encode the sentences to get embeddings

embeddings1 = model.encode(sentence1)

embeddings2 = model.encode(sentence2)

print(f"Embedding Shape: {embeddings1.shape}")You can see the output in the screenshot below.

In this workflow, the same SentenceTransformer model, and therefore the same RoBERTa weights, encodes both inputs, so the two embeddings live in the same vector space. This weight sharing is what makes a similarity score between the two distinct inputs meaningful.
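The weight-sharing idea can be sketched without any deep learning library. The ToyEncoder class below is a hypothetical stand-in that I made up for illustration: it mean-pools one fixed vector per word, whereas a real RoBERTa encoder is contextual and far larger. The point is only that both branches call the same encoder object.

```python
import random

class ToyEncoder:
    """Hypothetical stand-in for a sentence encoder (not a real model)."""

    def __init__(self, dim=8):
        self.dim = dim

    def _word_vector(self, word):
        # Seeding with the word gives one deterministic "weight" vector per word.
        rng = random.Random(word)
        return [rng.uniform(-1.0, 1.0) for _ in range(self.dim)]

    def encode(self, sentence):
        vectors = [self._word_vector(w) for w in sentence.lower().split()]
        # Mean pooling: average each dimension across all word vectors.
        return [sum(column) / len(vectors) for column in zip(*vectors)]

encoder = ToyEncoder(dim=8)  # one set of weights
branch_a = encoder.encode    # first branch of the Siamese pair
branch_b = encoder.encode    # second branch reuses the same weights

emb_a = branch_a("earnings beat expectations")
emb_b = branch_b("results were better than predicted")
print(len(emb_a), len(emb_b))
```

Because both branches are the same function, identical text always maps to the identical vector, and every embedding has the same dimensionality regardless of sentence length.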

Calculate Cosine Similarity with Python Keras Embeddings

Once we have the fixed-size vectors (embeddings) from the Siamese RoBERTa-network, we need a mathematical way to measure how close they are. I typically use Cosine Similarity because it measures the angle between vectors rather than their magnitude.
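To see why the angle matters more than the magnitude, here is a small from-scratch sketch of cosine similarity. The function name and the toy vectors are my own; in the tutorial code the library helper does this work.

```python
import math

def cosine_similarity(u, v):
    """Dot product of u and v divided by the product of their lengths."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

u = [1.0, 2.0, 3.0]
scaled_u = [10.0 * x for x in u]   # same direction, ten times longer
orthogonal = [3.0, 0.0, -1.0]      # dot product with u is 3 + 0 - 3 = 0

same_direction = cosine_similarity(u, scaled_u)
right_angle = cosine_similarity(u, orthogonal)
print(f"{same_direction:.4f} {right_angle:.4f}")
```

Scaling a vector leaves the score at 1, while a perpendicular vector scores 0, so the measure depends only on direction.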

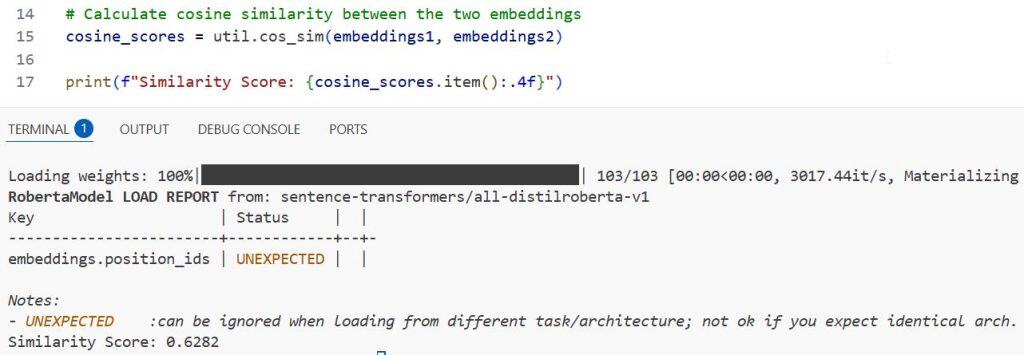

# Calculate cosine similarity between the two embeddings

cosine_scores = util.cos_sim(embeddings1, embeddings2)

print(f"Similarity Score: {cosine_scores.item():.4f}")You can see the output in the screenshot below.

I have found that this method works well for identifying paraphrases in large datasets. Because each document is encoded only once, we can compare thousands of documents with cheap vector operations and keep those with the most relevant content.

Use Python Keras for Semantic Search in Local Datasets

You can use Siamese RoBERTa-networks to build a basic semantic search engine. Instead of looking for specific words, the search compares the “intent” of the query against a list of potential matches.

# A list of sentences common in US real estate descriptions

corpus = [

"A cozy two-bedroom apartment in downtown Chicago.",

"Luxury villa with a private pool in Miami Beach.",

"Spacious family home near top-rated schools in Austin.",

"Modern loft located in the heart of New York City."

]

# Query sentence

query = "I am looking for a house in Texas for my family."

# Compute embeddings for the query and the corpus

query_embedding = model.encode(query)

corpus_embeddings = model.encode(corpus)

# Find the most similar sentence in the corpus

hits = util.semantic_search(query_embedding, corpus_embeddings, top_k=1)

print(f"Best Match: {corpus[hits[0][0]['corpus_id']]}")

This approach handles variations in language, such as matching “house in Texas” with “family home in Austin.” A match like this suggests that the embeddings capture geographical and contextual relationships, not just shared words.
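Under the hood, a search like this amounts to scoring the query against every corpus vector and keeping the best few. The sketch below is my own simplified version for a single query, using hand-picked 2D vectors instead of real embeddings. The top_k_search helper is hypothetical; it only mirrors the corpus_id and score keys that appear in the library's results.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def top_k_search(query_vec, corpus_vecs, top_k=1):
    """Rank corpus vectors by cosine similarity to the query (single query only)."""
    scored = [
        {"corpus_id": i, "score": cosine(query_vec, vec)}
        for i, vec in enumerate(corpus_vecs)
    ]
    scored.sort(key=lambda hit: hit["score"], reverse=True)
    return scored[:top_k]

toy_corpus = [[1.0, 0.0], [0.6, 0.8], [0.0, 1.0]]  # stand-ins for sentence embeddings
toy_query = [0.5, 0.9]

toy_hits = top_k_search(toy_query, toy_corpus, top_k=2)
for hit in toy_hits:
    print(hit["corpus_id"], round(hit["score"], 3))
```

A brute-force scan like this is fine for small corpora. For millions of vectors you would normally move to an approximate nearest-neighbor index.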

Fine-Tuning RoBERTa Weights in Python Keras

Sometimes the pre-trained weights aren’t enough for specific industry jargon, like legal or medical terms. In these cases, I fine-tune the Siamese network with sentence-transformers on a labeled dataset of sentence pairs, where each label is the target similarity for that pair.

from sentence_transformers import InputExample, losses

from torch.utils.data import DataLoader

# Create training examples (Sentence A, Sentence B, Similarity Score)

train_examples = [

InputExample(texts=['The stock market is bullish', 'Investors are optimistic about shares'], label=0.9),

InputExample(texts=['It is raining in Seattle', 'The weather is clear in Los Angeles'], label=0.1)

]

# Define a data loader and a loss function

train_dataloader = DataLoader(train_examples, shuffle=True, batch_size=2)

train_loss = losses.CosineSimilarityLoss(model)

# Tune the model

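model.fit(train_objectives=[(train_dataloader, train_loss)], epochs=1, warmup_steps=10)

Fine-tuning allows the model to adjust its internal representations to better suit your specific data distribution. The two pairs above only demonstrate the API; a useful fine-tune needs many labeled pairs. On sentence-transformers 3.0 or later, model.fit is kept for backward compatibility, and SentenceTransformerTrainer is the recommended interface. This step is often the difference between a generic model and a production-ready solution that delivers high precision.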
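It helps to know what the loss is measuring. As I understand its default configuration, CosineSimilarityLoss takes the cosine similarity of the two sentence embeddings and penalizes its squared distance from the label. The sketch below reproduces that arithmetic on hand-picked vectors; the function name and the data are mine, not the library's.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def cosine_similarity_loss(pairs, labels):
    """Mean squared error between cosine(u, v) and the target label."""
    errors = [(cosine(u, v) - label) ** 2 for (u, v), label in zip(pairs, labels)]
    return sum(errors) / len(errors)

toy_pairs = [
    ([1.0, 0.0], [1.0, 0.0]),  # identical direction, cosine = 1.0
    ([1.0, 0.0], [0.0, 1.0]),  # perpendicular, cosine = 0.0
]
toy_labels = [0.9, 0.1]        # same label values as the training examples above

loss = cosine_similarity_loss(toy_pairs, toy_labels)
print(f"Loss: {loss:.4f}")
```

Each pair here misses its label by 0.1, so the loss is small. During training, the optimizer moves the encoder weights to shrink this error across the whole dataset.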

Handle Batch Processing for Large Python Keras Datasets

When dealing with millions of sentences, processing them one by one is inefficient. I always pass the whole list to model.encode with an explicit batch size to take full advantage of GPU acceleration and speed up embedding generation.

# Large list of sentences (e.g., customer support tickets)

tickets = ["How do I reset my password?", "Where is my refund?", "I cannot log in."] * 100

# Encode using a larger batch size for speed

batch_embeddings = model.encode(tickets, batch_size=32, show_progress_bar=True)

print(f"Processed {len(batch_embeddings)} tickets into embeddings.")

By adjusting the batch size, you trade memory for throughput: larger batches usually run faster on a GPU but need more memory. Tuning this is a crucial skill for any developer looking to deploy Siamese RoBERTa-networks in a scalable cloud environment.
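If you want a feel for what the batch size controls, the chunking itself is simple. This is a rough sketch of the idea only, with a hypothetical iter_batches helper; the library handles batching internally, so you do not need to write this yourself.

```python
def iter_batches(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]

sample_tickets = ["How do I reset my password?", "Where is my refund?", "I cannot log in."] * 100

batches = list(iter_batches(sample_tickets, batch_size=32))
batch_sizes = [len(batch) for batch in batches]
print(f"{len(batches)} batches, last batch has {batch_sizes[-1]} tickets")
```

With 300 tickets and a batch size of 32, the model runs ten forward passes instead of 300, and only the last batch is partially filled.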

Visualize Embeddings with Python Keras and PCA

It is often helpful to see how your sentences are clustered in a 2D space. I use Principal Component Analysis (PCA) to reduce the high-dimensional RoBERTa embeddings into a lower-dimensional representation we can plot.

import matplotlib.pyplot as plt

from sklearn.decomposition import PCA

# Reduce dimensions to 2D

pca = PCA(n_components=2)

reduced_embeddings = pca.fit_transform(corpus_embeddings)

# Plot the results

plt.scatter(reduced_embeddings[:, 0], reduced_embeddings[:, 1])

for i, txt in enumerate(corpus):
    plt.annotate(txt[:20], (reduced_embeddings[i, 0], reduced_embeddings[i, 1]))

plt.show()

Visualizing your data this way helps you verify whether the model is actually grouping similar topics. It provides a quick “sanity check” before you move your Siamese network into a production pipeline.
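For readers curious about what the PCA step does to the vectors, here is a minimal NumPy sketch: center the data, take the singular value decomposition, and project onto the top two directions. The pca_2d function and the random stand-in embeddings are my own assumptions for illustration. The signs of the components may differ from what scikit-learn returns, which does not affect the clustering you see.

```python
import numpy as np

def pca_2d(embeddings):
    """Project rows onto their top two principal directions via SVD."""
    X = np.asarray(embeddings, dtype=float)
    centered = X - X.mean(axis=0)
    # Rows of vt are principal directions, ordered by explained variance.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    projected = centered @ vt[:2].T
    explained = singular_values ** 2 / np.sum(singular_values ** 2)
    return projected, explained[:2]

rng = np.random.default_rng(0)
fake_embeddings = rng.normal(size=(4, 16))  # stand-in for four sentence embeddings

points, explained_ratio = pca_2d(fake_embeddings)
print(points.shape, explained_ratio.round(3))
```

The explained-variance ratios tell you how much of the original spread the 2D picture keeps. If they are low, treat the plot as a rough impression rather than a faithful map.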

In this tutorial, I showed you how to use Siamese RoBERTa-networks to create high-quality sentence embeddings. We covered basic similarity scores, semantic search, fine-tuning, batch encoding, and visualization with PCA.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.