I have spent years building deep learning models, and one of the most fascinating challenges is teaching a machine to understand sequences. Whether it is translating languages or predicting stock trends, Sequence-to-Sequence (Seq2Seq) models sit behind many modern AI applications.

Addition might seem simple for a calculator, but for a neural network, it is a great way to learn how to map an input sequence to an output sequence. In this tutorial, I will show you how to build a Keras model that reads a string like “53+42” and predicts “95” as the result.

Set Up Your Environment for Keras Seq2Seq Projects

Before we dive into the architecture, you need to ensure your Python environment is ready with the latest deep learning libraries. I always recommend using a virtual environment to manage your Keras and TensorFlow dependencies without any version conflicts.

import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Checking the version to ensure compatibility
print(f"Keras version: {keras.__version__}")

Having the right tools is the first step toward building a successful model that can handle complex character-based sequences. I’ve found that using the latest version of TensorFlow ensures you have access to optimized layers for recurrent neural networks.

Generate Synthetic Addition Datasets for Keras Training

We cannot rely on external datasets for every task, so I often generate synthetic data when training models for mathematical operations. This involves creating thousands of addition strings and padding them so they all have the same length for the neural network.

def generate_data(num_samples=10000, digits=3):
    questions = []
    results = []
    seen = set()
    while len(questions) < num_samples:
        # Draw a random integer with 1 to `digits` digits
        f = lambda: int(''.join(np.random.choice(list('0123456789')) for i in range(np.random.randint(1, digits + 1))))
        a, b = f(), f()
        # Skip duplicates; a+b and b+a count as the same problem
        key = tuple(sorted((a, b)))
        if key in seen:
            continue
        seen.add(key)
        # Pad the question to the longest possible length, e.g. '999+999'
        q = f'{a}+{b}'
        query = q + ' ' * (digits * 2 + 1 - len(q))
        # Pad the answer to digits + 1 characters, e.g. '1998'
        ans = str(a + b)
        ans += ' ' * (digits + 1 - len(ans))
        questions.append(query)
        results.append(ans)
    return questions, results

questions, results = generate_data()
print(f"Sample Question: '{questions[0]}' | Sample Answer: '{results[0]}'")

A diverse set of numbers pushes the model to learn the underlying logic of addition rather than memorize a few specific examples. I pad with spaces so that every input has the same shape, which standard Keras layers require.
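The padding widths follow from the longest possible strings: two operands of up to `digits` characters plus the `+` sign for the question, and at most `digits + 1` characters for the sum. Here is a minimal sketch of that arithmetic, where `pad_problem` is a hypothetical helper and not part of the tutorial code:

```python
def pad_problem(a, b, digits=3):
    """Return (question, answer) padded to fixed widths with trailing spaces."""
    max_q_len = digits * 2 + 1   # e.g. '999+999' -> 7 characters
    max_a_len = digits + 1       # e.g. '1998'    -> 4 characters
    question = f"{a}+{b}".ljust(max_q_len)
    answer = str(a + b).ljust(max_a_len)
    return question, answer

q, ans = pad_problem(53, 42)
print(repr(q), repr(ans))   # '53+42  ' '95  '
```

These two widths, 7 and 4, are the same numbers that appear later as the input and output sequence lengths of the model.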

Vectorize Text Data for Sequence-to-Sequence Keras Models

Neural networks do not understand strings directly, so I use a character table to map every possible digit and symbol to an integer. This process turns our math problems into one-hot encoded tensors that the Keras LSTM layers can process efficiently.

class CharacterTable:
    def __init__(self, chars):
        self.chars = sorted(list(set(chars)))
        self.char_indices = dict((c, i) for i, c in enumerate(self.chars))
        self.indices_char = dict((i, c) for i, c in enumerate(self.chars))

    def encode(self, C, num_rows):
        # One-hot encode string C into a (num_rows, vocab_size) array
        x = np.zeros((num_rows, len(self.chars)))
        for i, c in enumerate(C):
            x[i, self.char_indices[c]] = 1
        return x

    def decode(self, x, calc_argmax=True):
        # Turn one-hot rows (or probability rows) back into a string
        if calc_argmax:
            x = x.argmax(axis=-1)
        return "".join(self.indices_char[i] for i in x)

chars = "0123456789+ "
ctable = CharacterTable(chars)

This vectorization step is crucial because it transforms our human-readable strings into a format that captures the identity of each character. Using a dedicated class for this mapping makes it much easier to decode the model’s predictions back into numbers later.
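To see what the encoding produces, here is a small standalone sketch of the same one-hot idea with a round trip back to text. The function names `one_hot` and `to_text` are illustrative stand-ins, not the class methods above:

```python
import numpy as np

chars = sorted(set("0123456789+ "))            # 12 symbols, space sorts first
char_to_idx = {c: i for i, c in enumerate(chars)}

def one_hot(text, num_rows):
    """Encode text as a (num_rows, vocab_size) one-hot matrix."""
    x = np.zeros((num_rows, len(chars)))
    for row, ch in enumerate(text):
        x[row, char_to_idx[ch]] = 1
    return x

def to_text(matrix):
    """Invert one_hot by taking the argmax of every row."""
    return "".join(chars[i] for i in matrix.argmax(axis=-1))

encoded = one_hot("53+42  ", 7)
print(encoded.shape)            # (7, 12)
print(repr(to_text(encoded)))   # '53+42  '
```

Each row holds a single 1 marking which of the 12 characters sits at that position, which is why a padded question becomes a 7 by 12 matrix.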

Build the Encoder-Decoder Architecture in Keras

The Seq2Seq model consists of an Encoder that compresses the input math problem and a Decoder that generates the answer. I use an LSTM layer for the Encoder to capture the temporal relationship between the digits and the plus sign.

def build_keras_model(input_shape, vocab_size, output_len):
    model = keras.Sequential()
    # Declare the input shape explicitly: (timesteps, vocab_size)
    model.add(keras.Input(shape=input_shape))
    # Encoder
    model.add(layers.LSTM(128))
    # Repeat the compressed representation for the output length
    model.add(layers.RepeatVector(output_len))
    # Decoder
    model.add(layers.LSTM(128, return_sequences=True))
    model.add(layers.TimeDistributed(layers.Dense(vocab_size, activation="softmax")))
    model.compile(loss="categorical_crossentropy", optimizer="adam", metrics=["accuracy"])
    return model

# 7 input characters, 12 symbols in the vocabulary, 4 output characters
model = build_keras_model((7, 12), 12, 4)
model.summary()

The RepeatVector layer is the “bridge” between the two halves: it copies the encoder’s final output vector once for each output timestep, so the decoder LSTM has an input at every step. The TimeDistributed Dense layer at the end then produces a probability distribution over the 12 characters for each position in the result.
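To make the shape change concrete, here is a rough NumPy sketch of what RepeatVector does to the encoder output. It only mimics the layer's shape behavior under the tutorial's sizes (128 units, 4 output steps); it is not Keras code, and `repeat_vector` is an illustrative name:

```python
import numpy as np

def repeat_vector(encoded, n):
    """Copy each (features,) vector n times along a new time axis."""
    # (batch, features) -> (batch, 1, features) -> (batch, n, features)
    return np.repeat(encoded[:, np.newaxis, :], n, axis=1)

batch = np.random.rand(2, 128)           # stand-in for the encoder LSTM output
decoder_input = repeat_vector(batch, 4)  # one copy per output character
print(decoder_input.shape)               # (2, 4, 128)
```

Every decoder timestep receives the same vector, so it is the decoder LSTM's own recurrent state that lets it emit a different character at each position.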

Train the Keras Model for Sequence Addition

Once the architecture is ready, I feed the one-hot encoded tensors into the model and watch the accuracy improve over several iterations. I typically use a validation split to ensure the model isn’t just memorizing the training samples but is learning to add.

# Preparing the training tensors

x = np.zeros((len(questions), 7, 12), dtype=bool)

y = np.zeros((len(results), 4, 12), dtype=bool)

for i, sentence in enumerate(questions):

x[i] = ctable.encode(sentence, 7)

for i, sentence in enumerate(results):

y[i] = ctable.encode(sentence, 4)

# Training process

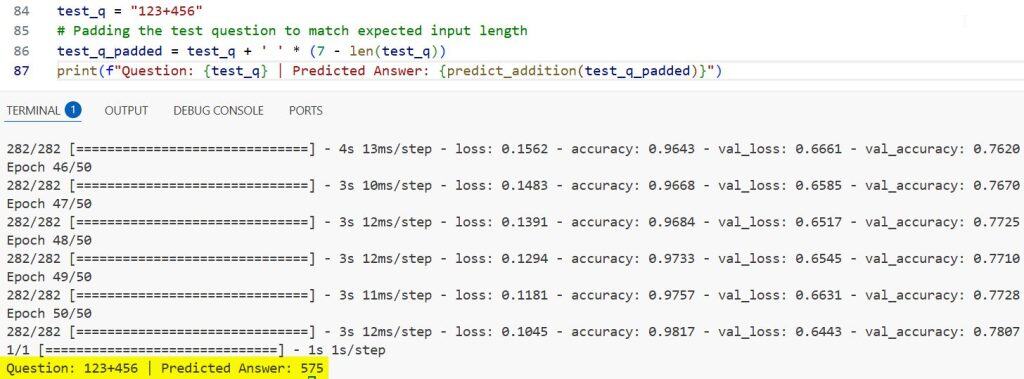

model.fit(x, y, batch_size=32, epochs=50, validation_split=0.1)During training, the loss should drop steadily as the LSTM units begin to associate the “+” symbol with the mathematical operation of addition. I’ve noticed that for simple three-digit addition, the model usually hits high accuracy within 50 epochs on a standard machine.
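One thing to keep in mind when reading the training log: with this setup the accuracy metric is computed per character, so an answer with three of its four characters right still contributes 0.75. The sketch below shows how per-character and whole-answer accuracy can differ. It works on NumPy arrays of class indices, and the function names and sample values are made up for illustration:

```python
import numpy as np

def char_accuracy(y_true, y_pred):
    """Fraction of individual characters predicted correctly."""
    return float((y_true == y_pred).mean())

def exact_match_accuracy(y_true, y_pred):
    """Fraction of answers where every character is correct."""
    return float((y_true == y_pred).all(axis=1).mean())

# Three answers of four character indices each (hypothetical data)
y_true = np.array([[5, 7, 9, 0], [1, 2, 0, 0], [9, 9, 8, 0]])
y_pred = np.array([[5, 7, 9, 0], [1, 3, 0, 0], [9, 9, 8, 0]])

print(char_accuracy(y_true, y_pred))         # 11 of 12 characters match
print(exact_match_accuracy(y_true, y_pred))  # 2 of 3 answers match
```

Calling `.argmax(axis=-1)` on the one-hot targets and on the model's predictions would give index arrays of this shape.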

Evaluate Model Predictions and Post-Processing

After the training is complete, I pass new math problems to the model to see how well it performs on unseen data. The output is a series of probability distributions, which I decode back into characters using the character table we built earlier.

def predict_addition(question_str):
    x_val = np.zeros((1, 7, 12))
    x_val[0] = ctable.encode(question_str, 7)
    preds = model.predict(x_val)
    return ctable.decode(preds[0])

test_q = "123+456"
# Padding the test question to match expected input length
test_q_padded = test_q + ' ' * (7 - len(test_q))
print(f"Question: {test_q} | Predicted Answer: {predict_addition(test_q_padded)}")

You can refer to the screenshot below to see the output.

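Testing on held-out problems is the most direct way to check whether the neural network has learned to add rather than memorized its training set. Because the training pairs are drawn at random, a hand-picked question such as 123+456 may already be among them, so treat a single prediction as a spot check. It is always satisfying to see a sequence-to-sequence model output the correct sum character by character.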
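The decoded string still carries its padding spaces, and an undertrained model can emit characters that do not form a number. Here is a small post-processing sketch, where `parse_prediction` is a hypothetical helper rather than part of the tutorial code:

```python
def parse_prediction(decoded):
    """Strip padding and return the answer as an int, or None if malformed."""
    text = decoded.strip()
    # str.isdigit() is False for '' and for strings containing '+' or spaces
    if text.isdigit():
        return int(text)
    return None

print(parse_prediction("579 "))   # 579
print(parse_prediction("5+9 "))   # None
print(parse_prediction("    "))   # None
```

Returning `None` for malformed output keeps a bad prediction from being mistaken for a numeric answer when you compare against the true sum.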

Teaching a neural network to perform addition using Sequence-to-Sequence learning is a powerful way to understand how LSTMs work. It bridges the gap between basic classification and complex natural language processing tasks.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.