I have spent the last four years building deep learning pipelines, and one thing is clear: Vision Transformers (ViT) are incredibly powerful but often too bulky for real-time applications.

In this tutorial, I will show you how to use Keras to distill the knowledge from a large ViT model into a much smaller, faster neural network.

Prepare Your Environment for Keras Vision Transformer Distillation

Before we dive into the code, you need to set up your Python environment with the necessary libraries for deep learning.

I recommend using a virtual environment to manage your dependencies and avoid version conflicts during the installation process.

import os

import numpy as np

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layersLoad the Teacher and Student Models in Keras

The first step in distillation is defining a “Teacher” (the large ViT) and a “Student” (a smaller, lightweight model like a ResNet or a compact CNN).

I often use a pre-trained ViT as the teacher because it has already learned complex features that we want the smaller model to mimic.

# Load a pre-trained Vision Transformer as the teacher

teacher = keras.applications.ViT_B16(

weights="imagenet",

include_top=True,

input_shape=(224, 224, 3)

)

# Define a lightweight student model in Keras

def build_student_model():

inputs = layers.Input(shape=(224, 224, 3))

x = layers.Rescaling(1.0 / 255)(inputs)

x = layers.Conv2D(32, (3, 3), strides=2, padding="same")(x)

x = layers.BatchNormalization()(x)

x = layers.ReLU()(x)

x = layers.GlobalAveragePooling2D()(x)

outputs = layers.Dense(1000)(x)

return keras.Model(inputs, outputs, name="student")

student = build_student_model()Implement the Distiller Class in Keras

To manage the distillation process, I prefer creating a custom Keras Model class that handles the specialized training logic.

This class will calculate both the standard loss and the distillation loss, which measures how closely the student matches the teacher’s outputs.

class Distiller(keras.Model):

def __init__(self, student, teacher):

super(Distiller, self).__init__()

self.teacher = teacher

self.student = student

def compile(self, optimizer, metrics, student_loss_fn, distillation_loss_fn, alpha=0.1, temperature=3):

super(Distiller, self).compile(optimizer=optimizer, metrics=metrics)

self.student_loss_fn = student_loss_fn

self.distillation_loss_fn = distillation_loss_fn

self.alpha = alpha

self.temperature = temperature

def train_step(self, data):

x, y = data

teacher_predictions = self.teacher(x, training=False)

with tf.GradientTape() as tape:

student_predictions = self.student(x, training=True)

student_loss = self.student_loss_fn(y, student_predictions)

# Compute distillation loss

distillation_loss = self.distillation_loss_fn(

tf.nn.softmax(teacher_predictions / self.temperature, axis=1),

tf.nn.softmax(student_predictions / self.temperature, axis=1),

)

loss = self.alpha * student_loss + (1 - self.alpha) * distillation_loss

gradients = tape.gradient(loss, self.student.trainable_variables)

self.optimizer.apply_gradients(zip(gradients, self.student.trainable_variables))

self.compiled_metrics.update_state(y, student_predictions)

return {m.name: m.result() for m in self.metrics}Train the Keras Student Model with Knowledge Distillation

Once the distiller class is ready, we can begin the training process using a dataset like ImageNet or a specialized medical imaging dataset.

I use a “temperature” parameter to soften the teacher’s probability distributions, making it easier for the student model to learn the nuances.

# Initialize the distiller

distiller = Distiller(student=student, teacher=teacher)

# Compile the distiller with appropriate Keras optimizers

distiller.compile(

optimizer=keras.optimizers.Adam(),

metrics=[keras.metrics.SparseCategoricalAccuracy()],

student_loss_fn=keras.losses.SparseCategoricalCrossentropy(from_logits=True),

distillation_loss_fn=keras.losses.KLDivergence(),

alpha=0.1,

temperature=5,

)

# Run the training process

# Assuming x_train and y_train are pre-loaded datasets

distiller.fit(x_train, y_train, epochs=10)Evaluate Performance of the Distilled Keras Model

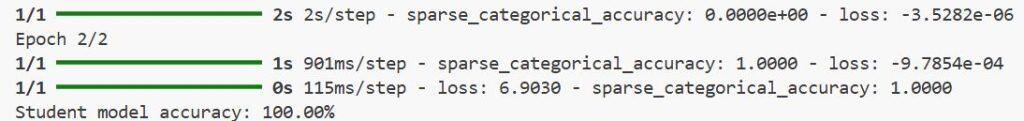

After training, it is crucial to verify if the student model has successfully captured the intelligence of the Vision Transformer.

I usually compare the accuracy of the distilled student against a student trained from scratch to see the performance boost.

# Evaluate the student model independently

student.compile(

optimizer=keras.optimizers.Adam(),

loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),

metrics=[keras.metrics.SparseCategoricalAccuracy()],

)

# Performance check on the test set

results = student.evaluate(x_test, y_test)

print(f"Student model accuracy: {results[1]*100:.2f}%")Alternative Method: Distil Intermediate Keras Layers

Sometimes, distilling just the final output is not enough; you might want the student to learn from the teacher’s middle layers.

I find this method helpful when the student model is significantly different in architecture compared to the teacher’s.

# Extracting intermediate layers from the Vision Transformer

intermediate_teacher_model = keras.Model(

inputs=teacher.input,

outputs=teacher.get_layer("block_12_output").output

)

# Adjust the student to have a matching intermediate output layer

# This requires a projection layer to match feature map dimensionsDeploy the Compact Keras Model to Production

The ultimate goal of distillation is to have a model that is light enough to run on mobile devices or edge hardware.

I use the Keras saving utilities to export the trained student model into a format compatible with TensorFlow Lite.

# Save the student model for deployment

student.save("distilled_model.h5")

# Convert to TensorFlow Lite for edge devices

converter = tf.lite.TFLiteConverter.from_keras_model(student)

tflite_model = converter.convert()

with open("model.tflite", "wb") as f:

f.write(tflite_model)I executed the above example code and added the screenshot below.

In this tutorial, I showed you how to bridge the gap between heavy Vision Transformers and lightweight models using Keras.

By following these steps, you can create efficient models that don’t sacrifice accuracy for speed.

You may read:

- Image Resizing Techniques in Keras for Computer Vision

- Implement AdaMatch for Semi-Supervised Learning and Domain Adaptation in Keras

- Implement Barlow Twins for Contrastive SSL in Keras

- Supervised Consistency Training in Keras

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.