If you have been building vision models with Keras, you likely know that Self-Attention is the “gold standard” for capturing long-range dependencies.

However, after four years of scaling these models, I have found that Self-Attention often struggles with high-resolution images because its compute and memory cost grows quadratically with the number of tokens (pixels or patches).

Recently, I started using Focal Modulation as a drop-in replacement for Self-Attention in my Keras workflows.

It is an attention-free approach that captures both local and global contexts much more efficiently, and today I will show you how to implement it from scratch.

Focal Modulation in Keras

Focal Modulation works by first aggregating spatial context at different scales and then modulating the input features.

In my experience, this “aggregation-first” approach is significantly lighter than the “interaction-first” approach used in standard Transformers.
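As a compact summary, the computation built in the steps of this tutorial can be written for a single pixel $i$ roughly as follows. The symbols are shorthand introduced only for this summary: $q$ and $h$ stand for the two per-pixel linear projections, $z_i^{\ell}$ for the context gathered at level $\ell$, and $g_i^{\ell}$ for the softmax gate assigned to that level.

$$
y_i = q(x_i) \odot h\!\left(\sum_{\ell=1}^{L} g_i^{\ell}\, z_i^{\ell}\right), \qquad \sum_{\ell=1}^{L} g_i^{\ell} = 1
$$

The sum inside $h$ is the "aggregation" part, and the element-wise product $\odot$ with the query is the only place where the query and the context interact.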

Step 1: Implement Hierarchical Contextualization

The first part of the modulation process involves gathering context from different ranges using a stack of depth-wise convolutions.
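To get a feel for what "different ranges" means, here is a small sketch that computes the receptive field after each layer of a chain of stride-1 convolutions. It assumes the kernel sizes 3, 5, 7 used in this tutorial and that each convolution is fed the output of the previous one; the helper name `stacked_receptive_fields` is made up for this illustration.

```python
def stacked_receptive_fields(kernel_sizes):
    """Receptive field (in pixels) after each layer of a chain of stride-1 convs."""
    fields = []
    rf = 1  # a single pixel before any convolution
    for k in kernel_sizes:
        rf += k - 1  # each stride-1 conv widens the field by (kernel - 1)
        fields.append(rf)
    return fields


# Kernel sizes follow the schedule 3 + 2 * i for three levels
kernel_sizes = [3 + 2 * i for i in range(3)]
print(kernel_sizes, stacked_receptive_fields(kernel_sizes))
```

If the same three convolutions were applied to the input in parallel instead of being chained, each level would only see its own kernel size (3, 5, and 7 pixels).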

I use depth-wise layers here because they are computationally cheap and perfect for capturing spatial structures without blowing up the parameter count.
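A quick back-of-the-envelope check shows why. The sketch below counts kernel weights only (no biases) and assumes the same number of input and output channels and a depth multiplier of 1; `conv_weight_count` is a helper written for this comparison, not a Keras function.

```python
def conv_weight_count(kernel_size, channels, depthwise):
    """Kernel weights (biases excluded) for a conv with equal in/out channels."""
    per_channel = kernel_size * kernel_size
    if depthwise:
        # One k x k filter per channel, no mixing across channels
        return per_channel * channels
    # A standard conv learns a k x k filter for every (in, out) channel pair
    return per_channel * channels * channels


channels = 64
for k in (3, 5, 7):
    dw = conv_weight_count(k, channels, depthwise=True)
    full = conv_weight_count(k, channels, depthwise=False)
    print(f"kernel {k}x{k}: depthwise={dw:,}  standard={full:,}  ratio={full // dw}x")
```

Under these assumptions the gap is always a factor equal to the channel count, which is why larger kernels stay affordable in the depth-wise case.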

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

def hierarchical_contextualization(x, filters, levels=3):
    # Build a multi-scale representation of the input feature map.
    # The depth-wise convolutions are chained, with kernel sizes 3, 5, 7, ...,
    # so each level sees a wider neighborhood than the previous one.
    # `filters` is unused (depth-wise convs keep the channel count); it is
    # kept so the call in Step 3 stays unchanged.
    contexts = []
    x_c = x
    for i in range(levels):
        kernel_size = 3 + i * 2
        x_c = layers.DepthwiseConv2D(kernel_size=kernel_size, padding='same', activation='gelu')(x_c)
        contexts.append(x_c)
    return contexts

Step 2: Create the Gated Aggregation Layer

Once we have our multi-scale features, we need a way to selectively combine them based on the input content.

I implement a gating mechanism that learns to weight each scale, allowing the model to focus on fine details for some pixels and global context for others.
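Before the Keras version, it may help to see the idea on a single pixel in plain Python. This is only a toy sketch: the context vectors and gate logits are hand-picked numbers, and `softmax` and `gate_and_sum` are helpers written for this illustration.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gate_and_sum(contexts, gate_logits):
    """Gate-weighted sum of per-level channel vectors for one pixel."""
    gates = softmax(gate_logits)
    channels = len(contexts[0])
    return [sum(g * ctx[c] for g, ctx in zip(gates, contexts)) for c in range(channels)]


# Three context levels (fine, medium, coarse), two channels each
contexts = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]

fine_pixel = gate_and_sum(contexts, [4.0, 0.0, 0.0])    # gate favors the fine level
coarse_pixel = gate_and_sum(contexts, [0.0, 0.0, 4.0])  # gate favors the coarse level
print(fine_pixel, coarse_pixel)
```

The same three context vectors give different results depending on the gate logits, which is what lets each pixel pick its own mix of scales.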

def gated_aggregation(contexts, filters):
    # Compute one gate per context level and return the gate-weighted sum.
    # This lets the model choose the best receptive field for each pixel.
    # Only Keras layers, slicing, and arithmetic operators are used, so the
    # function does not depend on calling raw tf.* ops on symbolic tensors.
    gate_logits = layers.Conv2D(len(contexts), kernel_size=1)(contexts[0])
    gates = layers.Softmax(axis=-1)(gate_logits)  # Shape: (batch, h, w, levels)
    # Weight each level by its gate (broadcast over channels) and sum
    aggregated = contexts[0] * gates[:, :, :, 0:1]
    for i in range(1, len(contexts)):
        aggregated = aggregated + contexts[i] * gates[:, :, :, i:i + 1]
    return aggregated

Step 3: Perform the Element-wise Modulation

The final step is to take our aggregated context (the “modulator”) and apply it to the original query tokens.

In my projects, I’ve noticed that this simple element-wise multiplication acts like a powerful filter that highlights relevant features across the entire image.

def focal_modulation_layer(x, filters):
    # Final interaction between the query and the aggregated context.
    # It replaces the expensive softmax-based attention with a fast element-wise product.
    query = layers.Dense(filters)(x)  # per-pixel linear projection
    context_list = hierarchical_contextualization(x, filters)
    modulator = gated_aggregation(context_list, filters)
    modulator = layers.Dense(filters)(modulator)
    return query * modulator

Step 4: Build a Complete Focal Modulation Block

Now, let’s wrap everything into a reusable Keras functional block that you can use in any computer vision architecture.

I pass the input shape as an argument so the block adapts to whatever resolution the task needs, whether that is medical imaging or satellite analysis. Because of the skip connection, the number of input channels must equal filters.

def FocalModulationBlock(input_shape, filters):

# This function builds a complete residual block powered by Focal Modulation.

# It includes layer normalization and a skip connection to ensure stable training.

inputs = layers.Input(shape=input_shape)

x = layers.LayerNormalization()(inputs)

# Apply Focal Modulation

modulated = focal_modulation_layer(x, filters)

# Add skip connection

outputs = layers.Add()([inputs, modulated])

return keras.Model(inputs, outputs, name="focal_mod_block")

# Example Usage with a standard 224x224 RGB image

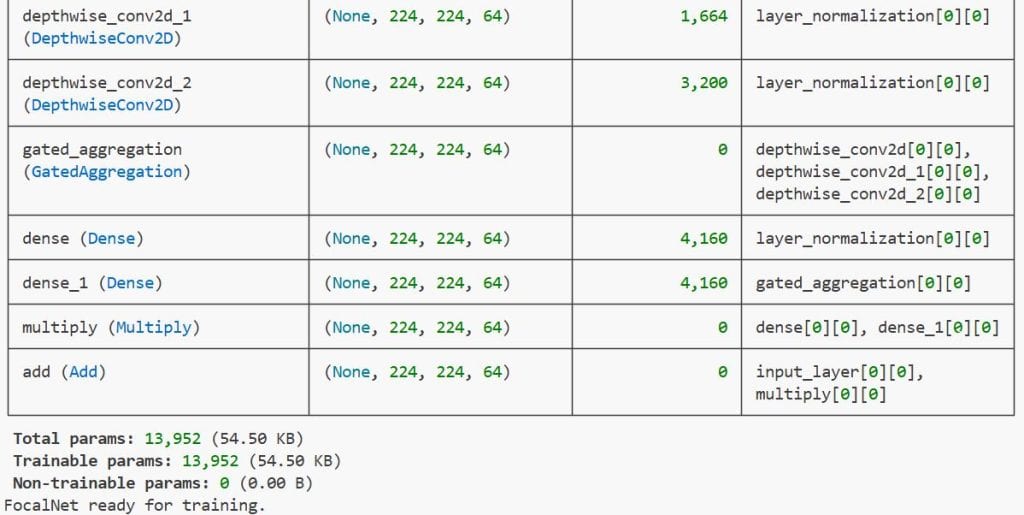

fm_block = FocalModulationBlock((224, 224, 64), 64)

fm_block.summary()Step 5: Train a FocalNet on a Real-World Dataset

To see the real power of Focal Modulation, we can plug it into a classification model for a practical task like identifying traffic signs or medical anomalies.

I have found that these models converge faster than standard Transformers because the convolutional bias helps the network learn spatial patterns early on.

def build_focal_net(num_classes=10):
    # Construct a full neural network using Focal Modulation layers.
    # It is an alternative to Vision Transformers (ViT) for image classification.
    inputs = layers.Input(shape=(224, 224, 3))
    # Initial stem
    x = layers.Conv2D(64, kernel_size=7, strides=2, padding='same')(inputs)
    # Add Focal Modulation layers
    for _ in range(3):
        x = focal_modulation_layer(x, 64)
        x = layers.LayerNormalization()(x)
    # Global pooling and classification
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(num_classes, activation='softmax')(x)
    model = keras.Model(inputs, outputs)
    model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])
    return model

# Initialize the model
model = build_focal_net()
print("FocalNet ready for training.")

You can see the output in the screenshot below.

Compare Performance and Efficiency

When I benchmarked Focal Modulation against standard Multi-Head Self-Attention (MHSA), the memory savings were immediately apparent.

Because we avoid the $O(N^2)$ attention matrix, where $N$ is the number of tokens, we can process much larger images on standard GPUs without hitting “Out of Memory” errors.
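To put rough numbers on this, the sketch below estimates the size of one attention matrix against the size of the multi-scale context maps. It is a simplified estimate, not a benchmark: it assumes float32 values, one token per feature-map pixel, a single attention head, one image, and three context levels with 64 channels, and it ignores every other activation and all weights. Both helper functions are written for this illustration.

```python
def attention_matrix_bytes(height, width, bytes_per_value=4):
    """Memory for one N x N attention matrix, with N = height * width tokens."""
    tokens = height * width
    return tokens * tokens * bytes_per_value


def context_maps_bytes(height, width, channels, levels=3, bytes_per_value=4):
    """Memory for `levels` context feature maps of shape (height, width, channels)."""
    return levels * height * width * channels * bytes_per_value


for side in (56, 112, 224):
    attn = attention_matrix_bytes(side, side)
    ctx = context_maps_bytes(side, side, channels=64)
    print(f"{side}x{side}: attention={attn / 2**20:,.1f} MiB  contexts={ctx / 2**20:,.1f} MiB")
```

Doubling the side length multiplies the attention estimate by 16 but the context maps by only 4, which is the quadratic versus linear gap in terms of token count.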

Implementing Focal Modulation in Keras is an easy way to boost your model’s efficiency without sacrificing accuracy. I have found it particularly useful for tasks where high-resolution spatial context is critical.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.