In my years of developing deep learning solutions, I have often faced the challenge of having a mountain of data but only a tiny fraction of it labeled.

It is a common headache when you are trying to deploy a model in a new environment where the data looks slightly different from your training set.

Recently, I started using AdaMatch, a powerful technique that combines semi-supervised learning with domain adaptation to solve exactly this problem.

In this tutorial, I will show you how to build an AdaMatch pipeline in Keras to handle unlabeled data and bridge the gap between different data domains.

AdaMatch Framework in Keras

AdaMatch works by letting the model generate pseudo-labels from weakly augmented unlabeled images and then training the same model to match those labels on strongly augmented versions of the same images, which makes it more robust.

I find it particularly useful because it applies distribution alignment, which rescales the target pseudo-labels toward the class distribution the model predicts on source data, so the model is less thrown off by the shift between your source and target data.
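To make distribution alignment concrete, here is a minimal NumPy sketch of the idea as I understand it from the AdaMatch paper. The function name `align_distribution`, the `eps` constant, and the toy probabilities are my own assumptions for illustration, not part of any library.

```python
import numpy as np

def align_distribution(target_probs, source_probs, eps=1e-6):
    """Rescale target predictions toward the source class distribution."""
    # Average predicted class distribution in each domain for this batch
    source_mean = source_probs.mean(axis=0)
    target_mean = target_probs.mean(axis=0)
    # Up-weight classes that are under-predicted on the target, down-weight the rest
    ratio = (source_mean + eps) / (target_mean + eps)
    aligned = target_probs * ratio
    # Renormalize so each row is a valid probability distribution again
    return aligned / aligned.sum(axis=1, keepdims=True)

# Toy batch (assumed values): source is balanced, target is skewed toward class 0
source_probs = np.array([[0.7, 0.3], [0.3, 0.7]])
target_probs = np.array([[0.9, 0.1], [0.8, 0.2]])
aligned = align_distribution(target_probs, source_probs)
print(aligned.round(3))
```

After alignment, the under-predicted class receives more probability mass on the target batch, which is what keeps the pseudo-labels from collapsing onto a few classes.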

Set Up Your Python Environment for Keras AdaMatch

Before we get into the logic, we need to ensure our environment has the necessary libraries to handle complex tensor operations.

I always recommend using the latest version of TensorFlow and Keras to take advantage of optimized preprocessing layers.

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import numpy as np
import matplotlib.pyplot as plt

# Check versions for compatibility
print(f"TensorFlow version: {tf.__version__}")

Prepare the Dataset for Domain Adaptation in Keras

For this example, imagine we are classifying housing styles in two different US cities, where one city has labeled images, and the other does not.

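We will simulate a “Source” domain (labeled) and a “Target” domain (unlabeled) using the CIFAR-10 train and test splits as stand-ins. Because both splits come from the same distribution, this only exercises the pipeline; to see real adaptation to architectural differences, swap in your own target images.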
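If you want the stand-in target data to actually look different from the source, one option is to apply a fixed appearance shift to it. The sketch below is only an illustration: `simulate_target_shift`, its `brightness` parameter, and the noise level are hypothetical choices of mine, not part of Keras or AdaMatch.

```python
import numpy as np

def simulate_target_shift(images, brightness=0.15, seed=0):
    """Hypothetical helper: apply a fixed appearance shift to images scaled to [0, 1]."""
    rng = np.random.default_rng(seed)
    # Collapse color to a single luminance channel
    gray = images.mean(axis=-1, keepdims=True)
    # Brighten and add mild noise to mimic a different camera or lighting setup
    gray = gray + brightness + rng.normal(0.0, 0.02, size=gray.shape)
    gray = np.clip(gray, 0.0, 1.0)
    # Restore three identical channels so the classifier input shape is unchanged
    return np.repeat(gray, 3, axis=-1).astype("float32")

# Random images stand in for real data in this demo
demo = np.random.default_rng(1).random((4, 32, 32, 3)).astype("float32")
shifted = simulate_target_shift(demo)
print(shifted.shape, shifted.dtype)
```

In this tutorial's setup, you could pass the normalized target images through such a helper before training so that the two domains differ in color and brightness.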

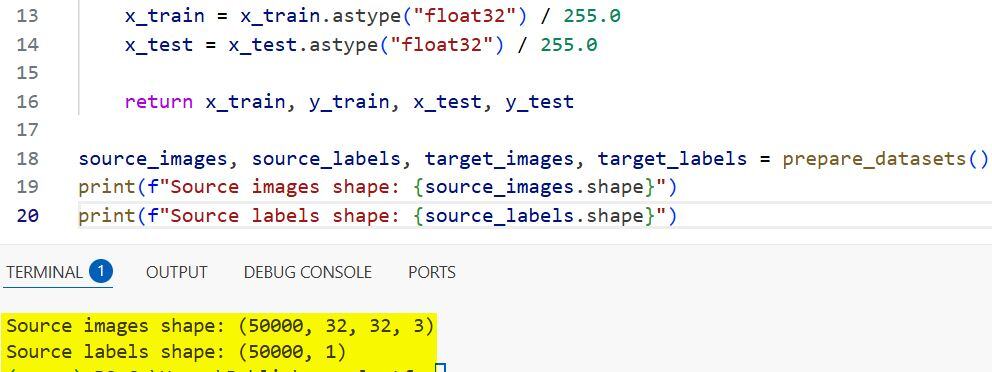

def prepare_datasets(batch_size=32):
    # Simulating source (labeled) and target (unlabeled) data
    # In a real scenario, use tf.data.Dataset.from_generator or image_dataset_from_directory
    (x_train, y_train), (x_test, y_test) = keras.datasets.cifar10.load_data()

    # Normalize and cast
    x_train = x_train.astype("float32") / 255.0
    x_test = x_test.astype("float32") / 255.0
    return x_train, y_train, x_test, y_test

source_images, source_labels, target_images, target_labels = prepare_datasets()
print(f"Source images shape: {source_images.shape}")
print(f"Source labels shape: {source_labels.shape}")

You can see the output in the screenshot below.

Create Data Augmentation Layers in Keras

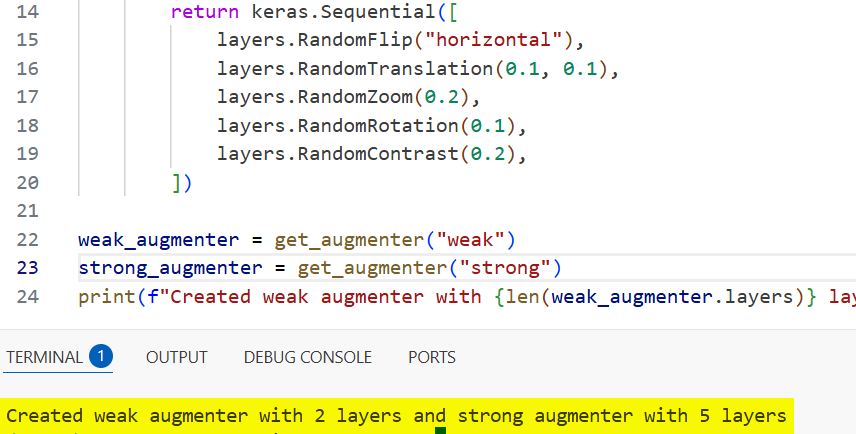

AdaMatch relies heavily on “Weak” and “Strong” augmentations to create different views of the same unlabeled image.

I use Keras preprocessing layers because they are fast, run on the GPU, and keep the augmentation logic inside the model graph.

def get_augmenter(level="weak"):
    if level == "weak":
        return keras.Sequential([
            layers.RandomFlip("horizontal"),
            layers.RandomTranslation(0.1, 0.1),
        ])
    else:
        return keras.Sequential([
            layers.RandomFlip("horizontal"),
            layers.RandomTranslation(0.1, 0.1),
            layers.RandomZoom(0.2),
            layers.RandomRotation(0.1),
            layers.RandomContrast(0.2),
        ])

weak_augmenter = get_augmenter("weak")
strong_augmenter = get_augmenter("strong")
print(f"Created weak augmenter with {len(weak_augmenter.layers)} layers and strong augmenter with {len(strong_augmenter.layers)} layers")

You can see the output in the screenshot below.

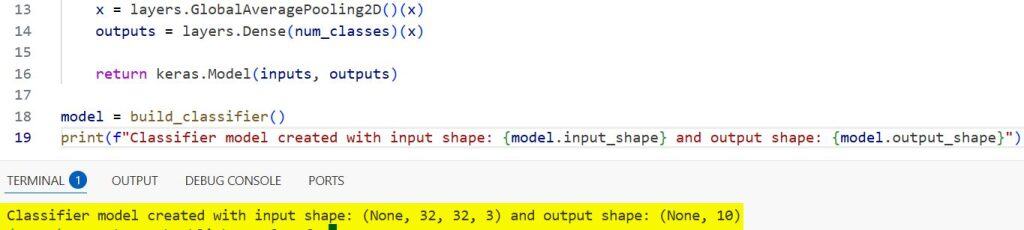

Build the Base Classifier Architecture in Keras

We need a backbone model that is deep enough to learn features but light enough to train efficiently on domain adaptation tasks.

I often use a simple ResNet-style architecture or a custom CNN for these tutorials to keep the focus on the AdaMatch logic itself.

def build_classifier(input_shape=(32, 32, 3), num_classes=10):
    inputs = layers.Input(shape=input_shape)
    x = layers.Conv2D(32, 3, strides=2, padding="same")(inputs)
    x = layers.BatchNormalization()(x)
    x = layers.Activation("relu")(x)
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(num_classes)(x)  # Raw logits, no softmax
    return keras.Model(inputs, outputs)

model = build_classifier()

You can see the output in the screenshot below.

Implement the AdaMatch Training Logic in Keras

This is where the magic happens; we override the train_step to include the pseudo-labeling and distribution alignment steps.

I implement the “Relative Confidence Threshold” here, which ensures we only learn from unlabeled samples that the model is genuinely sure about.
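Before the full training step, here is the thresholding rule in isolation. This is a minimal NumPy sketch of the relative threshold as I understand it from the AdaMatch paper: the cutoff is `tau` times the mean top-1 confidence on the labeled source batch. The function name `relative_confidence_mask` and the toy probabilities are assumptions for illustration.

```python
import numpy as np

def relative_confidence_mask(source_probs, target_probs, tau=0.9):
    """Keep target samples whose confidence clears a source-relative cutoff."""
    # Mean top-1 confidence on the labeled source batch
    source_confidence = source_probs.max(axis=1).mean()
    # The cutoff scales with how confident the model currently is on source data
    threshold = tau * source_confidence
    mask = (target_probs.max(axis=1) >= threshold).astype("float32")
    pseudo_labels = target_probs.argmax(axis=1)
    return mask, pseudo_labels, threshold

# Toy batches (assumed values)
source_probs = np.array([[0.8, 0.2], [0.6, 0.4]])
target_probs = np.array([[0.7, 0.3], [0.55, 0.45], [0.1, 0.9]])
mask, pseudo_labels, threshold = relative_confidence_mask(source_probs, target_probs)
print(mask, pseudo_labels, round(float(threshold), 2))
```

Early in training, when the model is unsure even on source data, the cutoff is low and more target samples contribute; as source confidence rises, the filter tightens on its own.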

class AdaMatchModel(keras.Model):
    def __init__(self, classifier, num_classes=10, tau=0.9):
        super().__init__()
        self.classifier = classifier
        self.num_classes = num_classes
        self.tau = tau  # Relative confidence threshold factor

    def compile(self, optimizer, loss_fn):
        super().compile(optimizer=optimizer)
        self.loss_fn = loss_fn
        self.total_loss_tracker = keras.metrics.Mean(name="total_loss")

    @property
    def metrics(self):
        # Listing the tracker here lets Keras reset it at the start of each epoch
        return [self.total_loss_tracker]

    def train_step(self, data):
        # Unpack the data: (labeled source batch, unlabeled target batch)
        (x_source, y_source), x_target = data

        with tf.GradientTape() as tape:
            # 1. Forward pass for source data (weak augmentation)
            # training=True is required, otherwise the random layers may act as no-ops
            source_logits = self.classifier(weak_augmenter(x_source, training=True), training=True)
            source_loss = self.loss_fn(y_source, source_logits)
            source_probs = tf.stop_gradient(tf.nn.softmax(source_logits))

            # 2. Generate pseudo-label probabilities for target data (weak augmentation)
            target_logits_weak = self.classifier(weak_augmenter(x_target, training=True), training=False)
            target_probs_weak = tf.stop_gradient(tf.nn.softmax(target_logits_weak))

            # 3. Distribution alignment: rescale target predictions toward the source class distribution
            ratio = (tf.reduce_mean(source_probs, axis=0) + 1e-6) / (
                tf.reduce_mean(target_probs_weak, axis=0) + 1e-6
            )
            target_probs_aligned = target_probs_weak * ratio
            target_probs_aligned /= tf.reduce_sum(target_probs_aligned, axis=-1, keepdims=True)

            # 4. Apply strong augmentation for target training
            target_logits_strong = self.classifier(strong_augmenter(x_target, training=True), training=True)

            # 5. Relative confidence threshold: tau scaled by mean source confidence
            threshold = self.tau * tf.reduce_mean(tf.reduce_max(source_probs, axis=-1))
            max_probs = tf.reduce_max(target_probs_aligned, axis=-1)
            mask = tf.cast(max_probs >= threshold, tf.float32)
            pseudo_labels = tf.argmax(target_probs_aligned, axis=-1)

            target_loss = tf.reduce_mean(
                tf.nn.sparse_softmax_cross_entropy_with_logits(
                    labels=pseudo_labels, logits=target_logits_strong
                ) * mask
            )
            total_loss = source_loss + target_loss

        gradients = tape.gradient(total_loss, self.classifier.trainable_variables)
        self.optimizer.apply_gradients(zip(gradients, self.classifier.trainable_variables))
        self.total_loss_tracker.update_state(total_loss)
        return {"loss": self.total_loss_tracker.result()}

Train the Keras Model with Domain Adaptation

Once the custom model is defined, we feed it both the labeled source data and the unlabeled target data simultaneously.

I find that a conservative learning rate helps the model stabilize during the first few epochs of domain alignment. The example starts from 1e-3, which is Adam’s default, so lower it if the loss oscillates.

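# Initialize and compile the AdaMatch model
adamatch_trainer = AdaMatchModel(model)
adamatch_trainer.compile(
    optimizer=keras.optimizers.Adam(learning_rate=1e-3),
    loss_fn=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
)

# Pair each labeled source batch with an unlabeled target batch
source_ds = tf.data.Dataset.from_tensor_slices((source_images[:1000], source_labels[:1000]))
target_ds = tf.data.Dataset.from_tensor_slices(target_images[:1000])  # Note: No labels for target
train_ds = tf.data.Dataset.zip((
    source_ds.shuffle(1000).batch(32, drop_remainder=True),
    target_ds.shuffle(1000).batch(32, drop_remainder=True),
))

# Each element is ((x_source, y_source), x_target), which is what train_step unpacks
history = adamatch_trainer.fit(train_ds, epochs=10)

Evaluate Model Performance on Target Data in Keras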

After training, we must verify if the model actually learned to generalize to the new domain it never saw labels for.

I always use a confusion matrix to see if the architectural differences between our two cities are causing specific misclassifications.
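A confusion matrix needs nothing beyond NumPy. The sketch below is one minimal way to build it; the helper name `confusion_matrix` and the toy labels are my own, chosen for illustration.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    matrix = np.zeros((num_classes, num_classes), dtype=np.int64)
    # np.add.at accumulates correctly even when (true, pred) pairs repeat
    np.add.at(matrix, (y_true, y_pred), 1)
    return matrix

# Toy labels (assumed values) for a 3-class problem
y_true = np.array([0, 0, 1, 1, 2, 2])
y_pred = np.array([0, 1, 1, 1, 2, 0])
cm = confusion_matrix(y_true, y_pred, num_classes=3)
print(cm)
```

With the names used in this tutorial, you would pass `target_labels.flatten()` as `y_true` and `np.argmax(model.predict(target_images), axis=1)` as `y_pred`. Large off-diagonal entries show which classes the domain shift is mixing up.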

def evaluate_on_target(model, x_test, y_test):
    # Standard evaluation logic
    predictions = model.predict(x_test)
    predicted_classes = np.argmax(predictions, axis=1)
    accuracy = np.mean(predicted_classes == y_test.flatten())
    print(f"Target Domain Accuracy: {accuracy * 100:.2f}%")

evaluate_on_target(model, target_images, target_labels)

Advantages of Using AdaMatch in Keras Projects

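One of the biggest wins I’ve seen with AdaMatch is that its confidence threshold is relative: it scales with the model’s confidence on labeled source data instead of being a fixed cutoff, as in older methods such as FixMatch.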

It helps in scenarios like analyzing urban traffic images where lighting conditions change significantly between different geographic regions.

Visualize Domain Alignment in Keras

To truly understand if the model is working, I like to visualize the feature embeddings using a tool like t-SNE.

When AdaMatch is successful, you will see the clusters of the unlabeled target data largely overlapping with the clusters of the labeled source data for the same classes.
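Here is a rough sketch of how such a projection could be computed with scikit-learn's `TSNE`. The helper name `embed_domains` is hypothetical, and random arrays stand in for real feature embeddings, so treat this as a template and not as a result.

```python
import numpy as np
from sklearn.manifold import TSNE

def embed_domains(source_features, target_features, seed=0):
    """Project source and target features into a shared 2-D t-SNE space."""
    features = np.concatenate([source_features, target_features], axis=0)
    # 0 marks source rows, 1 marks target rows
    domains = np.concatenate([
        np.zeros(len(source_features), dtype=int),
        np.ones(len(target_features), dtype=int),
    ])
    # Perplexity must stay below the number of samples
    perplexity = min(30, len(features) - 1)
    tsne = TSNE(n_components=2, perplexity=perplexity, init="pca", random_state=seed)
    coords = tsne.fit_transform(features)
    return coords, domains

# Random stand-in features (assumption): replace with real model embeddings
rng = np.random.default_rng(0)
source_features = rng.normal(0.0, 1.0, size=(40, 16))
target_features = rng.normal(0.5, 1.0, size=(40, 16))
coords, domains = embed_domains(source_features, target_features)
print(coords.shape)
```

To plot the result, something like `plt.scatter(coords[:, 0], coords[:, 1], c=domains)` should work. For real features, one option (my assumption, not shown in the tutorial) is to take the output of the pooling layer, for example with `keras.Model(model.input, model.layers[-2].output)`.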

def plot_results(history):
    plt.plot(history.history['loss'])
    plt.title('AdaMatch Training Loss Progression')
    plt.ylabel('Loss')
    plt.xlabel('Epoch')
    plt.show()

plot_results(history)

In this tutorial, I showed you how to bridge the gap between labeled and unlabeled data using AdaMatch in Keras. This approach is incredibly effective when you’re moving a model from a controlled environment to a real-world application with different data distributions.

I hope you found this tutorial useful! If you’re working on projects where labeled data is scarce, AdaMatch might be the missing piece in your pipeline. Feel free to experiment with different augmentation strategies to see how they impact your final accuracy.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.